Validating Neurochemical-Enriched Dynamic Causal Models: A New Frontier for CNS Drug Development

Abstract

This article explores the validation of neurochemical-enriched Dynamic Causal Models (DCMs), a transformative computational approach that integrates neurochemical data with neural circuit models. Targeting researchers and drug development professionals, we detail how these biophysically grounded models non-invasively infer receptor-specific pathophysiology (e.g., NMDA/AMPA dysfunction) and drug mechanisms in humans. Covering foundational principles, methodological applications in Alzheimer's and psychiatric disorders, optimization strategies, and rigorous validation against biomarkers and clinical outcomes, this review synthesizes how validated DCMs can de-risk CNS drug development, identify patient subpopulations, and serve as sensitive biomarkers for experimental medicine studies.

The Foundation of Neurochemical-Enriched DCMs: Bridging Molecules, Circuits, and Behavior

The development of effective therapeutics for Central Nervous System (CNS) disorders represents one of the most challenging frontiers in modern medicine. Neurological conditions are now the leading cause of ill health and disability worldwide [1], creating an urgent need for new treatments. However, CNS drug development faces a crisis of productivity, with success rates for final marketing approval less than half of those for non-CNS drugs (6.2% vs. 13.3%) and development times that are significantly longer [2]. This high failure rate persists despite decades of advances in basic neuroscience, prompting a fundamental reevaluation of the tools and methodologies used in CNS research and development.

The core challenges are multifaceted and interconnected. The blood-brain barrier (BBB) prevents more than 98% of small-molecule drugs and all macromolecular therapeutics from accessing the brain [1], creating a formidable delivery challenge. Furthermore, the complex pathophysiology of the CNS, with its elaborate networks of neurons and glial cells, makes targeted interventions difficult without causing system-wide issues [1]. Perhaps most critically, a dearth of reliable biomarkers impacts early diagnosis, treatment monitoring, and drug development efforts, contributing to variability in patient response and complicating the development of standardized therapies [1].

Table 1: Key Challenges in CNS Drug Development

| Challenge | Impact on Development | Consequence |
|---|---|---|
| Blood-Brain Barrier | Limits brain access for >98% of small molecules and all macromolecules | Low efficacy, increased peripheral side effects |
| Disease Heterogeneity | Multiple root causes for conditions like Alzheimer's and MS | Difficult patient stratification, inconsistent clinical trial results |
| Biomarker Scarcity | Limited objective measures for diagnosis and treatment monitoring | High variability in patient response, difficulty proving efficacy |
| Scientific Complexity | Incomplete understanding of disease mechanisms | High failure rates due to lack of efficacy |

Neurochemical-Enriched DCMs: A Novel Framework for Validation

In response to these challenges, a new generation of tools is emerging that integrates neurochemical measurements directly with neurophysiological modeling. The neurochemistry-enriched dynamic causal model (DCM) represents a significant methodological advance that directly addresses the biomarker scarcity problem in CNS disorders [3] [4].

Theoretical Foundation and Experimental Protocol

This framework employs a hierarchical empirical Bayesian approach to test hypotheses about how neurotransmitter concentrations serve as empirical priors for synaptic physiology. The methodology integrates two complementary neuroimaging techniques:

- Ultra-High Field Magnetic Resonance Spectroscopy (7T-MRS): Provides precise in vivo measurements of regional neurotransmitter concentrations, particularly GABA and glutamate.

- Magnetoencephalography (MEG): Records neurophysiological activity with high temporal resolution, capturing the dynamic interactions within neural circuits.

The experimental workflow begins with first-level dynamic causal modeling of cortical microcircuits to infer connectivity parameters from individual MEG data. At the second level, the 7T-MRS estimates of regional neurotransmitter concentration supply empirical priors on synaptic connectivity parameters [4]. For efficiency and reproducibility, the analysis employs Bayesian model reduction (BMR), parametric empirical Bayes, and variational Bayesian inversion to compare the evidence for alternative models of how spectroscopic neurotransmitter measures inform estimates of synaptic connectivity [3] [4].
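The second-level question above (does a spectroscopic measure explain between-subject differences in a connectivity parameter?) can be illustrated with a deliberately simplified sketch. It is not the parametric empirical Bayes or Bayesian model reduction machinery used in the cited work: it swaps in an ordinary least-squares fit and a BIC-style approximation to the log evidence, and all data values and function names (`fit_line`, `approx_log_evidence`) are hypothetical.

```python
import math

def fit_line(x, y):
    """Least-squares fit of y = a + b*x; returns (a, b, residual sum of squares)."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    b = sxy / sxx
    a = mean_y - b * mean_x
    rss = sum((yi - a - b * xi) ** 2 for xi, yi in zip(x, y))
    return a, b, rss

def approx_log_evidence(rss, n, k):
    """BIC-style approximation: maximised Gaussian log-likelihood minus a complexity penalty."""
    sigma2 = rss / n
    log_lik = -0.5 * n * (math.log(2 * math.pi * sigma2) + 1)
    return log_lik - 0.5 * k * math.log(n)

# HYPOTHETICAL per-subject values: MRS GABA estimate (arbitrary units) and a
# first-level posterior mean for one inhibitory connection.
gaba = [1.0, 1.2, 1.4, 1.6, 1.8, 2.0, 2.2, 2.4]
conn = [0.11, 0.18, 0.32, 0.39, 0.52, 0.58, 0.71, 0.80]
n = len(conn)

# Null model: group mean only (2 free parameters: mean, noise variance).
mean_conn = sum(conn) / n
rss_null = sum((c - mean_conn) ** 2 for c in conn)
log_ev_null = approx_log_evidence(rss_null, n, k=2)

# MRS-informed model: group mean plus GABA covariate (3 free parameters).
_, slope, rss_mrs = fit_line(gaba, conn)
log_ev_mrs = approx_log_evidence(rss_mrs, n, k=3)

log_bayes_factor = log_ev_mrs - log_ev_null
print(f"slope = {slope:.3f}, approximate log Bayes factor = {log_bayes_factor:.1f}")
```

A positive log Bayes factor favours the model in which the neurotransmitter measure informs the connection. In a real analysis the first-level posterior uncertainty would also be carried to the second level, which this sketch ignores.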

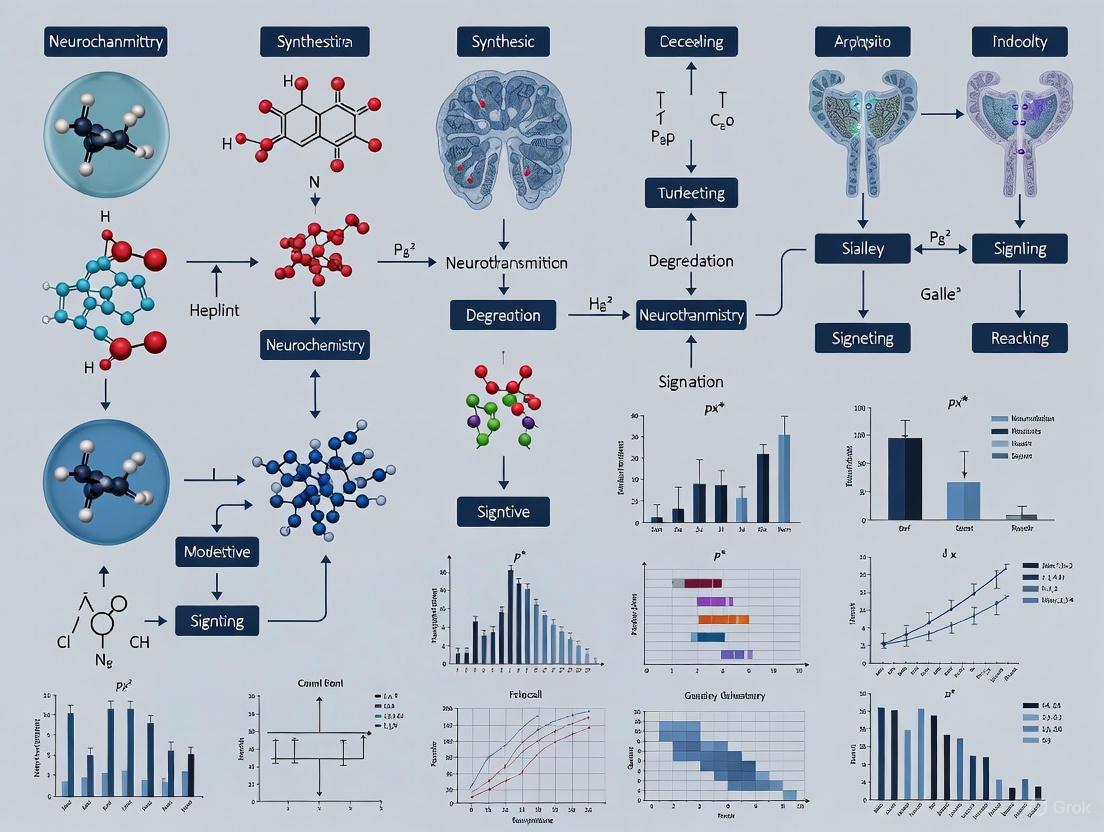

Diagram 1: DCM-MRS experimental workflow for hypothesis testing.

Key Research Findings and Validation

Application of this method to resting-state MEG and 7T-MRS data from healthy adults has yielded crucial insights into the specific relationships between neurotransmitter systems and synaptic connectivity. The results confirm that GABA concentration influences local recurrent inhibitory intrinsic connectivity in both deep and superficial cortical layers, while glutamate influences the excitatory connections between superficial and deep layers and connections from superficial to inhibitory interneurons [4]. These findings provide a quantitative framework for understanding how individual differences in neurochemistry shape neural circuit function.

Validation through within-subject split-sampling of MEG datasets (using held-out data for testing) has demonstrated that this model comparison approach for hypothesis testing is highly reliable [4]. The method is suitable for applications with both magnetoencephalography and electroencephalography, positioning it as a powerful tool for revealing the mechanisms of neurological and psychiatric disorders, including responses to psychopharmacological interventions.

Table 2: Neurotransmitter-Synaptic Connectivity Relationships Identified via DCM-MRS

| Neurotransmitter | Synaptic Connection Type Influenced | Circuit Level Impact |
|---|---|---|
| GABA | Local recurrent inhibitory intrinsic connectivity | Inhibition in deep and superficial cortical layers |
| Glutamate | Excitatory connections between superficial and deep layers | Feedforward and feedback excitation |
| Glutamate | Connections from superficial to inhibitory interneurons | Disynaptic inhibition and circuit regulation |

The Evolving Computational Toolkit for CNS Drug Discovery

Beyond specialized neuroimaging approaches, the computational toolbox for CNS drug discovery has expanded dramatically with the integration of artificial intelligence (AI) and machine learning (ML). These platforms are revolutionizing pharmaceutical research by accelerating the identification of novel drug candidates, optimizing clinical trials, and reducing development costs [5].

AI-Driven Drug Discovery Platforms

The current landscape of AI drug discovery platforms includes both comprehensive suites and specialized tools targeting specific phases of the development pipeline. These platforms leverage machine learning, deep learning, and generative AI to analyze vast biological and chemical datasets, potentially cutting traditional drug development timelines from over a decade to just a few years [5].

Table 3: AI Drug Discovery Platforms Relevant to CNS Research

| Platform | Primary Application | Key Features | CNS Relevance |
|---|---|---|---|
| Exscientia | Small-molecule design & optimization | Centaur AI for rapid candidate design; 80% Phase I success rate | Precision oncology with CNS applications |
| Insilico Medicine | End-to-end drug discovery | PandaOmics for target discovery; Chemistry42 for molecule generation | Novel target identification for CNS disorders |
| BenevolentAI | Target identification & drug repurposing | Processes millions of scientific papers for hidden connections | Rare CNS disease and oncology focus |
| Atomwise | Hit-to-lead optimization | AtomNet for structure-based drug design; predicts binding affinity | Rare disease and oncology applications |
| Deepmirror | Hit-to-lead and lead optimization | Generative AI for molecular design; property prediction | Reduces ADMET liabilities; speeds discovery 6x |
| Recursion Pharmaceuticals | Target identification & validation | LOWE LLM for querying biological datasets; knowledge graphs | Rare disease and oncology research |

Specialized Software for Molecular Modeling

In addition to comprehensive AI platforms, specialized software solutions continue to play a critical role in CNS drug discovery by providing advanced molecular modeling capabilities:

- Chemical Computing Group (MOE): Offers an all-in-one platform for drug discovery integrating molecular modeling, cheminformatics, and bioinformatics, with strengths in structure-based drug design and molecular docking [6].

- Schrödinger: Integrates advanced quantum chemical methods with machine learning approaches, offering tools like Free Energy Perturbation (FEP) for calculating binding affinities and DeepAutoQSAR for predicting molecular properties [6].

- Cresset (Flare V8): Provides advanced protein-ligand modeling capabilities including Free Energy Perturbation enhancements and MM/GBSA methods for calculating binding free energy of ligand-protein complexes [6].

Experimental Protocols and Research Reagent Solutions

Detailed DCM-MRS Methodology

The neurochemistry-enriched DCM protocol involves specific steps that can be adapted for testing hypotheses about synaptic connectivity in various CNS disorders:

Participant Selection and Preparation: Recruit participants according to study objectives (patients vs. healthy controls). Instruct participants to refrain from alcohol and psychoactive substances for 24-48 hours prior to testing. Conduct sessions at a consistent time of day to control for circadian neurotransmitter fluctuations.

7T-MRS Data Acquisition: Acquire structural MRI images for anatomical localization. Position MRS voxels in regions of interest (e.g., prefrontal cortex, primary sensory areas). Use specialized editing sequences (e.g., MEGA-PRESS or SPECIAL) for enhanced GABA detection. Acquire water-unsuppressed reference scans for quantification. Typical parameters: TR = 2000 ms, TE = 68 ms for GABA; 128-256 averages.

MEG Data Collection: Conduct resting-state recordings with eyes closed for 5-10 minutes in a magnetically shielded room. Monitor heart rate and eye movements for artifact identification. Acquire structural MRI for source reconstruction co-registration.

Data Processing and Analysis: Reconstruct MRS spectra using appropriate processing tools (e.g., Gannet, LCModel). Quantify metabolite concentrations relative to creatine or water. Preprocess MEG data: filter (0.5-48 Hz), remove artifacts (SSP, ICA), and coregister with structural MRI.

Dynamic Causal Modeling: Specify canonical microcircuit models with biologically plausible architectures. Invert DCMs for individual participants using variational Bayesian methods. Implement parametric empirical Bayes to assess group effects and the relationship between MRS measures and connectivity parameters.

Bayesian Model Reduction and Comparison: Use BMR to efficiently compare alternative models of how neurotransmitters influence specific connection types. Calculate model evidence and use random-effects Bayesian model selection to identify the most likely model.
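As a small illustration of the final comparison step, the sketch below converts a set of log model evidences into posterior model probabilities under a uniform prior over models. This is the simpler fixed-effects calculation, not the random-effects Bayesian model selection named above, and the log-evidence values and the function name `posterior_model_probs` are hypothetical.

```python
import math

def posterior_model_probs(log_evidences):
    """Posterior model probabilities under a uniform model prior (softmax of log evidences)."""
    # Subtract the maximum before exponentiating to avoid numerical underflow.
    max_le = max(log_evidences)
    weights = [math.exp(le - max_le) for le in log_evidences]
    total = sum(weights)
    return [w / total for w in weights]

# HYPOTHETICAL log evidences (e.g. free energies) for two competing models:
# index 0: GABA informs inhibitory connections, index 1: no MRS effect.
log_evidences = [-1000.0, -1003.0]
probs = posterior_model_probs(log_evidences)
print([round(p, 3) for p in probs])
```

A difference of about 3 in log evidence corresponds to a posterior probability of roughly 95% for the better model, which is why that value is often used as a threshold for strong evidence.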

The Scientist's Toolkit: Essential Research Reagents and Solutions

Table 4: Key Research Reagent Solutions for Neurochemical-Enriched DCM Research

| Reagent/Software Solution | Function | Application in DCM-MRS |
|---|---|---|
| 7T MRI Scanner with MRS Capabilities | High-field magnetic resonance imaging and spectroscopy | Precise quantification of regional GABA and glutamate concentrations |
| MEG System with Neuromagnetic Sensors | Recording magnetic fields generated by neural activity | High-temporal resolution measurement of neural circuit dynamics |
| Gannet MRS Toolkit | MRS data processing and quantification | Standardized analysis of GABA-edited and other MRS spectra |
| SPM12 with DCM Framework | Statistical parametric mapping and dynamic causal modeling | Generative modeling of MEG/EEG data and Bayesian parameter estimation |
| Bayesian Model Reduction (BMR) Tools | Efficient model comparison and evidence approximation | Hypothesis testing regarding neurotransmitter effects on connectivity |
| LCModel | Linear combination model for in vivo MRS data | Quantitative analysis of MR spectra using basis sets of metabolite spectra |

Integrated Approaches and Future Directions

The future of CNS drug development lies in the strategic integration of complementary methodologies. Neurochemical-enriched DCM provides a direct window into how neurotransmitter systems shape neural circuit dynamics, creating a critical bridge between molecular targets and system-level effects. When combined with AI-driven drug discovery platforms that can rapidly generate and optimize compounds targeting these systems, a more efficient and effective development pipeline emerges.

Diagram 2: Integrated CNS drug development pipeline with validation.

This integrated approach addresses the fundamental challenge in CNS drug development: the translation from molecular targets to clinically relevant effects. By validating that compound engagement at molecular targets produces specific, predictable changes in neural circuit function measured through neurochemical-enriched DCM, developers can derisk the transition from preclinical to clinical stages. Furthermore, these methods enable patient stratification based on individual neurochemical profiles, moving the field toward the precision medicine approaches necessary to overcome the heterogeneity that has plagued CNS clinical trials [7].

The imperative for new tools in CNS drug development is clear, and the emerging toolkit of neurochemical-enriched DCM, combined with advanced computational platforms, offers a promising path forward. As these methodologies mature and become more widely adopted, they have the potential to transform the challenging landscape of CNS therapeutic development, ultimately delivering effective treatments for the millions affected by neurological and psychiatric disorders.

Biophysical models of brain circuits have revolutionized clinical neuroscience by providing a mechanistic understanding of how systems-level neuroimaging biomarkers emerge from underlying synaptic-level perturbations associated with disease states [8]. These computational models describe how patterns of functional connectivity observed in resting-state functional magnetic resonance imaging (fMRI) emerge from neural dynamics shaped by inter-areal interactions through underlying structural connectivity [8]. However, a critical explanatory gap has persisted in understanding how molecular and synaptic-level disturbances in the human brain propagate across levels to impact systems-level neural activity and cognitive computations in neuropsychiatric disorders [8].

The integration of neurochemical data into these models addresses this fundamental gap, creating neurochemical-enriched dynamic causal models (DCM) that can more accurately represent the brain's synaptic-level functioning. This integration is particularly valuable for drug development professionals seeking to understand how pharmacological interventions affect brain-wide circuits, as it enables tracking of molecular-level drug actions through to systems-level effects [8]. The core challenge has been bridging vastly different biophysical scales – from molecular interactions at synapses to region-level functional connectivity measured by neuroimaging [9]. Recent research has demonstrated the feasibility of integrating data from these disparate scales to provide a more comprehensive understanding of brain connectivity and its person-to-person variability [9].

Comparative Analysis of Integration Methodologies

Multi-Scale Data Integration Approach

Table 1: Multi-Scale Data Integration Methodology

| Integration Component | Data Types Collected | Scale Bridging Strategy | Key Measurements |
|---|---|---|---|
| Molecular Data | Proteomics, Gene Expression | Protein modules contextualized with dendritic spine morphology | Protein abundance via TMT mass spectrometry, RNA sequencing |
| Cellular Data | Dendritic Spine Morphometry | Spine attributes as cellular context for molecular data | Spine density, backbone length, head diameter, volume |
| Anatomical Data | Structural MRI | Atlas-based parcellation | Structural attributes across 62 anatomical regions |
| Functional Data | Resting-state fMRI | Functional connectivity estimation | Correlation between 100 functionally homogeneous regions |

This approach leverages a unique cohort design with antemortem neuroimaging and genetic data combined with postmortem molecular and cellular data from the same individuals [9]. The methodology successfully identified hundreds of proteins that explain interindividual differences in functional connectivity and structural covariation, with these proteins enriched for synaptic structures and functions, energy metabolism, and RNA processing [9]. The critical innovation was using dendritic spine morphometric attributes as the cellular context to bridge proteins with region-level functional connectivity, demonstrating that proteins alone were insufficient to explain connectivity differences without this cellular contextualization [9].

Neurotransmitter Circuit Mapping Approach

Table 2: Neurotransmitter Circuit Mapping Methodology

| Method Component | Implementation | Neurotransmitter Systems | Key Outputs |
|---|---|---|---|
| Receptor/Transporter Mapping | PET data from 1200 healthy individuals | Acetylcholine, dopamine, noradrenaline, serotonin | Normative location density maps |
| White Matter Projection | Functionnectome method with tractography | 4 major neurotransmitter systems | White matter atlas of neurotransmitter circuits |
| Presynaptic/Postsynaptic Differentiation | Lesion proportion analysis | Receptor and transporter-specific | Presynaptic and postsynaptic ratios |
| Clinical Application | Stroke lesion analysis in 1333 patients | 8 neurochemical clusters | Neurochemical fingerprints of stroke |

This methodology enables in vivo mapping of neurotransmitter circuits, which had previously been hampered by technical challenges [10]. By projecting gray matter voxel values onto white matter according to the voxel-wise weighted probability of structural connection, the approach accounts for neurochemical diaschisis – how damage to the axons of pre- or postsynaptic neurons disrupts neurotransmitter circuits even when synaptic structures remain intact [10]. The differentiation between presynaptic injury (decreased neurotransmitter release) and postsynaptic injury (impaired postsynaptic mediation) provides crucial information for targeted pharmacological interventions, such as receptor agonists or transporter inhibitors [10].
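The projection step can be pictured as a probability-weighted average. The sketch below is a minimal illustration under that assumption and is not the Functionnectome implementation: the function name `project_to_white_matter`, the density values, and the connection probabilities are all hypothetical.

```python
def project_to_white_matter(gm_values, connection_probs):
    """Weighted average of gray matter values for one white matter voxel.

    gm_values        -- receptor/transporter density at each connected gray matter voxel
    connection_probs -- probability that each of those voxels is structurally
                        connected through this white matter voxel
    """
    total_prob = sum(connection_probs)
    if total_prob == 0:
        return 0.0  # no structural connection passes through this voxel
    weighted = sum(v * p for v, p in zip(gm_values, connection_probs))
    return weighted / total_prob

# HYPOTHETICAL example: two gray matter voxels with densities 2.0 and 4.0,
# connected through the white matter voxel with probabilities 0.25 and 0.75.
wm_value = project_to_white_matter([2.0, 4.0], [0.25, 0.75])
print(wm_value)  # 3.5
```

Under this reading, a lesion to the white matter voxel disrupts circuits in proportion to the densities it carries, even when the gray matter voxels themselves are spared.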

Dynamic Causal Modeling Framework

The DCM framework provides a foundational approach for specifying models, fitting them to data, and comparing their evidence using Bayesian model comparison [11]. DCM uses nonlinear state-space models in continuous time, specified using stochastic or ordinary differential equations, to estimate the coupling among brain regions and changes in coupling due to experimental manipulations [11]. For neurochemical integration, DCM has been extended through:

- Conductance-based models which derive from Hodgkin-Huxley equations and enable inference about ligand-gated excitatory (Na+) and inhibitory (Cl-) ion flow mediated through fast glutamatergic and GABAergic receptors [11].

- Mean-field models that include the full probability distribution of activity within neural populations and allow incorporation of voltage-gated NMDA ion channels [11].

- Stochastic DCM for resting state studies which estimates both neural fluctuations and connectivity parameters [11].

The parametric empirical Bayes (PEB) framework in DCM enables hierarchical modeling over parameters across subjects, which is particularly valuable for understanding population variability in neurochemical responses [11].
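To make the state-space idea concrete, the sketch below forward-simulates the simplest deterministic bilinear form, dx/dt = (A + u·B)x + C·u, for two regions using Euler integration. It only generates hidden states from assumed parameters. It does not perform model inversion, and it is far simpler than the conductance-based and mean-field models listed above. All parameter values and the function name `simulate_bilinear` are hypothetical.

```python
def simulate_bilinear(A, B, C, inputs, x0, dt):
    """Euler-integrate dx/dt = (A + u(t) * B) x + C * u(t) for a scalar input u(t).

    A -- baseline coupling between regions (n x n, nested lists)
    B -- input-dependent change in coupling (n x n)
    C -- direct driving effect of the input on each region (length n)
    """
    n = len(x0)
    x = list(x0)
    trajectory = [list(x)]
    for u in inputs:
        dx = []
        for i in range(n):
            coupling = sum((A[i][j] + u * B[i][j]) * x[j] for j in range(n))
            dx.append(coupling + C[i] * u)
        x = [x[i] + dt * dx[i] for i in range(n)]
        trajectory.append(list(x))
    return trajectory

# HYPOTHETICAL two-region network: region 0 drives region 1.
A = [[-1.0, 0.0],
     [0.4, -1.0]]   # self-decay on the diagonal, forward connection 0 -> 1
B = [[0.0, 0.0],
     [0.3, 0.0]]    # the input strengthens the 0 -> 1 connection
C = [1.0, 0.0]      # the input drives region 0 only

trajectory = simulate_bilinear(A, B, C, inputs=[1.0] * 2000, x0=[0.0, 0.0], dt=0.01)
final_state = trajectory[-1]
print([round(v, 3) for v in final_state])
```

With a sustained input of 1, the states settle at x0 = 1 and x1 = 0.4 + 0.3 = 0.7, so the modulatory term in B is directly visible in the steady-state response of the downstream region.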

Experimental Protocols for Validation

Multi-Scale Integration Protocol

Table 3: Experimental Protocol for Multi-Scale Integration

| Protocol Stage | Detailed Procedures | Quality Control Measures |
|---|---|---|
| Participant Cohort | 98 individuals from ROSMAP study | Average 3±2 years between MRI and death, PMI 8.5±4.6 hours |
| Neuroimaging Data | BIDS-organized data from 1,210 participants | CuBIDS validation, motion confound regression |
| Molecular Measurements | Multiplex tandem mass tag mass spectrometry | Standard preprocessing, covarying protein modules identification |
| Dendritic Spine Analysis | Golgi stain impregnation, ×60 widefield microscopy | 8-12 pyramidal neurons per individual, 3D reconstruction |
| Data Integration | Protein modules contextualized with spine morphology | Confounding factor adjustment (age, sex, education, PMI, motion) |

This protocol successfully demonstrated that synaptic protein modules alone did not detectably associate with functional connectivity between superior frontal and inferior temporal gyri (P = 0.6839), but when contextualized with dendritic spine morphology, a significant association emerged (P = 0.0174) [9]. This finding underscores the necessity of bridging scales through cellular context rather than directly correlating molecular with systems-level data.

Neurotransmitter Circuit Validation Protocol

The validation of neurotransmitter circuit mapping involved:

- Normative mapping: Compiling receptor and transporter densities from 1200 healthy individuals using PET data [10].

- White matter projection: Using the Functionnectome method with whole-brain 7T deterministic tractographies from 100 Human Connectome Project participants as anatomical priors [10].

- Streamline selection: Focusing on neurotransmitter-producing nuclei in the brainstem and basal forebrain based on histochemistry and neuronal tracing literature [10].

- Clinical validation: Applying the method to two large stroke patient samples (1333 patients from University College London Hospitals and 143 patients from Washington University School of Medicine) [10].

- Cluster analysis: Using unsupervised k-means clustering to identify distinct neurochemical profiles and their association with cognitive outcomes [10].

The method successfully identified eight clusters with different neurochemical patterns in stroke patients, though associations with cognitive profiles were scarce, suggesting finer underlying neurochemical disturbances than the analysis granularity could capture [10].
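The clustering step can be sketched with a plain implementation of Lloyd's k-means algorithm. The example below is illustrative only: the two-dimensional "neurochemical profiles", the choice of two clusters, and the fixed initial centroids are hypothetical and are not taken from the cited stroke analysis, which identified eight clusters.

```python
def kmeans(points, initial_centroids, n_iter=20):
    """Lloyd's algorithm with caller-supplied initial centroids (deterministic)."""
    centroids = [list(c) for c in initial_centroids]
    labels = [0] * len(points)
    for _ in range(n_iter):
        # Assignment step: attach each point to its nearest centroid.
        for i, p in enumerate(points):
            dists = [sum((a - b) ** 2 for a, b in zip(p, c)) for c in centroids]
            labels[i] = dists.index(min(dists))
        # Update step: move each centroid to the mean of its members.
        for k in range(len(centroids)):
            members = [p for p, lab in zip(points, labels) if lab == k]
            if members:  # keep the old centroid if a cluster is empty
                centroids[k] = [sum(col) / len(members) for col in zip(*members)]
    return labels, centroids

# HYPOTHETICAL patient profiles: (presynaptic ratio, postsynaptic ratio)
# for one neurotransmitter system.
profiles = [(0.10, 0.20), (0.15, 0.25), (0.20, 0.10),
            (0.80, 0.90), (0.85, 0.80), (0.90, 0.95)]

labels, centroids = kmeans(profiles, initial_centroids=[profiles[0], profiles[3]])
print(labels)  # [0, 0, 0, 1, 1, 1]
```

In practice the number of clusters and the initialisation would be chosen by a stability or fit criterion rather than fixed by hand as here.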

Visualization of Integration Frameworks

Diagram: Multi-Scale Data Integration Workflow

Diagram: Neurotransmitter Circuit Mapping Process

Diagram: DCM Framework with Neurochemical Integration

The Scientist's Toolkit: Essential Research Reagents & Materials

Table 4: Essential Research Reagents and Materials

| Research Reagent/Material | Function in Neurochemical Integration | Example Implementation |
|---|---|---|
| Tandem Mass Tag Mass Spectrometry | Protein abundance quantification | Multiplex TMT-MS on SFG and ITG tissue samples [9] |
| Golgi Stain Impregnation | Dendritic spine visualization | Impregnation of postmortem tissue slices for spine morphometry [9] |
| Neurolucida 360 | 3D dendritic reconstruction | Reconstruction of Z stacks for spine attribute quantification [9] |
| High-Field MRI Scanners | Structural and functional connectivity | 7T scanners for deterministic tractography [10] |
| Positron Emission Tomography | Receptor/transporter density mapping | Normative maps from 1200 healthy individuals [10] |
| Functionnectome Software | White matter projection of gray matter values | Projection of receptor densities to white matter tracts [10] |
| Bayesian Model Selection | Comparison of competing models | Random effects BMS for group-level analysis [11] |
| Parametric Empirical Bayes | Hierarchical parameter modeling | PEB for between-subject variability in connection strengths [11] |

The integration of neurochemical data into biophysical models of brain circuits represents a paradigm shift in clinical neuroscience and drug development. The validation of these neurochemical-enriched models rests on their ability to explain person-to-person variability in brain connectivity through measurable molecular and cellular correlates [9], and to generate testable predictions about neurochemical dysfunction in neurological disorders such as stroke [10]. The multi-scale integration approach demonstrates that bridging biophysical scales requires cellular contextualization, as proteins alone were insufficient to explain functional connectivity differences without dendritic spine morphology data [9].

For drug development professionals, these integrated models offer unprecedented opportunities to understand how pharmacological interventions targeting specific neurotransmitter systems (acetylcholine, dopamine, noradrenaline, serotonin) affect whole-brain dynamics and connectivity [10]. The differentiation between presynaptic and postsynaptic injury provides a neurochemical basis for tailoring receptor agonists or transporter inhibitors to individual patient profiles [10]. Future developments will likely focus on expanding the range of neurotransmitter systems modeled, incorporating dynamic receptor binding parameters, and integrating real-time neurochemical measurements from techniques such as fast-scan cyclic voltammetry. As these models become increasingly refined and validated, they will accelerate the development of targeted therapies for neurological and psychiatric disorders based on individual neurochemical fingerprints.

The delicate balance between excitatory and inhibitory (E/I) neurotransmission is a fundamental principle of central nervous system (CNS) function. This equilibrium is primarily governed by the coordinated actions of the major excitatory neurotransmitter, glutamate, and the primary inhibitory neurotransmitter, gamma-aminobutyric acid (GABA). Disruptions in this E/I balance are implicated in a vast array of neurological and psychiatric disorders, including depression, schizophrenia, epilepsy, and neurodegenerative diseases [12] [13] [14]. Glutamate mediates its excitatory effects predominantly through ionotropic receptors, specifically N-methyl-D-aspartate (NMDA) and α-amino-3-hydroxy-5-methyl-4-isoxazolepropionic acid (AMPA) receptors, which are crucial for synaptic transmission, plasticity, and learning [15] [14]. In contrast, GABA exerts its inhibitory influence largely via ligand-gated chloride channels, the GABA-A receptors, which hyperpolarize neurons and reduce their firing probability [12]. The integration of these receptor systems defines the cortical E/I balance, and their modulation represents a pivotal target for therapeutic intervention. Contemporary research, particularly in the field of neurochemical-enriched Dynamic Causal Modeling (DCM), seeks to formalize these neurochemical mechanisms within a computational framework. This approach uses generative models to infer hidden neuronal states and their receptor-mediated interactions from non-invasive imaging data, thereby validating and refining our understanding of these key targets in health and disease [3] [16].

Target Profiles: Glutamate and GABA Receptor Systems

Glutamate Receptors: NMDA and AMPA

Ionotropic glutamate receptors are the main drivers of fast excitatory synaptic transmission. The NMDA and AMPA receptors have distinct but complementary roles.

NMDA Receptors: These receptors are heterotetrameric complexes, typically composed of two obligatory GluN1 subunits and two regulatory GluN2 subunits (e.g., GluN2A-D) [14]. Their activation requires both the binding of glutamate and the co-agonist glycine (or D-serine). A defining feature is their voltage-dependent block by magnesium ions (Mg²⁺), which is relieved upon sufficient depolarization of the postsynaptic membrane, often mediated by AMPA receptor activation. This property allows NMDA receptors to function as coincidence detectors of pre- and postsynaptic activity. Upon activation, they permit a substantial influx of calcium (Ca²⁺), which acts as a critical second messenger to trigger long-term potentiation (LTP), synaptic plasticity, and learning [14]. However, excessive NMDA receptor activation leads to excitotoxicity and neuronal death, a process implicated in stroke and neurodegenerative disorders [13] [14].

AMPA Receptors: These receptors are the primary workhorses of fast excitatory transmission, mediating the majority of basal synaptic currents. They are typically tetramers formed from combinations of GluA1-4 subunits [13] [17]. Unlike NMDA receptors, they are permeable primarily to sodium (Na⁺) and potassium (K⁺) ions, leading to rapid depolarization. The trafficking and synaptic density of AMPA receptors are dynamically regulated and are a core mechanism underlying synaptic plasticity and learning. Their activation is essential for depolarizing the postsynaptic membrane to relieve the Mg²⁺ block from NMDA receptors, thereby enabling their activation [13]. As such, the AMPA/NMDA ratio is a critical metric for assessing synaptic strength and E/I balance.
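The voltage-dependent Mg²⁺ block described above is often summarised by a simple sigmoid function of membrane potential. The sketch below uses the widely cited Jahr and Stevens parameterisation, which is an assumption brought in for illustration and does not come from the sources cited in this article. The function name `nmda_unblocked_fraction` is hypothetical.

```python
import math

def nmda_unblocked_fraction(v_mv, mg_mm=1.0):
    """Fraction of NMDA receptor conductance not blocked by Mg2+.

    v_mv  -- membrane potential in millivolts
    mg_mm -- extracellular Mg2+ concentration in millimolar
    Constants 3.57 mM and 0.062 per mV follow the Jahr-Stevens form (assumed here).
    """
    return 1.0 / (1.0 + (mg_mm / 3.57) * math.exp(-0.062 * v_mv))

# Near rest the channel is almost fully blocked; depolarisation (for example
# through AMPA receptor currents) progressively relieves the block.
for v in (-70.0, -40.0, 0.0):
    print(f"{v:6.1f} mV -> unblocked fraction {nmda_unblocked_fraction(v):.3f}")
```

The steep rise of this function between rest and 0 mV is what lets the receptor act as a coincidence detector: glutamate binding alone passes little current unless the postsynaptic membrane is already depolarised.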

Table 1: Comparative Profile of Ionotropic Glutamate Receptors

| Feature | NMDA Receptor | AMPA Receptor |
|---|---|---|
| Subunit Composition | GluN1 + GluN2 (A-D); GluN3 | GluA1-GluA4 |
| Endogenous Agonist | Glutamate & Glycine/D-Serine | Glutamate |
| Ion Permeability | Ca²⁺, Na⁺, K⁺ (High Ca²⁺) | Na⁺, K⁺ (Low Ca²⁺, except GluA2-lacking receptors) |
| Key Properties | Voltage-dependent Mg²⁺ block; Slow kinetics | Fast activation & desensitization; Rapid kinetics |
| Primary Function | Synaptic plasticity, Learning, Coincidence detection | Fast excitatory transmission, Membrane depolarization |
| Pathological Role | Excitotoxicity (stroke, neurodegeneration) | Seizures, Neurotoxicity from overstimulation |

GABA Receptors: The Primary Inhibitory System

GABA is the chief inhibitory neurotransmitter in the mature brain, synthesized from glutamate via the enzyme glutamic acid decarboxylase (GAD) [12].

- GABA-A Receptors: These are pentameric, ligand-gated chloride channels assembled from a variety of subunits (e.g., α1-6, β1-3, γ1-3, δ, etc.). When GABA binds, the channel opens, allowing chloride ions (Cl⁻) to flow into the neuron, leading to hyperpolarization and reduced neuronal excitability. This underlies fast inhibitory postsynaptic potentials (IPSPs). The diversity of subunit composition creates a vast array of receptor subtypes with distinct pharmacological properties and distributions, allowing for targeted therapeutic modulation [12].

- GABA-B Receptors: These are G-protein coupled receptors (GPCRs) that mediate slow and prolonged inhibitory signaling. They can function presynaptically to inhibit neurotransmitter release or postsynaptically to activate K⁺ channels, further contributing to hyperpolarization [12].
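Whether opening a GABA-A channel hyperpolarises a neuron depends on the chloride reversal potential, which the Nernst equation gives from the ion concentrations on either side of the membrane. The sketch below is a worked example only: the concentrations (120 mM outside, 7 mM inside) are illustrative values for a mature neuron and do not come from the cited sources, and the function name `nernst_mv` is hypothetical.

```python
import math

GAS_CONSTANT = 8.314      # J / (mol K)
FARADAY = 96485.0         # C / mol

def nernst_mv(conc_out_mm, conc_in_mm, valence, temp_k=310.0):
    """Nernst equilibrium potential in millivolts for one ion species."""
    volts = (GAS_CONSTANT * temp_k) / (valence * FARADAY) * math.log(conc_out_mm / conc_in_mm)
    return 1000.0 * volts

# ILLUSTRATIVE chloride concentrations; chloride carries a charge of -1.
e_cl = nernst_mv(conc_out_mm=120.0, conc_in_mm=7.0, valence=-1)
print(f"E_Cl = {e_cl:.1f} mV")
```

With these values the reversal potential sits slightly below a typical resting potential, so chloride influx through open GABA-A channels pulls the membrane away from firing threshold. A higher intracellular chloride concentration would shift this potential upward and weaken the inhibitory effect.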

The E/I balance is therefore not static but a dynamic interplay where glutamatergic excitation is constantly shaped and refined by GABAergic inhibition. A disruption in this balance—whether toward excess excitation (e.g., in epilepsy) or excess inhibition (e.g., impairing learning)—is a hallmark of many brain disorders [12].

Comparative Pharmacological Modulation and Experimental Data

Targeting NMDA, AMPA, and GABA receptors is a cornerstone of psychopharmacology. Recent breakthroughs, particularly with NMDA receptor antagonists, have transformed the therapeutic landscape for treatment-resistant conditions.

NMDA Receptor Antagonism as a Rapid-Antidepressant Strategy

A landmark finding is that a single, low dose of the NMDA channel blocker ketamine can produce rapid (within hours) antidepressant effects in patients with treatment-resistant depression (TRD) [18] [15]. Preclinical studies using a chronic unpredictable stress (CUS) model in rats demonstrate that this effect is not merely symptomatic but involves a rapid reversal of the neurobiological deficits caused by chronic stress. Ketamine (10 mg/kg, i.p.) rapidly ameliorated CUS-induced anhedonia and anxiety-like behaviors [18]. Mechanistically, it reversed the CUS-induced decrease in synaptic protein expression (e.g., synapsin I, PSD95), spine density, and the frequency/amplitude of excitatory postsynaptic currents (EPSCs) in layer V pyramidal neurons of the prefrontal cortex (PFC) [18]. Crucially, these behavioral and synaptic effects were abolished by pre-treatment with rapamycin, an inhibitor of the mTOR pathway, indicating that mTOR-dependent synaptogenesis is a key mechanism underlying ketamine's rapid antidepressant action [18].

Convergent Mechanisms of Rapid-Acting Antidepressants

While ketamine is the benchmark, research points to a convergent mechanism shared by many glutamatergic rapid-acting antidepressants (RAADs). These include novel agents such as the NMDA receptor antagonist esmethadone (REL-1017), the NMDA receptor modulator rapastinel, and positive allosteric modulators (PAMs) of AMPA receptors [15]. Despite different primary targets, these compounds ultimately enhance AMPA receptor activation relative to NMDA receptor activation. The resulting increase in AMPA receptor-mediated signaling leads to the release of brain-derived neurotrophic factor (BDNF), which subsequently activates the mTOR signaling pathway. The final common pathway is enhanced synaptic strengthening through increased AMPA receptor trafficking and the formation of new dendritic spines, which reverses the synaptic deficits associated with depression [15].

Table 2: Key Pharmacological Agents Targeting Glutamate Receptors

| Agent / Molecule | Primary Target | Key Experimental Finding | Functional Outcome |

|---|---|---|---|

| Ketamine | Non-competitive NMDA channel blocker | Reverses CUS-induced synaptic deficits in PFC (spine density, EPSCs) via mTOR [18] | Rapid antidepressant effect |

| Ro 25-6981 | Selective GluN2B (NR2B) NMDA antagonist | Rapidly ameliorates CUS-induced anhedonia and anxiety in rats [18] | Rapid antidepressant effect |

| GLP-1–MK-801 Conjugate | GLP-1R + NMDA antagonist | Targeted NMDA antagonism in hypothalamus/brainstem; synergistically lowers body weight in DIO mice without MK-801's adverse effects [19] | Effective obesity treatment |

| AMPA Potentiators (S18986) | AMPA Receptor PAM | Chronic admin in aging rats improved spatial memory, increased BDNF, protected against age-related neurochemical decline [13] | Cognitive enhancement, neuroprotection |

The following diagram illustrates this convergent pathway for rapid-acting antidepressants.

Novel Targeting Strategies: GLP-1-Directed NMDA Antagonism

Innovative drug development strategies are being employed to enhance efficacy and reduce side effects. A prime example is the creation of GLP-1–MK-801, a bimodal molecule that conjugates the potent NMDA receptor antagonist MK-801 to a glucagon-like peptide-1 (GLP-1) analogue via a cleavable disulfide linker [19]. This design leverages the high density of GLP-1 receptors in appetite-regulating brain regions like the hypothalamus and brainstem. The conjugate is designed to be inactive in plasma, only releasing active MK-801 intracellularly upon cleavage in GLP-1 receptor-expressing neurons. In diet-induced obese (DIO) mice, GLP-1–MK-801 produced synergistic and superior weight loss (vehicle-corrected: 23.2%) compared to monotherapies, while circumventing the hyperthermia and hyperlocomotion associated with systemic MK-801 administration [19]. This represents a pioneering approach to cell-specific ionotropic receptor modulation.

Experimental Protocols for Key Findings

Protocol 1: Assessing Rapid Antidepressant Efficacy in Rodents

This protocol is based on the CUS model detailed in [18].

- Animal Model: Male Sprague–Dawley rats.

- CUS Procedure: Animals are exposed to a variable sequence of two mild, unpredictable stressors per day for 21 days (e.g., cold ambient temperature, strobe light, tilted cages, food/water deprivation).

- Drug Administration: On day 21, a single intraperitoneal (i.p.) injection of vehicle, ketamine (10 mg/kg), or the NR2B-selective antagonist Ro 25-6981 (10 mg/kg) is administered.

- Behavioral Testing:

  - Sucrose Preference Test (SPT): Performed after 4h water deprivation. Measures anhedonia by calculating the ratio of sucrose solution consumed to total liquid consumed in 1 hour.

  - Novelty-Suppressed Feeding (NSF): After overnight food deprivation, the latency for a rodent to feed in a novel, anxiety-provoking open field is recorded. Home cage food consumption is measured immediately after to control for appetite.

- Molecular & Electrophysiological Analysis:

  - Immunoblotting: Prefrontal cortex (PFC) synaptoneurosomes are analyzed for synaptic proteins (e.g., PSD95, Synapsin I).

  - Slice Electrophysiology: Brain slices are prepared 24h post-treatment. Layer V PFC pyramidal neurons are patched, and spontaneous EPSCs are recorded.

- mTOR Pathway Blockade: To test mechanism, the mTOR inhibitor rapamycin (0.2 nmol in 2 μl) or vehicle is infused intracerebroventricularly (i.c.v.) 30 minutes before ketamine injection.
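The sucrose preference measure used in Protocol 1 reduces to a simple ratio. The following is a minimal Python sketch, assuming intake is recorded as volumes in mL; the function name and the example intakes are hypothetical and are not taken from [18].

```python
def sucrose_preference(sucrose_ml: float, water_ml: float) -> float:
    """Return sucrose intake as a fraction of total liquid intake."""
    total = sucrose_ml + water_ml
    if total <= 0:
        raise ValueError("total liquid intake must be positive")
    return sucrose_ml / total


# Hypothetical 1-hour intakes (mL) for a single animal.
preference = sucrose_preference(sucrose_ml=6.0, water_ml=2.0)
print(f"sucrose preference: {preference:.2f}")
```

A lower ratio in stressed animals than in controls would be read as anhedonia-like behavior; the guard against zero intake avoids a silent division error when an animal does not drink.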

Protocol 2: Evaluating a Novel Bimodal Therapeutic (GLP-1–MK-801)

This protocol is derived from the study on GLP-1–MK-801 [19].

- Molecule Design: A stabilized GLP-1 analogue is conjugated to MK-801 via a self-immolative disulfide linker, with a C-terminal l-penicillamine residue to optimize plasma stability.

- In Vitro Validation:

  - Receptor Signaling: GLP-1–MK-801's signaling potency at the GLP-1 receptor is confirmed via cAMP assays and compared to parent GLP-1, semaglutide, and liraglutide.

  - Target Engagement: Electrophysiological recordings in GLP-1-receptor-positive neurons in the arcuate nucleus demonstrate that GLP-1–MK-801, but not GLP-1 alone, suppresses NMDA-induced inward currents.

- In Vivo Metabolic Phenotyping:

  - Subjects: Diet-induced obese (DIO) mice.

  - Dosing: Once-daily subcutaneous (s.c.) injections of vehicle, GLP-1 analogue, MK-801, or GLP-1–MK-801 for 14 days.

  - Outcome Measures: Body weight and food intake are tracked daily. Body composition (fat/lean mass) is measured. Plasma insulin, cholesterol, and triglycerides are analyzed. Energy expenditure and respiratory exchange ratio (RER) are assessed in metabolic cages. Adverse effect profiling (e.g., hyperthermia, hyperlocomotion) is conducted.
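A vehicle-corrected weight change, as reported for this protocol, can be computed from group-mean body weights. The sketch below assumes that "vehicle-corrected" means the percent change in the treated group minus the percent change in the vehicle group; that definition, the function names, and the example weights are assumptions for illustration and are not taken from [19].

```python
def percent_change(baseline_g: float, final_g: float) -> float:
    """Percent change in body weight relative to baseline."""
    return 100.0 * (final_g - baseline_g) / baseline_g


def vehicle_corrected_loss(treated, vehicle) -> float:
    """Weight loss in percentage points beyond the vehicle group.

    treated, vehicle: (baseline_g, final_g) group-mean body weights.
    """
    return percent_change(*vehicle) - percent_change(*treated)


# Hypothetical group means (g): treated animals lose 20%, vehicle animals gain 2%.
loss = vehicle_corrected_loss(treated=(50.0, 40.0), vehicle=(50.0, 51.0))
print(f"vehicle-corrected weight loss: {loss:.1f} percentage points")
```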

Integration with Neurochemical-Enriched Dynamic Causal Modeling (DCM)

The empirical data on receptor function and pharmacological modulation provides a critical foundation for building and validating computational models of brain function. Neurochemical-enriched DCM is a Bayesian framework that aims to infer hidden neuronal states and their connectivity from non-invasive neuroimaging data [3] [16].

Traditional neural mass models used in DCM represent populations of neurons as point sources, described by ordinary differential equations (ODEs). However, neural field models extend this by modeling current fluxes as continuous processes on the cortical manifold using partial differential equations (PDEs) [16]. This allows for the explicit incorporation of spatial parameters, such as the density and extent of lateral connections between neuronal units. The activity in these models is shaped by the intrinsic connectivity and the specific neurotransmitter systems—glutamate and GABA—that mediate interactions between different neuronal populations (e.g., pyramidal cells and interneurons) [16].
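To make the point-source ODE formulation concrete, the sketch below integrates the second-order synaptic kernel commonly used in neural mass models, x'' = (H/τ)u − (2/τ)x' − x/τ², for a single population receiving a unit impulse. It is a toy under stated assumptions: the gain H, the time constant τ, and the function name are illustrative choices, and the sketch does not implement the spatially extended (PDE) neural field case.

```python
import numpy as np


def simulate_synaptic_response(H=4.0, tau=0.008, dt=1e-5, t_end=0.1):
    """Forward-Euler integration of x'' = -(2/tau) x' - x/tau**2 after a unit impulse.

    H (mV) and tau (s) are illustrative values, not estimates from any study.
    """
    n_steps = int(round(t_end / dt))
    t = np.arange(n_steps) * dt
    x = np.zeros(n_steps)  # population-average depolarization
    v = H / tau            # a unit impulse at t = 0 sets the initial rate of change
    for i in range(1, n_steps):
        acc = -2.0 * v / tau - x[i - 1] / tau**2
        x[i] = x[i - 1] + dt * v
        v += dt * acc
    return t, x


t, x = simulate_synaptic_response()
peak = int(np.argmax(x))
print(f"peak {x[peak]:.3f} mV at {1e3 * t[peak]:.2f} ms")
```

The analytic impulse response of this kernel is (H/τ)·t·exp(−t/τ), which peaks at t = τ with height H/e, so the simulated peak offers a quick sanity check on the integration step.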

By integrating the quantitative pharmacological data from the previous sections, such as how an NMDA antagonist alters synaptic efficacy and network oscillations, researchers can construct more biologically constrained DCMs. For instance, the known role of NMDA receptors in synaptic plasticity and of GABA-A receptors in inhibitory gain control can be encoded as informative priors on the model parameters. The workflow below illustrates how empirical research and computational modeling interact.

A key application is the use of Magnetic Resonance Spectroscopy (MRS) in conjunction with magnetoencephalography (MEG). MRS can provide in vivo measurements of regional glutamate and GABA levels [3]. These neurochemical measurements can then be used to inform the parameters of a DCM that is used to explain concurrently acquired MEG data. This allows researchers to test specific hypotheses, such as whether altered E/I balance in a patient group is best explained by a deficiency in GABAergic inhibition or an excess of glutamatergic excitation, thereby bridging the gap between molecular pharmacology and systems-level neuroscience.
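One simple way to picture how an MRS measurement could inform a DCM parameter is as a shift in the prior mean of that parameter, which is then combined with the evidence from the electrophysiological data. The sketch below uses a scalar conjugate Gaussian update for a single log-scaling parameter on inhibitory gain. The linear mapping from GABA level to prior mean, its slope, and all numerical values are assumptions for illustration; this is not the scheme used in [3].

```python
def mrs_informed_prior(gaba_z: float, slope: float = 0.5, prior_var: float = 0.25):
    """Gaussian prior (mean, variance) on a log-scaling of inhibitory gain.

    gaba_z is a z-scored regional GABA estimate; slope is a hypothetical mapping.
    """
    return slope * gaba_z, prior_var


def gaussian_posterior(prior_mean, prior_var, obs, obs_var):
    """Precision-weighted combination of a Gaussian prior and a Gaussian observation."""
    post_prec = 1.0 / prior_var + 1.0 / obs_var
    post_mean = (prior_mean / prior_var + obs / obs_var) / post_prec
    return post_mean, 1.0 / post_prec


# A participant with low GABA (z = -1) and an MEG-derived estimate of 0.1.
prior_mean, prior_var = mrs_informed_prior(gaba_z=-1.0)
post_mean, post_var = gaussian_posterior(prior_mean, prior_var, obs=0.1, obs_var=0.25)
print(f"posterior mean {post_mean:.2f}, variance {post_var:.3f}")
```

With equal prior and observation variances the posterior mean sits halfway between the MRS-informed prior mean and the data-driven estimate, which shows how strongly the neurochemical constraint can pull the inference when it is given a tight prior.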

Table 3: Essential Research Reagents for Investigating E/I Balance

| Reagent / Resource | Function / Application | Example Use Case |

|---|---|---|

| Ketamine | Non-competitive NMDA receptor channel blocker. | Probe rapid antidepressant mechanisms in rodent stress models (e.g., CUS) [18]. |

| Ro 25-6981 | Selective antagonist for NMDA receptors containing the GluN2B subunit. | Study the specific role of GluN2B-containing receptors in plasticity and behavior [18]. |

| Rapamycin | Specific inhibitor of the mTOR protein synthesis pathway. | Determine the dependency of synaptogenesis and behavioral effects on mTOR signaling [18]. |

| AMPA Potentiators (PAMs) | Positive allosteric modulators (e.g., S18986) that enhance AMPA receptor function. | Investigate cognitive enhancement, neuroprotection, and antidepressant efficacy [15] [13]. |

| Bicuculline | Competitive GABA-A receptor antagonist. | Induce disinhibition and study the consequences of reduced GABAergic tone in circuits [12]. |

| Muscimol | Potent GABA-A receptor agonist. | Mimic enhanced inhibition and study its effects on network activity and behavior. |

| MRS (Magnetic Resonance Spectroscopy) | Non-invasive in vivo measurement of brain metabolite levels (Glu, GABA). | Correlate regional neurochemistry with behavior or model parameters in DCM studies [3]. |

| iPS Cell-Derived Neurons | Human neuronal cultures from induced pluripotent stem cells. | Model patient-specific disorders and perform in vitro psychopharmacological screens [20]. |

The precise regulation of cortical E/I balance by glutamate (via NMDA and AMPA receptors) and GABA systems is indispensable for normal brain function. The empirical data show that targeted pharmacological modulation of these receptors, exemplified by the rapid antidepressant action of ketamine and the design of GLP-1–MK-801 for obesity, holds considerable therapeutic promise. The convergence of diverse RAADs on a final common pathway of mTOR-mediated synaptogenesis provides a unifying neurobiological framework for drug development. Moving forward, integrating these pharmacological data into computational frameworks such as neurochemical-enriched DCM is a vital step. This synergy between molecular experimentation and computational modeling will enable a more principled, mechanistic approach to validating hypotheses about brain dysfunction in neurological and psychiatric disorders, ultimately guiding the development of more effective and targeted treatments.

In the pursuit of understanding complex brain disorders, Dynamic Causal Modelling (DCM) has emerged as a powerful Bayesian framework for inferring hidden neuronal states from neuroimaging data. This approach enables researchers to formulate and test explicit hypotheses about the neurobiological mechanisms that underlie pathological conditions. When enriched with neurochemical constraints, DCM provides a unique window into the synaptic and receptor-level dysfunctions that characterize diseases as seemingly distinct as Alzheimer's disease (AD) and schizophrenia (SZ). Both disorders exhibit profound disruptions in large-scale brain networks, yet through different molecular pathways: while AD is increasingly recognized as a synaptopathy with progressive synaptic failure, schizophrenia manifests as a dysconnection syndrome with altered synaptic gain and signal integration.

This review integrates evidence from recent studies employing neurochemistry-enriched DCM to bridge the gap between molecular pathology and systems-level dysfunction. By comparing the specific parameter estimates derived from DCM in these two conditions, we aim to establish a common framework for understanding how distinct etiological pathways converge on similar network-level phenotypes, thereby informing targeted therapeutic interventions.

Theoretical Foundations: Predictive Coding and Selective Neuronal Vulnerability

The theoretical underpinning of many DCM applications in psychiatry and neurology rests on hierarchical predictive coding frameworks. In this model, the brain continuously generates top-down predictions about sensory inputs and updates these predictions based on bottom-up prediction errors. The precision or confidence assigned to prediction errors is thought to be encoded by the postsynaptic gain of superficial pyramidal cells, which is regulated by inhibitory interneurons and neuromodulatory systems [21].
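The role of precision in this account can be illustrated with a scalar Gaussian toy model, in which the belief update is the prediction error scaled by the relative precision of the sensory input. This is a didactic sketch only: the function name and values are hypothetical, and it does not represent the laminar circuit model described in [21].

```python
def precision_weighted_update(prediction, observation, prior_precision, sensory_precision):
    """Update a prediction by a precision-weighted prediction error."""
    gain = sensory_precision / (sensory_precision + prior_precision)
    prediction_error = observation - prediction
    return prediction + gain * prediction_error


# Same prediction error, different confidence in the sensory input.
confident = precision_weighted_update(0.0, 1.0, prior_precision=1.0, sensory_precision=4.0)
uncertain = precision_weighted_update(0.0, 1.0, prior_precision=1.0, sensory_precision=0.25)
print(f"high sensory precision: {confident:.2f}, low sensory precision: {uncertain:.2f}")
```

In this toy, the gain plays the part attributed above to postsynaptic gain: the same prediction error moves the belief far more when sensory precision is high than when it is low.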

In schizophrenia, research suggests a fundamental failure in predictive coding, where patients show an impaired ability to adjust the precision of sensory predictions based on contextual cues. This manifests behaviorally as a difficulty in filtering irrelevant information and perceptually as a misattribution of significance to sensory events, potentially underlying positive symptoms like hallucinations and delusions [21]. Neurobiologically, this is linked to dysregulated NMDA receptor function and aberrant neuromodulation of cortical gain control, particularly in supragranular cortical layers where dopamine D1 and NMDA receptors are densely expressed [21].

Alzheimer's disease, while traditionally considered a neurodegenerative condition, also shows early disturbances in predictive processing. The default mode network (DMN), which is central to internally directed cognition, shows particularly early vulnerability in AD [22]. The progressive synaptopathy observed in AD begins with functional alterations in synaptic transmission before culminating in structural synapse loss and neuronal death [23]. DCM studies reveal that AD targets specific receptor systems and laminar-specific connections within cortical hierarchies, with emerging evidence for differential effects on AMPA versus NMDA receptor-mediated neurotransmission [22].

Table 1: Theoretical Constructs Linking Molecular Pathology to Network Dysfunction

| Theoretical Construct | Alzheimer's Disease Manifestation | Schizophrenia Manifestation |

|---|---|---|

| Predictive Coding | DMN connectivity alterations; impaired memory prediction | Failure to contextualize sensory input; aberrant salience |

| Synaptic Dysfunction | Progressive synaptopathy preceding neuronal loss | Dysconnection without degeneration |

| Receptor Specificity | Selective NMDA/AMPA receptor alterations | NMDA hypofunction; dopaminergic dysregulation |

| Network Impact | Default mode network disruption | Thalamocortical & frontotemporal dysconnection |

| Excitation/Inhibition Balance | Early hyperexcitability followed by hypoactivity | Context-dependent E/I imbalance |

Dynamic Causal Modelling: A Primer on Methodology

Dynamic Causal Modelling represents a fundamental shift from descriptive connectivity analyses to model-based approaches that test explicit mechanistic hypotheses. DCM uses Bayesian model inversion to infer the hidden neuronal states and connection parameters that best explain observed neuroimaging data. Unlike functional connectivity, which measures statistical dependencies, DCM estimates effective connectivity—the directed, causal influence that one neural system exerts over another [24].

The fundamental innovation of neurochemistry-enriched DCM lies in its incorporation of neurotransmitter concentrations as empirical priors on synaptic parameters. In one implementation, magnetic resonance spectroscopy (MRS) estimates of regional GABA and glutamate concentrations constrain the parameter space of canonical microcircuit models applied to MEG data [4]. This creates a biophysically plausible link between molecular specificity and systems-level dynamics.

Recent methodological extensions include:

- Longitudinal DCM: Models disease progression by incorporating repeated measures and testing specific hypotheses about temporal evolution of parameters [22]

- Stochastic DCM: Accounts for endogenous fluctuations in neuronal states, enabling analysis of resting-state data without experimental manipulations [24]

- Nonlinear DCM: Captures modulatory effects and interactions that cannot be explained by simple linear models [25]

- Parametric Empirical Bayes: Enables hierarchical modeling across subjects and groups while incorporating neurochemical constraints [4]
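The hierarchical idea behind the last item can be sketched as a second-level Bayesian linear model, in which each subject's DCM parameter estimate is explained by group-level regressors such as a z-scored MRS measure. The sketch below is a simplification under stated assumptions: between-subject variance is fixed rather than estimated, and the function name, prior variance, and synthetic data are hypothetical rather than taken from [4].

```python
import numpy as np


def group_level_posterior(theta, first_level_var, X, between_var=0.01, prior_var=1e6):
    """Posterior over group effects beta in theta = X @ beta + noise.

    Per-subject noise variance is first_level_var + between_var (both treated as known).
    """
    theta = np.asarray(theta, dtype=float)
    X = np.asarray(X, dtype=float)
    noise_prec = 1.0 / (np.asarray(first_level_var, dtype=float) + between_var)
    prior_prec = np.eye(X.shape[1]) / prior_var
    post_cov = np.linalg.inv(X.T @ (noise_prec[:, None] * X) + prior_prec)
    post_mean = post_cov @ (X.T @ (noise_prec * theta))
    return post_mean, post_cov


# Synthetic example: five subjects whose parameter depends linearly on GABA level.
gaba_z = np.linspace(-1.0, 1.0, 5)
X = np.column_stack([np.ones_like(gaba_z), gaba_z])  # group mean and MRS regressor
theta = 0.2 + 0.5 * gaba_z                           # noise-free first-level estimates
beta, beta_cov = group_level_posterior(theta, np.full(5, 0.01), X)
print("group mean and MRS effect:", np.round(beta, 3))
```

Subjects with more uncertain first-level estimates receive less weight at the group level, which is the main practical difference from an ordinary regression on point estimates.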

Alzheimer's Disease: Modelling Synaptopathy and Network Degeneration

Experimental Protocols and DCM Parameterization

Recent DCM studies of Alzheimer's disease have employed sophisticated longitudinal designs to track disease progression. One protocol [22] involved:

- Participants: 29 individuals with amyloid-positive mild cognitive impairment and early Alzheimer's dementia

- Timeline: Baseline and follow-up assessments after an average interval of 16 months

- Imaging: Resting-state magnetoencephalography (MEG) focusing on the default mode network

- Model Features:

  - Regional specificity to accommodate variability in disease burden across brain regions

  - Dual parameterization of excitatory neurotransmission to distinguish AMPA vs. NMDA receptor contributions

  - Constraints to test specific clinical hypotheses about disease progression

The DCM implementation incorporated three key innovations: (1) region-specific contributions of cortical laminar activities, (2) separate parameterization of AMPA and NMDA receptor-mediated neurotransmission, and (3) condition-specific parameters to model disease progression between timepoints [22].

Key Findings and Parameter Estimates

Bayesian model comparison revealed strong evidence for regional specificity of Alzheimer's effects, with selective changes in NMDA receptor-mediated neurotransmission rather than uniform effects across receptor types. The most prominent changes occurred in connectivity within and between the precuneus and medial prefrontal cortex—key hubs of the DMN. Furthermore, individual differences in the severity of connectivity alterations correlated with measures of cognitive decline, suggesting their potential utility as biomarkers for tracking disease progression [22].

Table 2: DCM Parameter Changes in Alzheimer's Disease

| Parameter Type | Brain Regions | Direction of Change | Clinical Correlation |

|---|---|---|---|

| NMDA-mediated connectivity | Precuneus ↔ Medial PFC | Progressive reduction | Correlated with cognitive decline |

| AMPA-mediated connectivity | DMN nodes | Less affected than NMDA | Weak correlation with symptoms |

| Inhibitory connectivity | Multiple cortical regions | Variable alterations | Associated with neuropsychiatric symptoms |

| Longitudinal changes | Default Mode Network | Progressive deterioration | Predictive of clinical progression |

The synaptic basis of these network-level alterations finds support in molecular studies. Post-mortem analyses of AD brains reveal substantial synapse loss that correlates better with cognitive impairment than amyloid plaque or neurofibrillary tangle burden [23]. There are also specific alterations in synaptic receptor expression, including reductions in GluA1, GluA2, GluN1, GluN2A, and GluN2B subunits [23]. These molecular changes manifest functionally as impaired long-term potentiation and disrupted oscillatory activity, which can be captured by neurophysiological measures like MEG.

Schizophrenia: Mapping Receptor Dysfunction to Circuit-Level Dysconnection

Experimental Protocols and DCM Parameterization

Schizophrenia research using DCM has focused extensively on thalamocortical circuits and hierarchical processing. One seminal study [21] employed:

- Participants: 25 schizophrenia patients and 25 age-matched controls

- Task: Processing of predictable versus unpredictable visual targets during EEG recording

- Model Features:

  - Focus on extrinsic (between-region) and intrinsic (within-region) connectivity

  - Specific hypotheses about excitability of superficial pyramidal cells

  - Precision encoding via strength of inhibitory recurrent connections

Another study using stochastic DCM for resting-state fMRI [24] examined the default mode network in first-episode schizophrenia patients, testing specific hypotheses about afferent connectivity to the anterior frontal node based on predictive coding accounts of psychosis.

Key Findings and Parameter Estimates

DCM studies consistently reveal abnormal effective connectivity in schizophrenia, particularly affecting backward connections from higher to lower hierarchical levels [21]. Patients show attenuated modulation of intrinsic connectivity when processing predictable versus unpredictable targets, suggesting a failure to optimize precision weighting of prediction errors based on contextual cues [21].

In the DMN, stochastic DCM revealed reduced effective connectivity to the anterior frontal node, reflecting impaired postsynaptic efficacy of prefrontal afferents [24]. This finding aligns with the neurodevelopmental hypothesis of schizophrenia, which posits altered maturation of frontal-related circuits.

Table 3: DCM Parameter Changes in Schizophrenia

| Parameter Type | Neural Circuits | Direction of Change | Clinical Correlation |

|---|---|---|---|

| Backward connectivity | Higher → Lower levels | Reduced modulation | Correlated with reality distortion |

| Intrinsic inhibition | Superficial pyramidal cells | Altered gain control | Associated with perceptual abnormalities |

| Thalamocortical connectivity | MD thalamus–PFC | Reduced nonlinear modulation | Related to psychotic symptoms |

| Precision encoding | Prediction error units | Context-dependent deficits | Correlated with formal thought disorder |

The receptor basis of these connectivity alterations involves primarily NMDA receptor hypofunction and dopaminergic dysregulation. Unlike Alzheimer's, schizophrenia does not typically involve neurodegenerative changes but rather a functional dysregulation of synaptic transmission. Post-mortem studies show altered expression of NMDA receptor subunits and dopamine receptors, particularly in superficial cortical layers where pyramidal cells encoding prediction errors reside [21].

Comparative Analysis: Cross-Disease Insights from Model Parameters

Despite their distinct etiologies and clinical presentations, Alzheimer's disease and schizophrenia share intriguing similarities in their network-level manifestations when examined through the lens of DCM. Both conditions show preferential targeting of specific receptor systems—particularly NMDA receptor-mediated transmission—though through different pathological mechanisms. In AD, NMDA dysfunction emerges from the toxic proteinopathy and subsequent synaptic loss, while in SZ, it reflects neurodevelopmental alterations in receptor regulation and signaling.

A key difference emerges in the longitudinal trajectory of these connectivity alterations. Alzheimer's disease demonstrates progressive deterioration of network integrity that correlates with clinical decline [22], while schizophrenia exhibits relatively stable dysconnection patterns after disease onset, consistent with its neurodevelopmental rather than neurodegenerative nature.

Notably, both disorders affect higher-order associative networks, albeit with different emphases: AD most prominently affects the default mode network, while SZ targets executive control and salience networks alongside DMN alterations. This network selectivity aligns with the characteristic cognitive profiles of each disorder—episodic memory deficits in AD versus executive dysfunction and reality distortion in SZ.

Table 4: Essential Research Tools for Neurochemistry-Enriched DCM Studies

| Tool Category | Specific Examples | Research Function | Key Features |

|---|---|---|---|

| Neuroimaging Modalities | MEG, EEG, fMRI (resting-state & task-based) | Source-level neural activity recording | High temporal resolution; whole-brain coverage |

| Neurochemical Mapping | 7T Magnetic Resonance Spectroscopy (MRS) | In vivo neurotransmitter concentration measurement | GABA/glutamate quantification; regional specificity |

| Biophysical Modeling | Dynamic Causal Modelling (DCM) software | Bayesian model inversion and comparison | Tests mechanistic hypotheses; multiple variants available |

| Analysis Platforms | SPM12, FSL, FreeSurfer | Data preprocessing and anatomical analysis | Standardized pipelines; reproducibility |

| Validation Tools | PET receptor ligands, post-mortem histology | Cross-validation of model parameters | Molecular specificity; ground truth verification |

Future Directions: Toward Clinically Actionable Model Parameters

The integration of neurochemical measurements with dynamic causal modeling represents a promising avenue for computational psychiatry and neurology. Future developments will likely include:

- Multi-modal integration: Combining MEG/EEG with fMRI and MRS in unified modeling frameworks

- Genetically-informed models: Incorporating polygenic risk scores and specific genetic variants as priors on model parameters [26]

- Drug development applications: Using DCM parameters as target engagement biomarkers and predictive tools for treatment response

- Cross-disease comparisons: Systematic characterization of common and distinct network motifs across the neuropsychiatric spectrum

Emerging evidence of genetic overlap between schizophrenia spectrum disorders and Alzheimer's disease [26] suggests potential shared pathophysiological mechanisms that could be elucidated through comparative DCM studies. Similarly, documented white matter abnormalities common to both disorders [27] point to the need for integrated models that incorporate both structural and functional connectivity.

The ultimate validation of neurochemistry-enriched DCM will come from its ability to guide targeted therapeutic interventions based on individual patterns of network dysfunction. As these models become more refined and validated against molecular and clinical measures, they hold the potential to transform how we classify, diagnose, and treat complex brain disorders.

Methodology and Translational Applications: From Model Fitting to Clinical Trial Design

Dynamic Causal Modeling (DCM) represents a fundamental shift from conventional neuroimaging analyses, moving beyond descriptive observations to test explicit hypotheses about the neurobiological mechanisms that generate observed brain signals [28]. For magneto- and electroencephalography (M/EEG), DCM uses a spatiotemporal model in which the temporal component is formulated in terms of neurobiologically plausible dynamics of interacting neuronal populations [28] [29]. While traditional DCM has provided invaluable insights into network architectures and effective connectivity, a significant frontier has emerged: the incorporation of neurochemical parameterization to bridge the critical gap between macroscale dynamics and microscale synaptic mechanisms.

This evolution addresses a central challenge in translational neuroscience. The effects of neurodegenerative diseases and pharmacological interventions are often understood at the level of specific neurotransmitter systems, yet non-invasive human neuroimaging measures brain function at the macroscopic scale [22] [30]. Advanced DCM frameworks now tackle this "circular explanatory gap" by incorporating parameters that represent distinct neurochemical processes, enabling researchers to make mechanistic inferences about receptor-specific dysfunction and drug effects directly from M/EEG data [22]. This guide examines the methodology, validation, and practical application of these neurochemically-enriched DCM frameworks, providing a comprehensive resource for researchers and drug development professionals seeking to leverage these powerful analytical tools.

Core Methodological Framework: From Neural Masses to Neurochemical Specificity

Foundations of DCM for M/EEG

The foundational DCM framework for M/EEG models the brain as a dynamic input-output system. It assumes that sensory inputs are processed by a network of interacting neuronal sources, with each source described using a neural mass model that approximates the average activity of cortical macrocolumns [28]. A typical canonical microcircuit (CMC) model within DCM represents three key neuronal subpopulations arranged in a laminar structure: granular (spiny stellate cells), supragranular (pyramidal cells and inhibitory interneurons), and infragranular layers (pyramidal cells and inhibitory interneurons) [28] [30]. These populations are connected through intrinsic connections within a source, and brain regions are linked via extrinsic connections (forward, backward, and lateral) that follow anatomical principles [28]. The resulting neuronal dynamics are described by a set of differential equations, and the observed M/EEG signals are generated via a forward model that maps the depolarization of pyramidal cells to sensor readings through a lead field [28].
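The final step of this generative model, the mapping from source activity to sensor readings, is linear and can be written as a matrix product with the lead field. The sketch below uses a hypothetical lead field for two sources and three sensors and arbitrary source time courses; the values are assumptions for illustration and do not come from a real head model.

```python
import numpy as np


def forward_model(lead_field, source_activity):
    """Map source time courses (n_sources, n_times) to sensors (n_sensors, n_times)."""
    return lead_field @ source_activity


# Hypothetical gains from two sources to three sensors.
lead_field = np.array([[1.0, 0.2],
                       [0.5, 0.5],
                       [0.1, 0.9]])

t = np.linspace(0.0, 0.2, 201)                   # 200 ms of simulated time
sources = np.vstack([np.sin(2 * np.pi * 10 * t),  # source 1: 10 Hz oscillation
                     np.exp(-t / 0.05)])          # source 2: decaying transient
sensors = forward_model(lead_field, sources)
print(sensors.shape)
```

Each sensor records a weighted mixture of all sources, which is why the neuronal model and the lead field have to be inverted together rather than reading connectivity directly from sensor-level signals.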

Table: Core Components of a Standard DCM for M/EEG

| Component | Description | Neurobiological Interpretation |

|---|---|---|

| Neural Mass Model | Simplified model of a cortical macrocolumn | Average dynamics of neuronal populations |

| Neuronal Subpopulations | Typically three subpopulations per source | Represent different cell types in layered cortex |

| Intrinsic Connections | Connections within a single neural source | Local circuit dynamics (excitatory/inhibitory) |

| Extrinsic Connections | Connections between different neural sources | Long-range cortico-cortical pathways |

| Lead Field | Linear mapping from source activity to sensors | Accounts for volume conduction effects |

| Parameter Estimation | Variational Bayesian inversion | Optimizes model parameters given observed data |

Incorporating Neurochemical Parameterization

Recent advances in DCM have introduced parameterizations that move beyond generic excitatory and inhibitory neurotransmission to model specific receptor-mediated signaling. This neurochemical enrichment enables more precise hypotheses about disease mechanisms and drug effects. Two key methodological innovations include:

Dual Glutamatergic Parameterization: Standard neural mass models typically employ a single parameter for excitatory (glutamatergic) neurotransmission. Neurochemically-enriched DCM introduces separate parameters for AMPA receptor-mediated and NMDA receptor-mediated synaptic transmission [22]. This distinction is critical because these receptor subtypes have different kinetic properties and roles in neural computation, and they can be differentially affected in pathological states. For example, Alzheimer's disease may preferentially affect NMDA receptor function [22].

Region-Specific Receptor Constraints: Another approach incorporates empirical data on regional neurotransmitter receptor densities derived from post-mortem autoradiography studies [30]. These molecular characteristics serve as empirical priors that constrain the estimation of synaptic connectivity parameters during model inversion. This effectively creates a bridge between the molecular architecture of a region and its large-scale electrophysiological signatures.
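The case for separate AMPA and NMDA parameters rests on their different kinetics and on the voltage dependence of the NMDA channel. The sketch below contrasts a fast and a slow synaptic kernel and evaluates a widely used sigmoidal approximation of the Mg²⁺ block (the form given by Jahr and Stevens, 1990). The time constants and function names are illustrative assumptions, not the parameter values of the model in [22].

```python
import numpy as np


def mg_block(v_mv, mg_mm=1.0):
    """Fraction of NMDA channels unblocked at membrane potential v_mv (in mV)."""
    return 1.0 / (1.0 + (mg_mm / 3.57) * np.exp(-0.062 * v_mv))


def synaptic_kernel(t, tau):
    """Alpha-function kernel normalized to a unit peak at t = tau."""
    return (t / tau) * np.exp(1.0 - t / tau)


t = np.linspace(0.0, 0.5, 5001)       # seconds
ampa = synaptic_kernel(t, tau=0.004)  # fast kernel (assumed 4 ms)
nmda = synaptic_kernel(t, tau=0.100)  # slow kernel (assumed 100 ms)

print(f"AMPA peaks at {1e3 * t[np.argmax(ampa)]:.0f} ms, "
      f"NMDA at {1e3 * t[np.argmax(nmda)]:.0f} ms")
print(f"unblocked NMDA fraction at -70 mV: {mg_block(-70.0):.3f}, at 0 mV: {mg_block(0.0):.3f}")
```

Because the NMDA contribution depends on both a slow time constant and the postsynaptic membrane potential, it leaves a different signature in the modeled response than the AMPA contribution, which is what allows the two to be parameterized separately.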

Figure: Workflow for Neurochemically-Constrained DCM. Molecular constraints inform the neural mass model, which is inverted using Bayesian approaches to yield receptor-specific parameter estimates.

The inversion of these enriched models and subsequent model selection rely on Bayesian frameworks. Variational Laplace estimates the posterior distribution of the neurochemical parameters, while Bayesian model comparison lets researchers test competing hypotheses about which receptor systems are affected in a particular condition [28] [22]. This statistical framework is essential for making valid inferences about neurochemical mechanisms from non-invasive data.
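As a rough illustration of Bayesian model comparison, the sketch below converts log model evidences (as approximated by the variational free energy) into posterior model probabilities under a flat prior over models. The model names and evidence values are hypothetical, chosen only to show the arithmetic.

```python
import math

def posterior_model_probabilities(log_evidence):
    """Softmax over log evidences, assuming equal prior probability for each model."""
    best = max(log_evidence.values())
    # Subtract the maximum before exponentiating to avoid numerical underflow
    weights = {name: math.exp(value - best) for name, value in log_evidence.items()}
    total = sum(weights.values())
    return {name: weight / total for name, weight in weights.items()}

# Hypothetical free-energy approximations to log evidence (illustrative values)
log_evidence = {
    "single_excitatory": -1250.0,
    "ampa_change": -1243.0,
    "nmda_change": -1240.0,
}

posterior = posterior_model_probabilities(log_evidence)
log_bayes_factor = log_evidence["nmda_change"] - log_evidence["ampa_change"]

for name, probability in posterior.items():
    print(f"{name:>18}: p = {probability:.4f}")
print(f"log Bayes factor (NMDA vs AMPA): {log_bayes_factor:.1f}")
```

A log evidence difference of about 3 corresponds to a Bayes factor of roughly 20, which is the conventional threshold for describing evidence as strong.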

Comparative Analysis: Neurochemical DCM vs. Alternative Modeling Approaches

The landscape of computational models for M/EEG analysis is diverse, with each approach offering distinct strengths and limitations. Understanding how neurochemically-enriched DCM compares to alternative frameworks is essential for selecting the appropriate tool for specific research questions.

Table: Comparison of Modeling Approaches for M/EEG Analysis

| Framework | Primary Strength | Neurochemical Specificity | Hypothesis Testing Framework | Translational Utility |
|---|---|---|---|---|
| Neurochemical DCM | Explicit receptor-level parameterization; Direct hypothesis testing | High (AMPA/NMDA, GABA-A, regional receptor densities) | Strong (Bayesian model comparison) | High (Direct mapping to drug targets) |
| Standard DCM | Network connectivity inference; Biophysical plausibility | Medium (Generic excitatory/inhibitory) | Strong (Bayesian model comparison) | Medium (Circuit-level effects) |
| The Virtual Brain (TVB) | Whole-brain network modeling; Multi-scale integration | Low to Medium (Varies with node model) | Moderate | Medium (Large-scale dynamics) |
| Human Neocortical Neurosolver (HNN) | Single-source detailed modeling; Laminar resolution | Medium (Can incorporate receptor kinetics) | Limited | Low to Medium (Mechanistic insights) |
| FieldTrip/EEGLAB | Data-driven analysis; Flexibility | None | Limited (Statistical comparisons) | Low (Phenomenological descriptions) |

Neurochemical DCM's distinctive advantage lies in its balance between biological specificity and statistical rigor. Unlike more detailed biophysical simulations (e.g., Blue Brain Project) that prioritize biological realism but face challenges in parameter estimation from non-invasive data, neurochemical DCM incorporates just enough biological detail to test specific hypotheses about receptor function while remaining statistically identifiable [22]. Similarly, compared to purely data-driven approaches like traditional EEGLAB or FieldTrip analyses, neurochemical DCM provides a generative modeling framework that can make causal inferences about underlying mechanisms rather than simply describing statistical patterns in the data [31].

Bayesian model comparison is particularly important for neurochemical applications. It allows researchers to compare competing hypotheses about receptor dysfunction (for example, whether observed spectral changes in Alzheimer's disease are better explained by AMPA or NMDA receptor pathology) in a principled way that accounts for model complexity [22]. This formal hypothesis testing framework, combined with receptor-specific parameterization, makes neurochemical DCM particularly valuable for drug development applications, where understanding mechanism of action is essential.

Experimental Protocols and Validation Studies

Protocol: Longitudinal DCM for Alzheimer's Disease Progression

A recent pioneering study demonstrates the application of neurochemical DCM to characterize progressive neurophysiological changes in Alzheimer's disease (AD) [22]. The experimental protocol provides a template for longitudinal studies of neurodegenerative diseases:

Participant Cohort and Data Acquisition: The study included 29 individuals with amyloid-positive mild cognitive impairment or early Alzheimer's disease dementia. Researchers acquired resting-state MEG data at baseline and after an average follow-up interval of 16 months, alongside detailed cognitive assessments to quantify disease progression [22].

Model Specification and Comparison: The analysis implemented three key innovations in DCM:

- Regional specificity of disease burden, allowing differential parameter changes across brain regions

- Dual parameterization of excitatory neurotransmission into AMPA and NMDA-mediated components

- Clinical hypothesis constraints using parametric empirical Bayes to test specific progression models [22]

Bayesian Model Selection: Researchers compared multiple competing models at the group level to identify which combination of parameterizations best explained the longitudinal spectral changes. The winning model provided evidence for regional specificity of AD effects and selective NMDA neurotransmission changes, particularly within and between key default mode network regions (precuneus and medial prefrontal cortex) [22].
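Comparing combinations of parameterizations amounts to a factorial model space. The sketch below shows a simplified fixed-effects version of that idea: log evidences are summed over subjects, converted to posterior probabilities, and then pooled into families along each factor. This is not the parametric empirical Bayes procedure used in [22]; the per-subject values, factor labels, and function names are hypothetical.

```python
import math

# Hypothetical per-subject log evidences.
# Keys are (regional specificity, glutamatergic parameterization).
subject_log_evidence = [
    {("uniform", "single"): -410.0, ("uniform", "dual"): -407.5,
     ("regional", "single"): -408.0, ("regional", "dual"): -404.0},
    {("uniform", "single"): -395.0, ("uniform", "dual"): -394.0,
     ("regional", "single"): -393.5, ("regional", "dual"): -391.0},
    {("uniform", "single"): -402.0, ("uniform", "dual"): -400.0,
     ("regional", "single"): -401.5, ("regional", "dual"): -398.5},
]

def group_log_evidence(subjects):
    """Fixed-effects pooling: sum each model's log evidence over subjects."""
    models = subjects[0].keys()
    return {model: sum(subject[model] for subject in subjects) for model in models}

def posterior_probabilities(log_evidence):
    """Softmax over log evidences with a flat prior over models."""
    best = max(log_evidence.values())
    weights = {m: math.exp(v - best) for m, v in log_evidence.items()}
    total = sum(weights.values())
    return {m: w / total for m, w in weights.items()}

def family_probability(posterior, factor_index, level):
    """Sum posterior probability over all models sharing one factor level."""
    return sum(p for model, p in posterior.items() if model[factor_index] == level)

posterior = posterior_probabilities(group_log_evidence(subject_log_evidence))
winner = max(posterior, key=posterior.get)
p_regional = family_probability(posterior, 0, "regional")
p_dual = family_probability(posterior, 1, "dual")

print("Winning model:", winner)
print(f"P(regional specificity) = {p_regional:.4f}")
print(f"P(dual AMPA/NMDA)       = {p_dual:.4f}")
```

Family-level pooling answers questions about one factor (for example, whether dual parameterization is needed at all) without depending on which single model happens to win.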

Clinical Correlation Analysis: The study tested whether the neurophysiological parameter changes estimated by DCM correlated with individual differences in cognitive decline during the follow-up period, establishing the clinical relevance of the estimated parameters [22].
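The clinical correlation step can be pictured as relating each participant's estimated parameter change to their change in cognitive score. The sketch below computes a Pearson correlation on made-up numbers; the values, variable names, and the choice of Pearson's coefficient are assumptions for illustration and do not reproduce the analysis or results in [22].

```python
import math

def pearson_r(x, y):
    """Pearson correlation coefficient between two equal-length sequences."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    covariance = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    spread_x = math.sqrt(sum((a - mean_x) ** 2 for a in x))
    spread_y = math.sqrt(sum((b - mean_y) ** 2 for b in y))
    return covariance / (spread_x * spread_y)

# Hypothetical per-participant changes between baseline and follow-up
delta_nmda_parameter = [-0.42, -0.10, -0.31, 0.05, -0.55, -0.20]
delta_cognitive_score = [-6.0, -1.0, -4.0, 0.5, -7.5, -2.0]

r = pearson_r(delta_nmda_parameter, delta_cognitive_score)
print(f"Correlation between parameter change and cognitive change: r = {r:.3f}")
```

In a real analysis the uncertainty of each parameter estimate would also matter, which is one reason hierarchical approaches such as parametric empirical Bayes are preferred over correlating point estimates.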

Protocol: Linking Receptor Densities to Spectral Phenotypes

Another innovative approach established a normative link between molecular architecture and electrophysiological signals [30]:

Multimodal Data Integration: The study combined intracranial EEG (iEEG) data from regions remote from epileptogenic zones (providing a measure of normal regional spectral phenotypes) with post-mortem receptor density data from the same cortical regions [30].

Model Fitting with Empirical Priors: Researchers fitted canonical microcircuit DCMs to the regional iEEG power spectral densities. They then incorporated normative receptor density measurements as empirical priors on synaptic connectivity parameters during model inversion [30].
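The effect of an empirical prior on an estimate can be illustrated with a single Gaussian parameter, where the posterior is a precision-weighted average of the prior mean and the data-driven estimate. This is a one-dimensional conjugate analogy only: actual DCM inversion is multivariate and iterative, and all numbers and names below are hypothetical.

```python
def combine_gaussian(prior_mean, prior_var, data_mean, data_var):
    """Posterior mean and variance for a Gaussian prior and Gaussian likelihood."""
    prior_precision = 1.0 / prior_var
    data_precision = 1.0 / data_var
    posterior_var = 1.0 / (prior_precision + data_precision)
    posterior_mean = posterior_var * (
        prior_precision * prior_mean + data_precision * data_mean
    )
    return posterior_mean, posterior_var

# Hypothetical data-driven estimate of a synaptic parameter from the spectrum alone
data_mean, data_var = 0.1, 0.25

# Uninformed prior: centered on zero and broad
flat_mean, flat_var = combine_gaussian(0.0, 1.0, data_mean, data_var)

# Receptor-informed prior: shifted by regional density and tighter
informed_mean, informed_var = combine_gaussian(0.5, 0.25, data_mean, data_var)

print(f"Uninformed prior:        mean = {flat_mean:.3f}, variance = {flat_var:.3f}")
print(f"Receptor-informed prior: mean = {informed_mean:.3f}, variance = {informed_var:.3f}")
```

The informed prior both moves the estimate toward the value implied by receptor density and reduces its variance, and whether that constraint actually helps is what the subsequent comparison of model evidence decides.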

Model Evidence Comparison: Bayesian model comparison determined whether models constrained by regional receptor density data provided better explanations of the iEEG spectra compared to unconstrained models [30].

Atlas Generation: The output was a cortical atlas of neurobiologically informed intracortical synaptic connectivity parameters, providing normative priors for future patient-specific modeling studies [30].

Figure: Experimental workflow for linking receptor densities to spectral phenotypes using DCM.

Quantitative Findings and Comparative Performance

Studies implementing neurochemical DCM have reported results that support both its biological validity and its practical utility.

Table: Key Quantitative Findings from Neurochemical DCM Studies

| Study Application | Key Finding | Model Evidence | Clinical Correlation |
|---|---|---|---|
| Alzheimer's Disease Progression [22] | Selective NMDA receptor changes in precuneus and medial PFC | Strong evidence for dual parameterization (AMPAR/NMDAR) over single excitatory parameter | Significant correlation between connectivity changes and cognitive decline |
| Receptor Density Mapping [30] | Regional receptor densities predict synaptic connectivity parameters | Models with receptor-based priors outperformed unconstrained models | Creates normative atlas for future patient studies |
| Neurovascular Coupling [32] | Hemodynamic responses linked to pre- and post-synaptic activity | Bayesian comparison identifies preferred neurovascular model | Enriches BOLD fMRI interpretation with neuronal specificity |

The Alzheimer's disease study demonstrated that models incorporating dual glutamatergic parameterization (separate AMPA and NMDA receptors) and regional specificity received the highest model evidence, strongly outperforming simpler models with a single excitatory parameter [22]. Furthermore, the estimated progressive changes in effective connectivity within the default mode network showed significant correlations with individual differences in cognitive decline, validating the clinical relevance of the neurophysiological parameters [22].

The receptor density mapping study provided quantitative evidence that incorporating empirical receptor density data substantially improved model evidence across multiple cortical regions [30]. This establishes an important proof of concept: that molecular cortical characteristics can directly inform and constrain generative models of electrophysiological signals, creating a principled bridge between microstructural and macroscopic scales of brain organization.

Implementation: The Scientist's Toolkit

Successful implementation of neurochemically-enriched DCM requires specific software tools and analytical resources. The following toolkit provides essential components for researchers embarking on this methodology.

Table: Essential Research Reagents and Software Solutions for Neurochemical DCM

| Tool/Resource | Function | Implementation in Neurochemical DCM |
|---|---|---|
| SPM Software | Primary platform for DCM analysis | Provides core algorithms for model inversion and Bayesian comparison [33] [34] |
| Canonical Microcircuit Model | Neural mass model with laminar specificity | Base model extended with receptor-specific parameterizations [22] [30] |
| Parametric Empirical Bayes | Hierarchical modeling framework | Enables group-level analysis and incorporation of empirical priors [22] [34] |
| Bayesian Model Reduction | Rapid model comparison algorithm | Facilitates comparison of multiple receptor-level hypotheses [34] |
| Receptor Density Atlas | Normative neurotransmitter receptor maps | Provides empirical priors for region-specific synaptic parameters [30] |
| MNE-Python/EEGLAB | Preprocessing and data quality control | Handles artifact removal and basic spectral analysis before DCM [31] |

The Statistical Parametric Mapping (SPM) software package remains the primary platform for DCM analysis, with continuous development incorporating the latest methodological advances [33] [34]. Recent versions have introduced support for Optically Pumped Magnetometers (OPMs), a next-generation MEG technology that offers enhanced sensitivity and enables recordings during head movement [34]. For researchers preferring open-source environments, the new SPM-Python wrapper provides access to SPM's core functionality without requiring a MATLAB license [34].

The practical workflow typically begins with data preprocessing and quality control using established tools like EEGLAB or MNE-Python to handle artifact removal, filtering, and basic spectral analysis [31]. The preprocessed data then moves to SPM for DCM specification, estimation, and comparison. For neurochemical applications, researchers typically specify multiple competing models representing different hypotheses about receptor involvement, then use Bayesian model comparison to identify the most plausible account of the data [22]. The winning model's parameters can then be related to clinical variables or experimental manipulations to draw inferences about neurochemical mechanisms in health and disease.
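The spectral analysis stage of this workflow produces the power spectra that a resting-state DCM is then asked to explain. As a dependency-free sketch of that stage, the code below estimates a power spectral density by averaging Hann-windowed, half-overlapping segments (Welch's method) on a synthetic signal. In practice this step would be done with MNE-Python or EEGLAB; the sampling rate, segment length, and test signal here are assumptions, and the one-sided scaling factor is omitted because only the spectral shape is inspected.

```python
import cmath
import math

def hann(n):
    """Symmetric Hann window of length n."""
    return [0.5 - 0.5 * math.cos(2.0 * math.pi * i / (n - 1)) for i in range(n)]

def segment_psd(segment, fs):
    """Windowed periodogram of one segment, for frequencies 0 .. fs/2."""
    n = len(segment)
    mean = sum(segment) / n
    window = hann(n)
    tapered = [(s - mean) * w for s, w in zip(segment, window)]
    norm = fs * sum(w * w for w in window)
    psd = []
    for k in range(n // 2 + 1):
        coeff = sum(v * cmath.exp(-2j * math.pi * k * i / n)
                    for i, v in enumerate(tapered))
        psd.append(abs(coeff) ** 2 / norm)
    return psd

def welch_psd(signal, fs, seg_len):
    """Average periodograms over half-overlapping segments."""
    step = seg_len // 2
    starts = range(0, len(signal) - seg_len + 1, step)
    spectra = [segment_psd(signal[s:s + seg_len], fs) for s in starts]
    mean_psd = [sum(column) / len(spectra) for column in zip(*spectra)]
    freqs = [k * fs / seg_len for k in range(seg_len // 2 + 1)]
    return freqs, mean_psd

# Synthetic "resting-state" signal: a 10 Hz rhythm plus a weaker 40 Hz rhythm
FS = 250.0
signal = [
    math.sin(2.0 * math.pi * 10.0 * i / FS)
    + 0.5 * math.sin(2.0 * math.pi * 40.0 * i / FS)
    for i in range(1000)
]

freqs, psd = welch_psd(signal, FS, seg_len=250)
peak_freq = freqs[psd.index(max(psd))]
print(f"Dominant frequency: {peak_freq:.1f} Hz")
```

With a 250-sample segment at 250 Hz the frequency resolution is 1 Hz, which is adequate for separating the alpha-band and gamma-band components in this toy signal.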

Neurochemically-enriched Dynamic Causal Modeling represents a significant advancement in computational neuroimaging, offering a principled framework for making receptor-level inferences from non-invasive M/EEG data. By incorporating dual glutamatergic parameterization, region-specific receptor constraints, and rigorous Bayesian model comparison, this approach addresses the critical translational gap between molecular pharmacology and systems-level neuroscience.

The experimental validation of this framework, through both longitudinal studies of Alzheimer's disease and normative mapping of receptor densities to spectral phenotypes, demonstrates its potential to transform both basic neuroscience and drug development [22] [30]. For pharmaceutical researchers, these methods offer the possibility of demonstrating target engagement and mechanism of action for novel compounds directly from non-invasive neurophysiological measurements. For clinical neuroscientists, they provide tools to characterize receptor-specific pathophysiology in individual patients or patient groups.

Future developments will likely enhance these approaches through integration with multi-omic data, expanded receptor parameterizations (including neuromodulatory systems), and application to personalized medicine challenges. As these methods become more accessible through open-source software implementations [34], neurochemical DCM is poised to become an increasingly essential tool for understanding and treating brain disorders.

The development of central nervous system therapeutics is fundamentally constrained by the challenge of demonstrating direct pharmacological engagement in the living human brain. For decades, the validation of drug action has relied on indirect behavioral measures or preclinical models. This guide uses the NMDA receptor antagonist memantine as a case study to objectively compare the experimental methods that provide conclusive evidence of target engagement in humans. We focus on the critical emergence of non-invasive neuroimaging techniques, particularly magnetoencephalography (MEG) combined with dynamic causal modeling (DCM), which now enables direct quantification of receptor-level drug effects in patients, thereby establishing a new paradigm for validating neurochemical-enriched models in drug development.