Unlocking the Brain's Code: How Monte Carlo Simulations Power Behavioral Neuroscience Discovery


Abstract

This article provides a comprehensive guide to Monte Carlo simulation methods in behavioral neuroscience and psychopharmacology. Targeted at researchers and drug development professionals, it explores foundational concepts, practical applications in modeling neural dynamics and behavior, strategies for troubleshooting and optimizing simulations, and frameworks for validation against empirical data. The article synthesizes current methodologies to enhance study design, increase statistical power, and improve the translation of computational findings into biomedical insights.

What Are Monte Carlo Simulations? A Neuroscience Primer for Probabilistic Modeling

Monte Carlo (MC) methods, a class of computational algorithms relying on repeated random sampling, have evolved from their origins in nuclear physics to become indispensable in modern systems neuroscience and drug discovery. Within behavioral neuroscience, these stochastic simulations power research by enabling the modeling of complex, high-dimensional systems—from molecular interactions to whole-brain network dynamics and decision-making processes—where deterministic solutions are intractable.

Core MC Methodologies in Neuroscience Research

The table below summarizes key MC applications, their quantitative outputs, and relevance to behavioral neuroscience.

Table 1: Primary Monte Carlo Applications in Behavioral Neuroscience & Drug Development

| Application Domain | Typical MC Method | Key Quantitative Output | Neuroscience Relevance |

|---|---|---|---|

| Molecular Dynamics (MD) | Metropolis-Hastings, Langevin Dynamics | Protein-ligand binding free energy (ΔG in kcal/mol), conformational ensembles | Prediction of drug candidate efficacy at neural targets (e.g., GPCRs, ion channels). |

| Neural Population Modeling | Markov Chain Monte Carlo (MCMC) | Posterior probability distributions of model parameters (mean ± SD). | Inferring synaptic strengths or neural tuning curves from spike train data. |

| Diffusion-Weighted MRI Tractography | Random Walk / Probabilistic Tracking | Probabilistic connectivity matrices between brain regions. | Mapping connectome alterations in psychiatric or neurological disorders. |

| Behavioral Choice Modeling | Particle Filtering, Gibbs Sampling | Estimated model parameters (e.g., drift rate, decision threshold) with Credible Intervals. | Unpacking trial-by-trial decision variables and learning rates in cognitive tasks. |

| Pharmacokinetic/Pharmacodynamic (PK/PD) | Stochastic PK/PD Simulation | Concentration-time profiles, probability of target engagement. | Predicting brain penetration and dose-response relationships for CNS drugs. |

Detailed Experimental Protocols

Protocol 1: MCMC for Inferring Synaptic Parameters from Electrophysiology Data

Objective: Estimate posterior distributions of synaptic conductance parameters from postsynaptic current recordings.

- Data Preparation: Whole-cell voltage-clamp recordings of evoked excitatory postsynaptic currents (EPSCs) under receptor blockade. Pre-process to extract amplitude and decay time constants per trial.

- Model Specification: Define a biophysical model:

I(t) = g_max * (V - E_rev) * (exp(-t/τ_decay) - exp(-t/τ_rise)), where parameters θ = {g_max, τ_rise, τ_decay} and τ_decay > τ_rise, so that the conductance term is non-negative.

- Prior Definition: Assign weakly informative priors (e.g., Log-Normal) based on literature.

- MCMC Sampling: Implement Hamiltonian Monte Carlo (HMC) using Stan or PyMC. Run 4 chains for 20,000 iterations each (50% warm-up).

- Convergence Diagnostics: Ensure Gelman-Rubin statistic (R̂) < 1.05 and effective sample size (ESS) > 400 per parameter.

- Posterior Analysis: Report median and 95% highest density interval (HDI) for each parameter. Perform posterior predictive checks against held-out data.
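The sampling and diagnostic steps above can be sketched in a few lines. This is a minimal illustration, not the protocol's HMC implementation: it swaps in a plain random-walk Metropolis sampler so that it runs with the Python standard library alone, fits synthetic data, holds τ_rise fixed, and assumes a known noise level. The bi-exponential is written with the decay term leading so the conductance is non-negative. All numerical values, priors, and function names are assumptions chosen for illustration.

```python
import math
import random
import statistics

V, E_REV, TAU_RISE = -70.0, 0.0, 0.5   # mV, mV, ms (assumed, held fixed)
NOISE_SD = 5.0                         # pA, assumed known recording noise

def epsc(t, g_max, tau_decay):
    """Bi-exponential current (nS * mV = pA); decay term leads so g(t) >= 0."""
    return g_max * (V - E_REV) * (math.exp(-t / tau_decay) - math.exp(-t / TAU_RISE))

def log_posterior(theta, times, data):
    log_g, log_tau = theta
    g_max, tau_decay = math.exp(log_g), math.exp(log_tau)
    # Log-normal priors are normal priors on the log scale (assumed widths).
    log_prior = -0.5 * log_g ** 2 - 0.5 * (log_tau - math.log(5.0)) ** 2
    sse = sum((y - epsc(t, g_max, tau_decay)) ** 2 for t, y in zip(times, data))
    return log_prior - 0.5 * sse / NOISE_SD ** 2

def metropolis(times, data, n_iter, rng, step=0.05):
    theta = [rng.gauss(0.0, 0.5), rng.gauss(math.log(5.0), 0.5)]
    lp = log_posterior(theta, times, data)
    samples = []
    for _ in range(n_iter):
        proposal = [x + rng.gauss(0.0, step) for x in theta]
        lp_new = log_posterior(proposal, times, data)
        if rng.random() < math.exp(min(0.0, lp_new - lp)):   # Metropolis acceptance
            theta, lp = proposal, lp_new
        samples.append(theta[:])
    return samples[n_iter // 2:]          # discard the first half as warm-up

def r_hat(chains):
    """Gelman-Rubin statistic for one scalar parameter across equal-length chains."""
    n = len(chains[0])
    within = statistics.fmean([statistics.variance(c) for c in chains])
    between = n * statistics.variance([statistics.fmean(c) for c in chains])
    return math.sqrt(((n - 1) / n * within + between / n) / within)

def run_demo(seed=1, n_chains=4, n_iter=4000):
    rng = random.Random(seed)
    times = [0.5 * i for i in range(1, 51)]                       # ms
    data = [epsc(t, 1.0, 5.0) + rng.gauss(0.0, NOISE_SD) for t in times]
    chains = [metropolis(times, data, n_iter, rng) for _ in range(n_chains)]
    tau_chains = [[math.exp(s[1]) for s in c] for c in chains]
    pooled = [x for c in tau_chains for x in c]
    return statistics.median(pooled), r_hat(tau_chains)
```

Calling `run_demo()` returns the posterior median of τ_decay and its R̂ across the four chains. A real analysis would use the HMC samplers in Stan or PyMC, which also report effective sample size.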

Protocol 2: Monte Carlo Simulation for Probabilistic Tractography

Objective: Reconstruct white matter pathways from diffusion MRI data using a random walk approach.

- Data Acquisition: Acquire high-angular-resolution diffusion-weighted imaging (HARDI) data. Preprocess with eddy current and motion correction.

- Local Modeling: Estimate fiber orientation distributions (FODs) at each voxel using spherical deconvolution.

- Seed Initialization: Define seed regions (e.g., Brodmann Area) from co-registered structural atlas.

- Random Walk Propagation: For each seed point (N=10,000), initiate a "particle." At each step, sample a new direction from the local FOD. Step size is randomly sampled from a distribution (e.g., 0.5–1.0 mm).

- Termination Criteria: Stop propagation if particle enters a region with low fractional anisotropy (FA < 0.1) or exceeds maximum path length (250 mm).

- Connectivity Mapping: Count the number of particle visits to each target brain region. Normalize by seed region volume to create a probabilistic connectivity matrix.
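The propagation, termination, and counting steps can be illustrated with a deliberately simplified two-dimensional toy. This sketch makes several assumptions that are not part of the protocol: the local fiber orientation is the same everywhere (along +x) with Gaussian angular dispersion, a band |y| <= 10 mm stands in for the FA mask, two hypothetical target regions sit at x = 50 mm, and counts are normalized by the number of particles rather than by seed volume.

```python
import math
import random

def track(seed_xy, rng, dispersion=0.3, max_len=250.0):
    """Propagate one particle; return the target it reaches, or None."""
    x, y = seed_xy
    length = 0.0
    while length < max_len:
        angle = rng.gauss(0.0, dispersion)     # sample a direction from the toy local model
        step = rng.uniform(0.5, 1.0)           # step size in mm, sampled per step
        x += step * math.cos(angle)
        y += step * math.sin(angle)
        length += step
        if abs(y) > 10.0:                      # stand-in for the FA < 0.1 stopping rule
            return None
        if x >= 50.0:                          # reached the plane holding both targets
            return "target_A" if y >= 0.0 else "target_B"
    return None                                # exceeded maximum path length

def connectivity(n_particles=10_000, seed=0):
    rng = random.Random(seed)
    counts = {"target_A": 0, "target_B": 0}
    for _ in range(n_particles):
        hit = track((0.0, 2.0), rng)           # seed placed slightly above the midline
        if hit is not None:
            counts[hit] += 1
    return {name: c / n_particles for name, c in counts.items()}
```

Because the seed sits at y = 2 mm, most particles end in `target_A`; the returned fractions play the role of one row of a probabilistic connectivity matrix.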

Protocol 3: MC Binding Free Energy Calculation for CNS Target Drug Screening

Objective: Compute the binding affinity (ΔG) of a novel compound to a neuronal ion channel.

- System Preparation: Obtain high-resolution protein structure (e.g., via cryo-EM). Prepare ligand and protein using molecular modeling software (e.g., Schrödinger Maestro). Solvate in an explicit water box with physiological ions.

- Equilibration: Run classical MD simulation (100 ns) to equilibrate the solvated system under NPT conditions.

- Enhanced Sampling: Perform Metropolis Monte Carlo-based alchemical free energy perturbation (FEP). Define a λ schedule (20+ windows) to morph ligand into a non-interacting "dummy" molecule.

- Sampling: At each λ window, run MC sampling of particle moves (translation, rotation, dihedral) for 1 million steps. Use a replica-exchange protocol between windows to improve sampling.

- Analysis: Use the Bennett Acceptance Ratio (BAR) or Multistate BAR (MBAR) to estimate ΔG_bind. Report mean and standard error from 5 independent runs.

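- Validation: Compare computed ΔG against experimental binding free energies for a set of reference inhibitors, obtained from Kd via ΔG = RT ln(Kd) with Kd in mol/L (IC50 values must first be converted to Ki, e.g., with the Cheng-Prusoff relation). A correlation of R² > 0.7 is treated here as the acceptance threshold for the protocol.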
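The idea behind free energy perturbation can be shown on a toy system with a known answer. The sketch below does not implement BAR or MBAR; it uses the simpler one-sided exponential-averaging (Zwanzig) estimator, ΔF = -kT ln⟨exp(-ΔU/kT)⟩₀, on two one-dimensional harmonic wells, for which the exact result is (kT/2) ln(k1/k0). The spring constants and sample count are arbitrary assumptions, and state 0 is sampled directly from its Gaussian Boltzmann distribution rather than by Metropolis moves.

```python
import math
import random

def fep_harmonic(k0=1.0, k1=2.0, kT=1.0, n_samples=100_000, seed=0):
    """Estimate the free energy difference between U0 = k0*x^2/2 and U1 = k1*x^2/2."""
    rng = random.Random(seed)
    sd0 = math.sqrt(kT / k0)                   # Boltzmann distribution of state 0 is Gaussian
    acc = 0.0
    for _ in range(n_samples):
        x = rng.gauss(0.0, sd0)                # configuration sampled in state 0
        delta_u = 0.5 * (k1 - k0) * x * x      # U1(x) - U0(x)
        acc += math.exp(-delta_u / kT)
    estimate = -kT * math.log(acc / n_samples)
    exact = 0.5 * kT * math.log(k1 / k0)       # analytic reference for this toy system
    return estimate, exact
```

In a real alchemical calculation the same average is taken between neighboring λ windows, and BAR or MBAR combines forward and reverse work values to reduce the bias of the one-sided estimator.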

Visualizations

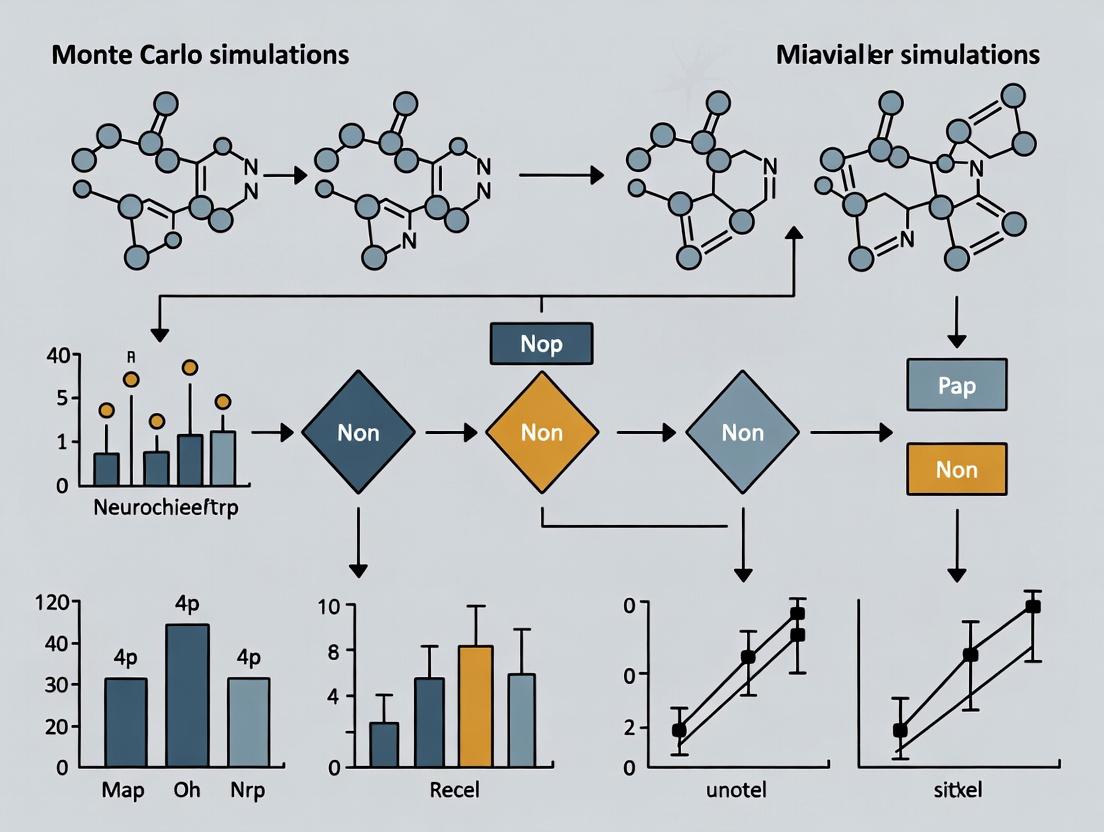

Title: Monte Carlo Sampling in Signaling Pathways

Title: MCMC Inference Workflow in Neuroscience

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials & Reagents for MC-Informed Neuroscience Experiments

| Item Name | Supplier/Example | Function in MC-Powered Research |

|---|---|---|

| Stan / PyMC (formerly PyMC3) | Open Source (mc-stan.org, pymc.io) | Probabilistic programming frameworks for flexible implementation of Bayesian MCMC models. |

| FSL's PROBTRACKX | FMRIB Software Library | Implements MC probabilistic tractography for diffusion MRI data analysis. |

| CHARMM/AMBER Force Fields | CHARMM and AMBER developer communities (e.g., ambermd.org) | Provides empirical energy parameters for MC/MD simulations of biomolecules (proteins, lipids). |

| GROMACS | gromacs.org | High-performance MD simulation package enabling large-scale MC-like sampling of molecular systems. |

| PsychoPy | Open Source (psychopy.org) | Generates precise behavioral task stimuli; collects trial-by-trial data essential for MC choice models. |

| Neuropixels Probes | IMEC | High-density electrophysiology arrays providing the large-scale neural activity datasets for population MC models. |

| Bayesian Model Fitting Toolboxes | (e.g., TAPAS, HDDM) | Provide pre-built, validated MCMC samplers for cognitive models (drift-diffusion, reinforcement learning). |

| Cloud Compute Credits | AWS, Google Cloud, Azure | Enable scalable, parallelized MC simulations (e.g., 1000s of FEP runs) without local HPC constraints. |

This document details the application of Monte Carlo (MC) simulation principles—random sampling, iteration, and convergence—to research in behavioral neuroscience and neuropharmacology. Framed within a broader thesis on enhancing research power through computational stochastic methods, these notes provide protocols for designing, executing, and interpreting simulations of neural systems, from molecular interactions to circuit-level dynamics.

Monte Carlo methods provide a statistical framework for solving deterministic and stochastic problems through repeated random sampling. In neuroscience, these principles are critical for modeling systems with inherent randomness (e.g., neurotransmitter release, ion channel gating) or high-dimensional parameter spaces (e.g., neural network dynamics, drug-receptor interactions).

- Random Sampling: The probabilistic selection of parameter values or states from defined distributions (e.g., synaptic weight distributions, pharmacokinetic parameters).

- Iteration: The repeated execution of a simulation model using new random samples each cycle.

- Convergence: The point at which the aggregate results of iterations stabilize to a solution within an acceptable error margin, indicating a reliable approximation.
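The three principles map directly onto a short loop. The following sketch is one possible stopping rule, assuming convergence is judged by the standard error of the running mean; the function name, tolerance, and minimum iteration count are illustrative choices.

```python
import math
import random

def run_until_converged(simulate_once, tol, rng, min_iter=100, max_iter=1_000_000):
    """Iterate a stochastic model until the standard error of the mean drops below tol."""
    n, mean, m2 = 0, 0.0, 0.0
    while n < max_iter:
        x = simulate_once(rng)                 # random sampling
        n += 1                                 # iteration
        delta = x - mean                       # Welford update of running mean and variance
        mean += delta / n
        m2 += delta * (x - mean)
        if n >= min_iter and math.sqrt(m2 / (n - 1) / n) < tol:
            break                              # convergence criterion met
    return mean, n

def release_event(rng, p=0.3):
    """Example model: a single Bernoulli release event with probability p."""
    return 1.0 if rng.random() < p else 0.0
```

For example, `run_until_converged(release_event, 0.005, random.Random(0))` keeps sampling until the estimated release probability has a standard error below 0.005. Since the standard error shrinks as 1/sqrt(N), halving the tolerance requires roughly four times as many iterations.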

Application Notes & Quantitative Data

Application: Estimating Synaptic Transmission Probability

MC simulations model the stochasticity of vesicular release at synapses. Random sampling determines whether a presynaptic action potential results in neurotransmitter release based on a baseline probability (p).

Table 1: Simulation Output for Synaptic Transmission (10,000 Iterations)

| Release Probability (p) | Simulated Mean EPSP Amplitude (mV) | 95% Confidence Interval (mV) | Coefficient of Variation |

|---|---|---|---|

| 0.3 | 0.45 | [0.41, 0.49] | 0.32 |

| 0.5 | 0.75 | [0.70, 0.80] | 0.25 |

| 0.8 | 1.20 | [1.16, 1.24] | 0.12 |

EPSP: Excitatory Post-Synaptic Potential. Parameters: Quantal size = 1.5 mV.
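A minimal version of this simulation, assuming a single release site and no quantal variance, is sketched below. Under those assumptions the simulated mean approaches p times the quantal size, which matches the mean amplitudes in the table; the confidence intervals and coefficients of variation depend on model details (number of release sites, quantal variability) that are not specified, so this sketch does not attempt to reproduce them.

```python
import random
import statistics

def simulate_epsp(p_release, quantal_size_mv=1.5, n_iter=10_000, seed=0):
    """Return the mean and SD of EPSP amplitude over n_iter presynaptic action potentials."""
    rng = random.Random(seed)
    # Each action potential releases one quantum with probability p_release, else fails.
    amps = [quantal_size_mv if rng.random() < p_release else 0.0 for _ in range(n_iter)]
    return statistics.fmean(amps), statistics.stdev(amps)

if __name__ == "__main__":
    for p in (0.3, 0.5, 0.8):
        mean_mv, sd_mv = simulate_epsp(p)
        print(f"p = {p}: mean EPSP = {mean_mv:.3f} mV, SD = {sd_mv:.3f} mV")
```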

Application: Pharmacodynamic Model of Drug-Receptor Binding

Simulations assess variability in drug response by sampling affinity (Kd) and efficacy parameters from populations.

Table 2: Simulated Population Response to a Novel Anxiolytic

| Dose (mg/kg) | Mean % Inhibition of Fear Response | Standard Deviation | % of Population with >50% Response |

|---|---|---|---|

| 1.0 | 25 | 8.2 | 12 |

| 3.0 | 58 | 10.5 | 65 |

| 10.0 | 82 | 7.1 | 98 |

Experimental Protocols

Protocol 3.1: MC Simulation of Ion Channel Stochasticity

Aim: To model the macroscopic current from a patch containing N stochastic ion channels. Materials: Computational software (Python/R, NEURON, Stan). Procedure:

- Define Model: For a channel, define open probability (p_open), and conductance (γ). Set total channels N, voltage V, and simulation time T.

- Initialize: Set total current I_total = 0. Discretize time into steps dt.

- Iterate & Sample: For each time step t:

  a. For each of N channels, sample a uniform random number r ~ U(0,1).

  b. If r < p_open(V), channel state = OPEN; else CLOSED.

  c. Calculate I_t = (Number of OPEN channels) * γ * (V - E_rev).

  d. Record I_t.

- Aggregate: Repeat Step 3 for M iterations (different random seeds).

- Analyze: Calculate mean current trajectory and variance across iterations. Determine convergence by plotting running mean versus iteration number.
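The procedure translates almost line for line into code. In this sketch the voltage is held constant, so p_open is a fixed number rather than a function of V; the channel count, conductance, and voltages are assumed example values.

```python
import random
import statistics

def simulate_patch(n_channels=100, p_open=0.2, gamma_ps=20.0, v_mv=-60.0, e_rev_mv=0.0,
                   n_steps=200, n_runs=50, seed=0):
    """Return the mean and variance of the patch current (pA) at each time step."""
    rng = random.Random(seed)
    runs = []
    for _ in range(n_runs):                                   # M independent iterations
        trace = []
        for _ in range(n_steps):
            # Each channel is OPEN when its uniform draw falls below p_open.
            n_open = sum(rng.random() < p_open for _ in range(n_channels))
            # pS * mV = fA, so divide by 1000 to report pA.
            trace.append(n_open * gamma_ps * (v_mv - e_rev_mv) / 1000.0)
        runs.append(trace)
    mean_trace = [statistics.fmean(col) for col in zip(*runs)]
    var_trace = [statistics.variance(col) for col in zip(*runs)]
    return mean_trace, var_trace
```

With these values the expected mean current is N * p_open * γ * (V - E_rev) = -24 pA, which gives a simple check on the simulation. Because each channel is redrawn independently at every step, the sketch has no temporal correlation; a Markov model of gating would be needed to capture dwell times.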

Protocol 3.2: Population Pharmacokinetic/Pharmacodynamic (PK/PD) Simulation

Aim: To predict variability in behavioral outcome following drug administration. Materials: Population PK parameters, in vitro potency (IC50/EC50) data, behavioral assay model. Procedure:

- Define Distributions: For key parameters (e.g., Clearance, Volume of Distribution, EC50, Hill coefficient), define statistical distributions (e.g., log-normal) based on prior data.

- Sample Virtual Cohort: Randomly sample a parameter set for each virtual subject (n = 1000) from the defined distributions.

- Run Deterministic Model: For each virtual subject, run a deterministic PK/PD-behavioral outcome model using their unique parameter set.

- Iterate: Repeat steps 2-3 for K virtual cohorts to ensure robust estimation of outcome distributions.

- Convergence & Power Analysis: Monitor convergence of key outcome metrics (e.g., % responders). Use the final distribution to calculate statistical power for a proposed clinical trial design.
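A compact sketch of the virtual-cohort step follows. It assumes a one-compartment intravenous bolus model and a sigmoidal Emax relationship; every median, spread, and unit below is a hypothetical placeholder and not the parameter set behind the simulated anxiolytic table.

```python
import math
import random

def simulate_cohort(dose_mg_per_kg, n_subjects=1000, t_h=1.0, seed=0):
    """Return (mean % effect, fraction of subjects with > 50% effect) at time t_h."""
    rng = random.Random(seed)
    effects = []
    for _ in range(n_subjects):
        # Log-normal between-subject variability (hypothetical medians and log-scale SDs).
        cl = rng.lognormvariate(math.log(0.5), 0.3)      # clearance, L/h/kg
        vd = rng.lognormvariate(math.log(2.0), 0.2)      # volume of distribution, L/kg
        ec50 = rng.lognormvariate(math.log(1.0), 0.4)    # mg/L
        hill = rng.lognormvariate(math.log(1.5), 0.1)
        # Deterministic model for this subject: one-compartment IV bolus, then Emax.
        conc = dose_mg_per_kg / vd * math.exp(-cl / vd * t_h)
        effects.append(100.0 * conc ** hill / (ec50 ** hill + conc ** hill))
    responders = sum(e > 50.0 for e in effects) / n_subjects
    return sum(effects) / n_subjects, responders
```

Repeating the call with different seeds gives the K virtual cohorts of the iteration step, and the spread of the responder fraction across cohorts indicates how stable the estimate is.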

Visualization of Workflows and Pathways

Title: Monte Carlo Simulation Core Workflow

Title: Neuropharmacology PK/PD Simulation Pathway

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for MC-Enhanced Neuroscience Research

| Item | Function/Description in Simulation Context |

|---|---|

| NEURON Simulation Environment | A platform for building and conducting MC simulations of stochastic ion channels and synaptic transmission within detailed neuronal morphologies. |

| Brian 2 Spiking Neural Network Simulator | Python-based library for simulating large-scale spiking neural networks, with support for stochastic differential equations and repeated Monte Carlo runs. |

| Stan (PyStan/CmdStanR) | Probabilistic programming language for specifying complex Bayesian statistical models (e.g., hierarchical PK/PD), utilizing MCMC sampling for inference. |

| Custom Python/R Scripts with NumPy/TensorFlow Probability | Flexible code for implementing custom MC sampling algorithms, random number generation, and convergence diagnostics (e.g., Gelman-Rubin statistic). |

| High-Performance Computing (HPC) Cluster Access | Essential for running thousands of iterative simulations required for robust convergence in high-dimensional models. |

| Experimental Parameter Priors (e.g., Patch-clamp data, HPLC-MS results) | Quantitative empirical data used to define the probability distributions from which random samples are drawn during simulation. |

Within the framework of a thesis on Monte Carlo (MC) simulations for powering behavioral neuroscience research, understanding and modeling stochasticity is paramount. The inherent randomness in ion channel gating, synaptic transmission, and network dynamics is not mere "noise" but a fundamental feature of neural computation and behavior. MC methods provide the statistical engine to simulate these probabilistic processes, enabling researchers to quantify variability, estimate the power of experimental designs, and generate null distributions for hypothesis testing. This document outlines application notes and protocols for integrating stochastic modeling into neuroscience research, with a focus on neuronal firing and behavioral output.

Table 1: Key Sources of Stochasticity in Neural Systems

| Source | Typical Timescale | Key Metric/Variability | Biological Consequence |

|---|---|---|---|

| Ion Channel Gating | Microseconds to Milliseconds | Open probability (Popen); Mean open/closed times | Variability in membrane potential trajectory, spike timing. |

| Synaptic Vesicle Release | Milliseconds | Release probability (Pr); 0.1 - 0.9 at central synapses | Fluctuations in postsynaptic potential amplitude (quantal variation). |

| Neurotransmitter Diffusion & Receptor Binding | Sub-millisecond to Milliseconds | Number of bound receptors; ~2000 glutamate molecules per vesicle. | Variability in signal integration. |

| Network Connectivity | Persistent | Connection probability in local circuits; e.g., ~0.1 in cortical layers. | Emergent variability in population dynamics and attractor states. |

| Behavioral State (e.g., Arousal) | Seconds to Minutes | Neuromodulator tone (e.g., norepinephrine, acetylcholine). | Modulation of neural variability and signal-to-noise ratios. |

Table 2: Monte Carlo Simulation Parameters for Power Analysis

| Parameter | Typical Range/Value | Description | Impact on Power |

|---|---|---|---|

| Number of Stochastic Trials (N) | 10³ - 10⁶ | Independent MC runs per condition. | Higher N reduces error in power estimate. |

| Effect Size (Cohen's d, Δ firing rate) | 0.2 (small) - 0.8 (large) | Hypothesized biological difference. | Larger effect → higher power, fewer subjects needed. |

| Within-Subject Neural Variability (σ) | Derived from pilot data (e.g., CV of ISI) | Intrinsic stochasticity in the measure. | Larger σ → lower power, more subjects needed. |

| Alpha (Significance Level) | 0.05, 0.01 | Probability of Type I error. | Lower alpha → lower power. |

| Target Statistical Power (1-β) | 0.8, 0.9 | Probability of detecting a true effect. | Directly determines required sample size. |

Experimental Protocols

Protocol 1: In Vitro Patch-Clamp Recording of Stochastic Ion Channel Gating

- Objective: To measure the probabilistic opening and closing of single voltage-gated Na+ or K+ channels for parameterizing MC models.

- Materials: Cell culture or acute brain slice, patch-clamp rig (amplifier, digitizer, micromanipulator), pipette puller, solution with tetrodotoxin (TTX, 1 µM) to block Na+ channels when K+ channels are the target of the recording.

- Method:

- Establish cell-attached or inside-out patch configuration on a neuronal soma.

- Hold potential at -120 mV. Apply a series of 1000+ depolarizing step pulses to -40 mV (duration: 20-50 ms).

- Record unitary currents. The all-or-none events represent single-channel openings.

- For analysis, construct ensemble averages and open probability (Popen) time courses. Fit dwell-time histograms (open and closed times) to exponential distributions to extract kinetic rate constants.

- MC Integration: The extracted rate constants become transition probabilities in a Markov chain model of the channel, used in stochastic Hodgkin-Huxley simulations.
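The MC integration step can be illustrated with the simplest possible case, a two-state (closed/open) channel. In this sketch each rate constant is converted to a per-step transition probability, rate * dt, which is valid when rate * dt is much smaller than 1. The rate values are assumed examples rather than measured constants.

```python
import random
import statistics

def simulate_channel(alpha=500.0, beta=2000.0, dt=1e-5, n_steps=500_000, seed=0):
    """Two-state Markov channel: closed -> open at alpha (1/s), open -> closed at beta (1/s).

    Returns (fraction of time open, mean open dwell time in seconds).
    """
    rng = random.Random(seed)
    is_open, dwell, open_steps = False, 0, 0
    open_dwells = []
    for _ in range(n_steps):
        rate = beta if is_open else alpha
        open_steps += is_open
        dwell += 1
        if rng.random() < rate * dt:           # transition probability for this time step
            if is_open:
                open_dwells.append(dwell * dt)
            is_open, dwell = not is_open, 0
    return open_steps / n_steps, statistics.fmean(open_dwells)
```

For these rates the open fraction should approach alpha / (alpha + beta) = 0.2 and the mean open time 1 / beta = 0.5 ms, mirroring the dwell-time analysis used to extract the rates experimentally.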

Protocol 2: Two-Photon Imaging of Stochastic Calcium Events in Dendritic Spines

- Objective: To quantify the stochasticity of synaptic inputs and NMDA receptor-mediated Ca2+ transients.

- Materials: Transgenic mouse expressing a genetically encoded calcium indicator (e.g., GCaMP6f) in neurons, two-photon microscope, cranial window, pipette for glutamate uncaging (MNI-glutamate).

- Method:

- Identify a dendritic branch of a layer 2/3 pyramidal neuron in vivo or in vitro.

- Select individual spines. Use subthreshold glutamate uncaging (at low, fixed power) to mimic single synaptic release events.

- Perform 50-100 trials per spine, recording the fluorescent transient (ΔF/F) for each trial.

- Analyze the distribution of ΔF/F amplitudes. A bimodal distribution (failure vs. success) with variable success amplitude directly quantifies release probability (Pr) and quantal variability.

- MC Integration: The distribution of ΔF/F amplitudes provides the empirical probability density function for postsynaptic response magnitude in a network synapse model.

Protocol 3: Behavioral Variability and Psychometric Curve Estimation

- Objective: To measure stochasticity in perceptual decision-making and fit a psychometric function.

- Materials: Head-fixed mouse setup with virtual reality or operant conditioning chamber, visual/auditory stimulus delivery system, lickport or lever.

- Method:

- Train an animal on a two-alternative forced-choice task (e.g., left vs. right grating orientation).

- Present stimuli of varying difficulty (e.g., different contrast levels) in a randomized, interleaved order over many trials (500+).

- Record the animal's choice (left/right) and reaction time on each trial.

- Fit the proportion of "rightward" choices as a function of stimulus strength (e.g., contrast) with a sigmoidal function (Weibull or logistic). The slope and lapse rate (upper/lower asymptote errors) parameterize behavioral stochasticity.

- MC Integration: The fitted psychometric function defines the likelihood of a correct decision given a stimulus, forming the behavioral readout layer in a full brain-behavior MC model. MC simulations can test how neural variability in the sensory pathway propagates to alter the psychometric slope.
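Both directions of this analysis, simulating choices from a psychometric function and recovering its slope, fit in a short sketch. It assumes a logistic function with a symmetric lapse rate and signed stimulus strength, and it estimates only the slope by grid-search maximum likelihood with bias and lapse held fixed. A full analysis would fit all parameters with a numerical optimizer.

```python
import math
import random

def p_right(x, bias, slope, lapse):
    """Logistic psychometric function with symmetric lapse rate."""
    return lapse + (1.0 - 2.0 * lapse) / (1.0 + math.exp(-(x - bias) / slope))

def simulate_choices(levels, n_per_level, rng, bias=0.0, slope=0.15, lapse=0.05):
    """Return (stimulus level, number of rightward choices) for each level."""
    return [(x, sum(rng.random() < p_right(x, bias, slope, lapse)
                    for _ in range(n_per_level)))
            for x in levels]

def fit_slope(data, n_per_level, bias=0.0, lapse=0.05):
    """Grid-search maximum likelihood estimate of the slope (bias and lapse fixed)."""
    def log_lik(slope):
        total = 0.0
        for x, k in data:
            p = p_right(x, bias, slope, lapse)
            total += k * math.log(p) + (n_per_level - k) * math.log(1.0 - p)
        return total
    grid = [0.02 * i for i in range(1, 51)]    # candidate slopes from 0.02 to 1.00
    return max(grid, key=log_lik)
```

Simulating many sessions with `simulate_choices` and refitting each one gives the sampling distribution of the slope for a given trial count, which is one way to check whether 500+ trials are enough for the precision a study needs.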

Visualization Diagrams

Title: MC Framework for Stochastic Neuroscience

Title: From Neural Noise to Behavioral Variability

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for Stochastic Neuroscience Research

| Item/Category | Example Product/Name | Function in Stochastic Modeling |

|---|---|---|

| Stochastic Simulation Software | NEURON with MCell, STEPS (STochastic Engine for Pathway Simulation), Brian2 | Provides built-in solvers for exact or approximate stochastic simulation of biochemical and electrical signaling. |

| Channel Blocker (Na+) | Tetrodotoxin (TTX) citrate | Blocks voltage-gated Na+ channels to isolate single-channel recordings or study network dynamics without fast spiking. |

| Genetically Encoded Calcium Indicator (GECI) | GCaMP6f / jGCaMP7f / jGCaMP8f | Reports presynaptic (axon terminal) and postsynaptic (spine) Ca2+ transients with high SNR, enabling quantification of release probability and stochastic events. |

| Caged Neurotransmitter | MNI-glutamate | Allows precise, sub-micron spatial and millisecond temporal uncaging of glutamate to mimic stochastic synaptic activation at individual spines. |

| Optogenetic Actuator (Stochastic) | Channelrhodopsin-2 (ChR2) mutants with low efficiency, stabilized step function opsins (SSFO) | Enables probabilistic activation of neurons or axons when used with very low light intensities, introducing controlled experimental stochasticity. |

| Data Analysis Suite | Python with SciPy, NumPy, statsmodels | Custom scripting for analyzing distributions of neural/behavioral data, fitting psychometric functions, and running custom MC power analyses. |

This document details essential concepts and protocols for enhancing the rigor of behavioral neuroscience research, particularly in the context of Monte Carlo simulation-based power analysis. The accurate characterization of parameter spaces and underlying distributions is fundamental for generating testable hypotheses and designing robust experiments, ultimately reducing irreproducibility in preclinical drug development.

Core Concepts: Definitions and Applications

Parameter Spaces

A parameter space is the multidimensional set of all possible values that the parameters of a statistical or computational model can take. In behavioral neuroscience, this often includes variables like effect size (e.g., Cohen's d), baseline response rate, variance, dropout rates, and learning curve slopes.

Application: Defining the plausible parameter space for a novel cognitive enhancer involves literature review and pilot data to bound parameters like expected improvement in Morris water maze latency (e.g., 15-40% reduction) and its standard deviation.

Probability Distributions

Distributions describe the likelihood of different outcomes for a variable. Moving beyond point estimates to distributional assumptions (e.g., log-normal for reaction times, beta for proportions, gamma for neural spike intervals) is critical for realistic simulation.

Application: Simulating control group performance in a forced-swim test requires modeling immobility time not as a single mean but as a distribution (e.g., normally distributed with mean=150s, SD=30s, truncated at zero).
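Drawing from a truncated normal needs no special library. The sketch below uses rejection sampling, redrawing any value that falls below the bound, with the example mean and SD from the text. The sample size and seed are arbitrary.

```python
import random
import statistics

def truncated_normal(mean, sd, rng, lower=0.0):
    """Rejection sampling: redraw until the value is at or above the lower bound."""
    while True:
        x = rng.gauss(mean, sd)
        if x >= lower:
            return x

rng = random.Random(0)
# Simulated immobility times (s) for a control group: mean 150 s, SD 30 s, truncated at zero.
control = [truncated_normal(150.0, 30.0, rng) for _ in range(10_000)]
print(f"mean = {statistics.fmean(control):.1f} s, SD = {statistics.stdev(control):.1f} s")
```

With the bound five standard deviations below the mean, truncation almost never triggers here; it matters more for assays whose control mean lies close to zero.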

Hypothesis Generation

Formal hypothesis generation involves specifying a prior probability distribution over the parameter space before seeing new experimental data. This quantifies uncertainty and allows for Bayesian and frequentist power analysis.

Application: Before testing a new anxiolytic, one might generate a hypothesis that it increases elevated plus maze open arm time by an effect size (d) drawn from a distribution centered at 0.8 (based on prior drug classes) with substantial uncertainty (SD=0.3).

Table 1: Parameter Estimates and Distributions for Common Behavioral Assays

| Behavioral Assay | Typical Control Mean (SD) | Common Effect Size (Cohen's d) Range | Suggested Distribution for Simulation | Key Parameter Source |

|---|---|---|---|---|

| Morris Water Maze (Latency) | 45s (12s) | 0.7 - 1.2 (learning impairment) | Log-Normal | (Crusio, 2019; Meta-analysis) |

| Forced Swim Test (Immobility) | 160s (35s) | 0.6 - 1.5 (antidepressant effect) | Truncated Normal (0, ∞) | (Kara et al., 2018; Systematic Review) |

| Elevated Plus Maze (% Open Arm Time) | 25% (8%) | 0.5 - 1.0 (anxiolytic effect) | Beta Distribution | (Wahlsten et al., 2003; Large-scale phenotyping) |

| Prepulse Inhibition (% Inhibition) | 65% (15%) | 0.8 - 1.8 (drug-induced deficit) | Normal (often arcsin transformed) | (Swerdlow et al., 2008; Consortium study) |

| Social Interaction Ratio | 1.5 (0.4) | 0.6 - 1.1 (pro-social effect) | Log-Normal | (Silverman et al., 2010; Multi-lab validation) |

Note: Ranges are illustrative. Actual parameter space definition must be based on specific experimental conditions, cohort, and model system.

Experimental Protocols for Parameter Estimation

Protocol 4.1: Systematic Pilot Study for Parameter Space Definition

Objective: To collect preliminary data for estimating means, variances, and distribution shapes of key behavioral endpoints. Materials: See "Scientist's Toolkit" below. Procedure:

- Power a Pilot: Conduct a pilot study with roughly 10-12 animals per group (control, naive). While not powered for significance, this provides initial distribution estimates.

- Assess Distribution: Test raw data for normality (Shapiro-Wilk test) and homoscedasticity (Levene's test). Apply transformations (log, square root) if necessary.

- Calculate Descriptive Statistics: Compute mean, SD, median, interquartile range, and skewness.

- Define Plausible Range: Calculate 95% confidence intervals for the mean and variance. Use these as the core of the plausible parameter space for simulation.

- Archive Raw Data: Store individual subject data publicly (e.g., OSF.io) to contribute to community meta-analyses of parameter distributions.
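With small pilot samples, one way to obtain the plausible ranges is a bootstrap. The sketch below swaps the analytic confidence intervals for percentile bootstrap intervals, which are themselves a Monte Carlo method and make no normality assumption. The pilot values are hypothetical numbers invented for illustration.

```python
import random
import statistics

def bootstrap_ci(data, stat, n_boot=5000, level=0.95, seed=0):
    """Percentile bootstrap confidence interval for an arbitrary statistic."""
    rng = random.Random(seed)
    n = len(data)
    # Resample the data with replacement and recompute the statistic each time.
    stats = sorted(stat(rng.choices(data, k=n)) for _ in range(n_boot))
    lower = stats[int((1.0 - level) / 2.0 * n_boot)]
    upper = stats[int((1.0 + level) / 2.0 * n_boot) - 1]
    return lower, upper

# Hypothetical pilot immobility times (s), n = 12.
pilot = [162, 148, 171, 139, 155, 180, 144, 158, 166, 151, 173, 147]

if __name__ == "__main__":
    print("95% CI for the mean:", bootstrap_ci(pilot, statistics.fmean))
    print("95% CI for the SD:  ", bootstrap_ci(pilot, statistics.stdev))
```

The two intervals can then serve as the bounds on control mean and SD when defining the simulation grid.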

Protocol 4.2: Monte Carlo Simulation for Power Analysis

Objective: To estimate the probability (power) of detecting a true effect across the defined parameter space. Procedure:

- Define Simulation Grid: Specify ranges for key parameters (e.g., effect size d from 0.3 to 1.0 in steps of 0.1; sample size n from 8 to 30 per group).

- Specify Data-Generating Process: Program a function that, given a parameter combination, generates synthetic datasets reflecting the assumed distribution (e.g., rnorm(n, mean = control_mean + d*pooled_sd, sd = pooled_sd)).

- Run Iterations: For each parameter combination, simulate 5,000-10,000 hypothetical experiments. For each, perform the planned statistical test (e.g., t-test).

- Calculate Empirical Power: The proportion of simulated experiments yielding a significant result (p < α, typically 0.05) is the estimated power for that parameter set.

- Visualize Power Surface: Create a contour or heat map plot showing power as a function of sample size and effect size.
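The core of this protocol can be sketched with the standard library alone. Because Python's standard library has no t-distribution, this sketch obtains the critical value from a simulated null distribution (d = 0) instead of a t table; that substitution, the normal data-generating process, and the equal group sizes are assumptions of the example.

```python
import math
import random
import statistics

def t_stat(a, b):
    """Two-sample pooled-variance t statistic for equal group sizes."""
    n = len(a)
    pooled_var = (statistics.variance(a) + statistics.variance(b)) / 2.0
    return (statistics.fmean(b) - statistics.fmean(a)) / math.sqrt(2.0 * pooled_var / n)

def simulate_abs_t(n, d, rng, control_mean=0.0, pooled_sd=1.0):
    """Generate one synthetic experiment and return |t|."""
    control = [rng.gauss(control_mean, pooled_sd) for _ in range(n)]
    treated = [rng.gauss(control_mean + d * pooled_sd, pooled_sd) for _ in range(n)]
    return abs(t_stat(control, treated))

def mc_power(n, d, alpha=0.05, n_sim=5000, seed=0):
    """Empirical power of a two-sided test at level alpha."""
    rng = random.Random(seed)
    # Critical value taken from the simulated null distribution (no true effect).
    null = sorted(simulate_abs_t(n, 0.0, rng) for _ in range(n_sim))
    crit = null[int((1.0 - alpha) * n_sim)]
    hits = sum(simulate_abs_t(n, d, rng) > crit for _ in range(n_sim))
    return hits / n_sim

def power_grid(sample_sizes, effect_sizes, n_sim=2000):
    """Power for every (n, d) combination, ready for a heat map."""
    return {(n, d): mc_power(n, d, n_sim=n_sim) for n in sample_sizes for d in effect_sizes}
```

A call such as `power_grid(range(8, 31, 2), [0.3, 0.5, 0.8, 1.0])` fills the grid defined in the first step; the resulting dictionary can be passed to any plotting library to draw the power surface.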

Diagrams and Visual Workflows

Workflow for Hypothesis-Driven Simulation

Title: Simulation-Based Experimental Design Workflow

Relationship Between Parameter Space, Hypothesis, and Power

Title: Parameter Space Informs Hypothesis & Power

The Scientist's Toolkit

Table 2: Research Reagent Solutions for Behavioral Parameter Estimation

| Item / Solution | Function / Purpose | Example Vendor / Tool |

|---|---|---|

| Open-Source Behavioral Software (e.g., DeepLabCut, ezTrack) | Provides automated, high-throughput, and unbiased scoring of animal behavior, yielding continuous data ideal for distributional analysis. | Mathis Lab; Pennington Lab |

| Statistical Software with Scripting (R, Python) | Enables custom simulation code, distribution fitting, and automated power analysis across parameter grids. | R (pwr, simr packages); Python (scipy, statsmodels) |

| Dedicated Power Analysis Software (SIMR, G*Power) | User-friendly interfaces for running simulations and computing power for complex designs. | R package; Universität Düsseldorf |

| Electronic Lab Notebook (ELN) with Data Links | Ensures raw behavioral data, analysis code, and simulation parameters are version-controlled and linked for full reproducibility. | LabArchives; Benchling |

| Reference Database of Published Effect Sizes | Provides empirical prior distributions for hypothesis generation (e.g., typical effect size of an SSRI in the FST). | Meta-analyses; AnimalStudyRegistry.org |

| High-Throughput Phenotyping System | Standardized, automated home-cage or arena systems for collecting large-scale pilot data to define cohort-specific parameters. | Noldus PhenoTyper; Tecniplas LAB-CAGE |

Understanding behavior requires integrating molecular, cellular, and circuit-level data. Monte Carlo (MC) simulation methods provide a powerful stochastic framework for modeling the probabilistic nature of molecular interactions (e.g., neurotransmitter-receptor binding, intracellular signaling cascades) and scaling their emergent effects to neuronal firing patterns and, ultimately, behavioral phenotypes. This application note details protocols for implementing such multi-scale simulations, with a focus on applications in neuropharmacology.

Application Notes: Multi-Scale Monte Carlo Simulation in Behavioral Neuroscience

Core Concept: Use MC methods to simulate stochasticity at a lower biological scale (e.g., molecular) and observe its propagation to a higher scale (e.g., neural network output/behavior).

Key Advantages:

- Handles Low-Copy Number Events: Accurately models stochastic binding and diffusion where deterministic ODEs fail.

- Parameter Exploration: Efficiently samples from probability distributions of kinetic parameters (e.g., binding affinity Kd, rate constants) to understand outcome variability.

- Linking Scales: Outputs from a molecular-scale MC simulation (e.g., postsynaptic current amplitude distribution) can serve as inputs to a network-scale model.

Table 1: Example Multi-Scale Simulation Parameters & Outputs

| Simulation Scale | Key Stochastic Parameters | Typical Output Metrics | Link to Next Scale |

|---|---|---|---|

| Molecular (Synaptic) | Neurotransmitter count, receptor state (open/closed/desensitized), Kd, kon, koff | Postsynaptic current (PSC) amplitude, PSC decay time constant, release probability | PSC amplitude distribution seeds network model synapse strength. |

| Cellular / Circuit | Synaptic weight distribution (from prior scale), ion channel gating, connectivity probability | Neuronal firing rate, local field potential (LFP) rhythms, population bursting dynamics | Spike-train output serves as input to behavioral readout model. |

| Systems / Behavioral | Network activation patterns, neuromodulator tone (e.g., diffuse dopamine level) | Decision variable trajectory, locomotor activity count, reward prediction error signal | Simulated behavioral readouts (e.g., % correct choices) can be compared to in vivo data. |

Detailed Experimental Protocols

Protocol 1: MC Simulation of Glutamatergic Synaptic Transmission

Aim: To simulate the stochastic release of glutamate and AMPA receptor activation to generate a distribution of excitatory postsynaptic currents (EPSCs).

Materials: Software: STEPS (STochastic Engine for Pathway Simulation), NEURON, or custom Python/R scripts with Gillespie2 or tau-leaping algorithms.

Procedure:

- Define the Reaction Scheme: Model the vesicle release, glutamate diffusion, and receptor binding.

  - Vesicle (V) -> Vesicle (V_released) + Glutamate (10000 molecules) upon arrival of an action potential (AP).

  - Glutamate (G) + AMPAR (R) <-> G:R (closed) with kon and koff.

  - G:R (closed) -> G:R (open) with rate α.

  - G:R (open) -> G:R (closed) with rate β.

  - G -> ∅ (glutamate clearance via uptake).

- Set Parameters: Use empirically derived values. Example:

  - kon = 5.0e6 M^-1 s^-1, koff = 100 s^-1, α = 1000 s^-1, β = 200 s^-1.

  - Uptake rate: 1e4 s^-1. Initial [AMPAR]: 50 molecules per synapse.

  - Run 1000 trials for one AP event.

- Execute Simulation: Implement using the Gillespie Stochastic Simulation Algorithm (SSA) within a defined volume (synaptic cleft).

- Analyze Output: For each trial, record the peak open channel count. Convert to conductance and then EPSC amplitude using a driving force (e.g., Vm = -70 mV, E_rev = 0 mV). Plot the histogram of EPSC amplitudes.
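A well-mixed version of this protocol can be written as a direct-method Gillespie SSA. The sketch ignores spatial diffusion, assumes a cleft volume of 1.4e-18 L (a disc roughly 150 nm in radius and 20 nm high) to convert kon into a per-pair stochastic rate, and assumes a 10 pS single-channel conductance for the EPSC conversion. Neither value comes from the protocol.

```python
import random

AVOGADRO = 6.022e23
CLEFT_VOLUME_L = 1.4e-18      # assumed cleft volume in liters

def run_trial(rng, n_glu=10_000, n_ampar=50, kon=5.0e6, koff=100.0,
              alpha=1000.0, beta=200.0, uptake=1.0e4, t_end=5e-3):
    """One Gillespie SSA trial after a single release; returns the peak open AMPAR count."""
    c_on = kon / (AVOGADRO * CLEFT_VOLUME_L)       # stochastic binding rate per G-R pair (1/s)
    g, r, closed, opened = n_glu, n_ampar, 0, 0
    t, peak = 0.0, 0
    while True:
        props = [c_on * g * r,     # G + R -> G:R (closed)
                 koff * closed,    # G:R (closed) -> G + R
                 alpha * closed,   # G:R (closed) -> G:R (open)
                 beta * opened,    # G:R (open) -> G:R (closed)
                 uptake * g]       # G -> 0 (uptake)
        total = sum(props)
        if total == 0.0:
            break
        t += rng.expovariate(total)                # waiting time to the next reaction
        if t > t_end:
            break
        pick, cum, idx = rng.random() * total, 0.0, 0
        for idx, a in enumerate(props):            # choose reaction in proportion to propensity
            cum += a
            if pick < cum:
                break
        if idx == 0:
            g, r, closed = g - 1, r - 1, closed + 1
        elif idx == 1:
            g, r, closed = g + 1, r + 1, closed - 1
        elif idx == 2:
            closed, opened = closed - 1, opened + 1
        elif idx == 3:
            closed, opened = closed + 1, opened - 1
        else:
            g -= 1
        peak = max(peak, opened)
    return peak

def peak_to_epsc_pa(peak_open, gamma_ps=10.0, vm_mv=-70.0, e_rev_mv=0.0):
    """Convert open-channel count to EPSC amplitude; pS * mV = fA, hence / 1000 for pA."""
    return peak_open * gamma_ps * (vm_mv - e_rev_mv) / 1000.0
```

Collecting `peak_to_epsc_pa(run_trial(rng))` over 1000 trials yields the amplitude histogram described in the analysis step. In pure Python each trial processes on the order of ten thousand reaction events, so dedicated tools such as STEPS are preferable for production runs or spatial models.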

Protocol 2: Incorporating MC Synaptic Output into a Network Model

Aim: To use the probabilistic EPSC distribution from Protocol 1 to simulate spike output in a feed-forward cortical microcircuit.

Procedure:

- Construct Network: Build a point-neuron network (100:25 excitatory:inhibitory) with integrate-and-fire dynamics. Define connectivity with 10% probability.

- Define Synaptic Strength: For each synaptic connection, draw the baseline EPSC amplitude from the distribution generated in Protocol 1. Assign this as the synaptic weight w.

- Stimulate and Simulate: Provide a patterned input to a subset of excitatory neurons. Run the network simulation using a discrete-time simulator (e.g., NEST or Brian2).

- Analyze Output: Record spike times of all neurons. Compute population firing rates, cross-correlations, and detect oscillatory activity in the LFP proxy.
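As a rough illustration of these steps, the sketch below builds the 100:25 network in plain NumPy instead of NEST or Brian2. All membrane parameters, the constant drive standing in for "patterned input", the inhibitory weight scaling, and the placeholder EPSC samples are assumptions made for the example; in practice the EPSC array would come from Protocol 1.

```python
import numpy as np


def simulate_lif_network(epsc_samples_pa, n_exc=100, n_inh=25, p_conn=0.1,
                         n_stim=20, t_sim_ms=500.0, dt_ms=0.1, seed=0):
    """Current-based leaky integrate-and-fire network.

    Returns a boolean spike raster of shape (time steps, neurons).
    """
    rng = np.random.default_rng(seed)
    n = n_exc + n_inh
    # conn[post, pre] is True where a synapse exists (10% probability, no autapses).
    conn = rng.random((n, n)) < p_conn
    np.fill_diagonal(conn, False)
    # Draw each synaptic weight w (pA) from the Protocol 1 EPSC amplitude samples.
    w = rng.choice(np.abs(epsc_samples_pa), size=(n, n)) * conn
    w[:, n_exc:] *= -4.0  # assumption: inhibitory synapses are negative and 4x stronger

    # Illustrative membrane parameters (assumptions, not taken from the protocol).
    tau_m, tau_syn = 20.0, 5.0                    # ms
    v_rest, v_th, v_reset = -70.0, -50.0, -65.0   # mV
    r_m = 0.1                                     # mV per pA (100 MOhm)
    i_ext = np.zeros(n)
    i_ext[:n_stim] = 250.0                        # pA, drive to a subset of E cells

    v = np.full(n, v_rest)
    i_syn = np.zeros(n)
    n_steps = int(round(t_sim_ms / dt_ms))
    spikes = np.zeros((n_steps, n), dtype=bool)
    syn_decay = np.exp(-dt_ms / tau_syn)
    for k in range(n_steps):
        noise = 20.0 * rng.standard_normal(n)     # pA, assumed background noise
        v += dt_ms / tau_m * (-(v - v_rest) + r_m * (i_syn + i_ext + noise))
        i_syn *= syn_decay
        fired = v >= v_th
        v[fired] = v_reset
        # Each presynaptic spike adds its weight to the postsynaptic current.
        i_syn += w[:, fired].sum(axis=1)
        spikes[k] = fired
    return spikes


# Hypothetical stand-in for the Protocol 1 output (EPSC amplitudes in pA).
placeholder_epscs = np.random.default_rng(1).normal(-25.0, 5.0, size=1000)
raster = simulate_lif_network(placeholder_epscs)
rates_hz = raster.sum(axis=0) / 0.5            # spikes per second over 500 ms
lfp_proxy = raster[:, :100].sum(axis=1)        # summed excitatory spiking per step
```

The summed excitatory spike count is only a crude LFP proxy; a dedicated simulator would be the more appropriate choice for conductance-based synapses, delays, and refractory periods.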

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Key Reagents & Computational Tools for Cross-Level Validation

| Item / Tool Name | Function / Purpose | Example Use Case |

|---|---|---|

| Fluorescent False Neurotransmitter (FFN) | Optical probe for visualizing vesicular release and recycling in presynaptic terminals. | Validate stochastic release probabilities assumed in MC synaptic model. |

| Genetically Encoded Calcium Indicators (GECIs; e.g., GCaMP) | Report neural activity (Ca2+ influx) in populations of neurons in vivo. | Compare simulated population activity dynamics (from Protocol 2) with in vivo calcium imaging data. |

| Conditional Knockout (cKO) Models | Enables cell-type or region-specific deletion of a target gene (e.g., a specific receptor subunit). | Test model predictions by altering a molecular parameter (e.g., kon) in vivo and measuring behavioral impact. |

| STEPS Software | 3D stochastic reaction-diffusion simulation platform specialized for molecular biology in neurons. | Direct implementation of Protocol 1 in a realistic morphological geometry. |

| NEURON Simulation Environment | Multi-scale modeling environment for constructing and simulating detailed neuronal and network models. | Integration of stochastic synaptic models (from STEPS) into biophysically detailed cells and networks. |

| PsychoPy / Bpod | Open-source systems for precise behavioral task control and data acquisition. | Generate quantitative behavioral data (e.g., choice, reaction time) for comparison with systems-level model outputs. |

Visualizations

Title: Monte Carlo Synapse Model

Title: Multi-Scale MC Simulation & Validation Workflow

Building Better Brain Models: Step-by-Step Applications in Neuroscience & Drug Development

Within the context of advancing Monte Carlo (MC) simulation methodologies for behavioral neuroscience and drug development research, this protocol details the process of translating a neuroscientific research question into a robust, validated computational architecture. MC simulations are indispensable for modeling stochastic neural processes, receptor dynamics, and the effects of pharmacological interventions under uncertainty.

Core Workflow: From Question to Architecture

The foundational workflow for designing a simulation in this domain follows a structured pipeline, essential for ensuring biological relevance and statistical rigor.

Diagram Title: Simulation Design Workflow for Neuroscience

Key Application Notes & Protocols

Protocol: Defining the Research Question & Conceptual Model

Objective: To frame a testable hypothesis about a neuropharmacological mechanism into a formalized conceptual model.

Procedure:

- Hypothesis Specification: Clearly state the relationship between variables (e.g., "Blockade of dopamine D2 receptors reduces simulated neural ensemble burst firing in a stochastic, dose-dependent manner").

- System Boundary Definition: Determine the scope (e.g., synaptic cleft, single neuron, microcircuit).

- State Variables & Interactions: Identify key entities (e.g., neurotransmitter concentration, receptor states, ion channels) and their hypothesized interactions.

- Stochastic Element Identification: Pinpoint sources of randomness (e.g., ligand-receptor binding, channel gating, vesicle release) essential for MC modeling.

- Documentation: Create a diagram of the proposed biological signaling pathway or system dynamics.

Example: Modeling NMDA Receptor Modulation

Diagram Title: Stochastic NMDA Receptor Signaling Model

Protocol: Parameterization from Experimental Data

Objective: To populate the model with biologically realistic parameters and define their statistical distributions for MC sampling.

Procedure:

- Literature Mining: Extract parameter values (e.g., receptor binding rates, diffusion coefficients) from peer-reviewed primary sources.

- Distribution Fitting: For each stochastic parameter, determine an appropriate probability distribution (e.g., Exponential for wait times, Binomial for binding events).

- Table Creation: Compile parameters, sources, and distributions into a master table.

Table 1: Example Parameters for a Synaptic MC Model

| Parameter | Symbol | Value (Mean ± SD or Range) | Distribution for MC | Source (Example) |

|---|---|---|---|---|

| Glutamate Vesicle Release Probability | Prel | 0.3 ± 0.1 | Beta(α, β) | Holderith et al., Neuron (2012) |

| Glutamate Diffusion Constant | DGlu | 0.2 - 0.4 μm²/ms | Uniform | Nielsen et al., J. Neurosci (2004) |

| NMDA Receptor Open Time | τopen | 10 - 100 ms | Log-Normal | Popescu et al., Nature Neurosci (2004) |

| MK-801 Binding Rate | konMK801 | 5.0 x 10⁶ M⁻¹s⁻¹ | Fixed (Deterministic) | Huettner & Bean, PNAS (1988) |

| D2 Receptor EC50 for Modulation | EC50 | 50 nM | Log-Normal | Seamans & Yang, Prog. Neurobiol (2004) |
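One way to turn rows of such a table into sampling distributions is sketched below. The method-of-moments conversion for the Beta distribution and the reading of "10 - 100 ms" as a central 95% interval of a log-normal are assumptions made for illustration; the EC50 row is omitted because the table gives no spread for it.

```python
import numpy as np


def beta_from_mean_sd(mean, sd):
    """Method-of-moments Beta(a, b) parameters for a given mean and SD."""
    common = mean * (1.0 - mean) / sd**2 - 1.0
    return mean * common, (1.0 - mean) * common


def lognormal_from_range(low, high, z=1.96):
    """Log-normal (mu, sigma) whose central 95% interval spans [low, high]."""
    mu = 0.5 * (np.log(low) + np.log(high))
    sigma = (np.log(high) - np.log(low)) / (2.0 * z)
    return mu, sigma


def sample_parameters(n_sets, seed=0):
    """Draw n_sets parameter sets for the synaptic MC model."""
    rng = np.random.default_rng(seed)
    a, b = beta_from_mean_sd(0.3, 0.1)              # P_rel = 0.3 +/- 0.1
    mu, sigma = lognormal_from_range(10.0, 100.0)   # tau_open in ms
    return {
        "p_rel": rng.beta(a, b, n_sets),
        "d_glu_um2_per_ms": rng.uniform(0.2, 0.4, n_sets),
        "tau_open_ms": rng.lognormal(mu, sigma, n_sets),
        "kon_mk801_per_M_s": np.full(n_sets, 5.0e6),  # fixed (deterministic)
    }


draws = sample_parameters(10_000)
```

With a mean of 0.3 and SD of 0.1, the moment match gives approximately Beta(6, 14), which keeps every sampled release probability inside (0, 1).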

Protocol: Computational Architecture & MC Engine Implementation

Objective: To design and implement the software architecture that executes the stochastic simulation.

Procedure:

- Algorithm Selection: Choose an appropriate MC algorithm (e.g., Gillespie's Stochastic Simulation Algorithm (SSA) for chemical kinetics, or Kinetic Monte Carlo (KMC) for state transitions).

- Modular Design: Structure code into modules: Parameter Manager, Stochastic Engine, State Updater, Data Recorder.

- Pseudo-Random Number Generator (PRNG): Select a high-quality, reproducible PRNG (e.g., Mersenne Twister).

- Implementation: Code the core event loop: a. Initialize system state. b. Calculate propensities for all possible stochastic events. c. Determine next event time and type using random draws. d. Update system state and time. e. Record data at intervals. f. Repeat until stop condition.

- Parallelization Strategy: For parameter sweeps, design architecture to run independent replicates on multiple cores (embarrassingly parallel).
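The event loop (steps a to f) and the replicate strategy can be sketched as follows. The two-state channel used as the test system and the function names are hypothetical. Note that the example uses NumPy's default generator (PCG64) rather than Mersenne Twister; `np.random.MT19937` could be substituted. Independent replicate streams are derived with `SeedSequence.spawn`, so each replicate could be dispatched to a separate core.

```python
import numpy as np


def gillespie(x0, stoich, propensities, t_end, rng, record_dt):
    """Direct-method SSA; returns the state sampled on a regular time grid."""
    x = np.array(x0, dtype=np.int64)                  # a. initialize system state
    t = 0.0
    grid = np.arange(0.0, t_end + 0.5 * record_dt, record_dt)
    out = np.empty((grid.size, x.size), dtype=np.int64)
    next_rec = 0
    while True:
        a = propensities(x)                           # b. propensities of all events
        a_total = a.sum()
        # c. time of the next event (infinite if nothing can fire)
        t_next = t + rng.exponential(1.0 / a_total) if a_total > 0 else np.inf
        # e. record the current state at every grid point before the next event
        while next_rec < grid.size and grid[next_rec] <= t_next:
            out[next_rec] = x
            next_rec += 1
        if next_rec == grid.size:                     # f. stop condition
            break
        event = rng.choice(a.size, p=a / a_total)     # c. which event fires
        x = x + stoich[event]                         # d. update state ...
        t = t_next                                    #    ... and time
    return grid, out


def run_replicates(n_replicates, root_seed=12345):
    """Independent replicates of a closed <-> open channel population."""
    stoich = np.array([[-1, 1],    # closed -> open
                       [1, -1]])   # open -> closed

    def rates(x):
        return np.array([1000.0 * x[0], 200.0 * x[1]])

    # One statistically independent child seed per replicate.
    child_seeds = np.random.SeedSequence(root_seed).spawn(n_replicates)
    open_counts = []
    for child in child_seeds:
        rng = np.random.default_rng(child)
        _, traj = gillespie([50, 0], stoich, rates, t_end=0.02,
                            rng=rng, record_dt=0.001)
        open_counts.append(traj[:, 1])
    return np.array(open_counts)


open_counts = run_replicates(50)
```

Because each replicate owns its own child seed, the loop in `run_replicates` can be replaced by a process pool or a cluster job array without changing the results.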

Protocol: Model Verification, Validation & Sensitivity Analysis

Objective: To ensure the simulation is correct (verification), biologically accurate (validation), and to identify critical parameters.

Procedure:

Verification (Is the model built right?):

- Unit Testing: Test individual functions (e.g., PRNG distribution, event scheduler).

- Sanity Checks: Run simulations with reduced complexity or known limits.

- Convergence Tests: Ensure results stabilize with increasing MC replicates.

Validation (Is the right model built?):

- Qualitative Validation: Compare simulation output trends with established literature phenomena.

- Quantitative Validation: Use statistical tests (e.g., Kolmogorov-Smirnov) to compare simulated distributions with withheld experimental data.

Sensitivity Analysis (Global Uncertainty Quantification):

- Method: Use variance-based methods (e.g., Sobol indices) or Monte Carlo filtering.

- Process: a. Define plausible ranges for all uncertain input parameters. b. Sample parameter sets using a space-filling design (e.g., Latin Hypercube Sampling). c. Run the MC simulation for each parameter set. d. Calculate sensitivity indices for each parameter on key outputs (e.g., burst frequency).
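A compact sketch of steps a to d is shown below, using SciPy's Latin Hypercube sampler. Two substitutions are made for brevity and should be read as assumptions: the simulation is replaced by a hypothetical toy function (`toy_burst_frequency`), and Spearman rank correlation stands in for Sobol indices, which a library such as SALib would compute.

```python
import numpy as np
from scipy.stats import qmc, spearmanr


def toy_burst_frequency(params, rng, n_replicates=200):
    """Hypothetical stand-in model: mean over noisy MC replicates."""
    p_rel, tau_open_ms, d_glu = params
    # d_glu deliberately has no influence in this toy model.
    mean_out = 20.0 * p_rel + 0.05 * tau_open_ms + 0.0 * d_glu
    return rng.normal(mean_out, 1.0, n_replicates).mean()


def lhs_sensitivity(n_sets=256, seed=0):
    names = ["p_rel", "tau_open_ms", "d_glu"]
    lower = [0.1, 10.0, 0.2]                      # a. plausible parameter ranges
    upper = [0.5, 100.0, 0.4]
    sampler = qmc.LatinHypercube(d=len(names), seed=seed)
    param_sets = qmc.scale(sampler.random(n_sets), lower, upper)  # b. LHS design
    rng = np.random.default_rng(seed)
    outputs = np.array([toy_burst_frequency(p, rng) for p in param_sets])  # c. run
    # d. rank correlation between each input and the output
    return {name: spearmanr(param_sets[:, j], outputs)[0]
            for j, name in enumerate(names)}


indices = lhs_sensitivity()
```

Rank correlations only capture monotonic main effects; variance-based indices are preferable when interactions between parameters are expected.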

Table 2: Key Research Reagent Solutions & Computational Tools

| Item / Reagent / Tool | Function in Simulation Pipeline | Example / Vendor |

|---|---|---|

| Experimental Data Sources | Provide empirical parameters for model grounding. | In vitro electrophysiology (patch-clamp), in vivo calcium imaging, radioligand binding assays (Kd, Bmax). |

| Literature Databases | Source for parameter extraction and validation benchmarks. | PubMed, Google Scholar, IONCHANNELBOOK (parameter repository). |

| High-Performance Computing (HPC) Cluster | Executes thousands of independent MC replicates or parameter sweeps. | SLURM workload manager, cloud computing (AWS, GCP). |

| Stochastic Simulation Algorithm (SSA) | Core engine for simulating coupled biochemical stochastic events. | Gillespie's Direct Method, Next Reaction Method, τ-leaping. |

| Programming Language & Libraries | Implementation and analysis environment. | Python (NumPy, SciPy, stochpy), Julia (DifferentialEquations.jl), C++ (for performance-critical cores). |

| Sensitivity Analysis Library | Quantifies parameter influence on model output. | SALib (Python), gsa package in Julia. |

| Data & Visualization Software | Analyzes and presents simulation results. | Pandas, Matplotlib, Seaborn (Python); R ggplot2. |

| Version Control System | Manages code integrity and collaboration. | Git with GitHub or GitLab. |

This application note details the integration of Monte Carlo (MC) simulation techniques to model dose-response curves and PK/PD relationships, framed within a thesis on enhancing behavioral neuroscience research power. By accounting for biological variability and uncertainty, these methods provide robust predictions of drug effects in vivo, crucial for preclinical drug development and experimental design.

Pharmacokinetics (PK) describes what the body does to a drug (absorption, distribution, metabolism, excretion), while pharmacodynamics (PD) describes what the drug does to the body (therapeutic and adverse effects). Monte Carlo simulations employ repeated random sampling to estimate the probability distribution of outcomes in complex, variable systems. In behavioral neuroscience, this is critical for predicting individual variability in drug response, optimizing dosing regimens, and increasing the statistical power of experimental designs.

Core Quantitative Data & Parameters

Table 1: Common PK Parameters for Simulation

| Parameter | Symbol | Typical Value Range | Description |

|---|---|---|---|

| Bioavailability | F | 0.2 - 1.0 | Fraction of dose reaching systemic circulation |

| Absorption Rate Constant | Ka | 0.1 - 5.0 h⁻¹ | First-order rate constant for drug absorption |

| Volume of Distribution | Vd | 0.1 - 20 L/kg | Apparent volume in which a drug distributes |

| Elimination Rate Constant | Ke | 0.01 - 1.0 h⁻¹ | First-order rate constant for drug elimination |

| Clearance | CL | 0.01 - 5.0 L/h/kg | Volume of plasma cleared of drug per unit time |

Table 2: Common PD (Hill Equation) Parameters for Simulation

| Parameter | Symbol | Typical Value Range | Description |

|---|---|---|---|

| Maximum Effect | E_max | 0 - 100% | Maximum achievable pharmacologic effect |

| Potency (EC50) | EC₅₀ | Variable (nM-μM) | Drug concentration producing 50% of E_max |

| Hill Coefficient | n | 0.5 - 3.0 | Steepness of the dose-response curve |

| Baseline Effect | E₀ | Variable | Effect in the absence of drug |

Table 3: Variability Sources and Distributions for Monte Carlo Sampling

| Variability Source | Distribution Type | Example CV% | Application |

|---|---|---|---|

| Inter-individual PK | Log-normal | 20-40% | Vd, CL |

| Inter-occasional PK | Log-normal | 10-25% | Ka, F |

| Residual Error (PK) | Normal or Proportional | 5-20% | Plasma concentration measures |

| PD Parameter Variability | Log-normal | 30-50% | EC₅₀, E_max |

| PD Response Error | Normal or Logistic | 10-30% | Behavioral assay readout |

Experimental Protocols

Protocol 1: Simulating a Population Dose-Response Curve

Objective: To generate a simulated dose-response relationship for a novel psychoactive compound accounting for population variability.

Materials: See "The Scientist's Toolkit" below.

Procedure:

- Define Base PK Model: Select an appropriate compartmental model (e.g., one-compartment oral). Set population mean values for PK parameters (Ka, Vd/F, CL/F).

- Define PD Model: Use the Hill equation: E = E₀ + (E_max * Cⁿ)/(EC₅₀ⁿ + Cⁿ), where C is the plasma concentration at steady state or a specific time.

- Define Variability: Assign distributions (typically log-normal) and coefficients of variation (CV%) to key PK and PD parameters (e.g., CL, EC₅₀).

- Monte Carlo Loop: For i = 1 to N (N=10,000 recommended): a. For each virtual subject, randomly sample a value for each parameter from its defined distribution. b. For a series of doses (e.g., 0.1, 1, 3, 10, 30 mg/kg), calculate the steady-state plasma concentration (Css) using sampled PK parameters: Css = (F * Dose)/(CL * Dosing Interval). c. Calculate the corresponding effect (E) for each dose using the sampled PD parameters. d. Store the effect for each dose for the virtual subject.

- Analysis: Calculate the median effect and prediction intervals (e.g., 5th, 95th percentiles) at each dose level. Plot dose (log scale) vs. population effect.

- Output: Simulated dose-response curve with prediction bands and an estimated ED₅₀ distribution.
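The Monte Carlo loop above vectorizes naturally. The sketch below assumes hypothetical population means and CVs (none are given in the protocol), keeps E_max fixed, and treats Css as the average steady-state concentration. With E₀ = 0, each subject's ED₅₀ follows from setting Css = EC₅₀, giving ED₅₀ = EC₅₀ · CL · τ / F.

```python
import numpy as np


def lognormal_samples(rng, mean, cv, size):
    """Log-normal draws with the given arithmetic mean and coefficient of variation."""
    sigma2 = np.log(1.0 + cv**2)
    mu = np.log(mean) - 0.5 * sigma2
    return rng.lognormal(mu, np.sqrt(sigma2), size)


def simulate_dose_response(doses_mg_per_kg, n_subjects=10_000, seed=0):
    rng = np.random.default_rng(seed)
    # Hypothetical population values (assumptions for illustration only).
    f = 0.5                                                  # bioavailability
    tau_h = 24.0                                             # dosing interval, h
    cl = lognormal_samples(rng, 1.0, 0.30, n_subjects)       # clearance, L/h/kg
    ec50 = lognormal_samples(rng, 0.05, 0.40, n_subjects)    # potency, mg/L
    e0, e_max, hill_n = 0.0, 80.0, 1.5                       # fixed PD parameters
    doses = np.asarray(doses_mg_per_kg, dtype=float)
    # Css = F * Dose / (CL * dosing interval); rows = subjects, columns = doses.
    css = f * doses[None, :] / (cl[:, None] * tau_h)
    effect = e0 + e_max * css**hill_n / (ec50[:, None]**hill_n + css**hill_n)
    bands = np.percentile(effect, [5, 50, 95], axis=0)       # prediction interval
    ed50 = ec50 * cl * tau_h / f                             # per-subject ED50, mg/kg
    return bands, ed50


bands, ed50 = simulate_dose_response([0.1, 1, 3, 10, 30])
```

Plotting `bands[1]` against dose on a log axis, with `bands[0]` and `bands[2]` as the shaded region, gives the population curve described in the analysis step.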

Protocol 2: Simulating a Time-Course PK/PD Experiment

Objective: To simulate the time-dependent effect of a drug based on its PK profile and the direct-link PD model.

Procedure:

- Define PK Time Course: Using the sampled PK parameters for a virtual subject, simulate plasma concentration over time post-dose: C(t) = (F * Dose * Ka)/(Vd * (Ka - Ke)) * (e^(-Ke * t) - e^(-Ka * t)).

- Link PK to PD: For each time point t, calculate the drug effect E(t) using the Hill equation, where C = C(t). Assume no effect delay (direct link model).

- Introduce Hysteresis (if applicable): For drugs with a delayed effect (counter-clockwise hysteresis), implement an effect compartment model. Add a first-order rate constant k_e0 governing the equilibration between plasma and effect site.

- Population Simulation: Repeat steps 1-2/3 for N virtual subjects.

- Analysis: Plot median and prediction intervals for concentration-time and effect-time profiles. Calculate key metrics like maximum effect (E_max,obs), time of maximum effect, and effect duration.
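A minimal sketch of this time-course simulation follows, covering both the direct-link model and the optional effect compartment. The population medians, variability terms, and the forward-Euler integration of dCe/dt = k_e0 · (Cp − Ce) are assumptions chosen for illustration.

```python
import numpy as np


def simulate_time_course(n_subjects=1000, dose_mg_per_kg=10.0,
                         ke0_per_h=None, seed=0):
    """Population PK/PD time course; returns time, concentration and effect bands."""
    rng = np.random.default_rng(seed)
    t_h = np.linspace(0.0, 24.0, 481)              # 3-minute steps over 24 h

    def lognormal(median, sigma):
        # Column vector of per-subject values (hypothetical variability).
        return median * np.exp(rng.normal(0.0, sigma, (n_subjects, 1)))

    ka, ke = lognormal(1.0, 0.2), lognormal(0.2, 0.2)    # h^-1
    vd, ec50 = lognormal(5.0, 0.3), lognormal(0.5, 0.4)  # L/kg, mg/L
    f, e0, e_max, hill_n = 0.5, 0.0, 100.0, 1.5

    # C(t) = F*Dose*Ka / (Vd*(Ka-Ke)) * (exp(-Ke*t) - exp(-Ka*t))
    c_plasma = (f * dose_mg_per_kg * ka / (vd * (ka - ke))
                * (np.exp(-ke * t_h) - np.exp(-ka * t_h)))

    c_effect = c_plasma                            # direct link: no delay
    if ke0_per_h is not None:
        # Effect compartment: dCe/dt = ke0 * (Cp - Ce), forward Euler, Ce(0) = 0.
        c_effect = np.zeros_like(c_plasma)
        dt = t_h[1] - t_h[0]
        for k in range(1, t_h.size):
            c_effect[:, k] = (c_effect[:, k - 1]
                              + dt * ke0_per_h * (c_plasma[:, k - 1] - c_effect[:, k - 1]))

    effect = e0 + e_max * c_effect**hill_n / (ec50**hill_n + c_effect**hill_n)
    conc_bands = np.percentile(c_plasma, [5, 50, 95], axis=0)
    effect_bands = np.percentile(effect, [5, 50, 95], axis=0)
    return t_h, conc_bands, effect_bands


t_h, conc_bands, direct_effect = simulate_time_course()
_, _, delayed_effect = simulate_time_course(ke0_per_h=0.5)
t_peak_direct = t_h[np.argmax(direct_effect[1])]
t_peak_delayed = t_h[np.argmax(delayed_effect[1])]
```

Comparing `t_peak_direct` with `t_peak_delayed` shows the hysteresis introduced by the effect compartment: the median effect peaks later than the median plasma concentration when k_e0 is finite.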

Visualizing Relationships & Workflows

Title: Monte Carlo PK/PD Simulation Workflow

Title: Example GPCR Signaling Pathway for PK/PD Modeling

The Scientist's Toolkit: Research Reagent Solutions

Table 4: Essential Materials for PK/PD Simulation Studies

| Item/Category | Function/Description | Example Vendor/Software |

|---|---|---|

| Pharmacokinetic Modeling Software | Platform for building PK/PD models, running simulations, and statistical analysis. | Certara Phoenix NLME, R (mrgsolve, RxODE), MATLAB SimBiology, NONMEM |

| Statistical Programming Environment | For custom Monte Carlo scripting, data manipulation, and visualization. | R, Python (NumPy, SciPy, pandas), Julia |

| Behavioral Data Acquisition System | Measures in vivo PD endpoints (e.g., locomotor activity, operant responding). | Noldus EthoVision, Med Associates operant chambers, ANY-maze |

| In Vivo Microdialysis/HPLC | For validating PK simulations by measuring actual drug concentrations in brain/plasma. | Harvard Apparatus pumps, CMA probes, Thermo Fisher Scientific HPLC |

| Chemical Standards & Assay Kits | For calibrating concentration assays. Analytical grade drug compound, ELISA/LC-MS/MS kits. | Sigma-Aldrich, Cayman Chemical, Tecan |

| High-Performance Computing (HPC) Resources | Cloud or cluster computing for large-scale (N>10,000) Monte Carlo runs. | Amazon Web Services (AWS), Google Cloud Platform, local computing clusters |

Decision-making in behavioral neuroscience is increasingly modeled as a probabilistic process, where organisms learn and adapt based on uncertain outcomes. This paradigm aligns with the core thesis that Monte Carlo simulations provide the necessary computational power to capture the stochasticity inherent in neural circuits and behavioral choices. This Application Note details protocols and models for studying these circuits, with a focus on translating neurobiological data into testable, simulated frameworks for drug development.

Table 1: Key Parameters for Probabilistic Learning Models (e.g., Two-Armed Bandit Task)

| Parameter | Typical Value (Range) | Description | Neural Correlate |

|---|---|---|---|

| Learning Rate (α) | 0.3 - 0.7 | Speed of value update per trial. | Striatal dopamine-dependent plasticity. |

| Inverse Temperature (β) | 2.0 - 5.0 | Choice stochasticity/exploration. | Prefrontal cortex (PFC) to striatum output. |

| Outcome Probability (P) | 0.2 - 0.8 | Reward probability for a given option. | Environment variable. |

| Temporal Discount Factor (γ) | 0.9 - 0.99 | Devaluation of future rewards. | Ventromedial PFC, hippocampus. |

| Perseveration Bias (ρ) | -0.5 - 0.5 | Tendency to repeat/avoid previous choice. | Anterior cingulate cortex (ACC) conflict monitoring. |

Table 2: Neural Activity Correlates in Rodent Probabilistic Reversal Learning

| Brain Region | Recording Modality | Change Associated with Positive Prediction Error | Change Associated with Negative Prediction Error | Key Reference (2023-2024) |

|---|---|---|---|---|

| Ventral Striatum (NAc) | Fiber Photometry (DA) | ↑ Dopamine Transient | ↓ Dopamine Dip | (Saunders et al., 2023) |

| Orbitofrontal Cortex (OFC) | Calcium Imaging | ↑ Activity at Unexpected Reward | ↑ Activity at Reward Omission (Re-evaluation) | (Bari et al., 2024) |

| Dorsal Medial Striatum | Electrophysiology | ↑ Firing for Chosen Action Value | ↑ Firing for Action-Outcome Contingency Shift | (Lee et al., 2023) |

| Anterior Cingulate Cortex (ACC) | Electrophysiology | Sustained Activity during Decision Uncertainty | ↑ Error-Related Negativity Signal | (Fitzgerald et al., 2024) |

Experimental Protocols

Protocol 1: Probabilistic Reversal Learning Task in Rodents

Objective: To assess behavioral flexibility and model decision-making parameters. Materials: Operant conditioning chambers with two retractable levers or nosepoke ports, reward delivery system (liquid or pellet), behavioral software (e.g., Bpod, Med-PC). Procedure:

- Habituation & Magazine Training: Habituate animal to chamber. Train to collect reward from magazine.

- Initial Discrimination: Present two choices (A & B). Choice A yields reward with high probability (e.g., P=0.8), Choice B yields reward with low probability (e.g., P=0.2). Conduct daily sessions of 100-200 trials.

- Criterion & Reversal: Once a performance criterion is met (e.g., >80% choice of A over 20 trials), reverse the contingency without cue (A now P=0.2, B now P=0.8).

- Data Collection: Record choice, outcome (reward/omission), reaction time, and magazine entry latency.

- Model Fitting: Fit trial-by-trial choice data using a Q-learning or Rescorla-Wagner model via maximum likelihood estimation, e.g., with a general-purpose optimizer in MATLAB (fmincon) or Python (scipy.optimize.minimize); Bayesian estimation (e.g., PyMC) is an alternative.

  - Model: Q_chosen(t+1) = Q_chosen(t) + α * (outcome(t) - Q_chosen(t))
  - Choice probability via softmax: P(choice) = exp(β * Q_choice) / Σ exp(β * Q_all)
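The update rule and softmax above can be fitted by minimizing the negative log-likelihood of the observed choices. The sketch below simulates a hypothetical agent and then recovers α and β with `scipy.optimize.minimize`; the starting values, parameter bounds, and simulated data are assumptions for illustration, and real sessions would replace `choices` and `outcomes`.

```python
import numpy as np
from scipy.optimize import minimize


def simulate_bandit(alpha, beta, p_reward=(0.8, 0.2), n_trials=200, rng=None):
    """Simulate a Q-learning agent on a two-armed bandit."""
    rng = np.random.default_rng() if rng is None else rng
    q = np.zeros(2)
    choices = np.empty(n_trials, dtype=int)
    outcomes = np.empty(n_trials)
    for t in range(n_trials):
        logits = beta * q
        p = np.exp(logits - logits.max())      # softmax, shifted for stability
        p /= p.sum()
        c = rng.choice(2, p=p)
        r = float(rng.random() < p_reward[c])
        q[c] += alpha * (r - q[c])             # Q_chosen update
        choices[t], outcomes[t] = c, r
    return choices, outcomes


def negative_log_likelihood(params, choices, outcomes):
    """Negative log-likelihood of the choices under Q-learning with softmax."""
    alpha, beta = params
    q = np.zeros(2)
    nll = 0.0
    for c, r in zip(choices, outcomes):
        logits = beta * q
        shifted = logits - logits.max()
        log_p = shifted - np.log(np.sum(np.exp(shifted)))
        nll -= log_p[c]
        q[c] += alpha * (r - q[c])
    return nll


def fit_q_learning(choices, outcomes):
    """Maximum likelihood estimates of (alpha, beta) within assumed bounds."""
    result = minimize(negative_log_likelihood, x0=[0.5, 3.0],
                      args=(choices, outcomes), method="L-BFGS-B",
                      bounds=[(1e-3, 1.0), (1e-3, 20.0)])
    return result.x


choices, outcomes = simulate_bandit(0.4, 4.0, n_trials=500,
                                    rng=np.random.default_rng(0))
alpha_hat, beta_hat = fit_q_learning(choices, outcomes)
```

Running such a parameter-recovery check on simulated agents before fitting animal data is a useful way to confirm that the task provides enough trials to identify α and β.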

Protocol 2: In Vivo Calcium Imaging During Decision-Making

Objective: To record neural ensemble dynamics in prefrontal-striatal circuits during probabilistic learning. Materials: Head-mounted miniaturized microscope (e.g., Inscopix nVoke), GCaMP6f/virus-expressing mice, GRIN lens implanted in target region (e.g., OFC), data acquisition software. Procedure:

- Surgery: Stereotaxically inject AAV expressing GCaMP6f under a neuronal promoter (e.g., CaMKIIa). Implant GRIN lens above target region. Allow 3-4 weeks for expression and recovery.

- Habituation: Habituate animal to head-fixed or freely moving setup with microscope attached.

- Task Execution: Animal performs the probabilistic learning task (Protocol 1) while neural activity is recorded.

- Data Processing: Use manufacturer's software (Inscopix Data Processing) for motion correction, source extraction, and deconvolution (e.g., CNMF-E) to extract fluorescence transients (ΔF/F) and inferred spike rates.

- Analysis: Align neural data to behavioral events (cue, choice, outcome). Use regression models to identify neurons encoding chosen value, prediction error, or decision variables. Perform population analysis (e.g., PCA, demixed PCA) to trace decision trajectories.

Monte Carlo Simulation Protocol

Protocol 3: Simulating Neural Circuit Dynamics for Drug Effect Prediction

Objective: To use Monte Carlo methods to simulate stochastic neural activity and predict behavioral effects of pharmacological perturbation. Materials: High-performance computing cluster, simulation software (NEURON, Brian2, or custom Python/R scripts). Procedure:

- Define Network Architecture: Implement a reduced model of the PFC-ACC-striatum circuit. Represent each region as a population of stochastic leaky integrate-and-fire neurons or as rate-based units.

- Parameterize Model: Set baseline synaptic weights, time constants, and noise levels based on literature (see Table 1). Incorporate dopamine as a neuromodulator that scales learning rate (α) and inverse temperature (β).

- Implement Learning: Use an actor-critic framework where the striatum (actor) selects actions based on PFC (critic) value estimates. Synaptic plasticity follows a dopamine-dependent STDP rule.

- Run Monte Carlo Simulations: a. For each virtual "subject" (parameter set), run 1000+ trials of the probabilistic task. b. Introduce randomness at multiple levels: spike generation (Poisson process), synaptic release (probabilistic), and reward delivery (per task probability). c. Repeat simulations 1000 times to build a distribution of possible behavioral outcomes (e.g., trials to reversal).

- Pharmacological Intervention: Simulate a drug effect by systematically altering a parameter (e.g., reduce α to simulate D2 antagonist, increase β to simulate enhanced norepinephrine). Re-run the Monte Carlo ensemble.

- Output Analysis: Compare pre- and post-intervention distributions of key behavioral metrics using statistical tests (e.g., Kolmogorov-Smirnov). Generate predictions for in vivo experiments.
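The ensemble logic of steps 4 to 6 can be illustrated with a heavily reduced stand-in: instead of a spiking PFC-ACC-striatum circuit, each virtual subject is a Q-learning agent whose α and β play the role of the dopamine-modulated parameters. The fixed reversal point, the criterion window, and the parameter values for "baseline" and "drug" are assumptions made for the example.

```python
import numpy as np
from scipy.stats import ks_2samp


def trials_to_reversal(alpha, beta, rng, n_pre=100, n_post=300,
                       window=20, criterion=0.8):
    """Trials after an uncued reversal until the new best option is chosen
    on more than `criterion` of the last `window` trials."""
    q = np.zeros(2)
    p_reward = np.array([0.8, 0.2])
    recent = []
    for t in range(n_pre + n_post):
        if t == n_pre:
            p_reward = p_reward[::-1]          # contingency reversal
        p_b = 1.0 / (1.0 + np.exp(-beta * (q[1] - q[0])))  # two-option softmax
        c = int(rng.random() < p_b)
        r = float(rng.random() < p_reward[c])  # stochastic reward delivery
        q[c] += alpha * (r - q[c])
        if t >= n_pre:
            recent.append(c == 1)
            if len(recent) >= window and sum(recent[-window:]) > criterion * window:
                return t - n_pre + 1
    return n_post                              # criterion not reached (censored)


def simulate_ensemble(alpha, beta, n_subjects=1000, seed=0):
    """Distribution of trials-to-reversal across virtual subjects."""
    rng = np.random.default_rng(seed)
    return np.array([trials_to_reversal(alpha, beta, rng)
                     for _ in range(n_subjects)])


# Hypothetical intervention: a lower learning rate under drug.
baseline = simulate_ensemble(alpha=0.5, beta=4.0, n_subjects=300, seed=1)
drug = simulate_ensemble(alpha=0.1, beta=4.0, n_subjects=300, seed=2)
ks_statistic, p_value = ks_2samp(baseline, drug)
```

The same comparison applies unchanged when `trials_to_reversal` is replaced by a full network simulation; only the cost per virtual subject changes.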

Visualizations

Diagram 1: Core Probabilistic Learning Signaling Pathway

Diagram 2: Monte Carlo Simulation Workflow for Drug Testing

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Reagents and Materials for Decision-Making Circuit Research

| Item | Function & Application | Example Product/Model |

|---|---|---|

| GCaMP6f/v AAV | Genetically encoded calcium indicator for in vivo neural activity imaging. Enables recording of population dynamics during behavior. | AAV9-syn-GCaMP6f (Addgene viral prep) |

| Dopamine Sensor AAV | Specific detection of dopamine transients via GRABDA or dLight sensors. Critical for correlating neuromodulator release with choice. | AAV-hSyn-DA2m (Addgene) |

| Fiber Photometry System | System for recording fluorescence signals from genetically encoded indicators via an implanted optical fiber. | Tucker-Davis Technologies RZ5P system with LEDs (405nm/465nm) |

| Miniature Microscope | Head-mounted fluorescence microscope for calcium imaging in freely moving rodents. | Inscopix nVoke2 (integrated optogenetics + imaging) |

| Operant Conditioning Chamber | Configurable test chamber for automated behavioral tasks with precise control of stimuli and reward. | Med Associates operant chamber with Bpod controller |

| NeuroML-Compatible Simulator | Software for building and simulating biophysically realistic or reduced neural network models. | NEURON Simulation Environment; Brian2 (Python) |

| Psychophysics Toolbox | Software library for generating controlled visual/auditory stimuli and collecting responses in behavioral tasks. | MATLAB Psychtoolbox; PsychoPy (Python) |

| D1/D2 Receptor Agonist/Antagonist | Pharmacological tools for manipulating dopaminergic signaling in vivo to test model predictions. | SCH-23390 (D1 antagonist), Quinpirole (D2 agonist) (Tocris) |

| High-Performance Computing Core | Essential for running large-scale Monte Carlo simulations with thousands of parameter sets and iterations. | Amazon Web Services EC2 instance (e.g., C5.18xlarge); Local HPC cluster |

Within the broader thesis on Monte Carlo simulations for behavioral neuroscience power research, this case study addresses the critical challenge of estimating statistical power for complex, multi-factorial experimental designs. Traditional power analysis software often fails to account for nested data structures, repeated measures, interacting fixed and random effects, and the nuanced variance structures typical of modern behavioral phenotyping. Monte Carlo simulation provides a flexible framework to estimate power for these complex designs, enabling robust study planning and resource allocation.

Current Landscape

Simulation-based power analysis is increasingly used in preclinical behavioral neuroscience and psychopharmacology. Key trends include:

- Increased use of mixed-effects models to handle longitudinal behavioral data and litter effects.

- Focus on power estimation for multivariate outcomes (e.g., behavioral test batteries).

- Integration of Bayesian methods with simulation for power analysis with prior information.

- Development of R packages (simr, MixedPower, pwr) as primary tools, with growing tutorials and workshops for researchers.

Table 1: Comparison of Power Analysis Methods for Behavioral Studies

| Method | Key Strength | Key Limitation | Best Suited For |

|---|---|---|---|

| Analytical (e.g., G*Power) | Fast, simple for basic designs. | Limited support for complex designs (e.g., nested data, mixed-effects models). | Simple t-tests, ANOVAs, correlations. |

| Monte Carlo Simulation | Extremely flexible; can model any design, distribution, and model. | Computationally intensive; requires coding/programming knowledge. | Mixed models, multivariate outcomes, nested designs, non-normal data. |

| Bootstrapping | Non-parametric; uses empirical data distribution. | Requires existing pilot dataset; less straightforward for prospective power. | Sensitivity analysis, post-hoc power using collected data. |

| Bayesian | Incorporates prior knowledge; provides probability statements. | Requires specification of priors; computationally intensive. | Studies with strong prior evidence; sequential designs. |

Table 2: Example Power Estimates from a Simulated Social Defeat Study (Monte Carlo, 1000 iterations)

| Effect Size (Cohen's d) | Sample Size (n/group) | Estimated Power (Alpha=0.05) | Notes |

|---|---|---|---|

| 0.8 | 10 | 0.78 | Typical target for a "large" effect. |

| 0.5 | 10 | 0.33 | Underpowered for a "medium" effect. |

| 0.5 | 20 | 0.57 | Closer to acceptable, but below 0.8. |

| 0.5 | 30 | 0.76 | Near, but still below, the conventional 0.8 target for a medium effect. |

| 0.3 | 30 | 0.31 | Underpowered for a "small" effect. |

Experimental Protocols

Protocol 4.1: Monte Carlo Power Simulation for a Longitudinal Sucrose Preference Test (SPT)

Objective: To estimate power for detecting a group-by-time interaction in a chronic stress paradigm with repeated SPT measurements.

Materials: R statistical environment with packages lme4, simr, and tidyverse.

Procedure:

- Define the Data Structure: Specify the experimental design: 2 groups (Control vs. Stressed), 4 weekly SPT measurements, N=15 subjects per group. Assume potential random intercepts for subject and measurement week.

- Specify the Statistical Model: Define a linear mixed model: SPT ~ Group * Time + (1|SubjectID).

- Set Model Parameters: Input fixed effects estimates (intercept, group, time, interaction) based on pilot data or literature. Define residual variance and random intercept variance.

- Simulate the Data: Use the simr package to simulate 1000+ replicate datasets from the specified model.

- Analyze Simulated Data: For each simulated dataset, fit the defined mixed model and extract the p-value for the group-by-time interaction effect.

- Calculate Power: Power is the proportion of simulations where the p-value for the target effect is less than the alpha level (typically 0.05).
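The simulate-analyze-count logic of this protocol is sketched below in Python rather than with simr. Two simplifications are assumptions of the example: the effect sizes and variances are hypothetical, and the mixed model is replaced by a summary-statistic analysis (a per-subject slope over weeks, compared between groups with a t-test), which targets the same group-by-time interaction in a balanced design with random intercepts.

```python
import numpy as np
from scipy.stats import ttest_ind


def simulate_spt_power(n_per_group=15, n_weeks=4, interaction=-3.0,
                       sd_subject=8.0, sd_residual=6.0, n_sims=1000,
                       alpha_level=0.05, seed=0):
    """Estimated power to detect a group-by-time interaction in SPT data."""
    rng = np.random.default_rng(seed)
    weeks = np.arange(n_weeks, dtype=float)
    centered = weeks - weeks.mean()
    group = np.repeat([0.0, 1.0], n_per_group)    # 0 = control, 1 = stressed
    n_significant = 0
    for _ in range(n_sims):
        # Random intercept per subject plus residual noise per measurement.
        intercepts = rng.normal(0.0, sd_subject, group.size)
        noise = rng.normal(0.0, sd_residual, (group.size, n_weeks))
        # Fixed effects: 80% baseline preference and a group-by-time interaction.
        spt = (80.0 + interaction * group[:, None] * weeks[None, :]
               + intercepts[:, None] + noise)
        # Per-subject ordinary least squares slope over weeks.
        slopes = spt @ centered / np.sum(centered**2)
        p = ttest_ind(slopes[group == 0], slopes[group == 1]).pvalue
        n_significant += p < alpha_level
    # Power = proportion of simulations with p below alpha.
    return n_significant / n_sims


power_estimate = simulate_spt_power()
false_positive_rate = simulate_spt_power(interaction=0.0, seed=1)
```

Running the function with `interaction=0.0` is a useful check: the rejection rate should sit near the nominal alpha level, confirming that the analysis step is calibrated before the power estimate is trusted.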

Protocol 4.2: Power Analysis for a Multivariate Behavioral Z-Score

Objective: To estimate power for detecting a drug effect on a composite z-score derived from an open field test (OFT), elevated plus maze (EPM), and social interaction test.

Materials: R environment with MASS and pwr packages. Pilot data on correlations between behavioral measures.

Procedure:

- Construct Composite Score: From pilot data, calculate the correlation matrix between OFT (distance), EPM (% open arm time), and social interaction ratio.

- Define the Multivariate Model: The composite z-score is calculated per subject as the mean of the standardized individual scores.

- Simulate Correlated Data: Use the mvrnorm() function from the MASS package to generate multivariate normal data for two groups, preserving the observed correlations between measures.

- Calculate Composite & Analyze: For each simulated subject, compute the composite z-score. Perform a t-test between groups on this composite score.

- Iterate and Estimate Power: Repeat simulation & analysis 5000 times. Statistical power is the percentage of iterations yielding a significant t-test (p < 0.05).
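An equivalent sketch in Python uses NumPy's `multivariate_normal` in place of `mvrnorm()`. The correlation matrix, per-measure effect sizes, and group size below are hypothetical placeholders for pilot estimates, and standardizing each measure against the pooled sample is an assumption about how the composite is formed.

```python
import numpy as np
from scipy.stats import ttest_ind


def composite_power(corr, effect_d, n_per_group=12, n_sims=5000,
                    alpha_level=0.05, seed=0):
    """Power of a t-test on a composite z-score built from correlated measures."""
    rng = np.random.default_rng(seed)
    corr = np.asarray(corr, dtype=float)
    effect_d = np.asarray(effect_d, dtype=float)
    zeros = np.zeros(effect_d.size)
    hits = 0
    for _ in range(n_sims):
        # Unit-variance measures, so the covariance equals the correlation matrix.
        control = rng.multivariate_normal(zeros, corr, n_per_group)
        treated = rng.multivariate_normal(effect_d, corr, n_per_group)
        # Standardize each measure on the pooled sample, then average per subject.
        pooled = np.vstack([control, treated])
        mu, sd = pooled.mean(axis=0), pooled.std(axis=0, ddof=1)
        z_control = ((control - mu) / sd).mean(axis=1)
        z_treated = ((treated - mu) / sd).mean(axis=1)
        hits += ttest_ind(z_control, z_treated).pvalue < alpha_level
    return hits / n_sims


# Hypothetical correlations between OFT distance, EPM open-arm time, and social ratio.
pilot_corr = [[1.0, 0.4, 0.3],
              [0.4, 1.0, 0.5],
              [0.3, 0.5, 1.0]]
power_composite = composite_power(pilot_corr, effect_d=[1.0, 1.0, 1.0], n_sims=1000)
null_rate = composite_power(pilot_corr, effect_d=[0.0, 0.0, 0.0], n_sims=1000, seed=1)
```

Stronger positive correlations between measures reduce the gain from averaging, so the power of the composite depends on the correlation matrix as well as on the individual effect sizes.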

Visualizations

Power Simulation Workflow

Composite Score for Multivariate Power

The Scientist's Toolkit

Table 3: Key Research Reagent Solutions for Simulation-Based Power Analysis

| Tool / Resource | Function / Purpose | Example / Note |

|---|---|---|

| R Statistical Software | Open-source environment for statistical computing and graphics. Foundation for all simulation packages. | Use with RStudio IDE for improved workflow. |

| simr R Package | Conducts power analysis for linear & generalized linear mixed models by simulation. Extends lme4. | Ideal for power analysis for longitudinal or nested behavioral data. |

| MixedPower R Package | Calculates power for specific effects in mixed models for factorial designs. | Useful for determining contribution of different factors. |

| pwr R Package | Basic power analysis functions for common statistical tests (t-tests, correlations, etc.). | Good for simple, preliminary calculations. |

| Pilot Dataset | Small-scale preliminary data used to estimate effect sizes, variances, and correlations for simulation inputs. | Critical for realistic power estimation; meta-analytic estimates can substitute. |

| High-Performance Computing (HPC) Cluster | Computing resource for running thousands of iterative simulations efficiently. | Essential for large-scale simulations (e.g., >10,000 iterations). |

| Git / Version Control | Tracks changes in simulation code, ensuring reproducibility and collaboration. | Use GitHub or GitLab for code sharing and backup. |

Application Notes

This protocol provides a framework for integrating multimodal data to test complex hypotheses in behavioral neuroscience, such as how a specific genetic polymorphism influences neural circuit activity to mediate a behavioral phenotype. The approach is computationally anchored in Monte Carlo simulation to determine statistical power and guard against false positives from multiple comparisons across high-dimensional data.

Core Challenge: Heterogeneous data types (categorical genotypes, continuous imaging metrics, time-series behavioral data) exist on different scales and have complex, non-independent correlation structures. Traditional univariate analyses are underpowered and increase false discovery rates.

Proposed Solution: A multi-stage data integration pipeline:

- Within-Modality Feature Reduction: Use principal component analysis (PCA) or similar on high-dimensional data (e.g., voxels from fMRI, timepoints from behavior) to extract latent variables.

- Cross-Modality Linking: Employ partial least squares correlation (PLSC) or canonical correlation analysis (CCA) to identify maximally covarying components across two data modalities (e.g., genetic/imaging, imaging/behavior).

- Mediation & Path Analysis: Test hypothesized pathways (e.g., Gene → Neural Activity → Behavior) using mediation models. These quantify the indirect effect; they do not by themselves establish causation.

- Monte Carlo Power Validation: Simulate synthetic datasets based on empirical effect sizes to determine the required sample size and optimal analytical approach before full data collection.

Quantitative Power Considerations (Monte Carlo Simulation Output):

Table 1: Simulated Power for Detecting a Genetic-Imaging-Behavioral Pathway (α = 0.05, 10,000 iterations)

| Sample Size (N) | Direct Gene-Behavior Effect (d=0.5) | Mediated Effect (a*b paths) | Full PLSC Model (Imaging-Behavior) |

|---|---|---|---|

| 30 | 0.45 | 0.18 | 0.22 |

| 50 | 0.72 | 0.41 | 0.48 |

| 80 | 0.89 | 0.65 | 0.75 |

| 100 | 0.96 | 0.78 | 0.87 |
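Each power value in the table above is a proportion of significant results across simulated datasets, so it carries binomial Monte Carlo error of its own. The sketch below shows how that error could be quantified; the function names are illustrative and the interval is a simple normal approximation, not something the protocol prescribes.

```python
import math

def power_mcse(power, n_iter):
    """Monte Carlo standard error of a power estimate (binomial proportion)."""
    return math.sqrt(power * (1.0 - power) / n_iter)

def wald_interval(power, n_iter, z=1.96):
    """Approximate 95% interval around a simulated power estimate."""
    se = power_mcse(power, n_iter)
    return max(0.0, power - z * se), min(1.0, power + z * se)

# Worst case is power = 0.5; with 10,000 iterations the standard error is 0.005.
worst_case_se = power_mcse(0.5, 10_000)
interval_n80_mediated = wald_interval(0.65, 10_000)
```

With 10,000 iterations the simulation noise is therefore small relative to the differences between rows of the table; with a few hundred iterations it would not be.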

Table 2: Example Integrated Dataset Structure (Simulated for N=100)

| Subject ID | Genotype (SNP rsID) | fMRI ROI Activity (Mean β) | Behavioral Score (Reaction Time ms) | Integrated PLS Latent Score (LV1) |

|---|---|---|---|---|

| SUB_001 | AA | 1.23 | 450 | 0.85 |

| SUB_002 | AG | 0.87 | 520 | -0.12 |

| SUB_003 | GG | 0.45 | 610 | -1.03 |

| ... | ... | ... | ... | ... |

| Mean | - | 0.85 ± 0.32 | 525 ± 85 | 0.00 ± 0.78 |

Experimental Protocols

Protocol 1: Multimodal Data Acquisition for a Fear Conditioning Paradigm

Aim: To collect synchronized genetic, neuroimaging (fMRI), and behavioral data.

Genotyping:

- Sample: Saliva or blood draw from each participant (N ≥ 80, based on power simulation).

- Method: Standard DNA extraction, followed by targeted SNP array or whole-genome sequencing. Focus on candidate SNPs (e.g., BDNF Val66Met, rs6265).

- Output: Categorical or additive genotype codes per subject.

fMRI during Fear Acquisition:

- Task: Computerized fear conditioning. A neutral visual stimulus (CS+) is paired with a mild electric shock (US) in 60% of trials; a control stimulus (CS-) is never paired.

- Acquisition: Use a 3T MRI scanner. Acquire T1-weighted structural images and T2*-weighted EPI functional scans (TR=2000ms, TE=30ms, voxel size=3x3x3mm).

- Analysis (First-Level): Preprocess data (realignment, normalization, smoothing). Model BOLD response to CS+ vs CS- events. Extract contrast estimates (β weights) from a priori ROIs: amygdala and ventromedial prefrontal cortex (vmPFC).

Behavioral Phenotyping:

- Skin Conductance Response (SCR): Recorded during fMRI task. Quantify as the mean differential SCR (CS+ minus CS-) during the acquisition phase.

- Post-Task Measure: Explicit threat rating on a visual analog scale (1-100) for each CS.

Protocol 2: Data Integration & Analysis Pipeline

Aim: To formally link the three data modalities.

Data Preparation:

- Code genotypes numerically (e.g., 0, 1, 2 for additive model).

- Create an imaging matrix X (subjects x ROIs) from fMRI β weights.

- Create a behavioral matrix Y (subjects x measures: SCR, threat rating).
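The preparation steps above can be sketched as follows. The genotype calls, the choice of "G" as the effect allele, and the random placeholder matrices are all assumptions made for illustration; real values would come from genotyping and first-level fMRI output.

```python
import numpy as np

# Hypothetical genotype calls at one SNP; "G" is assumed to be the effect allele.
calls = ["AA", "AG", "GG", "AG", "AA", "GG"]
genotype = np.array([c.count("G") for c in calls], dtype=float)  # additive 0/1/2 coding

# Placeholder numbers standing in for real first-level outputs.
rng = np.random.default_rng(0)
n = len(calls)
X = rng.normal(size=(n, 2))  # imaging matrix, columns: amygdala beta, vmPFC beta
Y = rng.normal(size=(n, 2))  # behavioral matrix, columns: differential SCR, threat rating

def zscore(M):
    """Column-wise standardization so ROIs and measures share a common scale."""
    return (M - M.mean(axis=0)) / M.std(axis=0, ddof=1)

Xz, Yz = zscore(X), zscore(Y)
```

Standardizing each column before integration keeps measures with large raw units (such as a 1-100 rating) from dominating the cross-correlation matrix.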

Partial Least Squares Correlation (PLSC) Analysis:

- Compute the cross-correlation matrix between X and Y.

- Perform singular value decomposition (SVD) on this matrix to identify latent variables (LVs) that maximally covary.

- Statistically assess LV significance using permutation testing (5000 iterations) on the singular value.

- Calculate subject scores and variable loadings for significant LVs.
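A minimal NumPy sketch of these PLSC steps is given below, assuming standardized X and Y and a row-permutation null for the first singular value. The function names and the synthetic demonstration data are illustrative; the demo uses 500 permutations to stay fast, where the protocol specifies 5000.

```python
import numpy as np

def zscore(M):
    return (M - M.mean(axis=0)) / M.std(axis=0, ddof=1)

def plsc(X, Y):
    """SVD of the X-Y cross-correlation matrix; returns loadings, singular values, scores."""
    Xz, Yz = zscore(X), zscore(Y)
    R = Xz.T @ Yz / (X.shape[0] - 1)            # cross-correlation (ROIs x measures)
    U, s, Vt = np.linalg.svd(R, full_matrices=False)
    return U, s, Vt.T, Xz @ U, Yz @ Vt.T        # x_loadings, s, y_loadings, x_scores, y_scores

def first_lv_permutation_p(X, Y, n_perm=5000, seed=0):
    """Permutation p-value for the first singular value (rows of Y are shuffled)."""
    rng = np.random.default_rng(seed)
    s_obs = plsc(X, Y)[1][0]
    exceed = 0
    for _ in range(n_perm):
        s_perm = plsc(X, Y[rng.permutation(len(Y))])[1][0]
        exceed += int(s_perm >= s_obs)
    return s_obs, (exceed + 1) / (n_perm + 1)

# Synthetic demo: two imaging and two behavioral columns sharing one latent factor.
rng = np.random.default_rng(1)
latent = rng.standard_normal(80)
X = np.column_stack([latent + rng.standard_normal(80) for _ in range(2)])
Y = np.column_stack([latent + rng.standard_normal(80) for _ in range(2)])

x_load, s, y_load, x_scores, y_scores = plsc(X, Y)
s_obs, p_value = first_lv_permutation_p(X, Y, n_perm=500)
```

The `(exceed + 1) / (n_perm + 1)` form avoids reporting a p-value of exactly zero when no permuted singular value reaches the observed one.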

Mediation Analysis:

- Test the hypothesis: Genotype → fMRI Activity (Amygdala) → SCR.

- Use a bootstrap approach (5000 samples) to estimate the indirect (mediation) effect (path a*b) and its 95% confidence interval.
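The bootstrap estimate of the indirect effect can be sketched with ordinary least squares and a percentile interval, as below. The simulated data, the path coefficients of 0.5, and the allele frequency of 0.3 are assumptions for the demonstration only; dedicated packages (for example lavaan in R) offer bias-corrected intervals that this sketch does not implement.

```python
import numpy as np

def indirect_effect(g, m, y):
    """a*b, where a is the slope of m ~ g and b is the slope of m in y ~ m + g."""
    a = np.polyfit(g, m, 1)[0]
    design = np.column_stack([np.ones_like(m), m, g])
    b = np.linalg.lstsq(design, y, rcond=None)[0][1]
    return a * b

def bootstrap_indirect(g, m, y, n_boot=5000, seed=0):
    """Point estimate and percentile 95% CI for the indirect effect."""
    rng = np.random.default_rng(seed)
    n = len(g)
    estimates = np.empty(n_boot)
    for i in range(n_boot):
        idx = rng.integers(0, n, n)              # resample subjects with replacement
        estimates[i] = indirect_effect(g[idx], m[idx], y[idx])
    lower, upper = np.percentile(estimates, [2.5, 97.5])
    return indirect_effect(g, m, y), (lower, upper)

# Synthetic demo data (not empirical): genotype -> amygdala activity -> SCR.
rng = np.random.default_rng(42)
n = 200
g = rng.binomial(2, 0.3, n).astype(float)
m = 0.5 * g + rng.standard_normal(n)
y = 0.5 * m + rng.standard_normal(n)

point, ci = bootstrap_indirect(g, m, y, n_boot=1000)
```

Mediation is supported when the interval excludes zero; the demo uses 1000 resamples for speed, while the protocol calls for 5000.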

Monte Carlo Power Simulation (Pre-Study):

- Model Specification: Define the expected effect sizes (e.g., genotype explains 8% variance in amygdala activity; amygdala activity explains 10% variance in SCR).

- Simulation: In R or Python, generate 10,000 synthetic datasets for a range of sample sizes (N=30 to 150), respecting the correlation structure between variables.

- Analysis: Apply the planned PLSC and mediation models to each simulated dataset.

- Output: Calculate statistical power (proportion of simulations where p < 0.05) for each sample size, as shown in Table 1.
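The pre-study simulation loop could look like the sketch below. It uses the variance-explained values from the model specification (8% and 10%) but makes several assumptions that are not in the protocol: a minor allele frequency of 0.3, standardized variables, and a joint-significance test of the a and b paths in place of the bootstrap so that the example runs quickly. It does not reproduce the values in Table 1.

```python
import numpy as np
from scipy import stats

def ols_pvalue(design, y, col):
    """Two-sided p-value for one coefficient of an OLS fit."""
    n, k = design.shape
    beta = np.linalg.lstsq(design, y, rcond=None)[0]
    resid = y - design @ beta
    sigma2 = resid @ resid / (n - k)
    cov = sigma2 * np.linalg.inv(design.T @ design)
    t = beta[col] / np.sqrt(cov[col, col])
    return 2 * stats.t.sf(abs(t), df=n - k)

def simulate_power(n, r2_a=0.08, r2_b=0.10, maf=0.3, n_sims=1000, alpha=0.05, seed=0):
    """Share of simulated datasets in which both mediation paths are significant."""
    rng = np.random.default_rng(seed)
    hits = 0
    for _ in range(n_sims):
        g = rng.binomial(2, maf, n).astype(float)
        if g.std() == 0:
            continue                              # no genotype variation: counted as a miss
        gz = (g - g.mean()) / g.std()
        m = np.sqrt(r2_a) * gz + np.sqrt(1 - r2_a) * rng.standard_normal(n)
        y = np.sqrt(r2_b) * m + np.sqrt(1 - r2_b) * rng.standard_normal(n)
        ones = np.ones(n)
        p_a = ols_pvalue(np.column_stack([ones, gz]), m, 1)      # path a: genotype -> amygdala
        p_b = ols_pvalue(np.column_stack([ones, m, gz]), y, 1)   # path b: amygdala -> SCR
        hits += int(p_a < alpha and p_b < alpha)
    return hits / n_sims

# Small demo run; a real study would use 10,000 datasets per sample size.
power_by_n = {n: simulate_power(n, n_sims=300, seed=n) for n in (30, 80, 150)}
```

Swapping the joint-significance test for the bootstrap procedure makes each simulated dataset far more expensive, which is where an HPC cluster becomes useful.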

Visualizations

Figure: Multimodal Data Integration & Analysis Workflow

Figure: Mediation Model for Gene-Brain-Behavior Pathway

The Scientist's Toolkit

Table 3: Essential Research Reagents & Materials

| Item / Solution | Function in Protocol | Example/Specification |

|---|---|---|

| DNA Genotyping Kit | Extracts and prepares DNA from biospecimens for genetic analysis. | Saliva collection kit (e.g., Oragene), automated DNA extraction system. |

| fMRI Scanner & Coil | Acquires high-resolution BOLD signal data during task performance. | 3T or 7T MRI system with multiband EPI sequences; 64-channel head coil. |

| Fear Conditioning Setup | Presents stimuli and delivers precise unconditioned stimuli during scanning. | MRI-compatible visual projection system, biopotential amplifier for SCR, programmable air puff or electrical stimulator for US. |

| Statistical Software (R/Python) | Performs data integration, PLSC, mediation, and Monte Carlo simulations. | R with pls, lavaan, psych packages; Python with scikit-learn, nilearn, pingouin. |

| High-Performance Computing (HPC) Cluster | Runs computationally intensive permutation tests and large-scale simulations. | Cluster with parallel processing capabilities for 10,000+ iterations. |

| Data Management Platform | Securely stores, version controls, and harmonizes heterogeneous data files. | BIDS (Brain Imaging Data Structure) format, REDCap database, or internal lab server with structured directories. |

Beyond the Error Bar: Solving Common Pitfalls and Optimizing Simulation Performance

In Monte Carlo (MC) simulations for behavioral neuroscience and psychopharmacological power analysis, the integrity of results depends on the quality of random number generation (RNG). Biased RNGs can systematically skew simulation outcomes, leading to inaccurate power estimates, distorted Type I and Type II error rates, and ultimately flawed conclusions about drug efficacy or neural mechanisms. This application note details prevalent sources of bias in common RNG algorithms and provides protocols for their identification and mitigation.

The table below summarizes key quantitative characteristics and bias risks of widely used RNG algorithms, based on current literature and empirical testing.

Table 1: Characteristics and Bias Risks of Common RNG Algorithms

| Algorithm | Period | Speed | Known Bias Risks | Typical Use in Neuroscience |

|---|---|---|---|---|

| Linear Congruential Generator (LCG) | ~2³² | Very Fast | Severe serial correlation in higher dimensions; lattice structure | Legacy systems; not recommended for modern MC |

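| Mersenne Twister (MT19937) | 2¹⁹⁹³⁷ - 1 | Fast | Equidistributed in up to 623 dimensions, but fails linear-complexity tests in TestU01 (Crush/BigCrush); recovers slowly from poorly seeded, zero-heavy states; fully predictable once 624 consecutive 32-bit outputs are observed | Default in Python's random module, R, and MATLAB; also NumPy's legacy RandomState (NumPy's newer default_rng uses PCG64) |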

| Xorshift Generators | ~2¹²⁸ - 2¹⁰²⁴ | Very Fast | Can fail binary matrix rank and linear complexity tests | Low-memory environments; often used as a component |

| PCG Family | 2⁶⁴ (PCG32) to 2¹²⁸ (PCG64) or more | Fast | No severe known biases in well-seeded implementations | Increasingly popular for general-purpose simulation; default in NumPy's default_rng |

| Cryptographic RNGs (e.g., AES-CTR DRBG) | Effectively Infinite | Slow | Statistically robust, but performance overhead is high | Security-critical applications; not typically for MC |

Experimental Protocols for Bias Detection

Protocol 3.1: Empirical Testing for Sequential Correlation

Objective: To detect serial correlation in sequences of pseudo-random numbers (PRNs), which can bias stochastic simulations of neural firing or behavioral trial order.

Materials:

- Device/Software under test (DUT) generating PRNs.

- Statistical analysis software (e.g., Python with SciPy, R).

Procedure:

- Generate a sequence of 1,000,000 uniform PRNs U = [u1, u2, ..., uN] using the DUT.

- Create lagged pairs: (u1, u2), (u2, u3), ..., (uN-1, uN).

- Perform a 2D Kolmogorov-Smirnov test comparing the distribution of lagged pairs against the uniform distribution on the unit square.

- Calculation: Compute the empirical distance D as per the KS statistic. A p-value < 0.001 after Bonferroni correction for multiple lags (e.g., lags 1, 2, 5, 10) indicates significant serial correlation.

- Mitigation: If bias is detected, switch to an RNG from a different family (e.g., from Mersenne Twister to PCG or a cryptographically secure generator seeded from system entropy).
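A runnable sketch of this check follows. SciPy does not ship a two-dimensional KS test, so the example substitutes a chi-square test on a 16 x 16 grid of non-overlapping lagged pairs; that substitution, the grid size, the seeds, and the deliberately correlated "bad" sequence are all assumptions made for illustration.

```python
import numpy as np
from scipy import stats

def lagged_pairs(u, lag):
    """Non-overlapping pairs (u_i, u_{i+lag}); each value is used exactly once."""
    n = (u.size // (2 * lag)) * 2 * lag
    blocks = u[:n].reshape(-1, 2, lag)
    return blocks[:, 0, :].ravel(), blocks[:, 1, :].ravel()

def pair_uniformity_pvalue(u, lag, bins=16):
    """Chi-square test that lagged pairs are uniform on the unit square."""
    x, y = lagged_pairs(u, lag)
    counts, _, _ = np.histogram2d(x, y, bins=bins, range=[[0, 1], [0, 1]])
    expected = x.size / bins**2
    chi2 = ((counts - expected) ** 2).sum() / expected
    return stats.chi2.sf(chi2, df=bins**2 - 1)

def serial_correlation_flags(u, lags=(1, 2, 5, 10), alpha=0.001):
    """True for each lag whose p-value falls below the Bonferroni-corrected threshold."""
    threshold = alpha / len(lags)
    return {lag: bool(pair_uniformity_pvalue(u, lag) < threshold) for lag in lags}

N = 1_000_000
good = np.random.Generator(np.random.PCG64(12345)).random(N)

# Deliberately flawed sequence: a random walk wrapped onto [0, 1).
# Marginals are uniform, but neighboring values are strongly dependent.
steps = np.random.Generator(np.random.PCG64(54321)).random(N)
bad = np.cumsum(0.1 * steps) % 1.0

good_flags = serial_correlation_flags(good)
bad_flags = serial_correlation_flags(bad)
```

Using non-overlapping pairs keeps the cell counts independent, which the chi-square reference distribution assumes; overlapping pairs would need a corrected statistic.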

Protocol 3.2: Assessment of Dimensional Regularity (Spectral Test)

Objective: To identify lattice structures in high-dimensional space, crucial for simulations involving multi-dimensional integration (e.g., modeling coupled neural populations).

Materials:

- DUT generating PRNs.

- Visualization/analysis toolkit (e.g., MATLAB, Python with Matplotlib).

Procedure:

- Generate N tuples of d dimensions: (u1...ud), (ud+1...u2d), ....

- For d = 2, 3, 4, plot the points in the unit hypercube.

- Visually and statistically (e.g., via nearest-neighbor distribution analysis) assess whether points form hyperplanes, leaving large empty regions.

- Quantitative Metric: Calculate the normalized spectral test figure of merit S_d. A value of S_d < 0.1 for low dimensions suggests poor lattice structure.

- Mitigation: Use RNGs known to perform well in high dimensions, such as the Sobol sequence (a quasi-random generator) for integration tasks, or modern, robust pseudo-RNGs like PCG or MRG32k3a.
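To illustrate the kind of hyperplane structure this protocol looks for, the sketch below uses RANDU, a historical LCG (multiplier 65539, modulus 2³¹) whose consecutive triples satisfy 9u₁ - 6u₂ + u₃ = integer and therefore fall on at most 15 planes in the unit cube. It does not compute the spectral figure of merit S_d; it only checks this one known linear relation, and the seeds and sample size are arbitrary choices.

```python
import numpy as np

def randu(seed, n):
    """RANDU: x_{k+1} = 65539 * x_k mod 2^31. Shown only as a known-bad example."""
    x = int(seed)                      # seed must be odd
    out = np.empty(n, dtype=np.int64)
    for i in range(n):
        x = (65539 * x) % 2**31
        out[i] = x
    return out / 2**31                 # scale to (0, 1)

def plane_distance(triples):
    """Distance of 9*u1 - 6*u2 + u3 from the nearest integer, plus that integer."""
    combo = 9 * triples[:, 0] - 6 * triples[:, 1] + triples[:, 2]
    nearest = np.rint(combo)
    return np.abs(combo - nearest), nearest

n_values = 30_000
bad_triples = randu(seed=1, n=n_values).reshape(-1, 3)     # non-overlapping 3-tuples
good_triples = np.random.Generator(np.random.PCG64(2024)).random(n_values).reshape(-1, 3)

bad_dist, bad_planes = plane_distance(bad_triples)
good_dist, _ = plane_distance(good_triples)

n_planes = np.unique(bad_planes).size   # RANDU triples occupy only a handful of planes
```

For RANDU every distance is zero, while for an unstructured generator the distances spread between 0 and 0.5; plotting `bad_triples` in 3D from a suitable angle shows the planes directly.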

Protocol 3.3: Seed Generation and Entropy Audit

Objective: To ensure the initial state of the RNG is sufficiently unpredictable, so that independent runs do not silently reuse identical or correlated random streams and repeat the same sampling bias.

Procedure:

- Source Audit: Document the entropy source for seeding. Unacceptable: Current time in seconds. Acceptable: System entropy pools (/dev/urandom, CryptGenRandom), hardware RNGs, or a combination of multiple system variables hashed together.

- Protocol: Implement a seeding function that gathers ≥ 128 bits of entropy. Example: On a Unix-like system, read 16 bytes from /dev/urandom.

- Verification: For critical applications, run the NIST STS or Dieharder test suite on the first 10,000 numbers generated from 1000 different seeds. Failure rates should be within confidence intervals for a true random source.
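A seeding function along these lines might look like the following sketch. It uses Python's `os.urandom`, which draws from the operating system's entropy source, and feeds the result to NumPy's `SeedSequence`; the function name `fresh_seed` is illustrative. Logging the seed keeps the run replayable even though the seed itself is unpredictable.

```python
import os
import numpy as np

def fresh_seed(n_bytes=16):
    """Gather n_bytes (default 16 bytes = 128 bits) of OS entropy as an integer."""
    return int.from_bytes(os.urandom(n_bytes), "big")

seed = fresh_seed()
print(f"simulation seed: {seed:#034x}")    # record this value with the results

rng = np.random.Generator(np.random.PCG64(np.random.SeedSequence(seed)))
first = rng.random(3)

# Replaying from the logged seed reproduces the same stream.
replay = np.random.Generator(np.random.PCG64(np.random.SeedSequence(seed)))
again = replay.random(3)
```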

Recommended RNG Implementation for Neuroscience MC

A hybrid approach balances speed and statistical robustness.

- Primary Generator: Use a PCG64 or MRG32k3a generator for main sampling.

- Seeding: Initialize state using a cryptographic RNG (e.g., secrets module in Python).

- Quasi-Random Sequences: For fixed-design parameter sweeps or integration, use Sobol or Halton sequences to reduce variance and improve convergence.

- Documentation: In publications, explicitly state the RNG algorithm, library, version, and seed value to ensure full reproducibility.
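The recommendations above can be combined as in the sketch below, which assumes NumPy and SciPy are available. The number of worker streams, the two swept parameters (effect size and sample size), and their bounds are hypothetical. The Sobol engine is given its generator through the `seed` keyword; newer SciPy releases also accept `rng` for the same purpose.

```python
import secrets
import numpy as np
import scipy
from scipy.stats import qmc

# Seeding: 128 bits from a cryptographic source, logged for reproducibility.
seed = secrets.randbits(128)
root = np.random.SeedSequence(seed)

# Primary generator: independent PCG64 streams, e.g., one per parallel worker.
*worker_seqs, sobol_seq = root.spawn(5)
streams = [np.random.Generator(np.random.PCG64(s)) for s in worker_seqs]

# Quasi-random design: scrambled Sobol points for a two-parameter sweep.
sobol = qmc.Sobol(d=2, scramble=True, seed=np.random.default_rng(sobol_seq))
unit_points = sobol.random_base2(m=6)                     # 2**6 = 64 points in [0, 1)^2
design = qmc.scale(unit_points, [0.2, 30.0], [0.8, 150.0])  # (effect size, sample size)

# Documentation: the details a methods section would need.
metadata = {
    "algorithm": "PCG64",
    "library": f"numpy {np.__version__}, scipy {scipy.__version__}",
    "seed": seed,
}
```

Spawning child sequences from one root seed gives each worker its own stream while a single logged integer still reproduces the whole run.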

Visualization of RNG Assessment Workflow

Figure: RNG Assessment and Mitigation Workflow

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Tools for RNG Bias Mitigation in Computational Research

| Item/Category | Function/Description | Example Tools/Libraries |

|---|---|---|

| High-Quality Pseudo-RNG Libraries | Provides robust algorithms with long periods and good statistical properties. | numpy.random (Generator with PCG64), randomgen (Python), Random123 (C++), R dqrng package. |

| Quasi-Random Sequence Generators | Produces low-discrepancy sequences for faster convergence in integration tasks. | Sobol, Halton sequences (via SciPy, gsl or specialized libraries). |

| Cryptographic Seeding Source | Provides high-entropy seeds to initialize pseudo-RNGs, ensuring unpredictable starting points. | OS sources: /dev/urandom (Unix), CryptGenRandom() (Windows). Libraries: secrets (Python), java.security.SecureRandom. |

| Statistical Test Suites | Battery of tests to empirically detect deviations from true randomness. | Dieharder, TestU01 (BigCrush), NIST Statistical Test Suite, PractRand. |

| Reproducibility Framework | Records the precise computational environment, including RNG state, for exact replication of simulations. | RNG state capture in NumPy (bit_generator.state, or get_state() on a legacy RandomState), containerization (Docker/Singularity), workflow managers (Nextflow, Snakemake). |

Application Notes: Convergence in Behavioral Neuroscience Monte Carlo Studies

Monte Carlo (MC) simulations are indispensable in behavioral neuroscience for power analysis, modeling neural dynamics, and estimating pharmacokinetic/pharmacodynamic (PK/PD) parameters in drug development. The core challenge is determining when an MC simulation has converged—reached a stable, reliable estimate—to avoid spurious results from under-sampling or wasteful computational overhead.