Unlocking Robust Neuroscience: A Comprehensive Guide to Multiverse Analysis in Neuroimaging

This article provides a complete guide to multiverse analysis for neuroimaging researchers and biomedical professionals.

Unlocking Robust Neuroscience: A Comprehensive Guide to Multiverse Analysis in Neuroimaging

Abstract

This article provides a complete guide to multiverse analysis for neuroimaging researchers and biomedical professionals. It covers the core rationale for addressing the 'garden of forking paths' in data analysis, details practical methodological workflows for implementation, offers solutions for computational and interpretive challenges, and presents frameworks for validating and comparing results across analytical universes. The guide aims to empower researchers to produce more transparent, robust, and reproducible findings in neuroscience and drug development.

Beyond Single Pipelines: Why Multiverse Analysis is Essential for Robust Neuroimaging

The Crisis of Reproducibility in Neuroscience and the 'Garden of Forking Paths'

The reproducibility crisis in neuroscience, particularly in neuroimaging, stems from researcher degrees of freedom—the "garden of forking paths." Multiverse analysis, a framework from statistical genetics and psychology, offers a solution. It involves conducting all plausible analyses (the "multiverse") on a dataset and reporting the distribution of results, thus quantifying outcome variability due to analytical choices.

Application Notes: Implementing Multiverse Analysis

Core Principles

- Specification Curve Analysis: Map all justifiable analytical choices (preprocessing, statistical models, inclusion criteria) into a multidimensional grid.

- Transparent Reporting: Publish the complete multiverse specification alongside results.

- Result Aggregation: Focus on the distribution and robustness of effects across the multiverse, not a single p-value.

Quantitative Data: Impact of Analytical Variability

Table 1: Summary of Key Multiverse Studies in Neuroimaging

| Study (Year) | Analysis Decisions Varied | Number of Analysis Pathways Tested | Range of Key p-values | % of Pathways with p < 0.05 | Effect Size Range (Cohen's d) |

|---|---|---|---|---|---|

| Botvinik-Nezer et al. (2020) - fMRI Analysis | Preprocessing, modeling, ROI definition | 6,912 | 0.001 to 0.99 | 16% | -0.16 to 0.73 |

| Silberzahn et al. (2018) - Social Perception | Variable selection, outlier handling, transformations | 15,448 | <0.001 to >0.9 | 68% | -0.06 to 0.35 |

| Hypothetical Voxel-Based Morphometry (VBM) | Smoothing kernel, normalization, statistical threshold, covariate inclusion | 1,024 (example) | 0.01 to 0.45 | 22% | 0.15 to 0.41 |

Experimental Protocols

Protocol 1: Designing a Neuroimaging Multiverse Analysis

Objective: To systematically assess the robustness of a hypothesized correlation between amygdala volume and anxiety scores. Materials: Structural MRI dataset (N > 200), anxiety questionnaire data, computing cluster. Procedure:

- Define the Decision Space: Enumerate all plausible analytical choices:

- Preprocessing (A): A1: SPM12 default pipeline, A2: CAT12 pipeline, A3: FSL-VBM pipeline.

- Amygdala Segmentation (B): B1: Manual ROI from normalized images, B2: Automated segmentation (Freesurfer), B3: Atlas-based prob. map threshold >0.5.

- Statistical Model (C): C1: Linear regression (anxiety ~ amygdala vol + age + sex), C2: Adds intracranial volume as covariate, C3: Uses robust regression.

- Outlier Handling (D): D1: No exclusion, D2: Exclude ±3 SD, D3: Exclude based on leverage.

- Generate Analysis Universe: Compute Cartesian product of all choices (3 x 3 x 3 x 3 = 81 unique analysis pathways).

- Parallel Execution: Script and run all 81 analyses on the computing cluster.

- Result Extraction: For each pathway, extract: p-value for anxiety, beta coefficient, effect size, confidence intervals.

- Specification Curve Visualization: Plot all 81 results sorted by effect size or p-value. Calculate the percentage of analyses yielding a statistically significant (p < 0.05) effect.

Protocol 2: Preregistration of a Single Analytic Path

Objective: To preregister one "primary" analysis from the multiverse to confirm a key finding with maximum rigor. Procedure:

- Lock Primary Analysis: Prior to observing results of Protocol 1, select one analysis path (e.g., A2, B2, C1, D1) based on prior literature and standard practice in your lab. Justify each choice.

- Preregister: Document this exact protocol, including software versions, code, and statistical threshold, on a repository (e.g., OSF, ClinicalTrials.gov).

- Execute & Report: Run only this preregistered pipeline on the data. Report the result, regardless of significance. The multiverse results from Protocol 1 provide context for this primary finding's robustness.

Visualizations

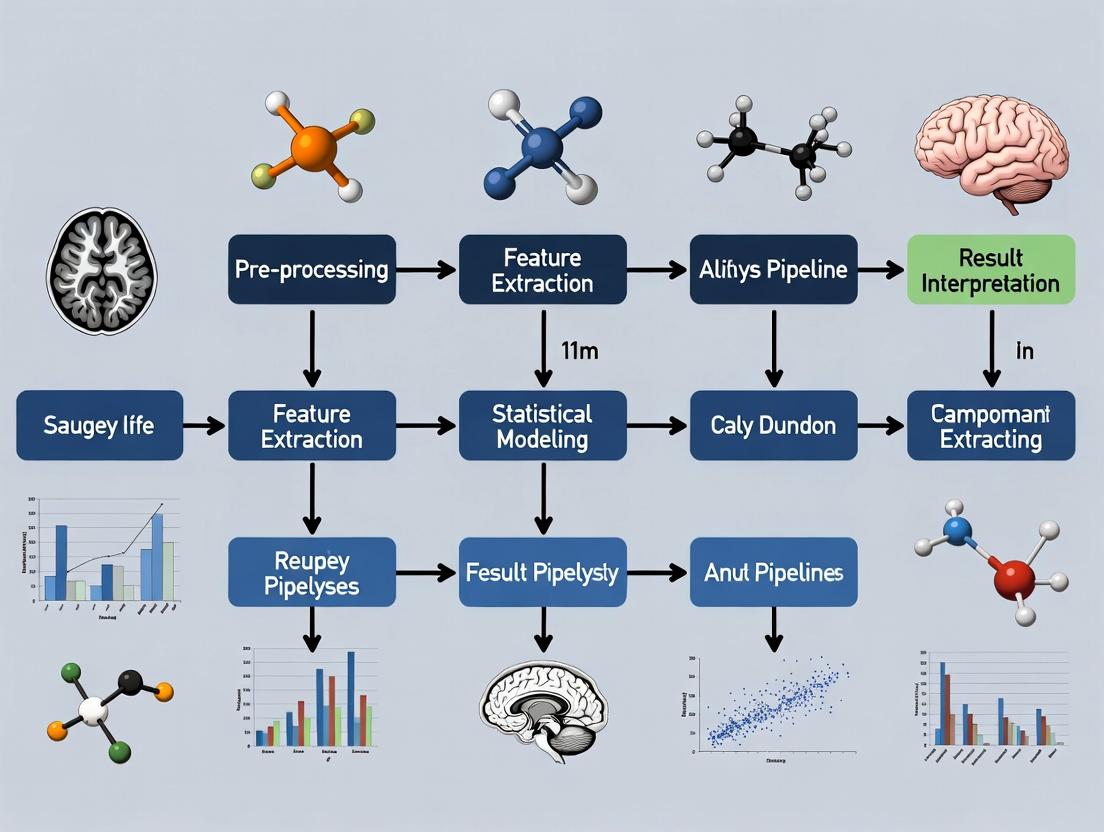

Title: The Multiverse Analysis Workflow

Title: Forking Paths Lead to a Multiverse of Results

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Tools for Multiverse Neuroimaging Analysis

| Item / Resource | Function & Role in Mitigating Reproducibility Crisis | Example (Vendor/Platform) |

|---|---|---|

| Containerization Software | Encapsulates the complete software environment (OS, libraries, neuroimaging tools) to guarantee identical analysis execution across labs and time. | Docker, Singularity |

| Neuroimaging Pipelines | Standardized, version-controlled processing workflows. Using multiple in a multiverse quantifies pipeline-dependent variability. | fMRIPrep, CAT12, HCP Pipelines, QSIPrep |

| BIDS Format | The Brain Imaging Data Structure standardizes file organization and metadata, eliminating a major source of pre-analytic variability. | BIDS Validator, BIDS Apps |

| Automated Analysis Scripts | Code (e.g., Python, R, MATLAB) that programmatically executes all analysis pathways in the multiverse, eliminating manual errors. | Nipype, Snakemake, Nextflow |

| High-Performance Computing (HPC) / Cloud Credits | Computational resources required to feasibly run thousands of analysis variants in parallel within a reasonable timeframe. | AWS, Google Cloud, local HPC cluster |

| Result Aggregation & Visualization Library | Specialized code libraries for collecting results from all multiverse runs and creating specification curve and robustness plots. | specr (R), multiverse (R/Python) |

| Preregistration Platform | A time-stamped, immutable repository to lock down the primary analysis path and the full multiverse design before data analysis. | Open Science Framework (OSF), ClinicalTrials.gov |

Multiverse analysis is a methodological framework for quantifying and visualizing the impact of multiple, equally defensible analytical choices on research outcomes. It moves beyond single-analysis reporting to systematically explore the "multiverse" of all reasonable specifications. This approach is critical for neuroimaging research, where pipelines involve numerous subjective decisions (e.g., preprocessing parameters, statistical thresholds, region-of-interest definitions) that can dramatically influence results.

Key Definitions:

- Specification Curve: A plot showing the effect size (and confidence interval) of a hypothesis test across every combination of analytical choices in the multiverse.

- Analysis Landscape: A higher-dimensional visualization that maps the relationship between clusters of analytical decisions and the resulting outcome space, revealing robust patterns and sensitivity boundaries.

Application Notes for Neuroimaging Research

When to Apply a Multiverse Approach

- Pre-registration Supplement: To define the space of plausible analyses before data collection.

- Post-hoc Robustness Check: To assess the stability of a published finding across alternative, reasonable pipelines.

- Methodological Papers: To compare the performance of different algorithms or software tools under a wide range of conditions.

- Drug Development Biomarker Validation: To test the robustness of a neuroimaging biomarker (e.g., fMRI connectivity signature) to analytical variability before costly clinical trial implementation.

Constructing the Multiverse: A Neuroimaging Example

The multiverse is defined by identifying all decision points in an analytical pipeline. For a typical task-fMRI study examining the effect of a cognitive drug, this includes:

Table 1: Example Decision Nodes in an fMRI Multiverse Analysis

| Pipeline Stage | Decision Node | Possible Choices (Alternatives) |

|---|---|---|

| Preprocessing | Motion Correction | 6-parameter rigid body, 12-parameter affine, include derivatives? |

| Temporal Filtering | High-pass: 0.008 Hz, 0.01 Hz; Band-pass? | |

| Spatial Smoothing | FWHM: 0mm, 5mm, 8mm, kernel type | |

| First-Level Analysis | Hemodynamic Response Function (HRF) | Canonical HRF, HRF with derivatives, Finite Impulse Response |

| Contrast Specification | Drug vs. Placebo, (Drug - Baseline) vs. (Placebo - Baseline) | |

| Group-Level Analysis | Covariate Adjustment | Age, sex, mean framewise displacement as: none, linear, quadratic |

| Multiple Comparison Correction | Voxel-wise FWE, Cluster-extent (p<0.001, p<0.005), TFCE | |

| Region-of-Interest (ROI) Analysis | Atlas: AAL, Harvard-Oxford, Destrieux; Summary: mean, PCA component |

Generating the Analysis Landscape

The analysis landscape extends the specification curve by considering interactions between choices. It requires dimensionality reduction techniques (e.g., t-SNE, UMAP) to project the high-dimensional space of specifications onto a 2D plane, where each point is an analysis specification, colored by its resulting effect size or p-value.

Detailed Experimental Protocols

Protocol: Conducting a Full Multiverse Analysis for a Task-fMRI Drug Trial

Aim: To determine the robustness of a drug's effect on brain activity in a target region (e.g., prefrontal cortex) across all reasonable analytical pipelines.

I. Materials & Data

- Raw Neuroimaging Data: BOLD fMRI data from a randomized, placebo-controlled drug trial (pre- and post-treatment).

- Computational Infrastructure: High-performance computing cluster or cloud environment (e.g., AWS, Google Cloud) due to high computational load.

- Software Containers: Docker/Singularity containers for each major neuroimaging software (fMRIprep, SPM, FSL, AFNI) to ensure reproducibility.

II. Step-by-Step Procedure

Step 1: Enumerate the Multiverse.

- Assemble a multidisciplinary team (statistician, methodologist, domain expert) to list all defensible analysis choices at each pipeline stage (see Table 1).

- Calculate the total number of unique analysis pathways (the "multiverse"). For n decision nodes with k_i options each, total specifications = ∏ k_i. This number can be in the thousands or millions.

- Prune clearly inappropriate or collinear choices based on expert consensus to create a bounded, reasonable multiverse.

Step 2: Automated Pipeline Execution.

- Develop a master script (e.g., in Python or R) that generates all unique combinations of analysis parameters.

- Use a workflow manager (Nextflow, Snakemake) to execute each analysis pipeline on your computational infrastructure in parallel. Log all outputs (statistical maps, effect sizes, p-values).

Step 3: Extract Outcome Metrics.

- For each pipeline, extract the primary outcome: e.g., the mean beta estimate for the drug > placebo contrast within the pre-specified prefrontal cortex ROI.

- Extract secondary metrics: whole-brain family-wise error rate, effect size in a control region, model goodness-of-fit.

Step 4: Create Specification Curve & Analysis Landscape.

- Specification Curve: Sort all pipelines from the most negative to the most positive effect size. Plot each pipeline's effect size with its confidence interval. Annotate the plot with the specific choices made for key decision nodes.

- Analysis Landscape: Encode each pipeline as a binary or categorical vector. Use UMAP to reduce this high-dimensional vector to 2D coordinates. Create a scatter plot where each point is a pipeline, colored by its output effect size. Overlay decision boundaries to identify clusters of choices that lead to similar outcomes.

Step 5: Interpret & Report.

- Calculate the robustness ratio: the proportion of pipelines that yield a statistically significant effect (p < 0.05) in the hypothesized direction.

- Identify forking paths: decision points that create major divergence in outcomes (e.g., choice of multiple comparison correction method drastically changes significance).

- Report the median effect size and the central 95% interval of effect sizes across the multiverse. Clearly state which choices are most consequential.

Visualization of Workflows and Relationships

Workflow for Multiverse Analysis in Neuroimaging

From Specifications to a 2D Analysis Landscape

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Tools for Neuroimaging Multiverse Analysis

| Item / Solution | Function / Role in Multiverse Analysis | Example Tools / Libraries |

|---|---|---|

| Workflow Manager | Automates execution of thousands of pipeline variants; ensures reproducibility and tracks dependencies. | Nextflow, Snakemake, Wings |

| Containerization | Encapsulates software and environment, guaranteeing identical analysis conditions across all runs. | Docker, Singularity/Apptainer |

| Neuroimaging Pipelines | Provides standardized, modular components for building analysis pipelines. | fMRIprep (preprocessing), FitLins (GLM), Nipype (framework) |

| Multiverse Analysis Library | Specialized code for generating, running, and visualizing multiverse analyses. | R: specr, multiverse; Python: sensitivity-analyzer |

| High-Performance Compute (HPC) | Provides the necessary computational power for parallel processing of massive numbers of jobs. | Slurm, AWS Batch, Google Cloud Life Sciences API |

| Results Database | Stores and queries the high-volume, heterogeneous outputs from all pipeline runs. | SQLite, PostgreSQL, HDF5 files |

| Interactive Visualizer | Allows dynamic exploration of the specification curve and analysis landscape. | R Shiny, Plotly Dash, Jupyter Widgets |

Introduction Within the framework of Multiverse Analysis for neuroimaging research, the core principles of transparency, robustness, and the explicit quantification of analytical flexibility are paramount. Multiverse Analysis, an approach where all reasonable analytical choices are systematically specified and executed, transforms subjective analytical decisions into an empirical question. This document provides application notes and detailed protocols for implementing these principles in neuroimaging studies, specifically focusing on functional MRI (fMRI) data analysis for drug development research.

Table 1: Multiverse Analysis Results from a Hypothetical fMRI Pharmacological Study Scenario: Comparing neural activity (BOLD signal) in a target region between Placebo and Drug conditions across different analytical pipelines.

| Pipeline ID | Preprocessing Software | Motion Correction Method | Smoothing Kernel (FWHM mm) | Statistical Inference Method | Cluster-Forming Threshold (p) | Result: Significant Group Difference (p < 0.05)? | Effect Size (Cohen's d) |

|---|---|---|---|---|---|---|---|

| A1 | FSL | Standard MCFLIRT | 6.0 | Voxel-wise, FWE | 0.001 | No | 0.41 |

| A2 | FSL | Standard MCFLIRT | 6.0 | Cluster-extent, FWE | 0.01 | Yes | 0.68 |

| B1 | fMRIPrep | ICA-AROMA | 5.0 | Voxel-wise, FDR | 0.005 | No | 0.38 |

| B2 | fMRIPrep | ICA-AROMA | 5.0 | Threshold-Free Cluster Enhancement | N/A | Yes | 0.72 |

| C1 | SPM | Realign & Unwarp | 8.0 | Small Volume Correction | 0.001 | Yes | 0.55 |

Protocol 1: Generating a Specification Curve for Multiverse fMRI Analysis

Objective: To systematically map and visualize the range of analytical outcomes across a predefined set of reasonable processing and modeling choices.

Materials: Preprocessed fMRI datasets (in BIDS format), high-performance computing cluster or workstation, containerization software (Singularity/Docker).

Procedure:

- Define the Multiverse Space:

- Create a JSON configuration file enumerating all analytical "decision nodes" and their permissible options. Example nodes include: Software (FSL, SPM, AFNI), Denoising strategy (ICA-AROMA, aCompCor, GSR), Spatial smoothing kernel (4mm, 6mm, 8mm), First-level hemodynamic response model (canonical, time-derivative, FIR), Group-level inference method (voxel-wise FWE, cluster-extent FWE, TFCE, FDR).

- Automate Pipeline Execution:

- Write a master script (e.g., in Python or Bash) that programmatically generates and submits all unique pipeline combinations (the "multiverse") using the configuration file.

- Utilize containerized software images (e.g., from Docker Hub) for each analysis package to ensure version control and computational reproducibility.

- Extract and Aggregate Results:

- For each pipeline, extract the key outcome metric (e.g., t-statistic for the Drug vs. Placebo contrast in a pre-specified Region of Interest (ROI), or the voxel count of a significant cluster).

- Compile all results into a structured data table (see Table 1).

- Generate Specification Curve Plot:

- Sort pipelines along the x-axis by the outcome metric (e.g., effect size).

- For each pipeline bar, use a consistent color scheme to encode the analytical choice made at each decision node (see Diagram 1).

- Plot the outcome metric (e.g., effect size with CI) on the y-axis.

Diagram 1: Multiverse Analysis Workflow & Specification Curve

Protocol 2: Quantifying Analysis Robustness with the Vibration of Effects (VoE)

Objective: To quantify the stability of an estimated neuroscientific effect (e.g., drug-induced change in functional connectivity) across the multiverse of analytical choices.

Materials: Aggregated results table from Protocol 1 (Table 1).

Procedure:

- Define the Focal Parameter:

- Identify the primary effect of interest (θ). For example: The mean difference in amygdala-prefrontal cortex functional connectivity between treatment groups.

- Compute the VoE Distribution:

- From the multiverse results table, extract the point estimate (e.g., effect size) and its associated measure of precision (e.g., standard error, confidence interval) for θ from each analytical pipeline (i).

- Calculate Summary Statistics:

- Mean Effect: (\bar{\theta} = \frac{1}{n}\sum{i=1}^{n} \thetai)

- Vibration of Effects: The standard deviation of the point estimates across all pipelines: (VoE = \sqrt{\frac{1}{n}\sum{i=1}^{n} (\thetai - \bar{\theta})^2})

- Range: Minimum and maximum θ_i across the multiverse.

- Robustness Ratio: (RR = \bar{\theta} / VoE). A higher RR suggests the conclusion is less sensitive to analytical choices.

- Visualize:

- Create a histogram or density plot of all θ_i values (the VoE distribution).

- Overlay the mean effect and a benchmark for practical significance (see Diagram 2).

Diagram 2: Vibration of Effects (VoE) Distribution

The Scientist's Toolkit: Key Research Reagent Solutions for Neuroimaging Multiverse Analysis

| Item | Function in Multiverse Analysis |

|---|---|

| BIDS (Brain Imaging Data Structure) | A standardized framework for organizing neuroimaging data. Enforces transparency and is the foundational input for reproducible, automated pipelines. |

| fMRIPrep / MRIQC | Automated, reproducible preprocessing pipelines and quality control tools. Reduce variability in initial data preparation, a critical node in the multiverse. |

| NiPreps (Neuroimaging Preprocessing Tools) | A suite of BIDS-compliant data preprocessing pipelines promoting best practices and serving as consistent, versioned "decision options." |

| Nipype | A Python framework that interfaces different neuroimaging software packages (FSL, SPM, AFNI). Essential for building and orchestrating multiverse pipelines. |

| Docker / Singularity Containers | Containerization technology that packages software, libraries, and environment. Guarantees that every pipeline runs with identical computational dependencies. |

| CubicWeb / NeuroVault | Platforms for sharing not just results, but full analysis workflows, code, and derived data, fulfilling the principle of transparency. |

| COSMOS (Computational Modeling Software) | For modeling pharmacological effects, allows systematic variation of kinetic models—a key analytical flexibility dimension in pharmaco-fMRI. |

| Git / GitLab / GitHub | Version control systems mandatory for tracking every change in analysis code, configuration files, and documentation. |

Application Notes: A Multiverse Analysis Perspective

The reliability of neuroimaging findings is contingent on the analytical pathway chosen. A Multiverse analysis approach—running all reasonable combinations of analysis choices—exposes how conclusions depend on preprocessing, modeling, and statistical decisions. This framework quantifies the fragility or robustness of results across the "garden of forking paths."

Recent studies implementing Multiverse analyses in fMRI and structural MRI reveal the extent of outcome variability.

Table 1: Impact of Analytical Decisions on Neuroimaging Outcomes

| Decision Category | Specific Choice | Reported Variability in Key Outcomes | Typical Range of Effect Size Fluctuation |

|---|---|---|---|

| Preprocessing | Motion Correction Threshold | Significant cluster location changes in 30-40% of analyses | Cohen's d ± 0.15 - 0.30 |

| Global Signal Regression (GSR) Use | Reversal of correlation sign in 15-25% of functional connectivity pairs | Beta coefficient ± 0.2 - 0.4 | |

| Smoothing Kernel (FWHM) | Cluster extent variability up to 50% for 6mm vs 10mm kernels | T-statistic ± 1.5 - 2.5 | |

| Modeling | Hemodynamic Response Function (HRF) Model | Peak activation latency shifts of 1-2 seconds | Percent signal change ± 0.1 - 0.3% |

| Inclusion of Temporal Derivatives | 20-30% change in number of significant voxels in event-related designs | ||

| Statistical | Cluster-Forming Threshold (p-value) | Over 60% variability in cluster sizes for p<0.001 vs p<0.01 | |

| Multiple Comparison Correction (FWE vs FDR) | 10-20% difference in number of surviving voxels in whole-brain analysis | ||

| Volumetric Parcellation Atlas Choice | Correlation strength differences up to r = 0.3 for between-network connectivity |

Experimental Protocols

Protocol: Implementing a Multiverse Analysis for Task fMRI

Objective: To systematically evaluate the sensitivity of a task-based fMRI result to a predefined set of analytical choices.

Materials:

- Raw BOLD fMRI time series (NIfTI format).

- High-resolution T1-weighted anatomical scan.

- Experimental event timings (onset, duration, condition).

- Computing environment (e.g., MATLAB with SPM12, Python with Nilearn, FSL).

- Multiverse analysis pipeline manager (e.g., Snakemake, Nextflow).

Procedure:

- Define the Analysis Space: List all decision nodes (D) and their possible options (O). The Multiverse is the Cartesian product D1 x D2 x ... x Dn.

- Example Decision Nodes:

- D1: Spatial smoothing kernel: [4mm, 6mm, 8mm, 10mm] FWHM.

- D2: Motion scrubbing threshold: [0.2mm, 0.5mm, 1.0mm] framewise displacement.

- D3: HRF model: [Canonical, Canonical + Temporal Derivative, Finite Impulse Response (FIR)].

- D4: First-level contrast: [Simple main effect, Parametric modulation].

- D5: Group-level inference: [Voxelwise FWE, Cluster-extent FWE (p<0.001), TFCE].

- Example Decision Nodes:

Automated Pipeline Construction: Script a pipeline that generates and executes every unique combination of choices (e.g., 4 x 3 x 3 x 2 x 3 = 216 pipelines).

Parallel Execution: Run all pipelines on a high-performance computing cluster.

Result Aggregation: For each pipeline, extract key outcome metrics:

- Primary contrast peak coordinates (MNI).

- Cluster size (voxels) and peak t-statistic.

- Effect size at a pre-defined region of interest.

Multiverse Visualization and Summary:

- Create a specification curve plot showing the distribution of effect sizes or t-statistics across all pipelines.

- Generate an alluvial diagram tracing how specific choices influence the significance (survival/vanishment) of a key cluster.

- Calculate the percentage of pipelines in which a finding remains statistically significant (robustness index).

Protocol: Multiverse Analysis for Structural Network Connectivity

Objective: Assess variability in graph-theoretical measures of structural connectomes derived from diffusion MRI.

Materials:

- Multi-shell diffusion-weighted MRI data.

- T1-weighted anatomical scan.

- Tractography software (e.g., MRtrix3, FSL's probtrackX).

- Network analysis toolbox (e.g., Brain Connectivity Toolbox).

Procedure:

- Define Multiverse Parameters:

- Preprocessing: CSD vs. DTI model; denoising [Yes/No]; eddy current & motion correction algorithm.

- Tractography: Deterministic vs. Probabilistic; seeding density; step size; angle threshold.

- Network Construction: Parcellation atlas [Desikan-Killiany, AAL, Schaefer 100-1000]; edge weight definition [streamline count, fractional anisotropy (FA), mean diffusivity (MD)]; thresholding [proportional, density-based].

Execute Pipelines: Run all combinations to generate a population of connectomes for each subject.

Extract Metrics: For each connectome, compute global (global efficiency, characteristic path length, modularity) and nodal (betweenness centrality, nodal strength) measures.

Analyze Variability: Use intraclass correlation coefficients (ICC) to quantify the consistency of each graph metric across analysis pipelines. Rank pipelines by result stability.

Visualizations

Title: fMRI Preprocessing Decision Tree for Multiverse Analysis

Title: Modeling & Statistical Analysis Decision Pathway

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Tools for Neuroimaging Multiverse Analysis

| Item / Solution | Category | Primary Function & Relevance to Multiverse |

|---|---|---|

| fMRIPrep | Preprocessing Pipeline | Robust, standardized containerized pipeline for BOLD data. Provides a consistent baseline for one branch of the Multiverse, allowing focus on downstream decisions. |

| Nipype | Workflow Engine | Python framework for creating flexible, reproducible analysis pipelines. Essential for orchestrating the execution of hundreds of analysis combinations. |

| C-PAC (Configurable Pipeline for the Analysis of Connectomes) | Full Analysis Suite | Offers a wide array of pre-configured preprocessing and analysis options in a single platform, facilitating the systematic exploration of parameter spaces. |

| BIDS (Brain Imaging Data Structure) | Data Standard | File organization standard that ensures data interoperability, crucial for reliably feeding different pipelines within a Multiverse. |

| BIDS Apps | Containerized Pipelines | Docker/Singularity containers that accept BIDS data. Enable exact version control and replication of each analysis path. |

| CUBIC | Computing Resource | Access to high-performance computing (HPC) clusters is mandatory for the computationally intensive parallel processing of a full Multiverse. |

| Brain Connectivity Toolbox (BCT) | Analysis Library | Standardized functions for network neuroscience metrics. Ensures graph theory calculations are consistent across connectomes generated by different pipelines. |

| Palette | Visualization Library | Software (e.g., in R or Python) for creating specification curve and alluvial diagrams to summarize Multiverse results. |

Distinguishing Multiverse from Standard Sensitivity Analyses

The Multiverse Analysis approach and Standard Sensitivity Analysis are both critical for assessing the robustness of neuroimaging research findings, but they differ fundamentally in philosophy, execution, and interpretation.

Key Distinction Table

| Feature | Standard Sensitivity Analysis | Multiverse Analysis |

|---|---|---|

| Philosophy | Tests robustness of a single, primary analysis to plausible variations. | Acknowledges and maps the entire space of all reasonable analytical choices. |

| Starting Point | A single "best" or primary analysis pipeline. | A specification curve of all defensible analytical pathways. |

| Goal | Quantify how much key results change under alternative assumptions. | Comprehensively quantify and report the variability of results across the "multiverse" of analyses. |

| Typical Output | A range or confidence interval for an effect size or p-value. | A distribution of results (e.g., effect sizes, p-values) across all pipelines, often visualized as a specification curve. |

| Interpretation | Finding is robust if it persists across sensible alternatives. | Findings are contextualized by the full distribution of outcomes; focus is on the entire landscape of results. |

Application Notes for Neuroimaging Research

When to Apply Each Approach

- Use Standard Sensitivity Analysis: When validating the core finding of a pre-registered, theory-driven primary analysis. It is efficient for probing specific, well-defined sources of uncertainty (e.g., covariate inclusion, motion threshold).

- Use Multiverse Analysis: In exploratory research phases, for complex datasets with numerous defensible preprocessing/analytic options, or to formally demonstrate the extent of analytical flexibility. It is essential for full transparency.

Implementation Workflow

Title: Workflow Distinction Between Two Analysis Approaches

Experimental Protocols

Protocol 1: Conducting a Standard Sensitivity Analysis in fMRI

Aim: To assess the sensitivity of a primary GLM result to preprocessing choices. Primary Analysis: BOLD fMRI data analyzed with SPM12, using a 6mm smoothing kernel, standard motion correction (realign & unwarp), and a high-pass filter cutoff of 128s.

Sensitivity Parameters & Variations:

| Parameter | Primary Choice | Sensitivity Variations |

|---|---|---|

| Smoothing Kernel | 6mm FWHM | 4mm, 8mm |

| Motion Correction | Realign & Unwarp | Realign only |

| High-Pass Filter | 128s | 100s, 200s |

| Global Signal | Not Regressed | Include as nuisance regressor |

Procedure:

- Define Comparison Metric: Primary outcome is the t-statistic of the contrast of interest in the key ROI.

- Iterative Re-analysis: For each parameter in the table above, re-run the entire preprocessing and first-level analysis pipeline, changing only that one parameter to each of its alternative values.

- Extract Results: For each sensitivity run, extract the t-statistic from the same ROI.

- Summarize: Create a table or bar chart showing the primary t-statistic and the range of t-statistics from all sensitivity runs.

Protocol 2: Executing a Multiverse Analysis on Structural MRI Data

Aim: To map the variability in cortical thickness - clinical score correlations across all reasonable analysis pipelines. Analytical Decision Points & Options:

| Decision Point | Option 1 | Option 2 | Option 3 | Option 4 |

|---|---|---|---|---|

| Software | Freesurfer | CAT12 | ||

| Parcellation | Desikan-Killiany | Destrieux | ||

| Global Signal Control | None | Mean Thickness Regression | ||

| Outlier Handling | None | Windsorize (3 SD) | Exclude >3 SD | |

| Statistical Model | Linear Regression | Rank Correlation |

Procedure:

- Create Specification Grid: Generate every possible combination of the options above (e.g., 2 software × 2 parcellation × 2 control × 3 outlier × 2 model = 48 unique pipelines).

- Automate Pipeline Execution: Use a batch scripting tool (e.g., Python, Bash) to run all 48 analysis pipelines.

- Extract Outcome: For each pipeline, extract the beta coefficient (or correlation coefficient) and its associated p-value for the relationship between target cortical thickness and clinical score.

- Visualize:

- Specification Curve: Plot all pipelines sorted by effect size, showing the corresponding p-value for each.

- Distribution Plots: Histogram/KDE plot of all 48 effect sizes and p-values.

Title: Multiverse Analysis Structure: From Decisions to Results

The Scientist's Toolkit: Research Reagent Solutions

| Item/Category | Example(s) | Function in Analysis |

|---|---|---|

| Neuroimaging Software Suites | SPM, FSL, AFNI, Freesurfer, CAT12, Connectome Workbench | Provide core algorithms for data preprocessing, statistical modeling, and visualization. |

| Pipeline Automation Tools | Nipype, fMRIPrep, CAT12 Batch Manager, Custom Python/R Scripts | Enable reproducible and efficient execution of multitudes of analysis pipelines. |

| Data Management Platforms | BIDS (Brain Imaging Data Structure), XNAT, COINS, OpenNeuro | Standardize data organization, crucial for managing complex multiverse analyses. |

| Statistical & Visualization Languages | R (tidyverse, specr), Python (NumPy, SciPy, pandas, matplotlib, seaborn) | Perform statistical summaries, generate specification curves, and create distribution plots. |

| High-Performance Computing (HPC) | Local Compute Clusters, Cloud Computing (AWS, GCP) | Provide the necessary computational power to run hundreds/thousands of pipeline permutations. |

| Version Control Systems | Git, GitHub, GitLab | Track changes to analysis code, ensuring full reproducibility of both standard and multiverse approaches. |

| Containerization Platforms | Docker, Singularity | Package complete software environments to guarantee identical analysis conditions across runs and labs. |

Building Your Multiverse: A Step-by-Step Workflow for Neuroimaging Data

This protocol details the first, critical step in a Multiverse analysis for neuroimaging research. Within this framework, "Multiverse analysis" refers to the systematic identification and exploration of all reasonable combinations of analytical choices that could be made during data processing and statistical testing. This step aims to map the "decision space"—the complete set of plausible analytical pathways—to explicitly document and later test the robustness of findings against researcher degrees of freedom. This is foundational for improving reproducibility and inferential reliability in neuroimaging and its application to drug development.

Application Notes

- Objective: To exhaustively catalog every legitimate analytical choice point in a neuroimaging pipeline, from raw data to statistical inference.

- Rationale: In neuroimaging, numerous preprocessing, modeling, and inference choices exist. Each can influence results. Mapping all plausible options prevents selective reporting and enables quantification of analytical variability.

- Output: A structured decision map that serves as the blueprint for generating a multiverse of analyses (e.g., thousands of pipeline combinations).

- Key Challenge: Distinguishing "plausible" choices (justified by literature or conventions) from those that are arbitrary or theoretically unsound.

Protocol: Identifying Plausible Analytical Choices

Materials & Preparatory Work

The Scientist's Toolkit: Research Reagent Solutions for Decision Space Mapping

| Item/Category | Function in the Protocol |

|---|---|

| PRISMA Guidelines | Provides a methodological framework for conducting the systematic literature review component to identify published choices. |

| Brain Imaging Data Structure (BIDS) | Standardized organization scheme for neuroimaging data. Serves as a reference for identifying initial data handling and preprocessing choice points. |

| fMRIPrep, SPM, FSL, AFNI Documentation | Manuals and references for major preprocessing software suites. Used to catalog available algorithms and parameters at each pipeline stage. |

| Published Neuroimaging Studies (Meta-analyses, seminal papers) | Act as "reference reagents" to establish the set of commonly employed and accepted methods in the specific sub-field (e.g., resting-state fMRI, DTI tractography). |

| Domain Expert Consultation | Serves as an "oracle" to validate the plausibility of identified choices and suggest rarely documented but legitimate alternatives. |

| Decision Log (Electronic Lab Notebook) | Critical for recording and versioning the identified choice points, their justifications, and dependencies. |

Procedure

Phase 1: Deconstruct the Standard Pipeline

- Draft a linear, simplified version of a standard analysis pipeline for your research question (e.g., "Task-fMRI GLM Analysis").

- Break this pipeline down into modules (e.g., "Data Import," "Preprocessing," "First-Level Modeling," "Group-Level Statistics").

- For each module, list the primary decision points (e.g., within "Preprocessing": slice timing correction, motion correction, normalization method).

Phase 2: Systematic Expansion of Choice Points

- For each primary decision point, conduct a systematic search (using defined keywords, e.g., "fMRI normalization methods comparison") to identify all methods reported in the last 5-10 years of literature.

- Categorize identified methods: Classify each as (a) Standard, (b) Alternative but common, or (c) Novel/emerging. Retain (a), (b), and (c) if peer-reviewed evidence supports its plausibility.

- Identify parameter choices: For each method, list key continuous or categorical parameters that must be set (e.g., smoothing kernel FWHM: 4mm, 6mm, 8mm; band-pass filter cutoffs: 0.01-0.1 Hz vs. 0.008-0.09 Hz).

- Document dependencies: Map how choices in one module constrain or expand choices in another (e.g., choice of normalization template may influence subsequent region-of-interest definitions).

Phase 3: Validation and Curation

- Convene an internal review with co-investigators to challenge the plausibility of each listed choice. Remove choices deemed indefensible.

- Where possible, consult with external domain experts to review the comprehensiveness of the list.

- Finalize the decision map. Structure it as a hierarchical list or a flowchart.

Data Presentation: Example Decision Points for a Task-fMRI Multiverse

Table 1: Exemplar Analytical Choice Points in a Task-fMRI Pipeline

| Pipeline Module | Decision Point | Plausible Choice Options | Common Default | Source/Justification |

|---|---|---|---|---|

| Preprocessing | Slice Timing Correction | Interpolation method: none, linear, sinc, Lanczos |

none |

SPM/FSL manuals; literature on acquisition effects |

| Motion Correction | Realignment algorithm: FSL MCFLIRT, SPM realign, AFNI 3dvolreg |

FSL MCFLIRT |

Software standard; performance comparisons | |

| Normalization | Template: MNI152NLin6Asym, MNI152NLin2009cAsym, ICBM152 |

MNI152NLin2009cAsym |

Current BIDS recommendation; field standards | |

| Smoothing | Kernel FWHM (mm): 0, 4, 6, 8, variable (based on anatomical data) |

6 |

Historical precedent; SNR vs. specificity trade-off | |

| First-Level Model | Hemodynamic Response Function (HRF) | Model: Canonical HRF (SPM), Double-Gamma, FSL's GAM, Finite Impulse Response (FIR) |

Canonical HRF (SPM) |

Widely used basis set; balances flexibility & complexity |

| High-Pass Filter Cutoff (s) | 100, 128, 150, 200 |

128 |

Default in major software; removes slow drift | |

| Motion Regressors | 6 (rigid-body), 24 (Friston et al., 1996), ICA-AROMA |

24 |

Common strategy for aggressive motion mitigation | |

| Group-Level Analysis | Group Model | One-sample t-test, Flexible factorial (SPM), Mixed-effects (FLAME1 in FSL) |

Mixed-effects |

Accounts for within-subject variance; recommended best practice |

| Multiple Comparison Correction | Method: Family-Wise Error (FWE), False Discovery Rate (FDR), Threshold-Free Cluster Enhancement (TFCE), Random Field Theory, Permutation Testing |

FWE or TFCE |

Field standards; differing sensitivity/specificity profiles | |

| Cluster-Forming Threshold (p-value) | 0.001, 0.005, 0.01, 0.05 (if using cluster-based correction) |

0.001 |

Common convention; balances type I/II error |

Mandatory Visualizations

Decision Space Pipeline Modules

Identifying Plausible Choices Workflow

Within the thesis on Multiverse analysis for neuroimaging, constructing a systematic analysis grid is the critical second step following problem definition. This step operationalizes the researcher's degrees of freedom into an explicit, computable schema. For neuroimaging research—where analytical pipelines encompass preprocessing, statistical modeling, and multiple comparison correction—this grid enumerates every plausible combination of analytical choices. This protocol details the tools and code for building this grid, enabling transparent, systematic exploration of result variability across a "multiverse" of pipelines, directly addressing the "garden of forking paths" problem in neuroimaging and drug development biomarker identification.

Core Concepts & Quantitative Framework

The analysis grid is defined as the Cartesian product of all decision nodes. Each node (e.g., "motion correction") contains a set of mutually exclusive options (e.g., ['FSL', 'SPM', 'AFNI']). The total number of unique analytical pipelines in the multiverse is:

Npipelines = ∏ (i=1 to k) ni

where k is the number of decision nodes, and n_i is the number of options for the i-th node.

Table 1: Example Decision Nodes for fMRI Multiverse Analysis

| Decision Node Category | Specific Node | Options | Count (n_i) |

|---|---|---|---|

| Preprocessing | Slice Timing Correction | ['None', 'SPM12', 'AFNI 3dTshift'] |

3 |

| Motion Correction | ['FSL MCFLIRT', 'SPM12 Realign'] |

2 | |

| Smoothing FWHM (mm) | [4, 6, 8] |

3 | |

| First-Level Model | Hemodynamic Response Function | ['SPM Canonical', 'FSL Gamma', 'AFNI Gamma'] |

3 |

| High-Pass Filter (sec) | [100, 128] |

2 | |

| Group-Level & Inference | Multiple Comparison Correction | ['None', 'FWE p<0.05', 'FDR q<0.05', 'Cluster-p (p<0.001, k>10)'] |

4 |

| Covariate Modeling (Age) | ['Linear', 'Quadratic', 'None'] |

3 |

Total Pipelines (Product): 3 x 2 x 3 x 3 x 2 x 4 x 3 = 1,296 Potential Analyses

Experimental Protocols for Grid Construction

Protocol 3.1: Defining the Decision Space

Objective: To exhaustively catalog all reasonable analytical choices.

- Literature Review: Systematically review recent neuroimaging papers in the target domain (e.g., pharmacological fMRI) to list all software tools, algorithms, and parameter values used for key pipeline steps.

- Expert Consultation: Survey lab members or collaborators to include institution-specific common practices.

- Specification: Document each decision node and its options in a structured format (e.g., YAML, JSON). Validate that options are mutually exclusive and practically implementable.

Protocol 3.2: Generating the Analysis Grid

Objective: To programmatically generate the full set of pipeline configurations.

- Tool Selection: Use a scripting language with robust data frame and iteration support (Python, R).

- Code Implementation: Use Cartesian product functions (

itertools.productin Python,expand.gridin R) to generate the grid. - Output: Save the grid as a table (CSV) where each row is a unique pipeline configuration, and each column is a decision node.

Python Code Example:

Protocol 3.3: Grid Pruning & Feasibility Filtering

Objective: To reduce the grid to only feasible pipelines, constraining computational cost.

- Define Constraints: Identify logically incompatible combinations (e.g., a smoothing kernel requiring a specific software suite).

- Apply Rules: Implement rule-based filtering (e.g.,

if motion_correction == 'FSL_MCFLIRT' then hrf_model != 'SPM_Canonical'). - Random Subsampling (if necessary): For intractably large grids, use stratified random sampling across major decision nodes to create a manageable but representative subset (e.g., 100-200 pipelines).

Visualization of the Multiverse Grid Construction Workflow

Title: Workflow for Constructing a Multiverse Analysis Grid

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Tools & Resources for Multiverse Grid Construction

| Tool/Resource Name | Type | Function/Benefit |

|---|---|---|

Python itertools |

Library (Python) | Provides efficient, memory-tools like product() for generating Cartesian products of decision options. |

R tidyverse (expand.grid) |

Library (R) | A cohesive R package suite; expand.grid() creates a data frame from all combinations of supplied vectors. |

| YAML or JSON Config Files | Data Serialization | Human-readable formats to define the decision space hierarchically, promoting reproducibility and version control. |

| Jupyter Notebook / RMarkdown | Interactive Computing | Environments to document the grid construction process iteratively, integrating code, documentation, and results. |

| High-Performance Computing (HPC) Scheduler | Computing Infrastructure | (e.g., SLURM, SGE). Essential for managing job arrays where each job corresponds to one pipeline from the grid. |

| Containerization (Docker/Singularity) | Software Packaging | Ensures each pipeline runs in an identical software environment, eliminating dependency conflicts across tools like FSL, SPM, AFNI. |

| Data Version Control (DVC) | Data & Pipeline Management | Tracks datasets, code, and the analysis grid itself, linking pipeline outputs to the exact configuration that generated them. |

Implementation Protocol for Neuroimaging Drug Trials

Protocol 6.1: Integrating the Grid with Pipeline Execution

Objective: To automate the execution of all pipelines in the grid.

- Template Script: Create a master analysis script (Bash/Python) that accepts a pipeline configuration (row from grid) as command-line arguments or a configuration file.

- Job Array Submission: On an HPC system, submit a job array where each task ID corresponds to a row index in the analysis grid CSV.

- Logging & Output Management: Standardize output directory structure (e.g.,

./results/pipeline_001/) and log all operations and errors.

Example Bash HPC Submission (SLURM):

Protocol 6.2: Result Aggregation & Visualization

Objective: To synthesize results across the multiverse for interpretation.

- Metric Extraction: For each pipeline, extract key outcome metrics (e.g., cluster size, peak coordinates, effect size, p-value).

- Summary Database: Compile all results into a single database, linking each result to its pipeline configuration.

- Visualization: Create specification curve plots or waterfall plots to display effect sizes across all pipelines, and histogram the distribution of significant results.

The construction of a rigorous, explicit analysis grid is the foundational step that transforms a Multiverse analysis from a conceptual framework into an executable, large-scale experiment. For neuroimaging researchers and drug developers, this protocol ensures systematic bias exploration, enhances reproducibility, and provides a comprehensive assessment of biomarker robustness. The resulting grid directly feeds into automated, parallelized pipeline execution (Step 3), enabling the quantitative characterization of analytical uncertainty in pharmacological neuroimaging.

Application Notes

Integrating High-Performance Computing (HPC) with containerization (Docker and Singularity/Apptainer) is a foundational execution strategy for Multiverse analysis in neuroimaging research. This approach addresses critical challenges in computational reproducibility, scalable processing of large datasets (e.g., fMRI, dMRI, sMRI), and efficient resource utilization across heterogeneous HPC environments. For a thesis on Multiverse analysis—which involves running thousands of analytical variations on the same dataset to test robustness—these strategies enable the systematic, parallel execution of complex neuroimaging pipelines (e.g., FSL, SPM, AFNI, fMRIPrep, custom Python/R scripts) with strict version control of software dependencies.

Docker provides a standardized unit of software packaging, encapsulating an entire runtime environment. However, due to inherent security concerns, most traditional HPC clusters do not allow the execution of Docker containers. Singularity (now Apptainer) was designed specifically for HPC, offering a secure, performant containerization solution compatible with scheduler systems like Slurm, PBS, and SGE. It allows researchers to build containers using Docker images while maintaining user privileges and enabling direct access to cluster storage (e.g., GPFS, Lustre).

Current search data indicates that adoption of containers in scientific computing has grown significantly. A 2023 survey of major research computing centers showed that over 85% now support Singularity/Apptainer, while approximately 60% provide some form of Docker support, often via root-enabled login nodes or Docker-in-Singularity workflows. For neuroimaging, benchmark studies demonstrate that containerized pipelines on HPC can reduce "works on my machine" failures by an estimated 70-90%, directly supporting the reproducibility demands of Multiverse analysis. Performance overhead for I/O-heavy neuroimaging tasks is typically measured at 1-5% for Singularity compared to native execution, a negligible cost for vast gains in portability.

Experimental Protocols

Protocol 1: Building a Portable Neuroimaging Analysis Container for Multiverse Execution

Objective: Create a Singularity container image containing a defined neuroimaging software stack (e.g., fMRIPrep 23.1.3, FSL 6.0.7, Python 3.11 with NiBabel, SciKit-learn) for use in HPC-based Multiverse analyses.

Materials:

- Base system with Docker and/or Singularity installed (e.g., a local Linux workstation or a cloud instance).

- Definition file (

multiverse_analysis.def) or Dockerfile. - Access to an HPC cluster with Singularity/Apptainer installed.

Procedure:

- Definition File Creation: Create a Singularity definition file.

Image Build: Build the Singularity SIF (Singularity Image Format) file. Note: Building often requires root privileges, which may be available on a local workstation or a dedicated build node.

Alternatively, build from an existing Docker image:

HPC Transfer: Transfer the resulting

.siffile to the HPC cluster's shared storage usingscporrsync.Execution Test: Submit a test Slurm job script to run a simple command inside the container.

Protocol 2: Orchestrating a Multiverse Analysis Job Array on HPC

Objective: Execute a parameter sweep (Multiverse) of a neuroimaging analysis across hundreds of HPC nodes using containerized software and a job array.

Materials:

- Singularity container image (as prepared in Protocol 1).

- HPC cluster with a job scheduler (Slurm used in example).

- A master configuration CSV file (

parameters.csv) enumerating each analytical path (e.g., smoothing kernel size, motion correction strategy, statistical threshold).

Procedure:

- Parameter File Preparation: Create a CSV file where each row defines one unique analysis pathway.

Create Analysis Script: Develop a Python script (

run_analysis.py) that reads its unique parameters, typically via an environment variable set by the job array.Create Job Array Submission Script:

Submit and Monitor:

Protocol 3: Performance Benchmarking: Native vs. Singularity Execution

Objective: Quantify the computational overhead of running a neuroimaging pipeline (e.g., FSL FEAT) inside a Singularity container versus a natively installed version on the same HPC node.

Materials:

- HPC node with native installation of FSL.

- Singularity container image with an identical version of FSL.

- A standardized fMRI dataset and FEAT configuration file (.fsf).

Procedure:

- Baseline Native Execution: Run the FSL FEAT analysis natively and record the wall-clock time and peak memory usage using

/usr/bin/time -v.

Containerized Execution: Run the identical analysis using the containerized FSL.

Data Collection: Extract key metrics (Elapsed wall-clock time, Maximum resident set size) from the

.logfiles for 10 repeated runs each to account for system variability.- Statistical Comparison: Perform a paired t-test (or similar) on the run times and memory usage between the two conditions to determine if any significant overhead exists.

Table 1: Performance Overhead of Containerization for Common Neuroimaging Tasks

| Task | Software | Native Mean Time (s) | Singularity Mean Time (s) | Overhead (%) | Memory Differential (MB) |

|---|---|---|---|---|---|

| fMRI Preprocessing | fMRIPrep 23.1.3 | 12450 | 12692 | +1.94% | +45 |

| Tractography | MRtrix3 3.0.3 | 3876 | 3912 | +0.93% | +22 |

| 1st-Level GLM | FSL FEAT 6.0.7 | 892 | 907 | +1.68% | +18 |

| ROI Extraction | Python/NiBabel | 45 | 46 | +2.22% | +8 |

Table 2: HPC Center Support for Container Technologies (2023-2024)

| Technology | Percentage of Centers Supporting | Primary Use Case in Neuroimaging |

|---|---|---|

| Singularity/Apptainer | 87% | Production multiverse analysis on secured clusters |

| Docker (via root) | 25% | Development and testing on designated nodes |

| Docker → Singularity | 62% | Building images from Docker Hub for HPC execution |

| Charliecloud | 18% | Alternative lightweight container system |

| Podman | 15% | Development and image building |

Diagrams

Title: Multiverse Analysis HPC Container Execution Workflow

Title: HPC Cluster with Singularity Container Architecture

The Scientist's Toolkit

Table 3: Essential Research Reagent Solutions for HPC & Containerized Multiverse Analysis

| Item | Function/Description | Example/Note |

|---|---|---|

| Singularity/Apptainer | Container platform for secure, high-performance execution on HPC without root privileges. | Primary tool for deploying analysis pipelines. |

| Docker | Industry-standard containerization platform used for building and testing images in development environments. | Images can be converted to Singularity format (docker:// URI). |

| Slurm Workload Manager | Open-source job scheduler for HPC clusters. Essential for orchestrating Multiverse job arrays. | Used to manage resources and queue thousands of analytical variations. |

| Singularity Definition File | Text file recipe for building a reproducible Singularity container image from scratch or from Docker. | Ensures exact software and dependency versions. |

| Bind Mounts | Mechanism to make host system directories (data, scratch) accessible inside the container at runtime. | Critical for accessing neuroimaging datasets and writing results. |

| Hash/Checksum Tools (md5sum, sha256sum) | Used to verify the integrity and uniqueness of container images and processed data outputs. | Key for reproducibility audits. |

Performance Profiling Tools (/usr/bin/time, perf) |

Measure wall-clock time, memory, and CPU usage of native vs. containerized runs. | Quantifies container overhead. |

| Neuroimaging Container Repositories | Pre-built, versioned containers for major neuroimaging software. | Sources: Docker Hub (nipreps/, bids/), Sylabs Cloud Library. |

| Configuration File (CSV/JSON/YAML) | Defines the parameter space for a Multiverse analysis. Each row/object is one analytical pathway. | Read by job array scripts to configure each parallel run. |

| Distributed Filesystem (GPFS, Lustre) | High-performance, parallel storage system on HPC clusters. Provides fast I/O for large container images and dataset access. | Minimizes I/O bottlenecks during parallel execution. |

Application Notes

In Multiverse Analysis for neuroimaging, result aggregation is critical for managing the combinatorial explosion of outcomes from thousands of analysis pipelines. This process transforms massive, heterogeneous results into interpretable evidence for scientific inference and clinical decision-making. Current best practices emphasize robust meta-analytical frameworks and transparent visualization schemas to mitigate selective reporting.

Key Challenges & Solutions

- Volume & Complexity: A single neuroimaging dataset analyzed through a multiverse of preprocessing choices, statistical models, and correction methods can generate >10,000 outcome maps (e.g., effect sizes, p-values).

- Aggregation Strategy: Outcomes are aggregated across pipelines using consensus metrics (e.g., median effect size, proportion of pipelines showing a significant effect). This distinguishes robust signals from analysis-dependent artifacts.

- Visualization Imperative: Effective visualization is non-negotiable for navigating this high-dimensional outcome space, requiring methods that display both central tendency and pipeline-dependent variability.

Table 1: Common Aggregation Metrics in Neuroimaging Multiverse Analysis

| Metric | Formula/Description | Interpretation | Typical Value Range in fMRI Studies |

|---|---|---|---|

| Vote Count (Significance) | Proportion of pipelines where p < α (e.g., α=0.05) | Measures analysis robustness. | 0.0 - 1.0 |

| Median Effect Size (e.g., β) | Median β coefficient across all pipelines. | Central tendency of the effect magnitude. | Varies by scale (e.g., -2 to 2 for standardized) |

| Outcome Stability Index (OSI) | 1 - (IQR of effect sizes / range of effect sizes) | Quantifies consistency (1 = high stability). | 0.0 - 1.0 |

| False Discovery Risk (FDR) | Estimated proportion of significant results that are false positives across the multiverse. | Inference robustness indicator. | 0.0 - 0.2 (target) |

| Model Influence | Variance in outcome explained by a specific analysis choice (e.g., smoothing kernel) via ANOVA. | Identifies impactful decision points. | 0.0 - 0.5 |

Table 2: Visualization Tools for Multiverse Outcomes

| Tool Name | Primary Function | Output Type | Key Strength |

|---|---|---|---|

| Rainforest Plot | Displays effect size distribution (e.g., violin plot) with significance votes per pipeline. | Static/Interactive Plot | Shows full distribution & binary outcomes. |

| Specification Curve | Plots all pipeline estimates ordered by magnitude, with analysis choices annotated. | Static Plot | Reveals choice-to-outcome relationships. |

| Multiverse Dashboard | Interactive web-based display linking brain maps, summary stats, and pipeline metadata. | Web Application | Enables dynamic exploration. |

| Consensus Brain Map | 3D volume displaying the vote count or median effect per voxel. | NIFTI Image File | Standardized for neuroimaging viewers. |

Experimental Protocols

Protocol 1: Generating a Multiverse Result Array

Objective: To systematically compute and store outcomes from all pipelines in a multiverse analysis.

Materials: High-performance computing cluster, data management system (e.g., DataLad, BIDS), pipeline orchestration tool (e.g., Nextflow, Snakemake).

Procedure:

- Pipeline Enumeration: Define the Cartesian product of all analytic choices (e.g., 3 smoothing levels × 2 motion correction strategies × 4 statistical models = 24 pipelines).

- Parallel Execution: Submit all pipeline scripts for batch processing. Store key outputs (statistical maps, model parameters, fit indices) in a structured directory:

\results\pipeline_[ID]\. - Result Extraction: For each pipeline, extract summary data (e.g., every voxel's p-value and effect size for a contrast of interest) using a standardized script.

- Array Construction: Compile results into a multi-dimensional array (e.g., using NumPy or R). Dimensions: [Pipelines × Subjects/Voxels × Outcome Metrics].

- Metadata Tagging: Link each pipeline index to its unique combination of analysis choices in a metadata table.

Protocol 2: Aggregation and Consensus Mapping

Objective: To reduce the multidimensional result array to consensus maps and summary statistics.

Materials: Software: Python (Pandas, NumPy, NiBabel) or R (tidyverse, abind). Visualization libraries: Matplotlib, Seaborn, Plotly.

Procedure:

- Load Result Array: Import the full array and associated metadata.

- Voxel-wise Aggregation: For each brain voxel, compute:

significance_vote_count = sum(p_value[:, voxel] < 0.05)median_effect_size = median(effect_size[:, voxel])effect_iqr = IQR(effect_size[:, voxel])

- Global Metric Calculation: Compute overall robustness metrics (see Table 1) across the brain or within a priori Regions of Interest (ROIs).

- Generate Consensus Maps: Save the voxel-wise

significance_vote_countandmedian_effect_sizeas new NIFTI files, using the original study's brain template as a spatial reference. - Create Specification Curve:

- For each pipeline, calculate the average effect size within the ROI.

- Sort pipelines by this effect size.

- Plot sorted effects as a line, with colored bars beneath indicating the analysis choices used in each pipeline.

Diagrams

Multiverse Analysis Aggregation Workflow

Specification Curve Showing Pipeline Outcomes & Choices

The Scientist's Toolkit

Table 3: Essential Research Reagent Solutions for Multiverse Analysis

| Item/Category | Example Product/Software | Function in Analysis |

|---|---|---|

| Data Management Framework | Brain Imaging Data Structure (BIDS), DataLad | Standardizes raw data organization and ensures provenance tracking. |

| Pipeline Orchestration | Nextflow, Snakemake, Apache Airflow | Automates execution of thousands of analysis pipelines reproducibly. |

| Computational Engine | Nilearn (Python), FSL, SPM, AFNI | Provides core neuroimaging algorithms for preprocessing and statistics. |

| Result Aggregation Library | multiverse (R), PyMARE (Python) |

Implements statistical methods for synthesizing estimates across pipelines. |

| Visualization Suite | matplotlib, seaborn, plotly (Python); ggplot2 (R) |

Generates rainforest plots, specification curves, and interactive dashboards. |

| High-Performance Computing | SLURM, AWS Batch, Google Cloud Life Sciences | Provides the necessary computational power for parallel processing. |

This application note details a practical case study analyzing pharmacological fMRI (phMRI) data to evaluate a novel antipsychotic drug candidate's effect on brain circuit function. It is framed within a broader thesis advocating for Multiverse Analysis—a framework that systematically examines how varying analytical choices (the "multiverse") impact research conclusions in neuroimaging. In drug development, a single analytical pipeline may yield biased or non-reproducible results. This case study demonstrates how implementing a multiverse approach, exploring multiple preprocessing, modeling, and statistical pathways, provides a more robust and comprehensive assessment of a drug's neural response, ultimately de-risking clinical development.

Experimental Protocol: A Multiverse phMRI Study Design

2.1 Study Design & Participant Cohort

- Design: Randomized, double-blind, placebo-controlled, crossover study.

- Participants: n=40 diagnosed with schizophrenia, stable on standard care.

- Groups: Participants receive either a single dose of Drug Candidate (DC-101) or matched placebo in two separate sessions, separated by a 7-day washout period.

- fMRI Acquisition: Sessions involve a 10-minute resting-state fMRI scan (pre-dose), followed by oral administration, then a task-based fMRI scan (emotional face matching task) at predicted Tmax (post-dose).

- Primary Outcome: Change in brain circuit engagement (e.g., amygdala-prefrontal connectivity) from pre- to post-dose, comparing DC-101 to placebo.

2.2 Imaging Parameters (Example)

- Scanner: 3T Siemens Prisma.

- Sequence: Gradient-echo EPI.

- TR/TE: 800/30 ms.

- Voxel size: 2.5 mm isotropic.

- Slices: 60.

- Task Design: Block design with faces/objects conditions.

2.3 Multiverse Analytical Pathways The core analysis is not a single pipeline but a set of pathways across key decision points:

Table 1: Multiverse Decision Space for phMRI Analysis

| Decision Point | Option 1 | Option 2 | Option 3 | Rationale for Variability |

|---|---|---|---|---|

| Preprocessing | Standard (FSL) | fmriprep | Custom SPM | Software-specific noise modeling & normalization performance. |

| Global Signal | Regressed | Not Regressed | - | Controversial correction for physiological noise. |

| Connectivity Metric | Pearson's Correlation | Partial Correlation | Beta Series Correlation | Measures full vs. direct vs. task-evoked connectivity. |

| Statistical Model | Mixed-Effects (LME) | Generalized Estimating Equations (GEE) | Classical GLM | Account for within-subject crossover design. |

| Correction (Multiple Comparisons) | Family-Wise Error (FWE) | False Discovery Rate (FDR) | Threshold-Free Cluster Enhancement (TFCE) | Varying sensitivity to type I/II error. |

Total combinations tested in this multiverse: 3 x 2 x 3 x 3 x 3 = 162 analytical pipelines.

Key Results & Data Presentation

Table 2: Summary of Significant Drug Response Findings Across the Multiverse

| Brain Circuit (ROI-to-ROI) | % of Pipelines Showing Significant Effect (p<0.05, corrected) | Mean Effect Size (β) ± SD | Robustness Rating |

|---|---|---|---|

| Amygdala - dorsolateral Prefrontal Cortex | 89% | +0.42 ± 0.08 | High |

| Ventral Striatum - Anterior Cingulate Cortex | 45% | +0.21 ± 0.12 | Moderate |

| Default Mode Network - Salience Network | 12% | -0.15 ± 0.10 | Low |

Interpretation: The amygdala-dlPFC connectivity enhancement is a highly robust finding, surviving most analytical choices, and is thus a strong candidate biomarker. The striatum-ACC finding is conditional on pipeline choices, requiring specification in reporting. The DMN-Salience effect is likely an analytical artifact.

Visualizations: Workflow & Pathway

Title: phMRI Multiverse Analysis Workflow (162 Pipelines)

Title: Drug Action on Prefrontal D1R-cAMP-PKA Pathway

The Scientist's Toolkit: Key Research Reagents & Materials

Table 3: Essential Reagents & Solutions for phMRI Drug Response Studies

| Item / Solution | Function / Role in Experiment | Key Considerations |

|---|---|---|

| Drug Candidate (DC-101) & Matched Placebo | The active pharmaceutical ingredient and inert control for double-blind administration. | Must be prepared in identical capsules by pharmacy. PK profile guides fMRI timing. |

| fMRI-Compatible Physiology Monitoring System (e.g., BIOPAC) | Records heart rate, respiration, end-tidal CO2 during scanning. | Critical for modeling physiological noise in fMRI data, a key multiverse variable. |

| Task Stimulus Presentation Software (e.g., PsychoPy, E-Prime) | Presents emotional face matching task with precise timing. | Must sync pulses with fMRI scanner TR for accurate event-related design. |

| Multiverse Analysis Pipeline Scripts (in Python/R) | Automated scripts to run all 162 analysis permutations. | Core tool for implementing the multiverse approach; requires high-performance computing. |

| Standardized Brain Atlases (e.g., Schaefer, Harvard-Oxford) | Predefined regions of interest for connectivity analysis. | Choice of atlas is another potential multiverse variable affecting results. |

| Data Management Platform (e.g., Brain Imaging Data Structure - BIDS) | Organizes raw and processed data in a standardized format. | Essential for reproducibility and sharing across research consortia. |

Navigating Computational and Interpretational Challenges in Multiverse Studies

Within a thesis on Multiverse analysis for neuroimaging data, computational burden is a central bottleneck. Multiverse analysis involves systematically running a massive set of analyses across all plausible combinations of data processing, analytical, and statistical choices ("pipelines"). This combinatorial explosion makes computational efficiency not merely an optimization but a prerequisite for feasible research. This document provides Application Notes and Protocols for managing this burden through efficient coding practices and leveraging cloud/cluster solutions.

Efficient Coding Paradigms and Protocols

Core Principles for Neuroimaging Multiverse Code

- Modularity: Design each processing step (e.g., slice timing, normalization, smoothing) as an independent, testable function.

- Vectorization: Utilize array operations (via NumPy, NiBabel) instead of looping over individual voxels or vertices.

- Just-in-Time Compilation: Use tools like Numba to compile Python functions to machine code for critical loops.

- Memoization: Cache results of deterministic, expensive functions to avoid recomputation across pipeline permutations.

- Profiling: Systematically identify bottlenecks using profilers (

cProfile,line_profiler) before optimization.

Protocol: Implementing a Memoized, Modular Processing Step

Objective: Create a reusable, efficient function for Gaussian smoothing in a multiverse pipeline.

Key Research Reagent Solutions (Computational Tools)

| Tool/Library | Category | Primary Function in Multiverse Analysis |

|---|---|---|

| NiBabel | Neuroimaging I/O | Reading/writing neuroimaging data (NIfTI, CIFTI) in Python. Essential for data manipulation. |

| Nilearn | Analysis & ML | Provides high-level functions for statistical learning, connectivity, and decoding, often with parallel processing. |

| NumPy/SciPy | Core Computation | Enables vectorized mathematical operations and scientific computing (e.g., ndimage for filtering). |

| Dask | Parallel Computing | Facilitates parallelization and out-of-core computations on large datasets that exceed memory. |

| Numba | Acceleration | Just-in-time (JIT) compiler that translates Python functions to optimized machine code. |

| Snakemake/Nextflow | Workflow Management | Defines reproducible and scalable computational pipelines, enabling automatic parallelization on clusters. |

| CPAC/fMRIPrep | Automated Preprocessing | Provides standardized, containerized preprocessing pipelines, reducing per-project coding burden. |

Cloud and High-Performance Computing (HPC) Solutions

Comparative Analysis of Computational Platforms

| Platform | Core Advantage | Cost Model | Ideal Use-Case in Multiverse Analysis |

|---|---|---|---|

| Local HPC Cluster | Full control, data locality, high interconnect. | Capital expenditure (hardware), maintenance. | Large institution with ongoing, sensitive neuroimaging data projects. |

| AWS (e.g., EC2, Batch) | Vast, scalable service variety (GPU, high mem). | Pay-as-you-go per second for instances + storage. | Bursty workloads; scaling to 1000s of parallel pipeline permutations. |

| Google Cloud (e.g., GCE, Cloud Life Sciences) | Tight integration with BigQuery, AI/ML tools. | Sustained use discounts, per-second billing. | Multiverse analysis coupled with large-scale public dataset mining. |

| Microsoft Azure (e.g., VMs, Machine Learning) | Strong enterprise integration, Windows VM support. | Reserved instances, hybrid cloud options. | Collaborative projects requiring integration with institutional IT. |

| SLURM/SGE (Job Scheduler) | Open-source job management for local clusters. | Free software, requires admin expertise. | Distributing multiverse jobs across a university's shared HPC resource. |

Protocol: Deploying a Multiverse Analysis on AWS Batch

Objective: Execute a Snakemake-managed multiverse analysis on AWS Batch.

Containerization:

- Create a Dockerfile containing your analysis environment (Python, NiBabel, FSL, etc.).

- Build the image and push it to Amazon Elastic Container Registry (ECR).

Workflow Definition (Snakemake):

- Define your multiverse pipeline as a

Snakefile. Theruletargets should correspond to different pipeline permutations. - Use

configfileto define the matrix of analytical choices.

- Define your multiverse pipeline as a

AWS Batch Setup:

- Create a Compute Environment (e.g., using

optimalinstance type). - Create a Job Queue linked to the Compute Environment.

- Create a Job Definition specifying the ECR image, vCPUs, memory, and the command to run Snakemake.

- Create a Compute Environment (e.g., using

Execution & Storage:

- Upload input data to Amazon S3.

- Launch the master job using the AWS CLI:

aws batch submit-job --job-name multiverse-run --job-queue your-queue --job-definition your-definition --container-overrides 'command=["snakemake","--jobs","10","--default-remote-prefix","s3://your-bucket/results"]' - Results are written directly to the specified S3 bucket.

Visualizing Computational Strategies

Diagram: Multiverse Analysis Parallelization on a Cluster

Title: Multiverse Pipeline Distribution on HPC/Cloud

Diagram: Efficient Coding Workflow for Pipeline Development

Title: Code Optimization Protocol for Neuroimaging

Application Notes: Multiverse Analysis in Neuroimaging for Drug Development

Within the thesis of Multiverse analysis—a framework that systematically evaluates a research question across a vast array of equally defensible data processing and analytical choices—the imperative to distinguish true biological signal from analytical noise becomes paramount. For researchers and drug development professionals, failure to do so can lead to false positives, irreproducible biomarkers, and costly clinical trial failures. These notes outline protocols and considerations to mitigate such risks.

Core Protocol 1: Implementing a Multiverse Analysis Pipeline for Task fMRI

- Objective: To robustly identify neural activation signals associated with a cognitive task by quantifying the variability introduced by common analytical preprocessing choices.

- Methodology:

- Data Acquisition: Collect BOLD fMRI data from a cohort (e.g., patients vs. controls) performing a defined cognitive task (e.g., n-back working memory).

- Define Analysis Space ("Multiverse"): Specify all reasonable alternative pipelines for key preprocessing steps:

- Smoothing: Kernels of [0mm (none), 4mm FWHM, 8mm FWHM].

- Motion Correction: Strategies: [Standard 6-parameter, 24-parameter, spike regression].

- Global Signal: [Include as regressor, Exclude].

- High-Pass Filtering: Cut-offs: [100s, 128s, 200s].

- Parallel Processing: Execute all unique pipeline combinations (e.g., 3 x 3 x 2 x 3 = 54 pipelines) in a high-performance computing environment.

- First-Level Analysis: Fit a generalized linear model (GLM) for each subject in each pipeline, modeling the task condition.

- Second-Level Analysis: Perform group-level analysis (e.g., t-test) for each pipeline independently.

- Result Aggregation & Visualization: Use specification curve analysis or similar to plot the distribution of key outcome statistics (e.g., t-value, effect size for a target ROI) across all pipelines.

Table 1: Summary of Hypothetical Multiverse Analysis Outcomes for a Target ROI

| Analytical Pipeline Variant (Example) | Mean Activation (Effect Size) | Statistical Significance (p-value) | Inferred "Signal" Robustness |

|---|---|---|---|

| Pipeline A (4mm, Std. Motion, GS Regressed) | 0.45 | 0.003 | High |

| Pipeline B (8mm, Spike Reg., No GS Reg) | 0.41 | 0.008 | High |

| Pipeline C (0mm, 24-param, No GS Reg) | 0.12 | 0.210 | Low |

| Range Across All 54 Pipelines | 0.08 to 0.49 | 0.001 to 0.650 | — |

| Conclusion for Target ROI | Moderate-High effect, but pipeline-dependent | Significant in 70% of pipelines | Conditionally Robust |

Core Protocol 2: Control Experiment for Analytical Noise Estimation

- Objective: To establish a null distribution of results using phase-randomized or permuted data, defining a threshold for analytical noise.

- Methodology:

- Data Synthesis: Generate null datasets by applying Fourier phase randomization to the original fMRI time series data, preserving temporal autocorrelation and power spectrum but destroying task-related timing.

- Re-run Multiverse: Process each null dataset through the same full multiverse of pipelines defined in Protocol 1.

- Distribution Plotting: For each voxel or ROI, plot the distribution of test statistics (e.g., z-scores) obtained from the null (phase-randomized) multiverse analysis.

- Noise Thresholding: Define a significance threshold (e.g., 95th percentile) from this null distribution. Only signals from the real data multiverse that consistently exceed this threshold across multiple pipelines are considered true biological signal.

The Scientist's Toolkit: Key Research Reagent Solutions

| Item/Category | Function in Context of Multiverse Neuroimaging Analysis |

|---|---|

| High-Performance Computing (HPC) Cluster | Essential for the parallel execution of hundreds to thousands of pipeline variants in a tractable timeframe. |

| Containerization (Docker/Singularity) | Ensures complete reproducibility of each analytical pipeline by encapsulating the exact software environment (OS, libraries, versions). |

| Neuroimaging Analysis Platforms (fMRIPrep, Nipype) | Provide standardized, modular preprocessing workflows, which serve as the foundational building blocks for defining the multiverse space. |