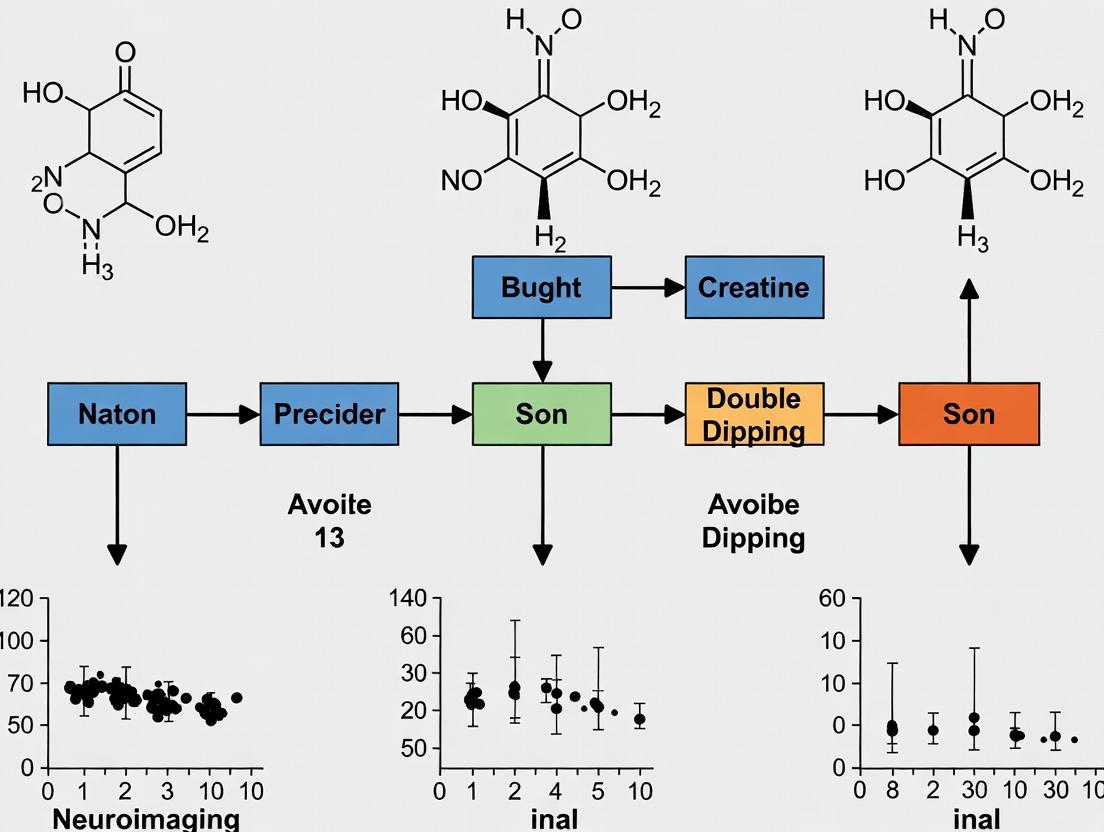

The Double-Dipping Dilemma in Neuroimaging: How to Avoid Circular Feature Selection and Ensure Valid Results

Abstract

This article provides a comprehensive guide for researchers and professionals in neuroscience and drug development on identifying, avoiding, and remedying double-dipping in neuroimaging feature selection. We define the problem of circular analysis, explore its pervasive impact on biomarker discovery and clinical predictions, and detail methodological frameworks for clean data partitioning and cross-validation. The content offers practical troubleshooting steps to diagnose and correct double-dipping in existing pipelines, alongside comparative validation strategies to benchmark the robustness of findings. The goal is to empower scientists with the knowledge to produce statistically valid, reproducible, and translatable neuroimaging results.

What is Double-Dipping? Defining Circular Analysis in Neuroimaging Biomarker Discovery

Troubleshooting Guides & FAQs

Q1: I ran a voxel-based analysis on my fMRI data, selecting the top 10% most active voxels from my full dataset and then testing only on those. My p-values are extremely low. Is this valid? A: No. This is a classic case of "double-dipping" or circular analysis. By using the entire dataset for feature (voxel) selection, you have capitalized on random noise. When you subsequently test for significance on the same data, the test is no longer independent of the selection, which violates the independence assumption behind the statistic. The resulting p-values are invalid and spuriously small, so the apparent significance is grossly inflated, because the hypothesis (which voxels are active) was formed after seeing the data.
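The effect described in this answer can be reproduced on pure noise. The following is a minimal simulation sketch, not an fMRI pipeline: the subject and voxel counts, the `top_voxels` helper, and the split into two halves of ten subjects are all illustrative assumptions.

```python
import numpy as np
from scipy import stats

rng = np.random.default_rng(0)
n_subjects, n_voxels = 20, 1000
# Pure noise: no voxel carries a true effect.
data = rng.standard_normal((n_subjects, n_voxels))

def top_voxels(samples, fraction=0.10):
    """Indices of the voxels with the highest group-mean signal (hypothetical helper)."""
    n_keep = int(samples.shape[1] * fraction)
    return np.argsort(samples.mean(axis=0))[-n_keep:]

# Circular: select and test on the same 20 subjects.
selected = top_voxels(data)
p_circular = stats.ttest_1samp(data[:, selected].mean(axis=1), 0.0).pvalue

# Independent: select on subjects 0-9, test on subjects 10-19.
selected_indep = top_voxels(data[:10])
p_independent = stats.ttest_1samp(
    data[10:, selected_indep].mean(axis=1), 0.0
).pvalue

print(f"circular p = {p_circular:.2e}, independent p = {p_independent:.2f}")
```

Because the data contain no signal, any small p-value from the circular branch is produced by the selection step alone; the independent branch behaves like an ordinary test under the null.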

Q2: How can I tell if my feature selection method is causing inflation? What are the symptoms in my results? A: Key symptoms include:

- Excessively high classification accuracy (e.g., >95%) on what should be a noisy neuroimaging dataset.

- Unbelievably low p-values (e.g., 10^-10) in group-level analyses.

- Poor generalization: The results fail completely when applied to a new, independent dataset or during cross-validation on held-out data.

- Spatially scattered but statistically "significant" clusters that don't correspond to established neuroanatomy.

Q3: I used cross-validation (CV) in my machine learning pipeline. Doesn't this prevent double-dipping? A: Only if implemented correctly. Double-dipping occurs if feature selection is performed outside the CV loop. If you perform selection on the entire training set before CV, the information from the validation folds has "leaked" into the selection process. The correct protocol is to perform feature selection independently within each fold of the CV, using only the training fold for that iteration.
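A small sketch of the difference between selection outside and inside the CV loop, assuming scikit-learn is available. The data are random noise, and the sample size, feature count, `k=20`, and choice of logistic regression are arbitrary illustrative choices.

```python
import numpy as np
from sklearn.feature_selection import SelectKBest, f_classif
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import StratifiedKFold, cross_val_score
from sklearn.pipeline import make_pipeline

rng = np.random.default_rng(0)
X = rng.standard_normal((100, 2000))  # 100 "subjects", 2000 noise "voxels"
y = np.repeat([0, 1], 50)             # labels unrelated to X
cv = StratifiedKFold(n_splits=5, shuffle=True, random_state=0)

# Leaky: features are selected once using every label, then cross-validated.
X_leaky = SelectKBest(f_classif, k=20).fit_transform(X, y)
acc_leaky = cross_val_score(
    LogisticRegression(max_iter=1000), X_leaky, y, cv=cv
).mean()

# Correct: selection is a pipeline step, so it is refit on each training fold.
pipe = make_pipeline(
    SelectKBest(f_classif, k=20), LogisticRegression(max_iter=1000)
)
acc_clean = cross_val_score(pipe, X, y, cv=cv).mean()

print(f"selection outside CV: {acc_leaky:.2f}")
print(f"selection inside CV:  {acc_clean:.2f}")
```

Since the labels carry no information about `X`, the only honest answer is chance-level accuracy. Any accuracy well above that in the first estimate comes from leakage.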

Q4: What is the definitive experimental design to avoid double-dipping in a biomarker discovery study? A: The gold standard is a three-way split of your data:

- Discovery/Training Set: Used for initial feature selection and model building.

- Validation Set: Used to tune hyperparameters and perform initial, unbiased evaluation.

- Test Set: Used ONLY ONCE for the final, unbiased assessment of the model's performance. This set must never be used in any part of the selection or tuning process.

Detailed Experimental Protocols

Protocol 1: Nested Cross-Validation for Neuroimaging Classification

- Purpose: To provide an unbiased estimate of classifier performance when feature selection and hyperparameter tuning are required.

- Methodology:

- Partition the full dataset into K outer folds (e.g., K=5).

- For each outer fold i:

  a. Designate fold i as the temporary test set. The remaining K-1 folds are the outer training set.

  b. On the outer training set, perform an inner cross-validation (e.g., 5-fold) to:

     i. Independently select features within each inner fold.

     ii. Train a model with candidate hyperparameters.

     iii. Choose the best hyperparameter set based on average inner-fold performance.

  c. Using the chosen hyperparameters, train a final model on the entire outer training set, performing feature selection on this full outer training set.

  d. Apply this final model to the held-out outer test fold (fold i) to obtain one unbiased performance metric.

- Average the performance metrics from all K outer test folds. This is the final unbiased performance estimate.
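One way to express this protocol in scikit-learn is to wrap a `GridSearchCV` (the inner loop) in `cross_val_score` (the outer loop). This is a sketch under assumptions: the synthetic data, the linear SVM, and the small parameter grid are placeholders, not recommendations.

```python
import numpy as np
from sklearn.feature_selection import SelectKBest, f_classif
from sklearn.model_selection import GridSearchCV, StratifiedKFold, cross_val_score
from sklearn.pipeline import Pipeline
from sklearn.svm import SVC

rng = np.random.default_rng(0)
X = rng.standard_normal((80, 500))
y = np.repeat([0, 1], 40)
X[y == 1, :5] += 1.0  # weak synthetic signal in 5 features

# Feature selection lives inside the pipeline, so it is refit in every fold.
pipe = Pipeline([
    ("select", SelectKBest(f_classif)),
    ("clf", SVC(kernel="linear")),
])
param_grid = {"select__k": [10, 50], "clf__C": [0.1, 1.0]}

inner_cv = StratifiedKFold(n_splits=5, shuffle=True, random_state=1)
outer_cv = StratifiedKFold(n_splits=5, shuffle=True, random_state=2)

# Inner loop: hyperparameter search on each outer training set only.
search = GridSearchCV(pipe, param_grid, cv=inner_cv)
# Outer loop: each held-out fold scores a model tuned without seeing it.
outer_scores = cross_val_score(search, X, y, cv=outer_cv)

print(f"nested CV accuracy: {outer_scores.mean():.2f} +/- {outer_scores.std():.2f}")
```

With `refit=True` (the default), `GridSearchCV` retrains the best configuration on the whole outer training set, which corresponds to step c of the methodology.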

Protocol 2: Hold-Out Test Set with Independent Discovery Cohort

- Purpose: For definitive validation of a neuroimaging biomarker, especially in multi-site studies or clinical trials.

- Methodology:

- Acquire data from two completely independent cohorts.

- Cohort A (Discovery): Use this data for all exploratory analyses, including feature selection, algorithm development, and initial model training. Any parameter tuning must be done via internal cross-validation within this cohort only.

- Cohort B (Test): Keep this data completely locked and separate. Do not perform any analysis on it until the final model from Cohort A is fully fixed (features, algorithms, parameters all finalized).

- Apply the final, frozen model from Cohort A to Cohort B. Calculate performance metrics (accuracy, AUC, etc.) on Cohort B. These are the definitive, unbiased results.

Table 1: Simulated Impact of Double-Dipping on Classification Accuracy

| Analysis Type | True Effect Size | Reported Accuracy (Inflated) | Unbiased Accuracy (Correct) |

|---|---|---|---|

| Double-Dipped Analysis | None (Noise) | 85% | 50% (Chance) |

| Double-Dipped Analysis | Small | 98% | 62% |

| Independent Test Set Analysis | None (Noise) | 52% | 51% |

| Independent Test Set Analysis | Small | 65% | 63% |

Table 2: Key Reagent Solutions for Robust Neuroimaging Analysis

| Reagent / Tool | Function / Purpose |

|---|---|

| Nilearn (Python Library) | Provides maskers and decoding tools that plug into scikit-learn, so feature selection (e.g., scikit-learn's SelectKBest) can run safely inside a CV pipeline. |

| Scikit-learn Pipeline | Encapsulates preprocessing, feature selection, and classification into a single object to prevent data leakage. |

| Permutation Testing Framework | Generates a null distribution of results by shuffling labels to establish a baseline for true significance. |

| COINSTAC (Platform) | Enables decentralized, privacy-respecting multi-site analysis with standardized preprocessing to create larger, independent test sets. |

Visualizations

Diagram 1: The Problem of Double-Dipping in Neuroimaging

Diagram 2: Correct Protocol with Independent Test Set

Diagram 3: Nested Cross-Validation Workflow

Technical Support Center: Troubleshooting & FAQs

Troubleshooting Guides

Issue: Circular Analysis (Double-Dipping) in Voxelwise Analysis

- Symptoms: Inflated effect sizes, non-replicable clusters of activation, statistically significant results from randomly permuted data.

- Diagnosis: The same dataset was used for both feature selection (e.g., defining an ROI based on a whole-brain contrast) and for the subsequent statistical analysis within that ROI without proper correction.

- Resolution: Implement strict data splitting or cross-validation with independent datasets. Use hold-out test sets for final inference. For exploratory analysis, apply family-wise error correction (FWE) across the entire search volume.

Issue: Misdefined ROI Leading to Biased Results

- Symptoms: ROI masks are anatomically imprecise or based on underpowered localizer tasks. Results are highly sensitive to small changes in mask boundaries.

- Diagnosis: Lack of an a priori, independently defined region of interest.

- Resolution: Use atlases from independent publications or anatomical landmarks. For functional localizers, use an independent dataset or session. Document the exact definition source and coordinate space (MNI/Talairach).

Issue: Poor Statistical Power in Whole-Brain Analysis

- Symptoms: No significant clusters despite a strong hypothesized effect, or only very small clusters survive correction.

- Diagnosis: Inadequate sample size, overly conservative multiple comparison correction, or high measurement noise.

- Resolution: Conduct power analysis prior to the study. Consider using more sensitive statistical methods (e.g., Threshold-Free Cluster Enhancement - TFCE). Ensure optimal preprocessing to minimize noise.

Frequently Asked Questions (FAQs)

Q1: What is the most critical step to avoid double-dipping in my feature selection pipeline? A1: The most critical step is complete separation of datasets. The data used to generate a hypothesis (e.g., select features or define an ROI) must be independent from the data used to test that hypothesis. This typically requires splitting your data into a discovery set and a validation set at the very beginning of analysis.

Q2: Is it acceptable to use an ROI defined from a meta-analysis or published paper to avoid circularity? A2: Yes, this is a strong method. Using an ROI defined from an independent study, a meta-analysis, or a standard anatomical atlas is considered a non-circular, a priori approach, as long as the definition is applied without further modification based on your current data.

Q3: How does the multiple comparisons problem differ between ROI-based and whole-brain voxelwise analysis? A3: The correction scope is different. In a whole-brain analysis, you must correct for all comparisons across ~100,000+ voxels (e.g., using FWE or FDR). In a properly defined, singular ROI analysis, you are only correcting for the number of voxels within that single, pre-defined region, which is a much smaller number, increasing sensitivity. However, if the ROI was defined from the same data, this "advantage" is statistically invalid.
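To make the difference in correction scope concrete, here is a sketch using Bonferroni correction as the simplest form of family-wise error control. This is an assumption for illustration: real fMRI analyses usually rely on random field theory or permutation methods, and the ROI size of 500 voxels is hypothetical.

```python
def bonferroni_threshold(alpha: float, n_tests: int) -> float:
    """Per-voxel p-value threshold that keeps the family-wise error rate at alpha."""
    return alpha / n_tests

# Whole-brain search over ~100,000 voxels versus a hypothetical 500-voxel ROI.
whole_brain = bonferroni_threshold(0.05, 100_000)
roi = bonferroni_threshold(0.05, 500)

print(f"whole-brain per-voxel threshold: {whole_brain:.1e}")
print(f"a priori ROI per-voxel threshold: {roi:.1e}")
print(f"ROI threshold is {roi / whole_brain:.0f}x more lenient")
```

The more lenient ROI threshold is only legitimate when the mask was fixed independently of the data being tested.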

Q4: Can I use cross-validation to prevent double-dipping in machine learning analyses on neuroimaging data? A4: Yes, but it must be implemented correctly. The feature selection step (e.g., voxel filtering) must be performed inside each fold of the cross-validation loop, using only the training data for that fold. Performing feature selection once on the entire dataset before cross-validation is a form of double-dipping that will overfit the model.

Supporting Data & Protocols

Table 1: Comparison of Analysis Approaches and Associated Pitfalls

| Analysis Approach | Primary Strength | Key Pitfall | Risk of Circularity | Recommended Correction |

|---|---|---|---|---|

| Whole-Brain Voxelwise | Unbiased, data-driven exploration | Low statistical power, severe multiple comparisons | Low (if corrected properly) | Family-Wise Error (FWE) or Threshold-Free Cluster Enhancement (TFCE) |

| A Priori ROI (Independent) | High sensitivity, hypothesis-driven | Requires strong prior justification | None | Small-Volume Correction (SVC) within the independent mask |

| A Posteriori ROI (Data-Driven) | Can identify unexpected regions | Double-dipping when the ROI is tested on the data that defined it | Very High | Requires a fully independent validation dataset for confirmation |

| Cross-Validated Searchlight | Localized predictive mapping | Computationally intensive, complex interpretation | Medium (mitigated by proper CV) | Permutation testing within the CV framework |

Experimental Protocol: Validating an ROI with an Independent Dataset

- Acquisition: Collect two independent datasets (Session 1 & Session 2) from the same participants or two matched cohorts.

- Preprocessing: Process each dataset separately through a standard pipeline (realignment, normalization, smoothing).

- ROI Definition: Using only Session 1 data, perform a whole-brain contrast (e.g., Task A > Task B). Apply an appropriate whole-brain statistical threshold (p<0.05 FWE). Define an ROI mask from the resulting significant cluster(s).

- Hypothesis Test: Extract the mean signal from the ROI mask in the independent Session 2 data. Perform the statistical test of interest (e.g., correlation with behavior) on this extracted signal.

- Inference: Statistical conclusions are drawn solely from the Hypothesis Test step on Session 2 data, using the ROI defined from Session 1 in the ROI Definition step.

Visualization: Analytical Workflows

Diagram 1: Circular vs. Non-Circular Analysis Pipeline

Diagram 2: Nested Cross-Validation for ML

The Scientist's Toolkit: Research Reagent Solutions

| Item | Category | Function in Neuroimaging Analysis |

|---|---|---|

| SPM, FSL, AFNI | Software Suite | Core platforms for MRI/fMRI data preprocessing, statistical modeling, and voxelwise inference. |

| fMRIPrep | Preprocessing Pipeline | Robust, standardized, and automated pipeline for BOLD data preprocessing, minimizing user-induced variability. |

| FreeSurfer | Anatomical Toolbox | Provides cortical surface reconstruction, subcortical segmentation, and surface-based analysis to improve anatomical accuracy. |

| Nilearn, nipy | Python Libraries | Enable flexible statistical learning, connectivity analysis, and machine learning on brain maps, often with built-in CV tools. |

| BrainVoyager | Commercial Software | Integrated platform for advanced analysis, including multivariate pattern analysis (MVPA) and cross-validation designs. |

| FSL's Randomise | Statistical Tool | Permutation-based non-parametric testing tool, ideal for dealing with non-normal data and complex designs for valid inference. |

| BIDS Validator | Data Standardization Tool | Ensures neuroimaging data is organized according to the Brain Imaging Data Structure, promoting reproducibility and sharing. |

| Atlas Libraries (e.g., AAL, Harvard-Oxford) | Reference Maps | Provide pre-defined, anatomically labeled region masks for a priori ROI analysis, preventing circular definition. |

Technical Support Center: Avoiding Double-Dipping in Neuroimaging Analysis

Troubleshooting Guides & FAQs

Q1: My cross-validated predictive model shows near-perfect accuracy (>95%) on a small neuroimaging dataset. Is this a cause for concern?

A: Yes, this is a major red flag for potential double-dipping (circular analysis). High accuracy on small datasets often results from feature selection or model tuning performed on the entire dataset before cross-validation, causing data leakage. The model is effectively tested on data it has already "seen," inflating performance.

Diagnostic Steps:

- Audit your workflow script. Trace the order of operations. Ensure feature selection (e.g., voxel-wise thresholding, ROI selection based on group contrast) is performed independently within each training fold of the cross-validation loop.

- Check for independent test set. Verify you have a completely held-out validation cohort that was never used, even indirectly, in any feature selection or parameter tuning step.

- Run a permutation test. Shuffle your class labels and re-run the entire analysis. If the permuted label analysis yields similarly high accuracy, your pipeline is likely capturing noise through circularity.
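The permutation check can be sketched with scikit-learn's `permutation_test_score`, which shuffles the labels and re-runs the whole estimator each time. The synthetic data, the injected signal, and the pipeline below are illustrative assumptions. Any step performed outside the estimator passed in (for example, selection done beforehand on the full dataset) is not re-run by this function and would have to be repeated manually for each shuffle.

```python
import numpy as np
from sklearn.feature_selection import SelectKBest, f_classif
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import StratifiedKFold, permutation_test_score
from sklearn.pipeline import make_pipeline

rng = np.random.default_rng(0)
X = rng.standard_normal((80, 300))
y = np.repeat([0, 1], 40)
X[y == 1, :5] += 1.5  # synthetic group difference in 5 features

# Selection is part of the estimator, so it is repeated for every permutation.
pipe = make_pipeline(
    SelectKBest(f_classif, k=10), LogisticRegression(max_iter=1000)
)
cv = StratifiedKFold(n_splits=5, shuffle=True, random_state=0)

score, perm_scores, p_value = permutation_test_score(
    pipe, X, y, cv=cv, n_permutations=100, random_state=0
)

print(f"true-label accuracy:     {score:.2f}")
print(f"shuffled-label accuracy: {perm_scores.mean():.2f} (should sit near chance)")
print(f"permutation p-value:     {p_value:.3f}")
```

In a sound pipeline the shuffled-label scores cluster around chance. If they stay close to the true-label score, the pipeline is extracting structure that does not depend on the labels being real.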

Protocol Correction (Nested Cross-Validation):

Q2: How can I correctly define Regions of Interest (ROIs) for a drug development study without introducing circularity?

A: ROIs must be defined a priori using an independent dataset or a completely independent sample from the same study.

Valid Protocol:

- Use an independent atlas: Derive ROIs from a published, population-level brain atlas (e.g., AAL, Harvard-Oxford) that was generated from a separate cohort.

- Hold-out a discovery cohort: Split your cohort into a Discovery Sample (e.g., 60%) and a Validation Sample (e.g., 40%). Perform hypothesis-generating, whole-brain analysis only on the Discovery Sample to identify candidate ROIs. Then, extract features from these ROIs in the independent Validation Sample for your final predictive model or drug efficacy test.

- Literature-based definition: Use ROIs consistently reported in prior, unrelated peer-reviewed studies on the same condition.

Invalid Protocol: Running a whole-brain group comparison (e.g., patients vs. controls) on your entire dataset, selecting the most significant cluster as your ROI, and then extracting features from that same ROI to run a classification or correlation analysis on the same dataset.

Q3: My biomarker's effect size dropped from d=0.8 to d=0.3 after correcting my analysis for double-dipping. Is my finding still valid for informing a clinical trial?

A: This is a common and critical outcome. The initial inflated effect size would have led to a severely underpowered clinical trial, likely causing its failure. The corrected, smaller effect size is your valid basis for decision-making.

- Actionable Steps:

- Recalculate statistical power. Use the corrected effect size (d=0.3) to re-estimate the necessary sample size for your preclinical or clinical study.

- Consult Table 1 to understand the direct impact on downstream development.

Table 1: Impact of Double-Dipping Correction on Trial Design Parameters

| Parameter | With Double-Dipping (d=0.8) | After Correction (d=0.3) | Consequence of Using Inflated Estimate |

|---|---|---|---|

| Sample Size Needed (Power=0.8) | ~50 total | ~350 total | Trial enrolls about one-seventh of the required sample; high false-negative risk. |

| Estimated Biomarker Effect | Large, compelling | Modest, requires careful validation | Misallocation of R&D resources. |

| Probability of Trial Success | Grossly overestimated | Realistically estimated | Failed trial, lost investment, halted drug development. |
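The sample sizes in the table are consistent with a standard normal-approximation power calculation. The sketch below assumes a two-arm design with equal group sizes and a two-sided test at alpha = 0.05, neither of which is stated in the table. An exact t-test calculation gives slightly larger numbers.

```python
from math import ceil
from statistics import NormalDist

def total_sample_size(d: float, alpha: float = 0.05, power: float = 0.80) -> int:
    """Total N for a two-arm, two-sided comparison (normal approximation)."""
    z = NormalDist()
    z_alpha = z.inv_cdf(1 - alpha / 2)  # critical value for the two-sided test
    z_power = z.inv_cdf(power)          # quantile matching the target power
    n_per_group = ceil(2 * (z_alpha + z_power) ** 2 / d ** 2)
    return 2 * n_per_group

n_inflated = total_sample_size(0.8)
n_corrected = total_sample_size(0.3)

print(n_inflated)   # 50
print(n_corrected)  # 350
```

Because required sample size scales with 1/d², shrinking the effect size from 0.8 to 0.3 multiplies the needed enrollment by roughly (0.8/0.3)² ≈ 7.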

Key Experimental Protocols for Valid Analysis

Protocol 1: Split-Sample Analysis for Biomarker Discovery

- Randomly partition your neuroimaging cohort into a Discovery Set (typically 50-70%) and a Hold-out Validation Set (30-50%).

- In the Discovery Set, perform exploratory feature selection (e.g., voxel-wise analysis, network metric calculation). Record the selected features/ROIs/parameters.

- Apply only the features selected in the Discovery Set (e.g., the mask of voxels with p<0.001 in the discovery contrast) to the Hold-out Validation Set. Do not re-run the selection on this set.

- Using only these features, extracted from the Hold-out Set, evaluate your final predictive model or test the biomarker-drug response correlation. If a model must be fit within the Hold-out Set, estimate its performance with cross-validation inside that set.

- Report performance metrics only from the Hold-out Validation Set.

Protocol 2: Nested Cross-Validation for Model Development (Detailed workflow depicted in Diagram 1).

Research Reagent Solutions Toolkit

Table 2: Essential Tools for Reproducible Neuroimaging Feature Selection

| Item/Category | Function | Example/Tool |

|---|---|---|

| Version Control System | Tracks every change to analysis code and parameters, ensuring exact reproducibility. | Git, GitHub, GitLab |

| Containerization Platform | Packages the complete software environment (OS, libraries, tools) for identical execution anywhere. | Docker, Singularity |

| Pipeline Management Tool | Automates and documents multi-step neuroimaging analysis workflows. | Nipype, fMRIPrep, Nextflow |

| Pre-registration Platform | Publicly archives hypothesis, methods, and analysis plan before data analysis begins. | OSF, AsPredicted, ClinicalTrials.gov |

| Code Repositories | Hosts and shares analysis code, enabling peer scrutiny and reuse. | GitHub, GitLab, BioLINCC |

| Data & ROI Atlases | Provides independently defined anatomical or functional regions for a priori ROI analysis. | Harvard-Oxford Cortical Atlas, AAL, Yeo Network Parcellations |

Visualizations

Diagram 1: Nested Cross-Validation Workflow

Diagram 2: Consequences of Double-Dipping in Drug Development

Technical Support Center: Troubleshooting & FAQs

FAQ 1: "My model achieves 99% accuracy on the training set but only 55% on the independent test set. What is happening?"

Answer: This is a classic symptom of overfitting. Your model has learned patterns specific to your training data (including noise) that do not generalize. Combined with circular inference (e.g., using the same data for feature selection and final model training without proper cross-validation), this leads to inflated, non-reproducible results.

Troubleshooting Guide:

- Audit Your Workflow: Implement strict separation. Perform feature selection within each fold of cross-validation only on the training partition of that fold.

- Simplify the Model: Reduce model complexity (e.g., increase regularization, reduce polynomial degree, use fewer features).

- Gather More Data: Increase your sample size to improve the model's ability to learn generalizable patterns.

- Use a Hold-Out Test Set: Only touch your final test set once, after the entire model (including feature selection parameters) is finalized.

FAQ 2: "I suspect data leakage is corrupting my neuroimaging analysis. How can I systematically detect it?"

Answer: Data leakage occurs when information from outside the training dataset is used to create the model, often leading to overly optimistic performance. In neuroimaging, common sources include: performing global signal normalization across all subjects before splitting data, or using site-scanner information that is only available post-hoc.

Troubleshooting Guide:

- Check Preprocessing: Ensure all data-driven preprocessing steps that estimate parameters across subjects (e.g., feature scaling, group-level normalization) are fit only on the training data and then applied to the validation/test data.

- Review Feature Origins: Ask: "Would this feature be available in a real-world, clinical deployment at the time of prediction?" If no, it's likely leakage.

- Performance Discrepancy: A model performing implausibly well (e.g., >95% accuracy on a complex psychiatric classification) is a major red flag.

- Conduct Ablation Tests: Re-run your pipeline, deliberately removing suspected leaking features. A sharp drop in performance after removing a single feature suggests that the feature was carrying leaked information.

FAQ 3: "My cross-validation results are excellent, but the model fails completely on a new cohort. Could feature selection be the culprit?"

Answer: Yes. This is frequently caused by non-independent feature selection—a form of double-dipping. If you select features based on their performance across the entire dataset before cross-validation, you bias the CV process. The model has already "seen" information from the validation folds during selection.

Troubleshooting Guide:

- Implement Nested Cross-Validation: Use an inner CV loop for feature selection and model tuning, and an outer CV loop for performance estimation. This provides an unbiased estimate of generalizability.

- Use Independent Screening: If possible, perform an initial feature selection (e.g., based on univariate tests) on a completely separate, held-out dataset.

- Validate on External Data: Always reserve a fully independent dataset (different site, scanner, population) for the final, one-time validation of your complete, locked-down pipeline.

FAQ 4: "What's the practical difference between circular inference and data leakage? They seem similar."

Answer: Both lead to overfitting and invalid results, but their point of origin differs.

| Aspect | Circular Inference (Double-Dipping) | Data Leakage |

|---|---|---|

| Core Issue | Using the same data to inform an analysis step and to test the outcome, violating independence. | Allowing information from the test/validation set to leak into the training process. |

| Common Context | Feature selection on full dataset before CV. Peeking at test results to adjust model. | Preprocessing (e.g., normalization using all data). Temporal leakage from future data. |

| Analogy | Using the final exam questions to study, then being surprised you aced it. | Accidentally having the answer key in your study notes. |

| Solution | Strict procedural separation (e.g., nested CV). | Strict process isolation during pipeline construction. |

Experimental Protocol: Nested Cross-Validation to Avoid Circular Inference

Objective: To obtain an unbiased estimate of model performance when feature selection is required.

Protocol:

- Outer Loop (Performance Estimation): Split the entire dataset into k folds (e.g., 5 or 10).

- For each outer fold:

  a. Hold Out one fold as the outer test set.

  b. The remaining k-1 folds form the outer training set.

  c. Inner Loop (Model/Feature Selection Tuning): On the outer training set, perform another m-fold cross-validation.

     i. For each inner fold, split the outer training set into inner train/validation sets.

     ii. Perform feature selection only on the inner training set.

     iii. Train the model on the inner training set (with selected features).

     iv. Evaluate on the inner validation set.

  d. Optimize: Choose the best feature set and model parameters based on average inner CV performance.

  e. Train Final Model: Using the optimized parameters, perform feature selection on the entire outer training set, then train the model.

  f. Test: Evaluate this final model on the held-out outer test set (the fold held out in step a). Record this score.

- Final Performance: Average the scores from each of the k outer test folds. This is your unbiased performance estimate.

Diagrams

Diagram 1: Nested CV Workflow

Diagram 2: Circular Inference vs. Correct Pipeline

The Scientist's Toolkit: Research Reagent Solutions

| Tool/Reagent | Function in Avoiding Double-Dipping | Example/Note |

|---|---|---|

| Nilearn / scikit-learn | Provides building blocks for nested cross-validation and pipeline creation, enforcing correct data partitioning. | scikit-learn has no single nested-CV class; wrap GridSearchCV in cross_val_score, using a Pipeline with SelectKBest inside. |

| PRoNTo (Pattern Recognition for Neuroimaging Toolbox) | A MATLAB toolbox specifically designed for robust neuroimaging classification, automating correct validation structures. | Handles fMRI/MRI data, includes feature selection wrappers. |

| COINSTAC | A decentralized platform for collaborative analysis. Enables external validation by training on one dataset and testing on another from a different site. | Critical for testing generalizability and proving no leakage. |

| Custom Python Scripts for Permutation Testing | To establish a null distribution for model performance, distinguishing true signal from chance due to circular analysis. | Shuffle labels many times, re-run entire pipeline to get p-value. |

| Data Version Control (DVC) | Tracks exact dataset splits, preprocessing steps, and model versions to ensure reproducibility and audit trails against leakage. | Tags specific data commits as "finaltestset" - immutable. |

| MRIQC / fMRIPrep | Standardized, containerized preprocessing. Ensures preprocessing is applied consistently but separately to train and test data, preventing leakage. | Use the --participant-label flag to process specific groups independently. |

Building Robust Pipelines: Methodological Frameworks to Prevent Data Leakage

Technical Support Center: Troubleshooting & FAQs

Q1: How can I detect accidental data leakage from the test set into my feature selection process?

A: A useful check is a "dummy" test: shuffle or randomize your target variable (e.g., diagnostic label) in the test set only, then re-run your feature selection and model training pipeline unchanged. If the model still performs significantly above chance level (e.g., accuracy > 50% for binary classification) on this randomized test set, it indicates leakage: test set information has contaminated the training phase.

- Diagnostic Table:

| Test Condition | Model Performance (AUC/Accuracy) | Indication |
|---|---|---|
| True Test Set | High (e.g., AUC = 0.85) | Expected if model is valid. |
| Randomized-Label Test Set | High (e.g., AUC > 0.6) | CRITICAL: Data leakage confirmed. |
| Randomized-Label Test Set | At chance level (e.g., AUC ≈ 0.5) | No leakage detected. |

Q2: My dataset is small. Isn't nested cross-validation sufficient without a hold-out test set?

A: No. Nested cross-validation (CV) provides an unbiased estimate of model performance for a given modeling pipeline and is excellent for algorithm selection and hyperparameter tuning. However, it does not replace a final, locked-away test set. The performance estimate from nested CV itself becomes an optimized metric after you use it to make decisions. You must evaluate the final chosen model on a completely untouched test set for an unbiased assessment of its generalizability.

- Protocol: Nested Cross-Validation Workflow:

- Outer Split: Partition data into K outer folds.

- For each outer fold: a. Designate the fold as the validation set. b. The remaining K-1 folds form the development set. c. Inner Loop: Perform cross-validation only on the development set to select features and tune hyperparameters. d. Train a final model on the entire development set using the best parameters. e. Evaluate this model on the held-out outer validation set.

- The average performance across all outer folds is the unbiased performance estimate.

- Crucial Final Step: Train your final model on all development data (everything except the hold-out test set) using the optimal pipeline, and report its performance only on the completely independent hold-out test set that was never used in any CV loop.

Q3: I performed voxel-wise analysis first, then extracted ROI means for classification. Is this double-dipping?

A: Yes, if not done correctly. If the same whole-brain voxel-wise analysis (e.g., a mass-univariate t-test) that identifies significant regions is performed on the entire dataset (training+test), and those regions are then used to extract features for classification, you have leaked global information. The test set has influenced feature selection.

- Corrected Experimental Protocol:

- Isolate Test Set: Lock away the test set (`Data_Test`).

- Feature Selection on Training Data Only: Perform voxel-wise analysis (e.g., t-test, ANOVA) only on `Data_Train` to identify significant voxels or ROIs.

- Define ROI Mask: Create a binary mask from the significant areas identified in the previous step.

- Apply Mask: Use only this mask to extract ROI summary features (e.g., mean signal) from both `Data_Train` and `Data_Test`.

- Train Classifier: Train your model using the features from `Data_Train`.

- Final Test: Evaluate the trained model on the features from `Data_Test`.
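The corrected protocol can be sketched end to end on synthetic data. Everything here is an illustrative assumption: the array shapes, the injected effect in the first 20 "voxels", the p<0.001 threshold, and the use of a single mean-signal feature.

```python
import numpy as np
from scipy import stats
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import train_test_split

rng = np.random.default_rng(0)
X = rng.standard_normal((120, 1000))  # subjects x voxels
y = np.repeat([0, 1], 60)
X[y == 1, :20] += 1.0                 # synthetic effect in 20 voxels

# Isolate the test set before any analysis.
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.3, stratify=y, random_state=0
)

# Voxel-wise t-test on the training data only.
t_res = stats.ttest_ind(X_train[y_train == 1], X_train[y_train == 0], axis=0)

# Binary ROI mask from the training-derived statistics.
mask = t_res.pvalue < 0.001
if not mask.any():
    raise RuntimeError("No voxel passed the threshold in the training data.")

# Apply the same mask to both sets to get one summary feature per subject.
roi_train = X_train[:, mask].mean(axis=1, keepdims=True)
roi_test = X_test[:, mask].mean(axis=1, keepdims=True)

# Train on training features, evaluate once on the locked test set.
clf = LogisticRegression().fit(roi_train, y_train)
test_accuracy = clf.score(roi_test, y_test)

print(f"{mask.sum()} voxels in mask, test accuracy = {test_accuracy:.2f}")
```

The test labels are used only in the final `score` call, so the mask cannot have been shaped by them.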

Q4: How should I handle preprocessing steps (like normalization) to avoid leakage?

A: Any preprocessing step that uses statistics (mean, variance, etc.) from the data must be fit on the training set only, then applied to the validation and test sets. Never fit preprocessing on the combined dataset.

- Table: Preprocessing Leakage Checklist

| Preprocessing Step | Leakage Risk | Safe Protocol |
|---|---|---|
| Scaling/Normalization | High | Fit StandardScaler on training data; transform train, val, and test sets. |
| Imputation (mean/median) | High | Calculate imputation values from training data; use them on all sets. |
| PCA/Dimensionality Reduction | Critical | Fit PCA on training data; project all datasets onto training-derived components. |
| Temporal Filtering | Low* | Filter parameters should be defined a priori or from separate data. |
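A short sketch of the fit-on-train, transform-everything pattern for scaling and PCA, assuming scikit-learn. The random arrays stand in for feature matrices, and the component count is arbitrary.

```python
import numpy as np
from sklearn.decomposition import PCA
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

rng = np.random.default_rng(0)
X_train = rng.normal(loc=5.0, scale=2.0, size=(60, 50))
X_test = rng.normal(loc=5.0, scale=2.0, size=(20, 50))

preproc = make_pipeline(StandardScaler(), PCA(n_components=10))

# fit_transform estimates means, scales, and components from training data only.
Z_train = preproc.fit_transform(X_train)
# transform reuses those training-derived parameters on the test data.
Z_test = preproc.transform(X_test)

scaler = preproc.named_steps["standardscaler"]
print("scaler means come from training data:",
      np.allclose(scaler.mean_, X_train.mean(axis=0)))
print("train shape:", Z_train.shape, "test shape:", Z_test.shape)
```

Calling `fit` or `fit_transform` on the test set, or on the concatenation of both sets, is the step that would introduce leakage.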

Diagram Title: Safe Preprocessing & Modeling Pipeline

Q5: What are the practical consequences of double-dipping in a drug development context?

A: It leads to inflated, unrealistic performance estimates for a neuroimaging biomarker. This can cause:

- False Positive Findings: Advancing a biomarker to costly clinical validation phases based on biased optimism.

- Failed Clinical Trials: The biomarker fails to generalize in independent, multi-site trials, wasting resources (millions of dollars) and time.

- Erosion of Trust: Undermines the credibility of neuroimaging methods in translational research.

The Scientist's Toolkit: Essential Reagents for Robust Analysis

| Item/Category | Function in Avoiding Double-Dipping |
|---|---|
| scikit-learn Pipeline | Encapsulates all preprocessing and modeling steps, ensuring transformers are fit only on training data during cross-validation. |
| GroupShuffleSplit or LeavePGroupsOut | Critical for creating independent train/test splits when data has related samples (e.g., multiple scans from same subject, family studies). |
| Nilearn Masker Objects | Enforce application of statistical masks derived from training data to new datasets, preventing ROI selection leakage. |
| DummyClassifier/Randomized Test | Provides a sanity check baseline to test for fundamental leakage, as described in FAQ #1. |
| Pre-registration Protocol | A written, time-stamped plan (e.g., on OSF) detailing the analysis pipeline, including exact feature selection and validation steps, before data analysis begins. |
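To illustrate the group-aware splitting listed in the toolkit, here is a small sketch using `GroupShuffleSplit` on synthetic data. The design of 20 subjects with 3 scans each is an assumption chosen only for the example.

```python
import numpy as np
from sklearn.model_selection import GroupShuffleSplit

n_subjects, scans_per_subject = 20, 3
groups = np.repeat(np.arange(n_subjects), scans_per_subject)  # subject ID per scan
rng = np.random.default_rng(0)
X = rng.normal(size=(groups.size, 50))                        # synthetic features
y = np.repeat(rng.integers(0, 2, size=n_subjects), scans_per_subject)

splitter = GroupShuffleSplit(n_splits=5, test_size=0.2, random_state=0)
overlaps = []
for train_idx, test_idx in splitter.split(X, y, groups=groups):
    # Count subjects that appear on both sides of the split (should be none).
    shared = set(groups[train_idx]) & set(groups[test_idx])
    overlaps.append(len(shared))

print("Subjects shared between train and test per split:", overlaps)
```

Passing the same `groups` array to every cross-validation call keeps all scans from one subject on the same side of each split.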

Diagram Title: Data Splitting Strategy for Generalization

Technical Support Center

FAQs & Troubleshooting Guides

Q1: I am getting overly optimistic performance estimates (e.g., 99% accuracy) on my neuroimaging classification task. What is the most likely cause and how do I fix it?

A: This is a classic symptom of data leakage, specifically "double-dipping," where feature selection or hyperparameter tuning has been performed on the entire dataset before cross-validation. To fix this, you must implement Nested Cross-Validation (NCV). The outer loop evaluates model performance, while the inner loop handles all data-dependent steps like feature selection and hyperparameter tuning strictly within each training fold of the outer loop. This ensures the test set in the outer loop is completely unseen during model development.

Q2: My nested cross-validation script is taking an extremely long time to run. Are there strategies to manage computational cost?

A: Yes. Consider these approaches:

- Dimensionality Reduction First: Apply a preliminary, label-independent filter (e.g., keeping the voxels with the highest variance) to reduce the feature space before NCV. This filter must never use class labels; an a priori mask or atlas avoids using the data at all.

- Efficient Inner Loop: Use a simpler/faster model or fewer hyperparameter combinations in the inner loop. Bayesian optimization can be more efficient than grid search.

- Parallelize: Run outer folds or inner loops in parallel if hardware allows.

- Reduce Folds: Use fewer folds in the inner loop (e.g., 5-fold) than in the outer loop (e.g., 10-fold), though this may increase bias.

Q3: How do I correctly report the final performance and model from a nested cross-validation procedure?

A: The primary output of NCV is an unbiased performance estimate, not a single final model for deployment. Report the mean and standard deviation of your chosen metric (e.g., accuracy, AUC) across the outer test folds. Because each outer fold may select different hyperparameters, there is no single "best" setting to copy from the NCV run. To obtain a final model for application to new data, re-run the inner-loop procedure (feature selection and hyperparameter tuning) once on the complete dataset and refit the pipeline with the selected settings. The NCV estimate then describes how well this whole model-building procedure is expected to generalize.

Q4: Can I use the same data for feature selection in a meta-analysis and then predictive modeling?

A: No. This constitutes double-dipping at the project level. If a dataset is used to identify a significant brain region (feature) in a group analysis, that same dataset cannot be used to test the predictive power of that specific region without independent validation. The solution is to use an independent cohort for the predictive modeling test. If only one dataset is available, you must split it independently for the discovery (feature selection) and validation (predictive modeling) phases, or use a hold-out test set that is never used in any feature selection step.

Key Experimental Protocols

Protocol 1: Standard Nested Cross-Validation for fMRI MVPA

1. Outer Loop Setup: Split the entire neuroimaging dataset (subjects or trials) into k non-overlapping folds (e.g., 10 folds). One fold is held out as the test set; the remaining k-1 folds form the training/validation set.

2. Inner Loop (on training/validation set):

   - Split the training/validation set into j folds (e.g., 5 folds).

   - For each hyperparameter combination and/or feature selection method:

     - Train the model on j-1 folds.

     - Validate on the held-out j-th fold.

   - Identify the best hyperparameters/feature set that maximizes average validation performance.

3. Outer Loop Evaluation:

   - Using the optimal pipeline from Step 2, train a model on the entire training/validation set.

   - Apply this model to the completely untouched outer test fold to obtain a performance score.

4. Iteration & Aggregation: Repeat steps 2-3 for each of the k outer folds. The final model performance is the average of the k outer test scores.
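The protocol above can be sketched with scikit-learn by passing a `GridSearchCV` object (the inner loop) to `cross_val_score` (the outer loop). The synthetic data, the parameter grid, and the reduced fold counts (5 outer, 3 inner, chosen to keep the example fast) are assumptions for illustration.

```python
import numpy as np
from sklearn.feature_selection import SelectKBest, f_classif
from sklearn.model_selection import GridSearchCV, StratifiedKFold, cross_val_score
from sklearn.pipeline import Pipeline
from sklearn.preprocessing import StandardScaler
from sklearn.svm import SVC

rng = np.random.default_rng(42)
X = rng.normal(size=(60, 500))   # synthetic stand-in for subjects x voxels
y = np.tile([0, 1], 30)
X[y == 1, :10] += 0.8            # weak signal in the first ten features

pipeline = Pipeline([
    ("scale", StandardScaler()),
    ("select", SelectKBest(f_classif)),
    ("clf", SVC(kernel="linear")),
])
param_grid = {"select__k": [10, 50], "clf__C": [0.1, 1.0]}

inner_cv = StratifiedKFold(n_splits=3, shuffle=True, random_state=0)  # j folds
outer_cv = StratifiedKFold(n_splits=5, shuffle=True, random_state=1)  # k folds

# Inner loop: tuning and feature selection, refit inside every outer training set.
tuned = GridSearchCV(pipeline, param_grid, cv=inner_cv, scoring="accuracy")

# Outer loop: each outer test fold is scored by a model that never saw it.
outer_scores = cross_val_score(tuned, X, y, cv=outer_cv, scoring="accuracy")
print(f"Nested CV accuracy: {outer_scores.mean():.3f} +/- {outer_scores.std():.3f}")
```

Because scaling and selection live inside the pipeline, they are refit on the training portion of every inner and outer split.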

Protocol 2: Preventing Double-Dipping in Seed-Based Feature Selection

- Independent Discovery Set: Use Dataset A (or a subset of your main data) to perform a whole-brain analysis (e.g., group-level t-test, connectivity analysis) to identify a significant cluster (the "seed" or ROI).

- ROI Definition: Extract the coordinates or mask of the cluster from Dataset A.

- Independent Validation Set: Apply this pre-defined ROI mask to Dataset B (a completely independent cohort or a held-out partition of the main data that was not used in Step 1).

- Feature Extraction & Modeling: Extract features (e.g., mean activation, connectivity maps) from this ROI in Dataset B.

- Nested CV: Perform nested cross-validation only on Dataset B using the extracted ROI features to train and test a predictive model. The outer loop provides the unbiased performance estimate for the ROI.
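A rough sketch of this discovery/validation separation on synthetic data follows. The voxel counts, the planted "cluster", and the uncorrected p < 0.001 threshold are illustrative assumptions. Because the single ROI feature leaves nothing to tune, plain cross-validation on Dataset B stands in for the nested scheme here.

```python
import numpy as np
from scipy import stats
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import cross_val_score, train_test_split

rng = np.random.default_rng(0)
n_subjects, n_voxels = 120, 1000
X = rng.normal(size=(n_subjects, n_voxels))   # synthetic voxel data
y = np.tile([0, 1], n_subjects // 2)
X[y == 1, :20] += 1.0                          # hypothetical true cluster

# Partition subjects into discovery (A) and validation (B) halves.
idx = np.arange(n_subjects)
idx_A, idx_B = train_test_split(idx, test_size=0.5, stratify=y, random_state=0)

# Discovery and ROI definition: group-level t-test on Dataset A only.
X_A, y_A = X[idx_A], y[idx_A]
t_vals, p_vals = stats.ttest_ind(X_A[y_A == 1], X_A[y_A == 0], axis=0)
roi_mask = p_vals < 0.001                      # illustrative, uncorrected threshold

# Validation: apply the frozen mask to Dataset B and extract the mean ROI signal.
roi_feature_B = X[idx_B][:, roi_mask].mean(axis=1, keepdims=True)

# Modeling: cross-validation restricted to Dataset B.
scores_B = cross_val_score(LogisticRegression(), roi_feature_B, y[idx_B], cv=5)
print(f"ROI voxels: {roi_mask.sum()}, accuracy on B: {scores_B.mean():.3f}")
```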

Table 1: Comparison of Cross-Validation Strategies and Risk of Double-Dipping

| Method | Feature Selection / Tuning Step | Test Set | Bias Risk | Recommended Use |

|---|---|---|---|---|

| Hold-Out | Done on training set | Single hold-out set | Low (if done correctly) | Very large datasets |

| Simple k-Fold CV | Done on the entire dataset before splitting | All data (through folds) | High (Optimistic bias) | Not recommended for small samples or with data-driven preprocessing |

| Train-Validation-Test Split | Done on training set | Single hold-out test set | Low | Large datasets, initial prototyping |

| Nested k x j-Fold CV | Done strictly within each training fold of outer loop | Outer test folds (never used in tuning) | Very Low (Gold standard) | Small-to-medium neuroimaging datasets, final performance reporting |

Table 2: Example Computation Time for Different CV Schemes (Simulated Data: 100 subjects, 10k features)

| Scheme | Outer Folds | Inner Folds | Approx. Computation Time | Relative Cost |

|---|---|---|---|---|

| Simple 10-Fold CV | 10 | N/A | 1x (Baseline) | 1 |

| Nested 5x5 CV | 5 | 5 | ~25x | 25 |

| Nested 10x5 CV | 10 | 5 | ~50x | 50 |

| Nested 10x10 CV | 10 | 10 | ~100x | 100 |

Visualization: Nested CV Workflow & Double-Dipping

Title: Nested Cross-Validation Workflow to Prevent Double-Dipping

Title: Correct vs. Incorrect Model Evaluation Paths

The Scientist's Toolkit: Research Reagent Solutions

| Item / Solution | Function in Neuroimaging NCV Research |

|---|---|

| Scikit-learn (sklearn) | Primary Python library for implementing GridSearchCV, cross_val_score, and custom estimators for seamless nested CV pipelines. |

| Nilearn | Provides tools for neuroimaging-specific data handling (masking, feature extraction from ROIs) that integrate with scikit-learn pipelines. |

| Hyperopt / Optuna | Frameworks for Bayesian hyperparameter optimization, reducing computational cost in the inner loop compared to exhaustive grid search. |

| Datalad / Git-annex | Version control system for data, essential for managing and reproducing precise dataset splits used in outer and inner loops. |

| Precomputed Brain Atlases | (e.g., AAL, Harvard-Oxford). Provide pre-defined, data-independent ROIs for feature extraction, eliminating the need for data-driven selection. |

| High-Performance Computing (HPC) Cluster | Enables parallelization of outer folds, making the computationally intensive NCV procedure feasible for large neuroimaging datasets. |

| Containerization (Docker/Singularity) | Ensures the entire analysis pipeline (software, libraries) is identical across all folds and reproducible by other researchers. |

Best Practices for Feature Selection Within the Training Loop Only

Troubleshooting Guides & FAQs

Q1: I am using cross-validation (CV). When I perform feature selection before the CV loop, my model performance is excellent on the test folds but fails on a completely held-out dataset. What is wrong?

A1: This is a classic case of "double-dipping" or data leakage. Performing feature selection on the entire dataset before CV allows information from the test folds to leak into the training process via the feature selection step. This optimistically biases performance estimates. The best practice is to perform feature selection within each training fold of the CV loop, using only the training data from that fold.
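The effect is easy to reproduce on pure noise, where honest accuracy should sit near chance. The sketch below compares selection before CV with selection inside a `Pipeline`. The sample size, feature count, and `k=20` are arbitrary assumptions.

```python
import numpy as np
from sklearn.feature_selection import SelectKBest, f_classif
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import StratifiedKFold, cross_val_score
from sklearn.pipeline import make_pipeline

rng = np.random.default_rng(0)
X = rng.normal(size=(50, 5000))   # pure noise: no feature carries real signal
y = np.tile([0, 1], 25)
cv = StratifiedKFold(n_splits=5, shuffle=True, random_state=0)

# Leaky: features are chosen using ALL labels, then cross-validated.
X_leaky = SelectKBest(f_classif, k=20).fit_transform(X, y)
leaky_acc = cross_val_score(
    LogisticRegression(max_iter=1000), X_leaky, y, cv=cv
).mean()

# Correct: the selector is part of the pipeline and is refit on each training fold.
clean_pipeline = make_pipeline(
    SelectKBest(f_classif, k=20), LogisticRegression(max_iter=1000)
)
clean_acc = cross_val_score(clean_pipeline, X, y, cv=cv).mean()

print(f"Selection before CV: {leaky_acc:.2f}")
print(f"Selection within CV: {clean_acc:.2f}")
```

On data like this, the leaky estimate typically lands far above chance while the within-fold estimate stays close to 50%, even though both analyses use identical data and models.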

Q2: In my neuroimaging analysis, I have a high-dimensional feature set (e.g., 10,000 voxels) and a small sample size (N=50). How can I perform robust feature selection within the training loop without overfitting?

A2: For small-sample, high-dimensional data, consider these nested approaches:

- Use a stable, regularized method like L1-penalized (Lasso) logistic/linear regression as your model. The regularization inherently performs feature selection during training.

- Implement a nested CV with an inner loop for hyperparameter (including feature selection parameter) tuning and an outer loop for performance estimation. Feature selection is refit on the training portion of every split, so the outer test fold never influences it.

- Employ stability selection within the training fold. Run your selection method (e.g., Lasso) multiple times on bootstrap samples of the training data and select features that are consistently chosen above a threshold.

Q3: My feature selection method (e.g., ANOVA F-value) requires group labels. How do I apply it correctly within a training fold for a classification task?

A3: The procedure must be strictly confined to the training partition of the fold.

- Split the fold data into `X_train` and `X_test`.

- Compute the selection criterion (e.g., F-values) using only `X_train` and the corresponding `y_train` labels.

- Identify the top-k features based on these scores.

- Transform both `X_train` and `X_test` by selecting only these k features.

- Train and validate your model on these transformed sets. Never use any information from `y_test` or `X_test` during the selection step.
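For a single fold, these steps might look like the following sketch. The synthetic data, the choice of `k = 10`, and the logistic-regression classifier are assumptions made for illustration.

```python
import numpy as np
from sklearn.feature_selection import f_classif
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import train_test_split

rng = np.random.default_rng(0)
X = rng.normal(size=(60, 400))    # synthetic fold data
y = np.tile([0, 1], 30)
X[y == 1, :5] += 1.0              # weak signal in the first five features

# Split the fold data.
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.2, stratify=y, random_state=0
)

# Compute F-values from the training partition only.
k = 10
f_values, _ = f_classif(X_train, y_train)

# Identify the top-k features by training-set score.
top_k = np.argsort(f_values)[::-1][:k]

# Apply the same column selection to both partitions.
X_train_sel, X_test_sel = X_train[:, top_k], X_test[:, top_k]

# Train on the selected training features and score on the held-out part.
model = LogisticRegression(max_iter=1000).fit(X_train_sel, y_train)
fold_accuracy = model.score(X_test_sel, y_test)
print(f"Fold accuracy: {fold_accuracy:.2f}")
```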

Q4: Are there specific Python/R functions that automatically prevent leakage during feature selection?

A4: Yes, but you must use them within a correct pipeline structure.

- Python (scikit-learn): Use the `Pipeline` object to chain a feature selector (like `SelectKBest`) with your model. Then pass this pipeline to `cross_val_score` or `GridSearchCV`. This ensures the selector is fit only on the training portion of each fold.

- R (caret): The `rfe()` (Recursive Feature Elimination) and `sbf()` (Selection By Filtering) functions run feature selection inside the resampling loop. With `train()`, pre-processing is re-estimated within each resample when it is requested through the `preProcess` argument rather than applied to the data beforehand.

Q5: How do I document my "within-training-loop" feature selection process for publication to ensure reproducibility and demonstrate I avoided double-dipping?

A5: Provide a clear, diagrammatic workflow and specify key details in your methods:

- State the type of validation (e.g., "We used a nested 5x5-fold cross-validation scheme").

- Explicitly state the scope of feature selection (e.g., "Feature selection was performed independently within each fold of the outer cross-validation loop, using only the training data for that fold").

- Report the selection algorithm and parameters (e.g., "The top 10% of features were selected based on ANOVA F-test computed on the training set").

- Include a workflow diagram (see below).

Experimental Protocols & Methodologies

Protocol 1: Nested Cross-Validation with Inner-Loop Feature Selection

Purpose: To obtain an unbiased performance estimate for a model that requires feature selection. Steps:

- Outer Loop: Split data into K folds.

- For each outer fold `i`:

  - a. Designate fold `i` as the outer test set. The remaining K-1 folds are the outer training set.

  - b. Inner Loop: Split the outer training set into L folds.

  - c. For each inner fold `j`:

    - i. Designate inner fold `j` as the validation set. The rest is the inner training set.

    - ii. Perform feature selection exclusively on the inner training set.

    - iii. Train the model on the selected features of the inner training set.

    - iv. Evaluate on the validation set (with features selected in step ii).

  - d. Tune the feature selection/model hyperparameters based on average inner-loop validation performance.

  - e. With the tuned parameters, perform feature selection on the entire outer training set.

  - f. Train the final model on the selected features of the outer training set.

  - g. Evaluate the final model on the held-out outer test set (fold `i`).

- Report the mean and standard deviation of performance across all K outer test folds.
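To make the lettered steps concrete, here is a hand-written version of the two loops, without `GridSearchCV`. It tunes only the number of selected features; the grid `k_grid`, the helper `fit_and_score`, the fold counts (K=5, L=3), and the synthetic data are assumptions for this sketch.

```python
import numpy as np
from sklearn.feature_selection import SelectKBest, f_classif
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import StratifiedKFold


def fit_and_score(X_tr, y_tr, X_te, y_te, k):
    """Select k features on the training part only, then train and score."""
    selector = SelectKBest(f_classif, k=k).fit(X_tr, y_tr)
    model = LogisticRegression(max_iter=1000).fit(selector.transform(X_tr), y_tr)
    return model.score(selector.transform(X_te), y_te)


rng = np.random.default_rng(0)
X = rng.normal(size=(60, 300))    # synthetic data
y = np.tile([0, 1], 30)
X[y == 1, :5] += 1.0
k_grid = [5, 20, 50]

outer = StratifiedKFold(n_splits=5, shuffle=True, random_state=0)   # K folds
inner = StratifiedKFold(n_splits=3, shuffle=True, random_state=1)   # L folds
outer_scores, chosen_k = [], []

for train_idx, test_idx in outer.split(X, y):            # step a
    X_out, y_out = X[train_idx], y[train_idx]
    mean_inner = []
    for k in k_grid:                                      # steps b and c
        scores = [
            fit_and_score(X_out[tr], y_out[tr], X_out[va], y_out[va], k)
            for tr, va in inner.split(X_out, y_out)
        ]
        mean_inner.append(np.mean(scores))
    best_k = k_grid[int(np.argmax(mean_inner))]           # step d
    chosen_k.append(best_k)
    # Steps e to g: reselect on the full outer training set, train, test once.
    outer_scores.append(
        fit_and_score(X_out, y_out, X[test_idx], y[test_idx], best_k)
    )

print(f"Accuracy: {np.mean(outer_scores):.3f} +/- {np.std(outer_scores):.3f}")
print("k chosen per outer fold:", chosen_k)
```

The chosen `k` can differ between outer folds, which is expected and is one reason the procedure yields a performance estimate rather than a single model.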

Protocol 2: Stability Selection within a Training Fold

Purpose: To identify robust features within a single training partition. Steps:

- From the training data, generate `B` bootstrap samples (e.g., B=100).

- For each bootstrap sample `b`:

  - a. Apply your feature selection method (e.g., Lasso with a fixed regularization lambda).

  - b. Record the set of selected features.

- Compute the selection probability for each original feature: (# of times selected) / B.

- Select features with a selection probability exceeding a pre-defined threshold (e.g., 0.8).

- Use this stable feature subset to train your final model on the original (non-bootstrapped) training data.
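A compact sketch of this bootstrap procedure is shown below. It uses scikit-learn's `Lasso` regression on 0/1 labels as a simple stand-in selector; that choice, along with `alpha=0.1` and the synthetic training data, is an assumption and not a recommendation for a specific model.

```python
import numpy as np
from sklearn.linear_model import Lasso
from sklearn.preprocessing import StandardScaler

rng = np.random.default_rng(0)
X_train = rng.normal(size=(80, 200))         # synthetic training partition
y_train = np.tile([0.0, 1.0], 40)
X_train[y_train == 1, :5] += 1.5             # five informative features
X_train = StandardScaler().fit_transform(X_train)

B, threshold = 100, 0.8
counts = np.zeros(X_train.shape[1])

for _ in range(B):
    # Draw a bootstrap sample (with replacement) from the training data only.
    boot = rng.integers(0, len(y_train), size=len(y_train))
    lasso = Lasso(alpha=0.1).fit(X_train[boot], y_train[boot])
    # Record which features received a non-zero coefficient.
    counts += lasso.coef_ != 0

selection_prob = counts / B                   # (# of times selected) / B
stable_features = np.flatnonzero(selection_prob >= threshold)
print("Stable features:", stable_features)
```

The final model would then be trained on `X_train[:, stable_features]`, still without touching any test data.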

Data Presentation

Table 1: Comparison of Feature Selection Strategies on a Simulated Neuroimaging Dataset (N=100, Features=5000)

| Strategy | Mean CV Accuracy (%) | Accuracy on True Hold-Out Set (%) | Estimated Bias |

|---|---|---|---|

| No Feature Selection | 62.1 ± 3.5 | 61.5 | Low |

| Selection BEFORE CV Loop (Leaky) | 85.3 ± 2.1 | 63.8 | High (+21.5) |

| Selection WITHIN CV Loop (Correct) | 70.5 ± 4.0 | 69.9 | Low |

| Nested CV with Stability Selection | 69.8 ± 4.2 | 69.2 | Low |

Table 2: Key Reagent Solutions for Reproducible Feature Selection Research

| Reagent / Tool | Function / Purpose | Example (Python) |

|---|---|---|

| Pipeline Object | Chains preprocessing, feature selection, and modeling steps to prevent data leakage during resampling. | sklearn.pipeline.Pipeline |

| Cross-Validation Wrapper | Automatically manages data splitting and ensures transformations are refit on each training fold. | sklearn.model_selection.cross_val_score, GridSearchCV |

| Feature Selector Modules | Implements various selection strategies (filter, wrapper, embedded) for use within pipelines. | sklearn.feature_selection (e.g., SelectKBest, RFE) |

| Regularized Estimators | Performs built-in (embedded) feature selection as part of the model training process. | sklearn.linear_model.Lasso, sklearn.svm.LinearSVC (with penalty="l1", dual=False) |

| Stability Selection Libs | Provides tools for computing selection stability across resamples. | stability-selection (3rd party) |

Visualizations

Title: Nested CV Workflow Preventing Feature Selection Leakage

Title: Incorrect Feature Selection Causing Data Leakage

Technical Support Center: Troubleshooting & FAQs

Q1: I've implemented nested cross-validation (CV), but my biomarker's performance still drops drastically on a completely independent test set. What could be the cause? A: Nested CV only protects against overfitting within the CV loop. A common cause is feature pre-selection leakage. If you performed any form of global feature filtering (e.g., based on whole-dataset variance or univariate correlation with the target) before splitting data into training and test sets, you have committed double-dipping. The independent test set is no longer independent because information from it influenced which features were selected. The solution is to move all feature selection steps inside the inner loop of your nested CV pipeline, ensuring they only see the training fold data at each iteration.

Q2: My clean pipeline seems correct, but results are highly unstable across different random seeds for data splitting. How can I improve reliability? A: This is often a symptom of high-dimensional, low-sample-size data with noisy features. Your clean pipeline is likely correct, but the underlying signal may be weak.

- Action 1: Increase the number of outer CV repeats (e.g., 100 repeats of 10-fold CV) to obtain a more robust performance distribution.

- Action 2: Perform a power analysis or stability assessment on the selected features themselves. Use metrics like the Dice coefficient across splits to see if the same features are consistently chosen.

- Action 3: Consider if more stringent regularization within your model (inside the inner loop) is needed.
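One way to carry out the stability check in Action 2 is to compare the feature sets chosen in each training fold with a pairwise Dice coefficient. The helper `dice`, the ANOVA selector with `k=20`, and the synthetic data are assumptions in this sketch.

```python
from itertools import combinations

import numpy as np
from sklearn.feature_selection import SelectKBest, f_classif
from sklearn.model_selection import StratifiedKFold


def dice(a, b):
    """Dice coefficient between two collections of feature indices."""
    a, b = set(a), set(b)
    return 2 * len(a & b) / (len(a) + len(b))


rng = np.random.default_rng(0)
X = rng.normal(size=(60, 500))    # synthetic data
y = np.tile([0, 1], 30)
X[y == 1, :10] += 0.8

cv = StratifiedKFold(n_splits=5, shuffle=True, random_state=0)
selected = []
for train_idx, _ in cv.split(X, y):
    # Selection is repeated on each training fold, as in a clean pipeline.
    selector = SelectKBest(f_classif, k=20).fit(X[train_idx], y[train_idx])
    selected.append(np.flatnonzero(selector.get_support()))

pairwise_dice = [dice(a, b) for a, b in combinations(selected, 2)]
mean_dice = float(np.mean(pairwise_dice))
print(f"Mean pairwise Dice across folds: {mean_dice:.2f}")
```

Values near 1 indicate that nearly the same features are chosen in every fold; values near 0 suggest the selection is driven mostly by noise.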

Q3: What is the concrete difference between "filter" and "wrapper" methods in the context of a clean pipeline? A: The key difference is whether a predictive model is used to score the features. Both kinds of method typically use the target variable, so both must be confined to training data.

- Filter Methods (e.g., ANOVA F-test, mutual information): Rank features based on univariate statistical scores computed only on the training data, without fitting the predictive model. The selection threshold (e.g., top k features) is a hyperparameter that must be optimized within the inner CV loop.

- Wrapper Methods (e.g., recursive feature elimination - RFE): Use a fitted model (e.g., its performance or SVM weights) to iteratively select features. The core model is trained and evaluated only on the training data during the selection process. The number of features to select is a hyperparameter for the inner loop.

Table 1: Comparison of Feature Selection Strategies in Clean Pipelines

| Strategy | Mechanism | Risk of Double-Dipping | Where it Belongs in Pipeline |

|---|---|---|---|

| Univariate Filter (Global Threshold) | Select top k features based on whole-dataset stats. | Extremely High | FORBIDDEN - Uses test set info. |

| Univariate Filter (CV-Optimized) | Select top k features, where 'k' is optimized via inner CV. | Low (if implemented correctly) | Inside the inner CV loop. |

| Wrapper Method (e.g., RFE) | Iteratively remove features based on model coefficients. | Low (if implemented correctly) | Inside the inner CV loop. |

| Embedded Method (e.g., L1 Regularization) | Feature selection is intrinsic to the model training (e.g., Lasso). | Low | Model training in the inner CV loop. |

Experimental Protocol: Implementing a Clean sMRI Biomarker Pipeline

This protocol details a clean pipeline for identifying structural MRI (voxel-based morphometry) biomarkers for disease classification.

1. Data Partitioning:

- Split the entire dataset into a Locked-Holdout Test Set (20-30%) and a Training/Validation Set (70-80%). The holdout set is set aside and never touched until the final, single evaluation.

2. Nested Cross-Validation on Training/Validation Set:

- Outer Loop (Performance Estimation): Perform 10-fold CV. For each of the 10 folds:

  - Inner Loop (Hyperparameter & Feature Selection Optimization): On the 90% training fold, perform another 5-fold CV to optimize:

    - Hyperparameters (e.g., SVM `C`, Lasso `alpha`).

    - Feature selection parameter `k` (number of features). The feature selection (e.g., ANOVA F-test) is re-computed on the training portion of each inner split for every candidate configuration.

  - Refit: Train a final model on the entire 90% training fold using the best inner-loop parameters. Apply the fitted feature selector and model to the 10% outer validation fold to get a prediction.

- Result: An unbiased performance estimate (e.g., mean accuracy) from the outer loop validation folds.

3. Final Model Training & Holdout Test:

- Train a single model on the entire Training/Validation Set. Because each outer fold may select different hyperparameters, fix the final values by re-running the inner-loop tuning procedure once on this full set.

- Apply this final model once to the Locked-Holdout Test Set for the final performance report.

- The features selected in this final model can be reported as the biomarker.
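The three stages of this protocol could be wired together roughly as follows. The synthetic data, the 25% holdout fraction, the parameter grid, and the reduced fold counts (5 outer, 3 inner) are assumptions chosen so the sketch runs quickly.

```python
import numpy as np
from sklearn.feature_selection import SelectKBest, f_classif
from sklearn.model_selection import (
    GridSearchCV, StratifiedKFold, cross_val_score, train_test_split
)
from sklearn.pipeline import Pipeline
from sklearn.preprocessing import StandardScaler
from sklearn.svm import SVC

rng = np.random.default_rng(0)
X = rng.normal(size=(100, 400))   # synthetic stand-in for VBM features
y = np.tile([0, 1], 50)
X[y == 1, :10] += 0.8

# 1. Data partitioning: lock the holdout set away before anything else.
X_dev, X_hold, y_dev, y_hold = train_test_split(
    X, y, test_size=0.25, stratify=y, random_state=0
)

pipeline = Pipeline([
    ("scale", StandardScaler()),
    ("select", SelectKBest(f_classif)),
    ("clf", SVC(kernel="linear")),
])
grid = {"select__k": [10, 50], "clf__C": [0.01, 0.1]}
inner = StratifiedKFold(n_splits=3, shuffle=True, random_state=0)
outer = StratifiedKFold(n_splits=5, shuffle=True, random_state=1)
search = GridSearchCV(pipeline, grid, cv=inner, scoring="accuracy")

# 2. Nested CV on the Training/Validation Set only.
nested_scores = cross_val_score(search, X_dev, y_dev, cv=outer, scoring="accuracy")

# 3. Final tuning and fit on the full Training/Validation Set, then one holdout test.
search.fit(X_dev, y_dev)
holdout_accuracy = search.score(X_hold, y_hold)
biomarker_idx = np.flatnonzero(
    search.best_estimator_.named_steps["select"].get_support()
)

print(f"Nested CV: {nested_scores.mean():.3f}, holdout: {holdout_accuracy:.3f}")
print(f"Features in final model: {biomarker_idx.size}")
```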

Diagram: Clean Pipeline for sMRI/fMRI Biomarker ID

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Tools for Clean Pipeline Implementation

| Tool/Reagent | Category | Function in Clean Pipeline |

|---|---|---|

| Scikit-learn (Pipeline, GridSearchCV) | Software Library | Provides the fundamental framework for creating sequential pipelines and embedding nested cross-validation, preventing data leakage. |

| Nilearn | Neuroimaging Library | Offers wrappers to integrate neuroimaging feature extraction (e.g., brain atlas maps) directly into scikit-learn pipelines. |

| NiBabel | Neuroimaging Library | Handles reading/writing of neuroimaging data (NIfTI files) to interface with Python-based machine learning workflows. |

| Custom Permutation Test Script | Statistical Tool | Validates the significance of the final model's performance against a null distribution, guarding against over-optimism. |

| Stability Selection Algorithm | Feature Selection Method | Combines results of feature selection across many subsamples to identify robust, stable biomarkers, complementing CV. |

| Docker/Singularity Container | Computational Environment | Ensures pipeline reproducibility by encapsulating the exact software environment, including library versions. |

Diagnosing and Fixing Double-Dipping in Existing Analysis Pipelines

Technical Support Center: Troubleshooting Circularity in Neuroimaging Feature Selection

FAQs & Troubleshooting Guides

Q1: My model's cross-validated accuracy is implausibly high (>95%). Is this a red flag for circularity? A: Yes. Implausibly high performance is a primary red flag. This often indicates feature selection or model tuning was performed on the entire dataset before cross-validation, leaking information across folds. To troubleshoot:

- Audit Step: Isolate your test/hold-out set before any feature selection or parameter optimization.

- Protocol: Implement a nested cross-validation, where an outer loop handles data splitting and an inner loop performs feature selection/model tuning exclusively on the outer loop's training fold.

- Check: Ensure no steps (e.g., normalization, smoothing) use information from the test set.

Q2: I used a brain-wide search (e.g., whole-brain voxel analysis). How can I tell if my feature selection is independent? A: Independence is critical. A common circular error is using the same dataset to both select a region of interest (ROI) and to test the hypothesis about that ROI.

- Troubleshooting Checklist:

- Data Splitting: Was the ROI defined on a completely independent dataset or a held-back subset?

- Temporal Independence: If using longitudinal data, was the ROI defined on timepoint A and tested on timepoint B?

- Literature Basis: Is the ROI defined a priori from a published meta-analysis or atlas not derived from the current dataset?

Q3: How do I properly perform feature selection within cross-validation for a neuroimaging pipeline? A: Follow this strict nested protocol to avoid double-dipping:

Experimental Protocol: Nested Cross-Validation for fMRI Feature Selection

- Outer Loop (k-fold, e.g., 5-fold): Split full dataset into 5 folds. Iterate:

- Hold out Fold i as the test set. Use Folds ~i as the design set.

- Inner Loop (on the Design Set):

- Split the design set into another k folds (e.g., 4-fold).

- On each inner training fold, perform feature selection (e.g., voxel-wise ANOVA, SVM-RFE).

- Train a model on the selected features from that inner training fold.

- Validate it on the corresponding inner validation fold.

- Repeat across all inner folds to identify the optimal feature number or selection threshold that generalizes best within the design set.

- Apply to Outer Loop:

- Using the optimal parameters determined from the entire inner loop, perform feature selection anew on the entire design set.

- Train a final model on the design set with these features.

- Apply this trained model to the held-out Outer Loop Test Fold (i) to get a single, unbiased performance estimate.

- Repeat: Iterate so each fold serves as the test set once. The final performance is the average across all outer test folds.

Q4: What are the key statistical red flags in my results table? A: Review your results against this quantitative checklist:

Table 1: Quantitative Red Flags in Results Reporting

| Metric | Green Flag (Likely Valid) | Red Flag (Possible Circularity) |

|---|---|---|

| Classification Accuracy | In line with literature (~70-85% for many clinical fMRI tasks). | Implausibly high (>95% or at ceiling). |

| p-value / Effect Size | Significant in final test, but modest. Effect size reasonable. | Extremely small p-values (e.g., <1e-10) with huge effect sizes on final test. |

| Feature Stability | Selected features vary somewhat across CV folds but cluster in plausible regions. | Identical features selected across all CV folds (may indicate pre-selection on full data). |

| Train-Test Performance Gap | Small, reasonable gap (e.g., train: 88%, test: 82%). | Near-zero gap (train: 98%, test: 97%). |

Q5: What is a "tripartite" data split and when should I use it? A: It's a gold-standard protocol for complex analyses with exploratory steps.

Experimental Protocol: Tripartite Data Split

- Split your full dataset randomly into three independent sets:

- Discovery Set (e.g., 50%): Used for exploratory analysis, feature selection, and hypothesis generation.

- Validation Set (e.g., 25%): Used to tune and refine the model/analysis pipeline based on the discovery set results.

- Test Set (e.g., 25%): Used ONCE for the final, unbiased evaluation of the model/analysis. No further changes allowed.

- Key Rule: The test set is locked and must not influence the discovery or validation phases in any way.
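A subject-level tripartite split can be produced with two stratified calls to `train_test_split`, as in this sketch. The subject IDs, the balanced labels, and the 50/25/25 proportions are hypothetical placeholders.

```python
import numpy as np
from sklearn.model_selection import train_test_split

# Hypothetical subject identifiers and diagnostic labels.
subject_ids = np.array([f"sub-{i:03d}" for i in range(1, 101)])
labels = np.tile([0, 1], 50)

# First cut: 50% discovery, 50% remainder.
disc_ids, rest_ids, disc_y, rest_y = train_test_split(
    subject_ids, labels, test_size=0.5, stratify=labels, random_state=0
)

# Second cut: split the remainder evenly into validation and test.
val_ids, test_ids, val_y, test_y = train_test_split(
    rest_ids, rest_y, test_size=0.5, stratify=rest_y, random_state=0
)

splits = {"discovery": disc_ids, "validation": val_ids, "test": test_ids}
for name, ids in splits.items():
    print(f"{name}: {len(ids)} subjects")
```

Saving the three ID lists to disk before any analysis starts, together with the random seed, gives an auditable record that the test set was fixed in advance.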


The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Tools for Avoiding Circularity

| Item / Solution | Function / Purpose in Preventing Double-Dipping |

|---|---|

| Nilearn (nilearn.decoding)* | Provides scikit-learn compatible estimators for neuroimaging data (e.g., the Decoder object) that run feature screening and hyperparameter selection inside cross-validation. |

| Scikit-learn (sklearn.model_selection) | Critical for implementing GridSearchCV within a cross_val_score or StratifiedKFold loop to create nested designs. |

| PubMRI (Simulated Datasets) | Provides fully disclosed, ground-truth benchmark datasets (e.g., brainomics) to validate your pipeline and test for inadvertent circularity. |

| CoSMoMVPA | MVPA toolbox that emphasizes partition-based analysis where independent data partitions are required for feature selection and classification. |

| Pre-registration Template (e.g., OSF) | A protocol for pre-specifying hypotheses, ROIs, and analysis pipelines before data collection/analysis to prevent hindsight bias and data dredging. |

| Anatomical Atlases (e.g., AAL, Harvard-Oxford) | Pre-defined, anatomically labeled atlases allow for a priori ROI selection without using your experimental data, eliminating one source of circularity. |

| Custom Scripts for Tripartite Splitting | Scripts that randomly assign subjects to Discovery/Validation/Test sets and lock the Test set (e.g., by moving files to a secure directory) before any analysis begins. |

Note: Libraries and tools should be used with strict awareness of data leakage pitfalls in their default examples.

Technical Support Center: Troubleshooting Double-Dipping in Neuroimaging Feature Selection

FAQ & Troubleshooting Guides

Q1: I trained a classifier on my whole dataset and got 95% accuracy. Why is my reviewer saying the result is invalid due to "double-dipping"? A: This is a classic case of feature selection bias. Using the entire dataset for both feature selection and classifier training optimistically biases performance estimates. The classifier has effectively "seen" the test data during training. You must re-analyze using proper partitioning.

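Q2: What is the minimum safe partitioning strategy to avoid circular analysis? A: At minimum, hold out a test set and confine all feature selection and tuning to the training data. The most robust method for typical sample sizes is a Nested Cross-Validation with an explicit inner loop for feature selection. See the experimental protocol below.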

Q3: My sample size is small (n<50). Are permutation tests still valid for correcting p-values? A: Yes, permutation testing is particularly valuable for small samples as it makes no parametric assumptions. However, ensure the permutation scheme respects your null hypothesis (e.g., permute labels within site for multi-site data). A minimum of 5000 permutations is recommended for reliable p-values.

Q4: I used a 'train-test split' (80/20). Is this sufficient to avoid double-dipping? A: Only if the feature selection was performed exclusively on the 80% training set. If feature selection used any information from the test set (even indirectly, e.g., during whole-brain normalization), the analysis is circular. Re-analysis with a held-out dataset or simulation is required.

Q5: How do I implement a permutation test for a cross-validated classification accuracy? A: The key is to repeat the entire CV procedure, including feature selection, for each permutation of the labels. This accounts for the feature selection step within the null distribution. See the workflow diagram and protocol.

Experimental Protocols

Protocol 1: Nested Cross-Validation for Unbiased Estimation

Purpose: To obtain an unbiased estimate of classifier performance when feature selection is required.

1. Outer Loop (Performance Estimation): Split data into K folds (e.g., K=5 or 10).

2. Hold Out: For fold i, set it aside as the test set. Use the remaining K-1 folds as the training pool.

3. Inner Loop (Feature Selection & Model Tuning): Perform a second, independent cross-validation only on the training pool to:

   - Select optimal parameters (e.g., regularization constant).

   - Perform feature selection (e.g., identify top N voxels based on t-statistic). Crucially, this selection uses only the inner loop's training folds.

4. Train Final Model: Using the optimal parameters identified in Step 3, re-run feature selection on the entire training pool and train a final classifier on the selected features.

5. Test: Apply the final classifier to the held-out outer test set (i) to get a performance score (e.g., accuracy).

6. Repeat: Iterate Steps 2-5 for all K outer folds.

7. Report: The mean performance across all K outer test folds is the unbiased estimate.

Protocol 2: Permutation Testing for Statistical Significance

Purpose: To compute a non-parametric p-value for an observed classification accuracy.

1. Calculate True Statistic: Run the Nested CV protocol (Protocol 1) on the correctly labeled data. Record the true performance metric (e.g., mean accuracy = A_true).

2. Null Distribution Generation:

   - Randomly permute the class labels of the entire dataset, breaking the relationship between data and outcome.

   - Run the entire Nested CV protocol (Protocol 1) on this permuted dataset. Record the resulting null performance metric (A_perm_i).

   - Critical: The feature selection in each permutation must be re-run from scratch, mimicking the procedure on the true data.

3. Repeat: Perform Step 2 a large number of times (N >= 5000).

4. Calculate p-value: p = (number of permutations where A_perm_i >= A_true, plus 1) / (N + 1).
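scikit-learn's `permutation_test_score` follows the same logic and can serve as a starting point. In the sketch below, the estimator is a pipeline with a fixed `k` rather than a full nested search, and only 100 permutations are run to keep it fast; both are simplifying assumptions, as is the synthetic data.

```python
import numpy as np
from sklearn.feature_selection import SelectKBest, f_classif
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import StratifiedKFold, permutation_test_score
from sklearn.pipeline import make_pipeline

rng = np.random.default_rng(0)
X = rng.normal(size=(60, 300))    # synthetic data
y = np.tile([0, 1], 30)
X[y == 1, :5] += 1.0

# Selection sits inside the pipeline, so it is re-run for every permutation.
pipeline = make_pipeline(
    SelectKBest(f_classif, k=20), LogisticRegression(max_iter=1000)
)
cv = StratifiedKFold(n_splits=5, shuffle=True, random_state=0)

score, perm_scores, p_value = permutation_test_score(
    pipeline, X, y, cv=cv, n_permutations=100, scoring="accuracy", random_state=0
)

# The same p-value computed by hand from the null distribution.
manual_p = (np.sum(perm_scores >= score) + 1) / (len(perm_scores) + 1)
print(f"Accuracy: {score:.3f}, p = {p_value:.4f}")
```

To permute around the full nested procedure, a `GridSearchCV` object wrapping the pipeline can be passed as the estimator instead, at a correspondingly higher computational cost.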

Data Presentation

Table 1: Comparison of Corrective Strategies for Circular Analysis

| Strategy | Core Principle | Key Advantage | Key Limitation | Recommended Use Case |

|---|---|---|---|---|

| Independent Test Set | Hold out a completely untouched dataset for final testing. | Conceptually simple, gold standard if data is plentiful. | Reduces sample size for discovery; risky if data is heterogeneous. | Large datasets (n > 200). |

| Nested Cross-Validation | Uses an inner CV loop for feature selection/ tuning within the training folds of an outer CV loop. | Provides nearly unbiased performance estimate with efficient data use. | Computationally intensive; must be implemented carefully. | Standard for most MRI studies (n ~ 50-150). |

| Permutation Testing | Compares observed result to a null distribution generated by randomly shuffling labels. | Non-parametric; directly tests the null hypothesis of no structure. | Does not remove bias from the performance estimate; it only assesses significance, and the full pipeline must be re-run for every permutation. | Essential final step to attach a p-value to any CV result. |

Table 2: Impact of Double-Dipping on Reported Classification Accuracy (Simulation Data)

| Analysis Method | True Effect Size (AUC) | Mean Reported AUC (SD) | Inflation (Bias) |

|---|---|---|---|

| Grossly Circular (Feature selection on full set, test on same) | 0.70 | 0.95 (0.02) | +0.25 (Severe) |

| Leaky Circular (Feature selection on train set, but normalization on full set) | 0.70 | 0.78 (0.05) | +0.08 (Moderate) |

| Nested CV (Proper partitioning) | 0.70 | 0.71 (0.07) | +0.01 (Minimal) |

Visualizations

Diagram Title: Nested Cross-Validation Workflow to Prevent Double-Dipping

Diagram Title: Permutation Testing for CV Classification Significance

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Correcting Circular Analysis |

|---|---|

| Nilearn (built on scikit-learn) | Python library providing high-level functions for implementing nested CV and permutation tests on brain maps, ensuring correct data partitioning. |

| Scikit-learn Pipeline & GridSearchCV | Essential for encapsulating feature selection and classifier steps, allowing them to be safely fitted within inner CV loops without data leakage. |

| permutation_test_score (scikit-learn, sklearn.model_selection) | A function that performs permutation tests for cross-validation scores, automating the null distribution generation process. |

| Custom Seed/Random State | Using a fixed random seed ensures the reproducibility of data splits and permutation orders, a critical component for replicable results. |

| High-Performance Computing (HPC) Cluster | Nested CV with permutation testing (5000+ iterations) is computationally prohibitive on a desktop; HPC resources are often necessary. |

Technical Support Center

Troubleshooting Guides & FAQs

Q1: I received a high accuracy score on my test set during cross-validation, but my model fails on a completely independent dataset. Could this be double-dipping?

A: Yes, this is a classic symptom of feature selection leakage (double-dipping). If feature selection is performed before cross-validation on the entire dataset, information from the 'test' folds contaminates the 'training' folds. The model appears to perform well due to this leakage but lacks generalizability. To fix this, nest the feature selection inside the cross-validation loop, for example by wrapping the selector and the classifier in a scikit-learn Pipeline so that selection is refit on each training fold.
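The contrast between the leaky and the corrected workflow can be sketched with scikit-learn as follows. This is a hedged illustration on synthetic pure-noise data (the data, the sample sizes, and k=20 are assumptions chosen for the demo, not values from any study), so an honest estimate should sit near chance.

```python
import numpy as np
from sklearn.feature_selection import SelectKBest, f_classif
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import StratifiedKFold, cross_val_score
from sklearn.pipeline import make_pipeline

# Pure-noise data: the labels carry no signal, so true accuracy is 0.5.
rng = np.random.default_rng(0)
X = rng.standard_normal((60, 2000))
y = np.repeat([0, 1], 30)
cv = StratifiedKFold(n_splits=5, shuffle=True, random_state=0)

# Leaky: features are chosen using ALL subjects, including future test folds.
X_leaky = SelectKBest(f_classif, k=20).fit_transform(X, y)
leaky_acc = cross_val_score(
    LogisticRegression(max_iter=1000), X_leaky, y, cv=cv
).mean()

# Correct: selection sits inside the pipeline and is refit on each training fold.
pipe = make_pipeline(SelectKBest(f_classif, k=20), LogisticRegression(max_iter=1000))
clean_acc = cross_val_score(pipe, X, y, cv=cv).mean()

print(f"leaky: {leaky_acc:.2f}  selection inside CV: {clean_acc:.2f}")
```

On noise like this, the leaky estimate typically lands well above chance while the pipeline estimate stays close to 0.5; exact values depend on the seed and library version.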

Q2: What is the concrete difference between nested and non-nested cross-validation in the context of feature selection, and which one should I report?

A: The key difference is what stage of the workflow is evaluated. Use this table to decide:

| Aspect | Non-Nested CV (Flawed) | Nested CV (Correct) |

|---|---|---|

| Feature Selection Step | Performed once on the entire dataset before CV. | Performed independently within each training fold of the outer CV loop. |

| Information Leakage | High. Test fold data influences which features are selected. | None. The outer test fold is never used for selection. |

| Performance Estimate | Optimistically biased. Measures how well features fit the specific dataset, not the underlying population. | Approximately unbiased (if anything, slightly pessimistic). Estimates how the entire modeling process (including feature selection) generalizes. |

| Final Model | Trained on all data using the features selected from all data. | Trained on all data; the optimal feature count (hyperparameter) is chosen based on inner CV results. |

| What to Report | Do not report this score as generalizable performance. | Report the mean score of the outer CV loop as your model's estimated generalization performance. |

You must report the performance from the outer loop of a nested CV design.
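A compact way to obtain that outer-loop score in scikit-learn is to pass a GridSearchCV object (the inner loop) to cross_val_score (the outer loop). The sketch below assumes synthetic data from make_classification and an arbitrary small parameter grid; the step names `scale`, `select`, and `clf` are illustrative choices.

```python
from sklearn.datasets import make_classification
from sklearn.feature_selection import SelectKBest, f_classif
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import GridSearchCV, StratifiedKFold, cross_val_score
from sklearn.pipeline import Pipeline
from sklearn.preprocessing import StandardScaler

# Synthetic stand-in for a subjects-by-features matrix (assumption).
X, y = make_classification(
    n_samples=100, n_features=200, n_informative=10, random_state=0
)

pipe = Pipeline([
    ("scale", StandardScaler()),
    ("select", SelectKBest(f_classif)),
    ("clf", LogisticRegression(max_iter=1000)),
])
inner_cv = StratifiedKFold(n_splits=3, shuffle=True, random_state=1)
outer_cv = StratifiedKFold(n_splits=5, shuffle=True, random_state=2)

# Inner loop: tunes the number of features and C on each outer training set.
search = GridSearchCV(
    pipe, {"select__k": [10, 50], "clf__C": [0.1, 1.0]}, cv=inner_cv
)

# Outer loop: every outer test fold is scored by a model that never saw it.
outer_scores = cross_val_score(search, X, y, cv=outer_cv)
print(f"nested CV accuracy: {outer_scores.mean():.3f} +/- {outer_scores.std():.3f}")
```

The value to report is the mean of `outer_scores`; the best score stored on the inner search object is an optimistic, tuning-time quantity and should not be quoted as generalization performance.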

Q3: Which Python libraries have built-in safeguards to prevent feature selection leakage?

A: Several modern libraries enforce or encourage correct practices by design. The key is to use a Pipeline object.

| Library / Module | Key Tool / Class | How It Prevents Leakage | Code Snippet Example |

|---|---|---|---|

| scikit-learn | Pipeline | Guarantees that fit_transform is only called on training folds during CV. | make_pipeline(StandardScaler(), SelectKBest(), LogisticRegression()) |

| scikit-learn | GridSearchCV / RandomizedSearchCV | When used with a Pipeline, it correctly nests hyperparameter tuning (like k in SelectKBest) within CV. | GridSearchCV(pipeline, {'selectkbest__k': [10, 50]}, cv=5) |

| imblearn | Pipeline (from imblearn) | Extension of sklearn's pipeline that safely handles resampling (e.g., SMOTE) without leakage. Essential if your workflow includes balancing. | from imblearn.pipeline import make_pipeline |

| NeuroLearn / nilearn | SearchLight, Decoder | These high-level neuroimaging tools often implement nested CV internally for mass-univariate or multivariate analysis. | decoder = Decoder(cv=5, screening_percentile=10) # Screening is nested |

Q4: My neuroimaging dataset has high dimensionality (e.g., 100k voxels) and a small sample size (N=50). How can I perform feature selection safely?

A: In high-dimensional, small-sample settings, stability becomes a major issue. A safe protocol involves variance thresholding, univariate screening within CV, and stability analysis.
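One possible shape for such a protocol is sketched below. It assumes synthetic data scaled down to 5,000 "voxels" so that it runs quickly, a hypothetical signal in the first 25 voxels, and an arbitrary 1% screening percentile; none of these values come from a real study. Every step lives inside the pipeline, so all of them are refit on each training fold.

```python
import numpy as np
from sklearn.feature_selection import SelectPercentile, VarianceThreshold, f_classif
from sklearn.model_selection import StratifiedKFold, cross_val_score
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler
from sklearn.svm import LinearSVC

rng = np.random.default_rng(42)
n_subjects, n_voxels = 50, 5000  # scaled down from ~100k voxels for speed
X = rng.standard_normal((n_subjects, n_voxels))
y = np.repeat([0, 1], n_subjects // 2)
X[y == 1, :25] += 1.0   # hypothetical group difference in 25 voxels
X[:, -100:] = 0.0       # constant voxels, e.g. outside the brain mask

pipe = make_pipeline(
    VarianceThreshold(threshold=0.0),           # drop zero-variance voxels
    StandardScaler(),                           # scale using training folds only
    SelectPercentile(f_classif, percentile=1),  # univariate screening inside CV
    LinearSVC(C=1.0, max_iter=10000),
)
cv = StratifiedKFold(n_splits=5, shuffle=True, random_state=0)
scores = cross_val_score(pipe, X, y, cv=cv)
print(f"accuracy: {scores.mean():.2f}")
```

Variance thresholding does not use the labels, but keeping it inside the pipeline costs little and removes any doubt about what the test folds could have influenced.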

Q5: How do I quantify and report the stability of my selected features to add credibility to my study?

A: Stability measures the reproducibility of the selected feature set across data subsamples. Use the following protocol:

- Subsampling: Repeatedly (e.g., 100 times) draw a random subsample (e.g., 80%) of your subjects.

- Selection: Run your entire, nested feature selection and modeling pipeline on each subsample.

- Calculation: Compute the pairwise Jaccard index or overlap coefficient between the selected feature sets from each run.

- Report: Present the average stability score and the most frequently selected features.
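The calculation and reporting steps can be sketched in plain Python. The helper names (`jaccard`, `mean_pairwise_jaccard`) and the three example feature sets are hypothetical; in practice each set would hold the feature indices selected on one subsample.

```python
from collections import Counter
from itertools import combinations


def jaccard(a, b):
    """Jaccard index |A intersect B| / |A union B| of two feature sets."""
    a, b = set(a), set(b)
    union = a | b
    return len(a & b) / len(union) if union else 1.0


def mean_pairwise_jaccard(feature_sets):
    """Average Jaccard index over all pairs of runs."""
    pairs = list(combinations(feature_sets, 2))
    if not pairs:
        raise ValueError("need at least two feature sets")
    return sum(jaccard(a, b) for a, b in pairs) / len(pairs)


# Hypothetical selections from three subsampling runs.
runs = [{1, 2, 3}, {2, 3, 4}, {1, 2, 3}]
stability = mean_pairwise_jaccard(runs)

# Selection counts identify the most frequently chosen features.
counts = Counter(f for selected in runs for f in selected)
most_frequent = counts.most_common(2)
```

A score near 1 means nearly identical feature sets across subsamples; a score near 0 means the selection is driven largely by which subjects happened to be drawn.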

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Safe Feature Selection Workflow |

|---|---|

| scikit-learn Pipeline | The foundational "reagent." Encapsulates the sequence of transformation and modeling steps, ensuring fit and transform are called in the correct order during validation. |

| Nested Cross-Validation Schema | The core experimental "protocol." Rigorously separates data used for model/feature development from data used for performance evaluation, preventing optimistic bias. |

| Stability Analysis Script | A "quality control" assay. Quantifies the reproducibility of the selected feature subset across data perturbations, adding robustness to findings. |

| High-Performance Computing (HPC) / Cloud Credits | Essential "lab infrastructure." Nested CV and stability analysis are computationally intensive; parallelization across many CPUs/cores is often necessary. |

| Version Control (Git) & Containerization (Docker/Singularity) | The "lab notebook and environment control." Guarantees the exact reproducibility of the entire computational workflow, including all library versions. |

| Public Benchmark Datasets (e.g., ABIDE, HCP, UK Biobank) | The "positive control." Allows validation of methods on known data before applying to novel, proprietary datasets in drug development. |

Workflow Visualizations

Diagram 1: Non-Nested vs. Nested Cross-Validation

Diagram 2: High-Dimensional Neuroimaging Analysis Pipeline

Troubleshooting Guides & FAQs

FAQ 1: Why does my model's performance drop drastically after applying PCA to my neuroimaging features?

- Answer: This is a classic symptom of over-reduction or violation of statistical independence. Principal Component Analysis (PCA) is sensitive to scaling and may discard components containing signal if variance is not properly normalized. More critically, if PCA was applied to the entire dataset before train-test splitting, it constitutes "double-dipping," where information from the test set contaminates the training process, leading to inflated and non-generalizable results. Ensure PCA is fit only on the training fold within each cross-validation iteration.
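Fitting PCA only on the training fold is easiest to guarantee by placing it inside a pipeline. The sketch below assumes synthetic data and an arbitrary choice of 10 components.

```python
from sklearn.datasets import make_classification
from sklearn.decomposition import PCA
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import cross_val_score
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

# Synthetic stand-in for a subjects-by-features matrix (assumption).
X, y = make_classification(
    n_samples=80, n_features=300, n_informative=15, random_state=0
)

# Scaling and PCA are refit on the training portion of every CV split,
# so the components never depend on the held-out subjects.
pca_pipe = make_pipeline(
    StandardScaler(),
    PCA(n_components=10),
    LogisticRegression(max_iter=1000),
)
pca_scores = cross_val_score(pca_pipe, X, y, cv=5)
print(f"accuracy with in-fold PCA: {pca_scores.mean():.2f}")
```

Scaling before PCA addresses the sensitivity to feature variance mentioned above; whether 10 components retain enough signal is itself a hyperparameter that can be tuned in an inner CV loop.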

FAQ 2: How can I verify if my feature selection process is statistically independent?

- Answer: Implement a strict nested cross-validation (CV) workflow. The inner CV loop selects features and tunes model parameters using only the training partition of that fold. The outer CV loop evaluates the final model's performance on the held-out test data. Document the selected features per outer fold; high variability suggests instability, while perfect consistency may indicate data leakage.

FAQ 3: What is the minimum sample size required for stable dimensionality reduction on high-dimensional neuroimaging data (e.g., fMRI voxels)?

- Answer: There is no universal rule, but guidelines exist based on feature-to-sample ratios. See Table 1 for empirical recommendations from recent literature.

Table 1: Sample Size Guidelines for Dimensionality Reduction Techniques

| Technique | Recommended Min. Sample Size | Stability Metric (Avg. Dice Score >0.8) | Key Reference (Year) |

|---|---|---|---|

| PCA | 10 samples per feature (p>>n context) | N/A | Jolliffe & Cadima (2016) |

| Stability Selection | 50-100 total samples | Feature selection consistency | Meinshausen & Bühlmann (2010) |

| Recursive Feature Elimination (RFE) | 100+ total samples | Model accuracy variance <5% | Vabalas et al. (2019) |

FAQ 4: My selected features are biologically implausible. How do I balance statistical rigor with interpretability?

- Answer: Pure multivariate methods may yield complex, non-intuitive feature combinations. Incorporate a domain knowledge filter before the final modeling stage. For example, after initial screening with an independent test (e.g., mass-univariate ANOVA on training data only), restrict subsequent multivariate selection to regions from a pre-defined atlas. This constrains the solution space to biologically meaningful features without peeking at the test set.
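An atlas-based restriction of this kind can be sketched with a boolean mask. Everything here is hypothetical: the random `atlas_labels` vector stands in for a real atlas resampled to the data, and the region IDs 3 and 7 stand in for regions chosen from prior literature.

```python
import numpy as np

rng = np.random.default_rng(0)
n_subjects, n_voxels = 40, 1000
X = rng.standard_normal((n_subjects, n_voxels))

# Hypothetical atlas: one integer region label per voxel (0 = unlabeled).
atlas_labels = rng.integers(0, 11, size=n_voxels)

# Regions fixed a priori, before any outcome labels are inspected.
roi_ids = [3, 7]

# Keep only voxels that fall inside the pre-defined regions.
roi_mask = np.isin(atlas_labels, roi_ids)
X_roi = X[:, roi_mask]
print(X_roi.shape)
```

Because the mask depends only on anatomy and prior knowledge, not on the outcome labels, applying it to the full dataset does not leak test information; any further data-driven selection on `X_roi` still belongs inside the CV loop.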

Experimental Protocols

Protocol 1: Nested Cross-Validation for Double-Dip-Free Feature Selection

- Partition Data: Split full dataset into K outer folds (e.g., K=5 or K=10).

- Outer Loop: For each outer fold i:

  - a. Designate fold i as the test set; the remaining K-1 folds are the development set.

  - b. Inner Loop: Split the development set into L inner folds.

  - c. Perform feature selection and hyperparameter tuning using only the data in the L-1 inner training folds.

  - d. Validate tuned models on the held-out inner test fold.

  - e. Select the best feature set/parameters based on inner loop performance.

  - f. Train a final model on the entire development set using the chosen features/parameters.

  - g. Evaluate this model once on the outer test fold i.

- Aggregate: The final performance is the average across all K outer test folds.
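Written out as explicit loops, the protocol might look like the following sketch. It assumes synthetic data, a two-value grid for the number of features, and logistic regression as the classifier; it also records the features chosen in each outer fold so that their consistency can be inspected afterwards.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.feature_selection import SelectKBest, f_classif
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import StratifiedKFold, cross_val_score
from sklearn.pipeline import make_pipeline


def build(k):
    """Selector plus classifier, refit together on whatever data they are given."""
    return make_pipeline(SelectKBest(f_classif, k=k), LogisticRegression(max_iter=1000))


X, y = make_classification(
    n_samples=100, n_features=200, n_informative=10, random_state=0
)
outer = StratifiedKFold(n_splits=5, shuffle=True, random_state=0)
inner = StratifiedKFold(n_splits=3, shuffle=True, random_state=1)
k_grid = [10, 50]

outer_scores, chosen_k, selected = [], [], []
for dev_idx, test_idx in outer.split(X, y):
    X_dev, y_dev = X[dev_idx], y[dev_idx]  # step a: development set
    # Steps b-e: score each candidate k by inner CV on the development set only.
    inner_means = [
        cross_val_score(build(k), X_dev, y_dev, cv=inner).mean() for k in k_grid
    ]
    best_k = k_grid[int(np.argmax(inner_means))]
    # Step f: refit selection and classifier on the whole development set.
    final = build(best_k).fit(X_dev, y_dev)
    # Step g: a single evaluation on the untouched outer test fold.
    outer_scores.append(final.score(X[test_idx], y[test_idx]))
    chosen_k.append(best_k)
    selected.append(np.flatnonzero(final.named_steps["selectkbest"].get_support()))

mean_accuracy = float(np.mean(outer_scores))
print(f"outer-fold accuracy: {mean_accuracy:.3f}; k per fold: {chosen_k}")
```

Note that the feature set is re-derived in every outer fold, so the selected indices in `selected` can differ between folds; that variation is expected and is what a stability analysis quantifies.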

Protocol 2: Stability Analysis for Feature Selection

- Subsampling: Generate M (e.g., M=100) random subsamples of your training data (e.g., 80% of samples each, drawn without replacement).

- Independent Selection: Apply your chosen feature selection method (e.g., ANOVA, LASSO) to each subsample.

- Compute Frequency: For each original feature, calculate its selection frequency across the M subsamples.

- Threshold: Retain only features selected in more than a pre-defined threshold (e.g., 80%) of subsamples. This yields a stable, consensus feature set.
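The four steps can be sketched with NumPy alone. This is an illustrative simplification, not the full stability selection method of Meinshausen & Bühlmann: it uses a simple top-k rule on a two-sample t-like statistic, and the data, the helper names, and the planted signal in features 0-2 are all assumptions.

```python
import numpy as np


def top_k_by_t(X, y, k):
    """Indices of the k features with the largest absolute Welch t statistic."""
    a, b = X[y == 0], X[y == 1]
    se = np.sqrt(a.var(axis=0, ddof=1) / len(a) + b.var(axis=0, ddof=1) / len(b))
    t = np.abs(a.mean(axis=0) - b.mean(axis=0)) / se
    return np.argsort(t)[-k:]


def selection_frequency(X, y, k=5, n_subsamples=100, fraction=0.8, seed=0):
    """Fraction of subsamples in which each feature is among the top k."""
    rng = np.random.default_rng(seed)
    n = len(y)
    counts = np.zeros(X.shape[1])
    for _ in range(n_subsamples):
        # Subsample without replacement, then rerun selection from scratch.
        idx = rng.choice(n, size=int(fraction * n), replace=False)
        counts[top_k_by_t(X[idx], y[idx], k)] += 1
    return counts / n_subsamples


# Synthetic training data: features 0-2 carry a strong planted group difference.
rng = np.random.default_rng(1)
y = np.repeat([0, 1], 50)
X = rng.standard_normal((100, 50))
X[y == 1, :3] += 2.0

freq = selection_frequency(X, y)
stable = np.flatnonzero(freq > 0.8)  # consensus feature set
print(stable)
```

The subsampling must be applied to training data only; if the consensus set is later used in a model evaluated by CV, the whole procedure belongs inside the training folds.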

Visualizations

Diagram Title: Nested Cross-Validation Workflow to Prevent Double-Dipping

Diagram Title: Stability Selection for Robust Feature Identification

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Tools for Dimensionality Reduction in Neuroimaging Research

| Item / Software | Function / Purpose | Key Consideration for Integrity |

|---|---|---|

| Scikit-learn Pipeline | Encapsulates preprocessing, selection, and modeling steps. | Ensures transformations are refit on each training fold, preventing leakage. |

| Nilearn | Python toolbox for statistical learning on neuroimaging data. | Provides maskers and decoding estimators that plug into scikit-learn pipelines and cross-validation. |

| Stability Selection | A wrapper method that aggregates feature selection across subsamples (not built into current scikit-learn; available through third-party packages or a custom subsampling loop). | Quantifies feature reliability, reducing false positives from arbitrary single-run selection. |

| Atlas Libraries (e.g., AAL, Harvard-Oxford) | Pre-defined anatomical or functional region-of-interest (ROI) maps. | Provides a biologically grounded constraint for feature space, aiding interpretability. |

| Permutation Testing Framework | Non-parametric method to establish significance of model performance. | Generates a null distribution by repeatedly shuffling labels, validating against chance. |