Strategies for Reducing Variance in Neuroimaging Classification Models: Enhancing Reproducibility for Clinical and Research Applications

Abstract

This article provides a comprehensive guide for researchers and drug development professionals on managing variability in neuroimaging-based classification models. Covering foundational concepts, methodological applications, troubleshooting, and validation techniques, it addresses critical challenges such as the impact of cross-validation setups on statistical significance, the necessity of feature reduction to combat the curse of dimensionality, and strategies for ensuring robust and generalizable model performance. By synthesizing recent research and practical solutions, this resource aims to equip scientists with the tools to build more reliable and reproducible machine learning models for neurological disorder classification, ultimately supporting more accurate diagnostic tools and therapeutic development.

Understanding the Sources and Impact of Variance in Neuroimaging ML

Defining Variance and the Reproducibility Crisis in Biomedical ML

Did You Know?

A survey of machine learning (ML) applications across scientific fields found that data leakage affects at least 294 studies from 17 different fields, leading to overoptimistic and irreproducible results [1]. In neuroimaging, this often stems from improper handling of the "small-n-large-p" problem, where the number of features (voxels) vastly outnumbers the number of observations (subjects) [2].

FAQ & Troubleshooting Guide

This guide addresses common challenges researchers face when working to reduce variance and ensure reproducibility in neuroimaging classification models.

Q1: What is the practical difference between high and low variance in my neuroimaging model's performance?

A: Variance refers to your model's sensitivity to the specific training data it was built on [3].

- High Variance: Your model is too complex and has overfit the training data. It learns the noise and idiosyncrasies of your specific dataset rather than the general underlying brain patterns.

- Symptoms: High accuracy on your training set, but poor performance on your held-out test set or new, unseen data [3].

- Low Variance (High Bias): Your model is too simple and has underfit the training data. Its predictions change little from one training set to another, but it fails to capture the relevant features and patterns in the data.

- Symptoms: Poor performance on both the training and test sets [3].

The goal is to find a balance through the bias-variance tradeoff, creating a model that is complex enough to learn the true patterns but simple enough to generalize to new data [4].

Q2: My model works perfectly in development but fails in validation. What is the most likely cause?

A: The most pervasive cause is data leakage, where information from your test set inadvertently influences the training process [5]. This creates wildly overoptimistic performance during development that does not hold up. The table below summarizes common leakage pitfalls and their solutions.

Table 1: Common Data Leakage Pitfalls and Mitigations in Neuroimaging Research

| Leakage Type | Description | How to Fix It |

|---|---|---|

| No Train-Test Split [5] | The model is evaluated on the same data it was trained on. | Always perform a strict hold-out or cross-validation split before any pre-processing. |

| Feature Selection on Full Dataset [2] [5] | Selecting the "most relevant" voxels using data from all subjects (train and test) before splitting. | Perform all feature reduction steps only on the training set. The test set must be treated as unseen data. |

| Pre-processing on Full Dataset [5] | Normalizing or scaling the entire dataset (e.g., using StandardScaler) before splitting. | Fit pre-processing parameters (like mean and standard deviation) on the training data only, then apply that same transformation to the test data [6]. |

| Non-Independence between Train & Test Sets [5] | Having data from the same subject or related scans in both splits. | Ensure subjects or data points are independent between splits. For longitudinal data, use a subject-wise split. |
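
The feature-selection and pre-processing pitfalls in Table 1 can be illustrated with a minimal sketch, assuming scikit-learn is available. The data are pure noise, so any accuracy well above 50% reflects leakage rather than signal. The sample size, feature count, and k=20 are hypothetical choices for illustration only.

```python
import numpy as np
from sklearn.feature_selection import SelectKBest, f_classif
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import StratifiedKFold, cross_val_score
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

rng = np.random.default_rng(0)
X = rng.standard_normal((60, 2000))   # pure-noise "voxels" (hypothetical sizes)
y = np.repeat([0, 1], 30)             # balanced labels unrelated to X
cv = StratifiedKFold(n_splits=5, shuffle=True, random_state=0)

# Leaky: features are chosen using every subject, including future test folds.
X_leaky = SelectKBest(f_classif, k=20).fit_transform(X, y)
leaky_acc = cross_val_score(LogisticRegression(max_iter=1000), X_leaky, y, cv=cv).mean()

# Leakage-free: scaling and selection are refit inside each training fold.
pipe = make_pipeline(
    StandardScaler(),
    SelectKBest(f_classif, k=20),
    LogisticRegression(max_iter=1000),
)
honest_acc = cross_val_score(pipe, X, y, cv=cv).mean()

print(f"leaky: {leaky_acc:.2f}  leakage-free: {honest_acc:.2f}")
```

Wrapping every data-dependent step in a single pipeline object is one way to make the correct behavior the default, because the cross-validation routine then refits the whole chain on each training fold.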

Q3: How can I proactively prevent data leakage in my research pipeline?

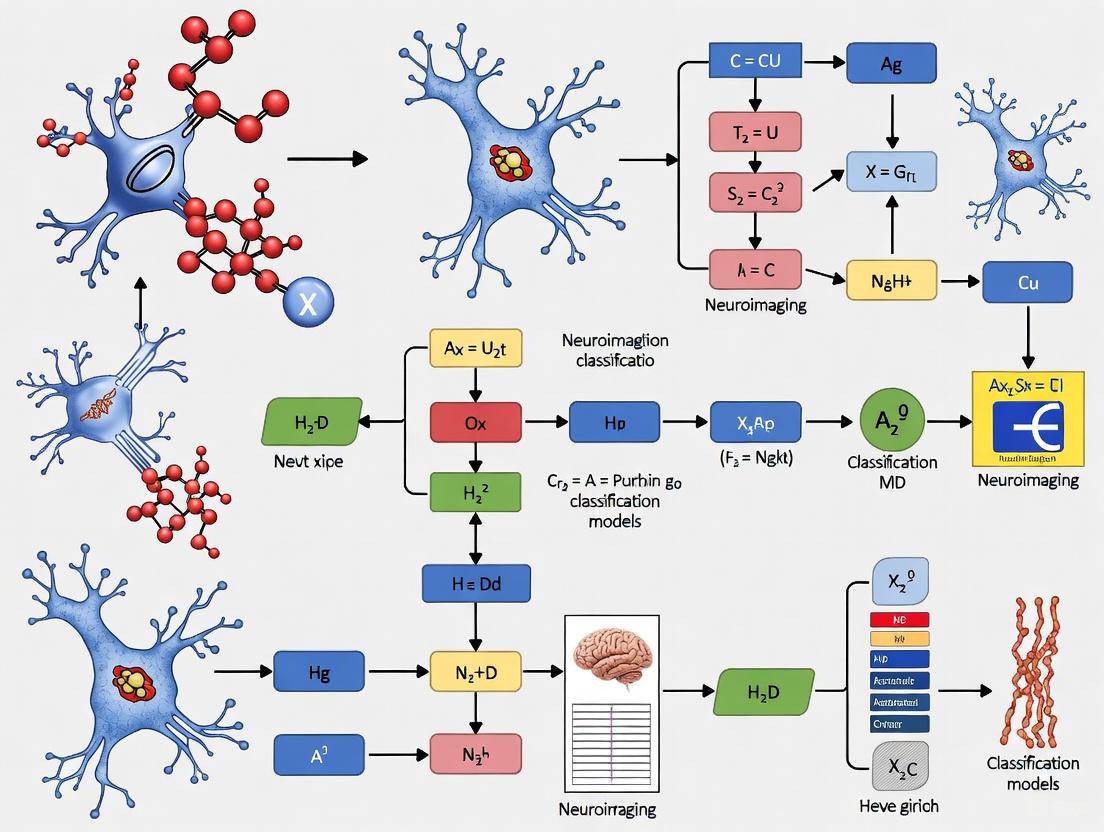

A: Adopt a structured checklist, such as a Model Info Sheet [5] [1], to document your workflow. The diagram below outlines a leakage-proof experimental workflow.

Diagram 1: A leakage-proof ML workflow. Note the test set is isolated until the final step.

Q4: Beyond leakage, what other factors contribute to the reproducibility crisis?

A: Several other technical and methodological challenges can undermine reproducibility:

- Randomness in Training: Deep learning models are trained using stochastic (random) processes. If the random seed is not fixed, you can get different results every time you run the same code on the same data [7]. One study found that changing the random seed could inflate estimated model performance by as much as two-fold [7].

- Software and Version Dependencies: Default parameters can differ between software libraries and even between versions of the same library, leading to substantially different conclusions [7].

- Computational Costs: Reproducing state-of-the-art models (e.g., large transformers) can be prohibitively expensive, sometimes costing millions of dollars, which limits independent verification [7].

The Scientist's Toolkit: Research Reagent Solutions

This table details key methodological "reagents" essential for conducting robust and reproducible neuroimaging ML research.

Table 2: Essential Tools and Methods for Reproducible Neuroimaging ML

| Item | Function & Explanation | Example Use-Case |

|---|---|---|

| Strict Train-Test Split | The foundational step to prevent leakage. Isolates a portion of the data for final evaluation only. | Randomly hold out 20% of subjects (together with all of their scans) before any analysis. |

| Fixed Random Seed | Ensures that any random processes (e.g., data splitting, model initialization) can be replicated. | In Python, use random.seed(123) and np.random.seed(123) at the start of your script. |

| Feature Reduction | Mitigates the "small-n-large-p" problem by reducing the number of voxels/features, fighting overfitting. | Use filter methods (t-tests, Pearson correlation) or embedded methods (Lasso) on the training set to select the most predictive features [2]. |

| Model Info Sheets [5] [1] | A documentation template that forces researchers to justify the absence of data leakage, increasing transparency. | Complete a checklist detailing how data was split, how features were selected, and how pre-processing was performed. |

| Open Science Practices | Sharing code and data (where possible) allows for direct reproducibility checks by the scientific community [7]. | Use repositories like GitHub for code and public data archives (e.g., UK Biobank) for data, with clear documentation. |
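
As a minimal sketch of the first two rows of Table 2, the snippet below fixes the random seeds and holds out 20% of subjects so that no subject contributes scans to both sides of the split. The data layout (50 subjects, 3 scans each) and the variable names are assumptions for illustration.

```python
import random

import numpy as np
from sklearn.model_selection import GroupShuffleSplit

SEED = 123
random.seed(SEED)       # Python's built-in generator
np.random.seed(SEED)    # NumPy's legacy global generator

# Hypothetical layout: 50 subjects with 3 scans each, 10 features per scan.
subject_ids = np.repeat(np.arange(50), 3)
X = np.random.standard_normal((subject_ids.size, 10))
y = np.repeat(np.random.randint(0, 2, size=50), 3)

# Split by subject, not by scan, so the hold-out set is truly independent.
splitter = GroupShuffleSplit(n_splits=1, test_size=0.2, random_state=SEED)
train_idx, test_idx = next(splitter.split(X, y, groups=subject_ids))

train_subjects = set(subject_ids[train_idx])
test_subjects = set(subject_ids[test_idx])
print(len(train_subjects), len(test_subjects))
```

Deep learning frameworks keep their own random state, so a real pipeline would likely need to seed those separately as well.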

The Critical Role of Cross-Validation Setups and Statistical Pitfalls

Frequently Asked Questions (FAQs) and Troubleshooting Guides

Why does my model show a significant accuracy difference just by changing the number of CV folds or repetitions?

Problem: Researchers often find that statistical significance in model comparisons changes substantially when altering cross-validation parameters, such as the number of folds (K) or repetitions (M), even when comparing models with no intrinsic performance difference.

Explanation: This occurs because the statistical testing procedure commonly used is fundamentally flawed. When you perform repeated K-fold cross-validation, the resulting accuracy scores are not independent. Using a standard paired t-test on these dependent scores violates the test's independence assumption, creating artificial significance that depends on your CV configuration rather than true model superiority [8].

Solution:

- Avoid using simple paired t-tests on accuracy scores from repeated cross-validation.

- Use statistical tests specifically designed for correlated samples or consider methods like nested cross-validation for more reliable model comparison [8] [9].

- Pre-specify your CV parameters before analysis and report them thoroughly to avoid p-hacking accusations [8].
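
One test designed for correlated resampling scores is the corrected resampled t-test of Nadeau and Bengio, which inflates the variance term by the test-to-train size ratio. The sketch below is an illustrative implementation, not the procedure used in [8]. The score differences are simulated, and the fold sizes (160 training, 40 test samples) are hypothetical.

```python
import numpy as np
from scipy import stats


def corrected_resampled_ttest(diffs, n_train, n_test):
    """Two-sided p-value for per-fold score differences from repeated K-fold CV."""
    diffs = np.asarray(diffs, dtype=float)
    j = diffs.size                      # number of scores, K * M
    var = diffs.var(ddof=1)
    # The n_test / n_train term accounts for overlap between training sets.
    t_stat = diffs.mean() / np.sqrt((1.0 / j + n_test / n_train) * var)
    p_value = 2.0 * stats.t.sf(abs(t_stat), df=j - 1)
    return t_stat, p_value


rng = np.random.default_rng(0)
# Hypothetical accuracy differences between two models, K=5 folds, M=10 repeats.
diffs = rng.normal(loc=0.01, scale=0.02, size=50)

_, p_naive = stats.ttest_1samp(diffs, 0.0)   # treats the 50 scores as independent
_, p_corrected = corrected_resampled_ttest(diffs, n_train=160, n_test=40)
print(f"naive p = {p_naive:.4f}, corrected p = {p_corrected:.4f}")
```

The corrected statistic is always smaller in magnitude than the naive one for the same differences, which is why it is less prone to declaring significance that depends only on K and M.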

Table: Impact of CV Parameters on False Positive Rates

| Dataset | Number of Folds (K) | Number of Repetitions (M) | Positive Rate (p < 0.05) |

|---|---|---|---|

| ABCD | 2 | 1 | 0.08 |

| ABCD | 50 | 1 | 0.21 |

| ABCD | 2 | 10 | 0.35 |

| ABCD | 50 | 10 | 0.57 |

| ABIDE | 2 | 1 | 0.10 |

| ABIDE | 50 | 1 | 0.24 |

| ADNI | 2 | 1 | 0.12 |

| ADNI | 50 | 1 | 0.29 |

Why does my classifier perform significantly below chance level in a properly counterbalanced experiment?

Problem: Despite using counterbalancing to control for order effects, cross-validation shows classification accuracy significantly below the 50% chance level expected in a balanced binary classification task [10].

Explanation: This occurs due to a mismatch between counterbalanced experimental designs and cross-validation. In a counterbalanced design, the confounding factor (e.g., trial order) is equally distributed across conditions. However, when using leave-one-run-out cross-validation, the training set contains an imbalance of the confound, which the classifier learns. When applied to the test set with the opposite imbalance, it systematically misclassifies all samples [10].

Example Experimental Setup:

- 4 runs with 2 trials each (1 per condition)

- Perfectly counterbalanced: Conditions A and B equally often in 1st and 2nd trial positions

- Neuroimaging data: y=10 for all first trials, y=20 for all second trials

- True difference between conditions: None (only trial order effect exists)

Solution:

- Ensure your cross-validation scheme respects the experimental design structure.

- Use the "Same Analysis Approach" (SAA): Test your analysis pipeline on simulated null data with the same design structure to detect such mismatches before analyzing real data [10].

- Consider modified cross-validation schemes that maintain the balance of potential confounds across training and test sets.
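
The example setup above can be reproduced in a few lines. This sketch uses a simple nearest-class-mean classifier, which is an assumption made for transparency rather than the classifier used in [10], together with leave-one-run-out splitting from scikit-learn.

```python
import numpy as np
from sklearn.model_selection import LeaveOneGroupOut

# 4 runs x 2 trials; the signal depends only on trial position (10 = first, 20 = second).
runs = np.array([1, 1, 2, 2, 3, 3, 4, 4])
signal = np.array([10, 20, 10, 20, 10, 20, 10, 20], dtype=float)
# Counterbalanced: A and B each appear twice in the first and twice in the second position.
conditions = np.array(["A", "B", "B", "A", "A", "B", "B", "A"])

correct = []
for train, test in LeaveOneGroupOut().split(signal, conditions, groups=runs):
    # Nearest-class-mean classifier fitted on the three training runs.
    mean_a = signal[train][conditions[train] == "A"].mean()
    mean_b = signal[train][conditions[train] == "B"].mean()
    closer_to_a = np.abs(signal[test] - mean_a) < np.abs(signal[test] - mean_b)
    pred = np.where(closer_to_a, "A", "B")
    correct.extend(pred == conditions[test])

accuracy = float(np.mean(correct))
print(accuracy)  # 0.0: every held-out trial is misclassified
```

Removing one run leaves the training set with two trials of one condition in a given position and only one of the other, so the class means shift in exactly the direction that is wrong for the held-out run.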

Why do my classification results differ dramatically when using block-wise versus random cross-validation?

Problem: Classification accuracy inflates significantly when using random splits that ignore the block structure of data collection compared to block-wise cross-validation that respects temporal boundaries [11].

Explanation: Neuroimaging data contains temporal dependencies across multiple timescales - from neural processes themselves to experimental factors like decreasing alertness or initial nervousness. When data is split randomly without respecting blocks, the classifier can exploit these temporal patterns rather than true condition-related signals, leading to optimistically biased performance estimates [11].

Quantitative Impact:

- Riemannian minimum distance to mean (RMDM) classifiers: Differences up to 12.7% [11]

- Filter Bank Common Spatial Pattern (FBCSP) with LDA: Differences up to 30.4% [11]

Solution:

- Always use cross-validation that respects the block/trial structure of your experiment.

- For blocked designs, use leave-one-block-out or similar approaches rather than random splits.

- Report your data splitting procedures in detail, including how you handled temporal dependencies [11].
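
The effect can be sketched on simulated data in which each block carries its own offset (standing in for slow drifts) and there is no true condition effect. The block counts, drift size, and the 1-nearest-neighbor classifier are hypothetical choices, not the setup of [11].

```python
import numpy as np
from sklearn.model_selection import GroupKFold, KFold, cross_val_score
from sklearn.neighbors import KNeighborsClassifier

rng = np.random.default_rng(0)
n_blocks, n_per_block, n_features = 10, 20, 5
blocks = np.repeat(np.arange(n_blocks), n_per_block)
y = blocks % 2                                               # one condition per block
drift = rng.normal(scale=3.0, size=(n_blocks, n_features))   # block-specific offsets
X = drift[blocks] + rng.standard_normal((blocks.size, n_features))  # no condition effect

clf = KNeighborsClassifier(n_neighbors=1)

# Random splits: samples from the same block land in both training and test sets.
random_acc = cross_val_score(
    clf, X, y, cv=KFold(n_splits=5, shuffle=True, random_state=0)
).mean()

# Block-wise splits: whole blocks are held out together.
block_acc = cross_val_score(clf, X, y, cv=GroupKFold(n_splits=5), groups=blocks).mean()

print(f"random: {random_acc:.2f}  block-wise: {block_acc:.2f}")
```

With random splits the classifier only needs to recognize which block a sample came from, which is enough to recover the label even though the conditions themselves are indistinguishable.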

How can I avoid overoptimistic performance estimates with small sample sizes?

Problem: With limited samples, cross-validation produces large error bars in performance estimation, creating false confidence in results and enabling p-hacking through selective reporting [12].

Explanation: The variance in cross-validation accuracy estimates is inherently large with small samples. Error bars can be around ±10% with typical neuroimaging sample sizes. This problem is particularly severe for inter-subject diagnostics studies, though less so for cognitive neuroscience studies with multiple trials per subject [12].
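
A rough feel for these error bars comes from the binomial approximation below. It assumes independent test samples, which cross-validation folds do not strictly provide, so it should be read as a likely lower bound on the uncertainty rather than an exact interval. The accuracy of 0.7 and the sample sizes are hypothetical.

```python
import math


def binomial_halfwidth(accuracy, n_test, z=1.96):
    """Approximate 95% half-width of an accuracy estimated from n_test samples."""
    return z * math.sqrt(accuracy * (1.0 - accuracy) / n_test)


for n in (30, 100, 1000):
    print(n, round(binomial_halfwidth(0.7, n), 3))
```

Under these assumptions, about 100 test samples already give a half-width close to 9 percentage points, which is in line with the roughly ±10% figure quoted above.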

Solution:

- Use larger sample sizes - predictive modeling requires more samples than standard statistical approaches.

- Be transparent about cross-validation variability by reporting confidence intervals alongside point estimates.

- Avoid the temptation to experiment with different CV parameters until obtaining significant results.

- Use nested cross-validation when performing model selection to avoid optimistic bias [9] [12].
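
Nested cross-validation can be sketched with scikit-learn by placing a hyperparameter search inside an outer evaluation loop. The synthetic dataset, the grid of C values, and the fold counts are assumptions for illustration.

```python
from sklearn.datasets import make_classification
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import GridSearchCV, StratifiedKFold, cross_val_score
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

# Synthetic stand-in for a subjects-by-features matrix.
X, y = make_classification(n_samples=120, n_features=50, n_informative=5, random_state=0)

pipe = make_pipeline(StandardScaler(), LogisticRegression(max_iter=2000))
param_grid = {"logisticregression__C": [0.01, 0.1, 1.0, 10.0]}

inner_cv = StratifiedKFold(n_splits=3, shuffle=True, random_state=1)  # tunes C
outer_cv = StratifiedKFold(n_splits=5, shuffle=True, random_state=2)  # estimates performance

# The search is refit from scratch inside every outer training fold.
search = GridSearchCV(pipe, param_grid, cv=inner_cv)
outer_scores = cross_val_score(search, X, y, cv=outer_cv)

print(f"{outer_scores.mean():.2f} +/- {outer_scores.std(ddof=1):.2f}")
```

Reporting the spread of the outer scores next to the mean is a simple way to be transparent about cross-validation variability.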

Experimental Protocols for Robust Cross-Validation

Framework for Comparing Model Accuracy

This protocol creates two classifiers with identical intrinsic predictive power to test whether CV setups artificially create significance [8]:

Step 1: Data Preparation

- Randomly choose N samples from each class to ensure balance

- Use linear Logistic Regression as base classifier

Step 2: Model Perturbation

- Create a random zero-centered Gaussian vector with standard deviation of 1/E, where E is the perturbation level

- The vector dimension equals the number of features

- Create two perturbed models by adding and subtracting this vector from the decision boundary coefficients

Step 3: Cross-Validation

- Apply K-fold cross-validation repeated M times

- Train both perturbed models on each training fold

- Evaluate accuracy on corresponding test folds

Step 4: Statistical Testing

- Apply hypothesis testing (e.g., paired t-test) to the K × M accuracy scores

- Record the p-value quantifying significant accuracy difference

Step 5: Interpretation

- Since models have identical intrinsic power, significant differences indicate CV artifact

- Repeat with different K, M combinations to assess impact of CV parameters
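
The five steps can be sketched as follows. This is an illustrative reading of the protocol, not the reference implementation from [8]: drawing a fresh perturbation vector in each fold, the function name, and the synthetic data are all assumptions.

```python
import numpy as np
from scipy import stats
from sklearn.datasets import make_classification
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import RepeatedStratifiedKFold


def compare_perturbed_models(X, y, k=5, m=2, perturbation_level=2.0, seed=0):
    """Paired t-test on K*M accuracies of two equally perturbed linear models."""
    rng = np.random.default_rng(seed)
    cv = RepeatedStratifiedKFold(n_splits=k, n_repeats=m, random_state=seed)
    acc_plus, acc_minus = [], []
    for train, test in cv.split(X, y):
        base = LogisticRegression(max_iter=1000).fit(X[train], y[train])
        # Zero-centered Gaussian vector with standard deviation 1/E (assumption: one per fold).
        noise = rng.normal(scale=1.0 / perturbation_level, size=X.shape[1])
        for sign, store in ((+1, acc_plus), (-1, acc_minus)):
            w = base.coef_.ravel() + sign * noise
            pred = (X[test] @ w + base.intercept_[0] > 0).astype(int)
            store.append(np.mean(pred == y[test]))
    # Naive paired t-test on the dependent K*M scores (the practice under scrutiny).
    _, p_value = stats.ttest_rel(acc_plus, acc_minus)
    return np.array(acc_plus), np.array(acc_minus), p_value


X, y = make_classification(n_samples=200, n_features=20, random_state=0)  # labels are 0/1
acc_plus, acc_minus, p_value = compare_perturbed_models(X, y, k=5, m=2)
print(len(acc_plus), p_value)
```

Calling the function over a grid of k and m values, and over many random seeds, would give the fraction of runs with p < 0.05 for each configuration.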

The Same Analysis Approach (SAA) Protocol

This methodology tests your entire analysis pipeline for hidden confounds [10]:

Step 1: Analyze Experimental Design

- Apply your analysis method to aspects of the experimental design itself

- Check if the design structure alone produces significant results

Step 2: Simulate Confounds

- Create simulated data containing only potential confounds (no true effect)

- Run your full analysis pipeline on this data

Step 3: Simulate Null Data

- Generate data with no effect or confounds (pure noise)

- Apply your analysis to establish a true null baseline

Step 4: Analyze Control Data

- Run the same analysis on any available control measurements (e.g., reaction times)

Step 5: Compare Results

- Keep the analysis method identical across all tests

- Only if all control analyses show no effect can you trust your main results

Research Reagent Solutions

Table: Essential Tools for Robust Neuroimaging Classification

| Research Reagent | Function | Implementation Examples |

|---|---|---|

| Stratified K-Fold | Maintains class distribution across folds | sklearn.model_selection.StratifiedKFold [13] |

| Nested Cross-Validation | Provides unbiased performance estimation with hyperparameter tuning | Custom implementation with inner & outer loops [9] |

| Block-Wise Splitting | Respects temporal dependencies in data | sklearn.model_selection.GroupKFold with block IDs [11] |

| Pipeline Class | Prevents data leakage during preprocessing | sklearn.pipeline.Pipeline [14] |

| Statistical Testing Framework | Compares models without independence violation | Corrected resampled t-test or permutation tests [8] |
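
As one example from the table, a label-permutation test can be run with scikit-learn's `permutation_test_score`. The sketch below tests a single pipeline against chance rather than comparing two models. The synthetic data and the number of permutations are hypothetical.

```python
from sklearn.datasets import make_classification
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import StratifiedKFold, permutation_test_score
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

X, y = make_classification(n_samples=100, n_features=30, n_informative=3, random_state=0)

# Pre-processing lives inside the pipeline, so it is refit for every fold and permutation.
pipe = make_pipeline(StandardScaler(), LogisticRegression(max_iter=1000))
cv = StratifiedKFold(n_splits=5, shuffle=True, random_state=0)

score, perm_scores, p_value = permutation_test_score(
    pipe, X, y, cv=cv, n_permutations=100, random_state=0
)
print(f"accuracy = {score:.2f}, permutation p = {p_value:.3f}")
```

Because the null distribution is built by rerunning the identical cross-validation on shuffled labels, the test does not rely on the fold scores being independent.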

Frequently Asked Questions (FAQs)

1. What is the "small-n-large-p" problem in neuroimaging? The "small-n-large-p" problem, also known as the curse of dimensionality, describes a common scenario in neuroimaging where the number of features (p), such as voxels in a brain scan, is vastly greater than the number of observations or subjects (n). A typical study may have fewer than 1000 subjects but over 100,000 non-zero voxels. This creates a high-dimensional feature space that is sparsely populated by data points, leading to major challenges in training robust machine learning models [2] [15].

2. Why is this problem particularly critical for neuroimaging classification models? This problem is critical because it directly leads to model overfitting. An overfitted model learns patterns from the training data too closely, including noise and irrelevant features, resulting in poor generalization to new, unseen data. This compromises the model's predictive accuracy and clinical utility, as it becomes unable to make reliable predictions on individual subjects [2] [16]. Furthermore, it can inflate performance estimates during development, leading to unexpected failures when the model is deployed on real-world data [15].

3. What are the primary strategies to mitigate this problem? The main strategies involve feature reduction and employing robust model validation techniques [2] [17]. Feature reduction can be broken down into:

- Feature Selection: Choosing a subset of the most relevant features.

- Dimensionality Reduction: Transforming the original high-dimensional features into a lower-dimensional space.

Using rigorous cross-validation procedures and controlling for confounding variables are also essential to ensure generalizable results [16].

4. How can I tell if my feature selection method is stable? A feature selection method is considered stable if it produces a similar set of relevant features when applied to different subsets of your data. You can assess stability using resampling strategies like bootstrap or complementary pairs stability selection. These methods repeatedly apply the feature selection algorithm to resampled versions of your dataset. The frequency with which a feature is selected across these iterations indicates its stability. Selecting stable features helps ensure that your findings are not just a fluke of a particular data split and are more likely to be replicable [18].

5. My model performs well in cross-validation but fails on a separate test set. What went wrong? This is a classic sign of overfitting and can be caused by several factors related to the curse of dimensionality [15]. A common flaw is double-dipping or data leakage, where information from the test set is inadvertently used during the feature selection or model training process. Feature reduction must be performed using only the training data in each cross-validation fold. If the entire dataset is used for feature selection before cross-validation, the model's performance will be optimistically biased and will not reflect its true ability to generalize [2]. Furthermore, the statistical significance of accuracy differences between models can be highly sensitive to the cross-validation setup (e.g., the number of folds and repetitions), potentially leading to misleading conclusions [8].

Troubleshooting Guides

Issue 1: High Model Variance and Overfitting

Problem: Your classification model achieves high accuracy on the training data but performs poorly on validation or hold-out test data.

Solution: Implement a rigorous feature reduction pipeline.

Step 1: Apply Feature Reduction. Integrate one of the following techniques into your cross-validation workflow, ensuring it is fit only on the training fold.

- Filter Methods: Use simple univariate statistics to rank and select features.

- Pearson Correlation Coefficient: Ranks features by their linear correlation with the class labels or continuous targets [2].

- T-tests/ANOVA: Identify features with statistically significant differences between groups.

- Wrapper Methods: Use the performance of a predictive model (e.g., SVM) to evaluate feature subsets.

- Recursive Feature Elimination (RFE): Iteratively removes the least important features based on the model's coefficients or feature importance scores [18].

- Embedded Methods: Perform feature selection as part of the model training process.

- LASSO (L1 Regularization): Adds a penalty that drives the coefficients of uninformative features to exactly zero, so feature selection happens during model fitting [18].

Step 2: Use Regularization. If not using an embedded method, apply regularization techniques to your classifier to constrain model complexity.

- L2 Regularization (Ridge): Penalizes large coefficients, encouraging a more distributed use of features [17].

- Dropout: Randomly deactivates neurons during training in neural networks to prevent co-adaptation [17].

- Early Stopping: Halts training when performance on a validation set stops improving [17].

Step 3: Apply Data Augmentation. Artificially increase the effective size of your training dataset (n) by creating modified versions of your existing data. For neuroimaging, this can include spatial transformations (rotations, flips), adding noise, or simulating intensity variations [17].
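
A minimal augmentation sketch with NumPy is given below. The volume shape, noise level, and function name are hypothetical, and whether a flip along a given array axis is anatomically sensible (for example when laterality matters) depends on the data orientation and the research question.

```python
import numpy as np


def augment_volumes(volumes, labels, noise_sd=0.05, seed=0):
    """Return the originals plus one flipped and one noisy copy of each volume."""
    rng = np.random.default_rng(seed)
    flipped = volumes[:, ::-1, :, :]     # mirror along the first spatial axis
    noisy = volumes + rng.normal(scale=noise_sd, size=volumes.shape)
    X_aug = np.concatenate([volumes, flipped, noisy], axis=0)
    y_aug = np.concatenate([labels, labels, labels], axis=0)
    return X_aug, y_aug


# Hypothetical training fold: 8 subjects, 16 x 16 x 16 voxels each.
train_volumes = np.random.default_rng(1).standard_normal((8, 16, 16, 16))
train_labels = np.array([0, 1] * 4)

X_aug, y_aug = augment_volumes(train_volumes, train_labels)
print(X_aug.shape, y_aug.shape)
```

Augmented copies should be generated from the training fold only. Augmenting before the split would place near-duplicates of test subjects in the training set, which is another form of leakage.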

Issue 2: Unstable and Non-Reproducible Feature Sets

Problem: The set of "important" voxels or features identified by your model changes drastically when you re-run the analysis on a slightly different data subset.

Solution: Adopt stability-based selection frameworks.

- Step 1: Implement Stability Selection. This meta-algorithm works with any feature selection method (e.g., LASSO) to improve stability [18].

- Generate multiple random subsamples of your training data (e.g., using bootstrap).

- Apply your chosen feature selection method to each subsample.

- Calculate the selection frequency for each feature across all subsamples.

- Step 2: Threshold the Results. Retain only those features whose selection frequency exceeds a user-defined threshold (e.g., 70-80%). This yields a consensus feature set that is robust to data perturbations [18].

- Step 3: Consider Hybrid Decompositions. For neuroimaging, instead of using raw voxels, consider using a hybrid functional decomposition like the NeuroMark pipeline. This approach uses spatially constrained Independent Component Analysis (ICA) to derive subject-specific functional networks from group-level priors. It provides a more stable, dimensional, and functionally relevant set of features for modeling compared to fixed anatomical atlases or fully data-driven voxel-wise approaches [19].

Issue 3: Poor Generalization to New Data (Domain Shift)

Problem: Your model, trained on data from one scanner or site, fails to perform accurately on data collected from a different scanner or site.

Solution: Employ strategies to enhance model robustness and generalizability.

- Step 1: Harmonize Data. Use techniques like ComBat to remove unwanted site- or scanner-specific effects from your neuroimaging features before model training [16].

- Step 2: Use Domain Adaptation. Leverage transfer learning to pre-train your model on a large, public neuroimaging dataset, then fine-tune it on your specific, smaller dataset. This helps the model learn more general, invariant features [17].

- Step 3: Leverage Ensemble Learning. Combine predictions from multiple models to create a stronger, more robust final prediction.

Experimental Protocols & Data Summaries

Table 1: Comparison of Common Feature Reduction Techniques in Neuroimaging

| Technique | Type | Key Principle | Advantages | Limitations |

|---|---|---|---|---|

| Pearson Correlation | Filter | Ranks features by linear correlation with target variable. | Fast, simple to implement and interpret. | Only captures linear relationships. |

| Recursive Feature Elimination (RFE) | Wrapper | Iteratively removes least important features using a classifier's weights. | Model-aware; can find complex, multivariate interactions. | Computationally expensive; risk of overfitting to the training split. |

| LASSO (L1) | Embedded | Adds a penalty that forces some feature coefficients to exactly zero. | Performs feature selection and model training simultaneously. | Can be unstable with highly correlated features; may select one feature arbitrarily from a correlated group. |

| Elastic Net | Embedded | Combines L1 (LASSO) and L2 (Ridge) penalties. | Handles correlated features better than LASSO alone. | Has two hyperparameters to tune, increasing complexity. |

| Principal Component Analysis (PCA) | Dimensionality Reduction | Projects data into a lower-dimensional space of orthogonal components that maximize variance. | Effective for noise reduction; guarantees orthogonal features. | Components are linear combinations of all original features, reducing interpretability. |

| Independent Component Analysis (ICA) | Dimensionality Reduction | Separates data into statistically independent components. | Can capture non-Gaussian, independent sources (useful for fMRI). | Order and sign of components can be ambiguous. |

| Stability Selection | Meta-Method | Applies a base feature selector to data subsamples and selects features with high selection frequency. | Dramatically improves stability and controls false positives. | Adds a layer of computational complexity. |

Detailed Methodology: Stability Selection with LASSO

This protocol is designed to identify a stable set of features for a neuroimaging-based classifier [18].

- Input: A data matrix ( X ) (subjects × voxels) and target vector ( y ) (e.g., diagnostic labels).

- Subsampling: Generate ( B ) (e.g., 100) bootstrap samples. Each sample is drawn randomly from the training set with replacement.

- Base Feature Selection: For each bootstrap sample ( b = 1 ) to ( B ):

- Run the LASSO algorithm on the subsampled data.

- Vary the regularization parameter ( \lambda ) across a defined range.

- For each value of ( \lambda ), record the set of selected features, ( S^\lambda(b) ).

- Calculate Selection Probabilities: For each feature ( j ) and each ( \lambda ), compute its selection probability: ( \hat{\Pi}_j^\lambda = \frac{1}{B} \sum_{b=1}^{B} I\{ j \in S^\lambda(b) \} ), where ( I ) is the indicator function.

- Determine Stable Features: A feature is deemed stable if its maximum selection probability over all ( \lambda ) values is at least a predefined threshold ( \pi_{thr} ) (typically between 0.6 and 0.9). The final stable set is: ( \hat{S}^{stable} = \{ j : \max_\lambda \hat{\Pi}_j^\lambda \geq \pi_{thr} \} ).
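
The protocol can be sketched as below. Several choices are assumptions made for illustration: scikit-learn's `Lasso` regression is fitted on labels coded as -1/+1 (an L1-penalized logistic regression would be an equally plausible base selector), the grid of regularization values is arbitrary, and the data are simulated so that only features 0 and 1 carry signal.

```python
import numpy as np
from sklearn.linear_model import Lasso


def stability_selection(X, y, lambdas, n_boot=50, threshold=0.7, seed=0):
    """Return indices of stable features and the selection-probability matrix."""
    rng = np.random.default_rng(seed)
    n, p = X.shape
    counts = np.zeros((len(lambdas), p))
    for _ in range(n_boot):
        idx = rng.integers(0, n, size=n)            # bootstrap sample, with replacement
        Xb, yb = X[idx], y[idx]
        for i, lam in enumerate(lambdas):
            coef = Lasso(alpha=lam, max_iter=5000).fit(Xb, yb).coef_
            counts[i] += coef != 0                  # indicator that feature j was selected
    probs = counts / n_boot                         # selection probability per lambda, feature
    stable = np.flatnonzero(probs.max(axis=0) >= threshold)
    return stable, probs


rng = np.random.default_rng(0)
X = rng.standard_normal((100, 200))                 # hypothetical subjects x voxels
y = np.sign(X[:, 0] + X[:, 1] + 0.5 * rng.standard_normal(100))

stable, probs = stability_selection(X, y, lambdas=[0.1, 0.2, 0.3])
print(stable)
```

In a real analysis this function would be called on the training portion of each cross-validation fold, so that the stable feature set never sees test subjects.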

Workflow Visualization: A Robust ML Pipeline for Neuroimaging

Relationship Diagram: Mitigation Strategies and Their Goals

The Scientist's Toolkit: Essential Research Reagents & Solutions

Table 2: Key Software and Methodological Tools

| Item Name | Category | Function/Brief Explanation |

|---|---|---|

| LASSO / L1 Regularization | Embedded Feature Selector | A linear model with a penalty that promotes sparsity, automatically performing feature selection by driving the coefficients of irrelevant features to zero [18] [17]. |

| Stability Selection | Meta-Algorithm Framework | A wrapper method that improves the stability and reliability of any base feature selector (e.g., LASSO) by aggregating results across multiple data subsamples [18]. |

| NeuroMark Pipeline | Hybrid Decomposition Tool | An ICA-based tool that provides subject-specific functional network maps from fMRI data by using group-level spatial priors. It offers a stable, data-driven feature set that balances correspondence and individual variability [19]. |

| ComBat | Data Harmonization Tool | A statistical method used to remove unwanted site- or scanner-specific biases from neuroimaging data, thereby reducing domain shift and improving multi-site study integration [16]. |

| Cross-Validation (CV) | Model Validation Protocol | A resampling procedure used to evaluate model performance and mitigate overfitting. Data is split repeatedly into training and validation sets. Crucially, all feature reduction must be performed independently within each training fold to prevent data leakage [2] [8]. |

| Data Augmentation | Preprocessing Strategy | A set of techniques (e.g., rotation, noise injection) that artificially expands the training dataset by creating slightly modified copies of existing data. This helps the model learn invariances and improves robustness [17]. |

| Ensemble Methods (Bagging) | Modeling Technique | A machine learning approach that combines predictions from multiple models (e.g., trained on different data subsets) to reduce variance and improve overall predictive performance and stability [17]. |

How Data Heterogeneity and Confounds Introduce Model Instability

Frequently Asked Questions (FAQs)

FAQ 1: What are the most common sources of data heterogeneity in neuroimaging studies? Data heterogeneity, often called "dirty data," arises from multiple sources that introduce unwanted variability into your models [20]. Key sources include:

- Phenotypic Measures: The clinical or behavioral labels you use can be subjective, have poor inter-rater reliability, or be non-specific, meaning the same score can appear across different disorders [20].

- Participant Characteristics: Real-world clinical populations often have comorbidities (overlapping symptoms across diagnoses), are on medications that can alter the BOLD signal, and exhibit episodic symptoms that cause brain states to vary from day to day [20].

- Data Collection: Using multiple scanner sites introduces inter-scanner variability. Furthermore, data can be incomplete due to subjects' inability to complete scans or questionnaires, which is more common in clinical populations [20].

- Sample Bias: Studies often over-represent Western, educated, industrialized, rich, and democratic (WEIRD) populations, and may exclude individuals who cannot remain still during a scan, consent, or attend to tasks, leading to models that do not generalize [20].

FAQ 2: How do confounds specifically lead to model instability and poor generalization? A confound is a variable (e.g., age, gender, acquisition site) that affects your neuroimaging data and has a sample association with your target variable that differs from the true association in the broader population you care about (the population-of-interest) [21]. When a model learns from this biased sample, it can learn the spurious correlations introduced by the confound rather than the true brain-behavior relationship. This makes the model unstable and its predictions inaccurate when applied to new, more representative data from the population-of-interest [21].

FAQ 3: I have a high-performance model on the training set. Why does it fail to detect disease in a real-world clinical sample? This common issue can be explained by the accuracy-sensitivity trade-off [22]. Complex, highly accurate models trained to predict a variable like chronological age with high precision often achieve this by relying on stable, low-variance aging features. However, these same models may inadvertently ignore the higher-variance, noisier brain signals that are actually most informative for detecting disease. A simpler or even a deliberately over-regularized model, while less accurate at predicting age, might be more sensitive to these disease-relevant deviations and thus serve as a better biomarker [22].

FAQ 4: What is the minimum number of site-matched controls needed to reliably calibrate a normative model? While normative models can be adapted to new scanner sites with a relatively small control cohort, the sample size is critical for stability. Empirical benchmarking suggests that using only 10 site-matched controls leads to high variance in effect size estimates, with significant over- or underestimation [23]. Using around 30 controls substantially improves consistency and robustness, providing a more reliable calibration for identifying patient deviations [23].
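
The intuition behind FAQ 4 can be illustrated with a toy simulation. It is not the benchmarking procedure from [23]: the Gaussian data, the simple mean and standard deviation recalibration, the true patient shift of 0.5, and the function name are all assumptions.

```python
import numpy as np


def effect_size_spread(n_controls, n_patients=50, true_shift=0.5, n_sim=2000, seed=0):
    """Std. dev. across simulations of the estimated mean patient deviation (z units)."""
    rng = np.random.default_rng(seed)
    estimates = np.empty(n_sim)
    for s in range(n_sim):
        controls = rng.standard_normal(n_controls)              # site-matched controls
        patients = true_shift + rng.standard_normal(n_patients)
        # Calibrate to the new site using only the small control cohort.
        z = (patients - controls.mean()) / controls.std(ddof=1)
        estimates[s] = z.mean()
    return estimates.std(ddof=1)


spread_10 = effect_size_spread(10)
spread_30 = effect_size_spread(30)
print(f"10 controls: {spread_10:.2f}   30 controls: {spread_30:.2f}")
```

In this simplified setting the spread of the estimated patient deviation shrinks noticeably when the calibration cohort grows from 10 to 30, because both the site mean and the site standard deviation are estimated more precisely.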

Troubleshooting Guides

Issue 1: Managing Confounds in Predictive Modeling

Problem: Your model's performance degrades because it is learning from confounded data (e.g., a sample where age is correlated with your clinical target).

Solution Protocol: Several methodological approaches can be employed to handle confounds [21].

Table: Comparison of Confound Mitigation Strategies

| Method | Brief Description | Key Considerations |

|---|---|---|

| Image Adjustment | Statistically removing the effect of the confound from the imaging data before model training. | May inadvertently remove signal of interest along with the confound. |

| Confound as Predictor | Including the confound as an additional input feature in the model. | Can lead to less accurate models than a baseline that ignores confounding, as the model may over-rely on the confound. |

| Instance Weighting | Weighting samples during training to make the distribution of the confound in the training sample resemble that of the population-of-interest. | Can focus predictions favorably on certain population strata, but may not improve overall accuracy over the baseline. |
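As an illustration of the image adjustment row, the sketch below regresses a confound out of each feature with ordinary least squares. It is a minimal example on synthetic data, assuming a single linear confound (age); the helper names `fit_confound_model` and `remove_confounds` are hypothetical. The regression is fit on the training split only and then applied unchanged to the test split, so that no test information enters the adjustment.

```python
import numpy as np

def fit_confound_model(X_train, C_train):
    """Least-squares fit of every imaging feature on the confounds plus an intercept."""
    design = np.column_stack([np.ones(len(C_train)), C_train])
    beta, *_ = np.linalg.lstsq(design, X_train, rcond=None)
    return beta  # shape: (1 + n_confounds, n_features)

def remove_confounds(X, C, beta):
    """Subtract the confound contribution estimated on the training data."""
    design = np.column_stack([np.ones(len(C)), C])
    return X - design @ beta

# Synthetic example: 10 features, each contaminated by a linear age effect.
rng = np.random.default_rng(0)
age = rng.uniform(20, 80, size=(120, 1))
X = 0.05 * age + rng.normal(size=(120, 10))

X_train, X_test = X[:80], X[80:]
age_train, age_test = age[:80], age[80:]

beta = fit_confound_model(X_train, age_train)          # training data only
X_train_adj = remove_confounds(X_train, age_train, beta)
X_test_adj = remove_confounds(X_test, age_test, beta)  # reuse the training fit
```

As the table notes, this kind of adjustment can also remove signal of interest when the confound and the target are genuinely related.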

Experimental Workflow: The following diagram outlines a general workflow for diagnosing and addressing confounds in a modeling pipeline.

Issue 2: High Model Complexity Leading to Poor Clinical Utility

Problem: Your highly complex model (e.g., a deep neural network) achieves excellent chronological age prediction accuracy but shows low sensitivity for detecting neurological or psychiatric diseases.

Solution Protocol: Re-evaluate the modeling objective. For clinical biomarker development, sensitivity to disease may be more important than raw prediction accuracy for a proxy variable [22].

- Benchmark Simpler Models: Train a set of models with varying complexity (e.g., from regularized linear regression to CNNs and Transformers) on your data.

- Evaluate on Clinical Outcome: Instead of only reporting age prediction error (e.g., MAE), calculate the effect size (e.g., Cohen's d) of the brain-age gap between a patient group and carefully matched controls for each model.

- Select for Sensitivity: You may find that a simpler model, despite having a higher age prediction error, produces a larger patient-control effect size and is therefore a more clinically useful biomarker [22].
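The evaluation step above can be sketched as follows. This is a minimal example with made-up numbers; the function names and the choice to report MAE on controls only are assumptions for illustration, not the procedure of [22].

```python
import numpy as np

def cohens_d(group_a, group_b):
    """Cohen's d with a pooled standard deviation."""
    a = np.asarray(group_a, dtype=float)
    b = np.asarray(group_b, dtype=float)
    pooled_var = (
        (len(a) - 1) * a.var(ddof=1) + (len(b) - 1) * b.var(ddof=1)
    ) / (len(a) + len(b) - 2)
    return (a.mean() - b.mean()) / np.sqrt(pooled_var)

def evaluate_brain_age_model(pred_age, true_age, is_patient):
    """Report age-prediction error and patient-control separation for one model."""
    pred_age = np.asarray(pred_age, dtype=float)
    true_age = np.asarray(true_age, dtype=float)
    is_patient = np.asarray(is_patient, dtype=bool)
    gap = pred_age - true_age  # brain-age gap per subject
    return {
        "mae_controls": float(np.abs(gap[~is_patient]).mean()),
        "cohens_d": float(cohens_d(gap[is_patient], gap[~is_patient])),
    }

# Made-up predictions for three controls followed by three patients.
true_age = [60, 65, 70, 60, 65, 70]
pred_age = [61, 68, 75, 62, 69, 76]
is_patient = [False, False, False, True, True, True]
report = evaluate_brain_age_model(pred_age, true_age, is_patient)
print(report)
```

Running the same function on each candidate model gives one row per model, which makes it easy to see whether the model with the lowest MAE is also the one with the largest patient-control effect size.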

Experimental Workflow: The logical relationship between model complexity, age prediction accuracy, and clinical sensitivity is summarized below.

Issue 3: Leveraging Normative Models with Limited Local Controls

Problem: You want to use a pre-trained normative model to quantify individual deviations in your clinical cohort, but you have a very small number of healthy controls from your local scanner site for calibration.

Solution Protocol: Follow best practices for normative modeling to ensure your deviation scores (e.g., z-scores) are valid [23].

- Choose a Pretrained Platform: Select a platform (e.g., BrainChart, Brain MoNoCle, PCN Toolkit) that supports the morphometric measure you are analyzing (e.g., cortical thickness) and is compatible with your atlas [23].

- Calibrate with Available Controls: Use any site-matched healthy controls you have for calibration. Hierarchical Bayesian models can adapt reasonably well even with small samples [23].

- Understand the Limitations: If you have fewer than 30 controls, be aware that your effect size estimates for group differences may be unstable. Where possible, use multiple platforms to validate key findings, as absolute z-scores can vary systematically between tools even if relative patterns are consistent [23].

Table: Essential Resources for Stable Neuroimaging Predictive Modeling

| Resource Category | Specific Examples | Function & Utility |

|---|---|---|

| Large-Scale, Representative Datasets | UK Biobank [22], Adolescent Brain Cognitive Development (ABCD) Study [20] | Provides large, demographically diverse training data that helps reduce bias and improves model generalizability by capturing broader population variance. |

| Normative Modeling Platforms | Brain MoNoCle [23], BrainChart [23], PCN Toolkit [23], CentileBrain [23] | Offer pre-trained models that establish population benchmarks for brain structure. They allow quantification of individual deviations without needing large, matched control groups. |

| Machine Learning Libraries with Regularization | Scikit-learn (Ridge regression), PyTorch/TensorFlow (with L2 penalty) | Provides algorithms to implement simpler models and control model complexity through regularization, which can enhance sensitivity to disease-relevant signals [22]. |

| Data Harmonization Tools | ComBat | Statistical methods designed to remove unwanted inter-scanner and multi-site variability from neuroimaging data, directly addressing a major source of heterogeneity. |

Practical Techniques for Variance Reduction in Model Pipelines

Frequently Asked Questions (FAQs) & Troubleshooting

FAQ 1: Why is feature selection critical for neuroimaging classification models, and how does it directly impact variance? Feature selection is indispensable in neuroimaging due to the high-dimensional nature of the data (e.g., thousands of functional connectivity features from fMRI) and the typically small sample sizes. It reduces dimensionality to prevent overfitting, improves model generalization, and enhances the interpretability of biomarkers. Crucially, robust feature selection directly reduces variance in model performance by identifying a stable set of features that are consistently informative across different data splits or cohorts, rather than fitting to noise. Instability in selected features is a major contributor to the high variance often observed in neuroimaging model performance [24] [25] [26].

FAQ 2: I am getting different feature sets every time I run my feature selection with cross-validation. How can I improve stability? This is a classic sign of feature instability. To address this:

- Quantify Stability: Use stability metrics like the Kuncheva index or Jaccard index to measure how consistent your selected features are across cross-validation folds. One study reported a Kuncheva index of 0.74 for the LASSO method, indicating good stability [25].

- Prioritize Stable Algorithms: Embedded methods like LASSO often demonstrate higher stability compared to some filter or wrapper methods [25].

- Incorporate Stability into Selection: Employ frameworks that explicitly consider feature stability, such as stability-driven feature selection or multi-stage algorithms that filter out volatile features [25] [27].
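Both stability metrics are short to implement. The sketch below uses the standard definitions: the Jaccard index is the intersection over the union of two feature sets, and the Kuncheva index corrects the overlap of two equal-sized sets for the overlap expected by chance. The helper `mean_pairwise` and the example feature sets are illustrative assumptions.

```python
from itertools import combinations

def jaccard_index(a, b):
    """|A intersect B| / |A union B|; defined as 1.0 when both sets are empty."""
    a, b = set(a), set(b)
    union = a | b
    return len(a & b) / len(union) if union else 1.0

def kuncheva_index(a, b, n_features):
    """Chance-corrected overlap of two feature sets of equal size k."""
    a, b = set(a), set(b)
    k = len(a)
    if len(b) != k or not 0 < k < n_features:
        raise ValueError("Kuncheva index needs equal-sized sets with 0 < k < n_features")
    r = len(a & b)
    return (r * n_features - k * k) / (k * (n_features - k))

def mean_pairwise(metric, feature_sets, **kwargs):
    """Average a pairwise stability metric over all pairs of folds."""
    pairs = list(combinations(feature_sets, 2))
    return sum(metric(a, b, **kwargs) for a, b in pairs) / len(pairs)

# Hypothetical feature indices selected in three cross-validation folds.
fold_sets = [{1, 2, 3, 4}, {1, 2, 3, 5}, {1, 2, 4, 5}]
mean_jaccard = mean_pairwise(jaccard_index, fold_sets)
mean_kuncheva = mean_pairwise(kuncheva_index, fold_sets, n_features=20)
print(mean_jaccard, mean_kuncheva)
```

Because the Kuncheva index assumes equal-sized sets, it fits selectors that return a fixed number of features; for methods such as LASSO, where the number of retained features varies by fold, the Jaccard index is the simpler choice.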

FAQ 3: My model's cross-validation accuracy is high, but it fails on an external validation set. Could my feature selection method be the cause? Yes. This is a common symptom of overfitting during the feature selection process. If feature selection is performed on the entire dataset before cross-validation, information from the test set leaks into the training process. The solution is to perform nested feature selection: execute the entire feature selection process independently within each training fold of the cross-validation. This ensures that the test fold is completely unseen during both feature selection and model training, providing a more reliable estimate of generalization error and reducing performance variance on external datasets [8].
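The size of this leakage effect is easy to demonstrate on pure noise. The sketch below assumes scikit-learn is available and uses an ANOVA filter with 20 retained features purely for illustration; since the labels are unrelated to the features, any accuracy well above 50% is an artifact of the selection procedure.

```python
import numpy as np
from sklearn.feature_selection import SelectKBest, f_classif
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import StratifiedKFold, cross_val_score
from sklearn.pipeline import make_pipeline

rng = np.random.default_rng(0)
X = rng.normal(size=(60, 2000))  # pure-noise "features", far more than subjects
y = np.repeat([0, 1], 30)        # labels carry no real signal

cv = StratifiedKFold(n_splits=5, shuffle=True, random_state=0)

# Leaky: features are chosen using all subjects, including future test folds.
X_leaky = SelectKBest(f_classif, k=20).fit_transform(X, y)
leaky_acc = cross_val_score(
    LogisticRegression(max_iter=1000), X_leaky, y, cv=cv
).mean()

# Correct: the selector sits inside the pipeline, so it is refit on each
# training fold and never sees the corresponding test fold.
pipe = make_pipeline(SelectKBest(f_classif, k=20), LogisticRegression(max_iter=1000))
nested_acc = cross_val_score(pipe, X, y, cv=cv).mean()

print(f"selection on full data: {leaky_acc:.2f}")
print(f"selection inside folds: {nested_acc:.2f}")
```

Wrapping every data-dependent step (scaling, selection, harmonization) in a single pipeline object is a simple way to make this kind of leak structurally impossible.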

FAQ 4: How do I choose between Filter, Wrapper, and Embedded methods for my neuroimaging data? The choice involves a trade-off between computational cost, stability, and performance. The following table provides a comparative overview:

Table 1: Comparison of Feature Selection Method Types

| Method Type | Core Principle | Key Strengths | Common Pitfalls | Ideal Neuroimaging Use Case |

|---|---|---|---|---|

| Filter Methods (e.g., ANOVA) | Selects features based on statistical scores (e.g., F-value) independent of the classifier [25]. | Fast computation; model-agnostic; scalable to very high-dimensional data. | Ignores feature dependencies and interaction with the classifier; may select redundant features. | Initial pre-filtering to drastically reduce feature space before applying more complex methods. |

| Wrapper Methods (e.g., Relief, GWO) | Uses the performance of a specific classifier to evaluate and select feature subsets [24] [25]. | Can capture feature interactions; often finds high-performing feature subsets. | Computationally intensive; high risk of overfitting; feature sets can be unstable. | When computational resources are available and the goal is to maximize accuracy for a specific model. |

| Embedded Methods (e.g., LASSO) | Performs feature selection as an integral part of the model training process [25] [26]. | Balances performance and computation; built-in regularization reduces overfitting; often more stable. | Tied to the learning algorithm's inherent biases. | General-purpose robust selection for linear models; highly effective for connectome-based classification [25]. |

FAQ 5: What are some advanced strategies to further enhance feature selection for neuroimaging?

- Multi-task Feature Selection: This approach leverages information from multiple related datasets (e.g., different patient cohorts) to identify a more robust set of features that generalize better. It can be implemented using optimization algorithms like the Gray Wolf Optimizer (GWO) [24] [28].

- Hybrid and Multi-stage Methods: Combine the strengths of different methods. For example, use a filter method for initial aggressive dimensionality reduction, followed by a more refined embedded or wrapper method on the shortlisted features [26] [27].

- Integrating Explainability: Use techniques like counterfactual explanations to understand why certain features were selected. This can validate the clinical relevance of features by showing how perturbing them would change the model's prediction (e.g., from schizophrenia to healthy control) [24] [28].

Experimental Protocols & Methodologies

Protocol 1: Implementing a Stability-Assessed Feature Selection Pipeline

This protocol is designed to ensure the selected features are both predictive and stable.

Table 2: Key Reagents & Computational Tools

| Research Reagent / Tool | Function / Explanation |

|---|---|

| fMRI/sMRI Preprocessed Data | Input data; typically represented as a connectivity matrix (connectome) or regional volumetric/thickness measures [24] [27]. |

| Feature Stability Indices | Kuncheva Index (KI): Corrects for the chance that features overlap across folds. Jaccard Index: Measures the similarity between feature sets. A higher score indicates greater stability [25]. |

| LASSO (Logistic Regression) | An embedded method that uses L1 regularization to shrink coefficients of irrelevant features to exactly zero, effectively performing feature selection [25]. |

| Nested Cross-Validation | The outer loop estimates model performance, while an inner loop performs feature selection and hyperparameter tuning on the training fold only, preventing data leakage [8]. |

Workflow:

- Data Preparation: Extract features from neuroimages (e.g., vectorize the upper triangular elements of a functional connectivity matrix) [24] [25].

- Nested CV Setup: Define outer K-folds (e.g., 10-fold) for performance estimation and inner folds (e.g., 5-fold) for feature selection tuning.

- Feature Selection & Training: For each outer training fold:

  - Perform feature selection (e.g., using LASSO, ANOVA) using the inner CV loop to tune parameters.

  - Train the final classifier (e.g., Logistic Regression, Random Forest) using only the selected features from that training fold.

- Stability Assessment: Collect all feature sets selected in each outer training fold. Calculate the Kuncheva and Jaccard indices across these folds to quantify stability [25].

- Validation: Assess the final model's performance on the held-out outer test folds and, if available, on a completely external dataset.
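The workflow can be sketched end to end with scikit-learn. This is a minimal example on synthetic data, with several simplifying assumptions: an L1-penalized logistic regression serves as both the selector and the classifier, the fold counts are reduced to 5 outer and 3 inner to keep it fast, the regularization grid is arbitrary, and only the Jaccard index is computed because the number of selected features varies between folds.

```python
import numpy as np
from itertools import combinations
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import GridSearchCV, StratifiedKFold
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

# Synthetic stand-in for vectorized connectivity features: 5 informative of 200.
rng = np.random.default_rng(0)
X = rng.normal(size=(100, 200))
y = (X[:, :5].sum(axis=1) + 0.5 * rng.normal(size=100) > 0).astype(int)

outer = StratifiedKFold(n_splits=5, shuffle=True, random_state=0)
inner = StratifiedKFold(n_splits=3, shuffle=True, random_state=1)
lasso = make_pipeline(
    StandardScaler(),
    LogisticRegression(penalty="l1", solver="liblinear"),
)
param_grid = {"logisticregression__C": [0.05, 0.1, 0.5]}  # illustrative grid

selected_sets, outer_scores = [], []
for train_idx, test_idx in outer.split(X, y):
    # The inner loop tunes the sparsity level on the outer training fold only.
    search = GridSearchCV(lasso, param_grid, cv=inner)
    search.fit(X[train_idx], y[train_idx])
    coef = search.best_estimator_.named_steps["logisticregression"].coef_.ravel()
    selected_sets.append(set(np.flatnonzero(coef).tolist()))
    outer_scores.append(search.score(X[test_idx], y[test_idx]))

def jaccard(a, b):
    union = a | b
    return len(a & b) / len(union) if union else 1.0

stability = np.mean([jaccard(a, b) for a, b in combinations(selected_sets, 2)])
print(f"outer accuracy: {np.mean(outer_scores):.2f}, mean Jaccard: {stability:.2f}")
```

Reporting the stability score next to the outer-fold accuracy makes it visible when a model performs well while relying on a different feature set in every fold.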

Figure 1: Stability-assessed nested cross-validation workflow for robust feature selection.

Protocol 2: Advanced Multi-Task Feature Selection with Counterfactual Explanation

This protocol uses multiple datasets and explainable AI to enhance robustness and interpretability.

Workflow:

- Multi-Task Setup: Pool data from several related neuroimaging datasets (e.g., different schizophrenia (SZ) cohorts). The learning objective is shared across these "tasks" to find universally relevant features [24] [28].

- Multi-Task Feature Selection: Implement a robust multi-task feature selection framework. This can be achieved using an optimization algorithm like the Gray Wolf Optimizer (GWO). The GWO algorithm simulates wolf pack hierarchy (α, β, δ wolves as best solutions) to efficiently search the feature space across multiple tasks, identifying a robust subset of features [24].

- Model Training & Validation: Train a classification model using the selected features and evaluate its performance across the different cohorts.

- Counterfactual Explanation: To interpret the selected features, generate counterfactual examples. For a patient classified as SZ, minimally perturb the abnormal functional connectivity features (identified in step 2) until the model prediction flips to "healthy." These perturbations reveal the minimal changes needed to alter the outcome, providing clinically actionable insights [24] [28].
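For intuition about the counterfactual step, the linear case has a closed-form answer. The sketch below is a simplification and not the counterfactual generator of [24] [28]: it assumes a linear classifier with hypothetical weights `w` and bias `b`, and finds the smallest L2 change to a feature vector that moves the decision score just across zero.

```python
import numpy as np

def linear_counterfactual(x, w, b, margin=1e-3):
    """Smallest L2 perturbation of x that flips the sign of the score w.x + b.

    The move is along the weight vector, so features with larger absolute
    weights change the most. Assumes the original score is non-zero.
    """
    score = float(w @ x + b)
    # Overshoot the decision boundary by `margin` so the label actually flips.
    step = (score + np.sign(score) * margin) / float(w @ w)
    return x - step * w

# Hypothetical 3-feature model: positive score = "SZ", negative = "healthy".
w = np.array([2.0, -1.0, 0.5])
b = -0.2
x_patient = np.array([1.0, 0.2, 0.4])

x_cf = linear_counterfactual(x_patient, w, b)
delta = x_cf - x_patient
print("original score:", float(w @ x_patient + b))
print("counterfactual score:", float(w @ x_cf + b))
print("required change per feature:", delta)
```

For nonlinear models no such closed form exists, and the perturbation is typically found by iterative optimization, often with added constraints to keep the counterfactual plausible.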

Figure 2: Multi-task feature selection workflow with model explanation.

Data Presentation: Quantitative Comparisons

Table 3: Exemplary Performance and Stability of Different Feature Selection Methods on a Neuroimaging Classification Task (Schizophrenia vs. Healthy Controls) [25]

| Feature Selection Method | Type | Reported Accuracy (%) | F1-Score (%) | Stability (Kuncheva Index) | Stability (Jaccard Index) |

|---|---|---|---|---|---|

| LASSO | Embedded | 91.85 | 91.98 | 0.74 | 0.69 |

| Relief | Wrapper | Results reported as lower than LASSO | Results reported as lower than LASSO | Lower than LASSO | Lower than LASSO |

| ANOVA | Filter | Results reported as lower than LASSO | Results reported as lower than LASSO | Lower than LASSO | Lower than LASSO |

Note: This table summarizes results from a specific study on an fMRI dataset. Actual performance will vary with data and experimental conditions. It demonstrates that LASSO, an embedded method, can achieve high performance with superior feature stability.

Advanced Optimization Algorithms for Stable Model Training

Frequently Asked Questions

Q: Why does my neuroimaging classifier show high variance in cross-validation results, and how can optimization algorithms help?

High variance in cross-validation results often stems from the sensitivity of model comparison procedures to the cross-validation configuration, particularly in neuroimaging studies with limited sample sizes. Research demonstrates that the statistical significance of accuracy differences can be artificially inflated by increasing the number of folds or repetitions, which opens the door to p-hacking and reproducibility problems [8] [29]. Optimization algorithms do not fix a flawed comparison procedure, but they can reduce the training-side contribution to variance: adaptive learning rates and momentum terms give more stable convergence and lower sensitivity to initial conditions [30] [31].

Q: When should I choose adaptive optimizers like Adam over traditional SGD for neuroimaging classification?

Adam is particularly beneficial when working with sparse gradients or noisy data, as it adapts learning rates for each parameter individually and incorporates momentum [31]. However, well-tuned SGD with momentum often achieves better final test accuracy in image classification tasks: Adam tends to converge faster initially but may overfit the training data [31]. For neuroimaging applications, consider Adam when dealing with high-dimensional feature spaces or when computational efficiency is prioritized, while SGD with momentum may be preferable when generalization performance is the primary concern [32] [31].
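The difference between the two optimizers is easiest to see in their update rules. The sketch below implements the standard SGD-with-momentum and Adam updates in NumPy and runs them on a toy ill-conditioned quadratic; the loss function, step count, and function names are illustrative assumptions, and the example says nothing about generalization.

```python
import numpy as np

def sgd_momentum_step(theta, grad, state, lr=0.01, momentum=0.9):
    """One SGD update with classical momentum."""
    velocity = momentum * state.get("v", np.zeros_like(theta)) - lr * grad
    state["v"] = velocity
    return theta + velocity

def adam_step(theta, grad, state, lr=0.001, beta1=0.9, beta2=0.999, eps=1e-8):
    """One Adam update with bias-corrected first and second moment estimates."""
    t = state.get("t", 0) + 1
    m = beta1 * state.get("m", np.zeros_like(theta)) + (1 - beta1) * grad
    v = beta2 * state.get("v", np.zeros_like(theta)) + (1 - beta2) * grad**2
    state.update(t=t, m=m, v=v)
    m_hat = m / (1 - beta1**t)
    v_hat = v / (1 - beta2**t)
    # Each parameter gets its own effective step size via sqrt(v_hat).
    return theta - lr * m_hat / (np.sqrt(v_hat) + eps)

# Toy loss: a quadratic bowl that is 100x steeper in the second dimension.
scales = np.array([1.0, 100.0])

def loss(theta):
    return 0.5 * float(np.sum(scales * theta**2))

def grad(theta):
    return scales * theta

def run(step_fn, n_steps=200):
    theta, state = np.array([1.0, 1.0]), {}
    for _ in range(n_steps):
        theta = step_fn(theta, grad(theta), state)
    return loss(theta)

initial_loss = loss(np.array([1.0, 1.0]))
sgd_loss = run(sgd_momentum_step)
adam_loss = run(adam_step)
print(initial_loss, sgd_loss, adam_loss)
```

Note how Adam's step is normalized per parameter, so the steep and shallow directions move at a similar pace, whereas SGD with momentum uses one global learning rate that must suit the steepest direction.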

Q: What optimization techniques are most effective for handling small-to-medium neuroimaging datasets (N<1000)?

For smaller neuroimaging datasets, regularization is crucial for preventing overfitting. L1 and L2 regularization penalize model complexity; L1 also performs feature selection and has been reported to reduce the number of features by up to 80% without significant performance loss [33]. Dropout, which randomly sets a portion of input units to zero during training, has been reported to improve accuracy by 2-5% on average in deep neural networks [33]. Early stopping can reduce training time by up to 50% while limiting overfitting [33]. Batch normalization, which normalizes layer inputs, is reported to accelerate training by 2-4 times and improve accuracy by 2-5% [33].
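Of these techniques, early stopping is the simplest to add to an existing training loop. The sketch below is framework-agnostic; the class name, the `patience` and `min_delta` parameters, and the validation losses are hypothetical values chosen for illustration.

```python
class EarlyStopping:
    """Stop training once validation loss has not improved for `patience` epochs."""

    def __init__(self, patience=5, min_delta=0.0):
        self.patience = patience
        self.min_delta = min_delta
        self.best = float("inf")
        self.bad_epochs = 0

    def step(self, val_loss):
        """Record one epoch's validation loss; return True when training should stop."""
        if val_loss < self.best - self.min_delta:
            self.best = val_loss
            self.bad_epochs = 0
        else:
            self.bad_epochs += 1
        return self.bad_epochs >= self.patience

# Hypothetical validation losses, one per epoch.
val_losses = [1.0, 0.8, 0.7, 0.72, 0.71, 0.73, 0.74, 0.75]
stopper = EarlyStopping(patience=3)
stopped_epoch = None
for epoch, val_loss in enumerate(val_losses):
    if stopper.step(val_loss):
        stopped_epoch = epoch
        break
print(stopped_epoch, stopper.best)
```

In practice the model weights from the best epoch are saved and restored when the stop is triggered, and the validation set used for this decision must be separate from the test fold.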

Q: How can I optimize generative AI models for neuroimaging applications while managing computational costs?

Generative AI optimization employs techniques like quantization, pruning, and knowledge distillation to reduce computational demands [33]. Quantization reduces model size by up to 75% by lowering numerical precision from 32-bit floats to 8-bit integers [33]. Pruning removes redundant weights, potentially reducing model size by up to 90% without significant accuracy loss [33]. Knowledge distillation, where a large "teacher" model trains a compact "student" network, improves student model accuracy by 3-5% on average while reducing complexity [33]. These approaches are particularly valuable for deploying models on clinical hardware with limited resources [34].

Troubleshooting Guides

Problem: Unstable Convergence During Model Training

Symptoms: Loss values oscillate wildly between iterations, models fail to converge to a minimum, or training produces different results with identical data and hyperparameters.

Diagnosis and Solutions:

Implement Adaptive Learning Rate Methods

- Switch from basic SGD to Adam or RMSprop, which adjust learning rates for each parameter automatically [31]

- Adam combines the benefits of RMSprop with momentum, making it particularly effective for handling sparse gradients and noisy data [33] [31]

- For neuroimaging data with varying feature importance, Adam's parameter-specific learning rates prevent overshooting in sensitive dimensions [31]

Apply Gradient Clipping

- Implement gradient value constraints to prevent explosion during backpropagation

- Particularly important in recurrent architectures for neuroimaging time series analysis

- Set clipping threshold based on observed gradient norms during initial training epochs
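Clipping by global norm, one common form of gradient clipping, can be sketched in a few lines. The function name and threshold are illustrative assumptions; deep learning frameworks ship their own equivalents, which should be preferred in real training code.

```python
import numpy as np

def clip_by_global_norm(grads, max_norm):
    """Rescale a list of gradient arrays so their joint L2 norm is at most max_norm.

    Returns the (possibly rescaled) gradients and the norm before clipping,
    which is worth logging to choose a sensible threshold.
    """
    total_norm = float(np.sqrt(sum(np.sum(g**2) for g in grads)))
    scale = min(1.0, max_norm / (total_norm + 1e-12))
    return [g * scale for g in grads], total_norm

# Two hypothetical parameter gradients with a joint norm of 5.
grads = [np.array([3.0, 0.0]), np.array([0.0, 4.0])]
clipped, norm_before = clip_by_global_norm(grads, max_norm=1.0)
norm_after = float(np.sqrt(sum(np.sum(g**2) for g in clipped)))
print(norm_before, norm_after)
```

Rescaling all gradients by one common factor preserves the update direction, which is the main difference from clipping each gradient value independently.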

Add Momentum Terms

Problem: Model Performance Variance Across Cross-Validation Folds

Symptoms: Significant accuracy differences between cross-validation folds, inconsistent feature importance across splits, or unreliable model selection.

Diagnosis and Solutions:

Address Statistical Flaws in CV Comparison

- Recognize that paired t-tests on cross-validation scores are fundamentally flawed due to violated independence assumptions [8]

- Implement statistical testing that accounts for the implicit dependency in accuracy scores from overlapping training folds [8]

- Use the same cross-validation splits when comparing multiple algorithms to ensure fair comparison
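One widely used correction for this dependency is the corrected resampled t-test of Nadeau and Bengio, which inflates the variance term by the ratio of test to training set size. It is shown here as an example of a dependency-aware test, not necessarily the procedure used in [8]; the per-fold accuracy differences are made up.

```python
import math
from statistics import mean, variance

def naive_t(diffs):
    """Standard paired t statistic; treats fold scores as independent."""
    j = len(diffs)
    return mean(diffs) / math.sqrt(variance(diffs) / j)

def corrected_t(diffs, n_train, n_test):
    """Corrected resampled t statistic (Nadeau and Bengio).

    The extra n_test / n_train term accounts for overlapping training sets.
    Compare against a t distribution with len(diffs) - 1 degrees of freedom.
    """
    j = len(diffs)
    return mean(diffs) / math.sqrt((1.0 / j + n_test / n_train) * variance(diffs))

# Hypothetical accuracy differences (model A minus model B) from 10-fold CV
# on 200 subjects: 180 for training and 20 for testing in each fold.
diffs = [0.02, 0.01, 0.03, 0.00, 0.02, 0.01, 0.04, -0.01, 0.02, 0.01]
t_naive = naive_t(diffs)
t_corr = corrected_t(diffs, n_train=180, n_test=20)
print(f"naive t = {t_naive:.2f}, corrected t = {t_corr:.2f}")
```

The naive statistic grows with the number of folds and repetitions even when the underlying difference does not, whereas the corrected version is bounded by the test-to-train ratio, which is why it is less sensitive to the choice of CV configuration.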

Optimize Cross-Validation Configuration

Apply Regularization Techniques

- Use L2 regularization to encourage smaller weights and smoother decision boundaries [33]

- Implement dropout for neural networks, which has been shown to improve accuracy by 2-5% on average [33]

- Consider elastic net regularization that combines L1 and L2 penalties for neuroimaging data with correlated features

Problem: Long Training Times for Deep Neuroimaging Models

Symptoms: Training processes taking days or weeks, inability to complete hyperparameter tuning due to time constraints, or models that cannot be updated with new data in clinically relevant timeframes.

Diagnosis and Solutions:

Implement Model Compression Techniques

- Apply pruning to remove unnecessary weights and connections [33]

- Use quantization to reduce numerical precision from 32-bit to 8-bit representations, decreasing model size by up to 75% [33]

- Employ knowledge distillation to train smaller, faster student models; distillation has been reported to improve student accuracy by 3-5% on average while reducing complexity [33]
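The 75% figure for quantization follows directly from the storage arithmetic: an 8-bit integer takes one quarter of the space of a 32-bit float. The sketch below shows a simple symmetric per-tensor scheme in NumPy on random weights; production toolkits use more elaborate calibration, so this is only an illustration of the idea.

```python
import numpy as np

def quantize_int8(weights):
    """Symmetric per-tensor quantization of float32 weights to int8."""
    scale = float(np.abs(weights).max()) / 127.0
    if scale == 0.0:
        scale = 1.0  # all-zero tensor: any scale works
    q = np.clip(np.round(weights / scale), -127, 127).astype(np.int8)
    return q, scale

def dequantize(q, scale):
    """Map int8 codes back to approximate float32 weights."""
    return q.astype(np.float32) * scale

rng = np.random.default_rng(0)
weights = rng.normal(size=(256, 256)).astype(np.float32)  # stand-in for a layer

q, scale = quantize_int8(weights)
size_reduction = 1.0 - q.nbytes / weights.nbytes
max_error = float(np.abs(dequantize(q, scale) - weights).max())
print(f"size reduction: {size_reduction:.0%}, max abs error: {max_error:.4f}")
```

The rounding error per weight is bounded by about half the quantization step, so whether accuracy survives depends on how sensitive the network is to perturbations of that size.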

Utilize Architecture Optimization

- Implement Neural Architecture Search (NAS) to automatically design efficient architectures, improving accuracy by an average of 15% over manual designs [33]

- Use efficient network designs like MobileNet for deployment on clinical hardware, achieving 70-90% of larger model accuracy with 10x fewer parameters [33]

- Consider model parallelism techniques to distribute training across multiple GPUs

Optimize Hyperparameter Search

- Replace grid search with Bayesian optimization, which outperforms grid search by 20-30% in efficiency [33]

- Use random search for hyperparameter optimization, which often outperforms grid search with less computational cost [33]

- Implement automated hyperparameter tuning frameworks like Optuna, Hyperopt, or Keras Tuner [33]
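Random search needs no extra dependencies and is a reasonable baseline before moving to a dedicated framework. The sketch below samples hyperparameters log-uniformly; `toy_objective` is a hypothetical stand-in for a function that would return the mean inner-fold validation score, and the search ranges are illustrative.

```python
import math
import random

def random_search(objective, space, n_trials=30, seed=0):
    """Sample each hyperparameter log-uniformly and keep the best-scoring trial."""
    rng = random.Random(seed)
    best_params, best_score = None, -math.inf
    for _ in range(n_trials):
        params = {
            name: 10 ** rng.uniform(math.log10(low), math.log10(high))
            for name, (low, high) in space.items()
        }
        score = objective(params)
        if score > best_score:
            best_params, best_score = params, score
    return best_params, best_score

def toy_objective(params):
    # Stand-in for validation accuracy; peaks at lr=1e-3, weight_decay=1e-4.
    return -(
        (math.log10(params["lr"]) + 3) ** 2
        + (math.log10(params["weight_decay"]) + 4) ** 2
    )

search_space = {"lr": (1e-5, 1e-1), "weight_decay": (1e-6, 1e-2)}
best_params, best_score = random_search(toy_objective, search_space, n_trials=50)
print(best_params, best_score)
```

Whatever search method is used, the objective should be evaluated on inner validation folds only, so that the outer test folds remain untouched by tuning.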

Experimental Protocols for Optimization Evaluation

Protocol 1: Evaluating Optimization Algorithm Stability

Objective: Quantify the stability of different optimization algorithms for neuroimaging classification tasks while controlling for cross-validation variability.

Methodology:

Data Preparation

Experimental Framework

Optimization Comparison

- Train identical model architectures with different optimizers (SGD, SGD+momentum, Adam, RMSprop)

- Use multiple cross-validation configurations (K=2-50 folds, M=1-10 repetitions) [8]

- Record accuracy scores and loss trajectories for each fold/repetition

Stability Metrics

- Calculate variance in accuracy across folds for each optimizer

- Compute convergence time and number of iterations until stabilization

- Record sensitivity to weight initialization through multiple random seeds

Protocol 2: Cross-Validation Configuration Impact Assessment

Objective: Systematically evaluate how cross-validation setups affect perceived optimization performance and statistical significance.

Methodology:

Dataset Selection

- Utilize three publicly available neuroimaging datasets with different characteristics [8]:

  - Alzheimer's Disease Neuroimaging Initiative (ADNI): 222 controls vs. 222 patients

  - Autism Brain Imaging Data Exchange (ABIDE): 391 ASD vs. 458 controls

  - Adolescent Brain Cognitive Development (ABCD): 6125 boys vs. 5600 girls

Cross-Validation Design

- Implement repeated K-fold cross-validation with systematic variation [8]:

  - K values: 2, 5, 10, 20, 50

  - M repetitions: 1, 3, 5, 10

- Use stratified sampling to maintain class distributions in all splits
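The grid of cross-validation configurations can be generated with scikit-learn's `RepeatedStratifiedKFold`. The sketch below uses a balanced placeholder dataset of 200 subjects (an assumption for illustration); only the labels matter for building the splits, and a fixed `random_state` makes the same splits reusable across the models being compared.

```python
import numpy as np
from sklearn.model_selection import RepeatedStratifiedKFold

# Placeholder data: 100 subjects per class; features are irrelevant for splitting.
y = np.repeat([0, 1], 100)
X = np.zeros((len(y), 1))

configs = {}
for k in (2, 5, 10, 20, 50):
    for m in (1, 3, 5, 10):
        cv = RepeatedStratifiedKFold(n_splits=k, n_repeats=m, random_state=0)
        # Materialize the splits so every model sees identical train/test indices.
        configs[(k, m)] = list(cv.split(X, y))

for (k, m), splits in sorted(configs.items()):
    test_size = len(splits[0][1])
    print(f"K={k:2d}, M={m:2d}: {len(splits):3d} scores, {test_size} test subjects per fold")
```

Each configuration yields K x M accuracy scores, which is precisely why the number of scores alone should not drive a significance test.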

Statistical Analysis

Optimization Performance Metrics

- Document how perceived optimizer superiority changes with CV parameters

- Identify configurations that minimize false positive findings

- Establish guidelines for CV setup based on dataset size and characteristics

Optimization Algorithm Performance Comparison

Table 1: Optimization Algorithm Characteristics for Neuroimaging Applications

| Algorithm | Best For | Key Parameters | Convergence Speed | Stability | Neuroimaging Considerations |

|---|---|---|---|---|---|

| SGD with Momentum | Final test accuracy, Generalization | Learning rate (0.01), Momentum (0.9) | Moderate | High | Often outperforms Adam in image classification tasks; preferred when generalization is critical [31] |

| Adam | Sparse gradients, Noisy data | Learning rate (0.001), β1 (0.9), β2 (0.999) | Fast | Moderate | Fast convergence but potential overfitting; good for high-dimensional neuroimaging data [31] |

| RMSprop | Non-stationary objectives | Learning rate (0.001), Decay rate (0.9) | Fast | Moderate | Adapts learning rates based on recent gradient magnitudes; effective for RNNs in time-series neuroimaging [33] |

| Adagrad | Sparse data, Feature-specific learning | Learning rate (0.01) | Moderate initially | High | Adapts learning rates individually for each parameter; effective for neuroimaging with heterogeneous feature importance [33] |

Table 2: Regularization Techniques for Variance Reduction in Neuroimaging Classification

| Technique | Mechanism | Implementation | Performance Improvement | Computational Cost |

|---|---|---|---|---|

| L1 Regularization | Feature selection through sparsity | Add absolute value of weights to loss | Reduces features by up to 80% without significant performance loss [33] | Low |

| L2 Regularization | Weight decay for smoother boundaries | Add squared weights to loss | Improves generalization; prevents overfitting | Low |

| Dropout | Prevents co-adaptation of features | Randomly disable units during training | Improves accuracy by 2-5% on average [33] | Moderate |

| Batch Normalization | Reduces internal covariate shift | Normalize layer inputs | Accelerates training 2-4x; improves accuracy 2-5% [33] | Moderate |

| Early Stopping | Prevents overfitting to training data | Monitor validation loss and stop when it plateaus | Reduces training time by up to 50% [33] | Low |

Research Reagent Solutions

Table 3: Essential Computational Tools for Optimization Experiments

| Tool Name | Type | Primary Function | Application in Neuroimaging Optimization |

|---|---|---|---|

| Optuna | Hyperparameter Optimization Framework | Automated hyperparameter tuning | Implements Bayesian optimization for efficient hyperparameter search; reduces manual tuning effort [33] |

| TensorRT | Deep Learning Optimization SDK | Model optimization for inference | Optimizes trained models for deployment on clinical hardware; reduces inference time by up to 80% [33] |

| ONNX Runtime | Model Interoperability Framework | Cross-platform model deployment | Standardizes model optimization across different frameworks and hardware platforms [33] |

| OpenVINO Toolkit | Hardware Acceleration Toolkit | Model optimization for Intel hardware | Provides quantization and pruning capabilities specifically optimized for CPU deployment [35] |

| FastMRI Dataset | Benchmark Dataset | Accelerated MRI reconstruction | Provides public k-space data for evaluating reconstruction algorithms; enables standardized optimization comparison [34] |

Optimization Workflow Visualization

Figure: Optimization workflow for stable training.

Figure: CV configuration for variance reduction.

Data Augmentation and Synthetic Data Generation with Diffusion Models

For researchers in neuroimaging and drug development, achieving robust classification models is often hindered by high variance, frequently stemming from limited dataset sizes and inherent data heterogeneity. Data augmentation and synthetic data generation present powerful strategies to mitigate this issue. By artificially expanding and balancing training datasets, these techniques help models learn more generalized features, ultimately reducing overfitting and improving reliability on unseen data.

Among the various generative approaches, Denoising Diffusion Probabilistic Models (DDPMs) have recently emerged as a leading method for generating high-quality, diverse synthetic data [36]. This technical support center provides a practical guide to implementing these advanced methods, specifically framed within the context of neuroimaging classification tasks.

Frequently Asked Questions (FAQs)

Q1: Why should I use diffusion models over GANs for neuroimaging data augmentation?

Diffusion models offer several advantages for neuroimaging applications. They are known for their training stability, reducing the risk of mode collapse that can plague Generative Adversarial Networks (GANs) [36]. Furthermore, they demonstrate a superior ability to model the underlying complex data distributions of brain images, leading to high-fidelity generations that preserve crucial anatomical details [37]. Their probabilistic nature also provides more interpretable intermediate states during the generation process [36].

Q2: What are the primary challenges when using synthetically generated neuroimaging data?

While powerful, synthetic data generation comes with key challenges that must be managed:

- Realism and Clinical Relevance: The synthetic data must accurately mirror the complex, high-dimensional statistics of real neuroimaging data. There is a risk of introducing subtle, artificial patterns or missing critical anatomical features, creating a "simulation-to-reality gap" [38] [39] [40].

- Data Pollution and Feedback Loops: Using synthetic data can lead to "model autophagy disorder (MAD)," where models trained on AI-generated outputs degrade in quality and diversity over generations if not carefully validated against real data [40].

- Privacy Risks: The quest for high fidelity creates a paradox: the more realistic the synthetic data, the higher the risk it may inadvertently reveal information about the real individuals in the training dataset [40].

Q3: How can I ensure my synthetic data improves model fairness and reduces bias?

Synthetic data can be a tool to enhance fairness by intentionally oversampling underrepresented classes or demographic groups in your dataset [41] [40]. For instance, you can condition your diffusion model to generate data for specific, under-represented patient cohorts. It is crucial, however, to pair this with rigorous fairness metrics from toolkits like AIF360 to audit the outcomes, as the choice of what constitutes a "fair" representation is a value-laden decision that requires careful consideration [41] [40].

Q4: My model is overfitting even with basic data augmentation. What might be wrong?

Overfitting can persist or be exacerbated by augmentation that is either too aggressive or insufficiently diverse. Excessive augmentation can cause the model to learn the augmented patterns instead of the true underlying features [42]. Conversely, if the original dataset has fundamental quality issues like noise or lack of diversity, augmentation may not resolve them [42]. The solution is to strike a balance, ensure the quality of your base data, and consider more advanced augmentation techniques like diffusion models that introduce semantically meaningful variation [36].

Troubleshooting Guides

Issue 1: Synthetic Data Lacks Realism and Anatomical Coherence

Problem: The generated synthetic MRI scans appear blurry, contain anatomically implausible structures, or fail to capture the statistical properties of the real dataset.

| Possible Cause | Verification Method | Solution |

|---|---|---|

| Insufficient or low-quality training data. | Check dataset size and quality. Perform visual inspection by a domain expert. | Curate a higher-quality, larger base dataset. Apply minimal, non-destructive pre-processing to original images. |

| Poorly chosen diffusion model hyperparameters. | Review the noise schedule and loss curves. Compare samples from different training checkpoints. | Calibrate the noise schedule (β_t). Extend the number of diffusion timesteps (T). Ensure the model has converged. |

| Lack of conditioning during generation. | Check if generated samples are unconditioned. | Use a conditional diffusion model. Condition the generation on labels (e.g., disease status, demographic info) using classifier-free guidance [37]. |

Issue 2: Classification Model Performance Does Not Improve with Synthetic Data

Problem: After augmenting the training set with synthetic samples, the performance (e.g., accuracy, AUC) of the neuroimaging classifier does not improve, or even degrades.

| Possible Cause | Verification Method | Solution |

|---|---|---|

| Distribution mismatch between real and synthetic data. | Calculate quantitative metrics like Maximum Mean Discrepancy (MMD) [36] or FID. Perform a t-SNE visualization of real vs. synthetic feature embeddings. | Improve the generative model as per Issue 1. Use a "reference set" and positive/negative prompting to enhance inter-class separation [43]. |

| Data pollution or "closed-loop" training. | Audit your data pipeline. Ensure the test set contains only real, unseen data. | Strictly separate synthetic data used for training from real data used for testing and validation. Continuously validate with fresh real-world data [40]. |

| Classifier is overfitting to artifacts in synthetic data. | Monitor the gap between training and validation accuracy (using a real-data validation set). | Apply traditional augmentation (rotation, scaling) to synthetic data. Use regularization techniques (dropout, weight decay) in the classifier. Blend synthetic data with real data instead of replacing it. |
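The distribution-mismatch check in the first row can be sketched with a kernel two-sample statistic. The example below computes a biased estimate of the squared MMD with an RBF kernel in NumPy; the random vectors stand in for feature embeddings of real and synthetic images, and the bandwidth default (`gamma = 1 / n_features`) is an assumption rather than a recommendation from [36].

```python
import numpy as np

def rbf_kernel(a, b, gamma):
    """Pairwise RBF kernel matrix between the rows of a and b."""
    sq_dists = (a**2).sum(axis=1)[:, None] + (b**2).sum(axis=1)[None, :] - 2 * a @ b.T
    return np.exp(-gamma * np.maximum(sq_dists, 0.0))

def mmd2(x, y, gamma=None):
    """Biased estimate of the squared Maximum Mean Discrepancy."""
    if gamma is None:
        gamma = 1.0 / x.shape[1]
    return (
        rbf_kernel(x, x, gamma).mean()
        + rbf_kernel(y, y, gamma).mean()
        - 2 * rbf_kernel(x, y, gamma).mean()
    )

# Stand-ins for embeddings: one well-matched and one shifted synthetic set.
rng = np.random.default_rng(0)
real = rng.normal(0.0, 1.0, size=(200, 16))
synthetic_good = rng.normal(0.0, 1.0, size=(200, 16))
synthetic_shifted = rng.normal(1.0, 1.0, size=(200, 16))

mmd_good = mmd2(real, synthetic_good)
mmd_shifted = mmd2(real, synthetic_shifted)
print(f"matched: {mmd_good:.4f}, shifted: {mmd_shifted:.4f}")
```

A useful reference point is the MMD between two disjoint halves of the real data: synthetic data whose MMD to the real set is far above that baseline is unlikely to help the classifier.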

Issue 3: Long Training Times and High Computational Demand

Problem: Training the diffusion model is prohibitively slow and requires excessive computational resources.

| Possible Cause | Verification Method | Solution |

|---|---|---|

| Training on full, high-resolution 3D volumes. | Check the input dimensions to the model. | Use patch-based training instead of whole volumes. Employ Latent Diffusion Models (LDMs) that operate in a compressed latent space [36] [37]. |

| Inefficient sampling process. | Check the number of sampling steps (e.g., 1000 in vanilla DDPM). | Use accelerated sampling algorithms such as Denoising Diffusion Implicit Models (DDIM) [37], which can reduce the number of steps to 50-100. |

| Large model size. | Review the model architecture (e.g., U-Net parameters). | Optimize the U-Net architecture. Reduce model capacity if possible, especially when starting with smaller datasets. Use mixed-precision training. |
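
The step reduction offered by DDIM can be sketched as follows: pick an evenly spaced subsequence of the training timesteps and apply the deterministic (eta = 0) DDIM update along it. The linear noise schedule and the function names are assumptions. The `oracle_eps` function is only a stand-in that returns the exact noise for a known image so the loop can be checked; in practice it would be your trained denoising network.

```python
import numpy as np

T = 1000
betas = np.linspace(1e-4, 0.02, T)      # linear schedule (assumed)
alpha_bar = np.cumprod(1.0 - betas)     # cumulative product of (1 - beta)

def ddim_timesteps(T, n_steps):
    """Evenly spaced, descending subsequence of the T training timesteps."""
    return np.linspace(0, T - 1, n_steps).round().astype(int)[::-1]

def ddim_sample(x_T, eps_model, timesteps, alpha_bar):
    """Deterministic DDIM sampling (eta = 0) over the given timesteps."""
    x = x_T
    for i, t in enumerate(timesteps):
        a_t = alpha_bar[t]
        # After the last timestep we jump to the clean image (alpha_bar = 1).
        a_prev = alpha_bar[timesteps[i + 1]] if i + 1 < len(timesteps) else 1.0
        eps = eps_model(x, t)
        x0_pred = (x - np.sqrt(1.0 - a_t) * eps) / np.sqrt(a_t)
        x = np.sqrt(a_prev) * x0_pred + np.sqrt(1.0 - a_prev) * eps
    return x

rng = np.random.default_rng(0)
x0_true = rng.normal(size=(4, 8))       # placeholder "images"

def oracle_eps(x, t):
    # Stand-in for a trained network: returns the exact noise for x0_true.
    return (x - np.sqrt(alpha_bar[t]) * x0_true) / np.sqrt(1.0 - alpha_bar[t])

steps = ddim_timesteps(T, 50)           # 50 steps instead of 1000
noise = rng.normal(size=x0_true.shape)
x_T = np.sqrt(alpha_bar[steps[0]]) * x0_true + np.sqrt(1.0 - alpha_bar[steps[0]]) * noise
x0_hat = ddim_sample(x_T, oracle_eps, steps, alpha_bar)
```

With a real model the 50-step result is an approximation, so it is worth comparing sample quality against a longer sampling run before committing to a step count.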

Experimental Protocols & Workflows

Protocol 1: DDPM for Neuroimaging Data Augmentation

This protocol details the methodology for generating synthetic 3D T1-weighted brain MRI images to augment a training dataset for a classification task [36].

1. Objective: To generate anatomically coherent and realistic synthetic neuroimaging data to augment a limited dataset, thereby reducing variance in a downstream classification model.

2. Research Reagent Solutions

| Item | Function in the Protocol |

|---|---|

| Denoising Diffusion Probabilistic Model (DDPM) | The core generative framework. It learns to reverse a forward noising process to create data from noise [36]. |

| Pre-processed T1-weighted MRI Dataset | The high-quality, real data used to train the DDPM. Preprocessing may include skull-stripping, intensity normalization, and spatial normalization. |

| Multilayer Perceptron (MLP) or U-Net | The neural network architecture used within the DDPM to predict and remove noise at each denoising step [36]. |

| Quantitative Evaluation Metrics (e.g., MMD) | Used to assess the similarity between the distributions of real and generated data, ensuring statistical fidelity [36]. |

3. Workflow Diagram

Title: DDPM Training and Augmentation Workflow

4. Method Details:

- Forward Process: The original data \( x_0 \) is progressively corrupted with Gaussian noise over \( T \) timesteps. The data at step \( t \) is given by \( x_t = \sqrt{\bar{\alpha}_t}\, x_0 + \sqrt{1-\bar{\alpha}_t}\, \epsilon \), where \( \epsilon \sim \mathcal{N}(0, \mathbf{I}) \) and \( \bar{\alpha}_t \) is a function of the noise schedule \( \beta_t \) [36].

- Reverse Process: A neural network \( \epsilon_\theta(x_t, t) \) is trained to predict the noise \( \epsilon \) added at each timestep. The training objective is to minimize \( \mathcal{L} = \mathbb{E}_{t, x_0, \epsilon}\left[ \Vert \epsilon - \epsilon_\theta(x_t, t) \Vert^2 \right] \) [36].

- Sampling: To generate a new sample, start from pure noise \( x_T \sim \mathcal{N}(0, \mathbf{I}) \) and iteratively apply the trained model to denoise for \( T \) steps, following \( x_{t-1} = \frac{1}{\sqrt{1 - \beta_t}} \left( x_t - \frac{\beta_t}{\sqrt{1 - \bar{\alpha}_t}}\, \epsilon_\theta(x_t, t) \right) + \sigma_t z \) [36].
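
The three steps above can be sketched in NumPy as shown below. This is a minimal illustration, not the implementation from [36]: the linear noise schedule, the choice \( \sigma_t = \sqrt{\beta_t} \), the 0-based timestep indexing, and all function names are assumptions, and the noise prediction is passed in as an argument where a trained network would normally supply it.

```python
import numpy as np

T = 1000
betas = np.linspace(1e-4, 0.02, T)   # linear noise schedule (assumed)
alphas = 1.0 - betas
alpha_bar = np.cumprod(alphas)       # cumulative product up to each step

def q_sample(x0, t, eps):
    """Forward process: noisy sample at (0-based) step t."""
    return np.sqrt(alpha_bar[t]) * x0 + np.sqrt(1.0 - alpha_bar[t]) * eps

def noise_loss(eps, eps_pred):
    """Squared error between true and predicted noise, averaged over the batch."""
    return np.mean(np.sum((eps - eps_pred) ** 2, axis=-1))

def p_sample_step(x_t, t, eps_pred, z):
    """One reverse (denoising) step from t to t-1."""
    sigma_t = np.sqrt(betas[t])      # one common choice of sigma_t (assumed)
    mean = (x_t - betas[t] / np.sqrt(1.0 - alpha_bar[t]) * eps_pred) / np.sqrt(alphas[t])
    # No noise is added on the final step.
    return mean + (sigma_t * z if t > 0 else 0.0)

rng = np.random.default_rng(0)
x0 = rng.normal(size=(2, 16))        # placeholder for flattened image patches
eps = rng.normal(size=x0.shape)

x_noisy = q_sample(x0, 500, eps)
loss_perfect = noise_loss(eps, eps)                  # a perfect predictor gives zero loss
loss_zero_guess = noise_loss(eps, np.zeros_like(eps))

# With the true noise, the final reverse step recovers x0 exactly.
x_first = q_sample(x0, 0, eps)
x_rec = p_sample_step(x_first, 0, eps, z=rng.normal(size=x0.shape))
```

In a full training loop, `t` and `eps` would be resampled for every batch and `eps_pred` would be the network output for `(x_noisy, t)`.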

Protocol 2: Conditional Generation for Fairness Enhancement

This protocol uses a conditional diffusion model to address class imbalance and improve fairness in a binary classification task, inspired by applications in tabular data that can be adapted to neuroimaging [41].

1. Objective: To improve the fairness and performance of a classifier by generating synthetic data for underrepresented classes using a conditional diffusion model, followed by sample reweighting.

2. Workflow Diagram

Title: Conditional Generation for Fairness

3. Method Details:

- Conditional Training: The diffusion model is trained on the original dataset where each sample is associated with a condition (e.g., disease label, protected attribute). Techniques like classifier-free guidance are used, where the condition is randomly dropped during training to improve robustness [37].

- Targeted Generation: After training, the model is explicitly conditioned on the underrepresented class to generate a balanced number of synthetic samples.

- Sample Reweighting: As a complementary step, reweighting techniques from fairness toolkits like AIF360 can be applied. This involves calculating weights for different privilege-outcome groups (e.g., privileged group with positive outcome, unprivileged group with negative outcome) to further mitigate bias in the combined dataset during classifier training [41].
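
The reweighting idea can be illustrated with the standard reweighing formula, in which each sample in a (group, label) cell receives the weight P(group) × P(label) / P(group, label). The sketch below implements that formula directly in NumPy rather than calling AIF360, so the function names and the toy data are assumptions; for real experiments the toolkit's own implementation is the safer choice.

```python
import numpy as np

def reweighing_weights(group, label):
    """Per-sample weights that make group and label independent after weighting."""
    group, label = np.asarray(group), np.asarray(label)
    n = len(label)
    w = np.ones(n, dtype=float)
    for g in np.unique(group):
        for y in np.unique(label):
            cell = (group == g) & (label == y)
            if cell.sum() == 0:
                continue  # empty cell: nothing to weight
            w[cell] = (group == g).sum() * (label == y).sum() / (n * cell.sum())
    return w

def statistical_parity_difference(group, label, weights=None):
    """Weighted P(label=1 | group=0) minus P(label=1 | group=1)."""
    group, label = np.asarray(group), np.asarray(label)
    weights = np.ones(len(label)) if weights is None else np.asarray(weights)
    rate = lambda g: np.sum(weights[(group == g) & (label == 1)]) / np.sum(weights[group == g])
    return rate(0) - rate(1)

# Toy data: group 1 = privileged, label 1 = positive outcome (placeholder values).
group = np.array([1, 1, 1, 1, 1, 1, 0, 0, 0, 0])
label = np.array([1, 1, 1, 1, 0, 0, 1, 0, 0, 0])

weights = reweighing_weights(group, label)
spd_before = statistical_parity_difference(group, label)
spd_after = statistical_parity_difference(group, label, weights)
print(weights, spd_before, spd_after)
```

The resulting weights can be passed to any classifier that accepts per-sample weights during training on the combined real and synthetic dataset.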

The following table summarizes key quantitative findings from research on using synthetic data for model improvement, which can serve as benchmarks for your own experiments.

Table: Impact of Synthetic Data Augmentation on Model Performance

| Study / Model | Application Domain | Key Metric | Result with Real Data Only | Result with Synthetic Augmentation | Notes |

|---|---|---|---|---|---|

| Tab-DDPM [41] | Tabular Data Fairness | Fairness Metric (e.g., Statistical Parity Difference) | Varies by base model | Improvement (e.g., RF fairness improved with more generated data) | Improvement observed across 5 ML models with 20k-150k synthetic samples. |

| DDPM for Neuroimaging [36] | Brain MRI Generation | Maximum Mean Discrepancy (MMD) | N/A | Low MMD value reported | Confirms similarity between real and generated data distributions. |

| Two-Stage Diffusion [43] | Long-tailed Food Classification | Top-1 Accuracy | Lower performance on tail classes | Superior performance compared to previous works | Framework promotes intra-class diversity & inter-class separation. |

Cross-Validated Confound Regression to Control for Bias

In neuroimaging research, a fundamental limitation of decoding analyses arises when the variable you wish to decode (e.g., clinical status) is correlated with another variable that is not of primary interest (e.g., age, sex, or motion). This confounding variable can become the primary source of information that a model learns, making the interpretation of decoding performance ambiguous and potentially invalidating your conclusions about the target variable [44].

Cross-Validated Confound Regression is a method used to control for such confounding variables. Evidence from comprehensive simulations and empirical analyses shows that it is the only method among several evaluated that yields nearly unbiased results, thereby providing genuine insight into the source of information driving a decoding analysis [44].

Experimental Protocols & Methodologies

Core Protocol: Implementing Cross-Validated Confound Regression

This protocol ensures that the process of regressing out confounds does not leak information from the test set into the training set, which would yield overoptimistic or otherwise biased performance estimates [44].

Step-by-Step Workflow:

1. Data Partitioning: Split your dataset into K folds for cross-validation.

2. Confound Regression per Fold: For each fold i (where i = 1 to K):
   - Training Phase: On the training set (all folds except i), fit a regression model to predict the target variable (Y_train) using the confound variable (Z_train).
   - Application: Use this fitted confound regression model to predict the target variable in both the training set (Y_pred_train) and the test set (Y_pred_test).
   - Residual Calculation: Create a confound-corrected version of the target variable for both sets by calculating the residuals:
     - Y_residual_train = Y_train - Y_pred_train
     - Y_residual_test = Y_test - Y_pred_test

3. Model Training & Testing: Train your primary classification or decoding model on the training set features (X_train) to predict the confound-corrected target, Y_residual_train. Then, evaluate the model's performance on the test set features (X_test) using Y_residual_test.

4. Repetition and Aggregation: Repeat steps 2-3 for all K folds. Aggregate the performance metrics (e.g., accuracy) from all test folds to get a final, unbiased estimate of your model's ability to predict the target variable while controlling for the confound.
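
The workflow above can be sketched in NumPy as follows. This follows the steps as listed here and is not a reference implementation from [44]. Several choices are assumptions made for illustration: the synthetic data, the hand-rolled fold splitter and least-squares helpers (scikit-learn's `KFold` and `LinearRegression` would be the library equivalents), and the use of ridge regression scored by correlation, chosen because the residualized target is continuous.

```python
import numpy as np

def fit_ols(Z, y):
    """Least-squares fit of y on the confound(s) Z with an intercept."""
    A = np.column_stack([np.ones(len(Z)), Z])
    coef, *_ = np.linalg.lstsq(A, y, rcond=None)
    return coef

def predict_ols(coef, Z):
    return np.column_stack([np.ones(len(Z)), Z]) @ coef

def fit_ridge(X, y, lam):
    """Closed-form ridge regression on centered data."""
    mu_x, mu_y = X.mean(axis=0), y.mean()
    Xc = X - mu_x
    w = np.linalg.solve(Xc.T @ Xc + lam * np.eye(X.shape[1]), Xc.T @ (y - mu_y))
    return w, mu_x, mu_y

def cv_confound_regression(X, y, Z, k=5, lam=1.0, seed=0):
    rng = np.random.default_rng(seed)
    folds = np.array_split(rng.permutation(len(y)), k)   # step 1: partition
    scores = []
    for i in range(k):
        test = folds[i]
        train = np.concatenate([folds[j] for j in range(k) if j != i])
        # Step 2: fit the confound model on the training fold only.
        coef = fit_ols(Z[train], y[train])
        y_res_train = y[train] - predict_ols(coef, Z[train])
        y_res_test = y[test] - predict_ols(coef, Z[test])
        # Step 3: train on training residuals, evaluate on test residuals.
        w, mu_x, mu_y = fit_ridge(X[train], y_res_train, lam)
        pred = (X[test] - mu_x) @ w + mu_y
        scores.append(np.corrcoef(pred, y_res_test)[0, 1])
    return np.array(scores)                              # step 4: aggregate

# Synthetic example: feature 0 carries signal, feature 1 carries the confound.
rng = np.random.default_rng(42)
n, p = 200, 20
Z = rng.normal(size=n)
X = rng.normal(size=(n, p))
X[:, 1] += Z
y = 0.8 * Z + X[:, 0] + 0.5 * rng.normal(size=n)

scores = cv_confound_regression(X, y, Z, k=5)
print("per-fold correlation:", np.round(scores, 2), "mean:", scores.mean().round(2))
```

The key design point is that `fit_ols` only ever sees training indices, so the test fold contributes nothing to the confound model.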

Protocol Validation: Empirical Demonstration

A key study validated this protocol by attempting to decode gender from structural MRI data while controlling for the confound "brain size" [44]. The findings are summarized in the table below.

Table 1: Comparison of Methods for Controlling Confounds in Decoding Analyses

| Method | Description | Reported Outcome | Bias Introduced |

|---|---|---|---|

| No Correction | Decoding the target variable without controlling for the confound. | High, but ambiguous performance. | Positive Bias: Performance is driven by the confound, not the target. |

| Post-hoc Counterbalancing | Subsampling data after data collection to balance the confound across classes. | Better-than-expected performance. | Strong Positive Bias: The subsampling process tends to remove hard-to-classify samples [44]. |

| Non-Cross-Validated Confound Regression | Regressing the confound out of the target variable once, before cross-validation. | Worse-than-expected, sometimes below-chance performance. | Strong Negative Bias: The model learns an anti-correlated pattern due to information leakage [44]. |

| Cross-Validated Confound Regression | Regressing the confound out of the target variable independently within each fold of cross-validation. | Plausible, above-chance performance. | Nearly Unbiased: Correctly reveals the source of information driving the analysis [44]. |

The workflow for the core protocol can be visualized as follows:

Frequently Asked Questions (FAQs) & Troubleshooting

Q1: Why does my model show significant below-chance performance when I use confound regression?

A: This is a classic signature of a methodological error. It occurs when confound regression is performed on the entire dataset before cross-validation, which leaks information about the test data into the training process. This causes the model to learn a pattern that is anti-correlated with the true signal. The solution is to perform the confound regression independently within each fold of the cross-validation loop to prevent this leakage [44].

Q2: I have controlled for my confound using cross-validation, but my results are still highly variable. What else could be affecting stability?

A: The stability of your model comparison can be significantly influenced by your cross-validation setup itself. Research shows that the number of folds (K) and the number of cross-validation repetitions (M) can artificially inflate the statistical significance of performance differences, even between models with no intrinsic predictive difference. This variability is a known challenge that can exacerbate the reproducibility crisis and requires rigorous, standardized reporting of CV parameters [8] [29].
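
A back-of-the-envelope calculation shows why the CV setup matters. If the K × M per-fold score differences between two models are treated as independent samples in a naive paired t-test, the t-statistic grows with the square root of K × M even when the mean difference and its spread stay the same. The numbers below are hypothetical, and the calculation deliberately ignores the fact that fold scores share training data and are therefore correlated.

```python
import math

def naive_paired_t(mean_diff, sd_diff, n_scores):
    """Naive paired t-statistic that treats all fold scores as independent."""
    return mean_diff / (sd_diff / math.sqrt(n_scores))

mean_diff = 0.01   # hypothetical mean accuracy difference between two models
sd_diff = 0.05     # hypothetical standard deviation of per-fold differences

results = {}
for k, m in [(5, 1), (10, 1), (10, 10), (10, 50)]:
    results[(k, m)] = naive_paired_t(mean_diff, sd_diff, k * m)
    print(f"K={k:2d}, M={m:2d}, n={k * m:3d}: naive t = {results[(k, m)]:.2f}")
```

Reporting K and M alongside any significance test lets readers judge how much of a small p-value is attributable to the number of resampled scores rather than to a real performance difference.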

Q3: Are there other effective strategies for mitigating motion artifacts in functional neuroimaging?

A: Yes, dynamic functional connectivity analyses face similar challenges with motion artifacts. A systematic evaluation of 12 confound regression strategies found that pipelines incorporating Global Signal Regression (GSR) were among the most effective at minimizing the relationship between connectivity and motion. However, the effectiveness of different de-noising pipelines can vary, and they should be chosen based on the specific benchmarks of your study [45].

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials and Tools for Cross-Validated Confound Regression Experiments

| Item Name / Concept | Function / Description | Example / Note |

|---|---|---|

| Linear Regression Model | The statistical engine used within each cross-validation fold to model and remove the relationship between the target variable and the confound. | Can be implemented via standard libraries (e.g., scikit-learn LinearRegression). |

| K-Fold Cross-Validator | A framework to partition the data and manage the iterative training/testing process, ensuring no data leakage. | Use KFold or StratifiedKFold from scikit-learn. The choice of K (e.g., 5, 10) should be reported. |

| Residual Target Variable | The confound-corrected version of your original target variable, which becomes the new goal for your primary decoding model. | Calculated as Y_residual = Y_actual - Y_predicted_by_confound_model. |

| Primary Decoding Classifier | The machine learning model (e.g., SVM, Logistic Regression) whose goal is to predict the residual target from neuroimaging features. | Its performance on the residual target is interpreted as accuracy in predicting the target independent of the confound. |

| Performance Metric | A standardized measure to evaluate the decoding model's performance across folds. | Common metrics include Accuracy, Area Under the Curve (AUC), or F1-score. |

Diagnosing and Correcting Common Sources of Model Instability

Avoiding P-Hacking and Overfitting in Model Comparison

Troubleshooting Guides

Guide 1: Resolving Overfitting in Neuroimaging Classifiers