Rigorous Statistical Comparison of Neuroimaging Classification Models: A Framework for Accuracy Assessment and Reproducible Machine Learning

Abstract

This article provides a comprehensive guide for researchers and drug development professionals on the rigorous statistical comparison of machine learning model accuracy in neuroimaging. It covers foundational principles of statistical testing, best-practice methodologies for model evaluation, solutions to common pitfalls in cross-validation, and frameworks for robust validation and benchmarking. Drawing on recent studies that highlight a reproducibility crisis in biomedical machine learning, this content is essential for ensuring statistically sound and clinically meaningful conclusions in neuroimaging-based classification tasks, ultimately supporting more reliable drug development and clinical translation.

Core Concepts and Critical Need for Rigorous Model Comparison in Neuroimaging

The Reproducibility Crisis in Biomedical Machine Learning

Machine learning (ML) has significantly transformed biomedical research, leading to a growing interest in model development to advance classification accuracy in various clinical applications [1]. However, this rapid progress raises essential questions regarding how to rigorously compare the accuracy of different ML models and has exposed a deepening reproducibility crisis within the field [1] [2]. In biomedical research, reproducibility means that given access to the original data and analysis code, an independent group can obtain the same results observed in the initial study, while replication means that an independent group reaches the same conclusions after performing the same experiments on new data [2]. The reproducibility crisis manifests through multiple channels: statistical flaws in validation procedures, data leakage, sensitivity to random seeds, and publication pressures that prioritize novel findings over verification [3] [4]. This crisis is particularly concerning in clinical applications where unreliable models could impact patient care and treatment decisions.

Nowhere are these challenges more evident than in neuroimaging-based classification, where researchers increasingly rely on cross-validation (CV) techniques to evaluate and compare model performance due to limited sample sizes [1]. The fundamental problem is that many common practices for comparing ML models are statistically flawed, leading to potentially misleading conclusions about model superiority [1]. This article examines the specific mechanisms through which reproducibility breaks down in biomedical ML, with particular focus on neuroimaging classification tasks, and provides frameworks for more rigorous model evaluation and comparison.

Experimental Evidence: Quantifying the Reproducibility Problem

Statistical Flaws in Cross-Validation Based Comparisons

A critical examination of current practices reveals that the likelihood of detecting significant differences among models varies substantially with the intrinsic properties of the data, testing procedures, and CV configurations [1]. Researchers have demonstrated this through an unbiased framework that constructs two classifiers with identical intrinsic predictive power, then investigates whether statistical testing procedures can consistently quantify the significance of accuracy differences with different CV setups [1].

In this framework, the two classifiers with the same "intrinsic" predictive power are built from a single linear Logistic Regression model: a random zero-centered Gaussian vector is added to the coefficients of its decision boundary to form one classifier and subtracted to form the other [1]. Any observed accuracy difference between the pair therefore arises from chance rather than algorithmic superiority. When this framework was applied to three neuroimaging datasets, the Alzheimer's Disease Neuroimaging Initiative (ADNI), the Autism Brain Imaging Data Exchange (ABIDE I), and the Adolescent Brain Cognitive Development (ABCD) study, concerning patterns emerged.
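
As a rough illustration of the perturbation idea, the sketch below derives two linear decision rules from a shared weight vector by adding and subtracting the same zero-centered Gaussian vector. The function names, the noise scale `sigma`, and the toy coefficients are assumptions made for illustration; they are not taken from the cited study [1].

```python
import random

def perturbed_pair(weights, sigma, rng):
    """Return two weight vectors, w + e and w - e, with e ~ N(0, sigma^2) per coefficient."""
    noise = [rng.gauss(0.0, sigma) for _ in weights]
    plus = [w + e for w, e in zip(weights, noise)]
    minus = [w - e for w, e in zip(weights, noise)]
    return plus, minus

def predict(weights, bias, x):
    """Linear decision rule: class 1 if w.x + b > 0, otherwise class 0."""
    score = sum(w * xi for w, xi in zip(weights, x)) + bias
    return 1 if score > 0 else 0

rng = random.Random(0)
base_weights = [0.8, -0.5, 0.3]  # hypothetical fitted logistic-regression coefficients
w_a, w_b = perturbed_pair(base_weights, sigma=0.1, rng=rng)

# The two perturbed models are symmetric around the original coefficients
midpoint = [(a + b) / 2 for a, b in zip(w_a, w_b)]
```

Because the perturbation is symmetric, neither classifier is favored by construction, which is the property the framework relies on.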

Table 1: Impact of Cross-Validation Setup on False Positive Rates

| Dataset | Sample Size (class split) | CV Folds (K) | Repetitions (M) | False Positive Rate |
|---|---|---|---|---|
| ADNI | 444 (222/222) | 2 | 1 | 0.08 |
| ADNI | 444 (222/222) | 50 | 1 | 0.12 |
| ADNI | 444 (222/222) | 2 | 10 | 0.22 |
| ADNI | 444 (222/222) | 50 | 10 | 0.45 |
| ABIDE | 849 (391/458) | 2 | 1 | 0.07 |
| ABIDE | 849 (391/458) | 50 | 1 | 0.14 |
| ABIDE | 849 (391/458) | 2 | 10 | 0.24 |
| ABIDE | 849 (391/458) | 50 | 10 | 0.49 |
| ABCD | 11,725 (6125/5600) | 2 | 1 | 0.06 |
| ABCD | 11,725 (6125/5600) | 50 | 1 | 0.15 |
| ABCD | 11,725 (6125/5600) | 2 | 10 | 0.25 |
| ABCD | 11,725 (6125/5600) | 50 | 10 | 0.52 |

These results show an undesirable artifact: test sensitivity increases (p-values fall) with both the number of CV repetitions (M) and the number of folds (K), even though the two models have identical predictive power [1]. With p < 0.05 as the significance threshold, the probability of falsely detecting a significant accuracy difference rises substantially with K and M, exceeding 50% in some configurations [1]. This creates substantial potential for p-hacking, in which researchers consciously or unconsciously select CV parameters that produce statistically significant but spurious results.
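
One way to see why a naive test becomes more sensitive as K and M grow is the textbook variance of a mean of equally correlated scores. The symbols below (n for the number of scores, σ² for the variance of a single accuracy difference, ρ for an assumed common pairwise correlation) are introduced here for illustration and do not come from the cited study.

```latex
\operatorname{Var}(\bar{d}) = \frac{\sigma^{2}}{n}\bigl(1 + (n-1)\rho\bigr), \qquad n = K \times M
```

With ρ = 0 this reduces to σ²/n, which is what a paired t-test assumes. With ρ > 0 the variance approaches ρσ² rather than zero as n grows, so a standard error computed as s/√n becomes increasingly too small and the t-statistic increasingly inflated.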

The Impact of Data Leakage on Model Performance Claims

Data leakage represents another critical threat to reproducibility in biomedical ML, occurring when information from outside the training dataset is used to create the model, leading to overoptimistic performance estimates [3]. A survey of ML research found that data leakage affects at least 294 studies across 17 fields, substantially inflating claimed performance metrics [3].

In one compelling case study examining civil war prediction, complex ML models were initially reported to outperform traditional statistical models significantly [3]. However, when data leakage was identified and corrected, the supposed superiority of ML models disappeared entirely—they performed no better than simpler, older methods [3]. This pattern has significant implications for neuroimaging research, where complex preprocessing pipelines and feature extraction methods create multiple potential pathways for inadvertent data leakage.
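
A minimal sketch of one common leakage pathway in preprocessing, assuming a single toy feature and a simple standardization step: the scaling parameters are estimated on the training portion only and then reused on the held-out subject. The helper names `fit_scaler` and `apply_scaler` are hypothetical.

```python
import statistics

def fit_scaler(train_values):
    """Estimate mean and standard deviation from training data only."""
    mean = statistics.fmean(train_values)
    std = statistics.pstdev(train_values) or 1.0  # guard against zero variance
    return mean, std

def apply_scaler(values, mean, std):
    """Standardize values with previously fitted parameters."""
    return [(v - mean) / std for v in values]

feature = [2.0, 4.0, 6.0, 8.0, 100.0]  # toy feature; the last subject is held out
train, test = feature[:4], feature[4:]

# Leakage-free: parameters come from the training fold alone
mean, std = fit_scaler(train)
train_scaled = apply_scaler(train, mean, std)
test_scaled = apply_scaler(test, mean, std)

# Leaky variant (for contrast only): parameters have seen the held-out subject
leaky_mean, leaky_std = fit_scaler(feature)
```

The same principle applies to any data-dependent step (feature selection, harmonization, dimensionality reduction): it should be refit inside every training fold.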

Randomness and Instability in Model Training

The training of many machine learning models makes use of randomness, especially in deep learning models trained via stochastic gradient descent [2]. This randomness means that if the same model is retrained using the same data, different parameter values will be found each time, potentially leading to different performance outcomes and feature importance rankings [2] [5].

Research has demonstrated that changing a single parameter—the random seed that controls how random numbers are generated during training—could inflate estimated model performance by as much as 2-fold compared to what different random seeds would yield [2]. This variability poses fundamental challenges for reproducibility, as two research teams using identical data and algorithms might report substantially different results based solely on their choice of random seed.
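
One hedged way to make seed sensitivity visible is to report the spread of a metric over many seeds rather than a single run. In the sketch below, `train_and_score` is a hypothetical stand-in that simulates a noisy test accuracy; a real study would replace it with an actual training and evaluation run.

```python
import random
import statistics

def train_and_score(seed, n_test=100, true_accuracy=0.75):
    """Hypothetical stand-in for a full training run: returns a noisy test accuracy."""
    rng = random.Random(seed)
    correct = sum(rng.random() < true_accuracy for _ in range(n_test))
    return correct / n_test

seeds = range(20)
scores = [train_and_score(s) for s in seeds]

# Report the distribution over seeds instead of one (possibly lucky) value
summary = {
    "mean": statistics.fmean(scores),
    "sd": statistics.stdev(scores),
    "min": min(scores),
    "max": max(scores),
}
print(summary)
```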

Methodological Flaws: How Validation Practices Undermine Reproducibility

The Misuse of Statistical Testing in Cross-Validation

A commonly misused procedure in biomedical ML is applying paired t-tests to compare two sets of K × M accuracy scores from models evaluated through repeated cross-validation [1]. This approach is fundamentally flawed because the overlap of training folds between different runs induces implicit dependency in accuracy scores, thereby violating the basic assumption of sample independence in most hypothesis testing procedures [1]. These dependencies also impact the normality of data distribution and the assumption of equal variance across groups, further invalidating the statistical tests [1].
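
For contrast with the naive paired t-test, the sketch below also computes the corrected resampled t-statistic of Nadeau and Bengio, a widely used adjustment that inflates the variance by the test-to-train size ratio. This correction is brought in here as an illustration and is not the method of the cited study [1]; the accuracy differences and sample sizes are toy values.

```python
import math
import statistics

def naive_t(diffs):
    """Paired t-statistic that treats all K*M differences as independent."""
    n = len(diffs)
    return statistics.fmean(diffs) / math.sqrt(statistics.variance(diffs) / n)

def corrected_t(diffs, n_train, n_test):
    """Corrected resampled t-statistic (Nadeau and Bengio variance correction)."""
    n = len(diffs)
    var = statistics.variance(diffs)
    return statistics.fmean(diffs) / math.sqrt((1.0 / n + n_test / n_train) * var)

# Toy accuracy differences (model A minus model B) from 2 repetitions of 5-fold CV
diffs = [0.02, 0.01, 0.03, -0.01, 0.02, 0.00, 0.02, 0.01, 0.03, 0.01]
n_subjects = 100
n_test = n_subjects // 5        # 20 subjects per test fold
n_train = n_subjects - n_test   # 80 subjects per training set

t_naive = naive_t(diffs)
t_corr = corrected_t(diffs, n_train, n_test)
# Both statistics are referred to a t-distribution with len(diffs) - 1 degrees of freedom
```

The corrected statistic is always smaller in magnitude than the naive one, and the gap widens as the number of scores grows, which counteracts the inflation described above.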

The problematic nature of these practices is particularly concerning given their prevalence in high-impact literature. Many studies continue to employ inappropriate statistical comparisons that overstate the significance of their findings, potentially leading to a literature filled with false discoveries and impeding genuine scientific progress.

The Computational Reproducibility Barrier

Even when methodological choices are sound, the practical reproduction of state-of-the-art ML models can present prohibitive challenges. For example, in natural language processing, reproducing a modern transformer model with neural architecture search was estimated to cost roughly $1 million to $3.2 million using publicly available cloud computing resources [2]. The same process would generate approximately 626,155 pounds of CO2 emissions, roughly five times the amount an average car generates over its entire lifetime [2].

While most medical deep learning models are currently smaller and more focused on specific image recognition tasks, the trend toward larger, more computationally intensive models suggests these reproducibility challenges may become increasingly relevant to biomedical research in the near future.

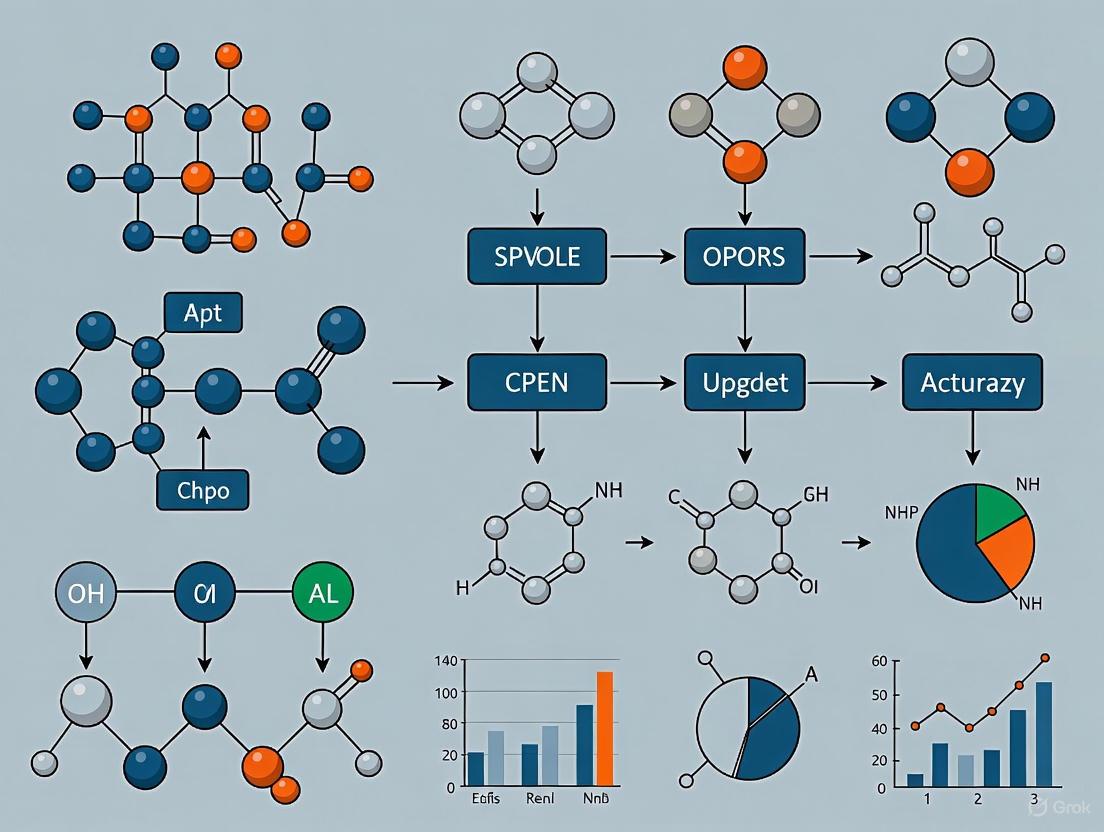

Diagram 1: Methodological pathways leading to either irreproducible or robust findings in biomedical ML research. Flawed practices (top pathway) introduce statistical errors and biases, while rigorous methodologies (bottom pathway) ensure findings are verifiable and reliable.

Case Study: Alzheimer's Disease Classification

Comparative Analysis of Neuroimaging Classification Methods

The challenges of reproducible model comparison are vividly illustrated in Alzheimer's disease (AD) classification research. A recent study compared radiomics-based analysis with conventional standardized uptake value ratio (SUVr) methods for classifying Alzheimer's disease using AV45 PET imaging [6]. The study included 79 patients diagnosed with AD and 34 patients with non-Alzheimer's dementia (NAD) and evaluated three models: an SUVr model, a radiomics model, and a combined model [6].

Table 2: Performance Comparison of Alzheimer's Disease Classification Models

| Model Type | AUC | 95% CI | Accuracy | Sensitivity | Specificity | Precision |
|---|---|---|---|---|---|---|
| SUVr Model | 0.67 | 0.45-0.86 | 68% | 78% | 45% | 75% |
| Radiomics Model | 0.89 | 0.75-0.98 | 88% | 96% | 73% | 88% |
| Combined Model | 0.88 | 0.74-0.97 | 87% | 95% | 72% | 87% |

The radiomics-based approach outperformed the conventional SUVr method on every reported metric, most notably specificity (73% vs. 45%) and sensitivity (96% vs. 78%) [6]. However, the 95% confidence intervals for AUC overlap (0.45-0.86 vs. 0.75-0.98), and without rigorous statistical comparison that accounts for cross-validation dependencies and potential data leakage, such performance differences might be overstated. This highlights the critical need for appropriate statistical frameworks when comparing biomedical ML models.

Reproducibility Challenges in Model Implementation

The implementation of ML models introduces additional reproducibility challenges. For instance, many ML libraries make "silent" decisions through default parameters that may differ between libraries and even between versions of the same library [2]. Thus, two researchers using the same code but different software versions could reach substantially different conclusions if important parameters receive different values by default [2].

This version dependency creates a hidden reproducibility threat even when code and data are openly shared. Combined with the impact of random seeds on model training and the sensitivity of results to cross-validation configurations, these implementation factors create multiple layers of potential variability that can compromise the reproducibility of biomedical ML research.

Solutions: Toward More Reproducible Biomedical ML Research

Technical Solutions for Enhanced Reproducibility

Stabilizing Model Performance and Feature Importance

To address variability in model performance and interpretation, researchers have proposed novel validation approaches that enhance model stability. One method involves conducting multiple trials (e.g., 400 trials per subject) while randomly seeding the ML algorithm between each trial [5]. This introduces variability in the initialization of model parameters, providing a more comprehensive evaluation of the ML model's features and performance consistency [5].

By aggregating feature importance rankings across trials, this method identifies the most consistently important features, reducing the impact of noise and random variation in feature selection [5]. The process results in stable, reproducible feature rankings, enhancing both subject-level and group-level model explainability [5].
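
The aggregation step can be sketched as follows, assuming mean rank across trials as the aggregation rule (the cited work [5] may aggregate differently). `noisy_importances` is a hypothetical stand-in for one seeded training run, and the feature names and values are toy data.

```python
import random
import statistics

def noisy_importances(true_importance, seed, noise=0.05):
    """Hypothetical stand-in for one seeded training run returning feature importances."""
    rng = random.Random(seed)
    return {name: value + rng.gauss(0.0, noise) for name, value in true_importance.items()}

def rank_features(importances):
    """Map each feature to its rank (1 = most important) within one trial."""
    ordered = sorted(importances, key=importances.get, reverse=True)
    return {name: position + 1 for position, name in enumerate(ordered)}

true_importance = {"hippocampus": 0.9, "amygdala": 0.5, "cerebellum": 0.1}  # toy values
n_trials = 400

ranks_per_trial = [
    rank_features(noisy_importances(true_importance, seed)) for seed in range(n_trials)
]

# Aggregate: mean rank per feature across all trials, then sort by it
mean_rank = {
    name: statistics.fmean(trial[name] for trial in ranks_per_trial)
    for name in true_importance
}
stable_order = sorted(mean_rank, key=mean_rank.get)
```

Reporting `mean_rank` alongside the final ordering shows how consistently each feature held its position across trials.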

Controlling Reproducibility in Training

There are two basic techniques to manage reproducibility in ML training: controlling the seeds for every randomizer used, and serializing the training process executed across concurrent and distributed resources [7]. While these approaches require platform support, frameworks like PyTorch provide documentation on how to set various random seeds, deterministic modes, and their implications on performance [7].
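
A minimal seeding helper might look like the sketch below. It seeds Python's own generator and, only if they happen to be installed, NumPy and PyTorch. The helper name `seed_everything` is an assumption, and deterministic mode in PyTorch can slow training or raise errors for operations without a deterministic implementation.

```python
import os
import random

def seed_everything(seed):
    """Seed the common random number generators; optional libraries are seeded if installed."""
    os.environ["PYTHONHASHSEED"] = str(seed)  # only affects subprocesses started afterwards
    random.seed(seed)
    try:
        import numpy as np
        np.random.seed(seed)
    except ImportError:
        pass
    try:
        import torch
        torch.manual_seed(seed)
        # Raise an error if an operation has no deterministic implementation
        torch.use_deterministic_algorithms(True)
        torch.backends.cudnn.benchmark = False
    except ImportError:
        pass

seed_everything(42)
first = random.random()
seed_everything(42)
second = random.random()
```

Here `first` and `second` are identical because the generator was reset to the same state before each draw. Seeding alone does not cover nondeterminism from concurrent or distributed execution, which is the second technique mentioned above.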

Diagram 2: Framework for stabilizing machine learning models through multiple trials and aggregated feature importance, reducing variability and improving reproducibility for clinical applications.

Methodological and Cultural Solutions

Adoption of Reporting Standards and Guidelines

Medical researchers using ML would benefit from adopting practices common in the broader ML community, including open sharing of data, code, and results whenever possible [2]. When privacy concerns prevent data sharing, a "walled-garden" approach where reviewers receive access to a private network subject to data use agreements could allow reproducibility analysis during peer review [2].

Similarly, ML researchers moving into medical applications should adhere to standard reporting guidelines such as TRIPOD, CONSORT, and SPIRIT, which are now being adapted for ML and artificial intelligence applications [2]. These guidelines set reasonable standards for reporting and transparency and help communicate how an analysis was conducted to the broader scientific community.

Recommendations for Acquisition and TEVV Communities

For organizations testing, evaluating, verifying, and validating (TEVV) ML systems, three key recommendations emerge [7]:

- The acquisition community should require reproducibility and diagnostic modes in requests for proposals

- The testing community should understand how to use these modes in support of final certification

- Provider organizations should include reproducibility and diagnostic modes in their products

These objectives are readily achievable if required and designed into systems from the beginning, ultimately reducing engineering and test costs compared to discovering defects in later stages [7].

Table 3: Research Reagent Solutions for Reproducible Biomedical Machine Learning

| Resource Category | Specific Tools/Solutions | Function in Reproducible Research |
|---|---|---|
| Statistical Validation Frameworks | Unbiased CV comparison frameworks [1] | Provides mathematically sound methods for comparing model performance while accounting for CV dependencies |
| Stabilization Techniques | Multiple trials with random seed variation [5] | Reduces variability in feature importance and performance metrics through aggregation across runs |
| Reproducibility Controls | Fixed random seeds; Deterministic modes [7] | Ensures training process can be exactly reproduced when needed for debugging and verification |
| Benchmark Datasets | ADNI [1]; ABIDE [1]; ABCD [1] | Standardized, publicly available datasets enabling direct comparison across different methods |
| Reporting Guidelines | TRIPOD-ML; CONSORT-AI [2] | Structured reporting frameworks that ensure comprehensive documentation of methods and parameters |
| Data Leakage Prevention | Model info sheets [3] | Systematic documentation to identify and prevent eight common types of data leakage |

The reproducibility crisis in biomedical machine learning stems from interconnected technical, methodological, and cultural factors that collectively undermine the reliability of reported findings. The statistical flaws in cross-validation procedures, particularly the inappropriate use of significance testing with dependent samples, create substantial potential for p-hacking and spurious claims of model superiority [1]. Compounding these issues, data leakage affects numerous studies across multiple fields, generating overoptimistic results [3], while the inherent randomness in ML training introduces additional variability that is often insufficiently controlled [2] [5].

Addressing these challenges requires a multi-faceted approach combining technical solutions like stabilized validation frameworks [5], methodological reforms including adherence to reporting guidelines [2], and cultural shifts toward greater transparency and data/code sharing [2]. The development of standardized reagent solutions for reproducible research—including statistical frameworks, stabilization techniques, and benchmark datasets—provides a pathway toward more reliable biomedical ML research [1] [5].

Ultimately, as machine learning plays an increasingly prominent role in clinical decision-making, ensuring the reproducibility and robustness of these models becomes not merely an academic concern but an ethical imperative for patient safety and effective healthcare delivery.

In neuroimaging and machine learning (ML) research, selecting an appropriate statistical test is fundamental for validating model performance and ensuring reproducible findings. The choice between parametric and non-parametric tests significantly impacts the reliability of conclusions drawn from comparative studies of classification models, such as those differentiating patient groups from healthy controls based on brain imaging data [1]. These tests provide the mathematical framework for determining whether observed accuracy differences between models are statistically significant or attributable to random chance. Within the neuroimaging field, where datasets are often characterized by high dimensionality, complex correlations, and potential deviations from normality, this choice becomes particularly critical. Misapplication of statistical tests can lead to inflated performance claims, thereby exacerbating the reproducibility crisis noted in biomedical ML research [1]. This guide objectively compares parametric and non-parametric tests, detailing their underlying assumptions, relative advantages, and correct application protocols to facilitate robust model comparison in neuroimaging.

Fundamental Definitions and Comparisons

Parametric tests are a class of statistical hypothesis tests that assume the sample data comes from a population that follows a known probability distribution (most commonly, the normal distribution) and that key parameters of that distribution, such as the mean and variance, can be estimated from the data [8] [9]. This assumption about the underlying population's parameters is the origin of the term "parametric."

Non-parametric tests, in contrast, are "distribution-free" tests that do not rely on any strict assumptions about the shape or parameters of the population distribution from which the data were sampled [10] [11]. They often operate on the ranks or signs of the data rather than the raw data values themselves, making them more flexible for analyzing non-normal or categorical data.

Table 1: Core Characteristics of Parametric and Non-Parametric Tests

| Aspect | Parametric Tests | Non-Parametric Tests |
|---|---|---|
| Core Assumptions | Assumes normal distribution of data and, often, homogeneity of variance between groups [8] [12]. | No assumption of a specific distribution (distribution-free); however, some tests assume identical shapes or dispersions across groups [10] [12]. |
| Data Types | Best suited for continuous data (interval or ratio scale) [12]. | Can analyze ordinal, nominal, and continuous data [10] [12]. |
| Central Tendency | Assess group means [10]. | Assess group medians [10]. |
| Statistical Power | Generally higher power to detect an effect when assumptions are met [10] [8]. | Typically less powerful than their parametric counterparts when assumptions are met, but can be more powerful when assumptions are violated [8] [12]. |
| Robustness to Outliers | Sensitive to outliers, which can skew mean estimates and test results [12]. | Generally more robust to outliers [10] [12]. |

Advantages, Disadvantages, and Common Test Counterparts

Each class of tests offers distinct advantages and disadvantages, making them suitable for different scenarios in research.

Advantages of Parametric Tests: The primary advantage is their higher statistical power; if an effect truly exists, a parametric test is more likely to detect it, provided its assumptions are satisfied [10]. They can also provide trustworthy results with distributions that are skewed and non-normal, provided the sample size is sufficiently large, thanks to the Central Limit Theorem [10]. Furthermore, they can handle groups with different amounts of variability (heterogeneity of variance), as many modern implementations offer corrections for this (e.g., Welch's t-test) [10].

Disadvantages of Parametric Tests: Their main weakness is sensitivity to violations of their underlying assumptions. If data are severely non-normal and sample sizes are small, or if extreme outliers are present, parametric tests can produce misleading results [8] [12].

Advantages of Non-Parametric Tests: Their key strength is robustness. They are valid for small sample sizes and non-normal data and are not easily tripped up by outliers [10]. They are also the only choice for analyzing ordinal or ranked data [10].

Disadvantages of Non-Parametric Tests: The major disadvantage is generally lower statistical power, meaning they require a larger effect size or sample size to reject a false null hypothesis compared to a parametric test [12]. They can also be less informative, as they sometimes use less information from the data (e.g., ranks instead of raw values) [8].

Table 2: Common Parametric and Non-Parametric Test Pairs

| Testing Scenario | Parametric Test | Non-Parametric Test |
|---|---|---|
| Compare one group to a hypothetical mean or two paired groups | One-sample or Paired t-test | Sign test, Wilcoxon signed-rank test [10] [9] |
| Compare two independent groups | Independent (Two-sample) t-test | Mann-Whitney U test (Wilcoxon rank-sum test) [10] [9] |
| Compare three or more independent groups | One-Way ANOVA | Kruskal-Wallis test [10] [9] |
| Compare three or more dependent groups (repeated measures) | Repeated-Measures ANOVA | Friedman test [10] [13] |
| Assess relationship between two variables | Pearson's correlation | Spearman's correlation [10] |
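
The group-comparison rows of the table above can be encoded as a small lookup, sketched below. The function name `suggest_test` and its boolean arguments are hypothetical, and the correlation row is left out because it is not a group comparison.

```python
def suggest_test(n_groups, paired, parametric):
    """Look up the test from the table of test pairs for a given design."""
    if n_groups < 1:
        raise ValueError("n_groups must be at least 1")
    if n_groups == 1:
        return "One-sample t-test" if parametric else "Wilcoxon signed-rank test"
    if n_groups == 2:
        if paired:
            return "Paired t-test" if parametric else "Wilcoxon signed-rank test"
        return "Independent t-test" if parametric else "Mann-Whitney U test"
    if paired:
        return "Repeated-measures ANOVA" if parametric else "Friedman test"
    return "One-way ANOVA" if parametric else "Kruskal-Wallis test"

# Two models scored on the same resamples, normality in doubt
choice = suggest_test(n_groups=2, paired=True, parametric=False)
```

A lookup like this only selects a test family; it does not check whether the test's independence assumption holds for the data at hand.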

Application in Neuroimaging Classification Model Comparison

Statistical rigor is paramount when comparing the accuracy of different neuroimaging-based classification models, a common task in biomedical ML research. Cross-validation (CV) is a prevalent method for model assessment, but it introduces specific statistical challenges, such as dependency between CV folds, which can violate the independence assumption of many tests [1].

A frequently misused practice is applying a paired t-test directly to the K × M accuracy scores obtained from M repetitions of a K-fold CV. This approach is flawed because the overlapping training sets across folds create implicit dependencies in the accuracy scores, violating the t-test's assumption of sample independence [1]. This misuse can lead to an inflated false-positive rate, where a significant difference is found between models that, in truth, have equivalent performance.

Recommended non-parametric tests offer more robust alternatives for model comparison. The Wilcoxon signed-rank test is suitable for comparing two classifiers across multiple datasets or data resamples [13]. It works by ranking the absolute differences in performance between the two models on each dataset and comparing the sums of the positive and negative ranks. For comparing more than two classifiers, the Friedman test is appropriate, which ranks the models for each dataset and then tests whether the average ranks are significantly different [13].
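
The ranking procedure just described can be sketched in plain Python as below. The p-value uses the large-sample normal approximation without tie or continuity correction, so it is rough for small samples; in practice an established implementation such as `scipy.stats.wilcoxon` is preferable. The scores are toy values expressed as percentages.

```python
import math

def wilcoxon_signed_rank(scores_a, scores_b):
    """Return (W_plus, W_minus, two-sided p-value from the normal approximation)."""
    diffs = [a - b for a, b in zip(scores_a, scores_b) if a != b]  # drop zero differences
    n = len(diffs)
    if n == 0:
        return 0.0, 0.0, 1.0
    # Rank absolute differences, giving tied values their average rank
    order = sorted(range(n), key=lambda i: abs(diffs[i]))
    ranks = [0.0] * n
    i = 0
    while i < n:
        j = i
        while j + 1 < n and abs(diffs[order[j + 1]]) == abs(diffs[order[i]]):
            j += 1
        average_rank = (i + j) / 2 + 1
        for k in range(i, j + 1):
            ranks[order[k]] = average_rank
        i = j + 1
    w_plus = sum(r for r, d in zip(ranks, diffs) if d > 0)
    w_minus = sum(r for r, d in zip(ranks, diffs) if d < 0)
    # Normal approximation to the null distribution of the smaller rank sum
    mean = n * (n + 1) / 4
    sd = math.sqrt(n * (n + 1) * (2 * n + 1) / 24)
    z = (min(w_plus, w_minus) - mean) / sd
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return w_plus, w_minus, p_value

model_a = [81, 78, 83, 84, 85]  # toy accuracies (%) on five datasets
model_b = [80, 80, 80, 80, 80]
w_plus, w_minus, p = wilcoxon_signed_rank(model_a, model_b)
```

Like the t-test, this test assumes the paired differences are independent, which holds across separate datasets but not across overlapping cross-validation folds.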

The choice of CV setup itself (e.g., the number of folds K and repetitions M) can impact the outcome of hypothesis tests. Studies have shown that the likelihood of detecting a "significant" difference can vary substantially with different CV configurations, even when comparing models with the same intrinsic predictive power, creating a potential for p-hacking [1].

Experimental Protocol for Model Comparison

A robust framework for statistically comparing two classification models (Model A vs. Model B) on a single neuroimaging dataset involves repeated cross-validation and correct non-parametric testing, as outlined below [1].

Title: Workflow for Comparing Two ML Models

Protocol Steps:

- Experimental Setup: Define the neuroimaging dataset, the two classification models (Model A and Model B) to be compared, and the performance metric (e.g., classification accuracy).

- Repeated Cross-Validation: Perform M repetitions of K-fold cross-validation for each model, using the same splits for both models. A common configuration might be 5 repetitions of 10-fold CV, resulting in 50 performance estimates per model [1].

- Data Collection: Record the performance metric (e.g., accuracy) for each model on every test fold across all repetitions, resulting in two paired vectors of K × M performance scores.

- Statistical Testing: Instead of a paired t-test, apply the Wilcoxon signed-rank test to the paired differences (Model A score - Model B score) from all test folds. This test assesses whether the median difference in performance is significantly different from zero. Note that it also assumes independent paired differences, so it relaxes the normality assumption but does not by itself remove the dependence between overlapping CV folds.

- Hypothesis Interpretation:
  - Null Hypothesis (H₀): The median difference in performance between the two models is zero.
  - Alternative Hypothesis (H₁): The median difference in performance is not zero.
  - A p-value below the significance level (e.g., α = 0.05) provides sufficient evidence to reject H₀ and conclude a significant difference exists [13].
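
The splitting and data-collection steps of this protocol can be sketched as follows. The splitter is a plain, non-stratified K-fold written for illustration, and `dummy_a` and `dummy_b` are placeholder scoring callables; a real study would fit and evaluate each model inside them.

```python
import random

def repeated_kfold(n_samples, k, m, seed=0):
    """Yield (repetition, fold, train_idx, test_idx) for M repetitions of K-fold CV."""
    rng = random.Random(seed)
    for rep in range(m):
        indices = list(range(n_samples))
        rng.shuffle(indices)
        folds = [indices[f::k] for f in range(k)]  # K roughly equal, disjoint folds
        for f in range(k):
            test_idx = folds[f]
            train_idx = [i for g in range(k) if g != f for i in folds[g]]
            yield rep, f, train_idx, test_idx

def paired_scores(n_samples, k, m, score_a, score_b, seed=0):
    """Evaluate both models on identical splits so the K*M scores are paired."""
    a, b = [], []
    for _, _, train_idx, test_idx in repeated_kfold(n_samples, k, m, seed):
        a.append(score_a(train_idx, test_idx))
        b.append(score_b(train_idx, test_idx))
    return a, b

# Placeholder scoring callables (hypothetical); they only inspect the split sizes
dummy_a = lambda train_idx, test_idx: len(test_idx) / 10
dummy_b = lambda train_idx, test_idx: len(train_idx) / 100

splits = list(repeated_kfold(n_samples=100, k=10, m=5))
scores_a, scores_b = paired_scores(100, 10, 5, dummy_a, dummy_b)
```

Fixing `seed` and reusing the same generator for both models keeps the two score vectors aligned fold by fold, which is what makes a paired test applicable at all.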

The Researcher's Toolkit for Statistical Testing

Table 3: Essential Tools and Resources for Statistical Comparison

| Tool/Resource | Function/Description |
|---|---|
| Normality Tests (e.g., Shapiro-Wilk, Kolmogorov-Smirnov) | Formal hypothesis tests to check if a data sample deviates significantly from a normal distribution. A significant p-value indicates a violation of the normality assumption [8]. |
| Q-Q (Quantile-Quantile) Plot | A graphical tool for visually assessing if a dataset follows a normal distribution. Data that is normally distributed will appear as points roughly along a straight line [8]. |
| Statistical Software (R, Python with scipy/statsmodels) | Provides implementations for all common parametric and non-parametric tests, as well as functions for diagnostic checks like normality and homogeneity of variance. |
| Wilcoxon Signed-Rank Test | The recommended non-parametric test for comparing the performance of two models across multiple datasets or cross-validation folds [13]. |
| Friedman Test with Post-hoc Analysis | The recommended non-parametric test for comparing more than two models across multiple datasets, often followed by post-hoc tests for pairwise comparisons [13]. |

The following diagram provides a structured path for choosing the correct statistical test, integrating the key concepts discussed.

Title: Statistical Test Selection Flowchart

Conclusion: The choice between parametric and non-parametric tests is a critical step in ensuring the validity of findings in neuroimaging classification research. While parametric tests are powerful when their strict assumptions are met, the complex nature of neuroimaging data often necessitates the robustness of non-parametric alternatives. As demonstrated, a rigorous experimental protocol using repeated cross-validation and appropriate non-parametric tests like the Wilcoxon signed-rank test provides a more reliable foundation for comparing model accuracies than flawed practices such as applying a paired t-test to cross-validation results. Adhering to these principles mitigates the risk of p-hacking and supports the development of reproducible and trustworthy ML models in biomedicine.

Understanding P-Values, Effect Size, and Confidence Intervals

In neuroimaging, particularly for comparing the accuracy of classification models, a profound understanding of statistical measures is paramount. Relying solely on a single metric, such as a p-value, provides an incomplete picture and can lead to non-reproducible findings or claims of model superiority that lack practical meaning. This guide provides an objective comparison of three core statistical concepts—p-values, effect sizes, and confidence intervals—framed within the context of neuroimaging classification model research. We synthesize current reporting standards, experimental data from recent studies, and methodological protocols to equip researchers with the knowledge to conduct rigorous and interpretable model comparisons.

Statistical Measure Comparison: P-Values, Effect Size, and Confidence Intervals

The table below provides a direct comparison of the three key statistical measures, outlining their core functions, interpretations, and roles in inference.

Table 1: Comparison of Key Statistical Measures for Model Evaluation

| Feature | P-Value | Effect Size | Confidence Interval (CI) |
|---|---|---|---|
| Core Function | Quantifies surprise under the null hypothesis; tests if an effect exists [14] [15]. | Quantifies the magnitude or practical importance of an effect [16] [17]. | Estimates a plausible range of values for the true population parameter [17] [15]. |
| Answers the Question | "How likely is data at least as extreme as mine, assuming the null hypothesis is true?" [14] | "How large is the effect, and does it matter in the real world?" [16] | "What is the range of values compatible with my observed data?" [15] |
| Interpretation | Smaller p-values indicate stronger evidence against the null; p < 0.05 is a conventional threshold [14]. | Compared to field-specific benchmarks (e.g., Cohen's d: 0.2=small, 0.5=medium, 0.8=large) [16] [17]. | Under repeated sampling, a stated proportion (e.g., 95%) of such intervals would contain the true effect size [17]. |
| Role in Inference | A tool for binary decision-making (reject/fail to reject H₀); prone to misuse if used alone [15]. | Provides context for the p-value; essential for assessing practical significance [18] [16]. | Provides information about the precision of the estimate and its practical implications [15]. |
| Key Limitation | Does not measure the size or importance of an effect; sensitive to sample size [18] [14]. | Does not convey the statistical reliability or uncertainty of the estimate on its own. | A wide interval indicates low precision and uncertainty, even if statistically significant [15]. |
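
To complement a p-value with the other two measures, the sketch below computes a standardized effect size for paired differences (Cohen's d for paired data, here called `cohens_dz`) and a percentile bootstrap confidence interval for the mean difference. The accuracy differences are toy values, and the helper names and bootstrap settings are assumptions.

```python
import random
import statistics

def cohens_dz(diffs):
    """Standardized mean difference for paired data: mean(d) / sd(d)."""
    return statistics.fmean(diffs) / statistics.stdev(diffs)

def bootstrap_ci(diffs, n_boot=2000, level=0.95, seed=0):
    """Percentile bootstrap confidence interval for the mean difference."""
    rng = random.Random(seed)
    means = sorted(
        statistics.fmean(rng.choices(diffs, k=len(diffs))) for _ in range(n_boot)
    )
    lower = means[int((1 - level) / 2 * n_boot)]
    upper = means[int((1 + level) / 2 * n_boot) - 1]
    return lower, upper

# Toy accuracy differences (model A minus model B)
diffs = [0.02, 0.01, 0.03, -0.01, 0.02, 0.00, 0.02, 0.01, 0.03, 0.01]

effect = cohens_dz(diffs)
ci_low, ci_high = bootstrap_ci(diffs)
```

The bootstrap resamples the differences as if they were independent, so an interval built from overlapping cross-validation folds is likely to be too narrow and should be read with that caveat.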

Experimental Protocols for Statistical Comparison

Protocol 1: Cross-Validation for Comparing Model Accuracy

A prevalent challenge in neuroimaging is statistically comparing the classification accuracy of two machine learning models using cross-validation (CV). A 2025 study highlighted that common practices for this are fundamentally flawed, as the statistical significance of accuracy differences can be artificially influenced by CV setup choices [1].

Methodology:

- Data Splitting: A dataset is split into K folds. The model is trained on K-1 folds and tested on the held-out fold, repeating the process K times [1].

- Model Training & Evaluation: Two models (e.g., a proposed model vs. a baseline) undergo the same CV procedure, generating K paired accuracy scores.

- Flawed Significance Testing: A common but invalid practice is to apply a paired t-test directly to the K accuracy scores. The test assumes independent samples, but the training sets (and, with repeated CV, the test sets) overlap across folds, so the scores are correlated [1].

- Impact of Setup: The study demonstrated that varying the number of folds (K) and the number of CV repetitions (M) can drastically alter the resulting p-value. With a higher K and M, there is a greater likelihood of detecting a "statistically significant" difference between two models, even when no intrinsic difference in predictive power exists. This creates a pathway for p-hacking and inconsistent conclusions [1].

Figure 1: Workflow of a cross-validation procedure, highlighting the common pitfall of using a paired t-test on non-independent accuracy scores, leading to unreliable p-values [1].
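
The dependence between folds can be made concrete with a small sketch. It uses only the Python standard library; the function names `kfold_indices` and `training_overlap` are hypothetical helpers introduced here for illustration, not part of the cited study. It splits sample indices into K folds and measures how much of one fold's training set reappears in another fold's training set.

```python
import random


def kfold_indices(n_samples, k, seed=0):
    """Shuffle sample indices and split them into k near-equal test folds."""
    indices = list(range(n_samples))
    random.Random(seed).shuffle(indices)
    return [indices[i::k] for i in range(k)]


def training_overlap(n_samples, k, seed=0):
    """Fraction of the first fold's training set reused by the second fold."""
    folds = kfold_indices(n_samples, k, seed)
    all_indices = set(range(n_samples))
    train_a = all_indices - set(folds[0])  # training set when fold 0 is held out
    train_b = all_indices - set(folds[1])  # training set when fold 1 is held out
    return len(train_a & train_b) / len(train_a)


print(training_overlap(n_samples=100, k=5))   # 0.75
print(training_overlap(n_samples=100, k=10))  # about 0.889
```

With equal-sized folds the shared fraction is (K-2)/(K-1), so increasing K makes the K models more alike, which is one way to see why their accuracy scores cannot be treated as independent samples.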

Protocol 2: Reporting Effect Estimates in Neuroimaging Analyses

Beyond classification accuracy, a long-standing issue in general neuroimaging result reporting is the omission of effect estimates. The field has been dominated by displaying statistical maps (t- or z-values) without the underlying physical measurement of effect magnitude [18].

Methodology:

- Model Estimation: In a task-fMRI analysis, a General Linear Model (GLM) is fitted to the data, producing a β (beta) value for each task condition. This β represents the estimated amplitude of the BOLD response [18].

- Statistical Mapping: A t-statistic is calculated for each β, representing the effect estimate divided by its standard error (a measure of its reliability) [18].

- Standard vs. Recommended Reporting:

  - Standard Practice: Only the t-statistic map is reported, often thresholded at p < 0.05. A table of results may list peak activation coordinates and their corresponding t-values [18].

  - Recommended Practice: Report the effect estimate (the β value, often converted to percent signal change for interpretability) alongside its corresponding t-statistic and confidence interval. This allows for the assessment of both statistical reliability and biological relevance [18].
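
As a minimal sketch of the recommended reporting, the snippet below turns an effect estimate and its standard error into the three quantities to report together. The function name `effect_summary` and the example numbers are hypothetical. The interval uses a normal approximation to the t distribution, which is an assumption made here to keep the example dependency-free; with few subjects, a t critical value would give a wider interval.

```python
from statistics import NormalDist


def effect_summary(beta, standard_error, confidence=0.95):
    """Return the effect estimate, its t-statistic, and an approximate CI."""
    t_stat = beta / standard_error  # effect estimate divided by its standard error
    # Normal approximation (assumption): adequate for large samples only.
    z = NormalDist().inv_cdf(0.5 + confidence / 2)
    ci = (beta - z * standard_error, beta + z * standard_error)
    return {"beta": beta, "t": t_stat, "ci": ci}


# Hypothetical peak voxel: 0.40% signal change with a standard error of 0.10.
summary = effect_summary(beta=0.40, standard_error=0.10)
print(summary)
```

Reporting all three values lets a reader see that a large t-statistic can come either from a large effect or from a small effect measured very precisely.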

Table 2: Neuroimaging Software for Meta- and Mega-Analysis (2019-2024)

| Software Package | Primary Method | Usage Prevalence (2019-2024) | Key Note |

|---|---|---|---|

| GingerALE [19] | Activation Likelihood Estimation (ALE) | 49.6% (407/820 papers) | Versions prior to 2.3.6 had inflated false positive rates. |

| SDM-PSI | Seed-based d Mapping | 27.4% | A hybrid method that can incorporate both peak coordinates and statistical maps. |

| Neurosynth | Automated Coordinate-Based Meta-Analysis | 11.0% | A database and platform for large-scale, automated meta-analyses. |

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials and Software for Statistical Comparison in Neuroimaging

| Item Name | Function/Description |

|---|---|

| Effect Size Calculator (e.g., Cohen's d) | Standardizes the difference between two groups (e.g., two models' accuracy distributions), allowing for comparison across studies. Calculated as the difference in means divided by the pooled standard deviation [16] [17]. |

| Cross-Validation Framework | A resampling procedure to evaluate model performance on limited data. Mitigates overfitting and provides a more robust estimate of true accuracy than a single train-test split [1]. |

| Confidence Interval for Proportions | Used to calculate the uncertainty around a classification accuracy score (a proportion). A 95% CI provides a range of plausible values for the model's true accuracy [15]. |

| Meta-Analytic Software (e.g., GingerALE, SDM-PSI) | Tools for synthesizing findings across multiple neuroimaging studies. They help identify robust brain activation patterns and require the reporting of effect sizes and coordinates for meaningful aggregation [19]. |

| Equivalence Testing | A statistical technique used to demonstrate that two effects (e.g., the accuracy of two models) are statistically equivalent within a pre-specified margin, rather than just testing for a difference [17]. |
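
Two of the toolkit items above can be sketched in a few lines of standard-library Python. `cohens_d` follows the definition given in the table (difference in means divided by the pooled standard deviation). For the proportion interval, the Wilson score interval is used here as one reasonable choice; the source does not specify which interval to use, so that choice, the function names, and the example numbers are assumptions.

```python
from math import sqrt
from statistics import NormalDist, mean, variance


def cohens_d(group_a, group_b):
    """Difference in means divided by the pooled standard deviation."""
    n_a, n_b = len(group_a), len(group_b)
    pooled_var = ((n_a - 1) * variance(group_a)
                  + (n_b - 1) * variance(group_b)) / (n_a + n_b - 2)
    return (mean(group_a) - mean(group_b)) / sqrt(pooled_var)


def wilson_interval(n_correct, n_total, confidence=0.95):
    """Wilson score interval for a classification accuracy (a proportion)."""
    z = NormalDist().inv_cdf(0.5 + confidence / 2)
    p_hat = n_correct / n_total
    denom = 1 + z ** 2 / n_total
    centre = (p_hat + z ** 2 / (2 * n_total)) / denom
    half_width = z * sqrt(p_hat * (1 - p_hat) / n_total
                          + z ** 2 / (4 * n_total ** 2)) / denom
    return centre - half_width, centre + half_width


print(cohens_d([2, 4, 6], [1, 3, 5]))  # 0.5
print(wilson_interval(80, 100))        # roughly (0.711, 0.867)
```

The interval treats the test cases as independent draws, so it applies to a single held-out test set rather than to pooled cross-validation scores.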

Integrated Decision Framework

To avoid common pitfalls, researchers should not rely on a single statistic. The following diagram outlines a workflow that integrates p-values, effect sizes, and confidence intervals for a more robust conclusion.

Figure 2: An integrated decision framework for interpreting model comparison results, emphasizing the need to move beyond a binary reliance on p-values [16] [15].

The Role of Neuroimaging Biomarkers in Drug Development and Clinical Trials

Neuroimaging biomarkers are transforming the development of central nervous system (CNS) therapeutics by providing objective, quantifiable measures of brain structure and function. These tools are increasingly critical for de-risking drug development and improving the probability of success in clinical trials, particularly for complex psychiatric and neurodegenerative disorders [20]. Their integration into clinical trials addresses long-standing challenges in CNS drug development, including high attrition rates and difficulties in demonstrating clinical efficacy [21] [22].

The following table summarizes the primary roles and applications of key neuroimaging modalities in the drug development pipeline.

Table 1: Key Neuroimaging Biomarkers in CNS Drug Development

| Imaging Modality | Primary Applications in Drug Development | Key Measured Parameters |

|---|---|---|

| Positron Emission Tomography (PET) | Target engagement, brain penetration, dose selection, pharmacokinetics [20] [23] | Receptor occupancy, protein pathology (e.g., amyloid, tau), glucose metabolism (FDG-PET) [23] [24] |

| Magnetic Resonance Imaging (MRI) | Patient stratification, disease progression monitoring, safety, structural changes [20] [22] | Brain volume (volumetry), functional connectivity (fMRI), blood-oxygen-level-dependent (BOLD) signal, white matter integrity [20] |

| Electroencephalography (EEG) | Functional target engagement, pharmacodynamic response, dose-response relationships [20] | Resting-state brain rhythms, event-related potentials (ERPs), quantitative EEG (qEEG) [20] [24] |

Experimental Protocols for Key Applications

Protocol for Assessing Target Engagement with PET

Purpose: To confirm that an investigational drug reaches its intended molecular target in the human brain and to establish a relationship between dose, target occupancy, and physiological effect [20] [23].

Detailed Methodology:

- Tracer Administration: A radiolabeled ligand (tracer) with high specificity for the drug's target (e.g., a receptor or enzyme) is intravenously administered to study participants [23].

- Image Acquisition: Dynamic PET scanning is performed over a period of 60-90 minutes to capture the tracer's uptake and binding kinetics in the brain [23].

- Occupancy Calculation: Target occupancy by the drug is calculated by comparing tracer binding in the same individual before and after drug administration, or against a baseline group. The simplified reference tissue model is often used to derive the binding potential, from which percentage occupancy is computed [23].

- Dose-Occupancy Relationship: Multiple drug doses are tested to model the relationship between plasma concentration, target occupancy, and functional effects, informing optimal dose selection for later-stage trials [20].
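
The occupancy step can be illustrated with a short sketch. The first function uses the conventional definition of occupancy as the fractional reduction in binding potential from baseline to post-dose. The second is a simple Emax-type saturation curve, which is a common modelling assumption for dose-occupancy data and is not taken from the cited protocol. Function names and numbers are hypothetical.

```python
def occupancy_percent(bp_baseline, bp_post_dose):
    """Percentage reduction in binding potential after drug administration."""
    if bp_baseline <= 0:
        raise ValueError("baseline binding potential must be positive")
    return 100.0 * (bp_baseline - bp_post_dose) / bp_baseline


def emax_occupancy(concentration, ec50, max_occupancy=100.0):
    """Assumed Emax-type curve: occupancy saturates as concentration rises."""
    return max_occupancy * concentration / (concentration + ec50)


print(occupancy_percent(bp_baseline=2.0, bp_post_dose=0.5))  # 75.0
print(emax_occupancy(concentration=10.0, ec50=10.0))         # 50.0
```

In this parameterisation, `ec50` is the plasma concentration at which half of the maximal occupancy is reached, which is the quantity a dose-selection analysis would typically estimate from several dose levels.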

Protocol for Functional Pharmacodynamics with fMRI

Purpose: To measure a drug's effect on brain circuit function and identify a dose-response relationship for functional changes [20].

Detailed Methodology:

- Study Design: A randomized, placebo-controlled, within- or between-subjects design is employed, often including multiple dose levels of the investigational drug [20].

- Task-Based or Resting-State fMRI: Participants undergo fMRI scanning while performing a cognitive task known to engage brain circuits relevant to the disease (e.g., a working memory task for schizophrenia) or during a resting state [20] [24].

- Data Analysis: The BOLD signal is preprocessed and analyzed. For task-based fMRI, brain activation in response to the task is compared between drug and placebo conditions. For resting-state fMRI, changes in functional connectivity between brain networks are assessed [20].

- Dose-Response Modeling: Changes in functional activation or connectivity are modeled against the administered dose or plasma drug levels to determine the minimal efficacious dose and the dose at which functional effects plateau [20].

The following diagram illustrates the multi-stage workflow for developing and applying a novel neuroimaging biomarker, such as a PET ligand, in drug development.

Statistical Considerations for Model Comparison

A critical challenge in using neuroimaging for patient classification is ensuring robust statistical comparison of machine learning model accuracy. Research highlights significant variability in outcomes based on cross-validation (CV) setups [1].

Key Statistical Flaws and Considerations:

- Misuse of Paired T-tests: Directly applying a paired t-test to the K×M accuracy scores from a repeated K-fold CV is flawed, as the overlapping training sets violate the test's assumption of sample independence [1].

- Impact of CV Configuration: The likelihood of detecting a statistically significant difference between models (p < 0.05) is highly sensitive to the number of folds (K) and the number of CV repetitions (M). Higher K and M values artificially increase the "positive rate," leading to potential p-hacking and non-reproducible conclusions [1].

- Recommended Practice: The field urgently requires unified and unbiased testing procedures that account for data dependencies inherent in CV to mitigate the reproducibility crisis in biomedical machine learning [1].
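
One published adjustment that accounts for overlapping training sets is the corrected resampled t-test of Nadeau and Bengio, later applied to repeated K-fold CV by Bouckaert and Frank. It is shown here only as an illustration of what "accounting for dependencies" can look like; it is not the procedure proposed in [1], and the function name and example data are hypothetical. The sketch computes the naive and corrected t-statistics side by side, without a p-value.

```python
from math import sqrt
from statistics import mean, variance


def naive_and_corrected_t(differences, n_train, n_test):
    """t-statistics for J = K*M paired accuracy differences from repeated CV.

    The naive version assumes the J differences are independent. The corrected
    version inflates the variance by n_test / n_train to reflect the overlap
    between training sets. Both are referred to a t distribution with J - 1
    degrees of freedom.
    """
    j = len(differences)
    d_bar = mean(differences)
    var_d = variance(differences)
    naive_t = d_bar / sqrt(var_d / j)
    corrected_t = d_bar / sqrt((1 / j + n_test / n_train) * var_d)
    return naive_t, corrected_t


# Hypothetical differences from 5-fold CV repeated 10 times on 200 samples.
differences = [0.01, 0.03] * 25
naive_t, corrected_t = naive_and_corrected_t(differences, n_train=160, n_test=40)
print(naive_t, corrected_t)
```

Because the correction term n_test / n_train does not shrink as M grows, adding repetitions no longer drives the statistic upward in the way the naive formula does.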

The diagram below outlines the framework for a statistically sound comparison of classification models, highlighting steps to avoid common pitfalls.

The Scientist's Toolkit: Essential Research Reagents and Materials

Successful implementation of neuroimaging biomarkers relies on a suite of specialized tools and reagents. The following table details key components of this "toolkit."

Table 2: Essential Research Reagent Solutions for Neuroimaging Biomarkers

| Tool/Reagent | Function | Example Applications |

|---|---|---|

| Validated PET Tracers | Binds to specific molecular targets (e.g., receptors, pathological proteins) for quantitative imaging. | Amyloid (PiB) and tau tracers for Alzheimer's disease; PDE10A tracers for schizophrenia trials [23] [24]. |

| Cognitive Task Paradigms | Engages specific brain circuits during fMRI to measure drug-induced changes in neural activity. | N-back task for working memory; emotional face matching task for emotional processing [20]. |

| EEG ERP Paradigms | Presents sensory or cognitive stimuli to evoke specific, time-locked brain potentials. | Mismatch Negativity (MMN) or P300 paradigms to assess cognitive function in schizophrenia [20] [24]. |

| Automated Image Analysis Pipelines | Provides standardized, reproducible processing of raw neuroimaging data (e.g., MRI, PET) into quantitative metrics. | FreeSurfer for cortical thickness; SPM or FSL for fMRI analysis; in-house pipelines for amyloid PET SUVr calculation [25]. |

| Biobanked Biofluids (CSF/Plasma) | Provides correlative data for validating imaging biomarkers and understanding pathophysiology. | Correlating CSF Aβ42 with amyloid PET; linking plasma neurofilament light chain (NfL) with MRI atrophy [26] [22]. |

The future of neuroimaging in drug development is moving towards a precision psychiatry and neurology framework [20]. This involves using biomarkers early in development to understand dosing and mechanism, and later to enrich clinical trials with patients most likely to respond, ultimately improving clinical outcomes [20]. Key future trends include the development of novel PET ligands for targets like neuroinflammation and synaptic integrity, the integration of digital biomarkers from wearables, and the application of artificial intelligence to analyze complex, multimodal datasets [27] [28].

In conclusion, neuroimaging biomarkers are no longer merely research tools but are integral to de-risking the costly and complex process of CNS drug development. Their rigorous application, coupled with sound statistical practices and a growing toolkit of reagents, is paving the way for more effective and personalized therapies for brain disorders.

In machine learning, particularly within the high-stakes field of neuroimaging, evaluating model performance extends far beyond simple accuracy. Classification accuracy, defined as the proportion of all correct predictions among the total number of cases, provides an initial, intuitive performance snapshot [29] [30]. However, this metric becomes dangerously misleading with imbalanced datasets—a common scenario in biomedical research where the number of patients with a condition is often much smaller than healthy controls [31] [32]. A model could achieve 99% accuracy by simply always predicting "healthy" if a disease affects only 1% of the population, yet miss every single sick patient [31].
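
The 1% prevalence example can be checked directly. The sketch below builds a hypothetical cohort of 1,000 cases with 10 patients and scores a trivial classifier that always predicts "healthy"; the labels and counts are invented for illustration.

```python
# Hypothetical cohort: 1 = patient, 0 = healthy control (1% prevalence).
labels = [1] * 10 + [0] * 990
predictions = [0] * len(labels)  # trivial model: always predict "healthy"

correct = sum(p == y for p, y in zip(predictions, labels))
accuracy = correct / len(labels)

true_positives = sum(p == 1 and y == 1 for p, y in zip(predictions, labels))
recall = true_positives / sum(labels)

print(accuracy)  # 0.99
print(recall)    # 0.0
```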

This limitation is particularly critical in neuroimaging-based classification, where rigorous statistical comparison of models is essential for advancing diagnostic capabilities [1]. Research highlights a reproducibility crisis in biomedical machine learning, exacerbated by inappropriate evaluation practices and flawed statistical testing procedures when comparing model accuracy [1]. This guide provides researchers with a comprehensive framework for selecting, calculating, and interpreting classification metrics within neuroimaging contexts, enabling more meaningful model comparisons and supporting robust scientific conclusions.

Comprehensive Metrics for Classification Performance

Core Metrics Derived from the Confusion Matrix

The confusion matrix forms the foundation for most classification metrics, providing a complete breakdown of model predictions versus actual outcomes across four categories: True Positives (TP), True Negatives (TN), False Positives (FP), and False Negatives (FN) [33] [31]. From these, several essential metrics are derived:

- Precision (Positive Predictive Value): Measures the reliability of positive predictions [29] [30]. Formula: \( \text{Precision} = \frac{TP}{TP + FP} \) [29] [30]. Critical when false positives are costly, such as in diagnostic applications where incorrectly identifying a healthy person as sick leads to unnecessary treatments [31].

- Recall (Sensitivity/True Positive Rate): Measures the ability to identify all actual positive cases [30] [34]. Formula: \( \text{Recall} = \frac{TP}{TP + FN} \) [29] [34]. Essential when false negatives are dangerous, such as missing a disease in a sick patient [31].

- Specificity (True Negative Rate): Measures the ability to identify actual negative cases [34]. Formula: \( \text{Specificity} = \frac{TN}{TN + FP} \) [29] [34].

- F1-Score: The harmonic mean of precision and recall, providing a single metric that balances both concerns [33] [34]. Formula: \( \text{F1} = 2 \times \frac{\text{Precision} \times \text{Recall}}{\text{Precision} + \text{Recall}} \) [34]. Particularly valuable for imbalanced datasets where accuracy is misleading [31].

Table 1: Core Classification Metrics and Their Applications

| Metric | Formula | Optimal Use Case | Neuroimaging Example |

|---|---|---|---|

| Accuracy | \( \frac{TP + TN}{TP + TN + FP + FN} \) [29] | Balanced classes, similar error costs [30] | Initial screening tool for balanced cohorts |

| Precision | \( \frac{TP}{TP + FP} \) [29] | High cost of false positives [31] | Minimizing false disease diagnoses |

| Recall (Sensitivity) | \( \frac{TP}{TP + FN} \) [29] | High cost of false negatives [31] | Identifying all disease cases |

| Specificity | \( \frac{TN}{TN + FP} \) [29] | Correctly identifying healthy cases | Confirming healthy control subjects |

| F1-Score | \( 2 \times \frac{\text{Precision} \times \text{Recall}}{\text{Precision} + \text{Recall}} \) [34] | Imbalanced data, balanced focus on FP/FN [31] | Overall performance summary for skewed populations |
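
The formulas in the table translate directly into code. The following sketch computes all five metrics from the four confusion-matrix counts; the function name `classification_metrics` and the example counts are hypothetical. Returning NaN for undefined ratios (for example, precision when no positive predictions were made) is a design choice made here, not a convention taken from the source.

```python
def classification_metrics(tp, tn, fp, fn):
    """Compute the core metrics from confusion-matrix counts."""

    def ratio(numerator, denominator):
        # Undefined ratios (zero denominator) are reported as NaN.
        return numerator / denominator if denominator else float("nan")

    precision = ratio(tp, tp + fp)
    recall = ratio(tp, tp + fn)
    return {
        "accuracy": ratio(tp + tn, tp + tn + fp + fn),
        "precision": precision,
        "recall": recall,
        "specificity": ratio(tn, tn + fp),
        "f1": ratio(2 * precision * recall, precision + recall),
    }


# Hypothetical test set: 50 patients and 50 controls.
metrics = classification_metrics(tp=40, tn=45, fp=5, fn=10)
print(metrics)
```

For these counts, accuracy is 0.85, recall is 0.80, and specificity is 0.90, which shows how a single accuracy figure can hide an asymmetry between the two error types.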

Threshold-Independent Metrics: ROC-AUC and PR-AUC

Unlike the previous metrics that require a fixed classification threshold, these metrics evaluate performance across all possible thresholds:

- ROC Curve & AUC: The Receiver Operating Characteristic (ROC) curve plots the True Positive Rate (Recall) against the False Positive Rate at various classification thresholds [29] [31]. The Area Under the ROC Curve (AUC) quantifies the overall ability to distinguish between positive and negative classes, with 1.0 representing perfect discrimination and 0.5 indicating random guessing [29] [31]. ROC-AUC is particularly valuable because it's independent of class distribution and provides a comprehensive view of model performance across all operating points [33].

- PR Curve & AUC: The Precision-Recall (PR) curve plots precision against recall at various thresholds and is often more informative than ROC for highly imbalanced datasets where the positive class is rare but important [31]. The area under this curve (PR-AUC) focuses specifically on the model's performance regarding the positive class, making it superior to ROC-AUC for situations where the negative class dominates but correct identification of positives is critical [31].
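
ROC-AUC has a useful probabilistic reading: it equals the probability that a randomly chosen positive case receives a higher score than a randomly chosen negative case, with ties counted as one half. The sketch below computes it that way by comparing every positive-negative pair. The function name `roc_auc` is a hypothetical helper, and the quadratic loop is chosen for clarity rather than speed.

```python
def roc_auc(labels, scores):
    """ROC-AUC as the fraction of positive-negative pairs ranked correctly."""
    positives = [s for y, s in zip(labels, scores) if y == 1]
    negatives = [s for y, s in zip(labels, scores) if y == 0]
    if not positives or not negatives:
        raise ValueError("both classes must be present")
    wins = sum(
        1.0 if p > n else 0.5 if p == n else 0.0
        for p in positives
        for n in negatives
    )
    return wins / (len(positives) * len(negatives))


print(roc_auc([0, 0, 1, 1], [0.1, 0.4, 0.35, 0.8]))  # 0.75
```

Because the value depends only on how scores are ranked, it does not change when a different classification threshold is chosen.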

The following diagram illustrates the relationship between these metrics and their foundation in the confusion matrix:

Diagram 1: Classification Metrics Taxonomy. This diagram shows the relationships between the confusion matrix components and various classification metrics, highlighting both threshold-dependent and threshold-independent evaluation approaches.

Statistical Comparison of Classification Models in Neuroimaging

Experimental Protocols for Model Comparison

Rigorous comparison of classification models in neuroimaging requires carefully designed experimental protocols. Recent research highlights critical methodological considerations:

- Cross-Validation Framework: A study investigating statistical variability in neuroimaging classification compared model accuracy using a repeated cross-validation framework [1]. The researchers created two classifiers with identical intrinsic predictive power by:

  - Training a linear Logistic Regression (LR) model on neuroimaging data

  - Creating two perturbed models by adding a random Gaussian vector to, and subtracting it from, the decision boundary coefficients

  - Evaluating accuracy differences across multiple K-fold cross-validation runs with different K and repetition values (M) [1]

- Statistical Testing Flaws: The study demonstrated that commonly used procedures, such as applying paired t-tests to accuracy scores from repeated cross-validation, are fundamentally flawed [1]. The statistical significance of accuracy differences varied substantially with cross-validation configurations (K and M values), despite comparing models with no theoretical performance differences [1].

- Dataset Characteristics: Experiments were conducted on three neuroimaging datasets: Alzheimer's Disease Neuroimaging Initiative (ADNI) with 222 patients/controls, Autism Brain Imaging Data Exchange (ABIDE) with 391 ASD/458 controls, and Adolescent Brain Cognitive Development (ABCD) with 11,225 participants for sex classification [1].

Quantitative Comparison of Evaluation Metrics

Table 2: Metric Performance Across Neuroimaging Classification Tasks

| Metric | ADNI (Alzheimer's) | ABIDE (Autism) | ABCD (Sex Classification) | Statistical Robustness |

|---|---|---|---|---|

| Accuracy | High variance with CV setup [1] | Sensitive to class balance [1] | More stable with large N [1] | Low - highly dependent on test setup [1] |

| AUC-ROC | Recommended for overall discrimination [33] | Less affected by class imbalance [31] | Consistent across thresholds [31] | High - threshold-independent [33] |

| F1-Score | Balances precision/recall tradeoff [33] | Useful for skewed groups [1] | Provides single summary metric [34] | Medium - depends on threshold choice [34] |

| Precision | Critical for diagnostic specificity [31] | Important for minimizing false ASD diagnoses | Less critical for balanced problem | Medium - varies with threshold [30] |

| Recall | Essential for identifying all patients [31] | Crucial for comprehensive detection | High for sex classification | Medium - varies with threshold [30] |

The following workflow diagram illustrates a robust experimental protocol for comparing neuroimaging classification models:

Diagram 2: Neuroimaging Model Comparison Workflow. This diagram outlines a rigorous experimental protocol for comparing classification models in neuroimaging research, emphasizing proper cross-validation and statistical testing procedures.

The Researcher's Toolkit: Essential Methodological Components

Table 3: Research Reagent Solutions for Neuroimaging Classification Studies

| Tool/Component | Function | Implementation Example |

|---|---|---|

| Cross-Validation Framework | Robust performance estimation while mitigating overfitting | K-fold (K=5-10) with stratification; repeated multiple times with different random seeds [1] |

| Multiple Evaluation Metrics | Comprehensive performance assessment from complementary perspectives | Calculate AUC-ROC, F1-Score, Precision, Recall simultaneously rather than relying on single metric [34] [32] |

| Statistical Testing Suite | Rigorous comparison of model performance accounting for dependencies | Tests that properly handle cross-validation dependencies; avoid flawed paired t-tests on correlated accuracy scores [1] |

| Public Neuroimaging Datasets | Standardized benchmarks for model development and comparison | ADNI, ABIDE, ABCD datasets providing curated neuroimaging data with diagnostic labels [1] |

| Probability Calibration Methods | Ensuring predicted probabilities reflect true empirical frequencies | Platt scaling, isotonic regression; particularly important for clinical decision support [35] |

Moving beyond simple accuracy is not merely methodological sophistication—it is a scientific necessity in neuroimaging research. Different evaluation metrics answer different questions about model performance: "Can we trust its positive predictions?" (precision), "Will it find all the true cases?" (recall), and "How well does it separate groups across all thresholds?" (AUC-ROC) [31]. The choice among these metrics must be driven by the clinical or research context, particularly the relative costs of different error types [30] [31].

Furthermore, rigorous statistical comparison of models requires acknowledging and accounting for the dependencies introduced by common evaluation procedures like cross-validation [1]. Studies demonstrate that even models with identical intrinsic performance can appear significantly different based solely on the statistical testing approach and cross-validation configuration [1]. By adopting the comprehensive evaluation framework outlined in this guide—employing multiple complementary metrics, implementing robust experimental protocols, and utilizing appropriate statistical tests—neuroimaging researchers can advance the field with more reproducible, meaningful, and clinically relevant classification models.

Statistical Methods and Cross-Validation Frameworks for Model Evaluation

The rigorous comparison of classification model accuracy is a cornerstone of machine learning (ML) advancement in neuroimaging research. Selecting appropriate statistical tests is paramount for determining whether a proposed model genuinely outperforms existing alternatives or if observed differences stem from random variability. Parametric tests like t-tests and ANOVA offer power and simplicity but require specific assumptions about data distribution. When these assumptions are violated—a common occurrence with neuroimaging data—their non-parametric counterparts provide a robust alternative for validating model performance.

Within neuroimaging, this process is complicated by unique data characteristics, including complex spatiotemporal structures, extremely high dimensionality, and significant heterogeneity across subjects and studies [36]. Furthermore, the standard use of cross-validation (CV) for model assessment introduces additional statistical challenges, as the resulting accuracy scores are not fully independent, potentially violating key assumptions of parametric tests [1]. This guide objectively compares these statistical families and provides a structured framework for their application in neuroimaging classification research.

Comparative Analysis of Statistical Tests

The table below summarizes the key hypothesis tests for comparing model accuracy, outlining their parametric and non-parametric equivalents.

| Test Objective | Parametric Test | Non-Parametric Counterpart | Key Assumptions & Use Cases |

|---|---|---|---|

| Compare two independent groups | Independent samples t-test | Mann-Whitney U test (Wilcoxon Rank Sum Test) [37] [38] | Parametric: Data normality, homogeneity of variance. Non-Parametric: Independent observations, ordinal data. Ideal for non-normal data or ranks [38]. |

| Compare two paired/related groups | Paired samples t-test | Wilcoxon Signed-Rank Test [38] | Parametric: Normality of differences between pairs. Non-Parametric: Matched pairs, ordinal data. For repeated measures on same subjects [38]. |

| Compare three or more independent groups | One-Way ANOVA | Kruskal-Wallis Test [39] [38] | Parametric: Normality, homogeneity of variance, independent observations. Non-Parametric: Independent observations, ordinal data. Interpreted as a difference in medians or as stochastic dominance [39]. |

| Compare three or more related groups | Repeated Measures ANOVA | Friedman's Test [38] | Parametric: Sphericity, normality of residuals. Non-Parametric: Repeated measures on the same entities. For multiple treatments or conditions [38]. |

Key Distinctions and Selection Criteria

The fundamental distinction lies in their assumptions. Parametric tests assume the data follows a known distribution (typically normal), while non-parametric tests are "distribution-free," making them more flexible [38]. This makes non-parametric tests particularly valuable in neuroimaging for analyzing ordinal data, data with outliers, or when instrument detection limits create "non-detectable" values that cannot be assigned arbitrary numbers [38].

However, this flexibility has a cost. If the assumptions of a parametric test are met, using a non-parametric test can result in a loss of statistical power [39]. Non-parametric tests often work with the ranks of the data rather than the raw values, which can discard some information [37] [38]. Therefore, the choice is not about one being universally better, but about selecting the right tool for the data at hand. A simple workflow for this decision is illustrated below.

Experimental Protocols for Model Comparison

A critical application of these statistical tests in neuroimaging is comparing the predictive accuracy of different classification models. A common but flawed practice is using a paired t-test on accuracy scores from a repeated K-fold cross-validation, which can inflate significance due to the non-independence of the scores [1].

A Framework for Unbiased Comparison

To objectively assess the impact of cross-validation setup on statistical significance, researchers can employ a controlled framework using classifiers with identical intrinsic predictive power [1]. The workflow for this validation procedure is detailed in the following diagram.

Protocol Steps:

- Sample Selection: Randomly choose N samples from each class to form a balanced dataset [1].

- Perturbation Vector: Create a random, zero-centered Gaussian vector with a predefined standard deviation (perturbation level, E). The dimension matches the number of features [1].

- Base Model Training: In each of the K×M cross-validation runs, train a baseline linear classifier (e.g., Logistic Regression) on the training data [1].

- Generate "Twin" Models: Create two perturbed models by adding and subtracting the random vector to the linear coefficients of the base model's decision boundary. This creates two classifiers with no inherent algorithmic superiority [1].

- Model Evaluation: Assess the accuracy of both perturbed models on the testing data [1].

- Statistical Testing: Apply a hypothesis test (e.g., paired t-test) to the K×M accuracy scores to produce a p-value. Under ideal conditions, this should not show a significant difference. This framework can reveal how CV setup choices (K and M) alone can lead to spurious claims of significance [1].
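
The "twin" model step above can be sketched as follows. The function name `make_twin_models` and the example coefficients are hypothetical, and whether the original study also perturbs the intercept is not stated here, so this sketch perturbs only the coefficient vector it is given.

```python
import random


def make_twin_models(base_coefficients, perturbation_level, seed=0):
    """Return two coefficient vectors: base + noise and base - noise.

    The noise is a zero-centered Gaussian vector whose standard deviation is
    the perturbation level E, with one entry per feature.
    """
    rng = random.Random(seed)
    noise = [rng.gauss(0.0, perturbation_level) for _ in base_coefficients]
    twin_plus = [w + e for w, e in zip(base_coefficients, noise)]
    twin_minus = [w - e for w, e in zip(base_coefficients, noise)]
    return twin_plus, twin_minus


# Hypothetical coefficients of a trained linear classifier with four features.
base = [0.8, -1.2, 0.05, 2.0]
twin_plus, twin_minus = make_twin_models(base, perturbation_level=0.1)
print(twin_plus, twin_minus)
```

The two twins sit symmetrically around the base model, so neither is favored by construction, and any "significant" accuracy difference between them reflects the testing procedure rather than the models.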

Empirical Findings from Neuroimaging Data

Application of this framework on neuroimaging datasets (e.g., ADNI, ABIDE, ABCD) demonstrates that statistical sensitivity can be artificially inflated. Specifically:

- The likelihood of detecting a "significant" difference between models increased with the number of CV folds (K) and repetitions (M), even when no real difference existed [1].

- For instance, in the ABCD dataset, the positive rate (false positive rate in this context) increased by an average of 0.49 from M=1 to M=10 across different K settings [1]. This highlights a clear risk of p-hacking through CV configuration.

Essential Research Reagents and Tools

The table below lists key analytical "reagents" essential for conducting statistically sound model comparisons in neuroimaging.

| Research Reagent | Function | Application Context |

|---|---|---|

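| Mann-Whitney U Test | Compares the distributions of two independent groups; interpretable as a difference in medians when the two distributions share the same shape [38]. | Replacing an independent t-test for non-normal data or ordinal outcomes. |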

| Kruskal-Wallis Test | Extends Mann-Whitney to compare three or more independent groups [39] [38]. | Non-parametric alternative to one-way ANOVA. |

| Permutation Tests | Non-parametric method that computes significance by randomizing data labels [40]. | Ideal for complex statistic images in SPM when parametric assumptions are untenable [40]. |

| Cross-Validation (K-fold) | Resampling procedure to assess and compare model generalizability [1]. | Standard protocol for evaluating neuroimaging-based classifiers with limited data. |

| Prevalence Inference (i-test) | Group-level test concluding an effect is typical in the population, not just present in some individuals [41]. | Second-level fMRI decoding analysis to claim an effect is common. |

| Non-Parametric Calibration | Adjusts confidence estimates of a classifier to better reflect true correctness probability [42]. | Improving reliability of predictive uncertainty in deep neural networks. |
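
To show how a permutation test avoids distributional assumptions, the sketch below implements an exact paired sign-flip test: under the null hypothesis of no difference, each paired difference is equally likely to be positive or negative, so the observed sum is compared against every possible sign assignment. This is a paired variant chosen for illustration and differs from the label-randomization procedure cited for SPM [40]; the function name and data are hypothetical. It assumes the paired differences are independent (for example, one difference per independent dataset or subject), which correlated cross-validation fold scores do not satisfy.

```python
from itertools import product


def sign_flip_p_value(differences):
    """Exact two-sided p-value from all 2**n sign assignments (small n only)."""
    observed = abs(sum(differences))
    as_extreme = 0
    total = 0
    for signs in product((1, -1), repeat=len(differences)):
        total += 1
        flipped = abs(sum(s * d for s, d in zip(signs, differences)))
        if flipped >= observed - 1e-12:  # tolerance for floating-point sums
            as_extreme += 1
    return as_extreme / total


print(sign_flip_p_value([1, 2, 3, 4, 5]))  # 0.0625
```

With five pairs there are only 32 sign assignments, so the smallest attainable two-sided p-value is 2/32, which illustrates why very small samples cannot reach conventional significance thresholds with this test.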

Selecting between t-tests, ANOVA, and their non-parametric counterparts is a critical decision that directly impacts the validity of conclusions in neuroimaging classification research. Parametric tests offer power when their strict assumptions are met, but neuroimaging data often violate these assumptions, making non-parametric tests a more robust choice for comparing model accuracies.

To ensure rigorous and reproducible results, researchers should:

- Formally Check Assumptions: Always test for normality and homogeneity of variance before defaulting to parametric tests.

- Use Robust Validation Protocols: Be aware that common cross-validation practices can inflate statistical significance. Employ controlled frameworks to understand the behavior of statistical tests under different CV setups.

- Report Transparently: Clearly state the statistical test used, the justification for its selection, and the exact cross-validation configuration (K, M). Presenting medians and interquartile ranges is often more appropriate than means when using non-parametric methods [39].

- Go Beyond Simple Significance: Consider using advanced group-level statistics like prevalence inference [41] to make more meaningful claims about how common an effect is within a population.
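The first and third recommendations above can be sketched in a few lines. This is a minimal illustration, assuming two independent samples of accuracies (for example, from independent datasets or sites, not correlated CV fold scores); the function name `compare_accuracies`, the 0.05 threshold, and the simulated data are illustrative choices rather than anything prescribed by the cited studies.

```python
import numpy as np
from scipy import stats


def compare_accuracies(acc_a, acc_b, alpha=0.05):
    """Choose a two-sample test from simple assumption checks (illustrative only)."""
    acc_a = np.asarray(acc_a, dtype=float)
    acc_b = np.asarray(acc_b, dtype=float)

    # Shapiro-Wilk normality check on each sample
    _, p_norm_a = stats.shapiro(acc_a)
    _, p_norm_b = stats.shapiro(acc_b)
    normal = p_norm_a > alpha and p_norm_b > alpha

    # Levene's test for homogeneity of variance
    _, p_var = stats.levene(acc_a, acc_b)
    equal_var = p_var > alpha

    if normal:
        test_name = "Student t-test" if equal_var else "Welch t-test"
        _, p_value = stats.ttest_ind(acc_a, acc_b, equal_var=equal_var)
    else:
        test_name = "Mann-Whitney U"
        _, p_value = stats.mannwhitneyu(acc_a, acc_b, alternative="two-sided")

    # Medians and interquartile ranges for transparent reporting
    q_a = np.percentile(acc_a, [25, 50, 75])
    q_b = np.percentile(acc_b, [25, 50, 75])
    return {
        "test": test_name,
        "p_value": float(p_value),
        "median_a": float(q_a[1]), "iqr_a": float(q_a[2] - q_a[0]),
        "median_b": float(q_b[1]), "iqr_b": float(q_b[2] - q_b[0]),
    }


# Simulated accuracies (hypothetical values, not results from any study)
rng = np.random.default_rng(0)
report = compare_accuracies(rng.normal(0.80, 0.03, 30), rng.normal(0.78, 0.03, 30))
print(report)
```

Returning the test name alongside the p-value makes it easy to state in a report which test was selected and why.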

By adhering to these practices and thoughtfully selecting statistical tests based on data properties rather than convention, researchers can generate more reliable and interpretable evidence to advance the field of neuroimaging machine learning.

In machine learning for neuroimaging, the paramount goal is to develop classification models that generalize reliably to new, unseen data. Cross-validation (CV) serves as the cornerstone technique for estimating this generalization ability, making it indispensable for validating models that classify conditions such as Alzheimer's disease, autism spectrum disorders, or cognitive states based on brain data [1] [43]. Unlike a simple train-test split, CV maximizes the use of often limited and costly neuroimaging data by systematically partitioning the dataset into training and testing subsets multiple times [44] [45].

The choice of cross-validation strategy is not merely a technical detail; it directly impacts the bias and variance of the performance estimate and can significantly influence the conclusions drawn from a study [43]. Within this framework, k-Fold Cross-Validation and Repeated k-Fold Cross-Validation have emerged as two of the most prevalent methods. However, because neuroimaging data often exhibit complex structures (including temporal dependencies, subject-specific effects, and high dimensionality), selecting and implementing a robust validation strategy is critical. Inadequate CV designs can lead to over-optimistic performance estimates and contribute to the reproducibility crisis in biomedical machine learning research [1]. This guide provides an objective comparison of these two core strategies, focusing on their application in statistically comparing the accuracy of neuroimaging-based classification models.

Understanding k-Fold and Repeated k-Fold Cross-Validation

The k-Fold Cross-Validation Protocol

k-Fold Cross-Validation is a foundational resampling technique. Its core protocol is methodical [46] [47]:

- Partition: The entire dataset is randomly divided into k approximately equal-sized subsets, known as "folds."

- Iterate: For each of the k iterations:

  - A single fold is designated as the test set.

  - The remaining k-1 folds are combined to form the training set.

  - A model is trained on the training set and evaluated on the test set, yielding a performance score (e.g., accuracy).

- Aggregate: The final reported performance metric is the average of the k individual scores obtained from each iteration [44] [47].

This process ensures that every observation in the dataset is used exactly once for testing, providing a more reliable estimate of model performance than a single train-test split [46]. The value of k is a critical choice; common values in practice are 5 and 10 [47].
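The partition, iterate, and aggregate steps can be written out directly. The sketch below uses only NumPy; the helper names (`k_fold_splits`, `k_fold_accuracy`, `nearest_centroid`) and the simulated data are hypothetical and serve only to make the protocol concrete. In practice a library splitter would normally be used instead.

```python
import numpy as np


def k_fold_splits(n_samples, k, seed=0):
    """Partition: shuffle indices and cut them into k roughly equal folds."""
    rng = np.random.default_rng(seed)
    folds = np.array_split(rng.permutation(n_samples), k)
    for i in range(k):
        test_idx = folds[i]
        train_idx = np.concatenate([folds[j] for j in range(k) if j != i])
        yield train_idx, test_idx


def nearest_centroid(X_train, y_train, X_test):
    """Toy classifier: assign each test point to the closest class mean."""
    classes = np.unique(y_train)
    centroids = np.stack([X_train[y_train == c].mean(axis=0) for c in classes])
    dists = np.linalg.norm(X_test[:, None, :] - centroids[None, :, :], axis=2)
    return classes[dists.argmin(axis=1)]


def k_fold_accuracy(X, y, k, fit_predict, seed=0):
    """Iterate over folds, then aggregate by averaging the k accuracy scores."""
    scores = []
    for train_idx, test_idx in k_fold_splits(len(y), k, seed):
        y_pred = fit_predict(X[train_idx], y[train_idx], X[test_idx])
        scores.append(float(np.mean(y_pred == y[test_idx])))
    return float(np.mean(scores)), scores


# Simulated two-class data (hypothetical, for illustration only)
rng = np.random.default_rng(42)
X = rng.normal(size=(100, 5))
y = (X[:, 0] + 0.5 * rng.normal(size=100) > 0).astype(int)

mean_acc, fold_scores = k_fold_accuracy(X, y, k=5, fit_predict=nearest_centroid)
tested = np.sort(np.concatenate([te for _, te in k_fold_splits(len(y), 5)]))
print(f"mean accuracy over 5 folds: {mean_acc:.3f}")
```

The `tested` array collects the test indices across folds and confirms that each observation is held out exactly once.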

The Repeated k-Fold Cross-Validation Protocol

Repeated k-Fold Cross-Validation is an extension designed to address the variability inherent in a single k-fold run. Its procedure is as follows [48]:

- Execute k-Fold: A complete k-fold cross-validation cycle, as described above, is performed.

- Repeat: The entire k-fold process is repeated M times. Crucially, for each repetition, the dataset is randomly reshuffled and split into k folds anew.

- Aggregate: The final performance metric is the average of all k × M individual performance scores.

By introducing multiple repetitions with new random splits, this method mitigates the risk of the model's performance estimate being dependent on a single, potentially fortunate or unfortunate, partitioning of the data [49] [48]. The following workflow diagram illustrates the structural difference between the two methods.
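As a rough illustration of the repeated protocol, the following sketch uses scikit-learn's `RepeatedKFold` with `cross_val_score`. The classifier, the simulated dataset, and the values k=5 and M=3 are arbitrary choices made for the example, not recommendations from the cited sources.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import RepeatedKFold, cross_val_score

# Simulated data standing in for a feature matrix and labels (hypothetical)
X, y = make_classification(n_samples=200, n_features=20, random_state=0)

k, M = 5, 3  # folds per repetition, number of repetitions
cv = RepeatedKFold(n_splits=k, n_repeats=M, random_state=0)

# One accuracy score per fold per repetition: k * M scores in total
scores = cross_val_score(LogisticRegression(max_iter=1000), X, y,
                         cv=cv, scoring="accuracy")

# Splits are generated repetition by repetition, so each row is one k-fold run
per_repeat = scores.reshape(M, k).mean(axis=1)

print("overall mean accuracy:", scores.mean())
print("mean accuracy per repetition:", per_repeat)
```

The spread of `per_repeat` gives a direct view of how much a single k-fold estimate depends on the particular random partition.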

Statistical and Methodological Comparison

The choice between k-Fold and Repeated k-Fold has profound implications for the statistical properties of the performance estimate. The trade-off is primarily between computational cost and the stability of the evaluation.

Table 1: Statistical and Practical Comparison of k-Fold and Repeated k-Fold CV

| Aspect | k-Fold Cross-Validation | Repeated k-Fold Cross-Validation |
|---|---|---|
| Core Principle | Single random split into k folds; each fold tests once [46] [47]. | M independent repetitions of the k-fold procedure with reshuffling [48]. |
| Variance of Estimate | Higher variance, as the estimate depends on a single data partition [48]. | Lower variance, as averaging over multiple splits provides a more stable estimate [49]. |
| Bias of Estimate | Generally low bias, as most data is used for training (e.g., 90% with k=10) [47]. | Similar low bias to standard k-fold. |
| Computational Cost | Lower; requires training k models [46]. | Higher; requires training k × M models, which can be prohibitive for complex models [48]. |
| Data Utilization | Excellent; every data point is used for testing exactly once and for training k-1 times [46]. | Excellent; uses all data, but through more comprehensive resampling. |
| Primary Advantage | Computationally efficient and straightforward to implement. | More reliable and robust performance estimate, less dependent on a single split [49]. |
| Key Disadvantage | Results can be sensitive to the initial random partition of the data. | Increased computational time and resources [48]. |

Experimental Evidence from Neuroimaging Studies

Empirical studies in neuroimaging highlight the practical consequences of cross-validation choices on model comparison and the potential for inflated or unreliable results.

Impact on Model Comparison and Statistical Significance

A critical study investigated the statistical variability in comparing classification models for neuroimaging data [1]. The researchers developed a framework to compare two classifiers with identical intrinsic predictive power. When a paired t-test was incorrectly applied to the k × M accuracy scores from a repeated k-fold CV, they found an undesired artifact: the statistical significance of the (non-existent) difference between models increased artificially with both the number of folds (k) and the number of repetitions (M) [1].

Table 2: Impact of CV Setup on False Positive Rate (Positive Rate)

Data adapted from Scientific Reports 15, 28745 (2025), demonstrating the likelihood of incorrectly detecting a significant difference between two identical models [1].

| Dataset | CV Setup (k, M) | Positive Rate (p < 0.05) |
|---|---|---|
| ABCD (Sex Classification) | k=2, M=1 | 0.08 |
| ABCD (Sex Classification) | k=2, M=10 | 0.35 |
| ABCD (Sex Classification) | k=50, M=1 | 0.25 |
| ABCD (Sex Classification) | k=50, M=10 | 0.57 |
| ABIDE (ASD Classification) | k=2, M=1 | 0.10 |
| ABIDE (ASD Classification) | k=2, M=10 | 0.40 |
| ABIDE (ASD Classification) | k=50, M=1 | 0.30 |
| ABIDE (ASD Classification) | k=50, M=10 | 0.62 |
| ADNI (Alzheimer's Classification) | k=2, M=1 | 0.07 |
| ADNI (Alzheimer's Classification) | k=2, M=10 | 0.32 |
| ADNI (Alzheimer's Classification) | k=50, M=1 | 0.22 |
| ADNI (Alzheimer's Classification) | k=50, M=10 | 0.52 |

As shown in Table 2, a 50-fold CV repeated 10 times (k=50, M=10) produced a false positive rate above 50% on all three datasets, more than ten times the nominal 5% level. In other words, two models with identical predictive power were declared significantly different more often than not. This demonstrates that inappropriate statistical testing on repeated CV outputs can severely exacerbate the problem of p-hacking, where researchers might unconsciously tune their CV parameters until a statistically significant result is achieved [1].
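To make the mechanism concrete, the sketch below contrasts a naive paired t-test on the k × M score differences with the corrected resampled t-test commonly attributed to Nadeau and Bengio. The correction inflates the variance term from 1/J to 1/J + n_test/n_train (with J = k × M) to account for overlapping training sets. It is shown as one known corrective, not as the method used in [1]; the function name and the simulated scores are hypothetical.

```python
import numpy as np
from scipy import stats


def naive_and_corrected_pvalues(scores_a, scores_b, n_train, n_test):
    """Return (naive p, corrected p) for paired CV scores of two models."""
    d = np.asarray(scores_a, dtype=float) - np.asarray(scores_b, dtype=float)
    j = d.size                      # J = k * M paired scores
    mean_d = d.mean()
    var_d = d.var(ddof=1)           # sample variance of the differences

    # Naive paired t-test: treats the J differences as independent
    t_naive = mean_d / np.sqrt(var_d / j)

    # Corrected test: adds n_test / n_train to the variance factor
    t_corr = mean_d / np.sqrt((1.0 / j + n_test / n_train) * var_d)

    p_naive = 2.0 * stats.t.sf(abs(t_naive), df=j - 1)
    p_corr = 2.0 * stats.t.sf(abs(t_corr), df=j - 1)
    return float(p_naive), float(p_corr)


# Simulated scores for k=10, M=5 on N=500 samples (hypothetical values)
rng = np.random.default_rng(1)
k, M, n = 10, 5, 500
scores_a = rng.normal(0.80, 0.02, k * M)
scores_b = scores_a - rng.normal(0.005, 0.01, k * M)

p_naive, p_corr = naive_and_corrected_pvalues(
    scores_a, scores_b, n_train=n - n // k, n_test=n // k
)
print(f"naive p = {p_naive:.4f}, corrected p = {p_corr:.4f}")
```

Because the corrected denominator is never smaller than the naive one, the corrected p-value is always at least as large. This is the direction needed to counter the inflation described above.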

Variability in Reported Classification Accuracy

Another source of variability is the structure of the data itself. In passive Brain-Computer Interface (pBCI) research, which uses neuroimaging to classify mental states, the choice between a standard k-fold and a block-wise k-fold that respects the temporal structure of the experiment can lead to dramatically different conclusions [43].

A study comparing these schemes across three EEG datasets found that classification accuracies could be inflated by up to 30.4% when temporal dependencies between training and test sets were not properly controlled [43]. This suggests that a standard k-fold CV might report an overly optimistic accuracy if the data is not independent and identically distributed (i.i.d.), a common scenario in time-series neuroimaging data. Repeated k-fold does not inherently solve this problem, but it can help characterize the variability of the estimate under different random splits, potentially alerting the researcher to instability issues.
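A block-wise split can be approximated with a group-aware splitter. The sketch below uses scikit-learn's `GroupKFold`, treating each recording block as a group, and counts how many blocks are shared between the training and test sets under each scheme. The block layout (6 blocks of 20 trials) is a made-up example and is not the design of the datasets in [43].

```python
import numpy as np
from sklearn.model_selection import GroupKFold, KFold

# Hypothetical session: 6 recording blocks with 20 trials each
n_blocks, trials_per_block = 6, 20
groups = np.repeat(np.arange(n_blocks), trials_per_block)
X = np.zeros((groups.size, 1))  # placeholder features; only the indices matter here


def shared_blocks(splitter, **split_kwargs):
    """Count blocks that appear in both train and test, for every split."""
    return [
        len(set(groups[train_idx]) & set(groups[test_idx]))
        for train_idx, test_idx in splitter.split(X, **split_kwargs)
    ]


# Standard shuffled k-fold ignores block membership
random_overlap = shared_blocks(KFold(n_splits=3, shuffle=True, random_state=0))

# Group-wise k-fold keeps every block entirely in train or entirely in test
blockwise_overlap = shared_blocks(GroupKFold(n_splits=3), groups=groups)

print("shared blocks per split, random k-fold:", random_overlap)
print("shared blocks per split, block-wise k-fold:", blockwise_overlap)
```

Any nonzero count under the random scheme means that temporally adjacent trials from the same block sit on both sides of the split, which is the condition under which inflated accuracies were reported.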

Essential Research Reagent Solutions

Implementing robust cross-validation in neuroimaging requires more than just conceptual understanding; it relies on a suite of software tools and methodological "reagents."

Table 3: Essential Research Reagent Solutions for Cross-Validation

| Research Reagent | Function in CV for Neuroimaging | Examples and Notes |
|---|---|---|
| scikit-learn Library | Provides the core implementation for k-Fold and Repeated k-Fold splitters and evaluation functions [44]. | sklearn.model_selection.KFold, RepeatedKFold, cross_val_score, cross_validate. The de facto standard for Python. |
| Caret Package (R) | Offers a unified interface for training and evaluating models using various resampling methods, including repeated CV [48]. | trainControl(method = "repeatedcv", number=10, repeats=3). Widely used in R for statistical modeling. |
| Stratified K-Fold | A variant of k-Fold that preserves the percentage of samples for each class in every fold [49] [45]. | Critical for imbalanced datasets (common in clinical neuroimaging). Available in sklearn and caret. |
| Pipeline Tooling | Ensures that data preprocessing (e.g., scaling, feature selection) is fitted only on the training fold, preventing data leakage [44]. | sklearn.pipeline.Pipeline is essential for producing valid CV results. |
| Statistical Test Correctives | Methods to properly compare models evaluated via CV, accounting for the non-independence of CV scores. | Nested CV, corrected paired t-tests, or upper-bound risk validation (K-fold CUBV) are proposed solutions [1] [50]. |
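The Pipeline and Stratified K-Fold entries in Table 3 are typically used together. The following sketch, under assumed settings, wraps scaling and univariate feature selection in a `Pipeline` so that both are refitted on the training portion of every fold. The dataset is simulated with a 70/30 class imbalance, and the choice of 20 selected features is arbitrary.

```python
from sklearn.datasets import make_classification
from sklearn.feature_selection import SelectKBest, f_classif
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import StratifiedKFold, cross_val_score
from sklearn.pipeline import Pipeline
from sklearn.preprocessing import StandardScaler

# Simulated high-dimensional, imbalanced data (hypothetical stand-in)
X, y = make_classification(n_samples=150, n_features=200, n_informative=10,
                           weights=[0.7, 0.3], random_state=0)

# Every preprocessing step lives inside the pipeline, so it only ever
# sees the training portion of each fold
pipe = Pipeline([
    ("scale", StandardScaler()),
    ("select", SelectKBest(f_classif, k=20)),
    ("clf", LogisticRegression(max_iter=1000)),
])

# Stratification keeps the class ratio roughly constant across folds
cv = StratifiedKFold(n_splits=5, shuffle=True, random_state=0)
scores = cross_val_score(pipe, X, y, cv=cv, scoring="balanced_accuracy")

print("balanced accuracy per fold:", scores)
```

Running the feature selection on the full dataset before splitting would let test labels influence which features are kept. That is the kind of leakage the pipeline is meant to prevent.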

The comparative analysis between k-Fold and Repeated k-Fold Cross-Validation reveals that there is no one-size-fits-all solution. The choice hinges on the specific goals and constraints of the neuroimaging study. k-Fold CV offers a solid, computationally efficient baseline for model evaluation. In contrast, Repeated k-Fold CV provides a more robust and stable estimate of performance, which is valuable for reliably comparing different algorithms or tuning hyperparameters, especially when dataset size is a concern [49] [48].

Based on the experimental evidence, the following best practices are recommended for neuroimaging classification research:

- Prioritize Repeated k-Fold for Model Comparison: When the goal is to rigorously compare the accuracy of two or more models, repeated k-fold is generally preferred for its lower variance and more stable estimate. A typical configuration is 10 folds repeated 5 or 10 times (k=10, M=5/10) [48].

- Use Appropriate Statistical Tests: Never apply a standard paired t-test directly to the k × M scores from a repeated k-fold, as this dramatically inflates false positive rates [1]. Employ specialized tests designed for correlated CV results or use methods like nested cross-validation for a final, unbiased comparison.

- Account for Data Structure: For neuroimaging data with inherent temporal or block structure (e.g., EEG, fMRI time-series), standard random splits are invalid. Always use a structured CV approach, such as block-wise or group-wise splits, to prevent data leakage and inflated accuracy estimates [43].

- Report CV Configuration in Detail: To enhance reproducibility, explicitly report the type of CV, the values of k and M, whether stratification was used, and how data preprocessing was handled within the CV loop [43].
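Nested cross-validation, mentioned in the recommendations above, can be sketched by placing a hyperparameter search inside an outer evaluation loop. The example below is a minimal version using scikit-learn's `GridSearchCV`; the linear SVM, the grid of `C` values, the fold counts, and the simulated data are all illustrative assumptions.

```python
from sklearn.datasets import make_classification
from sklearn.model_selection import GridSearchCV, StratifiedKFold, cross_val_score
from sklearn.svm import SVC

# Simulated data (hypothetical stand-in for a neuroimaging feature matrix)
X, y = make_classification(n_samples=120, n_features=30, random_state=0)

# Inner loop: selects hyperparameters using training data only
inner_cv = StratifiedKFold(n_splits=3, shuffle=True, random_state=1)
search = GridSearchCV(SVC(kernel="linear"), {"C": [0.01, 0.1, 1.0]},
                      cv=inner_cv, scoring="accuracy")

# Outer loop: evaluates the whole tuning procedure on held-out folds
outer_cv = StratifiedKFold(n_splits=5, shuffle=True, random_state=2)
outer_scores = cross_val_score(search, X, y, cv=outer_cv, scoring="accuracy")

print("outer-fold accuracies:", outer_scores)
print("nested CV estimate:", outer_scores.mean())
```

Reporting the inner and outer fold counts and both random seeds alongside the scores covers most of the configuration details called for in the final recommendation.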

In conclusion, a "robust" cross-validation strategy in neuroimaging is one that is not only statistically sound but also context-aware, taking into account the nature of the data, the computational budget, and the ultimate inferential goal of the research.

In neuroimaging-based machine learning (ML), the rigorous comparison of classification model accuracy is fundamental to scientific progress. Cross-validation (CV) remains the primary procedure for assessing ML models in biomedical research, particularly for small-to-medium-sized datasets common in neuroimaging studies with N < 1000 [1]. However, essential questions persist regarding how to rigorously compare the accuracy of different ML models. The statistical significance of observed accuracy differences can be profoundly influenced by the specific configuration of the cross-validation setup, including the number of folds (K) and the number of repetitions (M) [1]. This variability poses a substantial threat to the reproducibility of neuroimaging ML research, as it can potentially lead to p-hacking and inconsistent conclusions about model improvement. This guide objectively examines the impact of CV setups on statistical significance, providing experimental data and methodologies to inform researchers, scientists, and drug development professionals in the field.

Understanding Cross-Validation Setups

Cross-validation is a model validation technique used to assess how the results of a statistical analysis will generalize to an independent dataset, with a key goal of flagging problems like overfitting [51]. In a typical k-fold CV, the original sample is randomly partitioned into k equal-sized subsamples or "folds". Of these k subsamples, a single subsample is retained as validation data for testing the model, and the remaining k − 1 subsamples are used as training data. The process is repeated k times, with each of the k subsamples used exactly once as validation data [51]. The k results are then averaged to produce a single estimate.