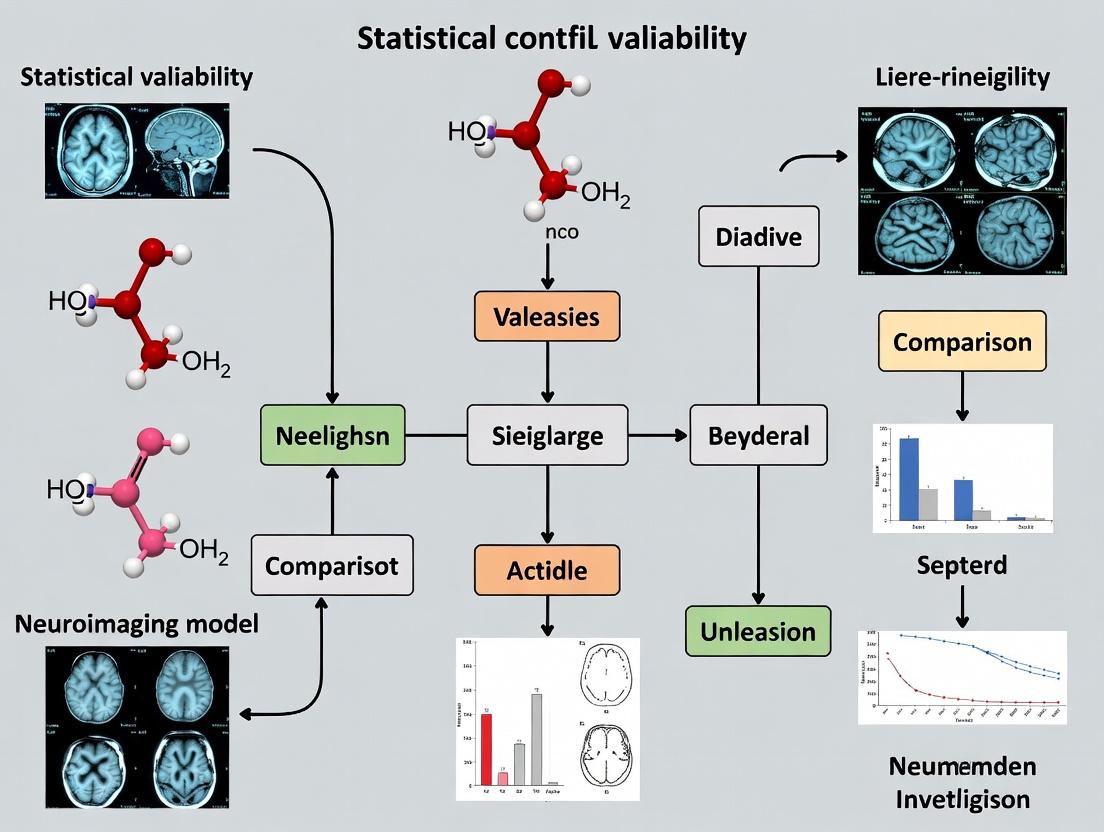

Navigating Statistical Variability in Neuroimaging Models: A Practical Guide for Robust Model Comparison


Abstract

This article provides a comprehensive framework for researchers and drug development professionals to manage statistical variability in neuroimaging model comparison. Covering everything from foundational concepts to advanced validation, it examines key sources of variability such as biological noise, measurement error, and algorithmic instability. The guide details methodological best practices, including resampling techniques, Bayesian approaches, and harmonization protocols. It addresses common troubleshooting scenarios and offers optimization strategies for statistical power and reproducibility. Finally, it presents comparative frameworks for robust model evaluation, synthesizing actionable steps to enhance the reliability and translational impact of neuroimaging research in biomedical and clinical settings.

Understanding the Maze: Core Sources of Variability in Neuroimaging Data

Technical Support & Troubleshooting Center

FAQ: Conceptual & Analytical Issues

Q1: My multivariate pattern analysis (MVPA) yields high decoding accuracy with low group variance. Does this mean statistical variability is negligible? A: Not necessarily. High accuracy with low between-subject variance can mask critical within-subject, cross-session, or cross-site variability. This stability might be specific to your cohort, preprocessing pipeline, or scanner. We recommend a "Pipeline Variability Audit": re-run your analysis with a different normalization algorithm (e.g., switch from DARTEL to ANTs) and a different voxel size or searchlight radius. Substantial drops in accuracy indicate that the model is highly susceptible to preprocessing variability.

Q2: When comparing two computational models of brain function, how do I determine if a difference in goodness-of-fit (e.g., R², BIC) is meaningful or just noise? A: Direct comparison of point estimates (e.g., mean BIC) is insufficient. You must quantify the variability of the difference itself. Implement a non-parametric, hierarchical bootstrap procedure: 1. Resample participants with replacement at the group level. 2. For each resampled group, resample trials with replacement within each participant. 3. Fit both models to this fully resampled dataset and compute the difference metric (e.g., ΔBIC). 4. Repeat 10,000 times to build a distribution of the difference. 5. Report the 95% confidence interval (CI) of this distribution. If the CI excludes zero, the difference is robust beyond sampling variability.
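The hierarchical bootstrap above can be sketched in Python as follows. This is a minimal illustration, not a reference implementation: it assumes each participant's data is a pair of NumPy arrays (trial predictor `x`, trial response `y`), and it uses two hypothetical toy models (intercept-only versus intercept plus slope, both ordinary least squares with Gaussian errors) as stand-ins for your computational models. ΔBIC is defined here as BIC(A) minus BIC(B), so positive values favor model B.

```python
import numpy as np


def gaussian_bic(y, X):
    """BIC of an OLS fit with Gaussian errors (additive constants dropped)."""
    n, k = X.shape
    beta, *_ = np.linalg.lstsq(X, y, rcond=None)
    rss = np.sum((y - X @ beta) ** 2)
    # k regression weights + 1 noise-variance parameter
    return n * np.log(rss / n) + (k + 1) * np.log(n)


def group_delta_bic(subjects):
    """Sum over participants of BIC(intercept-only) - BIC(intercept + slope)."""
    total = 0.0
    for x, y in subjects:
        ones = np.ones_like(x)
        bic_a = gaussian_bic(y, ones[:, None])                # hypothetical model A
        bic_b = gaussian_bic(y, np.column_stack([ones, x]))   # hypothetical model B
        total += bic_a - bic_b
    return total


def hierarchical_bootstrap(subjects, n_boot=10_000, seed=0):
    """Resample participants, then trials within participants; return draws and 95% CI."""
    rng = np.random.default_rng(seed)
    n_sub = len(subjects)
    draws = np.empty(n_boot)
    for b in range(n_boot):
        resampled = []
        for s in rng.integers(0, n_sub, size=n_sub):          # level 1: participants
            x, y = subjects[s]
            idx = rng.integers(0, len(x), size=len(x))        # level 2: trials
            resampled.append((x[idx], y[idx]))
        draws[b] = group_delta_bic(resampled)                 # refit both models
    ci = np.percentile(draws, [2.5, 97.5])
    return draws, ci
```

Both models are refit on every resample, so the 10,000 iterations in the protocol can be slow for expensive models; parallelizing the outer loop is the usual remedy.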

Q3: Our drug trial fMRI study shows high inter-subject variability in target engagement biomarkers. How can we statistically separate "biological" from "technical" variability? A: A controlled test-retest reliability study is required. Use the following protocol on a healthy control subset before your main trial:

- Protocol: Acquire fMRI data during the same task paradigm across two sessions, 1-2 weeks apart, controlling for time of day. For a pharmacological challenge, acquire both reliability sessions under the same condition (placebo-placebo or drug-drug); drug-versus-placebo sessions, double-blinded and counterbalanced, estimate the drug effect rather than test-retest reliability.

- Analysis: Calculate the Intraclass Correlation Coefficient (ICC(2,1)) for your key outcome measure (e.g., beta estimates from an ROI) across the two sessions under the same condition (placebo-placebo or drug-drug).

- Interpretation: See Table 1.

Table 1: Interpreting Intraclass Correlation Coefficient (ICC) for Variability Source Assessment

| ICC Range | Interpretation for Variability Source |
|---|---|
| < 0.5 | Poor Reliability. Outcome measure is dominated by technical/session noise. Not suitable as a stable biomarker. |
| 0.5 - 0.75 | Moderate Reliability. Mix of technical and substantive biological variability. Use with caution. |
| 0.75 - 0.9 | Good Reliability. Variability is primarily attributable to substantive between-subject biological differences. |
| > 0.9 | Excellent Reliability. Measure is highly stable; observed differences likely reflect true biological effects. |
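For the ICC(2,1) computation, a minimal NumPy sketch using the standard two-way ANOVA mean squares (Shrout and Fleiss's formulation: two-way random effects, absolute agreement, single measure) is shown below. `icc_2_1` and `interpret_icc` are illustrative names, and the bands mirror Table 1. For production use, a packaged implementation such as pingouin's `intraclass_corr` is a useful cross-check.

```python
import numpy as np


def icc_2_1(scores):
    """ICC(2,1) for an (n_subjects, k_sessions) array, e.g. ROI betas per session."""
    Y = np.asarray(scores, dtype=float)
    n, k = Y.shape
    grand = Y.mean()
    ss_rows = k * np.sum((Y.mean(axis=1) - grand) ** 2)   # between subjects
    ss_cols = n * np.sum((Y.mean(axis=0) - grand) ** 2)   # between sessions
    ss_total = np.sum((Y - grand) ** 2)
    ss_err = ss_total - ss_rows - ss_cols                 # residual
    ms_rows = ss_rows / (n - 1)
    ms_cols = ss_cols / (k - 1)
    ms_err = ss_err / ((n - 1) * (k - 1))
    return (ms_rows - ms_err) / (
        ms_rows + (k - 1) * ms_err + k * (ms_cols - ms_err) / n
    )


def interpret_icc(icc):
    """Map an ICC value onto the reliability bands of Table 1."""
    if icc < 0.5:
        return "poor"
    if icc < 0.75:
        return "moderate"
    if icc <= 0.9:
        return "good"
    return "excellent"
```

For example, `icc_2_1([[1, 2], [3, 4], [5, 6]])` returns 8/9 (about 0.89, "good"): the subjects are perfectly rank-consistent, but the constant one-unit shift between sessions lowers absolute agreement.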

Q4: My dynamic causal modeling (DCM) results are inconsistent across runs. How can I stabilize parameter estimates? A: This is often due to the optimization algorithm converging to different local optima.

- Troubleshooting Step: Always run the inversion multiple times (≥ 8) from different random starting points, using the DCM toolbar option for stochastic (random) restarts.

- Solution: After multiple runs, do not simply select the best-fitting run. Instead, perform parametric empirical Bayes (PEB) on the ensemble of estimated parameters. This hierarchical Bayesian approach pools information across runs, providing more stable and reliable posterior estimates that account for estimation variability.

Q5: How do I choose between parametric (e.g., Gaussian) and non-parametric permutation tests for my neuroimaging group analysis? A: The choice hinges on the distribution of your effect size and the sample size. See the decision workflow below.

Diagram Title: Decision Workflow: Parametric vs. Non-Parametric Group Test
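To make the two options concrete, here is a hedged sketch for the simplest group analysis, a one-sample test on one contrast value per subject. It runs SciPy's parametric `ttest_1samp` alongside a sign-flip permutation test, which assumes only that each subject's effect is symmetric around zero under the null hypothesis. The function name and defaults are illustrative, and the code does not encode the decision workflow itself.

```python
import numpy as np
from scipy import stats


def one_sample_tests(effects, n_perm=10_000, seed=0):
    """Return (t_obs, parametric p, sign-flip permutation p) for one value per subject."""
    x = np.asarray(effects, dtype=float)
    n = x.size
    t_obs = x.mean() / (x.std(ddof=1) / np.sqrt(n))
    p_param = stats.ttest_1samp(x, 0.0).pvalue

    rng = np.random.default_rng(seed)
    signs = rng.choice([-1.0, 1.0], size=(n_perm, n))
    flipped = signs * x                          # each row is one random sign-flipping
    t_null = flipped.mean(axis=1) / (flipped.std(axis=1, ddof=1) / np.sqrt(n))
    # Two-sided p-value; the +1 terms count the observed labelling as one permutation
    p_perm = (np.sum(np.abs(t_null) >= abs(t_obs)) + 1) / (n_perm + 1)
    return t_obs, p_param, p_perm
```

When the two p-values agree, the parametric assumptions are probably harmless; a large discrepancy with small samples or skewed effects is a reason to prefer the permutation result.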

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Tools for Managing Variability in Neuroimaging Research

| Item / Solution | Function & Role in Managing Variability |
|---|---|
| fMRIPrep / fsl_anat | Standardized preprocessing pipelines (fMRIPrep is also distributed as a container) reduce variability introduced by ad-hoc manual preprocessing steps. |
| BIDS (Brain Imaging Data Structure) | A consistent file organization standard reduces administrative variability and supports reproducibility across labs and software. |
| C-PAC / Nipype | Workflow management systems record every analysis step so it can be replicated exactly, making pipeline variability explicit. |
| NeuroVault / Brainlife.io | Public data-sharing platforms provide access to large, diverse datasets for estimating population variability and benchmarking models. |
| Bootstrap & Permutation Libraries (e.g., in nilearn, FSL's randomise) | Tools for non-parametric resampling that directly quantify the uncertainty and stability of statistical estimates. |
| ICC Calculation Toolboxes (e.g., pingouin in Python, psych in R) | Specialized packages for computing reliability metrics that help partition biological from measurement variability. |
| PEB Framework in SPM | The Parametric Empirical Bayes framework for DCM and GLMs provides hierarchical modeling to partition variance and stabilize estimates. |

Troubleshooting Guides & FAQs

Q1: During group-level model comparison, we see high between-subject variance that drowns out our effect. How can we determine if subject motion is the primary culprit? A: Quantify motion per subject and correlate with your model's key parameter estimates (e.g., beta weights).

- Protocol: Use framewise displacement (FD) from your preprocessing tool (e.g., fsl_motion_outliers, or the scrubbing tools in CONN/SPM). For each subject, calculate the mean FD across the scan.

- Analysis: Across subjects, run a Spearman correlation between mean FD and each subject's estimate of interest (e.g., the first-level beta that enters the group model). A significant correlation indicates that motion is a major confound.

- Solution: Include mean FD as a nuisance regressor in the group-level model, or use a robust regression technique that down-weights outlying subjects, which are often the high-motion ones.
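The analysis and solution steps can be sketched as below, assuming you already have one mean FD value and one first-level estimate (e.g., an ROI beta) per subject. `motion_confound_check` is a hypothetical helper: it reports the Spearman correlation and fits a group model with demeaned mean FD as a nuisance regressor, so the intercept remains the group-mean effect at average motion. Inference on these coefficients (standard errors, t-tests) would still come from your group-level software.

```python
import numpy as np
from scipy import stats


def motion_confound_check(mean_fd, subject_betas):
    """Spearman check plus a group GLM with demeaned mean FD as nuisance regressor."""
    fd = np.asarray(mean_fd, dtype=float)
    betas = np.asarray(subject_betas, dtype=float)
    rho, p = stats.spearmanr(fd, betas)
    fd_c = fd - fd.mean()                       # demean so the intercept is the group mean
    X = np.column_stack([np.ones_like(fd_c), fd_c])
    coef, *_ = np.linalg.lstsq(X, betas, rcond=None)
    return rho, p, coef                         # coef[0]: group effect, coef[1]: FD slope
```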

Q2: Our multi-site study shows significant scanner-dependent bias in BOLD signal amplitude, threatening combined analysis. How do we diagnose and correct this? A: Implement a harmonization method such as ComBat.

- Diagnosis Protocol:

- Extract mean signal from a control region (e.g., white matter or whole-brain gray matter) for each subject and session.

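- Plot distributions per scanner. A significant site effect in an ANOVA indicates technical bias, provided biological covariates (e.g., age, diagnosis) are balanced across sites; otherwise, include them as covariates so that site and population differences are not confounded.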

- Correction Protocol: Apply ComBat harmonization to your first-level contrast images or extracted features. It models the data as having both biological covariates of interest (e.g., diagnosis) and technical batch effects (scanner), removing the latter while preserving the former.
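A sketch of the diagnostic ANOVA, assuming one mean control-region value per subject and a matching array of site labels; `site_effect_anova` is an illustrative name wrapping SciPy's `f_oneway`. It does not adjust for biological covariates, so treat a significant result as evidence of a technical site effect only when those covariates are balanced across sites.

```python
import numpy as np
from scipy import stats


def site_effect_anova(control_signal, site):
    """One-way ANOVA of a control-region signal across scanners/sites."""
    values = np.asarray(control_signal, dtype=float)
    site = np.asarray(site)
    groups = [values[site == s] for s in np.unique(site)]   # one array per site
    return stats.f_oneway(*groups)
```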

Q3: Physiological noise (cardiac, respiratory) introduces spurious temporal correlations, inflating false positives in connectivity model comparisons. What is a robust removal strategy? A: Use RETROICOR or equivalent model-based correction.

- Protocol:

- Record: Acquire peripheral physiological data (pulse oximeter for cardiac, respiratory belt) synchronized with scan triggers.

- Model: Use tools such as FSL's PNM (Physiological Noise Modelling) or AFNI's RetroTS.py to create regressors that model the phase of the cardiac and respiratory cycles.

- Regress: Include these noise regressors (typically 2-4 for cardiac, 2-4 for respiratory) in your first-level general linear model (GLM), or regress them out during preprocessing.
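A minimal sketch of the cardiac half of RETROICOR, assuming you have already detected cardiac peak times (in seconds, on the scanner clock) and know the acquisition time of each slice or volume. Cardiac phase is the fraction of the current cycle elapsed, and the regressors are its low-order Fourier expansion; `order=2` yields four regressors, the top of the range above. The original method derives respiratory phase from a histogram of breathing amplitude, which this sketch does not implement; the function names are hypothetical.

```python
import numpy as np


def cardiac_phase(peak_times, sample_times):
    """Cardiac phase in [0, 2*pi) at each sample time, from detected peak times (s).

    Samples before the first peak or at/after the last peak get NaN, because
    the surrounding cardiac cycle is unknown there.
    """
    peaks = np.asarray(peak_times, dtype=float)
    t = np.asarray(sample_times, dtype=float)
    idx = np.searchsorted(peaks, t, side="right") - 1     # last peak at or before t
    valid = (idx >= 0) & (idx < peaks.size - 1)
    phase = np.full(t.shape, np.nan)
    t0 = peaks[idx[valid]]
    t1 = peaks[idx[valid] + 1]
    phase[valid] = 2 * np.pi * (t[valid] - t0) / (t1 - t0)
    return phase


def fourier_regressors(phase, order=2):
    """RETROICOR-style regressors: cos(m*phase) and sin(m*phase) for m = 1..order."""
    cols = []
    for m in range(1, order + 1):
        cols.extend([np.cos(m * phase), np.sin(m * phase)])
    return np.column_stack(cols)
```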

Q4: When comparing computational models of brain function, how do we dissociate true neural variability from noise-induced variability in model fits? A: Employ cross-validation and noise ceiling estimation.

- Protocol:

- Split your data into independent training and test sets (e.g., by scan session or run).

- Fit your competing models to the training data.

- Evaluate model performance (e.g., prediction accuracy, log-likelihood) on the held-out test set.

- Calculate a noise ceiling using the inter-subject correlation method to estimate the theoretically best possible model performance given the inherent noise in your data.
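One common way to estimate the noise ceiling is the leave-one-subject-out scheme used in representational similarity analysis, sketched below under the assumption that each subject contributes a response pattern over the same features (for example, the upper triangle of an RDM). Correlating each subject with the group mean including themselves gives an upper bound; correlating with the mean of the other subjects gives a lower bound. This Pearson version is a simplification; RSA toolboxes often use rank correlations instead.

```python
import numpy as np


def noise_ceiling(patterns):
    """Leave-one-subject-out noise ceiling (Pearson variant).

    patterns: (n_subjects, n_features). Returns (lower, upper) bounds on the
    mean correlation a model can be expected to reach with individual subjects.
    """
    data = np.asarray(patterns, dtype=float)
    grand = data.mean(axis=0)
    lower, upper = [], []
    for s in range(data.shape[0]):
        others = np.delete(data, s, axis=0).mean(axis=0)
        upper.append(np.corrcoef(data[s], grand)[0, 1])    # mean includes subject s
        lower.append(np.corrcoef(data[s], others)[0, 1])   # mean excludes subject s
    return float(np.mean(lower)), float(np.mean(upper))
```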

Table 1: Impact of Common Noise Sources on Key Metrics

| Noise Source | Typical Effect on BOLD Signal | Common Metric for Quantification | Approx. % Variance Explained (Range) |
|---|---|---|---|
| Head Motion | Increased autocorrelation, spin history artifacts, ghosting. | Framewise Displacement (FD) | 5-20% in task-based; up to 50% in resting-state connectivity. |
| Scanner Drift | Low-frequency signal drift (typically <0.01 Hz). | Linear/Quadratic Trend Power | 10-30% (highly scanner-dependent). |
| Cardiac Pulsation | ~1 Hz periodic noise, strongest near major arteries. | RETROICOR R² in CSF | 2-10% globally, >60% in brainstem. |
| Respiration | ~0.3 Hz periodic noise, CO₂-induced low-frequency changes. | RETROICOR R² | 3-12% globally. |

Table 2: Harmonization and Denoising Method Comparison

| Method | Principle | Best For | Key Consideration |
|---|---|---|---|
| ComBat | Empirical Bayes, removes site mean/variance differences. | Multi-site studies with balanced design. | Preserves biological group effects; requires sufficient sample size per site. |
| Global Signal Regression (GSR) | Regresses out whole-brain mean signal. | Single-site studies with severe motion/physiological noise. | Controversial; may remove neural signal of interest. |
| Detrending / High-Pass Filter | Removes low-frequency scanner drifts. | All studies, as a baseline step. | Must set appropriate cut-off (e.g., 128 s for standard fMRI). |

Experimental Protocols

Protocol: Framewise Displacement (FD) Calculation for Motion QC

- Input: The 6 rigid-body realignment parameters (3 translations, 3 rotations) output from motion correction (e.g., SPM's rp_*.txt or FSL's mcflirt .par file).

- Calculation: For each time point t, FD is the sum of the absolute backward differences of the six parameters, with rotations (in radians) converted to millimeters as arc length on a sphere of radius r = 50 mm (the convention introduced by Power et al.):

$$\mathrm{FD}_t = |\Delta x_t| + |\Delta y_t| + |\Delta z_t| + r\left(|\Delta\alpha_t| + |\Delta\beta_t| + |\Delta\gamma_t|\right), \qquad \Delta x_t = x_t - x_{t-1}$$

- Thresholding: Subjects whose mean FD exceeds a threshold, typically between 0.2 mm and 0.5 mm depending on the task, are flagged for exclusion or rigorous correction.
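The FD calculation above can be sketched in NumPy as follows. It assumes the columns are ordered as three translations in mm followed by three rotations in radians, which is the SPM rp_*.txt layout; other tools may order columns differently (FSL's mcflirt .par file, for instance, lists the rotations first), so check and reorder before use. The function names are illustrative.

```python
import numpy as np


def framewise_displacement(params, radius_mm=50.0):
    """FD per volume from a (T, 6) array: 3 translations (mm), then 3 rotations (rad)."""
    params = np.asarray(params, dtype=float)
    deltas = np.abs(np.diff(params, axis=0))    # |p_t - p_{t-1}| for every parameter
    deltas[:, 3:] *= radius_mm                   # radians -> arc length in mm on the sphere
    return np.concatenate([[0.0], deltas.sum(axis=1)])


def mean_fd(params, radius_mm=50.0):
    """Mean FD over volumes 2..T (volume 1 has no predecessor)."""
    return framewise_displacement(params, radius_mm)[1:].mean()
```

The first volume is assigned FD = 0 by convention, so `mean_fd` averages from the second volume onward; compare its output against your chosen 0.2-0.5 mm threshold.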

Protocol: ComBat Harmonization for Multi-Site Data

- Prepare Data Matrix: Create an N (subjects) x V (voxels/features) matrix of your neuroimaging features (e.g., regional homogeneity values, FA from DTI).

- Define Covariates: Create a design matrix with biological variables of interest (e.g., age, diagnosis, group).

- Define Batch: Create a batch variable indicating scanner/site membership.

- Run ComBat: Use a validated implementation (e.g., neuroCombat in Python/R). The algorithm estimates and removes location (mean) and scale (variance) shifts per feature for each batch.

- Output: Use the harmonized data matrix for all subsequent group-level model comparisons.
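To show what removing location and scale shifts per feature means, here is a deliberately simplified location/scale adjustment in NumPy. It is not ComBat: it omits ComBat's empirical Bayes shrinkage of the per-site estimates, which matters most when sites are small, so use a validated implementation such as neuroCombat for real analyses. `simple_location_scale_harmonize` is a hypothetical name; inputs follow the protocol (an N x V feature matrix, a batch vector, and biological covariates).

```python
import numpy as np


def simple_location_scale_harmonize(Y, batch, covariates):
    """Remove per-site mean and variance shifts while keeping covariate effects.

    Y: (N, V) features; batch: (N,) site labels; covariates: (N, p) biological
    variables. Simplified sketch: no empirical Bayes pooling across features.
    """
    Y = np.asarray(Y, dtype=float)
    covariates = np.asarray(covariates, dtype=float).reshape(len(Y), -1)
    levels, batch_idx = np.unique(batch, return_inverse=True)
    K = len(levels)
    B = np.eye(K)[batch_idx]                        # one-hot site indicators (N, K)
    D = np.hstack([B, covariates])                  # site dummies play the intercept role
    coef, *_ = np.linalg.lstsq(D, Y, rcond=None)    # per-feature OLS, shape (K + p, V)
    gamma, beta = coef[:K], coef[K:]
    alpha = (B.sum(axis=0) / len(Y)) @ gamma        # size-weighted grand intercept (V,)
    resid = Y - D @ coef
    sigma = np.sqrt(np.mean(resid ** 2, axis=0))    # pooled residual SD per feature
    bio = covariates @ beta                         # biological signal to preserve
    Z = (Y - alpha - bio) / sigma                   # standardized data
    Z_adj = np.empty_like(Z)
    for k in range(K):
        rows = batch_idx == k
        # Remove this site's location and scale shift in standardized units
        Z_adj[rows] = (Z[rows] - Z[rows].mean(axis=0)) / Z[rows].std(axis=0)
    return Z_adj * sigma + alpha + bio
```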

Visualizations

Diagram 1: Neuroimaging Noise Source Classification

Diagram 2: Workflow for Model Comparison with Noise Correction

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Noise Mitigation |
|---|---|
| Peripheral Physiological Monitors (Pulse Oximeter, Respiratory Belt) | Record cardiac and respiratory waveforms synchronized to the scanner for RETROICOR-based noise modeling. |
| Custom Head Restraints / Vacuum Cushions | Minimize gross head motion, a primary source of biological and technical variance. |
| QC Phantoms | Gel or liquid phantoms scanned regularly to monitor scanner stability (signal-to-noise ratio, drift) over time. |
| Harmonization Software (e.g., neuroCombat Python/R library) | Statistically removes site-specific technical variance from multi-center datasets. |
| Framewise Displacement Calculator (e.g., fsl_motion_outliers, AFNI's 1d_tool.py) | Quantifies volume-to-volume head motion to identify subjects/scans requiring stringent correction or exclusion. |

Troubleshooting Guides & FAQs

FAQ 1: Model Convergence & Overfitting

Q: My neuroimaging model comparison shows perfect performance on the training set but fails on the validation set. What's happening? A: This is a classic sign of overfitting in high-dimensional spaces. Standard statistics (e.g., p-values from simple t-tests) become unreliable when the number of features (voxels, connections) vastly exceeds the number of subjects. The model memorizes noise rather than learning generalizable brain-behavior relationships.

FAQ 2: Inflated False Positive Rates

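Q: Why do I keep finding "significant" voxels or connections that don't replicate? A: In high-dimensional neuroimaging data, performing mass univariate tests (e.g., at each voxel) without rigorous correction creates a massive multiple comparisons problem. Bonferroni correction is often too conservative because it ignores the spatial correlation between neighboring voxels, while uncorrected thresholds admit many false positives. Methods that control the false discovery rate (FDR) or exploit spatial structure (cluster-based inference, TFCE, random field theory) offer a middle ground.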
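One standard way to control the FDR is the Benjamini-Hochberg step-up procedure, sketched below in NumPy next to Bonferroni for comparison. It assumes independent or positively dependent tests; the function names are illustrative, and packaged versions exist (e.g., statsmodels' `multipletests`).

```python
import numpy as np


def bonferroni(pvals, alpha=0.05):
    """Reject where p <= alpha / m (controls the family-wise error rate)."""
    p = np.asarray(pvals, dtype=float)
    return p <= alpha / p.size


def benjamini_hochberg(pvals, q=0.05):
    """Benjamini-Hochberg step-up procedure controlling the FDR at level q."""
    p = np.asarray(pvals, dtype=float)
    m = p.size
    order = np.argsort(p)
    # Compare the k-th smallest p-value with k * q / m
    below = p[order] <= q * np.arange(1, m + 1) / m
    reject = np.zeros(m, dtype=bool)
    if below.any():
        k = np.flatnonzero(below).max()    # largest rank meeting its threshold
        reject[order[: k + 1]] = True      # reject it and every smaller p-value
    return reject
```

With the p-values 0.001, 0.008, 0.039, 0.041, 0.042, 0.06, 0.074, 0.205, 0.212, and 0.216, Bonferroni (0.05/10 = 0.005) keeps only the smallest, while Benjamini-Hochberg at q = 0.05 keeps the two smallest.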

FAQ 3: Choice of Null Distribution

Q: How do I generate a valid null distribution for model comparison statistics (e.g., R², accuracy) in neuroimaging? A: Standard parametric distributions (F, t) often fail because model performance metrics in high dimensions violate independence and normality assumptions; for example, cross-validation folds share training data, so fold-wise scores are not independent. Use permutation testing instead, with permutation and cross-validation schemes tailored to the data structure (e.g., stratified, blocked).

FAQ 4: Handling Non-Independent Data

Q: My data has inherent structure (e.g., repeated measures, familial ties). Which model comparison approach accounts for this? A: Standard tests assume independent and identically distributed (i.i.d.) samples. For structured data, use methods that respect the dependency structure: mixed-effects models for repeated measures, grouped (and, when tuning hyperparameters, nested) cross-validation that keeps related observations such as all scans of one subject or all members of one family in the same fold, and permutation tests that only exchange observations within or between exchangeable blocks.
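For cross-validation, scikit-learn's `GroupKFold` is one way to keep related observations together, sketched below assuming a `groups` array that assigns each scan to a subject or family. The helper name and the example families are illustrative; the same splitter and `groups` array can be passed directly to `cross_val_score`.

```python
import numpy as np
from sklearn.model_selection import GroupKFold


def grouped_folds(groups, n_splits=5):
    """Train/test index pairs in which no group (subject, family) spans both sides."""
    groups = np.asarray(groups)
    X_placeholder = np.zeros((groups.size, 1))    # GroupKFold only needs the sample count
    return list(GroupKFold(n_splits=n_splits).split(X_placeholder, groups=groups))


# Example: 12 hypothetical families with 3 scans each
families = np.repeat(np.arange(12), 3)
folds = grouped_folds(families, n_splits=4)
```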

Key Experimental Protocols

Protocol 1: Nested Cross-Validation for Fair Model Comparison

- Outer Loop (Performance Estimation): Split data into K folds (e.g., K = 5 or 10). For each fold:

- Hold out one fold as the test set.

- Use the remaining K-1 folds for the inner loop.

- Inner Loop (Model Selection & Tuning): On the K-1 training folds:

- Perform another cross-validation to select hyperparameters or choose between model types.

- Train the final chosen model configuration on all K-1 folds.

- Testing: Evaluate the trained model on the held-out test fold from the outer loop.

- Aggregation: Average performance metrics (e.g., mean squared error, classification accuracy) across all outer test folds. Because the outer test folds never influence model selection, this yields an approximately unbiased estimate of generalizability.
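Protocol 1 maps onto scikit-learn by wrapping a `GridSearchCV` (the inner loop, which by default refits the best configuration on all K-1 training folds) inside `cross_val_score` (the outer loop). The sketch below assumes a logistic regression with a grid over its regularization strength `C` and synthetic data from `make_classification`; substitute your own estimator, grid, and features.

```python
from sklearn.datasets import make_classification
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import GridSearchCV, StratifiedKFold, cross_val_score
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler


def nested_cv_accuracy(X, y, c_grid=(0.01, 0.1, 1.0, 10.0), seed=0):
    """Outer folds estimate performance; inner folds choose C on training data only."""
    inner = StratifiedKFold(n_splits=5, shuffle=True, random_state=seed)
    outer = StratifiedKFold(n_splits=5, shuffle=True, random_state=seed + 1)
    # Scaling lives inside the pipeline, so it is fit on training folds only
    model = make_pipeline(StandardScaler(), LogisticRegression(max_iter=1000))
    search = GridSearchCV(model, {"logisticregression__C": list(c_grid)}, cv=inner)
    # cross_val_score reruns the whole search on each outer training split
    return cross_val_score(search, X, y, cv=outer)


# Synthetic stand-in for a subjects x brain-features matrix
X, y = make_classification(n_samples=120, n_features=50, n_informative=5,
                           class_sep=1.5, random_state=0)
outer_scores = nested_cv_accuracy(X, y)
print(f"accuracy {outer_scores.mean():.2f} +/- {outer_scores.std(ddof=1):.2f}")
```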

Protocol 2: Permutation Testing for Model Significance

- Train Model & Get True Statistic: Train your model on the original dataset with correct labels/outcomes. Calculate your performance statistic (S_true), e.g., mean cross-validated accuracy.

- Generate Null Distribution: For N permutations (typically 1000-5000):

- Randomly shuffle the outcome variable (or labels) to break the brain-behavior relationship.

- Retrain the model on the permuted data, using the same cross-validation folds as in Step 1.

- Calculate the permuted performance statistic (S_perm,i).

- Calculate the p-value: $p = \dfrac{1 + \#\{i : S_{\mathrm{perm},i} \ge S_{\mathrm{true}}\}}{N + 1}$.

- Inference: Reject the null hypothesis if p < your alpha level (e.g., 0.05).
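Protocol 2 can be sketched as below, assuming a scikit-learn classifier and mean cross-validated accuracy as the statistic. The folds are generated once and reused for every permutation, as the protocol requires. scikit-learn also ships `permutation_test_score`, which implements the same idea; the explicit loop here just exposes each step. Names and the synthetic data are illustrative.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import StratifiedKFold, cross_val_score


def permutation_test(model, X, y, n_permutations=1000, seed=0):
    """Return (S_true, null distribution, p) for mean cross-validated accuracy."""
    cv = StratifiedKFold(n_splits=5, shuffle=True, random_state=seed)
    folds = list(cv.split(X, y))              # fixed once, reused for every permutation
    s_true = cross_val_score(model, X, y, cv=folds).mean()
    rng = np.random.default_rng(seed)
    null = np.empty(n_permutations)
    for i in range(n_permutations):
        y_perm = rng.permutation(y)           # break the brain-behavior relationship
        null[i] = cross_val_score(model, X, y_perm, cv=folds).mean()
    # +1 in numerator and denominator counts the observed labelling as one permutation
    p = (np.sum(null >= s_true) + 1) / (n_permutations + 1)
    return s_true, null, p


X, y = make_classification(n_samples=80, n_features=20, n_informative=4, random_state=0)
s_true, null, p = permutation_test(LogisticRegression(max_iter=1000), X, y, n_permutations=100)
print(f"S_true={s_true:.2f}, p={p:.3f}")
```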

Table 1: Comparison of Statistical Methods in High-Dimensional Settings

| Method | Typical Use Case | Key Assumption | Failure Mode in High-Dimensions | Recommended Alternative |
|---|---|---|---|---|
| t-test / ANOVA | Mass-univariate voxel-wise analysis | Independent, normally distributed errors, low multiple comparisons. | Severely inflated Family-Wise Error Rate (FWER) due to 100,000+ tests. | Cluster-based inference, False Discovery Rate (FDR), Random Field Theory. |
| Standard Linear Regression | Predicting outcome from brain features. | More observations than features (n > p), independent errors. | Ill-posed problem (p >> n), leads to overfitting and non-unique solutions. | Regularized regression (Lasso, Ridge), Principal Component Regression. |
| Simple Cross-Validation | Estimating model generalizability. | Data points are independent. | Optimistically biased if data has structure (time, family). | Nested CV, blocked/stratified permutation. |
| Parametric p-values | Assessing significance of model accuracy. | Accuracy follows a known distribution (e.g., binomial). | Distribution assumptions break down with correlated features. | Permutation-based p-values. |

Table 2: Example Reagent & Software Toolkit for Neuroimaging Model Comparison

| Item Name | Category | Function/Benefit |
|---|---|---|
| Scikit-learn | Software Library (Python) | Provides standardized, efficient implementations of models (linear, SVM), cross-validation splitters, and metrics. Essential for reproducible pipelines. |
| nilearn | Software Library (Python) | Built on scikit-learn for neuroimaging data. Handles masking, connectivity maps, and decoding with brain visualization. |
| FSL's Randomise | Software Tool | Implements non-parametric permutation testing for voxelwise statistics (including cluster-based and TFCE options), robust to high-dimensional data. |
| CONN Toolbox | Software Toolbox (MATLAB) | Specialized for functional connectivity analyses; includes multivariate pattern analysis (MVPA) of connectivity and network-based statistics. |
| High-Performance Computing (HPC) Cluster | Infrastructure | Permutation testing and nested CV are computationally intensive. HPC allows parallelizing 1000s of permutations/jobs. |

Visualizations

Title: Nested CV & Permutation Workflow for Model Comparison

Title: Common Statistical Pitfalls in High-Dimensional Neuroimaging

Technical Support Center: Troubleshooting & FAQs

This support center addresses common issues encountered when evaluating predictive models in neuroimaging research, within the broader thesis context of handling statistical variability in model comparison.

Frequently Asked Questions (FAQ)

Q1: My model’s AUC-ROC is 0.92 on my test set, but drops to ~0.68 on a slightly different patient cohort from a new scanner. Is this normal, and what should I do? A: Such a drop is common; it is a classic manifestation of AUC instability due to dataset shift. AUC does not depend directly on class prevalence, but it is sensitive to shifts in the feature (and therefore score) distributions of either class, such as a different case mix or a new scanner. First, use calibration plots to check whether the predicted probabilities have shifted. Then apply harmonization or domain adaptation (e.g., ComBat for scanner effects) to your neuroimaging features before model training. Always report a bootstrapped confidence interval for AUC, and consider reporting balanced accuracy alongside AUC for imbalanced, shifting cohorts.

Q2: I have added a new biomarker, but my model’s RMSE has only improved from 8.4 to 8.3. Is this significant, or just noise? A: A small RMSE change can be misleading. You must assess if this decrease is beyond expected statistical variability. Protocol: Perform a nested model comparison (F-test) or use a cross-validated paired t-test on the squared error vectors from the two models across your test folds. Calculate the 95% confidence interval for the RMSE difference via bootstrapping (minimum 1000 iterations). If the interval contains zero, the improvement is likely not statistically significant. Also, check if the new biomarker increases model complexity unjustifiably.
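The bootstrap check for the RMSE difference can be sketched as follows, assuming both models were evaluated on the same test subjects. The key design choice is pairing: each resample draws the same subjects for both models, so the shared difficulty of individual cases cancels out of the difference. The function name is illustrative.

```python
import numpy as np


def bootstrap_rmse_difference(y_true, pred_a, pred_b, n_boot=2000, seed=0):
    """Percentile 95% CI for RMSE(A) - RMSE(B); positive values favor model B."""
    y, a, b = (np.asarray(v, dtype=float) for v in (y_true, pred_a, pred_b))
    rng = np.random.default_rng(seed)
    n = y.size
    diffs = np.empty(n_boot)
    for i in range(n_boot):
        idx = rng.integers(0, n, size=n)      # same resampled subjects for both models
        rmse_a = np.sqrt(np.mean((y[idx] - a[idx]) ** 2))
        rmse_b = np.sqrt(np.mean((y[idx] - b[idx]) ** 2))
        diffs[i] = rmse_a - rmse_b
    return np.percentile(diffs, [2.5, 97.5])
```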

Q3: My explained variance (R²) is negative on the held-out validation set, but was positive during training. What does this mean, and how do I fix it? A: A negative R² on held-out data indicates that your model’s predictions are worse than simply using the mean of the target variable for prediction. This is a clear sign of severe overfitting or a fundamental mismatch between training and validation data distributions. Troubleshooting Steps: 1) Re-examine your feature selection; you may have overfitted to noise in the neuroimaging data. 2) Apply stronger regularization (e.g., increase L2 penalty in ridge regression). 3) Re-split your data ensuring the distributions of key covariates (e.g., age, disease stage) are matched between training and validation sets. 4) Consider simpler models.
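The definition makes the negative value concrete: R² = 1 - SS_res / SS_tot, where SS_tot is the squared error of always predicting the mean of the evaluated targets. This short sketch (an illustrative helper, following the same convention as scikit-learn's `r2_score`) shows that predictions worse than that constant baseline push R² below zero.

```python
import numpy as np


def r_squared(y_true, y_pred):
    """R² = 1 - SS_res / SS_tot, with the mean of the evaluated targets as baseline."""
    y = np.asarray(y_true, dtype=float)
    pred = np.asarray(y_pred, dtype=float)
    ss_res = np.sum((y - pred) ** 2)       # model's squared error
    ss_tot = np.sum((y - y.mean()) ** 2)   # squared error of predicting the mean
    return 1.0 - ss_res / ss_tot
```

For example, `r_squared([1, 2, 3, 4], [4, 3, 2, 1])` gives -3.0, while predicting the constant 2.5 for every case gives exactly 0.0.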

Q4: During k-fold cross-validation, my AUC shows high variance across folds (e.g., ranging from 0.75 to 0.89). How can I report a stable performance estimate? A: High fold-to-fold variance suggests that your sample size may be insufficient or that your data are highly heterogeneous (common in neuroimaging). Do not report only the mean. Protocol: 1) Use stratified k-fold to preserve class balance in each fold, and repeat it over several random partitions (repeated stratified k-fold) to average out partition effects. 2) Report the mean AUC together with the standard deviation or confidence interval across all folds. 3) Consider the .632+ bootstrap, which often yields a more stable, less variable performance estimate than standard k-fold CV for small, heterogeneous datasets.
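Steps 1 and 2 can be sketched with scikit-learn's `RepeatedStratifiedKFold`, assuming a logistic regression classifier and synthetic, imbalanced data from `make_classification`; the helper name is illustrative. The fold-wise standard deviation describes spread, but because folds share training data it is not a formal confidence interval.

```python
from sklearn.datasets import make_classification
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import RepeatedStratifiedKFold, cross_val_score


def repeated_cv_auc(model, X, y, n_splits=5, n_repeats=10, seed=0):
    """AUC over n_splits x n_repeats stratified folds: mean, SD, and all fold scores."""
    cv = RepeatedStratifiedKFold(n_splits=n_splits, n_repeats=n_repeats, random_state=seed)
    scores = cross_val_score(model, X, y, cv=cv, scoring="roc_auc")
    return scores.mean(), scores.std(ddof=1), scores


# Imbalanced synthetic cohort (about 70% / 30%)
X, y = make_classification(n_samples=100, n_features=20, weights=[0.7, 0.3], random_state=0)
mean_auc, sd_auc, fold_aucs = repeated_cv_auc(LogisticRegression(max_iter=1000), X, y)
print(f"AUC {mean_auc:.2f} (SD {sd_auc:.2f} over {fold_aucs.size} folds)")
```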

Q5: How do I choose between RMSE and Explained Variance when reporting regression results for clinical trial forecasting? A: You should report both, as they offer complementary insights and have different instabilities. RMSE is in the units of your target (e.g., clinical score change), making it interpretable for clinicians. Explained Variance (R²) is unitless and indicates the proportion of variance captured. However, R² can be unstable with small sample sizes or when the data variance is low. Present them together in a table with confidence intervals. For regulatory submissions, clarity on error magnitude (RMSE) is often critical.

Data Presentation: Metric Instabilities & Comparison

| Metric | Primary Use Case | Key Strengths | Key Instabilities & Vulnerabilities | Recommended Companion Metric |
|---|---|---|---|---|
| AUC-ROC | Binary classification performance, independent of threshold. | Robust to class imbalance (in evaluation). Threshold-invariant. | Sensitive to dataset shift (population, scanner). Can mask poor calibration. High variance with few positive cases. | Balanced Accuracy, Precision-Recall AUC, Calibration Curve/Intercept |
| RMSE | Regression model error in target units. | Easily interpretable. Penalizes large errors heavily. | Scale-dependent. Sensitive to outliers. Can appear stable while R² fluctuates with data variance. | Mean Absolute Error (MAE), Explained Variance (R²) |
| Explained Variance (R²) | Proportion of variance captured by regression model. | Scale-independent. Intuitive 0-1 scale (can go negative). | Highly sensitive to small sample size. Unstable if data variance is very low. Misleading if model is badly biased. | RMSE, Adjusted R² (for multiple predictors) |

Experimental Protocols for Robust Evaluation

Protocol 1: Bootstrapped Confidence Intervals for Any Metric

- Train your final model on the entire development dataset.

- Generate predictions for your hold-out test set (N samples).

- For b = 1 to B (B = 2000):

- Sample N instances from the test set with replacement.

- Recalculate the evaluation metric (AUC, RMSE, R²) on this bootstrap sample.

- The distribution of the B metric values forms the bootstrap sampling distribution.

- Report the percentile 95% CI (2.5th to 97.5th percentiles) alongside the point estimate.
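Protocol 1 can be sketched in Python as follows, using AUC as the example metric. AUC is computed from the Mann-Whitney rank statistic (via SciPy's `rankdata`, which averages tied ranks), so the block needs no machine-learning library; resamples containing only one class are skipped because AUC is undefined there. Function names are illustrative, and any metric with the signature `metric(y_true, y_pred)` can be passed in.

```python
import numpy as np
from scipy.stats import rankdata


def auc_score(y_true, scores):
    """ROC AUC via the Mann-Whitney U statistic (average ranks for ties)."""
    y = np.asarray(y_true).astype(bool)
    ranks = rankdata(scores)
    n_pos, n_neg = y.sum(), (~y).sum()
    return (ranks[y].sum() - n_pos * (n_pos + 1) / 2) / (n_pos * n_neg)


def bootstrap_ci(metric, y_true, y_pred, n_boot=2000, alpha=0.05, seed=0):
    """Point estimate and percentile (1 - alpha) bootstrap CI on a fixed test set."""
    y_true, y_pred = np.asarray(y_true), np.asarray(y_pred)
    rng = np.random.default_rng(seed)
    n = y_true.size
    draws = []
    while len(draws) < n_boot:
        idx = rng.integers(0, n, size=n)           # sample N cases with replacement
        if np.unique(y_true[idx]).size < 2:        # classification metrics need both classes
            continue
        draws.append(metric(y_true[idx], y_pred[idx]))
    lo, hi = np.percentile(draws, [100 * alpha / 2, 100 * (1 - alpha / 2)])
    return metric(y_true, y_pred), (lo, hi)
```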

Protocol 2: Nested Cross-Validation for Unbiased Performance Estimation

- Define an outer loop (e.g., 5-fold stratified) for performance estimation.

- For each outer fold:

- Hold out the outer test fold.

- Use the remaining data as the development set.

- Define an inner loop (e.g., 5-fold) on the development set to perform hyperparameter tuning/model selection.

- Train the final model with the chosen hyperparameters on the entire development set.

- Evaluate this model on the held-out outer test fold. Store the metric.

- The mean and standard deviation of the metrics from the 5 outer folds provide the final, approximately unbiased performance estimate and its variability.

Diagram: Workflow for Handling Metric Instability

Workflow for Assessing and Mitigating Metric Instability

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Neuroimaging Model Evaluation |
|---|---|
| ComBat Harmonization | Algorithm to remove scanner/site-specific batch effects from neuroimaging feature data, stabilizing metrics across multi-center studies. |
| Stratified K-Fold | Cross-validation method that preserves the percentage of samples for each class in every fold, providing more stable AUC estimates for imbalanced data. |
| .632+ Bootstrap | A resampling method for performance estimation that corrects the optimism of the plain bootstrap and can have lower variance than standard CV, making it attractive for small samples. |
| SHAP (SHapley Additive exPlanations) | Game-theoretic method to explain model predictions, helping diagnose instability by showing which features (e.g., brain regions) drive predictions inconsistently. |
| McNemar's / DeLong's Test | DeLong's test compares the correlated AUCs of two models evaluated on the same test set; McNemar's test compares their paired classification errors at a fixed threshold. Both help determine whether an observed difference exceeds sampling variability. |
| Calibration Plots (Brier Score) | Diagnostic to check whether predicted probabilities match observed event rates. Poor calibration means a high AUC can still be misleading; the Brier score summarizes overall probabilistic accuracy, including calibration. |
| Adjusted R² | Modifies R² by penalizing the addition of unnecessary predictors, providing a more stable estimate of explained variance in multiple regression. |

Neuroimaging Technical Support Center

Troubleshooting Guides & FAQs

Q1: Our group-level fMRI activation map is inconsistent across repeated analyses of the same dataset. What are the primary sources of this variability? A: This is a classic symptom of analytical variability. Key sources include:

- Preprocessing Pipeline Choices: Differences in software (FSL vs. SPM vs. AFNI), smoothing kernel size, motion correction algorithms, and denoising strategies (e.g., ICA-AROMA vs. standard regression).

- Statistical Thresholding: Using an arbitrary uncorrected threshold (e.g., p < 0.001), cluster-level family-wise error (FWE) correction, or threshold-free cluster enhancement (TFCE) can produce substantially different results.

- First-Level Modeling: Variability in hemodynamic response function (HRF) specification, inclusion of temporal derivatives, and handling of physiological noise.

Q2: When comparing two computational models of brain function, how do I determine if a difference in model evidence is statistically significant or just due to random noise? A: Direct model comparison is highly sensitive to noise. You must:

- Use Cross-Validation: Employ strict k-fold or leave-one-subject-out cross-validation on the model comparison metric (e.g., log-likelihood, accuracy).

- Perform Non-Parametric Testing: Use permutation tests (10,000+ permutations) to generate a null distribution of the model evidence difference. Calculate a p-value from this distribution.

- Report the Effect Size: Always report an effect-size or evidence measure (e.g., the mean difference with its confidence interval, a Bayes factor, or the protected exceedance probability) alongside the p-value. A statistically significant but tiny difference may not be theoretically meaningful.

Q3: Our structural MRI analysis shows poor reproducibility for subcortical volume estimates. What protocol steps are most critical for reliability? A: Subcortical segmentation is highly sensitive to technical factors. Adhere to this protocol:

Experimental Protocol: Reliable Subcortical Volumetry

- Image Acquisition: Use a standardized T1-weighted sequence (e.g., MPRAGE or BRAVO) with isotropic 1mm voxels. Ensure consistent head positioning and coil usage across sessions.

- Quality Control (QC): Implement manual or automated QC (e.g., MRIQC) to exclude images with excessive motion artifact, ringing, or inhomogeneity.

- Processing Tool & Version: Fix the software and version (e.g., FreeSurfer 7.3.2). Do not upgrade during a study.

- Parameter Freezing: Use identical recon-all command flags for all subjects. Do not change smoothing or intensity normalization parameters mid-study.

- Visual Inspection & Correction: Mandate visual inspection of segmentation boundaries for all subjects. Document and justify any manual edits using a predefined rubric.

- Statistical Correction: In final analysis, include estimated total intracranial volume (eTIV) as a nuisance covariate in your general linear model.

Q4: In pharmacological fMRI, how do we distinguish drug-induced neural variability from inherent physiological noise? A: This requires a controlled experimental design with precise signal partitioning.

Experimental Protocol: Pharmacological fMRI Signal Isolation

- Design: Use a randomized, double-blind, placebo-controlled, crossover design.

- Baseline Scan: Before drug administration, acquire a resting-state scan to establish individual baseline connectivity and hemodynamic profiles.

- Physiological Monitoring: Continuously record heart rate, respiratory rate, end-tidal CO2, and blood pressure during the task and resting-state scans. These must be used as regressors of no interest in the general linear model.

- Modeling: Construct a first-level model with separate regressors for: (a) task conditions (placebo), (b) task conditions (drug), (c) physiological noise time series, and (d) motion parameters. The critical contrast is (b - a).

- Kinetics: Time the task scan relative to the drug's established pharmacokinetic peak (e.g., Tmax).

Table 1: Impact of Analytical Choices on Group-Level fMRI Results

| Analytical Variable | Tested Range | Effect on Active Voxel Count | Spatial Overlap (Dice Coefficient) |
|---|---|---|---|
| Smoothing FWHM | 4mm vs. 8mm | +/- 15-40% | 0.65 - 0.78 |
| Motion Correction | Standard vs. ICA-AROMA | -20% to +5% | 0.70 - 0.85 |
| Statistical Threshold | p<0.001 unc. vs. FWE p<0.05 | -60% to -85% | 0.45 - 0.60 |
| HRF Model | Canonical vs. Canonical + Derivatives | +/- 10-25% | 0.80 - 0.90 |

Table 2: Reproducibility Metrics Across Major Neuroimaging Consortia

| Study / Consortium | Modality | Sample Size | Key Metric | Reproducibility Estimate (ICC) |
|---|---|---|---|---|
| Human Connectome Project | Resting-state fMRI | 1200 | Functional Network Connectivity | 0.75 - 0.90 (within-site) |
| ENIGMA Consortium | Structural MRI | 50,000+ | Subcortical Volume (Hippocampus) | 0.85 - 0.95 (multi-site, meta-analysis) |
| ABCD Study | Diffusion MRI | 11,000+ | Fractional Anisotropy (Corpus Callosum) | 0.65 - 0.80 (across scanners) |
| UK Biobank | Task fMRI (N-back) | 40,000 | Prefrontal Activation | 0.50 - 0.70 (single-site, test-retest) |

Visualizations

Diagram 1: Model Comparison Workflow for Robust Inference

Diagram 2: Sources of Variability in Neuroimaging Pipeline

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Tools for Managing Statistical Variability

| Item / Solution | Function / Purpose | Example / Implementation |
|---|---|---|
| Standardized Data Formats | Ensures compatibility and reduces preprocessing errors. | BIDS (Brain Imaging Data Structure) |
| Containerization Software | Freezes the complete software environment for exact reproducibility. | Docker, Singularity (e.g., for running fMRIPrep) |
| Preprocessing Pipelines | Provides a standardized, automated, and validated workflow. | fMRIPrep, QSIPrep, C-PAC |
| Model Comparison Metrics | Quantifies model fit while penalizing complexity for fair comparison. | Bayesian Information Criterion (BIC), Akaike Information Criterion (AIC), Protected Exceedance Probability |
| Power Analysis Tools | Determines the required sample size a priori to detect an effect reliably. | G*Power, SIMPLE (fMRI-specific), Bayesian Power Analysis |
| Data & Code Repositories | Enables open sharing, peer scrutiny, and direct replication attempts. | OpenNeuro, GitHub, Figshare, OSF (Open Science Framework) |
| Reporting Guidelines | Ensures comprehensive and transparent reporting of methods and results. | COBIDAS Report (Committee on Best Practices in Data Analysis and Sharing) |

Building Robust Comparisons: Essential Methods and Step-by-Step Application

Technical Support Center: Troubleshooting Guides & FAQs

Frequently Asked Questions

Q1: My bootstrapped confidence intervals for a model's accuracy are extremely wide. What does this mean, and how should I proceed? A1: Wide bootstrapped confidence intervals directly indicate high statistical variability in your model's performance estimate. This is a critical diagnostic. First, check your sample size (N); neuroimaging data is often high-dimensional but with low N, leading to high variance. Second, investigate heterogeneity in your data—are there outliers or subpopulations causing instability? Consider using a stratified bootstrap to maintain class proportions. Third, your model may be overfitting. Implement internal cross-validation within each bootstrap iteration to get a more realistic, penalized estimate of performance.

Q2: During k-fold cross-validation, my model performance varies drastically between folds. Is this normal? A2: Some variation between folds is expected, particularly when each test fold contains only a few subjects, because accuracy measured on a small test set is itself a noisy estimate. Drastic inter-fold variability, however, is a red flag signaling high variance in the estimation procedure. This often occurs due to data imbalance or non-representative splits. Solution: Move to stratified k-fold cross-validation so that each fold reflects the overall class distribution, and repeat it (repeated k-fold) to average over many random partitions. If variability persists, it suggests your model is highly sensitive to the specific training data, which is a hallmark of overfitting or an insufficiently regularized model. Consider simplifying the model or increasing regularization strength.

Q3: I ran a permutation test for group comparison, but the p-value is exactly zero. What happened? A3: A p-value of exactly zero means your observed test statistic was more extreme than every statistic in your permutation distribution (e.g., you used 1000 permutations and none matched or exceeded it) and the p-value was computed without counting the observed statistic itself. While this indicates a statistically significant result, reporting p=0 is incorrect: the test can only resolve p-values down to a floor set by the number of permutations. Fix: Compute p = (b + 1) / (N_permutations + 1), where b is the number of permuted statistics at least as extreme as the observed one; the minimum reportable p-value is then 1/(N_permutations + 1). For a publication-quality test, use at least 10,000 permutations. Also, ensure your permutation scheme correctly respects the null hypothesis (e.g., by permuting group labels while preserving data structure).

Q4: How do I choose between bootstrapping and cross-validation? A4: The choice depends on your primary goal. Use this decision guide:

| Goal | Recommended Method | Key Reason |
|---|---|---|
| Estimating model performance (accuracy, AUC) | Nested Cross-Validation | Provides nearly unbiased performance estimation, especially with hyperparameter tuning. |
| Assessing stability of performance estimate | Bootstrapping | Directly quantifies confidence intervals and variance of the performance metric. |
| Comparing two models on the same dataset | Corrected Resampled t-test (e.g., Nadeau & Bengio) or Permutation Test | Accounts for the non-independence of scores from resampling to control Type I error. |
| Testing a specific null hypothesis (e.g., no group difference) | Permutation Test | Provides a non-parametric test whose only key assumption is that observations are exchangeable under the null hypothesis. |
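The corrected resampled t-test named in the table inflates the variance of the per-split score differences by a factor that accounts for overlapping training sets. A minimal sketch, assuming you already have J per-split differences (model A score minus model B score) and fixed training/test sizes; `corrected_resampled_ttest` is a hypothetical helper name, and SciPy is assumed to be installed:

```python
import numpy as np
from scipy import stats

def corrected_resampled_ttest(diffs, n_train, n_test):
    """Nadeau & Bengio (2003) corrected resampled t-test.

    diffs: per-split differences in score (model A minus model B) from J
    train/test splits. Returns (t statistic, two-sided p-value) with J - 1 df.
    """
    d = np.asarray(diffs, dtype=float)
    j = d.size
    var = d.var(ddof=1)
    # The n_test / n_train term accounts for correlation between splits
    # caused by overlapping training sets; the naive test omits it.
    t_stat = d.mean() / np.sqrt((1.0 / j + n_test / n_train) * var)
    p = 2.0 * stats.t.sf(abs(t_stat), df=j - 1)
    return t_stat, p

# Example: 10 x repeated 10-fold CV on N = 100 gives J = 100 splits (90 train / 10 test)
rng = np.random.default_rng(2)
diffs = rng.normal(loc=0.01, scale=0.05, size=100)
t_c, p_c = corrected_resampled_ttest(diffs, n_train=90, n_test=10)
t_naive = diffs.mean() / (diffs.std(ddof=1) / np.sqrt(diffs.size))
print(f"corrected t = {t_c:.2f} (p = {p_c:.3f}); naive t = {t_naive:.2f}")
```

Nadeau and Bengio derived the correction for random subsampling; applying it to repeated k-fold CV, as here, is common practice but remains an approximation.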

Q5: My permutation test is computationally prohibitive for my large neuroimaging dataset. Any shortcuts?

A5: Yes. For mass-univariate tests (voxel-wise or feature-wise), consider these optimizations: 1) Use an optimized, parallelized implementation, such as Nilearn's permuted_ols (which accepts an n_jobs argument) or FSL's randomise, and distribute permutations across cluster nodes. 2) Adopt a two-stage procedure: Perform an initial screening with fewer permutations (e.g., 1000) to identify potentially significant features, then run the full permutation count (e.g., 10,000) only on that subset. This shortcut suits feature-wise p-values; if you control the family-wise error rate with the maximum statistic, each permutation must still be evaluated over all features. 3) Approximate with a parametric distribution: Fit a Gamma or Generalized Pareto Distribution to the tail of your permutation distribution to extrapolate very small p-values.

Experimental Protocols

Protocol 1: Nested Cross-Validation for Unbiased Model Evaluation

- Purpose: To obtain a robust, low-bias estimate of model generalization error when hyperparameter tuning is required.

- Steps:

- Outer Loop: Split data into K folds (e.g., K=5 or 10). For each outer fold:

- Hold-out Set: Designate the current fold as the temporary test set.

- Inner Loop (Validation): On the remaining K-1 folds, perform another cross-validation (e.g., 5-fold) to tune hyperparameters (e.g., regularization strength, kernel parameters).

- Model Training: Train a fresh model on all K-1 outer training folds using the optimal hyperparameters found in the inner loop.

- Testing: Evaluate this model on the held-out outer test set. Store the performance score.

- Aggregation: After iterating through all K outer folds, aggregate the K performance scores (e.g., mean and standard deviation). This is your final performance estimate.
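The protocol above maps directly onto scikit-learn: a GridSearchCV object acts as the inner loop, and passing it to cross_val_score produces the outer loop. A minimal sketch, assuming scikit-learn is installed and using make_classification as a synthetic stand-in for a small-N, high-dimensional neuroimaging feature matrix; the grid of C values is illustrative:

```python
from sklearn.datasets import make_classification
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import GridSearchCV, StratifiedKFold, cross_val_score
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

# Synthetic stand-in: 100 subjects, 500 features, 10 of them informative
X, y = make_classification(n_samples=100, n_features=500, n_informative=10,
                           random_state=0)

# Scaling sits inside the pipeline so it is re-fit on every training split
pipe = make_pipeline(StandardScaler(), LogisticRegression(max_iter=5000))
param_grid = {"logisticregression__C": [0.001, 0.01, 0.1, 1.0]}

inner_cv = StratifiedKFold(n_splits=5, shuffle=True, random_state=1)
outer_cv = StratifiedKFold(n_splits=5, shuffle=True, random_state=2)

# Inner loop: tune C using only the outer training folds, then refit on all of them
search = GridSearchCV(pipe, param_grid, cv=inner_cv, scoring="balanced_accuracy")

# Outer loop: each held-out fold scores a model it never influenced
outer_scores = cross_val_score(search, X, y, cv=outer_cv, scoring="balanced_accuracy")
print(f"nested CV balanced accuracy: {outer_scores.mean():.2f} +/- {outer_scores.std():.2f}")
```

Because GridSearchCV refits the best configuration on all outer training folds by default, this corresponds to the "Model Training" step; the K outer scores are what the "Aggregation" step summarizes.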

Protocol 2: Bootstrapping Confidence Intervals for a Classification Metric

- Purpose: To estimate the confidence interval and sampling distribution of a model's performance metric (e.g., balanced accuracy).

- Steps:

- Bootstrap Sample: From your original dataset of size N, randomly draw N samples with replacement.

- Out-of-Bag (OOB) Test: The samples not selected form the OOB test set (~36.8% of data).

- Train & Test: Train your model on the bootstrap sample. Evaluate it on the OOB set. Record the metric (e.g., AUC).

- Repeat: Perform steps 1-3 a large number of times (B), typically B >= 2000.

- Construct CI: Sort the B metric values. For a 95% CI, take the 2.5th and 97.5th percentiles of this distribution. This is the percentile bootstrap CI.
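The steps above can be sketched in plain NumPy. To keep the example self-contained, a toy nearest-centroid classifier stands in for your real model (the helper names are hypothetical), and resamples in which the bootstrap or out-of-bag set lacks a class are skipped, since balanced accuracy is undefined there:

```python
import numpy as np

def nearest_centroid_fit_predict(X_train, y_train, X_test):
    """Toy stand-in classifier: assign each test sample to the closest class mean."""
    classes = np.unique(y_train)
    centroids = np.stack([X_train[y_train == c].mean(axis=0) for c in classes])
    dists = ((X_test[:, None, :] - centroids[None, :, :]) ** 2).sum(axis=2)
    return classes[dists.argmin(axis=1)]

def balanced_accuracy(y_true, y_pred):
    """Mean of per-class recall."""
    return np.mean([np.mean(y_pred[y_true == c] == c) for c in np.unique(y_true)])

def oob_bootstrap_ci(X, y, n_boot=2000, alpha=0.05, seed=0):
    rng = np.random.default_rng(seed)
    n = len(y)
    scores = []
    for _ in range(n_boot):
        idx = rng.integers(0, n, size=n)        # draw N samples with replacement
        oob = np.setdiff1d(np.arange(n), idx)   # samples never drawn (~36.8%)
        if len(np.unique(y[idx])) < 2 or len(np.unique(y[oob])) < 2:
            continue                            # skip degenerate resamples
        y_hat = nearest_centroid_fit_predict(X[idx], y[idx], X[oob])
        scores.append(balanced_accuracy(y[oob], y_hat))
    scores = np.array(scores)
    lo, hi = np.percentile(scores, [100 * alpha / 2, 100 * (1 - alpha / 2)])
    return scores, (lo, hi)

# Synthetic example: two classes of 50 subjects, class 1 shifted by 0.5 SD
rng = np.random.default_rng(3)
y = np.repeat([0, 1], 50)
X = rng.normal(size=(100, 20)) + 0.5 * y[:, None]
scores, (lo, hi) = oob_bootstrap_ci(X, y, n_boot=2000)
print(f"OOB balanced accuracy, 95% percentile CI: [{lo:.2f}, {hi:.2f}]")
```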

Protocol 3: Permutation Test for Model Comparison

- Purpose: To test the null hypothesis that two models (A and B) have identical performance on a specific dataset.

- Steps:

- Observed Difference: Using a robust resampling method (e.g., 5x2 CV or repeated k-fold CV), calculate the performance difference between Model A and Model B. This is your observed statistic, Dobs.

- Permutation: For many iterations (e.g., 5000):

- Randomly shuffle the assignment of model prediction vectors (or residuals) between Model A and Model B across all resampling folds.

- Recalculate the performance difference under this null condition. Store this value.

- Build Null Distribution: The collection of permuted differences forms the empirical null distribution.

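- Calculate p-value: p = (1 + number of permuted differences with |D_perm| >= |Dobs|) / (1 + total permutations). This is the two-tailed version; for a one-tailed test, compare signed differences instead of absolute values. Counting the observed statistic (the +1 terms) keeps the p-value strictly above zero.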
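A minimal NumPy sketch of this protocol, assuming you have pooled 0/1 correctness vectors for both models on the same held-out samples and that each sample appears once (e.g., one pass of k-fold CV). Swapping the two models' predictions for a sample flips the sign of that sample's difference, so the shuffle is implemented as random sign flips; `paired_permutation_test` is a hypothetical name.

```python
import numpy as np

def paired_permutation_test(correct_a, correct_b, n_perm=5000, seed=0):
    """Two-sided permutation test for a difference in accuracy between two models.

    correct_a, correct_b: 0/1 arrays marking whether each held-out prediction
    of model A / model B was correct (same samples, same order).
    """
    rng = np.random.default_rng(seed)
    diff = np.asarray(correct_a, float) - np.asarray(correct_b, float)
    d_obs = diff.mean()
    # Randomly swapping A and B for a sample flips the sign of its difference
    signs = rng.choice([-1.0, 1.0], size=(n_perm, diff.size))
    d_null = (signs * diff).mean(axis=1)
    # +1 counts the observed statistic, so p >= 1 / (n_perm + 1)
    p = (np.sum(np.abs(d_null) >= abs(d_obs)) + 1) / (n_perm + 1)
    return d_obs, p

# Synthetic example: model A correct on ~85% of samples, model B on ~75%
rng = np.random.default_rng(7)
correct_a = rng.random(200) < 0.85
correct_b = rng.random(200) < 0.75
d_obs, p = paired_permutation_test(correct_a, correct_b)
print(f"accuracy difference = {d_obs:.3f}, p = {p:.4f}")
```

If predictions come from repeated CV, the same subject appears several times; in that case the sign flips should be applied per subject rather than per prediction to keep the null exchangeable.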

Table 1: Impact of Resampling Strategy on Performance Estimate Variability (simulated data from a neuroimaging classification task; N=100, p=10,000 features).

| Resampling Method | Reported Accuracy (Mean ± SD) | 95% CI | Computation Time (s) |
|---|---|---|---|
| Hold-Out (70/30 split) | 0.85 ± 0.05 | 0.84 - 0.86 | <1 |
| 5-Fold CV | 0.82 ± 0.04 | 0.80 - 0.84 | 15 |
| 10-Fold CV | 0.83 ± 0.03 | 0.82 - 0.84 | 30 |
| 0.632 Bootstrap | 0.83 ± 0.02 | 0.82 - 0.85 | 120 |
| Nested 5x5 CV | 0.81 ± 0.02 | 0.80 - 0.82 | 300 |

Table 2: Type I Error Control in Model Comparison Tests (Monte Carlo simulation comparing two identical models, so the null is true; target alpha = 0.05).

| Statistical Test | Estimated Type I Error Rate | Notes |
|---|---|---|
| Standard paired t-test on CV scores | 0.29 | Severely inflated due to score non-independence. |
| Corrected Resampled t-test | 0.052 | Properly controls error (Nadeau & Bengio, 2003). |
| Permutation Test (5000 perms) | 0.049 | Non-parametric, excellent control. |
| McNemar's Test | 0.051 | Valid only for a single, independent test set. |

Visualizations

Title: Nested Cross-Validation Workflow

Title: Permutation Test Logic for Model Comparison

The Scientist's Toolkit: Research Reagent Solutions

| Tool/Reagent | Function in Resampling & Variability Analysis |
|---|---|
| Scikit-learn (Python) | Core library providing KFold, StratifiedKFold, cross_val_score, and sklearn.utils.resample (for drawing bootstrap samples). Essential for protocol automation. |
| Nilearn / BrainIAK | Neuroimaging-specific Python toolkits. Provide functions for permutation testing on brain maps and cluster correction, designed to work with NIfTI data. |
| DABEST (Python/R) | "Data Analysis with Bootstrap-coupled ESTimation". Simplifies the generation and visualization of bootstrap confidence intervals for effect sizes. |
| MLxtend (Python) | Includes paired_ttest_5x2cv and combined_ftest_5x2cv for comparing models after resampling. Its paired_ttest_resampled is the uncorrected resampled t-test, which inflates Type I error. |
| Parallel Computing Cluster (SLURM/SGE) | Critical for computationally intensive resampling (e.g., 10,000 permutations on large voxel arrays). Enables job array submission. |
| Custom Seed Manager | A lab-built script to ensure perfect reproducibility of random number generation across all resampling splits and permutations. |
| Results Cache Database | (e.g., SQLite) To store intermediate results from lengthy resampling experiments, allowing recovery from interruption and result aggregation. |

Frequently Asked Questions (FAQs) & Troubleshooting

Q1: My Bayes Factor (BF) calculation is returning extremely large or infinite values. What could be the cause? A: This is often due to model misspecification or improper priors. One model may be unrealistically penalized. First, check that your priors are proper (integrate to 1) and are not too diffuse on the scale of your data. Use posterior predictive checks (PPCs) to see if either model is generating unrealistic data. Consider using stabilized computations like the log-BF or switching to bridge sampling for more robust marginal likelihood estimation.

Q2: During Posterior Predictive Checks, my model's generated data looks nothing like my observed neuroimaging data. How should I proceed? A: This indicates a fundamental failure of your model to capture the data-generating process. Do not proceed to BF comparison. Troubleshoot by: 1) Simplifying your model and checking basic fit. 2) Examining which specific summary statistics (e.g., spatial correlation, variance) are mismatched. 3) Re-evaluating your likelihood function and whether it accounts for key noise properties (e.g., autocorrelation in fMRI time series).

Q3: How do I choose priors for my competing neuroimaging models to ensure a fair comparison? A: Prior choice is critical. Avoid non-informative/default priors for model comparison. Use: 1) Empirical Priors: Inform priors using previous studies or a separate cohort. 2) Pseudo-Priors: These apply only to product-space samplers (e.g., Carlin & Chib); they are not part of either model and do not change the BF, but tuning them during an initial MCMC run for the parameters unique to one model improves sampling efficiency. 3) Domain Expertise: Constrain parameter ranges to physiologically or psychologically plausible values. Always conduct a prior sensitivity analysis.

Q4: I get different Bayes Factor values when using different sampling algorithms or software. Which result should I trust?

A: This signals convergence or numerical instability. 1) Verify Convergence: Run multiple chains with different starting points for each model and confirm R-hat ≈ 1.0 for all key parameters. 2) Use Robust Methods: Prefer methods like bridge sampling over simple harmonic mean estimators for marginal likelihood. 3) Benchmark: Test your algorithms on simple simulated data where the true BF is known. Consistency across multiple established packages (e.g., brms, RStan, PyMC) increases confidence.

Q5: How can I incorporate hierarchical structure (e.g., multiple subjects, sessions) into my Bayesian model comparison? A: Build the hierarchy directly into the models being compared. For example, compare a model with pooled subject parameters versus one with partially pooled (hierarchical) parameters. The BF will then directly quantify the evidence for or against the need for hierarchical structure. Ensure the BF is computed on the group-level marginal likelihood, integrating out all subject-specific parameters.

Key Experimental Protocols

Protocol 1: Calculating & Interpreting Bayes Factors for fMRI GLM Comparison

- Model Specification: Define two competing General Linear Models (GLMs). E.g., Model A: one task regressor; Model B: two separable task regressors.

- Prior Definition: Set justified, proper priors for beta coefficients and noise precision. Use weakly-informative normal priors for betas (e.g., N(0,1) on z-scored data) and gamma for precision.

- MCMC Sampling: Run a sufficient number of iterations (e.g., 4 chains, 10,000 iterations each) for each model, ensuring convergence diagnostics are passed.

- Marginal Likelihood Estimation: Compute the log marginal likelihood for each model using the bridge sampling algorithm.

- BF Calculation: Compute BF₁₂ = exp(logML₁ - logML₂). Report the value using standard interpretation scales (e.g., BF > 10: Strong evidence for M1).
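A minimal sketch of the BF calculation step, assuming the log marginal likelihoods have already been estimated (e.g., by bridge sampling). It exponentiates only the difference of the logs and maps the result onto the thresholds of Table 1, applied symmetrically for M2; the helper names are hypothetical. For very large log differences (above roughly 709), math.exp overflows, which is one reason to report log(BF) directly.

```python
import math

# Thresholds from the interpretation scale in Table 1, applied symmetrically to M2
SCALE = [(100, "decisive"), (30, "very strong"), (10, "strong"),
         (3, "moderate"), (1, "anecdotal")]

def bayes_factor(log_ml_1: float, log_ml_2: float) -> float:
    """BF12 from log marginal likelihoods; only the difference is exponentiated."""
    return math.exp(log_ml_1 - log_ml_2)

def describe(bf_12: float) -> str:
    if bf_12 == 1:
        return "no evidence"
    favoured, bf = ("M1", bf_12) if bf_12 > 1 else ("M2", 1 / bf_12)
    for threshold, label in SCALE:
        if bf > threshold:
            return f"{label} evidence for {favoured}"
    raise ValueError("bf_12 must be a positive, finite number")

# Log marginal likelihoods from the baseline row of Table 2
bf = bayes_factor(-1250.3, -1255.7)
print(round(bf), describe(bf))  # 221 decisive evidence for M1
```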

Protocol 2: Implementing Comprehensive Posterior Predictive Checks

- Model Fitting: Fit your target model to the observed neuroimaging data (e.g., a connectivity matrix or voxel time series).

- Replicated Data Simulation: From the posterior parameter distributions, draw N (e.g., 1000) new parameter sets. For each set, simulate a new "replicated" dataset of the same size as the observed data.

- Discrepancy Selection: Define a set of discrepancy functions (test quantities) T(y, θ). These can be:

- Pointwise: Voxel-wise variance.

- Summary: Global spatial correlation, cluster extent statistics.

- Comparison: For each discrepancy, compute its distribution over the N replicated datasets. Plot the observed value T(y_obs, θ) against this predictive distribution. A posterior predictive (Bayesian) p-value can be computed as p = Pr(T(y_rep, θ) ≥ T(y_obs, θ) | y_obs). Values near 0.5 indicate good fit; values near 0 or 1 indicate misfit.
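The protocol can be illustrated end to end with a deliberately misspecified model. In the sketch below, the "observed" data are an assumed AR(1) series mimicking autocorrelated fMRI noise, while the model under test is i.i.d. Normal with the standard noninformative prior, whose posterior can be sampled exactly (so no MCMC is needed for the sketch). A variance discrepancy reveals no problem, whereas a lag-1 autocorrelation discrepancy exposes the misfit.

```python
import numpy as np

rng = np.random.default_rng(4)

# Assumed example data: AR(1) series, autocorrelated like fMRI noise
n, phi = 200, 0.6
y_obs = np.zeros(n)
for t in range(1, n):
    y_obs[t] = phi * y_obs[t - 1] + rng.normal()

# Model under test: i.i.d. Normal(mu, sigma^2) with the noninformative prior;
# exact posterior draws: sigma^2 ~ scaled inverse-chi^2, mu | sigma^2 ~ Normal
n_draws = 1000
ybar, s2 = y_obs.mean(), y_obs.var(ddof=1)
sigma2 = (n - 1) * s2 / rng.chisquare(n - 1, size=n_draws)
mu = rng.normal(ybar, np.sqrt(sigma2 / n))

# One replicated dataset per posterior draw, same size as the observed data
y_rep = rng.normal(mu[:, None], np.sqrt(sigma2)[:, None], size=(n_draws, n))

def lag1_autocorr(y):
    yc = y - y.mean()
    return np.sum(yc[1:] * yc[:-1]) / np.sum(yc ** 2)

discrepancies = {"variance": np.var, "lag-1 autocorrelation": lag1_autocorr}
p_values = {}
for name, T in discrepancies.items():
    t_obs = T(y_obs)
    t_rep = np.array([T(row) for row in y_rep])
    p_values[name] = np.mean(t_rep >= t_obs)  # posterior predictive p-value
    print(f"{name}: T_obs = {t_obs:.2f}, p = {p_values[name]:.3f}")
```

The example also shows why several discrepancies are needed: a model can reproduce one summary (here, the variance) while failing badly on another.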

Protocol 3: Prior Sensitivity Analysis for Model Comparison

- Define Prior Scenarios: Create 3-5 alternative prior specifications for the key parameters in your competing models. Vary the mean and scale (e.g., half-normal scale of 1 vs. 5).

- Re-compute Under Each Scenario: Re-run the full model estimation and BF calculation for each prior set.

- Tabulate Results: Create a table showing the BF and key posterior estimates (see Table 2) under each scenario.

- Interpret Stability: If the qualitative conclusion (e.g., BF > 10, strong evidence for Model A) holds across plausible prior sets, your result is robust. If it flips, report the dependence explicitly.

Data Presentation

Table 1: Interpretation Scale for Bayes Factors (BF₁₂)

| BF₁₂ Value | Log(BF₁₂) | Evidence for Model 1 (M1) |
|---|---|---|
| > 100 | > 4.6 | Decisive |
| 30 – 100 | 3.4 – 4.6 | Very Strong |
| 10 – 30 | 2.3 – 3.4 | Strong |
| 3 – 10 | 1.1 – 2.3 | Moderate |
| 1 – 3 | 0 – 1.1 | Anecdotal |
| 1 | 0 | No evidence |
| 1/3 – 1 | -1.1 – 0 | Anecdotal for Model 2 (M2) |
| 1/10 – 1/3 | -2.3 – -1.1 | Moderate for M2 |
| < 1/10 | < -2.3 | Strong for M2 |

Table 2: Example Results from Prior Sensitivity Analysis (fMRI Study)

| Prior Scenario | Log Marginal Likelihood (M1) | Log Marginal Likelihood (M2) | BF (M1/M2) | Key Posterior Mean (M1) |
|---|---|---|---|---|
| Baseline (Weakly-Informative) | -1250.3 | -1255.7 | ~220 | 0.62 [0.45, 0.78] |
| More Informative | -1249.8 | -1252.1 | ~10 | 0.59 [0.48, 0.70] |
| More Diffuse | -1252.9 | -1254.0 | ~3 | 0.65 [0.38, 0.91] |

Note: Brackets denote 95% credible interval.

Visualizations

Title: Bayesian Model Comparison & PPC Workflow

Title: Posterior Predictive Check Process

The Scientist's Toolkit: Research Reagent Solutions

| Item/Category | Function & Rationale |
|---|---|
| Probabilistic Programming Language (e.g., Stan, PyMC) | Enables flexible specification of complex Bayesian models (e.g., hierarchical GLMs) and performs robust Hamiltonian Monte Carlo (HMC) sampling. |
| Bridge Sampling R/Python Package (e.g., bridgesampling, bayesfactor) | Specialized tool for accurately estimating marginal likelihoods, which is essential for stable BF computation, especially for hierarchical models. |
| Neuroimaging Data Container (e.g., BIDS format) | Standardized data structure ensures reproducibility and allows for clear mapping between data, models, and computed outputs. |
| High-Performance Computing (HPC) Cluster or Cloud GPU | MCMC sampling for high-dimensional neuroimaging models is computationally intensive, requiring parallel chains and significant memory. |
| Visualization Suite (e.g., ArviZ, bayesplot) | Provides standardized functions for plotting posterior distributions, trace plots, MCMC diagnostics, and results of posterior predictive checks. |

Implementing Nested vs. Non-Nested Model Testing Frameworks

Technical Support Center

Troubleshooting Guides & FAQs

Q1: When I compare two models, my likelihood ratio test (LRT) returns a negative chi-square statistic or an invalid p-value. What is happening and how do I fix it?

A: This typically occurs when attempting to use a standard LRT to compare non-nested models, which violates the test's assumptions. The LRT is only valid for nested models (where one model is a special case of the other). In neuroimaging, this often happens when comparing models with different, non-hierarchical regressors or different covariance structures.

- Solution: First, verify that your models are truly nested. If they are not, you must use a non-nested model comparison framework. Implement an information criterion approach (AIC, BIC) or a cross-validated log-likelihood comparison. For a formal test, consider the Vuong test for non-nested models.
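For two Gaussian linear models fit to the same data, the Vuong test compares per-observation log-likelihoods. A minimal sketch, assuming i.i.d. Gaussian errors and ML variance estimates; the helper names and simulated regressors are illustrative, and the optional degrees-of-freedom correction from Vuong (1989) is omitted. fMRI time series are autocorrelated, so in practice the data would need prewhitening before treating observations as independent.

```python
import math
import numpy as np

def gaussian_pointwise_loglik(X, y):
    """OLS fit; per-observation log-likelihood at the ML estimates."""
    beta, *_ = np.linalg.lstsq(X, y, rcond=None)
    resid = y - X @ beta
    sigma2 = np.mean(resid ** 2)  # ML (not unbiased) variance estimate
    return -0.5 * np.log(2 * np.pi * sigma2) - resid ** 2 / (2 * sigma2)

def vuong_test(X_a, X_b, y):
    """Vuong test for strictly non-nested models; z > 0 favours model A."""
    m = gaussian_pointwise_loglik(X_a, y) - gaussian_pointwise_loglik(X_b, y)
    z = math.sqrt(m.size) * m.mean() / m.std(ddof=1)
    p = math.erfc(abs(z) / math.sqrt(2))  # two-sided standard-normal p-value
    return z, p

# Simulated example: the signal depends on P1 and P3, not on P2
rng = np.random.default_rng(5)
n = 300
p1, p2, p3 = rng.normal(size=(3, n))
y = 1.0 * p1 + 0.3 * p3 + rng.normal(size=n)
ones = np.ones(n)
X_a = np.column_stack([ones, p1, p3])  # model A: [P1, P3]
X_b = np.column_stack([ones, p2, p3])  # model B: [P2, P3]
z, p = vuong_test(X_a, X_b, y)
print(f"Vuong z = {z:.2f}, p = {p:.2g}")
```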

Q2: How do I determine if my neuroimaging models are nested or non-nested for the purpose of correct statistical testing?

A: Model B is nested within Model A if you can constrain parameters in A to obtain B. In practice:

- Check parameter sets: Are all parameters in Model B a subset of Model A's parameters?

- In GLM for fMRI, a model with predictors [P1, P2] is nested within a model with [P1, P2, P3]. However, a model with [P1, P3] is not nested within [P2, P3].

- Protocol: List all free parameters (regressors, covariance terms) for each model. If you can fix/remove parameters from the larger model to get the smaller one without changing the model structure, they are nested.

- Diagnostic Diagram: See Figure 1: Model Nesting Decision Tree.
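For linear models that share the same noise model (e.g., GLMs with identical error covariance), the check can be made mechanical: B is nested in A when every column of B's design matrix lies in the column space of A's. A minimal sketch using matrix ranks; `is_nested` is a hypothetical helper, and it does not check differences in covariance structure, which must be compared separately.

```python
import numpy as np

def is_nested(X_small, X_large) -> bool:
    """True if the column space of X_small is contained in that of X_large."""
    rank_large = np.linalg.matrix_rank(X_large)
    rank_joint = np.linalg.matrix_rank(np.column_stack([X_large, X_small]))
    return bool(rank_joint == rank_large)

rng = np.random.default_rng(8)
ones = np.ones(50)
P1, P2, P3 = rng.normal(size=(3, 50))
print(is_nested(np.column_stack([ones, P1, P2]), np.column_stack([ones, P1, P2, P3])))  # True
print(is_nested(np.column_stack([ones, P1, P3]), np.column_stack([ones, P2, P3])))      # False
print(is_nested(np.column_stack([ones, P1 + P2]), np.column_stack([ones, P1, P2])))     # True
```

The third case shows why the column-space view is useful: a model using the combined regressor P1 + P2 is nested in [P1, P2] even though neither of its parameters appears by name in the larger model.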

Q3: My model comparisons show high variability across bootstrap samples or resampled datasets. Which comparison method is more robust to this statistical variability?

A: Non-nested comparisons, particularly those based on information criteria or cross-validation, are often more sensitive to variability because they do not rely on a constrained null hypothesis.

- Solution Protocol: To improve robustness:

- Increase Sample Size: Power analysis is critical. See Table 1 for minimum sample guidelines.

- Use Adjusted Metrics: Prefer AICc (corrected AIC) or BIC over raw log-likelihood.

- Employ Aggregated Comparison: Perform the comparison across many bootstrap iterations and report the distribution of the outcome (e.g., % of iterations where AIC favors Model A).

- Bayesian Model Averaging: Consider BMA to account for uncertainty in model selection itself.

Q4: In the context of drug development, we need to compare a placebo model to a treatment model with potentially different active networks. Does this require a nested framework?

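A: Not necessarily. If the treatment model simply adds a drug-related regressor to all of the placebo model's regressors, the placebo model is nested within it. If instead the treatment may engage entirely novel neural pathways (additional brain regions or functional connections) that are modeled with different regressors or connectivity parameters rather than extra terms on top of the placebo model, the two models are non-nested.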

- Experimental Protocol:

- Specify two GLMs: Placebo Model (PM) and Treatment Model (TM).

- TM includes all PM regressors plus a distinct "Drug Effect" regressor (e.g., time-locked to treatment). These are nested. Use LRT.

- If TM includes a different connectivity parameter or a regressor based on a different psychological theory, models may be non-nested. Use AIC/BIC or the Vuong test.

Data Presentation

Table 1: Comparison of Nested vs. Non-Nested Testing Frameworks

| Feature | Nested Model Testing (e.g., LRT) | Non-Nested Model Testing (e.g., AIC, Vuong) |
|---|---|---|
| Core Requirement | One model is a restricted subset of the other. | Models are distinct; not subsets. |
| Primary Test Statistic | Likelihood Ratio (χ² distributed). | Difference in AIC/BIC or cross-validated likelihood. |
| Hypothesis | Tests if restriction significantly worsens fit. | Tests which model has better predictive adequacy. |
| Handling Variability | Asymptotic theory provides p-values, but sensitive to misspecification. | Often requires bootstrapping or heuristics (ΔAIC > 2, ΔBIC > 6) for confidence. |
| Typical Neuroimaging Use | Comparing models with vs. without a specific regressor/covariate. | Comparing different cognitive theories or network structures. |
| Recommended Min Sample | ~50-100 subjects for stable χ² approximation in fMRI. | >100 subjects for stable ΔAIC/ΔBIC rankings. |

Table 2: Common Information Criteria for Non-Nested Comparison

| Criterion | Formula | Penalty for Complexity | Key Consideration |
|---|---|---|---|
| Akaike (AIC) | -2LL + 2k | Linear in parameters (k). | Prone to overfitting with many parameters. Good for prediction. |
| Corrected AIC (AICc) | -2LL + 2k + (2k(k+1))/(n-k-1) | Stronger penalty for small n. | Essential when n/k < 40. Use by default in neuroimaging. |
| Bayesian (BIC) | -2LL + k*log(n) | Logarithmic in sample size (n). | Favors simpler models more than AIC. Good for identifying true model. |

Experimental Protocols

Protocol 1: Conducting a Nested Model Comparison (LRT) for fMRI GLM

- Model Specification: Define Full Model (FM) and Restricted Model (RM). RM must be obtainable by setting one or more parameters in FM to zero.

- Estimation: Fit both models to your neuroimaging data (e.g., per voxel or ROI), obtaining the maximum log-likelihood (LL) for each: LLFM and LLRM.

- Test Statistic Calculation: Compute LR = -2 * (LLRM - LLFM). Under the null, LR ~ χ² with degrees of freedom (df) equal to the difference in the number of free parameters.

- Inference: Compare the computed LR to the χ² distribution. A significant p-value indicates the FM provides a significantly better fit.
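A minimal sketch of Protocol 1 for one voxel or ROI, assuming i.i.d. Gaussian errors and ordinary least squares (ML) fits; the simulated regressors are illustrative and SciPy is assumed to be installed. For real fMRI time series, prewhiten for autocorrelation first, and note that mixed models fit by REML must not be compared this way when their fixed effects differ.

```python
import numpy as np
from scipy import stats

def gaussian_max_loglik(X, y):
    """Maximized log-likelihood of an OLS model with i.i.d. Gaussian errors."""
    beta, *_ = np.linalg.lstsq(X, y, rcond=None)
    rss = np.sum((y - X @ beta) ** 2)
    n = y.size
    return -0.5 * n * (np.log(2 * np.pi * rss / n) + 1)

def likelihood_ratio_test(X_rm, X_fm, y):
    ll_rm = gaussian_max_loglik(X_rm, y)
    ll_fm = gaussian_max_loglik(X_fm, y)
    lr = -2.0 * (ll_rm - ll_fm)
    # df = number of extra free regression parameters in the full model
    df = np.linalg.matrix_rank(X_fm) - np.linalg.matrix_rank(X_rm)
    return lr, df, stats.chi2.sf(lr, df)

# Simulated ROI signal with a modest P3 effect
rng = np.random.default_rng(9)
n = 120
ones = np.ones(n)
P1, P2, P3 = rng.normal(size=(3, n))
y = 0.8 * P1 + 0.5 * P2 + 0.3 * P3 + rng.normal(size=n)
X_rm = np.column_stack([ones, P1, P2])      # restricted model: beta_P3 = 0
X_fm = np.column_stack([ones, P1, P2, P3])  # full model
lr, df, p = likelihood_ratio_test(X_rm, X_fm, y)
print(f"LR = {lr:.2f}, df = {df}, p = {p:.4f}")
```

For Gaussian models the statistic reduces to n·log(RSS_RM / RSS_FM), so a negative value can only arise if the "restricted" model is not actually nested or a fit failed.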

Protocol 2: Conducting a Non-Nested Comparison using AICc & Bootstrapping

- Model Specification: Define two distinct models, A and B. They may contain different regressors or covariance structures.

- Initial Estimation & Comparison: Fit both models to the full dataset. Calculate AICc for each: AICc = -2LL + 2k + (2k(k+1))/(n-k-1). Compute ΔAICc = AICcB - AICcA.

- Bootstrap for Variability: a. Generate B (e.g., 1000) bootstrap samples by resampling subjects with replacement. b. For each sample, fit both models and compute ΔAICc. c. Create a distribution of bootstrap ΔAICc.

- Inference: Report the mean ΔAICc and its 95% confidence interval from the bootstrap. If the confidence interval excludes 0, you have evidence favoring one model. Also report the probability that Model A is preferred over B across bootstrap samples.
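A minimal sketch of Protocol 2, assuming one row per subject (e.g., a regional summary measure) and Gaussian OLS models as stand-ins for your actual models; k counts the regression coefficients plus the error variance. The covariate names in the example are hypothetical.

```python
import numpy as np

def aicc(X, y):
    """AICc of an OLS model with Gaussian errors; k includes the error variance."""
    n = y.size
    beta, *_ = np.linalg.lstsq(X, y, rcond=None)
    rss = np.sum((y - X @ beta) ** 2)
    ll = -0.5 * n * (np.log(2 * np.pi * rss / n) + 1)
    k = X.shape[1] + 1
    return -2 * ll + 2 * k + (2 * k * (k + 1)) / (n - k - 1)

def bootstrap_delta_aicc(X_a, X_b, y, n_boot=1000, seed=0):
    """Bootstrap distribution of dAICc = AICc_B - AICc_A (> 0 favours model A)."""
    rng = np.random.default_rng(seed)
    n = y.size
    deltas = np.empty(n_boot)
    for b in range(n_boot):
        idx = rng.integers(0, n, size=n)  # resample subjects with replacement
        deltas[b] = aicc(X_b[idx], y[idx]) - aicc(X_a[idx], y[idx])
    return deltas

# Simulated example with 80 subjects and two non-nested covariate sets
rng = np.random.default_rng(10)
n = 80
ones = np.ones(n)
age, motion, symptom = rng.normal(size=(3, n))
y = 0.6 * age + 0.2 * symptom + rng.normal(size=n)
X_a = np.column_stack([ones, age, symptom])  # model A
X_b = np.column_stack([ones, age, motion])   # model B

delta_full = aicc(X_b, y) - aicc(X_a, y)
deltas = bootstrap_delta_aicc(X_a, X_b, y, n_boot=1000)
ci_lo, ci_hi = np.percentile(deltas, [2.5, 97.5])
prop_a = np.mean(deltas > 0)
print(f"dAICc = {delta_full:.2f}, 95% CI [{ci_lo:.2f}, {ci_hi:.2f}], "
      f"A preferred in {prop_a:.0%} of resamples")
```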

Visualizations

Figure 1: Model Nesting Decision Tree

Figure 2: Non-Nested Model Comparison Workflow

The Scientist's Toolkit

Table 3: Key Research Reagent Solutions for Model Comparison

| Item | Function in Experiment |
|---|---|
| Statistical Software (R/Python) | Primary environment for implementing GLMs, calculating likelihoods, and running LRT or information criterion functions (e.g., lmtest, statsmodels). |
| Neuroimaging Analysis Package (SPM, FSL, AFNI) | Used for first-level model fitting, generating per-voxel/ROI parameter estimates and residual sum of squares, which feed into likelihood calculations. |
| Bootstrapping Library (boot in R, sklearn.utils.resample in Python) | Essential for assessing the stability and variability of non-nested model comparison results across resampled datasets. |
| Information Criterion Calculator | Custom script or function to compute AIC, AICc, and BIC from model log-likelihoods, accounting for sample size and parameter count. |
| Visualization Tool (Graphviz, matplotlib) | Creates clear diagrams of model structures and comparison workflows to ensure correct nesting relationships are understood and communicated. |

Technical Support Center

Troubleshooting Guides & FAQs

Q1: After running ComBat, my harmonized data shows unexpectedly low variance for one site. What went wrong?

A: This is often caused by an incorrect specification of the batch variable: scans from one scanner may have been split across several batch labels (leaving very small batches), or scans from different scanners at a single site may have been pooled under one label. Verify your batch assignment vector matches the true scanner ID for each subject's scan. Re-run ComBat with the correct batch labels. Additionally, check for extreme outliers at that site pre-harmonization, as they can distort parameter estimation.

Q2: When using ComBat with an empirical Bayes (EB) prior, the model fails to converge. How can I resolve this? A: Non-convergence in the EB step typically indicates insufficient sample size per batch or extremely dissimilar distributions between batches.

- Solution 1: Switch to the non-parametric EB variant (e.g., parametric = FALSE in neuroCombat, or par.prior = FALSE in sva::ComBat), which uses a different estimation method.

- Solution 2: If you are using a custom implementation, initialize the location parameter from the mean of the entire dataset rather than from per-batch means.

- Solution 3: If your implementation exposes an iteration limit or convergence tolerance for the EB step, increase the limit (e.g., to 1000 iterations).

- Solution 4: As a diagnostic, run ComBat without the EB step (eb = FALSE) to see whether the issue lies in the prior estimation.

Q3: I am getting a "dimension mismatch" error when integrating ComBat-harmonized data into my machine learning pipeline. A: This is a common integration issue. ComBat typically outputs a matrix of harmonized features (e.g., voxels, ROIs), and your pipeline might expect a different data structure or orientation. In particular, neuroCombat (R and Python) expects features in rows and scans in columns, the transpose of the [n_subjects x n_features] layout most machine learning libraries use.

- Protocol: Always reshape the output back to match your original neuroimaging data format (e.g., NIfTI, CIFTI) using the inverse of your feature extraction step. Ensure subject order is preserved. The correct workflow is:

- Extract features from raw images into a [n_subjects x n_features] matrix.

- Apply ComBat to this matrix, transposing before and after if your implementation expects features in rows.

- Reshape each subject's harmonized feature vector back into its original image space or data structure for downstream analysis.

Q4: Does ComBat remove biologically relevant signal along with scanner artifacts? A: ComBat is designed to preserve biological variance related to specified model covariates (e.g., age, diagnosis). The risk of over-harmonization is real, particularly for biological variables that are omitted from the covariate matrix or are confounded with site.

- Troubleshooting Protocol: Conduct a positive-control (signal-preservation) analysis.

- Harmonize your data using a phenotype of interest (e.g., disease status) as a covariate.

- Perform a statistical test for that phenotype on the harmonized data.

- Compare the resulting statistical map or effect size to one generated from unharmonized data. A significant and sensible biological effect should remain post-harmonization. If it disappears, your model may be over-correcting. Re-evaluate your covariate matrix.

Q5: For my multi-center clinical trial, should I use ComBat, ComBat-GAM, or a different harmonization tool? A: The choice depends on your data structure and the nature of the confound.

- ComBat: Best for linear scanner effects when covariate effects are approximately linear.

- ComBat-GAM (Generalized Additive Model): Use if you suspect a non-linear relationship between a continuous covariate (e.g., age) and your imaging feature. It models that covariate effect non-linearly (shared across sites) while still treating site effects as location and scale shifts.

- Protocol for Decision:

- Visually inspect per-site plots of your primary imaging feature against key biological variables (e.g., age).

- If the growth curves are approximately linear and parallel but shifted between sites, use linear ComBat.

- If the curves are non-linear but share a similar shape across sites, use ComBat-GAM or a similar non-linear method. If the shapes themselves differ significantly by site, neither method removes that site-by-covariate interaction; investigate its source before harmonizing.

- Validate by checking the reduction in site-associated variance (see Table 1).

Table 1: Performance Comparison of Common Harmonization Methods

| Method | Key Principle | Preserves Biological Variance? | Reduces Inter-Site Variance* | Best For |
|---|---|---|---|---|
| ComBat (Linear) | Empirical Bayes, adjusts for mean & variance shift | Yes, when modeled as a covariate | 70-90% | Linear scanner effects, large sample sizes |
| ComBat-GAM | Models non-linear covariate trends (shared across sites) alongside site location/scale shifts | Yes, for non-linear effects | 65-85% | Non-linear age/trend effects across sites |
| Longitudinal ComBat | Incorporates within-subject change over time | Yes, for longitudinal signals | 75-88% | Multi-site longitudinal studies |
| CycleGAN | Deep learning, image-to-image translation | Requires careful validation | 80-95% (visual) | Extreme contrast differences, structural MRI |
| SHARM | Density matching of intensity histograms | Limited by model | 60-80% | DTI fractional anisotropy maps |

*Reported median reduction in site-associated variance in simulated and real-world neuroimaging studies, as per recent literature.

Experimental Protocols

Protocol 1: Standard ComBat Harmonization for Regional Volumes

- Feature Extraction: Process all T1-weighted MRI scans through a segmentation pipeline (e.g., Freesurfer, SPM) to extract regional volumetric data for all subjects across all sites.

- Data Matrix: Create a [n_subjects x n_regions] matrix. Create a batch vector of length n_subjects indicating the scanner/site ID for each scan.

- Covariate Matrix: Create a model matrix including biological variables of interest (e.g., age, sex, intracranial volume, diagnosis).

- Harmonization: Apply the ComBat function (e.g., from the neuroCombat R package or combat.py in Python) using the data matrix, batch vector, and covariate matrix, transposing the data matrix first if the implementation expects features in rows (as neuroCombat does). Use the empirical Bayes (EB) adjustment.

- Output: The function returns the harmonized data matrix. Integrate this matrix into your statistical or machine learning model for group comparisons.
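To make the location/scale model behind ComBat concrete, the sketch below implements it without the empirical Bayes shrinkage step (conceptually similar to running with eb = FALSE). It is a teaching sketch, not the neuroCombat API: `combat_no_eb` is a hypothetical function, it takes subjects in rows (unlike neuroCombat), and a maintained implementation should be used for real analyses.

```python
import numpy as np

def combat_no_eb(Y, batch, covariates=None):
    """Location/scale ComBat without empirical Bayes shrinkage.

    Y: (n_subjects, n_features); batch: (n_subjects,) scanner/site labels;
    covariates: (n_subjects, p) biological variables to preserve (no intercept).
    """
    Y = np.asarray(Y, dtype=float)
    batch = np.asarray(batch)
    levels = np.unique(batch)
    B = (batch[:, None] == levels[None, :]).astype(float)  # one-hot batch design
    C = np.empty((len(Y), 0)) if covariates is None else np.asarray(covariates, float)
    design = np.hstack([B, C])

    # Per-feature OLS on batch indicators plus covariates
    coef, *_ = np.linalg.lstsq(design, Y, rcond=None)
    n_b = B.sum(axis=0)
    grand_mean = (n_b / n_b.sum()) @ coef[: len(levels)]  # weighted batch intercepts
    stand_mean = grand_mean + C @ coef[len(levels):]        # preserved covariate signal
    var_pooled = np.mean((Y - design @ coef) ** 2, axis=0)

    # Standardize, then estimate and remove per-batch location (gamma) and scale (delta)
    Z = (Y - stand_mean) / np.sqrt(var_pooled)
    Y_adj = np.empty_like(Y)
    for lev in levels:
        rows = batch == lev
        gamma = Z[rows].mean(axis=0)
        delta = Z[rows].var(axis=0, ddof=1)
        Y_adj[rows] = (Z[rows] - gamma) / np.sqrt(delta) * np.sqrt(var_pooled) + stand_mean[rows]
    return Y_adj

# Simulated hippocampal volumes from three scanners with assumed offsets and scales
rng = np.random.default_rng(6)
site = np.repeat(np.array(["A", "B", "C"]), 40)
age = rng.uniform(20, 80, size=120)
offset = {"A": 0.0, "B": 300.0, "C": -200.0}
scale = {"A": 1.0, "B": 1.5, "C": 0.7}
hippo = np.array([4000 - 10 * a + offset[s] + scale[s] * rng.normal(0, 150)
                  for a, s in zip(age, site)])
Y = hippo[:, None]
Y_h = combat_no_eb(Y, site, covariates=age[:, None])
for s in "ABC":
    print(s, round(Y[site == s].mean()), round(Y_h[site == s].mean()))
```

The empirical Bayes step that this sketch omits shrinks each batch's gamma and delta toward values pooled across features, which stabilizes estimates for small batches.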

Protocol 2: Validation of Harmonization Efficacy

- Primary Metric - Site Effect Removal: Fit a linear model with site as the predictor for each feature, pre- and post-harmonization. Calculate the relative reduction in F-statistic or variance explained (η²) by site. Report aggregate results (see Table 1).

- Secondary Metric - Biological Signal Preservation: Fit a model with a key biological variable (e.g., diagnosis) as the predictor, pre- and post-harmonization. Compare the effect size (e.g., Cohen's d) and statistical significance (p-value) of the group difference. A successful harmonization should not attenuate valid biological effects.

- Visual Inspection: Generate boxplots of a representative feature (e.g., hippocampal volume) grouped by site, before and after harmonization. Site-specific median shifts should be eliminated post-harmonization.
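The two quantitative metrics in Protocol 2 can be computed with a few lines of NumPy. A minimal sketch, assuming one feature at a time; `eta_squared` and `cohens_d` are illustrative helper names, and the example data are simulated.

```python
import numpy as np

def eta_squared(values, groups) -> float:
    """Proportion of variance in `values` explained by the grouping (one-way ANOVA)."""
    values = np.asarray(values, float)
    groups = np.asarray(groups)
    grand = values.mean()
    ss_total = np.sum((values - grand) ** 2)
    ss_between = sum((groups == g).sum() * (values[groups == g].mean() - grand) ** 2
                     for g in np.unique(groups))
    return ss_between / ss_total

def cohens_d(a, b) -> float:
    """Standardized mean difference (a minus b) using the pooled SD."""
    a, b = np.asarray(a, float), np.asarray(b, float)
    na, nb = a.size, b.size
    pooled = np.sqrt(((na - 1) * a.var(ddof=1) + (nb - 1) * b.var(ddof=1)) / (na + nb - 2))
    return (a.mean() - b.mean()) / pooled

# Simulated feature with site offsets
rng = np.random.default_rng(11)
site = np.repeat(np.array(["A", "B", "C"]), 30)
offsets = np.where(site == "B", 0.8, np.where(site == "C", -0.5, 0.0))
feature = rng.normal(0, 1, size=90) + offsets
eta_pre = eta_squared(feature, site)
print(f"eta^2 for site before harmonization: {eta_pre:.2f}")
# After harmonization: compute eta_post the same way and report 1 - eta_post / eta_pre;
# compare cohens_d(patients, controls) before and after for signal preservation.
```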

Visualizations

ComBat Harmonization Core Workflow

ComBat's Empirical Bayes Adjustment Logic

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Multi-Site Harmonization Research |
|---|---|
| neuroCombat (R Package) | Primary tool for ComBat harmonization of neuroimaging data; handles matrix inputs and covariates. |
| ComBat (Python - neurotools) | Python implementation of the ComBat algorithm for integration into Python-based ML pipelines. |
| ComBat-GAM (R) | Extension of ComBat that models non-linear biological trajectories across sites using generalized additive models. |
| mica (Python Package) | Contains tools for harmonization and multi-site ICA, useful for functional connectivity data. |
| Parcellation Atlas (e.g., AAL, Harvard-Oxford) | Provides consistent regions of interest (ROIs) for feature extraction across diverse datasets. |
| Quality Control Metrics (e.g., CAT12, MRIQC) | Essential for identifying and excluding poor-quality scans before harmonization to prevent artifact propagation. |
| Phantom Data (digital or physical) | Phantoms simulated or scanned across sites to characterize and quantify the pure scanner effect. |

Technical Support Center

Troubleshooting Guides & FAQs

Q1: During preprocessing, my pipeline fails with a "MemoryError" when running slice timing correction or spatial normalization on a cluster. What are the most common solutions?

A: This is often due to default memory allocation in tools like SPM or FSL. Storing data as compressed NIfTI (.nii.gz) reduces disk usage and I/O load, but it does not lower peak RAM, since images are decompressed when loaded. The verbose options of FSL's flirt and fnirt report processing progress rather than memory use; to measure peak memory, wrap the command in GNU `/usr/bin/time -v` or check your scheduler's job accounting. The primary solution is to process subjects sequentially rather than in parallel when memory per node is limited. Alternatively, run fMRIPrep with `--mem_mb` to cap its memory use (and `--low-mem` to trade memory for disk space), or preprocess in native space before normalizing to standard space.

Q2: After extracting features from ROIs, my classification accuracy is at chance level (e.g., ~50% for a binary task). What systematic checks should I perform?

A: Follow this diagnostic checklist:

- Label Integrity: Verify your condition labels (e.g., Task vs. Rest) are correctly synchronized with the fMRI volumes.

- Data Leakage: Ensure no subject's data is split across training and test sets due to sliding window approaches; use subject-wise cross-validation.

- Feature Scale: Check whether your ROI time-series features (e.g., mean activation) have extreme outliers; if so, apply robust scaling (e.g., scikit-learn's `RobustScaler`).

- Baseline Model: Test a simple model (e.g., `DummyClassifier`) to confirm it also returns chance, ruling out a coding error in the accuracy calculation.

- ROI Validity: Confirm your atlas (e.g., AAL, Harvard-Oxford) is appropriately registered to your subject data.
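The leakage, scaling, and baseline checks can be combined in one scikit-learn sketch. It assumes several samples per subject (simulated here), uses `GroupKFold` for subject-wise splitting, keeps `RobustScaler` inside the pipeline so it is fit on training folds only, and compares against a `DummyClassifier`. The data and effect sizes are hypothetical.

```python
import numpy as np
from sklearn.dummy import DummyClassifier
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import GroupKFold, cross_val_score
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import RobustScaler

# Hypothetical data: 20 subjects x 10 samples each (e.g., task/rest blocks), 30 ROI features
rng = np.random.default_rng(0)
n_subjects, n_per_subject, n_rois = 20, 10, 30
groups = np.repeat(np.arange(n_subjects), n_per_subject)  # subject ID per sample
y = np.tile(np.repeat([0, 1], n_per_subject // 2), n_subjects)  # rest = 0, task = 1
X = rng.normal(size=(groups.size, n_rois))
X[y == 1, :5] += 1.0  # simulated task effect in 5 ROIs

# GroupKFold keeps every sample of a subject in the same fold (subject-wise CV)
cv = GroupKFold(n_splits=5)
# Scaling sits inside the pipeline, so it is re-fit on each training fold only
model = make_pipeline(RobustScaler(), LogisticRegression(max_iter=1000))
baseline = DummyClassifier(strategy="most_frequent")

model_acc = cross_val_score(model, X, y, groups=groups, cv=cv).mean()
baseline_acc = cross_val_score(baseline, X, y, groups=groups, cv=cv).mean()
print(f"model: {model_acc:.2f}, dummy baseline: {baseline_acc:.2f}")
```

If the real model scores no better than the dummy baseline under this setup, the problem is more likely in the labels or features than in the cross-validation code.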

Q3: When comparing Logistic Regression, SVM, and Random Forest models using nested cross-validation, the performance variance across outer folds is extremely high (e.g., accuracy range from 60% to 95%). How should I handle this?

A: High variance indicates your results are sensitive to the specific data partition, often due to high dimensionality, small sample size (N<50), or heterogeneous brain responses. To handle this statistical variability:

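- Increase the number of outer folds (e.g., to 10 or Leave-One-Subject-Out) and, for randomized k-fold splits, the number of repeats (e.g., 100 repeats with different partitions) to better estimate the performance distribution. Leave-One-Subject-Out has only one possible partition, so it cannot be repeated.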

- Report confidence intervals (e.g., 95% CI) and consider using Bayesian hierarchical models for comparison.

- Use a permutation test (shuffling labels 1000+ times) to generate a null distribution and determine if your model's mean accuracy is significantly above chance despite the variance.

- Consider moving beyond single-number accuracy: report model calibration, and use McNemar's test for paired comparisons of two models' predictions on the same test samples.
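A minimal sketch of the permutation test and McNemar's test, using scikit-learn's `permutation_test_score` and an exact McNemar test built on SciPy's `binomtest`. The data come from `make_classification` and are purely illustrative; `mcnemar_exact` is a hypothetical helper, and it assumes both models were evaluated on the same test samples.

```python
import numpy as np
from scipy.stats import binomtest
from sklearn.datasets import make_classification
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import StratifiedKFold, permutation_test_score

# Hypothetical small-N, high-dimensional data (60 subjects, 100 features)
X, y = make_classification(n_samples=60, n_features=100, n_informative=5, random_state=0)
cv = StratifiedKFold(n_splits=5, shuffle=True, random_state=0)

# Null distribution from label shuffling; 199 permutations keep the demo fast,
# use 1000+ in practice
score, perm_scores, p_value = permutation_test_score(
    LogisticRegression(max_iter=1000), X, y, cv=cv, n_permutations=199, random_state=0
)
print(f"accuracy = {score:.2f}, permutation p = {p_value:.3f}")


def mcnemar_exact(y_true, pred_a, pred_b):
    """Exact (binomial) McNemar test on paired predictions for the same test samples."""
    correct_a = np.asarray(pred_a) == np.asarray(y_true)
    correct_b = np.asarray(pred_b) == np.asarray(y_true)
    a_only = int(np.sum(correct_a & ~correct_b))  # A right, B wrong
    b_only = int(np.sum(~correct_a & correct_b))  # A wrong, B right
    if a_only + b_only == 0:
        return 1.0  # the models never disagree
    return binomtest(a_only, n=a_only + b_only, p=0.5).pvalue
```

Only the discordant samples (where exactly one model is correct) enter McNemar's test, which is why it suits paired comparisons on a shared test set.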

Q4: My deep learning model (e.g., a simple CNN) trains successfully on fMRI volumes but fails to generalize to the validation set, showing clear overfitting. What regularization strategies are most effective for neuroimaging data?

A: Overfitting is pervasive in neuroimaging due to the low N, high p problem. Implement these strategies sequentially:

- Input-Level: Use aggressive spatial dimensionality reduction (e.g., cortical surface data, or ROI pooling) instead of full volumes.

- Data Augmentation: Apply synthetic data generation specific to fMRI, such as random temporal phase shifts, adding Gaussian noise matched to the background, or spatially transforming volumes within the bounds of registration error.

- Architectural: Add dropout (rate 0.5-0.7) after convolutional layers and apply L2 weight decay (λ = 1e-4) to the network weights. Use global average pooling instead of fully connected layers.

- Early Stopping: Monitor validation loss with a patience of 10-20 epochs.
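The augmentation and early-stopping steps are framework-agnostic, so they can be sketched in plain NumPy/Python. This assumes ROI time series shaped `(n_timepoints, n_rois)`; the circular shift is one simple interpretation of a "random temporal phase shift", and `augment_timeseries`, `EarlyStopping`, and the `noise_sd` default are hypothetical, not a specific library's API.

```python
import numpy as np


def augment_timeseries(ts, rng, max_shift=5, noise_sd=0.1):
    """Return an augmented copy of a (n_timepoints, n_rois) ROI time-series array.

    Applies a random circular shift along time plus Gaussian noise; noise_sd is a
    placeholder that would ideally be estimated from background (out-of-brain) signal.
    """
    shift = int(rng.integers(-max_shift, max_shift + 1))
    shifted = np.roll(ts, shift, axis=0)
    return shifted + rng.normal(0.0, noise_sd, size=ts.shape)


class EarlyStopping:
    """Signal a stop once validation loss has not improved for `patience` epochs."""

    def __init__(self, patience=15, min_delta=0.0):
        self.patience = patience
        self.min_delta = min_delta
        self.best_loss = float("inf")
        self.best_epoch = None
        self.bad_epochs = 0

    def step(self, val_loss, epoch):
        """Record this epoch's validation loss; return True when training should stop."""
        if val_loss < self.best_loss - self.min_delta:
            self.best_loss, self.best_epoch, self.bad_epochs = val_loss, epoch, 0
        else:
            self.bad_epochs += 1
        return self.bad_epochs >= self.patience


# Usage with a simulated validation-loss curve that plateaus after epoch 3
losses = [1.0, 0.8, 0.6, 0.5] + [0.55] * 30
stopper = EarlyStopping(patience=15)
stopped_at = next(epoch for epoch, loss in enumerate(losses) if stopper.step(loss, epoch))
print(f"stopped at epoch {stopped_at}; restore weights from epoch {stopper.best_epoch}")
```

In a real training loop you would also checkpoint the model weights at `best_epoch` and restore them after stopping.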

Experimental Protocols & Data

Protocol: Nested Cross-Validation for Model Comparison

Objective: To provide a nearly unbiased estimate of model performance and compare different classifiers while avoiding data leakage and overfitting.

Steps:

- Outer Loop (Performance Estimation): Split data into k folds (e.g., 5). For each fold:

  - Hold out one fold as the test set.

  - Use the remaining k-1 folds for the inner loop.

- Inner Loop (Model Selection & Tuning): On the k-1 folds, perform another j-fold cross-validation (e.g., 5).

  - Grid search over hyperparameters (e.g., SVM C and kernel type; Random Forest n_estimators).

  - Select the hyperparameter set yielding the best average performance across the j folds.

- Final Evaluation: Train a model on all k-1 folds using the best hyperparameters. Evaluate it on the held-out outer test fold.

- Aggregation: The average performance across all k outer test folds is the final, nearly unbiased estimate. Statistical comparison is performed on the outer-fold results, e.g., with a paired t-test. Because outer folds share training data, a corrected resampled t-test (Nadeau and Bengio) is preferable to a naive one, and p-values should be corrected for multiple comparisons when more than two models are compared.
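The protocol maps onto scikit-learn by wrapping `GridSearchCV` (inner loop) inside `cross_val_score` (outer loop). The sketch below uses synthetic data in place of connectivity features, reuses identical outer splits for both models so the comparison is paired, and implements the Nadeau-Bengio corrected resampled t-test as a small helper (`nested_cv_scores` and `corrected_resampled_ttest` are hypothetical names). Grid values are illustrative only.

```python
import numpy as np
from scipy import stats
from sklearn.datasets import make_classification
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import (
    GridSearchCV, RepeatedStratifiedKFold, StratifiedKFold, cross_val_score,
)
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler
from sklearn.svm import SVC


def nested_cv_scores(pipeline, param_grid, X, y, outer_cv, inner_cv):
    """Outer-fold accuracies; GridSearchCV runs the inner loop on each outer training set."""
    search = GridSearchCV(pipeline, param_grid, cv=inner_cv, scoring="accuracy")
    return cross_val_score(search, X, y, cv=outer_cv, scoring="accuracy")


def corrected_resampled_ttest(scores_a, scores_b, test_train_ratio):
    """Nadeau-Bengio corrected paired t-test on per-fold score differences."""
    diff = np.asarray(scores_a) - np.asarray(scores_b)
    k = diff.size
    t_stat = diff.mean() / np.sqrt((1.0 / k + test_train_ratio) * diff.var(ddof=1))
    p_value = 2 * stats.t.sf(abs(t_stat), df=k - 1)
    return t_stat, p_value


# Hypothetical data standing in for vectorized connectivity features
X, y = make_classification(n_samples=100, n_features=50, n_informative=8, random_state=0)
# Fixed random_state => both models are scored on identical outer splits (paired design)
outer_cv = RepeatedStratifiedKFold(n_splits=5, n_repeats=2, random_state=0)
inner_cv = StratifiedKFold(n_splits=5, shuffle=True, random_state=1)

svm_scores = nested_cv_scores(
    make_pipeline(StandardScaler(), SVC()),
    {"svc__C": [0.1, 1, 10], "svc__kernel": ["linear", "rbf"]},
    X, y, outer_cv, inner_cv,
)
lr_scores = nested_cv_scores(
    make_pipeline(StandardScaler(), LogisticRegression(max_iter=1000)),
    {"logisticregression__C": [0.01, 0.1, 1]},
    X, y, outer_cv, inner_cv,
)
# With 5 outer folds, each test fold is 1/4 the size of its training set
t_stat, p_value = corrected_resampled_ttest(svm_scores, lr_scores, test_train_ratio=1 / 4)
print(f"SVM {svm_scores.mean():.3f} vs LR {lr_scores.mean():.3f}: t = {t_stat:.2f}, p = {p_value:.3f}")
```

The correction term `test_train_ratio` inflates the variance relative to a naive paired t-test, which compensates for the overlap between training sets across folds.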

Table 1: Hypothetical Model Comparison Results (Binary Classification). Dataset: 100 subjects, resting-state fMRI, classifying patients vs. controls. Features: correlation matrices from the 100-ROI Schaefer atlas.

| Model | Mean Accuracy (%) | 95% CI (%) | Mean F1-Score | AUC | Compute Time (min) |
|---|---|---|---|---|---|
| Logistic Regression (L1) | 72.1 | [68.5, 75.7] | 0.71 | 0.79 | 2.1 |
| Support Vector Machine (RBF) | 75.3 | [71.9, 78.7] | 0.74 | 0.82 | 18.5 |
| Random Forest | 74.8 | [70.9, 78.7] | 0.73 | 0.81 | 9.3 |
| 1D CNN | 73.9 | [69.2, 78.6] | 0.72 | 0.80 | 112.0 |

Table 2: Common Preprocessing Pipelines & Their Impact on Variability

| Pipeline Tool | Key Strengths | Potential Source of Variability | Typical Output Use for Classification |
|---|---|---|---|
| fMRIPrep (v23.2.0) | Robust, standardized, minimizes manual intervention. | Different versions may alter noise estimates. | Denoised BOLD timeseries in native or MNI space. |
| SPM12 | Widely used, integrates with GLM. | Segmentations can vary, affecting normalization quality. | Smoothed, normalized volumes. |
| AFNI | Highly flexible, extensive scripting. | User-defined parameter choices greatly impact output. | Voxel-wise % signal change. |
| HCP Pipelines | Optimized for multimodal, high-quality data. | Less effective on lower-resolution data. | Grayordinates on cortical surface. |

The Scientist's Toolkit: Research Reagent Solutions

| Item / Resource | Function / Purpose in Pipeline |
|---|---|
| fMRIPrep | Automated, reproducible preprocessing pipeline for BOLD and anatomical MRI data. Standardizes the initial, critical step. |
| The Nilearn Library (Python) | Provides tools for statistical learning on neuroimaging data, including easy masking, connectome extraction, and decoding. |
| C-PAC | Configurable pipeline for analysis of connectomes, allows for flexible construction of analysis workflows. |
| Schaefer Atlas | Parcellation of cortex into functionally defined ROIs (e.g., 100, 200, 400 parcels). Reduces dimensionality for machine learning. |
| ABIDE Preprocessed | Publicly available, preprocessed dataset of autism spectrum disorder and controls. Serves as a benchmark for pipeline development. |
| Scikit-learn | Essential Python library for implementing and comparing standard classification models with cross-validation. |
| BrainCharter | For generating high-quality, publication-ready visualizations of brain maps and connectomes. |

Workflow & Pathway Diagrams

Title: fMRI Classification Model Comparison Pipeline

Title: Nested Cross-Validation Workflow

Solving Common Pitfalls: Optimizing Power and Stability in Your Analysis

Troubleshooting Guides & FAQs

Q1: My model performance metrics (e.g., accuracy, R²) vary wildly each time I re-run the analysis on the same neuroimaging dataset. What is the primary source of this variability? A: This high run-to-run variability often originates from the inference process, particularly when using non-deterministic algorithms. Common culprits include:

- Random weight initialization in deep learning models.

- Stochastic optimization (e.g., SGD or Adam with random mini-batch ordering) and stochastic regularization such as dropout.

- Random sampling in probabilistic models (e.g., MCMC, variational inference).

- Random seeds in data splitting (train/test/validation) not being fixed.

Mitigation Protocol: Implement a strict reproducibility protocol. Set and document random seeds for every random number generator in your software stack (Python's `random` module, NumPy, TensorFlow, PyTorch). Use deterministic algorithms where possible (e.g., in PyTorch, set `torch.backends.cudnn.deterministic = True` and `torch.backends.cudnn.benchmark = False`, or call `torch.use_deterministic_algorithms(True)`). Report metrics as the mean and standard deviation over multiple runs with different, but documented, seeds.
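A minimal sketch of the seeding protocol, using a scikit-learn model for the demonstration. `set_global_seeds` and `run_once` are hypothetical helpers; PyTorch is seeded only if it is installed, and TensorFlow (`tf.random.set_seed`) would need the same treatment if used. Note that scikit-learn estimators and splitters take their own `random_state` arguments, which is where most of the control comes from here.

```python
import random

import numpy as np
from sklearn.datasets import make_classification
from sklearn.model_selection import StratifiedKFold, cross_val_score
from sklearn.neural_network import MLPClassifier


def set_global_seeds(seed):
    """Seed Python's and NumPy's global RNGs, and PyTorch's if it is installed."""
    random.seed(seed)
    np.random.seed(seed)
    try:
        import torch
    except ImportError:
        return
    torch.manual_seed(seed)  # seeds the CPU and all CUDA devices
    torch.backends.cudnn.deterministic = True
    torch.backends.cudnn.benchmark = False


def run_once(seed, X, y):
    """One full run; the seed controls both the data split and weight initialization."""
    set_global_seeds(seed)
    cv = StratifiedKFold(n_splits=5, shuffle=True, random_state=seed)
    clf = MLPClassifier(hidden_layer_sizes=(16,), max_iter=300, random_state=seed)
    return cross_val_score(clf, X, y, cv=cv).mean()


X, y = make_classification(n_samples=200, n_features=20, random_state=0)
seeds = [0, 1, 2, 3, 4]  # document these alongside the results
scores = np.array([run_once(s, X, y) for s in seeds])
print(f"accuracy = {scores.mean():.3f} +/- {scores.std(ddof=1):.3f} over seeds {seeds}")
```

Re-running with the same seed reproduces the same score, while the spread across seeds is the run-to-run variability you report.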

Q2: When comparing two models across different patient cohorts, the superior model changes. Is this data or model variability? A: This is typically data variability, specifically dataset shift (e.g., covariate shift). The underlying distribution of the imaging data or demographic/clinical covariates differs between cohorts, causing model performance to degrade or relative rankings to change.

Diagnostic Protocol:

- Perform statistical tests on key demographic and clinical variables between cohorts: Kolmogorov-Smirnov or t-tests for continuous variables (age, clinical scores) and chi-squared tests for categorical ones (sex).

- Use feature-level distribution analysis. Compute summary statistics (mean, variance) of extracted neuroimaging features (e.g., ROI timeseries, connectivity weights) for each cohort and compare.

- Train a simple classifier to predict which cohort a sample comes from based on its features. If it does so well above chance (e.g., cross-validated AUC > 0.7, a heuristic cutoff), substantial data variability exists.
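The feature-level comparison and the cohort classifier can be sketched as follows, with simulated features in place of real ROI measures. `feature_ks_pvalues` and `cohort_discrimination_auc` are hypothetical helper names; with many features, the per-feature KS p-values should be corrected for multiple comparisons.

```python
import numpy as np
from scipy.stats import ks_2samp
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import StratifiedKFold, cross_val_score
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler


def feature_ks_pvalues(X_a, X_b):
    """Two-sample Kolmogorov-Smirnov p-value for each feature (column)."""
    return np.array([ks_2samp(X_a[:, j], X_b[:, j]).pvalue for j in range(X_a.shape[1])])


def cohort_discrimination_auc(X_a, X_b, seed=0):
    """Cross-validated AUC of a classifier predicting cohort membership from features."""
    X = np.vstack([X_a, X_b])
    cohort = np.r_[np.zeros(len(X_a)), np.ones(len(X_b))]
    clf = make_pipeline(StandardScaler(), LogisticRegression(max_iter=1000))
    cv = StratifiedKFold(n_splits=5, shuffle=True, random_state=seed)
    return cross_val_score(clf, X, cohort, cv=cv, scoring="roc_auc").mean()


# Hypothetical ROI features for two cohorts; cohort B is shifted in the first 3 features
rng = np.random.default_rng(0)
cohort_a = rng.normal(size=(80, 20))
cohort_b = rng.normal(size=(80, 20))
cohort_b[:, :3] += 1.0

auc = cohort_discrimination_auc(cohort_a, cohort_b)
pvals = feature_ks_pvalues(cohort_a, cohort_b)
print(f"cohort AUC = {auc:.2f}; features with KS p < 0.05: {np.flatnonzero(pvals < 0.05)}")
```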

Q3: My Bayesian model yields different posteriors when I change the inference library (e.g., PyMC3 vs. Stan). What does this indicate? A: This points to inference variability. Different software libraries may use different default settings for:

- Hamiltonian Monte Carlo (HMC) parameters (step size, tree depth, mass matrix).

- Variational inference (VI) approximations (mean-field vs. full-rank).

- Convergence diagnostics and sampling thresholds.

Troubleshooting Guide:

- Benchmark with Synthetic Data: Generate data from a known model. Run inference with both libraries and compare the recovered posteriors to the ground truth. See Table 1.

- Increase Computational Resources: Run more MCMC chains, increase iterations, or use more precise VI approximations in both libraries.

- Validate Convergence: Strictly apply convergence diagnostics (e.g., $\hat{R}$ < 1.01, effective sample size > 400 per chain). Compare only well-converged samples.
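For reference, the classic split-$\hat{R}$ diagnostic can be computed from raw draws in a few lines of NumPy. This is a sketch of the basic (non-rank-normalized) version; current Stan and ArviZ releases report a rank-normalized split-$\hat{R}$, so their values can differ slightly. The function name `split_rhat` and the simulated chains are hypothetical.

```python
import numpy as np


def split_rhat(chains):
    """Basic split-R-hat for one scalar parameter.

    chains: (n_chains, n_draws) array of post-warmup draws. Each chain is split in
    half so that within-chain trends also inflate the statistic.
    """
    chains = np.asarray(chains, dtype=float)
    half = chains.shape[1] // 2
    split = np.vstack([chains[:, :half], chains[:, half:2 * half]])
    within = split.var(axis=1, ddof=1).mean()        # W: mean within-chain variance
    between = half * split.mean(axis=1).var(ddof=1)  # B: between-chain variance
    var_plus = (half - 1) / half * within + between / half
    return np.sqrt(var_plus / within)


# Synthetic check: four well-mixed chains vs. the same chains with one stuck elsewhere
rng = np.random.default_rng(1)
mixed = rng.normal(size=(4, 1000))
stuck = mixed + np.array([[0.0], [0.0], [0.0], [3.0]])
print(f"R-hat mixed = {split_rhat(mixed):.3f}, stuck = {split_rhat(stuck):.3f}")
```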

Table 1: Benchmarking Inference Libraries on Synthetic fMRI Connectivity Data

| Library | Inference Method | Default Samples | $\hat{R}$ (target <1.01) | ESS per Chain (target >400) | Time to Compute (s) | Relative Error in Posterior Mean |
|---|---|---|---|---|---|---|
| Stan | NUTS (HMC) | 2000 (4 chains) | 1.002 | 1250 | 45 | 2.1% |
| PyMC3 | NUTS (HMC) | 2000 (4 chains) | 1.010 | 980 | 38 | 2.5% |
| Pyro | AutoGuide (VI) | 10000 (SGD) | N/A | N/A | 22 | 5.7% |

ESS: Effective Sample Size. NUTS: No-U-Turn Sampler.

Q4: I am comparing two deep learning architectures. How do I determine if performance differences are real or due to random chance? A: You must perform statistical model comparison to separate true model differences from random noise. A standard paired t-test on per-fold accuracies is insufficient because cross-validation folds share training data, so the fold-level estimates are not independent and the test is overconfident.

Recommended Experimental Protocol:

- Nested Cross-Validation: Use a nested (double) CV loop. The outer loop estimates generalization error, the inner loop performs model/hyperparameter selection.