Multivariate Analysis of Neurochemical Data: From Foundational Concepts to Clinical Applications in Biomarker Discovery and Drug Development


Abstract

This comprehensive review explores multivariate analysis (MVA) techniques for neurochemical data, addressing the critical need to analyze complex, interacting variables in neuroscience research. Targeting researchers, scientists, and drug development professionals, we cover foundational principles of MVA including dimension reduction methods like Principal Component Analysis (PCA) and their advantages over traditional univariate approaches. The article details practical applications across psychiatric and neurological disorders, addresses methodological challenges including preprocessing variability and overfitting, and provides comparative validation of different MVA techniques. By synthesizing current methodologies with real-world applications in biomarker discovery and pharmaceutical development, this resource aims to enhance analytical rigor and translational impact in neurochemical research.

Beyond Single Variables: Foundational Principles and Exploratory Applications of Multivariate Neurochemical Analysis

Modern neuroscience has evolved from studying brain components in isolation to investigating how complex interactions within entire neural systems give rise to function and behavior. This paradigm shift necessitates multivariate approaches that can simultaneously analyze multiple variables and their relationships. Unlike traditional univariate methods that examine one variable at a time, multivariate analysis evaluates correlation and covariance across brain regions, providing signatures of neural networks that cannot be detected by voxel-wise techniques [1]. This network-oriented perspective is essential for understanding how information is encoded, processed, and transmitted across neural populations [2].

The analytical challenge lies in capturing the emergent properties of neural systems, where the interaction between elements produces phenomena that cannot be predicted from individual components alone. Multivariate techniques address this challenge by representing system components as network nodes and their relations as links, creating a framework for studying neural information processing [3]. These approaches are particularly valuable for reverse-engineering how information processing functions emerge from interactions between neurons or brain areas [2], moving neuroscience closer to a comprehensive understanding of brain function in health and disease.

Theoretical Framework: From Data to Networks

Core Mathematical Foundations

Multivariate analysis in neuroscience relies on mathematical frameworks that can represent complex relationships between variables. Principal Component Analysis (PCA) is a fundamental multivariate decomposition technique that identifies patterns in data by transforming the original variables into a new set of uncorrelated variables called principal components [1]. The transformation follows the equation $Y = V\sqrt{\Lambda}W^{T}$, where $Y$ is the original data matrix, $V$ contains the principal components (eigenvectors in voxel space), $\Lambda$ is a diagonal matrix of eigenvalues, and $W$ contains the eigenvectors in subject space [1]. This decomposition separates factors that depend on voxel location in the brain from those that depend on subject index, creating a coordinate system for efficiently summarizing complex neural data.
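
The decomposition above can be illustrated with a thin singular value decomposition. The following is a minimal sketch rather than the method of reference [1]: it assumes NumPy, a hypothetical data matrix with voxels in rows and subjects in columns, and mean-centering of each voxel across subjects. All variable names are illustrative.

```python
import numpy as np

rng = np.random.default_rng(0)

# Hypothetical data: 50 voxels (rows) x 8 subjects (columns).
Y = rng.normal(size=(50, 8))
# Assumption: remove each voxel's mean across subjects before decomposing.
Y = Y - Y.mean(axis=1, keepdims=True)

# Thin SVD gives Y = V * sqrt(Lambda) * W^T.
V, sqrt_lambda, Wt = np.linalg.svd(Y, full_matrices=False)

eigenvalues = sqrt_lambda ** 2                       # diagonal of Lambda
variance_explained = eigenvalues / eigenvalues.sum() # share of variance per component

# Rebuild the data from the three factors to confirm the identity.
Y_rebuilt = V @ np.diag(sqrt_lambda) @ Wt
```

Here the columns of `V` play the role of the voxel-space eigenvectors and the rows of `Wt` the subject-space eigenvectors; `variance_explained` is what is usually inspected when deciding how many components to keep.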

Information theory provides another crucial mathematical foundation, with Mutual Information (MI) serving as a key measure for quantifying how much information neural activity carries about sensory variables or behavioral outputs [2]. Unlike correlation-based measures, MI captures both linear and non-linear dependencies, making it particularly suitable for neural systems where non-linear interactions are common. The Partial Information Decomposition (PID) framework further extends this by separating the information about a target variable carried by multiple source variables into unique, synergistic, and redundant components [2]. This decomposition is vital for understanding how different brain regions contribute uniquely versus collaboratively to information processing.
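
To make the contrast with correlation concrete, here is a minimal sketch of a plug-in (histogram) mutual information estimate for discrete variables, using only the Python standard library. It is not the MINT implementation; the function name and the toy data are assumptions made for illustration, and no limited-sampling bias correction is applied.

```python
from collections import Counter
from math import log2

def mutual_information(x, y):
    """Plug-in estimate of I(X;Y) in bits for two equal-length discrete sequences."""
    n = len(x)
    joint, px, py = Counter(zip(x, y)), Counter(x), Counter(y)
    mi = 0.0
    for (xi, yi), count in joint.items():
        # p(x,y) * log2( p(x,y) / (p(x) * p(y)) ), written with raw counts
        mi += (count / n) * log2(count * n / (px[xi] * py[yi]))
    return mi

# Toy non-linear dependency: y is fully determined by x, yet their covariance is zero.
x = [-1, 0, 1, -1, 0, 1]
y = [v * v for v in x]

mean_x, mean_y = sum(x) / len(x), sum(y) / len(y)
covariance = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y)) / len(x)
mi_bits = mutual_information(x, y)
```

In this toy case the covariance is zero while the mutual information is about 0.92 bits, which is the sense in which MI can reveal dependencies that a linear measure misses.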

Network Theory and Neural Systems

Network approaches formalize neural systems as collections of nodes (representing variables, neurons, or brain regions) connected by edges (representing statistical or functional associations) [3]. In psychometric network analysis, used for multivariate psychological data, network nodes correspond to variables in a dataset, and edges represent pairwise conditional associations between these variables while conditioning on all other variables in the network [3]. This approach allows researchers to move beyond studying isolated brain regions to investigating how the organization of neural systems gives rise to brain function.

The Pairwise Markov Random Field (PMRF) is a particularly relevant graphical model for representing the joint probability distribution of a set of variables in terms of pairwise statistical interactions [3]. In this framework, unconnected nodes are conditionally independent given all other nodes in the network, providing a principled way to distinguish direct from indirect associations. This network representation encodes essential information about the functional organization of neural systems and can be characterized using tools from network science, such as measures of node centrality, network topology, and small-world properties [3].
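
For continuous, approximately Gaussian variables, the conditional associations in such a network are commonly expressed as partial correlations obtained from the inverse covariance (precision) matrix. The sketch below assumes that Gaussian setting and NumPy; the three-node chain A → B → C and its covariance matrix are hypothetical values chosen so the result can be checked by hand.

```python
import numpy as np

def correlations(cov):
    """Marginal (ordinary) correlation matrix from a covariance matrix."""
    d = np.sqrt(np.diag(cov))
    return cov / np.outer(d, d)

def partial_correlations(cov):
    """Partial correlation of each pair given all other variables."""
    precision = np.linalg.inv(cov)
    d = np.sqrt(np.diag(precision))
    pcor = -precision / np.outer(d, d)
    np.fill_diagonal(pcor, 1.0)
    return pcor

# Hypothetical chain A -> B -> C with unit-variance noise at every step:
# A = e1, B = A + e2, C = B + e3.
cov = np.array([[1.0, 1.0, 1.0],
                [1.0, 2.0, 2.0],
                [1.0, 2.0, 3.0]])

marginal = correlations(cov)
partial = partial_correlations(cov)
```

A and C are marginally correlated (about 0.58), but their partial correlation given B is zero, so no edge would be drawn between them. This is the distinction between direct and indirect associations described above.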

Application Notes: Implementing Multivariate Analysis

The MINT Toolbox for Neural Information Analysis

The Multivariate Information in Neuroscience Toolbox (MINT) provides a comprehensive implementation of multivariate information theoretic tools specifically designed for neuroscience applications [2] [4]. Written in MATLAB and compatible with Linux, Windows, and macOS operating systems, MINT combines methods for computing information encoding and transmission with statistical tools for robust estimation from limited-size empirical datasets [2]. The toolbox addresses three fundamental aspects of neural information processing: how information is encoded in neural activity, how it is transmitted across brain areas, and how it informs behavior [2].

MINT incorporates several specialized functions for multivariate analysis, including Information Breakdown to quantify how correlations between neurons shape information processing, Partial Information Decomposition (PID) to separate information into unique, redundant, and synergistic components, and Feature-Specific Information Transfer (FIT) to measure stimulus-specific information transmission between network nodes [2]. These tools can be applied to various neural data modalities, including electrophysiology, calcium imaging, fMRI, and M/EEG, making MINT a versatile solution for multivariate neural analysis [2].

Neurochemical Connectivity Assessment

Multivariate approaches can also be applied to neurochemical data to approximate neurotransmitter system connectivity across brain regions [5]. This method uses quantitative measurements of tissue neurotransmitter levels from post-mortem samples to analyze neurochemical connectivity through correlation of biochemical signals between brain regions [5]. While this approach lacks temporal resolution compared to in vivo methods, it offers enhanced spatial resolution and requires no complex data transformation [5].

The key insight in neurochemical connectivity analysis is that variability in quantitative neurochemical data stems not only from biological sources (such as interindividual differences) but also from analytical factors [5]. Well-designed, precise protocols can reduce variability caused by analytical and experimental biases, allowing researchers to study meaningful biological variability and identify correlation patterns that reflect underlying neurochemical connectivity [5]. This approach demonstrates how multivariate thinking can extract network-level information from what might otherwise be considered noise in quantitative measurements.

Table 1: Multivariate Analysis Tools in the MINT Toolbox

| Tool Name | Function | Neuroscience Application |
|---|---|---|
| Mutual Information (MI) | Measures information encoding about variables | Quantifies how much information neural activity carries about sensory stimuli or behavior [2] |
| Information Breakdown | Decomposes information into contributions from correlations | Identifies how interactions between neurons shape information processing [2] |
| Partial Information Decomposition (PID) | Separates information into unique, redundant, and synergistic components | Reveals how different brain regions contribute uniquely versus collaboratively to information [2] |
| Transfer Entropy (TE) | Measures directed information transmission | Quantifies information flow between nodes of neural networks [2] |
| Feature-Specific Information Transfer (FIT) | Measures stimulus-specific information transmission | Identifies which specific stimulus features are transmitted between brain areas [2] |
| Intersection Information (II) | Quantifies stimulus information used to inform behavior | Measures how much encoded information actually influences behavioral outputs [2] |

Experimental Protocols

Protocol 1: Multivariate Information Analysis Using MINT

Purpose and Scope

This protocol describes the procedure for applying multivariate information theory to analyze neural population data using the MINT toolbox [2]. It enables researchers to quantify how information is encoded, processed, and transmitted in neural systems, with applications to electrophysiology, calcium imaging, and other neural recording modalities.

Materials and Equipment

- Neural data: Array of neural activity recorded in each trial (spike times, calcium fluorescence, BOLD signals, etc.)

- Task variables: Sensory stimuli or behavioral responses presented or produced in each trial

- Computer system: Linux, Windows, or macOS with MATLAB version 2018b or newer

- MATLAB toolboxes: Statistics and Machine Learning, Optimization, Parallel Computing, and Signal Processing Toolboxes

- MINT toolbox: Freely available from https://github.com/panzerilab/MINT [2]

Procedure

Data Preparation: Format neural data as an array with dimensions (number of trials × number of neurons/recording channels × time bins). Format task variables as a vector of length (number of trials).

Toolbox Setup: Install MINT and required MATLAB toolboxes. For calculations requiring redundancy measures, install and compile provided C files or use pre-compiled files for your operating system.

Entropy Estimation: Use the `H.m` function to compute neural variability: `entropy = H(neural_data)`

Mutual Information Calculation: Use the `MI.m` function to compute information encoding: `information = MI(neural_data, task_variables)`

Information Decomposition: Apply `PID.m` to separate information into unique, redundant, and synergistic components. Select an appropriate redundancy measure based on data characteristics.

Information Transmission Analysis: Use `TE.m` for directed information transfer and `FIT.m` for feature-specific information transmission between brain regions.

Statistical Validation: Employ MINT's permutation algorithms to test the significance of information values against null hypotheses.

Data Analysis and Interpretation

- Apply limited-sampling bias corrections to account for estimation from finite data

- Use hierarchical permutation tests to assess significance of information encoding and transmission

- Interpret high synergistic information as indicating emergent computational properties

- Interpret high redundant information as indicating robust information transmission
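
The idea behind permutation-based significance testing can be sketched in a few lines. This is not MINT's hierarchical permutation algorithm: it is a plain trial-shuffling test in standard-library Python, with a hypothetical plug-in MI function and toy data, shown only to illustrate how a null distribution is built.

```python
import random
from collections import Counter
from math import log2

def plugin_mi(x, y):
    """Plug-in mutual information (bits) between two discrete sequences."""
    n = len(x)
    joint, px, py = Counter(zip(x, y)), Counter(x), Counter(y)
    return sum(c / n * log2(c * n / (px[a] * py[b])) for (a, b), c in joint.items())

def permutation_p_value(x, y, n_perm=1000, seed=0):
    """Shuffle y across trials to break its link to x, then compare with the observed MI."""
    rng = random.Random(seed)
    observed = plugin_mi(x, y)
    shuffled = list(y)
    exceed = 0
    for _ in range(n_perm):
        rng.shuffle(shuffled)
        if plugin_mi(x, shuffled) >= observed:
            exceed += 1
    # Add-one correction so the estimated p-value is never exactly zero.
    return observed, (exceed + 1) / (n_perm + 1)

# Toy data: 40 trials, binary stimulus; the response follows the stimulus on 90% of trials.
stimulus = [0, 1] * 20
response = [s if i % 10 else 1 - s for i, s in enumerate(stimulus)]

observed_mi, p_value = permutation_p_value(stimulus, response)
```

Because the plug-in estimate is biased upward for small samples, comparing it against a shuffled null (which carries the same bias) is one simple way to avoid over-interpreting small information values.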

Protocol 2: Neurochemical Connectivity Mapping

Purpose and Scope

This protocol describes a method for assessing neurotransmitter system connectivity through multivariate analysis of tissue neurotransmitter levels [5]. It enables researchers to infer functional connectivity between brain regions based on correlated neurochemical patterns across individuals.

Materials and Equipment

- Brain tissue samples: Post-mortem samples from multiple brain regions of interest

- Neurochemical assay equipment: HPLC, LC-MS, or other quantitative analytical platforms

- Statistical software: Capable of multivariate analysis (R, Python, SPSS, STATA)

- Brain atlas: For anatomical region standardization

Procedure

Tissue Collection: Obtain post-mortem brain samples from regions of interest following standardized dissection protocols.

Neurochemical Quantification: Extract and quantify neurotransmitter levels using validated analytical methods. Record absolute concentrations for all samples.

Data Quality Control: Implement measures to reduce analytical variability through standardized protocols and technical replicates.

Data Matrix Construction: Create a data matrix with rows representing subjects and columns representing neurotransmitter concentrations in different brain regions.

Correlation Analysis: Calculate pairwise correlation coefficients between neurotransmitter levels across different brain regions.

Multivariate Analysis: Apply PCA to identify patterns of covariance in neurochemical data across brain regions.

Network Construction: Create connectivity networks where nodes represent brain regions and edges represent significant correlations between neurotransmitter levels.

Data Analysis and Interpretation

- Interpret strong positive correlations as potential functional connectivity between regions

- Use PCA results to identify major dimensions of neurochemical organization

- Apply graph theory metrics to characterize network topology

- Relate interindividual variability in network patterns to behavioral or clinical variables
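
The matrix construction, correlation, and network steps of this protocol can be sketched as follows. This is a minimal illustration with NumPy and simulated data: the region labels, the number of subjects, and the fixed |r| threshold are all assumptions, and the threshold stands in for a proper significance test with multiple-comparison control.

```python
import numpy as np

rng = np.random.default_rng(1)
n_subjects = 40
regions = ["PFC", "striatum", "hippocampus", "cerebellum"]  # hypothetical labels

# Simulated concentrations: the first three regions share a common source of
# inter-individual variability, the fourth varies independently.
shared = rng.normal(size=n_subjects)
X = np.column_stack([
    shared + 0.3 * rng.normal(size=n_subjects),
    shared + 0.3 * rng.normal(size=n_subjects),
    shared + 0.3 * rng.normal(size=n_subjects),
    rng.normal(size=n_subjects),
])  # rows = subjects, columns = regions

# Pairwise Pearson correlations between regions across subjects.
R = np.corrcoef(X, rowvar=False)

# Assumed edge rule: keep pairs whose |r| reaches a fixed threshold.
threshold = 0.6
adjacency = (np.abs(R) >= threshold) & ~np.eye(len(regions), dtype=bool)

degree = adjacency.sum(axis=0)  # simple graph metric: number of edges per region
edges = [
    (regions[i], regions[j], round(float(R[i, j]), 2))
    for i in range(len(regions))
    for j in range(i + 1, len(regions))
    if adjacency[i, j]
]
```

The same correlation matrix `R` is also the natural input for a PCA of the regional covariance pattern, and `adjacency` can be passed to a graph library for richer topology metrics.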

Visualization Methods

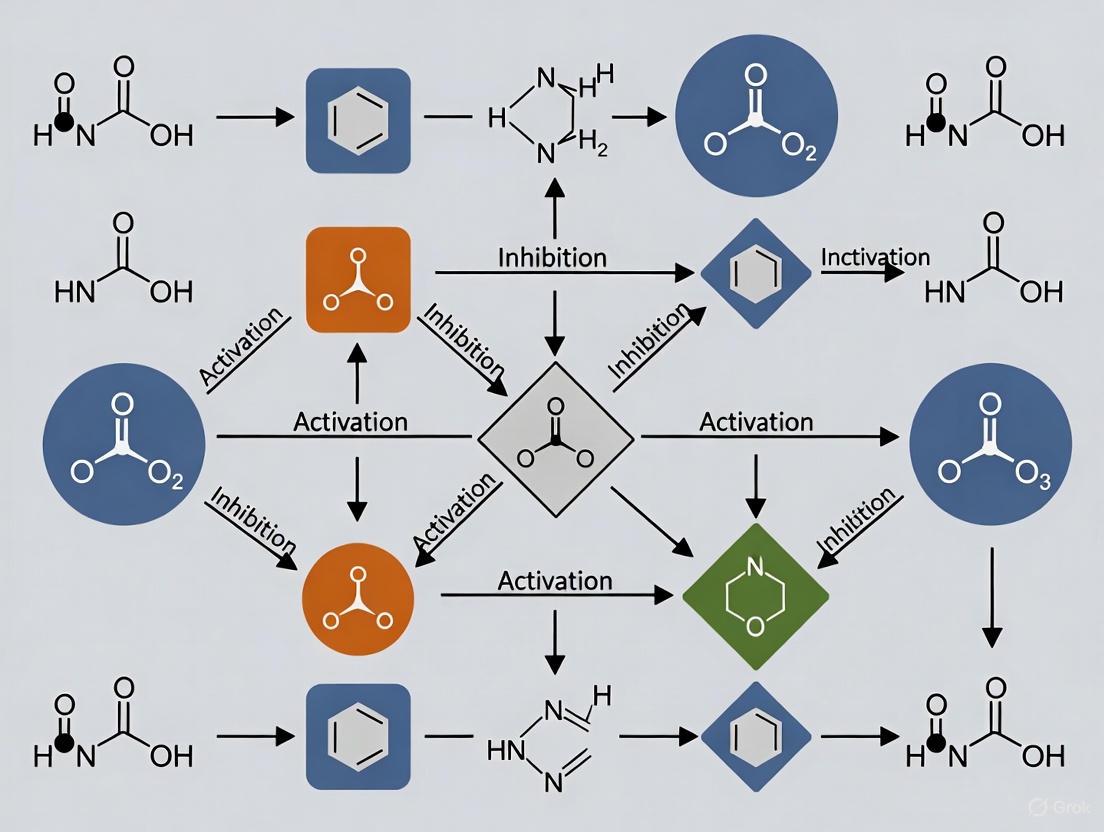

[Figure: Workflow Diagram: Multivariate analysis pipeline for neural data]

[Figure: Network Diagram: Information processing framework in neural systems]

Research Reagent Solutions

Table 2: Essential Research Reagents and Tools for Multivariate Neuroscience

| Reagent/Tool | Function | Application Notes |
|---|---|---|
| MINT Toolbox | Multivariate information theory analysis | MATLAB-based toolbox for quantifying information encoding and transmission in neural data [2] |
| MATLAB with Toolboxes | Computational environment | Requires Statistics and Machine Learning, Optimization, Parallel Computing, and Signal Processing Toolboxes [2] |
| 1H-MRS | In vivo metabolite quantification | Non-invasive technique measuring GABA, glutamate, choline, NAA, creatine, myo-inositol; useful for neurochemical studies [6] |
| Graphical Model Software | Network estimation and visualization | Implements Pairwise Markov Random Fields for conditional dependency networks [3] |
| Principal Components Analysis | Dimensionality reduction | Identifies major patterns of covariance in high-dimensional neural data [1] |
| Cross-Validation Tools | Model validation | Assesses generalizability of multivariate patterns to new datasets [1] |

Multivariate analysis represents a fundamental shift in neuroscience methodology, moving the field from studying isolated components to investigating complex networks of interactions. The protocols and applications outlined here provide researchers with practical frameworks for implementing these powerful approaches in their investigations of neural function. By embracing multivariate thinking and the analytical tools that support it, neuroscientists can address the fundamental challenge of understanding how interactions between neural elements give rise to cognition, behavior, and consciousness.

As multivariate methodologies continue to evolve, they promise to bridge gaps between different levels of neural organization—from molecular and neurochemical networks to large-scale brain systems. The integration of these approaches across scales and modalities will be essential for developing a comprehensive understanding of the brain in health and disease, ultimately advancing both basic neuroscience and therapeutic development for neurological and psychiatric disorders.

Multivariate analytical techniques are indispensable in modern neurochemical research, enabling scientists to distill complex, high-dimensional datasets into interpretable patterns and latent constructs. These methods are pivotal for identifying key biomarkers, understanding brain pathophysiology, and advancing therapeutic development. This document provides detailed application notes and experimental protocols for three core multivariate techniques—Principal Component Analysis (PCA), Factor Analysis, and Cluster Analysis—framed within the context of neurochemical and neuroimaging research.

The table below summarizes the primary applications and characteristics of each technique in neurochemical research.

Table 1: Core Multivariate Techniques in Neurochemical Research

| Technique | Primary Purpose | Key Neurochemical Applications | Underlying Model | Key Outputs |
|---|---|---|---|---|
| Principal Component Analysis (PCA) | Dimensionality reduction; identifying variables that contribute most to variance. | Identifying robust biomarkers of neurovascular coupling from multiple physiological parameters [7]. | Linear combinations of original variables (principal components) that are orthogonal. | Principal Components, Loadings, Variance Explained. |
| Factor Analysis | Identifying latent constructs that explain covariation among observed variables. | Deriving latent constructs of brain health from multimodal biomarkers (e.g., MRI, plasma, vascular risk factors) [8]. | Observed variables are linear functions of unobserved latent factors. | Latent Factors, Factor Loadings, Communalities. |
| Cluster Analysis | Grouping observations into subsets (clusters) with shared characteristics. | Discovering subtypes of stroke patients based on distinct neurochemical injury patterns [9]; identifying functional clusters of CNS drugs from brain activity maps [10]. | No formal model; groups data based on a defined measure of similarity or distance. | Cluster Assignments, Centroids, Dendrograms (for hierarchical). |

Experimental Protocols

Protocol for Principal Component Analysis (PCA) in Neurovascular Coupling Research

This protocol is adapted from a study using PCA to determine the most significant contributors to neurovascular coupling (NVC) responses across healthy and clinical populations [7].

1. Research Question and Objective: To reduce the dimensionality of a large NVC dataset and determine which physiological variables and cognitive tasks contribute the most variance to the cerebrovascular response.

2. Data Collection and Preprocessing:

- Participants: Recruit participant cohorts (e.g., Healthy Controls (HC), Alzheimer's Disease (AD), Mild Cognitive Impairment (MCI)) with appropriate ethical consent [7].

- Physiological Recording: Collect continuous data during cognitive tasks using:

  - Transcranial Doppler ultrasonography (TCD) for cerebral blood flow velocity (CBFv).

  - Beat-to-beat blood pressure (BP) monitoring.

  - Electrocardiogram (ECG) for heart rate (HR).

  - Capnography for end-tidal CO2 (ETCO2).

- Cognitive Tasks: Administer a battery of standardized cognitive tasks (e.g., from the Addenbrooke's Cognitive Examination-III) covering domains like attention, fluency, language, visuospatial, and memory.

- Parameter Extraction: From the recorded data, extract key NVC parameters for each task, such as:

  - Peak percentage change in CBFv from baseline.

  - Variance ratio (VR).

  - Cross-correlation function peak (CCF).

- Data Cleaning and Filtering: Remove non-physiological spikes via linear interpolation and apply appropriate filters (e.g., median filter, zero-phase Butterworth filter).

3. PCA Execution and Analysis:

- Software: Standard statistical software (e.g., R, Python with scikit-learn, MATLAB).

- Steps:

  - Structure Data: Organize data into a matrix where rows are observations (e.g., participant-task trials) and columns are variables (e.g., CBFv peak, VR, CCF, cognitive scores).

  - Standardization: Standardize all variables to a mean of 0 and standard deviation of 1 (z-normalization) to prevent dominance by variables with larger scales [11].

  - Perform PCA: Conduct PCA using singular value decomposition (SVD) on the standardized matrix.

  - Determine Significant Components: Retain principal components with eigenvalues ≥ 1 (Kaiser's criterion).

  - Rotation: Apply an orthogonal rotation (e.g., Equamax) to simplify the component structure and enhance interpretability [7].

  - Interpretation: Treat variables whose rotated loadings have an absolute value ≥ 0.4 as significant contributors to a component. Interpret the biological or clinical meaning of each component based on these high-loading variables.

4. Key Findings from Exemplar Study: PCA identified that the peak percentage change in CBFv and the visuospatial task consistently accounted for a large proportion of the variance across datasets, suggesting them as robust NVC markers [7].
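
The standardization, SVD, component-retention, and loading-threshold steps of this protocol can be sketched with NumPy as follows. The data are simulated (two hypothetical latent sources driving five variables), the variable names are illustrative, and the rotation step is omitted, so the loadings shown are unrotated. This is a sketch under those assumptions, not the pipeline of the exemplar study.

```python
import numpy as np

rng = np.random.default_rng(2)
n_obs = 200

# Simulated matrix: rows = observations, columns = 5 variables driven by 2 latent sources.
latent = rng.normal(size=(n_obs, 2))
mixing = np.array([[1.0, 0.0], [0.9, 0.0], [0.8, 0.1], [0.0, 1.0], [0.1, 0.9]])
X = latent @ mixing.T + 0.3 * rng.normal(size=(n_obs, 5))
X[:, 0] *= 100.0  # one variable on a much larger scale, to motivate standardization

# z-normalization: mean 0, standard deviation 1 per variable.
Z = (X - X.mean(axis=0)) / X.std(axis=0, ddof=1)

# PCA via SVD of the standardized matrix.
U, s, Vt = np.linalg.svd(Z, full_matrices=False)
eigenvalues = s ** 2 / (n_obs - 1)  # eigenvalues of the correlation matrix

# Retention rule from the protocol: eigenvalues >= 1.
keep = eigenvalues >= 1.0

# Unrotated loadings: correlations between variables and retained components.
loadings = Vt.T[:, keep] * np.sqrt(eigenvalues[keep])

# Flag variables whose absolute loading reaches 0.4 on each component.
significant = np.abs(loadings) >= 0.4
```

Because the variables are standardized, the eigenvalues sum to the number of variables, which is why an eigenvalue of 1 corresponds to a component that explains as much variance as a single original variable.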

Protocol for Factor Analysis in Multimodal Brain Health

This protocol is based on a study that used exploratory factor analysis to identify latent constructs of brain health from multimodal biomarkers [8].

1. Research Question and Objective: To identify the latent constructs underlying multiple neurovascular imaging markers, brain atrophy metrics, plasma AD biomarkers, and cardiovascular risk factors.

2. Data Collection:

- Cohort: Recruit a well-characterized cohort (e.g., the Brain and Cognitive Health (BACH) cohort, N=127, mean age 67) [8].

- Multimodal Biomarkers:

  - Neuroimaging: Acquire MRI markers including hippocampal volume, cortical thickness, fractional anisotropy (FA), cerebral blood flow (CBF), and enlarged perivascular spaces (ePVS) volume.

  - Biofluid: Collect fasted blood plasma and quantify biomarkers such as amyloid-beta 42/40 ratio, phosphorylated tau (pTau181, pTau217), glial fibrillary acidic protein (GFAP), and neurofilament light chain (NfL).

  - Clinical & Vascular: Record body mass index (BMI), cholesterol levels (HDL, LDL), and other cardiovascular risk factors.

3. Factor Analysis Execution:

- Software: R, Python, or specialized statistical software (e.g., SPSS, SAS).

- Steps:

  - Data Preparation: Check for missing data and perform necessary transformations. Correlate variables to ensure sufficient shared variance for factor analysis.

  - Factor Extraction: Use principal axis factoring or maximum likelihood estimation.

  - Determine Number of Factors: Combine parallel analysis, scree plot inspection, and the eigenvalue > 1 rule to decide how many factors to retain.

  - Factor Rotation: Apply an oblique rotation (e.g., Promax), which allows factors to be correlated, as is often biologically plausible.

  - Interpretation: Identify variables with high factor loadings (e.g., absolute value > 0.4) on each retained factor. Assign meaningful labels to the latent constructs based on these variables.

  - Compute Factor Scores: Calculate individual-level factor scores for use in subsequent association analyses (e.g., with age or cognition).

4. Key Findings from Exemplar Study: The analysis revealed five latent constructs: "Brain & Vascular Health," "Structural Integrity," "White Matter Fluid Dysregulation," "AD Biomarkers," and "Neuronal Injury." The "Brain & Vascular Health" factor was significantly associated with global cognition [8].
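
Of the steps above, parallel analysis is the one most easily misapplied, so a minimal sketch may help. The version below follows Horn's approach on correlation-matrix eigenvalues and assumes NumPy; the simulated dataset (127 subjects, six variables, two latent factors), the 95th-percentile cut-off, and all names are illustrative assumptions rather than details of the exemplar study.

```python
import numpy as np

def sorted_eigenvalues(data):
    """Eigenvalues of the correlation matrix, largest first."""
    return np.sort(np.linalg.eigvalsh(np.corrcoef(data, rowvar=False)))[::-1]

def parallel_analysis(X, n_sim=200, percentile=95, seed=0):
    """Count leading eigenvalues that exceed those of same-sized random noise."""
    rng = np.random.default_rng(seed)
    n, p = X.shape
    observed = sorted_eigenvalues(X)
    simulated = np.array([sorted_eigenvalues(rng.normal(size=(n, p))) for _ in range(n_sim)])
    reference = np.percentile(simulated, percentile, axis=0)
    n_factors = 0
    for obs, ref in zip(observed, reference):
        if obs <= ref:
            break  # stop at the first eigenvalue that noise alone could produce
        n_factors += 1
    return n_factors, observed, reference

# Simulated biomarker matrix: 127 subjects, 6 variables, 2 underlying factors.
rng = np.random.default_rng(4)
factors = rng.normal(size=(127, 2))
true_loadings = np.array([[0.8, 0.0], [0.8, 0.0], [0.8, 0.0],
                          [0.0, 0.8], [0.0, 0.8], [0.0, 0.8]])
X = factors @ true_loadings.T + 0.6 * rng.normal(size=(127, 6))

n_factors, observed, reference = parallel_analysis(X)
```

The retained count can then be passed to whichever extraction and rotation routine is used; comparing `observed` with `reference` on a scree plot gives the usual visual check.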

Protocol for Cluster Analysis in Neuropharmacology and Stroke Profiling

This protocol integrates methods from studies using cluster analysis on brain activity maps and stroke lesion patterns [9] [10].

1. Research Question and Objective: To identify distinct clusters or subtypes within a dataset, such as subgroups of stroke patients with unique neurochemical injury patterns or clusters of drugs with similar whole-brain activity maps.

2. Data Preparation and Feature Extraction:

- For Stroke Profiling [9]: For each patient, map the stroke lesion onto a neurotransmitter white matter atlas. Calculate "presynaptic" and "postsynaptic" disruption ratios for key neurotransmitter systems (acetylcholine, dopamine, noradrenaline, serotonin).

- For Neuropharmacology [10]: Treat larval zebrafish with clinical CNS drugs and record whole-brain activity maps (BAMs). Use a convolutional autoencoder for deep learning to extract latent features from the BAMs that represent the drug's effect on brain physiology.

3. Cluster Analysis Execution:

- Software: R, Python (with scikit-learn), or MATLAB.

- Steps:

  - Assess Cluster Tendency: Use statistics like the Hopkins statistic to confirm that the data is clusterable.

  - Select Clustering Algorithm:

  - Determine Optimal Number of Clusters (for K-means): Use the elbow method (looking for a bend in the plot of within-cluster variance vs. k) or optimize the silhouette coefficient, which measures how well each object lies within its cluster [10].

  - Run Clustering Algorithm: Execute the chosen algorithm (e.g., K-means with the determined k) on the feature data (e.g., pre/postsynaptic ratios or deep learning features).

  - Validate and Interpret Clusters: Analyze the clinical, behavioral, or anatomical patterns of the identified clusters to define their real-world meaning [9].

4. Key Findings from Exemplar Studies: K-means clustering applied to stroke neurotransmitter profiles revealed eight distinct clusters with different neurochemical patterns of injury [9]. In neuropharmacology, clustering of deep learning features from BAMs identified functional clusters of CNS drugs that predicted therapeutic potential [10].
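
Choosing k by the silhouette coefficient, as described in step 3, can be sketched with a small NumPy-only implementation. This is an illustrative stand-in for a library routine, not the code used in the cited studies: the farthest-point initialization, the simulated two-dimensional features, and all function names are assumptions.

```python
import numpy as np

def farthest_first_init(X, k, rng):
    """One random starting point, then repeatedly the point farthest from those chosen."""
    centroids = [X[rng.integers(len(X))]]
    for _ in range(k - 1):
        nearest = np.min([np.linalg.norm(X - c, axis=1) for c in centroids], axis=0)
        centroids.append(X[nearest.argmax()])
    return np.array(centroids)

def kmeans(X, k, n_iter=100, seed=0):
    """Lloyd's algorithm; returns a cluster label for every row of X."""
    rng = np.random.default_rng(seed)
    centroids = farthest_first_init(X, k, rng)
    for _ in range(n_iter):
        dist = np.linalg.norm(X[:, None, :] - centroids[None, :, :], axis=2)
        labels = dist.argmin(axis=1)
        updated = np.array([
            X[labels == j].mean(axis=0) if np.any(labels == j) else centroids[j]
            for j in range(k)
        ])
        if np.allclose(updated, centroids):
            break
        centroids = updated
    return labels

def mean_silhouette(X, labels):
    """Average of (b - a) / max(a, b) over all points; singletons score 0."""
    dist = np.linalg.norm(X[:, None, :] - X[None, :, :], axis=2)
    clusters = np.unique(labels)
    scores = np.zeros(len(X))
    for i in range(len(X)):
        own = labels == labels[i]
        if own.sum() == 1:
            continue
        a = dist[i, own].sum() / (own.sum() - 1)  # mean distance within own cluster
        b = min(dist[i, labels == c].mean() for c in clusters if c != labels[i])
        scores[i] = (b - a) / max(a, b)
    return float(scores.mean())

# Simulated features: three well-separated groups of 30 observations each.
rng = np.random.default_rng(3)
centers = np.array([[0.0, 0.0], [6.0, 0.0], [3.0, 5.0]])
X = np.vstack([c + 0.5 * rng.normal(size=(30, 2)) for c in centers])

silhouettes = {k: mean_silhouette(X, kmeans(X, k)) for k in range(2, 6)}
best_k = max(silhouettes, key=silhouettes.get)
```

On real neurochemical features the silhouette curve is rarely this clean, so it is usually read alongside the elbow plot and the interpretability of the resulting clusters rather than used as the sole criterion.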

Visualization of Workflows

The following diagrams illustrate the logical flow and key decision points for each multivariate technique.

[Figure: PCA Workflow for Neurovascular Data]

[Figure: Factor Analysis for Multimodal Biomarkers]

[Figure: Cluster Analysis for Patient/Compound Subtyping]

The Scientist's Toolkit: Essential Research Reagents and Materials

The following table details key reagents and computational tools used in the featured multivariate analyses of neurochemical data.

Table 2: Essential Research Reagents and Computational Tools

| Item Name | Function/Application | Specific Example from Research |
|---|---|---|
| Transcranial Doppler (TCD) | Non-invasive measurement of cerebral blood flow velocity (CBFv) in major cerebral arteries for NVC studies. | Used to record CBFv during cognitive tasks as a key input variable for PCA [7]. |
| Arterial Spin Labeling (ASL) MRI | MRI technique to quantify cerebral blood flow (CBF) without exogenous contrast agents. | Provided a neurovascular imaging marker for inclusion in factor analysis of brain health [8]. |
| SIMOA HD-X Analyzer | Ultra-sensitive digital immunoassay platform for quantifying low-abundance plasma biomarkers. | Used to measure plasma biomarkers like GFAP, NfL, Aβ40, Aβ42, pTau181, and pTau217 [8]. |
| Restriction Spectrum Imaging (RSI) | Advanced multi-shell diffusion MRI model that differentiates intracellular and extracellular tissue compartments. | Provided sensitive microstructural metrics (e.g., restricted diffusion) for brain-behavior mapping [13]. |
| Larval Zebrafish Model | Vertebrate model for high-throughput in vivo neuropharmacological screening and whole-brain activity mapping. | Used to generate Brain Activity Maps (BAMs) for clustering analysis of CNS drug effects [10]. |
| Canonical Polyadic (CP) Decomposition | A tensor factorization method for decomposing multi-way data into unique, interpretable components. | Applied to multi-subject MEG data to extract latent spatiotemporal components of brain activity [12]. |
| Convolutional Autoencoder | A deep learning architecture for unsupervised feature learning from image data, such as brain activity maps. | Used to extract latent phenotypic features from whole-brain activity maps for subsequent clustering [10]. |

In the analysis of complex neurobiological systems, traditional univariate methods, which examine variables in isolation, often fall short. Multivariate analysis provides a powerful framework that leverages the inherent correlations within data to uncover patterns, interactions, and system-level properties that univariate approaches inevitably miss [14]. This is particularly critical in neuroscience, where function emerges from the dynamic, multi-scale interactions between numerous components, from molecules and cells to entire brain networks. This Application Note details the core advantages of multivariate techniques, provides executable protocols for their implementation in neurochemical research, and demonstrates their application through case studies relevant to drug discovery.

Core Advantages: Multivariate vs. Univariate Analysis

Univariate analyses summarize or test hypotheses about a single variable at a time. While useful for simple comparisons, this approach ignores correlations between variables, which can lead to an incomplete or misleading understanding of the system under investigation [14]. Multivariate methods analyze multiple variables simultaneously, offering two key classes of advantages.

- Enhanced Statistical Power and Detection Sensitivity: By considering the joint variation of multiple correlated measures, multivariate methods can detect subtle but consistent system-wide changes that are statistically insignificant when each variable is tested alone. This increases the ability to distinguish between experimental groups, such as healthy versus diseased states or treatment versus control.

- Deeper System-Level Insights: Multivariate methods are inherently suited for characterizing the structure and dynamics of complex systems. They move beyond asking "is this single measurement different?" to answer questions like "how is the entire network organized?" and "how do its components interact?".
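
A small worked example shows why joint analysis can be more sensitive than separate tests. It assumes two standardized measures with a hypothetical pooled correlation of 0.9 and a group difference of half a standard deviation in opposite directions, and compares the per-variable standardized differences with the Mahalanobis distance between group means. The numbers are illustrative, not taken from any cited study.

```python
import numpy as np

# Hypothetical pooled covariance of two standardized neurochemical measures (r = 0.9).
cov = np.array([[1.0, 0.9],
                [0.9, 1.0]])

# Hypothetical group mean difference in SD units: one measure rises, the other falls.
delta = np.array([0.5, -0.5])

# Univariate view: standardized difference for each measure taken alone.
univariate_d = np.abs(delta) / np.sqrt(np.diag(cov))

# Multivariate view: Mahalanobis distance, which accounts for the correlation.
mahalanobis_d = float(np.sqrt(delta @ np.linalg.solve(cov, delta)))
```

Each measure alone differs by 0.5 standard deviations, yet the joint separation is about 2.24, because the shift runs against the direction in which the two measures normally co-vary. A shift along the correlation direction would show the opposite pattern, so the gain depends on how the effect aligns with the covariance structure.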

The table below summarizes the fundamental differences in outcomes between the two approaches.

Table 1: Comparative Outcomes of Univariate and Multivariate Analysis

| Analysis Aspect | Univariate Approach | Multivariate Approach |
|---|---|---|
| Correlation Structure | Ignored; analyzed separately for each variable pair. | Incorporated directly into the model; reveals conditional dependencies [3]. |
| System-Wide Changes | May miss subtle, distributed effects. | Detects emergent patterns from combined small changes across multiple variables [14]. |
| Network Insights | Limited to properties of individual nodes. | Reveals global topology (e.g., modularity) and node roles (e.g., hubs) within a network [15] [16]. |
| Data Representation | Multiple individual tests and p-values. | Single model providing a unified view of the data structure. |

Experimental Protocols

The following protocols provide detailed methodologies for applying multivariate analysis to two common scenarios in neurochemical and network neuroscience research.

Protocol 1: Multivariate Workflow for Microelectrode Array (MEA) Biosensor Data Analysis

This protocol outlines a machine learning workflow to detect and characterize drug-induced changes in neuronal network activity, moving beyond simple spike-rate comparisons [16].

1. Research Question and Node Selection: Define the experimental question (e.g., "How does compound X alter functional connectivity in a cortical neuronal network?"). Nodes are predefined by the MEA setup (typically 64 electrodes).

2. Data Acquisition and Preprocessing:

- Culture Preparation: Plate dissociated cortical neurons (e.g., from E19 Wistar rats) onto polyethyleneimine (PEI)-coated MEA dishes. Maintain in glial-conditioned medium, replacing half the medium every third day [16].

- Recording: Record spontaneous extracellular activity from mature networks (e.g., 21-54 days in vitro). Use a sampling frequency of 25 kHz with band-pass filtering between 100-2000 Hz.

- Spike Detection: Manually exclude noisy electrodes. Remove electrical artifacts by zeroing signal portions around large positive peaks. Perform spike detection by setting a negative threshold for each electrode (e.g., -5 × standard deviation of the artifact-free signal) to generate spike timestamps [16].

3. Feature Engineering and Network Construction:

- Time Series Binning: Convert spike timestamps into sequential spike counts using a defined bin size (e.g., 10 ms).

- Segmentation: Divide the binned data into overlapping or non-overlapping windows (e.g., 60 s) for dynamic analysis.

- Connectivity Matrix Estimation: For each time window, calculate a functional connectivity matrix. Use correlation methods (e.g., Pearson correlation) or conditional association measures (e.g., partial correlation) between the spike trains of all electrode pairs [16] [3].
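The binning, segmentation, and connectivity steps above can be sketched in a few lines of NumPy. This is a minimal illustration, not the pipeline of [16]: the function names `bin_spikes` and `windowed_connectivity` are hypothetical, windows are non-overlapping, and only Pearson correlation is shown.

```python
import numpy as np

def bin_spikes(spike_times_s, duration_s, bin_size_s=0.010):
    """Convert spike timestamps (seconds) into spike counts per time bin."""
    n_bins = int(round(duration_s / bin_size_s))
    edges = np.linspace(0.0, duration_s, n_bins + 1)
    counts, _ = np.histogram(spike_times_s, bins=edges)
    return counts

def windowed_connectivity(binned, bins_per_window):
    """Pearson correlation matrix for each non-overlapping window.

    binned: array of shape (n_electrodes, n_bins).
    Returns an array of shape (n_windows, n_electrodes, n_electrodes).
    """
    n_elec, n_bins = binned.shape
    n_windows = n_bins // bins_per_window
    mats = []
    for w in range(n_windows):
        seg = binned[:, w * bins_per_window:(w + 1) * bins_per_window]
        with np.errstate(invalid="ignore", divide="ignore"):
            corr = np.corrcoef(seg)
        corr = np.nan_to_num(corr, nan=0.0)  # silent electrodes yield NaN rows
        np.fill_diagonal(corr, 1.0)
        mats.append(corr)
    return np.array(mats)

# Synthetic example: 4 electrodes, 120 s of data, 10 ms bins, 60 s windows.
rng = np.random.default_rng(0)
binned_demo = rng.poisson(1.0, size=(4, 12000))
fc_demo = windowed_connectivity(binned_demo, bins_per_window=6000)
print(fc_demo.shape)
```

Partial correlation, the conditional measure mentioned above, could replace `np.corrcoef` here by inverting each window's covariance matrix, provided the window contains enough bins for a stable estimate.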

4. Multivariate Feature Extraction: Calculate a set of features from each connectivity matrix to describe the network's state. Table 2 lists the reagents and resources used in this protocol; the features to extract are listed after it.

Table 2: Research Reagent Solutions for MEA Network Analysis

| Reagent/Resource | Function in the Protocol |

|---|---|

| Dissociated Cortical Neurons | Primary biological unit for generating spontaneous and evoked network activity. |

| Polyethyleneimine (PEI) | Coating substance to promote neuronal adhesion to the MEA dish surface. |

| Microelectrode Array (MEA) Chip | Biosensor with 64 integrated electrodes for non-invasive, long-term recording of extracellular action potentials. |

| Artificial Cerebrospinal Fluid (aCSF) | Ionic solution for perfusing cultures during recording to maintain physiological pH and ion concentrations. |

| Bicuculline (BIC) | GABA_A receptor antagonist; pharmacological positive control for inducing network hypersynchrony (epileptiform activity). |

Extracted features should include:

- Complex Network Measures: Calculate metrics from graph theory, such as:

- Modularity: The extent to which the network is organized into distinct functional subgroups [15].

- Characteristic Path Length: The average shortest path between all node pairs, indicating network integration efficiency.

- Clustering Coefficient: The degree to which nodes tend to cluster together.

- Synchrony Measures: Compute global synchrony metrics (e.g., spike-rate synchrony) for reference [16].
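As an illustration of the graph measures listed above, the following sketch computes modularity, mean clustering coefficient, and characteristic path length from a binary adjacency matrix. It assumes the connectivity matrix has already been thresholded into an undirected graph and that a module assignment is available; both choices are assumptions of this example and are not specified in the protocol.

```python
import numpy as np

def modularity(adj, labels):
    """Newman modularity Q of a given partition of an undirected graph."""
    adj = np.asarray(adj, dtype=float)
    labels = np.asarray(labels)
    degree = adj.sum(axis=1)
    two_m = degree.sum()
    same_module = labels[:, None] == labels[None, :]
    expected = np.outer(degree, degree) / two_m
    return float(((adj - expected) * same_module).sum() / two_m)

def mean_clustering_coefficient(adj):
    """Average local clustering coefficient of a binary undirected graph."""
    adj = np.asarray(adj, dtype=float)
    degree = adj.sum(axis=1)
    triangles = np.diag(adj @ adj @ adj) / 2.0  # triangles through each node
    possible = degree * (degree - 1) / 2.0
    local = np.divide(triangles, possible,
                      out=np.zeros_like(triangles), where=possible > 0)
    return float(local.mean())

def characteristic_path_length(adj):
    """Mean shortest path length over all connected node pairs (BFS)."""
    adj = np.asarray(adj) > 0
    n = adj.shape[0]
    total, pairs = 0, 0
    for src in range(n):
        dist = np.full(n, -1)
        dist[src] = 0
        frontier = [src]
        while frontier:
            nxt = []
            for u in frontier:
                for v in np.flatnonzero(adj[u]):
                    if dist[v] < 0:
                        dist[v] = dist[u] + 1
                        nxt.append(v)
            frontier = nxt
        reached = dist > 0
        total += int(dist[reached].sum())
        pairs += int(reached.sum())
    return total / pairs if pairs else float("inf")

# Toy graph: two disconnected triangles, one module per triangle.
tri = np.ones((3, 3)) - np.eye(3)
two_triangles = np.block([[tri, np.zeros((3, 3))], [np.zeros((3, 3)), tri]])
print(modularity(two_triangles, [0, 0, 0, 1, 1, 1]))
```

In practice the partition itself would come from a community detection step; here it is supplied by hand to keep the example short.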

5. Machine Learning and Interpretation:

- Classification: Train a machine learning model (e.g., Random Forest, Support Vector Machine) using the extracted features to classify network states (e.g., control vs. drug-treated).

- Model Interpretation: Use interpretability frameworks like SHapley Additive exPlanations (SHAP) to rank the importance of each feature in the classification. This translates model output into biologically meaningful insights (e.g., revealing that a drug's primary effect is a reduction in network modularity and complexity) [16].

The following diagram illustrates the core computational workflow of this protocol.

Protocol 2: Community Detection for Functional Brain Networks using fMRI

This protocol describes a multivariate community detection algorithm that identifies brain modules (networks) based on maximizing information redundancy, moving beyond standard pairwise correlation methods to account for higher-order interactions [15].

1. Research Question and Node Selection: Define the brain system of interest (e.g., the transmodal cortex). Nodes are typically brain regions defined by an atlas (e.g., a 200-region cortical parcellation [15]).

2. Data Acquisition and Preprocessing:

- fMRI Acquisition: Acquire resting-state functional MRI (fMRI) data (e.g., from the Human Connectome Project or similar datasets).

- Time Series Extraction: Preprocess data (motion correction, filtering) and extract BOLD time series for each of the N brain regions.

- Covariance Matrix Estimation: Calculate the N × N functional connectivity (FC) matrix, typically using covariance or correlation between the time series of all region pairs.

3. Multivariate Interaction Modeling via Total Correlation:

- The algorithm groups brain regions into modules such that the information shared among regions within a module (their redundancy) is maximized.

- Total Correlation (TC): The multivariate generalization of mutual information is used as the measure of redundancy within a set of regions [15].

- Quality Function: A "total correlation score" (TC_score) is defined. For a given partition of the brain into modules, it quantifies how much the TC within each module exceeds the TC expected by chance for a random group of regions of the same size.
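For intuition, total correlation has a closed form when the regional time series are treated as jointly Gaussian: TC = -½ ln det(R), where R is the correlation matrix of the selected regions. The sketch below uses that Gaussian assumption, which is a simplification made for this example and not necessarily the estimator used in [15]; the function name is hypothetical.

```python
import numpy as np

def gaussian_total_correlation(ts, regions):
    """Total correlation (in nats) of the selected regions, Gaussian assumption.

    ts: array of shape (n_timepoints, n_regions).
    regions: list of column indices forming the candidate module.
    """
    corr = np.atleast_2d(np.corrcoef(ts[:, regions], rowvar=False))
    _, logdet = np.linalg.slogdet(corr)
    return -0.5 * logdet

# Synthetic example: regions 0 and 1 share a common signal, region 2 does not.
rng = np.random.default_rng(0)
shared = rng.normal(size=500)
ts_demo = np.column_stack([
    shared + 0.5 * rng.normal(size=500),
    shared + 0.5 * rng.normal(size=500),
    rng.normal(size=500),
])
print(gaussian_total_correlation(ts_demo, [0, 1]))
print(gaussian_total_correlation(ts_demo, [0, 2]))
```

For two regions this quantity reduces to the Gaussian mutual information, -½ ln(1 - r²), which is why TC is described as the multivariate generalization of mutual information.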

4. Optimization via Simulated Annealing:

- Initialization: Start with a random partition of the brain regions into M modules.

- Iterative Refinement: Randomly reassign a node to a different module and recalculate the TC_score.

- Simulated Annealing: Accept the new partition if it improves the score, or with a certain probability if it does not (to avoid local optima). Repeat for many iterations (e.g., 100,000) to find the partition that maximizes the TC_score [15].
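The optimization loop can be sketched generically as follows. The acceptance rule is the standard Metropolis criterion with a geometric cooling schedule. The schedule, the parameter values, and the toy scoring function (which stands in for the TC_score) are all assumptions of this example, not details taken from [15].

```python
import math
import random

def anneal(score_fn, labels, n_modules, n_iter=2000, t0=1.0, cooling=0.995, seed=0):
    """Maximize score_fn over module assignments by simulated annealing."""
    rng = random.Random(seed)
    current = list(labels)
    current_score = score_fn(current)
    best, best_score = list(current), current_score
    temp = t0
    for _ in range(n_iter):
        node = rng.randrange(len(current))
        new_label = rng.randrange(n_modules)
        if new_label == current[node]:
            continue  # not a real move; try another proposal
        candidate = list(current)
        candidate[node] = new_label
        cand_score = score_fn(candidate)
        delta = cand_score - current_score
        # Always accept improvements; accept worse moves with prob exp(delta / T).
        if delta >= 0 or rng.random() < math.exp(delta / temp):
            current, current_score = candidate, cand_score
            if current_score > best_score:
                best, best_score = list(current), current_score
        temp *= cooling
    return best, best_score

# Toy stand-in for the TC_score: reward co-assigning nodes of the same planted group.
planted = [0, 0, 0, 1, 1, 1]

def toy_score(labels):
    return sum(1 if planted[i] == planted[j] else -1
               for i in range(len(labels))
               for j in range(i + 1, len(labels))
               if labels[i] == labels[j])

partition, score = anneal(toy_score, [0, 1, 0, 1, 0, 1], n_modules=2)
print(partition, score)
```

Tracking the best partition seen so far, as done here, is a common safeguard because the final state of an annealing run is not guaranteed to be the best one visited.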

5. Analysis and Interpretation:

- Characterize Modules: Compare the identified redundancy-dominated modules to canonical functional systems (e.g., visual, somatomotor, default mode networks).

- Topological Specialization: Classify brain regions based on their contribution to within-module redundancy versus between-module synergy, providing a new axis for understanding regional function [15].

The logic of this advanced community detection method is summarized below.

Case Study & Data Presentation

Case: Distinguishing Network States with Bicuculline

A study applied the MEA workflow (Protocol 1) to cortical networks treated with bicuculline (BIC), a GABA_A receptor antagonist. While univariate tests might show an increase in simple spike rate, the multivariate ML model, fed with complex network features, achieved high classification accuracy (AUC up to 90%) for discriminating control from BIC-treated networks [16].

Table 3: Quantitative Results from Bicuculline Case Study [16]

| Metric | Control State | Bicuculline State | Implication |

|---|---|---|---|

| Classification AUC | -- | -- | Model accurately distinguishes states based on multivariate features. |

| Key SHAP Features | Higher Network Complexity & Segregation | Reduced Complexity & Segregation | BIC induces a shift to a hyper-synchronized, less flexible network state. |

| Modularity | Higher | Lower | Loss of fine-scale functional organization, a hallmark of epileptiform activity. |

| Synchrony | Lower | Higher | Confirmation of expected univariate effect, but placed in a broader context. |

The SHAP value analysis demonstrated that the most important features for the model's decision were reductions in network complexity and segregation, hallmarks of the epileptiform state induced by BIC. This provides a nuanced, systems-level characterization of the drug's effect that goes beyond the known increase in synchrony [16].

Case: Revealing Multivariate Interactions in Metabolism

Research on autism spectrum disorder (ASD) compared univariate and multivariate analysis of metabolites from the folate-dependent one-carbon metabolism (FOCM) and transsulfuration (TS) pathways. Univariate analysis of individual metabolites like S-adenosylmethionine (SAM) and S-adenosylhomocysteine (SAH) showed inconsistent results. In contrast, a multivariate Fisher Discriminant Analysis (FDA) model that incorporated the correlations between all metabolites successfully separated ASD and neurotypical (NT) cohorts, demonstrating superior classification power by capturing the system's state [14].
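A small synthetic example shows why a discriminant that uses correlations can separate groups that individual variables barely distinguish. The data below are simulated (two strongly correlated variables with small opposite mean shifts) and are not the metabolite measurements of [14]; the function names are hypothetical.

```python
import numpy as np

def fisher_direction(X0, X1):
    """Fisher discriminant direction w = Sw^{-1} (mean1 - mean0) for two groups."""
    mean_diff = X1.mean(axis=0) - X0.mean(axis=0)
    n0, n1 = len(X0), len(X1)
    # Pooled within-group covariance matrix.
    Sw = (np.cov(X0, rowvar=False) * (n0 - 1)
          + np.cov(X1, rowvar=False) * (n1 - 1)) / (n0 + n1 - 2)
    return np.linalg.solve(Sw, mean_diff)

def d_prime(a, b):
    """Standardized separation between two one-dimensional samples."""
    pooled_sd = np.sqrt(0.5 * (a.var(ddof=1) + b.var(ddof=1)))
    return abs(a.mean() - b.mean()) / pooled_sd

rng = np.random.default_rng(0)
cov = [[1.0, 0.9], [0.9, 1.0]]
group0 = rng.multivariate_normal([0.0, 0.0], cov, size=200)
group1 = rng.multivariate_normal([0.5, -0.5], cov, size=200)

w = fisher_direction(group0, group1)
univariate = [d_prime(group0[:, j], group1[:, j]) for j in range(2)]
multivariate = d_prime(group0 @ w, group1 @ w)
print(univariate, multivariate)
```

Because the two variables move together within each group but shift in opposite directions between groups, the discriminant direction cancels the shared variance and exposes the group difference.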

Application Note: Multivariate Analysis of fMRI Data for Psychiatric Classification

Background and Rationale

The complexity of psychiatric illnesses necessitates analytical approaches that can integrate multiple dimensions of neurochemical and functional data. Univariate analyses, which examine one variable at a time, are insufficient for capturing the network-based interactions that characterize brain disorders [17]. Multivariate analysis (MVA) techniques overcome this limitation by evaluating correlation and covariance across brain regions simultaneously, providing greater statistical power and better representation of neural network dynamics [1]. This application note details a protocol for applying multivariate approaches to classify psychiatric disorders based on integrated fMRI metrics, demonstrating a practical implementation from a recent proof-of-concept study [18].

Experimental Protocol: Integrated fMRI Analysis Using i-ECO

Objective: To distinguish between neurotypical individuals and patients with schizophrenia, bipolar disorder, or ADHD using an integrated fMRI analysis approach.

Summary of Workflow: The following diagram illustrates the core data integration and classification process.

Detailed Methodology:

Participant Population & Data Acquisition:

- Acquire data from 130 neurotypical controls, 50 participants with schizophrenia, 49 with bipolar disorder, and 43 with ADHD. Diagnoses should be confirmed using structured clinical interviews (e.g., SCID-I for DSM-IV TR criteria) [18].

- Perform fMRI scanning using a standardized protocol. The dataset from the UCLA Consortium for Neuropsychiatric Phenomics can serve as a reference or source of open data [18].

fMRI Data Preprocessing (using AFNI software):

- Remove the first 4 frames of each fMRI run to discard transient magnetization effects [18].

- Apply slice timing correction and despike methods to reduce noise [18].

- Co-register structural and functional images and warp to standard stereotactic space (e.g., MNI152 template) [18].

- Apply spatial blurring with a 6 mm full width at half maximum kernel and bandpass filtering (0.01–0.1 Hz) [18].

- Control for non-neural noise using regression based on 6 rigid body motion parameters and their derivatives, as well as mean time series from eroded cerebrospinal fluid masks [18].

- Exclude subjects with excessive motion (>2 mm of motion and/or >20% of timepoints above a framewise displacement of 0.5 mm) [18].
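The motion exclusion rule in the last step is easy to encode explicitly. The sketch below treats the criterion as a logical OR of the two conditions; the function and argument names are illustrative and not taken from AFNI.

```python
import numpy as np

def exclude_for_motion(max_displacement_mm, framewise_displacement_mm,
                       max_motion_mm=2.0, fd_threshold_mm=0.5, max_fraction=0.20):
    """Return True if a subject exceeds either motion criterion."""
    fd = np.asarray(framewise_displacement_mm, dtype=float)
    fraction_high = np.mean(fd > fd_threshold_mm)  # share of high-motion frames
    return bool(max_displacement_mm > max_motion_mm or fraction_high > max_fraction)

# 3 of 10 frames exceed 0.5 mm, so this subject would be excluded.
print(exclude_for_motion(1.0, [0.6] * 3 + [0.1] * 7))
```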

Feature Calculation and Dimensionality Reduction:

- Regional Homogeneity (ReHo): Calculate the Kendall’s Coefficient of Concordance (KCC) to measure the similarity of the time series of a given voxel to its nearest 26 voxels. Normalize the KCC for each voxel using Fisher z-transformation [18].

- Eigenvector Centrality Mapping (ECM): Calculate ECM using the Fast Eigenvector Centrality method to measure network centrality, ensuring sensitivity to both cortical and subcortical regions [18].

- Fractional Amplitude of Low-Frequency Fluctuations (fALFF): Calculate using FATCAT functionalities. Transform the bandpassed time series into a periodogram using a Fast Fourier Transform (FFT) to estimate the power spectrum in the low-frequency range [18].

- For each participant, summarize individual variations by averaging the voxel-wise values per Region of Interest (ROI) [18].
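To make the fALFF and ROI-averaging steps concrete, the sketch below computes the ratio of low-frequency spectral amplitude to total amplitude for a single time series, then averages voxel values within atlas labels. It is a simplified NumPy illustration, not the FATCAT implementation. It assumes the input still contains frequencies outside the 0.01–0.1 Hz band (otherwise the ratio is trivially close to 1) and that atlas label 0 marks background.

```python
import numpy as np

def falff(ts, tr_s, band=(0.01, 0.1)):
    """Fraction of spectral amplitude falling inside the low-frequency band."""
    ts = np.asarray(ts, dtype=float)
    ts = ts - ts.mean()
    freqs = np.fft.rfftfreq(ts.size, d=tr_s)
    amplitude = np.abs(np.fft.rfft(ts))
    in_band = (freqs >= band[0]) & (freqs <= band[1])
    total = amplitude[1:].sum()  # skip the zero-frequency (DC) term
    return float(amplitude[in_band].sum() / total) if total > 0 else 0.0

def roi_means(values, roi_labels):
    """Average voxel-wise values within each non-zero atlas label."""
    values = np.asarray(values, dtype=float)
    roi_labels = np.asarray(roi_labels)
    return {int(r): float(values[roi_labels == r].mean())
            for r in np.unique(roi_labels) if r != 0}

# 200 volumes at TR = 2 s: a 0.05 Hz oscillation lies inside the band.
t = np.arange(200) * 2.0
print(falff(np.sin(2 * np.pi * 0.05 * t), tr_s=2.0))
print(roi_means([1.0, 3.0, 5.0, 7.0], [0, 1, 1, 2]))
```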

Data Integration and Visualization (i-ECO):

- Integrate the three fMRI-derived metrics (ReHo, ECM, fALFF) using an additive color method (RGB) [18].

- Assign each metric to a color channel (e.g., ReHo to Red, ECM to Green, fALFF to Blue) to generate composite color-coded maps for each diagnostic group.
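The channel assignment can be illustrated with a short NumPy sketch. Min-max rescaling of each metric to [0, 1] is an assumption of this example; the normalization used in [18] may differ.

```python
import numpy as np

def to_rgb(reho, ecm, falff_map):
    """Stack three ROI-level metrics into an RGB array (last axis = R, G, B)."""
    def rescale(x):
        x = np.asarray(x, dtype=float)
        span = x.max() - x.min()
        return (x - x.min()) / span if span > 0 else np.zeros_like(x)
    return np.stack([rescale(reho), rescale(ecm), rescale(falff_map)], axis=-1)

# Example with 5 ROIs per metric; each row is one ROI's color.
rng = np.random.default_rng(0)
rgb_demo = to_rgb(rng.normal(size=5), rng.normal(size=5), rng.normal(size=5))
print(rgb_demo.shape)
```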

Multivariate Classification:

- Use a Convolutional Neural Network (CNN) to classify the integrated color-coded maps.

- Employ an 80/20 split for training and testing the model.

- Evaluate model performance using precision-recall Area Under the Curve (PR-AUC) [18].
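The evaluation step can be sketched without a deep learning framework. The example below shows an 80/20 index split and a PR-AUC estimate computed as average precision, a common summary of the precision-recall curve. The CNN itself is omitted, tied scores are not specially handled, and the function names are hypothetical.

```python
import numpy as np

def train_test_indices(n, test_fraction=0.2, seed=0):
    """Shuffle sample indices and split them into training and test sets."""
    rng = np.random.default_rng(seed)
    perm = rng.permutation(n)
    n_test = int(round(n * test_fraction))
    return perm[n_test:], perm[:n_test]

def average_precision(y_true, scores):
    """Mean of the precision values at the rank of each positive sample."""
    y_true = np.asarray(y_true)
    order = np.argsort(-np.asarray(scores, dtype=float), kind="stable")
    hits = y_true[order] == 1
    if hits.sum() == 0:
        return 0.0
    precision_at_k = np.cumsum(hits) / np.arange(1, hits.size + 1)
    return float(precision_at_k[hits].mean())

train_idx, test_idx = train_test_indices(100)
print(len(train_idx), len(test_idx))
print(average_precision([1, 0, 1, 0], [0.9, 0.8, 0.7, 0.6]))
```

With imbalanced diagnostic groups, a stratified split that preserves group proportions would usually be preferable to the simple shuffle shown here.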

Key Quantitative Results from Validation:

Table 1: Performance Metrics of the i-ECO Method in Psychiatric Classification

| Diagnostic Group | Sample Size (Pre-exclusion) | Excluded for Motion/Technical Issues | Classification PR-AUC |

|---|---|---|---|

| Neurotypical Controls | 130 | 11 | >84.5% |

| Schizophrenia | 50 | 18 | >84.5% |

| Bipolar Disorder | 49 | 11 | >84.5% |

| ADHD | 43 | 4 | >84.5% |

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Reagents and Resources for Integrated fMRI Analysis

| Item | Function/Description | Example/Reference |

|---|---|---|

| AFNI Software | A comprehensive software suite for fMRI data preprocessing and analysis, including ReHo, fALFF, and ECM calculations. | https://afni.nimh.nih.gov [18] |

| UCLA CNP Dataset | An open-source neuroimaging dataset including patients with schizophrenia, bipolar disorder, ADHD, and neurotypical controls. | UCLA Consortium for Neuropsychiatric Phenomics [18] |

| Standard Brain Atlas | Used for anatomical reference and Region of Interest (ROI) definition during spatial normalization and averaging. | MNI152 Template [18] |

| Python with Scikit-learn/TensorFlow | Programming environment for implementing multivariate classification algorithms, including Convolutional Neural Networks (CNNs). | Python.org |

| High-Performance Computing (HPC) Cluster | Essential for storing and processing large fMRI datasets and running computationally intensive CNN models. | Amazon Web Services (AWS), local HPC resources [19] |

Application Note: Mapping Neurochemical Diaschisis in Stroke

Background and Rationale

Stroke causes cognitive and behavioral deficits not only through local tissue damage but also through neurochemical diaschisis—the disruption of neurotransmitter circuits in brain areas distant from the lesion. Understanding these patterns is crucial for developing targeted neurochemical therapies, which have thus far shown inconsistent results in clinical trials [9]. This protocol describes a method to chart stroke lesions onto neurotransmitter circuits, differentiating between pre- and postsynaptic damage to enable a more nuanced approach to pharmacological intervention.

Experimental Protocol: Mapping Stroke-Induced Neurochemical Disruption

Objective: To create a white matter atlas of neurotransmitter circuits and quantify their damage in stroke patients.

Summary of Workflow: The procedure for mapping neurotransmitter circuit damage is outlined below.

Detailed Methodology:

Data Acquisition and Sources:

- Normative Neurotransmitter Maps: Obtain density maps of receptors and transporters from the atlas by Hansen et al., which compiles Positron Emission Tomography (PET) data from 1200 healthy individuals [9]. Key maps include:

- Acetylcholine (ACh): α4β2 and M1 receptors (α4β2R, M1R), vesicular transporter (VAChT).

- Dopamine (DA): D1 and D2 receptors (D1R, D2R), transporter (DAT).

- Noradrenaline (NA): Transporter (NAT).

- Serotonin (5-HT): 5HT1a, 1b, 2a, 4, and 6 receptors (5HT1aR, etc.), transporter (5HTT).

- Structural Connection Priors: Use whole-brain, 7 Tesla deterministic tractographies from 100 participants of the Human Connectome Project (HCP) [9].

- Stroke Lesion Data: Utilize T1-weighted MRI images from two independent stroke cohorts (e.g., a training set of 1333 patients and a validation set of 143 patients) [9].

Creating the White Matter Neurotransmitter Atlas:

- Use the Functionnectome method to project the gray matter PET-based receptor/transporter density maps onto the white matter [9].

- The projection is based on the voxel-wise weighted probability of structural connection derived from the HCP tractographies.

- Use streamline selection based on known neurotransmitter-producing nuclei (e.g., basal forebrain for acetylcholine, brainstem for monoamines) to refine the maps [9].

Quantifying Neurotransmitter Circuit Damage:

- Overlay individual stroke lesions onto the created white matter neurotransmitter atlas and the original PET density maps.

- Calculate two key ratios to distinguish the type of circuit disruption [9]:

- Presynaptic Ratio: For a given receptor, this measures relative presynaptic axonal injury. It is calculated as the lesion proportion of its transporter's white matter projection map divided by the lesion proportion of the receptor's own white matter projection map.

- Postsynaptic Ratio: For a given transporter, this measures relative postsynaptic axonal injury. It is calculated as the lesion proportion of its receptor's white matter projection map divided by the lesion proportion of the transporter's own white matter projection map.

- A ratio >1 indicates a relative predominance of that type of injury.
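The two ratios follow directly from the definitions above. In this sketch the "lesion proportion" of a map is taken to be the share of the map's total density that falls inside the lesion mask; that operationalization, along with the function names, is an assumption of this example and may differ from the procedure in [9].

```python
import numpy as np

def lesion_proportion(density_map, lesion_mask):
    """Share of a map's total density that lies inside the lesion mask."""
    density_map = np.asarray(density_map, dtype=float)
    lesion_mask = np.asarray(lesion_mask, dtype=bool)
    total = density_map.sum()
    return float(density_map[lesion_mask].sum() / total) if total > 0 else 0.0

def pre_post_ratios(receptor_wm_map, transporter_wm_map, lesion_mask):
    """Presynaptic and postsynaptic ratios as defined in the text."""
    p_receptor = lesion_proportion(receptor_wm_map, lesion_mask)
    p_transporter = lesion_proportion(transporter_wm_map, lesion_mask)
    presynaptic = p_transporter / p_receptor if p_receptor > 0 else float("nan")
    postsynaptic = p_receptor / p_transporter if p_transporter > 0 else float("nan")
    return presynaptic, postsynaptic

# Toy 4-voxel maps: the lesion covers the voxel where the transporter map is densest.
pre, post = pre_post_ratios([1, 1, 1, 1], [3, 1, 0, 0], [True, False, False, False])
print(pre, post)
```

In this toy case the presynaptic ratio exceeds 1, which under the stated rule indicates a relative predominance of presynaptic injury.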

Multivariate Clustering and Analysis:

- Input the pre- and postsynaptic ratios for all neurotransmitters into an unsupervised k-means clustering algorithm to identify distinct neurochemical profiles of stroke damage [9].

- Validate the optimal number of clusters using the elbow method.

- Cross-reference the identified neurochemical clusters with detailed cognitive profiles of the patients to explore structure-function relationships.
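The clustering and elbow steps can be sketched with a compact k-means implementation. The data below are synthetic profiles with three planted groups, not patient ratios. The deterministic farthest-point initialization is a convenience for this example and not the initialization used in [9].

```python
import numpy as np

def farthest_point_init(X, k):
    """Pick k initial centers, each as far as possible from those already chosen."""
    centers = [X[0]]
    for _ in range(1, k):
        d = np.min([((X - c) ** 2).sum(axis=1) for c in centers], axis=0)
        centers.append(X[d.argmax()])
    return np.array(centers)

def kmeans_inertia(X, k, n_iter=100):
    """Lloyd's algorithm; returns labels and the within-cluster sum of squares."""
    centers = farthest_point_init(X, k)
    for _ in range(n_iter):
        d = ((X[:, None, :] - centers[None, :, :]) ** 2).sum(axis=2)
        labels = d.argmin(axis=1)
        new_centers = np.array([
            X[labels == j].mean(axis=0) if np.any(labels == j) else centers[j]
            for j in range(k)
        ])
        if np.allclose(new_centers, centers):
            break
        centers = new_centers
    d = ((X[:, None, :] - centers[None, :, :]) ** 2).sum(axis=2)
    labels = d.argmin(axis=1)
    return labels, float(d[np.arange(len(X)), labels].sum())

# Synthetic profiles: 3 well-separated groups of 30 samples with 4 features each.
rng = np.random.default_rng(0)
true_centers = np.array([[0, 0, 0, 0], [10, 10, 0, 0], [-10, 10, 0, 0]], dtype=float)
profiles = np.vstack([rng.normal(loc=c, scale=0.5, size=(30, 4)) for c in true_centers])

# Elbow method: inertia for k = 1..5; the curve flattens after the true k.
inertias = [kmeans_inertia(profiles, k)[1] for k in range(1, 6)]
print(inertias)
```

The elbow is the value of k after which adding clusters yields only marginal reductions in inertia; with real data the bend is often less sharp than in this synthetic case.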

Key Quantitative Results from Validation:

Table 3: Neurotransmitter System Asymmetries and Stroke Clustering Results

| Neurotransmitter Component | Significant Lateralization | Effect Size | Number of Identified Clusters |

|---|---|---|---|

| Serotonin Receptor 2a (5HT2aR) | Right | Large | 8 (in training set) |

| Serotonin Receptor 1b (5HT1bR) | Left | Large | 8 (in training set) |

| Dopamine D1 Receptor (D1R) | Right | Large | |

| Acetylcholine α4β2 Receptor (α4β2R) | Right | Large | |

| Dopamine Transporter (DAT) | Not Significant | - | |

| Serotonin Receptor 1a (5HT1aR) | Right | Small | |

The Scientist's Toolkit: Research Reagent Solutions

Table 4: Essential Reagents and Resources for Neurochemical Stroke Mapping

| Item | Function/Description | Example/Reference |

|---|---|---|

| Hansen Neurotransmitter Atlas | Provides normative in vivo density maps of neuroreceptors and transporters from healthy individuals. | Atlas from Hansen et al. [9] |

| Human Connectome Project (HCP) Data | Source of high-resolution structural and diffusion MRI data used to create connection priors for the white matter atlas. | https://www.humanconnectome.org |

| Functionnectome Software | A specialized tool for projecting gray matter values onto the white matter based on structural connectivity. | [9] |

| k-means Clustering Algorithm | An unsupervised multivariate analysis technique used to identify natural groupings (clusters) of patients based on their neurochemical injury profiles. | Available in R, Python (Scikit-learn) [17] [9] |

Multivariate Methods in Action: Techniques and Real-World Applications Across Neurodisciplines

In the field of neuroscience, particularly in the multivariate analysis of neurochemical data, the choice of machine learning approach is paramount. As research increasingly focuses on understanding complex neurotransmitter interactions and their implications in disease and treatment, leveraging the correct computational methodology can significantly enhance the validity and impact of findings. Machine learning offers powerful tools for deciphering these complex relationships, primarily through two distinct paradigms: supervised and unsupervised learning. The fundamental distinction lies in the use of labeled datasets; supervised learning requires pre-labeled data to train algorithms for outcome prediction, whereas unsupervised learning identifies hidden patterns and intrinsic structures within unlabeled data [20] [21]. For neuroscientists and drug development professionals, understanding this distinction is critical for designing robust experiments, from analyzing neurotransmitter dynamics to assessing drug efficacy.

Core Concepts and Their Relevance to Neurochemical Data

Supervised Learning

Supervised learning is defined by its use of labeled datasets to train algorithms, effectively "supervising" them to classify data or predict outcomes accurately [20]. By mapping input data to known outputs, the model can measure its accuracy and learn over time. This approach is typically divided into two types of problems:

- Classification: This involves predicting discrete categorical labels. In neurochemical research, this is instrumental in disease state identification, such as classifying subjects as having Alzheimer's disease or not based on neuroimaging data or neurotransmitter profiles [22] [23].

- Regression: This predicts continuous numerical values. It can be used to forecast clinical scores or estimate the concentration of specific neurotransmitters from sensor data [20] [22].

Unsupervised Learning

Unsupervised learning algorithms analyze and cluster unlabeled data sets without human intervention, discovering hidden patterns and structures [20] [21]. This is particularly valuable in exploratory neuroscience where pre-defined categories may not exist. Its primary tasks include:

- Clustering: This technique groups unlabeled data based on similarities or differences. A key application is in behavioral classification, where pose-tracking data from animals is clustered into recurring behavioral motifs without pre-labeled examples, thus reducing observer bias [24].

- Dimensionality Reduction: Used when the number of features in a dataset is excessively high, this technique reduces data inputs to a manageable size while preserving integrity. It is often a preprocessing step in neuroimaging data analysis [20] [1].

- Association: This method finds relationships between variables in a dataset, which can be useful for understanding co-fluctuations in neurotransmitter levels [20].

Comparative Analysis: A Guide for Selection

The choice between supervised and unsupervised learning depends on the research goal, data structure, and the specific problem at hand. The following table summarizes the key differences to guide researchers.

Table 1: Supervised vs. Unsupervised Learning at a Glance

| Criteria | Supervised Learning | Unsupervised Learning |

|---|---|---|

| Data Input | Uses labeled datasets [20] [21] | Uses unlabeled, raw data [20] [21] |

| Primary Goal | Predict outcomes for new data [20] [25] | Discover hidden patterns, structures, or groupings in data [20] [25] |

| Common Algorithms | Logistic Regression, Linear Regression, Support Vector Machines (SVM), Random Forests, Neural Networks [20] [22] [21] | K-Means Clustering, Hierarchical Clustering, Principal Component Analysis (PCA), Autoencoders, Hidden Markov Models (HMM) [20] [24] [21] |

| Model Complexity | Relatively simpler; goal of prediction is well-defined [21] | Computationally complex; requires powerful tools for large unclassified data [20] |

| Key Neuroscience Applications | Medical diagnostics (e.g., Alzheimer's from MRI), Brain-Computer Interfaces (BCIs), Seizure prediction, Sentiment analysis from neural signals [20] [22] [23] | Animal behavior motif discovery, Market basket analysis in pharmacovigilance, Customer personas for clinical trials, Dimensionality reduction of neuroimaging data [20] [24] |

| Advantages | Highly accurate and trustworthy results for well-defined problems [20] | No need for labeled data; can uncover novel, unexpected patterns [20] [24] |

| Disadvantages | Time-consuming data labeling; requires expert intervention [20] | Results can be inaccurate without human validation; less transparency in how clusters are formed [20] |

Experimental Protocols for Neurochemical Research

Protocol 1: Supervised Learning for Neurotransmitter State Prediction

This protocol outlines the use of Iterative Random Forest (iRF) to model the predictive relationships between prefrontal cortex neurotransmitters and a physiological state (e.g., awake vs. anesthetized), as demonstrated in research on the effects of isoflurane [26].

1. Experimental Setup and Data Collection:

- Aim: To build a model that predicts anesthetic state from neurotransmitter concentrations.

- Materials: In vivo microdialysis or biosensors for data collection from the prefrontal cortex, a platform with Python/R and iRF implementation.

- Procedure: Collect time-series data on multiple neurotransmitter concentrations (e.g., glutamate, GABA, dopamine) under both awake and anesthetized conditions.

2. Data Preprocessing:

- Feature Engineering: Use the measured concentrations of all neurotransmitters as the feature set (predictors).

- Target Variable: Create a binary label indicating the state (e.g., 0 for awake, 1 for anesthetized).

- Data Splitting: Randomly split the dataset into a training set (75%) and a hold-out test set (25%) [26].

3. Model Training with iRF:

- Iterative Process: Train a sequence of Random Forest models on the training data. Each tree is built on a bootstrapped sample, and each iteration reweights feature sampling using the feature importances from the previous iteration.

- Feature Importance: The iRF algorithm refines the identification of important features (specific neurotransmitters) and their interactions that are most predictive of the state [26].

- Model Validation: Use k-fold cross-validation on the training set to tune hyperparameters and avoid overfitting.

4. Model Evaluation and Interpretation:

- Prediction: Apply the final trained model to the 25% test set to predict the state.

- Performance Metrics: Calculate accuracy, precision, and recall.

- Network Visualization: Use tools like Cytoscape to visualize the directional, predictive networks of neurotransmitter interactions discovered by iRF, which can reveal reorganization under different conditions [26].
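The hold-out split and the performance metrics from this protocol can be written out explicitly. The sketch below is model-agnostic: it does not implement iRF, and the function names are hypothetical.

```python
import random

def split_indices(n, test_fraction=0.25, seed=0):
    """Randomly split sample indices into training (75%) and test (25%) sets."""
    idx = list(range(n))
    random.Random(seed).shuffle(idx)
    n_test = int(round(n * test_fraction))
    return idx[n_test:], idx[:n_test]

def classification_metrics(y_true, y_pred):
    """Accuracy, precision, and recall for binary labels (1 = anesthetized)."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)
    tn = sum(1 for t, p in pairs if t == 0 and p == 0)
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)
    accuracy = (tp + tn) / len(pairs)
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    return accuracy, precision, recall

train_idx, test_idx = split_indices(40)
print(len(train_idx), len(test_idx))
print(classification_metrics([1, 1, 0, 0, 1], [1, 0, 0, 1, 1]))
```

For time-series recordings, a random split can leak temporally adjacent samples between the training and test sets, so splitting by animal or by session is often the safer choice.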

Protocol 2: Unsupervised Learning for Behavioral Motif Discovery

This protocol describes the use of unsupervised clustering on animal pose-tracking data to identify discrete, recurring behaviors, a critical step in linking neurochemical manipulations to phenotypic outcomes [24].

1. Experimental Setup and Data Acquisition:

- Aim: To automatically classify unlabeled pose-tracking data into meaningful behavioral motifs.

- Materials: High-speed video recording system, pose-estimation software (e.g., DeepLabCut, SLEAP), a computational environment for clustering algorithms (e.g., B-SOiD, VAME).

- Procedure: Record video of an animal in an open field. Use pose-estimation software to extract the X,Y coordinates of multiple body parts (keypoints) across all video frames.

2. Data Preprocessing and Feature Engineering:

- The Challenge: Raw keypoint data is high-dimensional and noisy.

- Approach 1 (B-SOiD): Calculate features such as inter-point distances, speeds, and angles over short time windows (e.g., 100ms). Then, use Uniform Manifold Approximation and Projection (UMAP) for non-linear dimensionality reduction [24].

- Approach 2 (VAME): Perform egocentric alignment of body parts to center the data on the animal. Use a sliding time window and a Variational Autoencoder (VAE) to create a latent space representation that captures the sequential nature of the poses [24].
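The feature engineering described for Approach 1 can be sketched as follows. This is a simplified illustration in the spirit of B-SOiD, not the package's own code: it computes pairwise keypoint distances, segment angles, and keypoint speeds, then averages them over short windows. The UMAP step is omitted, and all function names are hypothetical.

```python
import numpy as np

def pose_features(keypoints, fps):
    """Per-frame features from keypoints of shape (n_frames, n_points, 2).

    Returns pairwise distances, keypoint speeds, and pairwise segment angles,
    each aligned to frames 1..n_frames-1 (speeds need a previous frame).
    """
    n_points = keypoints.shape[1]
    i, j = np.triu_indices(n_points, k=1)
    diffs = keypoints[:, i, :] - keypoints[:, j, :]
    distances = np.linalg.norm(diffs, axis=2)
    angles = np.arctan2(diffs[..., 1], diffs[..., 0])
    speeds = np.linalg.norm(np.diff(keypoints, axis=0), axis=2) * fps
    return distances[1:], speeds, angles[1:]

def window_mean(features, frames_per_window):
    """Average features over consecutive non-overlapping windows of frames."""
    n = (features.shape[0] // frames_per_window) * frames_per_window
    return features[:n].reshape(-1, frames_per_window, features.shape[1]).mean(axis=1)

# Two keypoints translating 1 pixel per frame along x, recorded at 10 fps.
kp = np.array([[[0.0, 0.0], [3.0, 4.0]],
               [[1.0, 0.0], [4.0, 4.0]]])
dist, speed, ang = pose_features(kp, fps=10)
print(dist, speed)
```

At a given frame rate, a 100 ms window corresponds to `int(round(0.1 * fps))` frames, which would be passed as `frames_per_window`.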

3. Clustering and Motif Identification:

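- Algorithm Selection: Choose a clustering method suited to the feature space (e.g., density-based clustering such as HDBSCAN on the UMAP embedding for B-SOiD, or a Hidden Markov Model on the VAE latent space for VAME).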

- Execution: Run the chosen algorithm on the processed feature space. Each resulting cluster or hidden state is interpreted as a unique behavioral motif (e.g., rearing, grooming, scratching).

4. Validation and Analysis:

- Qualitative Validation: Manually inspect video snippets corresponding to the discovered motifs to verify their biological relevance.

- Quantitative Analysis: Analyze the sequence of motifs, transition probabilities, and the effect of a drug or genetic manipulation on the frequency and duration of these motifs.
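Once each frame carries a motif label, the quantitative analysis reduces to counting. The sketch below estimates motif usage and first-order transition probabilities from a label sequence; the function names are hypothetical.

```python
import numpy as np

def motif_usage(motifs, n_motifs):
    """Fraction of frames spent in each motif."""
    counts = np.bincount(np.asarray(motifs), minlength=n_motifs)
    return counts / counts.sum()

def transition_matrix(motifs, n_motifs):
    """Row-normalized matrix P[a, b] = probability that motif a is followed by b."""
    counts = np.zeros((n_motifs, n_motifs))
    for a, b in zip(motifs[:-1], motifs[1:]):
        counts[a, b] += 1
    row_sums = counts.sum(axis=1, keepdims=True)
    return np.divide(counts, row_sums, out=np.zeros_like(counts), where=row_sums > 0)

sequence = [0, 0, 1, 0, 1, 1]
print(motif_usage(sequence, 2))
print(transition_matrix(sequence, 2))
```

Comparing these matrices between control and drug-treated sessions gives a direct readout of how a manipulation changes the structure of behavior, not only its overall amount.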

Visualization of Workflows

To aid in the conceptual understanding and implementation of these methods, the following diagrams illustrate the core workflows for both supervised and unsupervised learning in a neurochemical and behavioral research context.

Figure 1: A high-level decision workflow for choosing and applying supervised versus unsupervised learning in neuroscience research.

Figure 2: Detailed step-by-step protocols for implementing the featured supervised and unsupervised learning experiments.

The Scientist's Toolkit: Essential Research Reagents and Solutions

Table 2: Key Materials and Tools for Machine Learning in Neurochemical and Behavioral Research

| Item | Function & Relevance in Research |

|---|---|

| In Vivo Microdialysis Systems | Enables continuous sampling of neurotransmitters from the brain extracellular fluid of live animals, providing the foundational chemical data for analysis. |

| DeepLabCut / SLEAP | Open-source pose-estimation software that uses supervised learning to track animal body parts from video with high accuracy, generating the raw data for unsupervised behavioral classification [24]. |

| B-SOiD, VAME, Keypoint-MoSeq | Unsupervised learning algorithms specifically designed to take pose-tracking data as input and automatically identify discrete, recurring behavioral motifs without human bias [24]. |

| Iterative Random Forest (iRF) | An advanced machine learning method that builds upon standard Random Forests to not only predict outcomes but also to more robustly identify important features and their interactions, ideal for complex neurochemical data [26]. |

| Cytoscape | An open-source platform for visualizing complex networks. It is used to illustrate the predictive networks of neurotransmitter interactions generated by methods like iRF [26]. |

| Python/R with scikit-learn, TensorFlow/PyTorch | Core programming languages and libraries that provide the computational environment for implementing a wide array of supervised and unsupervised learning algorithms. |

| Principal Component Analysis (PCA) | A classic linear dimensionality reduction technique used to simplify high-dimensional datasets (e.g., neuroimaging data) while preserving trends and patterns, often as a preprocessing step [1]. |

Canonical Correlation Analysis (CCA) for Brain-Behavior Relationships

Canonical Correlation Analysis (CCA) is a multivariate statistical method designed to identify and quantify the associations between two sets of variables. Introduced by Hotelling in 1936, it seeks linear combinations of the variables in each set—known as canonical variates—such that the correlation between these combinations is maximized [27] [28] [29]. In neuroscience, this technique is increasingly valued for its ability to elucidate complex brain-behavior relationships, moving beyond univariate analyses to capture the multidimensional nature of neural and behavioral data [27] [28]. Its application spans various domains, including linking functional connectivity to clinical symptoms, identifying neurophysiological biotypes of depression, and understanding how individual differences in brain dynamics relate to temperament and behavior [27] [30].

The utility of CCA stems from several key advantages. First, it can handle high inter-correlations among variables within the same set, a common characteristic of both brain imaging and behavioral measures [27]. Second, similar to Principal Component Analysis (PCA), CCA decomposes the relationship between two variable sets into a series of orthogonal modes of co-variation, each with a specific correlation coefficient [27]. Finally, by examining variable loadings—the correlations between original variables and the canonical variates—researchers can interpret the nature of each associative mode [27] [31]. Despite its power, applying CCA to neuroimaging data presents challenges, primarily concerning the stability and reliability of its results in high-dimensional settings where the number of features often vastly exceeds the number of subjects [27] [31] [32].

Theoretical Foundations of CCA

Basic Mathematical Formulation

Formally, given two centered data matrices, ( X \in \mathbb{R}^{n \times p} ) (e.g., brain measures) and ( Y \in \mathbb{R}^{n \times q} ) (e.g., behavior measures), CCA aims to find weight vectors ( \alpha \in \mathbb{R}^{p} ) and ( \beta \in \mathbb{R}^{q} ) such that the correlation ( \rho ) between the linear combinations ( X\alpha ) and ( Y\beta ) is maximized [28] [29]:

[ \rho = \max_{\alpha, \beta} \text{corr}(X\alpha, Y\beta) = \max_{\alpha, \beta} \frac{\alpha^T \Sigma_{XY} \beta}{\sqrt{\alpha^T \Sigma_{XX} \alpha \cdot \beta^T \Sigma_{YY} \beta}} ]

where ( \Sigma_{XX} ) and ( \Sigma_{YY} ) are the within-set covariance matrices for ( X ) and ( Y ), respectively, and ( \Sigma_{XY} ) is the between-set covariance matrix. The resulting linear combinations ( U = X\alpha ) and ( V = Y\beta ) are the first pair of canonical variates, and ( \rho ) is the first canonical correlation [28] [29]. The analysis can extract up to ( m = \min(p, q) ) such pairs of canonical variates, each orthogonal to the previous ones and associated with a successively smaller canonical correlation [29].
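
The formulation above can be sketched numerically. The following is a minimal NumPy illustration, not a reference implementation: it assumes n > p, q with full-rank covariance matrices, and the helper names `inv_sqrt` and `cca` as well as the synthetic data are introduced here purely for illustration. It whitens each set with the inverse square root of its covariance matrix and takes the singular value decomposition of the whitened cross-covariance; the singular values are then the canonical correlations.

```python
import numpy as np

def inv_sqrt(S):
    """Inverse symmetric square root of a positive-definite matrix."""
    vals, vecs = np.linalg.eigh(S)
    return (vecs / np.sqrt(vals)) @ vecs.T

def cca(X, Y):
    """Classical CCA, assuming n > p, q and full-rank covariance matrices.

    Returns canonical correlations rho (descending) and weight matrices
    A (p x m) and B (q x m) whose columns are alpha_i and beta_i.
    """
    n = X.shape[0]
    Xc = X - X.mean(axis=0)          # center each variable
    Yc = Y - Y.mean(axis=0)
    Sxx = Xc.T @ Xc / (n - 1)        # within-set covariance of X
    Syy = Yc.T @ Yc / (n - 1)        # within-set covariance of Y
    Sxy = Xc.T @ Yc / (n - 1)        # between-set covariance
    Wx, Wy = inv_sqrt(Sxx), inv_sqrt(Syy)
    # Singular values of the whitened cross-covariance are the canonical correlations.
    U, rho, Vt = np.linalg.svd(Wx @ Sxy @ Wy, full_matrices=False)
    return rho, Wx @ U, Wy @ Vt.T

# Synthetic example: one shared latent signal links the first column of each set.
rng = np.random.default_rng(0)
n = 500
z = rng.standard_normal(n)
X = rng.standard_normal((n, 4))
Y = rng.standard_normal((n, 3))
X[:, 0] += z
Y[:, 0] += z

rho, A, B = cca(X, Y)
U_scores = (X - X.mean(axis=0)) @ A   # canonical variates U_i
V_scores = (Y - Y.mean(axis=0)) @ B   # canonical variates V_i
print("canonical correlations:", np.round(rho, 3))
```

With this normalization each canonical variate has unit sample variance, so the correlation between the first columns of `U_scores` and `V_scores` equals `rho[0]`.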

The CCA Workflow and Relationship to Other Methods

The following diagram outlines the core computational workflow of CCA and its relationship to other multivariate techniques.

CCA is a generalization of other common statistical methods. Simple Pearson correlation between two single variables is a special case of CCA, as is multiple regression analysis [27] [29]. Furthermore, CCA is mathematically linked to other multivariate techniques like Principal Component Analysis (PCA) and Partial Least Squares (PLS), though its objective differs: CCA maximizes the correlation between the two sets, whereas PLS maximizes their covariance and PCA maximizes variance within a single set [31].
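
The two special cases can be checked numerically. The sketch below uses synthetic data and a hypothetical helper, `canonical_correlations`, which computes canonical correlations as the singular values of the product of orthonormal bases of the two centered sets (valid when n > p, q and both sets have full column rank). With one variable per set, the canonical correlation should equal the absolute Pearson correlation; with a single outcome variable, it should equal the multiple correlation coefficient R of an ordinary least-squares regression.

```python
import numpy as np

def canonical_correlations(X, Y):
    """Canonical correlations via orthonormal bases of the centered data."""
    Qx, _ = np.linalg.qr(X - X.mean(axis=0))
    Qy, _ = np.linalg.qr(Y - Y.mean(axis=0))
    return np.linalg.svd(Qx.T @ Qy, compute_uv=False)

rng = np.random.default_rng(1)
n = 200
X = rng.standard_normal((n, 3))
y = X @ np.array([0.5, -0.3, 0.0]) + rng.standard_normal(n)

# Case 1: one variable per set -> |Pearson r|.
r = np.corrcoef(X[:, 0], y)[0, 1]
rho_pearson = canonical_correlations(X[:, [0]], y[:, None])[0]

# Case 2: several predictors, one outcome -> multiple correlation R.
Xd = np.column_stack([np.ones(n), X])            # design matrix with intercept
coef, *_ = np.linalg.lstsq(Xd, y, rcond=None)
resid = y - Xd @ coef
r_squared = 1.0 - (resid @ resid) / np.sum((y - y.mean()) ** 2)
rho_regression = canonical_correlations(X, y[:, None])[0]

print(abs(r), rho_pearson)
print(np.sqrt(r_squared), rho_regression)
```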

Critical Considerations for Application Stability

The Challenge of High-Dimensional Data and Instability

A major challenge in applying CCA to neuroimaging data is the curse of dimensionality. Often, the number of features (e.g., voxels, connections) far exceeds the number of subjects (( p \gg n )), leading to overfitting and unstable results [27] [31] [32]. Instability means that CCA results—including the estimated correlation strength and the feature weight patterns—can vary substantially across different samples from the same population, compromising replicability and interpretability [31].

Recent systematic investigations using generative models have quantified this problem. Key manifestations of instability in high-dimensional, low-sample-size regimes include:

- Inflated Association Strengths: In-sample canonical correlations are often significantly higher than the true population value or the out-of-sample (cross-validated) estimate [31].

- Unreliable Feature Patterns: The estimated weight vectors (( \alpha ), ( \beta )) that define the canonical variates can be inaccurate and non-generalizable, leading to erroneous biological interpretations [31].

- Low Statistical Power: The ability to detect a true existing association is often low at typical sample sizes [31].
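
The inflation of in-sample canonical correlations can be reproduced with a small simulation. This is an illustrative sketch on purely synthetic data, not the generative model used in [31]: X and Y are drawn independently, so the true population correlation is zero, and the helper `fit_first_mode` and the chosen sample sizes are assumptions made for the example.

```python
import numpy as np

def fit_first_mode(X, Y):
    """Fit CCA on (X, Y); return training means, first-mode weights, in-sample rho."""
    mx, my = X.mean(axis=0), Y.mean(axis=0)
    Qx, Rx = np.linalg.qr(X - mx)
    Qy, Ry = np.linalg.qr(Y - my)
    U, s, Vt = np.linalg.svd(Qx.T @ Qy)
    alpha = np.linalg.solve(Rx, U[:, 0])    # map back from the orthonormal basis
    beta = np.linalg.solve(Ry, Vt[0])
    return mx, my, alpha, beta, s[0]

rng = np.random.default_rng(0)
n_train, n_test = 100, 1000
feature_counts = [2, 10, 40]                # features per set (p = q)
in_sample, out_of_sample = [], []

for p in feature_counts:
    # Independent X and Y: the true canonical correlation is zero.
    X = rng.standard_normal((n_train + n_test, p))
    Y = rng.standard_normal((n_train + n_test, p))
    mx, my, alpha, beta, rho_in = fit_first_mode(X[:n_train], Y[:n_train])
    u = (X[n_train:] - mx) @ alpha          # apply training weights to held-out data
    v = (Y[n_train:] - my) @ beta
    in_sample.append(rho_in)
    out_of_sample.append(np.corrcoef(u, v)[0, 1])

for p, r_in, r_out in zip(feature_counts, in_sample, out_of_sample):
    print(f"p=q={p:3d}  in-sample rho={r_in:.2f}  out-of-sample r={r_out:+.2f}")
```

As the number of features approaches the number of training subjects, the in-sample correlation climbs toward 1 even though no association exists, while the held-out correlation stays near zero.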

Quantitative Guidelines for Stable CCA

Empirical and simulation studies have provided quantitative insights into the conditions required for stable CCA. The stability is influenced by the Subject-to-Variable Ratio (SVR) and the underlying correlation strength between the two datasets [27] [31].

Table 1: Factors Affecting CCA Stability Based on Empirical Characterization

| Factor | Effect on Stability | Practical Implication |
|---|---|---|
| Subject-to-Variable Ratio (SVR) | Stability increases with higher SVR [27]. | Dimension reduction (e.g., PCA) is often necessary before CCA to increase the SVR [27]. |
| True Correlation Strength | Stronger underlying correlations improve stability [27]. | Weaker associations require larger sample sizes for stable detection [31]. |
| Sample Size (n) | Error in weights and correlations decreases monotonically with increasing n [31]. | Thousands of subjects may be required for stable estimation in high-dimensional settings [31]. |

Table 2: Sample Size and Error in CCA Based on Generative Modeling (GEMMR) [31]

| Samples per Feature | Statistical Power | Weight Error (Cosine Distance) | Interpretability |
|---|---|---|---|
| ~5 (Typical in literature) | Low | High | Unreliable, prone to overfitting |
| Increasing | Increases | Decreases | Improves |
| Sufficient for Stability (e.g., n=20,000 for high-dim data) | High | Low | Reliable and generalizable |

These findings underscore that discovered association patterns in typical neuroimaging studies with modest sample sizes are prone to instability. One study suggests that only very large datasets, like the UK Biobank with ( n \approx 20,000 ), provide sufficient observations for stable mappings between brain imaging and behavioral features [31].

Experimental Protocols for CCA in Brain-Behavior Research

Protocol 1: A Standard CCA Pipeline with Dimension Reduction

This protocol outlines the foundational steps for conducting a CCA between brain imaging measures (X) and behavioral measures (Y).

Objective: To identify the dominant modes of association between a set of brain features and a set of behavioral traits.

Materials: Preprocessed brain imaging data (e.g., voxel-based maps, connectivity matrices) and behavioral assessment scores.

Data Preparation and Preprocessing:

- Brain Data (X): Extract relevant features from the neuroimaging data, such as voxel-based maps or connectivity matrices.

- Behavioral Data (Y): Compile demographic, cognitive, and psychometric measures into a single matrix.

- Centering: Center each variable in X and Y to have zero mean.

Dimension Reduction (if necessary):

- If the number of features (p or q) is larger than the number of subjects (n), apply dimensionality reduction.

- Principal Component Analysis (PCA) is the most commonly used method for this purpose [27]. Retain a subset of principal components (PCs) for both X and Y that explain a sufficient amount of variance (e.g., 80-90%). This step creates reduced datasets ( X_{red} ) and ( Y_{red} ) with a higher SVR.
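
The reduction step can be sketched as follows. This is a minimal NumPy example on synthetic data; the function name `pca_reduce`, the 90% threshold, and the simulated low-rank structure are assumptions for illustration. It keeps the smallest number of principal components whose cumulative explained variance reaches the threshold and returns the component scores that would serve as the reduced matrix.

```python
import numpy as np

def pca_reduce(X, var_threshold=0.9):
    """Project X onto the fewest PCs whose cumulative explained variance
    reaches var_threshold. Returns (scores, components, explained_ratio)."""
    Xc = X - X.mean(axis=0)
    U, s, Vt = np.linalg.svd(Xc, full_matrices=False)
    explained = s**2 / np.sum(s**2)
    # First index at which the cumulative variance reaches the threshold.
    k = int(np.searchsorted(np.cumsum(explained), var_threshold)) + 1
    k = min(k, s.size)
    return U[:, :k] * s[:k], Vt[:k], explained[:k]

# Synthetic "brain" matrix: 100 subjects, 500 features, 5 strong latent sources.
rng = np.random.default_rng(0)
n, p = 100, 500
latent = rng.standard_normal((n, 5))
X = 3.0 * latent @ rng.standard_normal((5, p)) + rng.standard_normal((n, p))

X_red, components, explained = pca_reduce(X, var_threshold=0.9)
print("SVR before:", n / p)
print("SVR after: ", n / X_red.shape[1])
print("variance retained:", round(float(explained.sum()), 3))
```

The same function would be applied separately to Y when q is large. Because the components are estimated from the data, any later cross-validation should refit the PCA within each training fold.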

Performing CCA:

- Input the reduced matrices ( X_{red} ) and ( Y_{red} ) (or the original X and Y if n > p, q) into a CCA algorithm.

- The analysis will return:

- Canonical weights (( \alpha_i, \beta_i )): For each mode i.

- Canonical variates (( U_i, V_i )): The projected scores for each subject.

- Canonical correlations (( \rho_i )): The correlation for each mode.

Statistical Inference:

- Use permutation testing or parametric tests like Wilks' Lambda to assess the statistical significance of the canonical correlations [28]. The null hypothesis is that all canonical correlations are zero.
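
A permutation test for the first canonical correlation can be sketched as below. The example is a simplified illustration on synthetic data, assuming exchangeable subjects and no nuisance covariates; the function names and the choice of 500 permutations are assumptions. Rows of Y are shuffled to break the subject-wise pairing with X, CCA is refit each time, and the p-value is the fraction of permuted first canonical correlations at least as large as the observed one.

```python
import numpy as np

def first_canonical_corr(X, Y):
    """First canonical correlation (n > p, q, full column rank assumed)."""
    Qx, _ = np.linalg.qr(X - X.mean(axis=0))
    Qy, _ = np.linalg.qr(Y - Y.mean(axis=0))
    return np.linalg.svd(Qx.T @ Qy, compute_uv=False)[0]

def permutation_pvalue(X, Y, n_perm=500, seed=0):
    """Permutation p-value for the null that all canonical correlations are zero."""
    rng = np.random.default_rng(seed)
    observed = first_canonical_corr(X, Y)
    null = np.empty(n_perm)
    for i in range(n_perm):
        shuffled = Y[rng.permutation(len(Y))]   # break the X-Y pairing
        null[i] = first_canonical_corr(X, shuffled)
    # The +1 terms count the observed statistic as one of the permutations.
    p_value = (1 + np.sum(null >= observed)) / (1 + n_perm)
    return observed, p_value

# Synthetic data with a genuine shared signal.
rng = np.random.default_rng(1)
n = 150
z = rng.standard_normal(n)
X = rng.standard_normal((n, 4))
Y = rng.standard_normal((n, 3))
X[:, 0] += z
Y[:, 0] += z

rho_obs, p_value = permutation_pvalue(X, Y)
print(f"observed rho = {rho_obs:.3f}, permutation p = {p_value:.4f}")
```

Refitting CCA inside every permutation matters: comparing the observed correlation against zero, rather than against the refit null distribution, would ignore the upward bias of in-sample canonical correlations.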

Interpretation:

Protocol 2: Application of Regularized CCA (RCCA) for High-Dimensional Data

When dimension reduction via PCA leads to unacceptable information loss, Regularized CCA provides an alternative for analyzing high-dimensional data directly.

Objective: To model associations between two high-dimensional data sets without an initial dimension reduction step, mitigating overfitting.

Materials: As in Protocol 1, but applied to high-dimensional feature sets.

Data Preparation: Follow the Data Preparation and Preprocessing step of Protocol 1.

Implementation of RCCA:

- RCCA addresses the singularity of sample covariance matrices by adding a penalty (ridge) to the diagonal [32]. The modified covariance matrices are:

- ( \Sigma_{XX}(\lambda_1) = \Sigma_{XX} + \lambda_1 I_p )

- ( \Sigma_{YY}(\lambda_2) = \Sigma_{YY} + \lambda_2 I_q )

- The objective function to maximize becomes: [ \rho_{RCCA} = \frac{\alpha^T \Sigma_{XY} \beta}{\sqrt{\alpha^T (\Sigma_{XX} + \lambda_1 I_p) \alpha \cdot \beta^T (\Sigma_{YY} + \lambda_2 I_q) \beta}} ]

- The penalty parameters ( \lambda_1 ) and ( \lambda_2 ) control the shrinkage of the canonical weights towards zero.
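
The ridge-modified objective can be sketched in NumPy as follows. This is an illustrative implementation under stated assumptions, not the code used in [32]: the function name `rcca`, the synthetic data, and the two penalty values are chosen only for the example. The only change relative to classical CCA is that the penalties are added to the diagonals of the within-set covariance matrices before whitening, which keeps them invertible even when p and q exceed n.

```python
import numpy as np

def inv_sqrt(S):
    """Inverse symmetric square root of a positive-definite matrix."""
    vals, vecs = np.linalg.eigh(S)
    return (vecs / np.sqrt(vals)) @ vecs.T

def rcca(X, Y, lam1, lam2):
    """Regularized CCA with ridge penalties lam1, lam2 > 0.

    Returns regularized canonical correlations and weight matrices.
    """
    n, p = X.shape
    q = Y.shape[1]
    Xc, Yc = X - X.mean(axis=0), Y - Y.mean(axis=0)
    Sxx = Xc.T @ Xc / (n - 1) + lam1 * np.eye(p)   # Sigma_XX + lambda_1 I_p
    Syy = Yc.T @ Yc / (n - 1) + lam2 * np.eye(q)   # Sigma_YY + lambda_2 I_q
    Sxy = Xc.T @ Yc / (n - 1)
    Wx, Wy = inv_sqrt(Sxx), inv_sqrt(Syy)
    U, rho, Vt = np.linalg.svd(Wx @ Sxy @ Wy, full_matrices=False)
    return rho, Wx @ U, Wy @ Vt.T

# High-dimensional synthetic example: p, q > n, so unregularized CCA is ill-posed.
rng = np.random.default_rng(0)
n, p, q = 50, 200, 100
z = rng.standard_normal(n)
X = rng.standard_normal((n, p))
Y = rng.standard_normal((n, q))
X[:, :5] += z[:, None]
Y[:, :5] += z[:, None]

rho_weak, A_weak, B_weak = rcca(X, Y, lam1=0.1, lam2=0.1)
rho_strong, A_strong, B_strong = rcca(X, Y, lam1=10.0, lam2=10.0)
print("first regularized correlation, weak penalty:  ", round(float(rho_weak[0]), 3))
print("first regularized correlation, strong penalty:", round(float(rho_strong[0]), 3))
```

Larger penalties lower the value of the regularized objective, so these in-sample values are not comparable across penalty settings and are best judged on held-out data.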


Hyperparameter Tuning:

- Select the optimal values for ( \lambda_1 ) and ( \lambda_2 ) via cross-validation. A common strategy is to perform a grid search, choosing the values that maximize the predictive correlation between the canonical variates on a held-out validation set.
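
The grid search described above might look like the following sketch. The helper names (`rcca_first_mode`, `cv_score`), the 5-fold split, the penalty grid, and the synthetic data are all assumptions for illustration. For each candidate pair of penalties, the first-mode weights are fit on the training folds and scored by the correlation of the resulting variates on the held-out fold.

```python
import numpy as np

def rcca_first_mode(X, Y, lam1, lam2):
    """First-mode RCCA weights for centered X, Y (Cholesky-based whitening)."""
    n = X.shape[0]
    Sxx = X.T @ X / (n - 1) + lam1 * np.eye(X.shape[1])
    Syy = Y.T @ Y / (n - 1) + lam2 * np.eye(Y.shape[1])
    Sxy = X.T @ Y / (n - 1)
    Lx, Ly = np.linalg.cholesky(Sxx), np.linalg.cholesky(Syy)
    # M = Lx^{-1} Sxy Ly^{-T}; its top singular vectors give the first mode.
    M = np.linalg.solve(Lx, np.linalg.solve(Ly, Sxy.T).T)
    U, s, Vt = np.linalg.svd(M, full_matrices=False)
    alpha = np.linalg.solve(Lx.T, U[:, 0])
    beta = np.linalg.solve(Ly.T, Vt[0])
    return alpha, beta

def cv_score(X, Y, lam1, lam2, n_folds=5, seed=0):
    """Mean held-out correlation of the first pair of canonical variates."""
    rng = np.random.default_rng(seed)
    folds = np.array_split(rng.permutation(X.shape[0]), n_folds)
    scores = []
    for test_idx in folds:
        train_idx = np.setdiff1d(np.arange(X.shape[0]), test_idx)
        mx, my = X[train_idx].mean(axis=0), Y[train_idx].mean(axis=0)
        alpha, beta = rcca_first_mode(X[train_idx] - mx, Y[train_idx] - my, lam1, lam2)
        u = (X[test_idx] - mx) @ alpha      # center test data with training means
        v = (Y[test_idx] - my) @ beta
        scores.append(np.corrcoef(u, v)[0, 1])
    return float(np.mean(scores))

# Synthetic high-dimensional data with a shared signal in the first 10 features.
rng = np.random.default_rng(0)
n, p, q = 120, 150, 60
z = rng.standard_normal(n)
X = rng.standard_normal((n, p))
Y = rng.standard_normal((n, q))
X[:, :10] += z[:, None]
Y[:, :10] += z[:, None]

grid = [0.01, 0.1, 1.0, 10.0]
results = {(l1, l2): cv_score(X, Y, l1, l2) for l1 in grid for l2 in grid}
best = max(results, key=results.get)
print("best (lambda_1, lambda_2):", best, "held-out r =", round(results[best], 3))
```

Using the same fold assignment for every grid point keeps the comparison between penalty values paired. A final performance estimate would need a separate test set or nested cross-validation, since the selected score is itself optimistically biased.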

Computation via Kernel Trick:

- For extremely high-dimensional data (e.g., voxel-level fMRI), direct computation may be infeasible. In such cases, the kernel trick can be employed to reformulate RCCA in terms of inner products, drastically reducing the computational complexity [32].
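
For a linear kernel, one way to realize this reformulation is to note that the optimal RCCA weights lie in the row space of each data matrix, so each set can be replaced by an n-column representation obtained from its thin SVD. The sketch below is an assumption-laden illustration rather than the algorithm of [32]: the helper names and data are hypothetical, and p and q are kept small enough that the direct p x p solution can be computed for comparison.

```python
import numpy as np

def rcca_first(X, Y, lam1, lam2):
    """First regularized canonical correlation and weights for centered X, Y."""
    n = X.shape[0]
    Sxx = X.T @ X / (n - 1) + lam1 * np.eye(X.shape[1])
    Syy = Y.T @ Y / (n - 1) + lam2 * np.eye(Y.shape[1])
    Sxy = X.T @ Y / (n - 1)
    Lx, Ly = np.linalg.cholesky(Sxx), np.linalg.cholesky(Syy)
    M = np.linalg.solve(Lx, np.linalg.solve(Ly, Sxy.T).T)
    U, s, Vt = np.linalg.svd(M, full_matrices=False)
    return s[0], np.linalg.solve(Lx.T, U[:, 0]), np.linalg.solve(Ly.T, Vt[0])

def to_sample_space(X):
    """Thin SVD X = U S V^T; return R = U S (n x r) and the basis V (p x r)."""
    U, s, Vt = np.linalg.svd(X, full_matrices=False)
    keep = s > 1e-10 * s[0]                 # drop numerically null directions
    return U[:, keep] * s[keep], Vt[keep].T

rng = np.random.default_rng(0)
n, p, q = 40, 300, 200
z = rng.standard_normal(n)
X = rng.standard_normal((n, p))
Y = rng.standard_normal((n, q))
X[:, :5] += z[:, None]
Y[:, :5] += z[:, None]
X -= X.mean(axis=0)
Y -= Y.mean(axis=0)
lam1, lam2 = 0.5, 0.5

# Direct solution: works with p x p and q x q matrices.
rho_direct, alpha_direct, beta_direct = rcca_first(X, Y, lam1, lam2)

# Reduced solution: works with at most n x n matrices, then maps weights back.
Rx, Vx = to_sample_space(X)
Ry, Vy = to_sample_space(Y)
rho_small, a, b = rcca_first(Rx, Ry, lam1, lam2)
alpha_small, beta_small = Vx @ a, Vy @ b

print(round(float(rho_direct), 6), round(float(rho_small), 6))
```

The reduced problem involves matrices no larger than n x n, so its cost depends on the number of subjects rather than the number of voxels. The recovered weights match the direct ones up to a common sign flip.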

Interpretation:

- Interpret the results by examining the regularized canonical weights or loadings, keeping in mind that the regularization may affect their magnitude and interpretability.

Protocol 3: A Penalized CCA Application for Brain-Oscillation and Temperament

This protocol is based on a specific research application that used penalized CCA to link brain oscillations to temperament traits [30].

Objective: To investigate the relationship between spatial patterns of brain oscillatory power and individual differences in temperament (e.g., behavioral inhibition, anxiety).

Materials: MEG/EEG data recorded during controlled cognitive tasks (e.g., focused attention, anxious thought), and temperament questionnaire scores.

Experimental Design:

- Record brain activity (e.g., MEG) from participants under multiple conditions designed to elicit different cognitive states (e.g., focused attention vs. anxious thought) [30].

Feature Extraction:

- For each subject and condition, calculate the oscillatory power in frequency bands of interest (e.g., alpha: 8-12 Hz, beta: 15-30 Hz).

- Create spatial contrast maps representing the difference in oscillatory power between two conditions (e.g., Anxious-Thought minus Focused-Attention) [30]. These contrast maps form the brain feature set (X).
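
The feature-extraction steps can be sketched on simulated signals. Everything specific in this example is an assumption made for illustration and not taken from [30]: the sampling rate, the number of sensors and subjects, the periodogram-based power estimate, and the simulated 10 Hz rhythm that is stronger in the anxious-thought condition. The band limits follow the ranges given above.

```python
import numpy as np

BANDS = {"alpha": (8.0, 12.0), "beta": (15.0, 30.0)}   # Hz

def band_power(signal, fs, band):
    """Mean periodogram power of a 1-D signal within [low, high] Hz."""
    freqs = np.fft.rfftfreq(signal.size, d=1.0 / fs)
    psd = np.abs(np.fft.rfft(signal - signal.mean())) ** 2 / (fs * signal.size)
    mask = (freqs >= band[0]) & (freqs <= band[1])
    return psd[mask].mean()

def power_features(recording, fs):
    """recording: (n_sensors, n_samples) -> (n_sensors, n_bands) band powers."""
    return np.array([[band_power(ch, fs, b) for b in BANDS.values()]
                     for ch in recording])

rng = np.random.default_rng(0)
fs, seconds, n_subjects, n_sensors = 250, 4, 6, 3
t = np.arange(fs * seconds) / fs
rhythm = np.sin(2 * np.pi * 10 * t)                     # simulated 10 Hz oscillation

rows = []
for _ in range(n_subjects):
    anxious = 2.0 * rhythm + rng.standard_normal((n_sensors, t.size))
    focused = 1.0 * rhythm + rng.standard_normal((n_sensors, t.size))
    # Contrast map: Anxious-Thought minus Focused-Attention, per sensor and band.
    contrast = power_features(anxious, fs) - power_features(focused, fs)
    rows.append(contrast.ravel())

X = np.vstack(rows)   # subjects x (sensors * bands): the brain feature set
print(X.shape)
```

Each row of `X` is one subject's flattened contrast map, ordered sensor by sensor with the alpha value before the beta value. Real pipelines would typically use a more robust spectral estimator and source-level rather than sensor-level maps.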

Behavioral Measures:

- Collect temperament and personality scores (e.g., behavioral inhibition, anxiety scales) to form the behavioral feature set (Y).

Penalized CCA:

- Apply a penalized CCA method (e.g., using the `PMA` package in R, or CCA-Zoo in Python, since scikit-learn's `CCA` is unpenalized) to the brain contrast maps (X) and temperament scores (Y).

- Penalized CCA incorporates sparsity constraints (e.g., lasso penalty) to yield a model where only a subset of features in X and Y have non-zero weights, enhancing interpretability [30].

Validation and Interpretation:

- Use cross-validation to ensure the robustness of the discovered association.

- Interpret the sparse weight vectors to identify which brain regions (from the contrast map) and which temperament traits are key drivers of the correlation [30]. For instance, a study found that behavioral inhibition was positively correlated with high oscillatory power in the bilateral precuneus and low power in the bilateral temporal regions in an anxious-thought condition [30].
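
The penalized CCA step of this protocol can be illustrated with a simplified sketch in the spirit of the penalized matrix decomposition approach. It is not the `PMA` implementation and not the analysis of [30]: it treats the within-set covariance matrices as identity, estimates only the first mode, and the names `soft`, `l1_unit`, `sparse_cca`, the bounds `c1` and `c2`, and the synthetic data are assumptions introduced here. Each weight vector is updated by soft-thresholding, with the threshold found by bisection so that the L1 norm of the unit-length vector stays at or below its bound (to numerical tolerance).

```python
import numpy as np

def soft(a, d):
    """Soft-thresholding operator."""
    return np.sign(a) * np.maximum(np.abs(a) - d, 0.0)

def l1_unit(a, c, n_iter=50):
    """Unit-L2-norm soft-thresholded version of a with L1 norm about <= c."""
    if np.abs(a).sum() <= c * np.linalg.norm(a):
        return a / np.linalg.norm(a)
    lo, hi = 0.0, np.abs(a).max()
    for _ in range(n_iter):                 # bisection on the threshold
        mid = 0.5 * (lo + hi)
        s = soft(a, mid)
        if np.abs(s).sum() > c * np.linalg.norm(s):
            lo = mid                        # still too dense: threshold harder
        else:
            hi = mid
    s = soft(a, lo)
    return s / np.linalg.norm(s)

def sparse_cca(X, Y, c1, c2, n_iter=100):
    """First sparse canonical weight pair via alternating thresholded updates."""
    Xs = (X - X.mean(axis=0)) / X.std(axis=0)
    Ys = (Y - Y.mean(axis=0)) / Y.std(axis=0)
    C = Xs.T @ Ys                           # cross-product matrix (p x q)
    v = np.linalg.svd(C, full_matrices=False)[2][0]
    for _ in range(n_iter):
        u = l1_unit(C @ v, c1)
        v = l1_unit(C.T @ u, c2)
    return u, v

# Synthetic data: 3 "brain" features and 2 "temperament" scores share a signal.
rng = np.random.default_rng(0)
n, p, q = 100, 50, 8
z = rng.standard_normal(n)
X = rng.standard_normal((n, p))
Y = rng.standard_normal((n, q))
X[:, :3] += 2.0 * z[:, None]
Y[:, :2] += 2.0 * z[:, None]

u, v = sparse_cca(X, Y, c1=0.3 * np.sqrt(p), c2=0.6 * np.sqrt(q))
print("non-zero brain weights:", np.flatnonzero(u))
print("largest temperament weights:", np.argsort(-np.abs(v))[:2])
```

Smaller bounds give sparser weight vectors. In practice the bounds would be chosen by cross-validation or permutation, in the same way as the ridge penalties in Protocol 2.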

The Scientist's Toolkit: Essential Reagents and Computational Solutions

Table 3: Key Research Reagent Solutions for CCA in Neuroscience

| Item / Software Package | Function / Application | Example Use Case |
|---|---|---|
| MATLAB `canoncorr` | Performs standard CCA on sample data. | Basic CCA analysis with well-conditioned data where n > p, q [29]. |
| R `candisc`, `CCA`, `vegan` | Various R packages for CCA and visualization. | Conducting CCA and producing biplots for result interpretation [29]. |
| Python scikit-learn (`sklearn.cross_decomposition`) | Provides CCA and other multi-view methods. | Integrating CCA into a larger machine learning pipeline in Python [29]. |
| Python CCA-Zoo | Implements extensions like sparse, kernel, and deep CCA. | Applying structured or regularized CCA variants to high-dimensional data [29]. |
| R `PMA` (Penalized Multivariate Analysis) | Implements sparse CCA (SCCA). | Identifying a small subset of relevant brain and behavior features [33] [30]. |
| PCA (Preprocessing) | Dimension reduction technique to increase SVR. | Reducing voxel-wise brain maps to a manageable number of components before CCA [27]. |
| Regularization Parameters (λ₁, λ₂) | Tuneable hyperparameters for RCCA. | Controlling overfitting in high-dimensional datasets [32]. |

Advanced CCA Variants and Future Directions

To address the limitations of conventional CCA, several advanced variants have been developed. The following diagram maps the relationships between these different techniques.

These variants include:

- Sparse CCA (SCCA): Incorporates L1 (lasso) penalties to produce canonical weight vectors that are sparse, meaning many weights are exactly zero. This greatly enhances model interpretability by selecting the most important features from each set [33] [32].

- Kernel CCA (KCCA): A nonlinear extension of CCA that uses kernel functions to project data into a high-dimensional feature space where linear CCA is performed, thus capturing complex nonlinear relationships [28] [33].

- Group Regularized CCA (GRCCA): An extension of RCCA that incorporates known group structure among variables (e.g., genes in pathways, brain regions in networks), applying regularization that respects this structure [32].

- Multiset CCA (mCCA): Generalizes CCA to more than two datasets simultaneously, allowing for the integration of multiple modalities (e.g., structural MRI, functional MRI, genetics, behavior) in a single model [28].