Measuring the Mind: A 2024 Guide to Controlling Analytical Variation in Neuroimaging Research

This article provides a comprehensive framework for understanding, measuring, and mitigating analytical variation in neuroimaging experiments, essential for ensuring reproducibility and translational validity.

Abstract

This article provides a comprehensive framework for understanding, measuring, and mitigating analytical variation in neuroimaging experiments, essential for ensuring reproducibility and translational validity. We first establish the core sources and impact of analytical variability, from pipeline choices to software versions. We then detail methodological best practices for robust experimental design and data processing. A dedicated troubleshooting section addresses common pitfalls and optimization strategies. Finally, we review current validation standards and comparative evaluation frameworks for benchmarking analysis pipelines. Targeted at researchers and drug development professionals, this guide synthesizes recent consensus from large-scale initiatives to empower more reliable, clinically impactful neuroimaging science.

Defining the Problem: What is Analytical Variation and Why Does It Threaten Neuroimaging Reproducibility?

Within the broader thesis on best practices for capturing analytical variation in neuroimaging experiments, this guide addresses the "analytical noise" that arises from methodological choices in data processing and statistical analysis. This noise is a primary contributor to the reproducibility crisis, where studies fail to replicate due to hidden degrees of freedom in analytical pipelines.

The following tables summarize key quantitative findings from recent meta-research on analytical variability in neuroimaging.

Table 1: Impact of Analytical Choices on fMRI Results

| Analytical Choice | Range of Effect Size Variation | Key Study (Year) |
|---|---|---|
| Software Package (FSL, SPM, AFNI) | Cohen's d variation up to 0.8 | Botvinik-Nezer et al., 2020 (Nature) |
| Smoothing Kernel (4mm vs. 8mm FWHM) | >50% change in cluster extent | Carp, 2012 (NeuroImage) |
| Motion Correction Strategy | Can reverse sign of correlation | Power et al., 2015 (PNAS) |
| Statistical Threshold (p<0.01 vs. p<0.001) | 30-60% difference in activated voxels | Nieuwenhuis et al., 2011 (Nature Neuroscience) |
| Region-of-Interest (ROI) Definition | Correlation differences up to r=0.4 | Bowring et al., 2019 (NeuroImage) |

Table 2: Multilab Consortium Results for a Single Task

| Consortium / Project | Number of Analysis Teams | Key Outcome Metric | Result Variability |
|---|---|---|---|
| NARPS (Neuroimaging Analysis Replication and Prediction Study) | 70 teams | Decision on hypothesis support | 29 teams supported, 21 teams rejected, 20 inconclusive |
| ABIDE (Autism Brain Imaging Data Exchange) | 15 analysis pipelines | Classification accuracy (Autism vs. Control) | Range: 28% to 85% accuracy |
| IMAGEN | Multiple pipelines | Brain-wide association study (BWAS) effect sizes | Major variability in significant loci |

Experimental Protocols for Quantifying Analytical Noise

Protocol 1: The Multiverse Analysis Framework

This protocol systematically explores the "garden of forking paths" in an analysis pipeline.

- Data Acquisition: Start with a single, high-quality raw dataset (e.g., resting-state fMRI from 100 participants).

- Define Analysis Nodes: List every step in the pipeline where a legitimate analytical choice exists (e.g., slice-timing correction: yes/no; denoising strategy: ICA-AROMA vs. global signal regression).

- Generate Pipeline Instances: Create every possible combination of choices (or a large, random subset if the space is too large). This yields N unique analysis pipelines.

- Execute and Compute Outcome: Run each pipeline to compute the primary outcome measure (e.g., group-level t-statistic map for a contrast).

- Quantify Variance: Calculate the voxel-wise standard deviation of the outcome measure across all N pipelines. This map represents the "analytical noise floor."
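The steps above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not a reference implementation: the decision nodes in `NODES`, the `run_pipeline` stand-in, and the five-voxel "maps" are hypothetical placeholders for real preprocessing and group-level modelling.

```python
import itertools
import random
import statistics

# Hypothetical decision nodes; a real study would list its own choices.
NODES = {
    "slice_timing": [True, False],
    "denoising": ["ica_aroma", "gsr"],
    "smoothing_fwhm_mm": [4, 6, 8],
}

def enumerate_pipelines(nodes):
    """Return every combination of choices as a list of dicts (full factorial)."""
    names = list(nodes)
    return [dict(zip(names, combo)) for combo in itertools.product(*nodes.values())]

def run_pipeline(config, n_voxels=5):
    """Placeholder for a real pipeline run: returns a fake t-statistic map.

    Values are pseudo-random numbers seeded by the configuration, so the
    same configuration always yields the same map.
    """
    rng = random.Random(repr(sorted(config.items())))
    return [rng.gauss(2.0, 0.5) for _ in range(n_voxels)]

def voxelwise_sd(maps):
    """Standard deviation across pipelines, computed separately per voxel."""
    return [statistics.stdev(values) for values in zip(*maps)]

pipelines = enumerate_pipelines(NODES)
maps = [run_pipeline(cfg) for cfg in pipelines]
noise_floor = voxelwise_sd(maps)
print(len(pipelines), "pipelines; noise floor:", [round(v, 2) for v in noise_floor])
```

In practice `run_pipeline` would launch a containerized workflow and load the resulting statistic image; only the enumeration and the per-voxel standard deviation carry over unchanged.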

Protocol 2: Benchmarking Datasets with Ground Truth (Simulated Phantoms)

This protocol uses data where the true signal is known.

- Phantom Data Generation: Use a neuroimaging simulator (e.g., fMRISim, BrainWeb) to create raw MRI data. Embed a known, quantitative ground truth signal (e.g., a specific activation pattern with defined amplitude).

- Invite Multiple Analysis Teams: Distribute the synthetic data to different labs or analysts.

- Independent Analysis: Each team processes the data using their preferred, published pipeline.

- Benchmark Comparison: Compare each team's final statistical map against the known ground truth. Calculate metrics like sensitivity, specificity, and bias in effect size estimation.
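As a rough sketch of the benchmark comparison step, the function below scores one team's result against a simulated ground truth. The function name, the per-voxel list representation, and the toy numbers are assumptions made for illustration; bias is computed only over voxels that truly contain signal, and the sketch assumes both signal and non-signal voxels are present.

```python
def benchmark_against_truth(estimated, truth, detected):
    """Compare one team's result with a simulated ground truth.

    estimated: estimated effect size per voxel
    truth:     true effect size per voxel (0.0 means no signal)
    detected:  True where the team declared the voxel active
    """
    pairs = list(zip(detected, truth))
    tp = sum(d and t != 0 for d, t in pairs)        # true positives
    fn = sum((not d) and t != 0 for d, t in pairs)  # missed signal
    tn = sum((not d) and t == 0 for d, t in pairs)  # true negatives
    fp = sum(d and t == 0 for d, t in pairs)        # false alarms
    active = [(e, t) for e, t in zip(estimated, truth) if t != 0]
    bias = sum(e - t for e, t in active) / len(active)
    return {
        "sensitivity": tp / (tp + fn),
        "specificity": tn / (tn + fp),
        "effect_size_bias": bias,
    }

# Toy example: three truly active voxels followed by five null voxels.
truth = [1.0, 1.0, 1.0, 0.0, 0.0, 0.0, 0.0, 0.0]
estimated = [1.3, 0.9, 0.4, 0.1, -0.2, 0.6, 0.0, 0.1]
detected = [True, True, False, False, False, True, False, False]
result = benchmark_against_truth(estimated, truth, detected)
print(result)
```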

Protocol 3: Pre-Registration and Registered Reports

This protocol minimizes analytical noise by locking choices a priori.

- Study Design & Analysis Plan: Before data collection or access, authors submit a complete methods section, including detailed, executable analysis code (e.g., as a containerized pipeline).

- Peer Review: The introduction, methods, and proposed analyses are reviewed for soundness.

- In-Principle Acceptance (IPA): The journal grants IPA based on the protocol, guaranteeing publication regardless of the outcome.

- Data Collection & Analysis: Data is collected and analyzed exactly as pre-registered.

- Deviation Reporting: Any post-hoc deviation from the registered plan must be explicitly justified and its impact discussed.

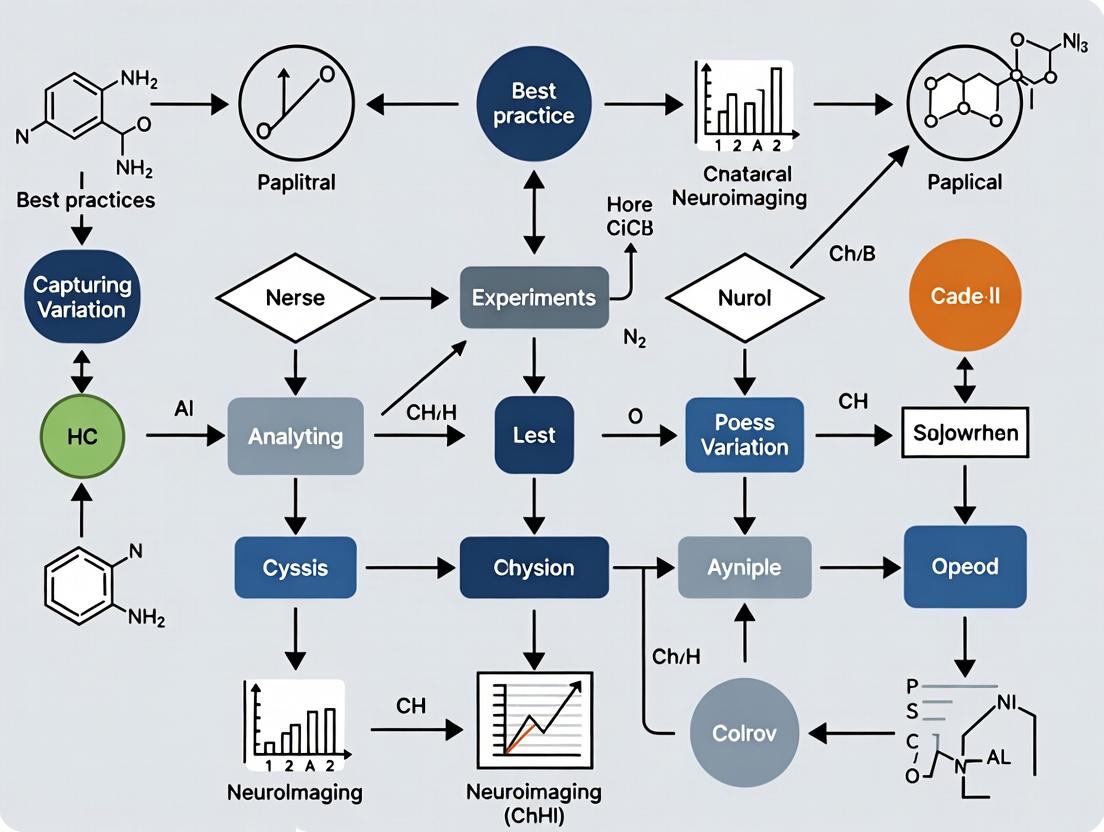

Diagram Title: Neuroimaging Pipeline & Noise Sources

Diagram Title: Multiverse Analysis Protocol Flow

The Scientist's Toolkit: Essential Reagents & Solutions

Table 3: Key Research Reagent Solutions for Managing Analytical Variation

| Item Name | Function/Benefit | Example/Format |
|---|---|---|
| Standardized Reference Datasets | Provides a common ground truth for benchmarking pipelines. Enables quantification of analytical bias. | Human Connectome Project (HCP) data; COBRE; ADHD-200; OpenNeuro datasets. |
| Containerized Analysis Environments | Freezes software versions and dependencies, eliminating "works on my machine" variability. | Docker or Singularity containers (e.g., fMRIPrep, Boutiques). |
| Pipeline Specification Tools | Allows precise, machine-readable documentation of every analysis step and parameter. | Common Workflow Language (CWL); Nipype pipelines; BIDS Apps. |
| Data Standardization Frameworks | Structures raw data uniformly, reducing errors in the initial processing steps. | Brain Imaging Data Structure (BIDS) specification. |
| Pre-Registration Platforms | Facilitates time-stamped, public registration of analysis plans before data inspection. | OSF Registries; AsPredicted. |
| Analysis-Sharing Platforms | Enables full replication, including code, environment, and data derivatives. | Code Ocean; Gigantum; NeuroVault (for results). |
| Meta-Analysis & Harmonization Tools | Corrects for cross-site and cross-protocol variability in multi-study analyses. | ComBat; ENIGMA Consortium protocols; random-effects models. |
| Quantitative Phantoms | Software or physical objects with known properties to validate MRI sequences and processing. | Digital Brain Phantom (e.g., from SPM); MRI system manufacturer phantoms. |

Mitigating the reproducibility crisis requires treating analytical pipelines as a major source of experimental variance. Best practices mandate the systematic capture and reporting of this variance through multiverse analyses, the use of standardized tools and data formats, and the adoption of pre-registration. By quantifying analytical noise, the field can distinguish true neurobiological signals from the artifacts of methodological choice, leading to more robust and replicable science.

In neuroimaging experiments, accurate measurement of brain structure and function is confounded by multiple, interacting sources of variability. Distinguishing true biological signal from confounding noise is paramount for robust statistical inference, particularly in translational drug development. This technical guide deconstructs variability into its three principal components (biological, technical, and analytical) as a basis for best practices in capturing and controlling analytical variation. A systematic understanding of these sources is essential for optimizing experimental design, ensuring reproducibility, and validating biomarkers.

Biological Variability

Biological variability refers to genuine differences between subjects or within a subject over time, arising from genetic, physiological, or behavioral factors.

Intrinsic Biological Factors

- Genetic Polymorphisms: Heritable differences affecting brain morphology and circuit function.

- Age & Lifespan Effects: Non-linear changes in brain volume, connectivity, and perfusion.

- Sex & Hormonal Cycles: Structural dimorphisms and functional fluctuations tied to hormonal states.

- Cognitive State & Physiology: Fluctuations in attention, arousal, caffeine levels, cardiac and respiratory cycles.

Extrinsic Biological Factors

- Disease Progression/Subtype: Heterogeneity in pathology presentation and trajectory.

- Medication/Intervention Effects: Target engagement and downstream biological changes.

- Environmental & Lifestyle Factors: Diet, sleep, physical activity, and chronic stress.

Quantifying Biological Variance

Biological variance is typically estimated as the between-subject variance component in a mixed-effects model. In large-scale consortia like the UK Biobank or ADNI, it often constitutes the largest fraction of total variance in morphometric measures.

Table 1: Estimated Biological Variance Components in Common Neuroimaging Metrics

| Neuroimaging Metric | Population | Estimated Biological Variance (%) | Primary Source |
|---|---|---|---|
| Grey Matter Volume (Regional) | Healthy Adults (20-80 yrs) | 40-60% | ENIGMA Consortium, 2022 |
| White Matter Fractional Anisotropy | Healthy Adults | 30-50% | Human Connectome Project, 2023 |
| Resting-state fMRI (Default Mode Network amplitude) | Healthy Adults | 25-40% | BIOS Consortium, 2023 |
| Amyloid-β PET SUVR | Cognitively Normal Elderly | 20-35% | Alzheimer's Disease Neuroimaging Initiative (ADNI-4), 2024 |

Technical Variability

Technical (or measurement) variability is introduced by the instrumentation, acquisition protocols, and experimental procedures.

- Manufacturer & Model Differences: Variations in gradient performance, coil design, and software.

- Magnetic Field (B0) Instability: Drift and fluctuations leading to geometric distortion and signal loss.

- Radiofrequency (B1) Inhomogeneity: Non-uniform excitation affecting signal intensity, especially at higher field strengths.

Sequence & Protocol Parameters

- Sequence Type: Differences between T1-weighted sequences such as MPRAGE and SPGR.

- Acquisition Parameters: TR/TE/TI, resolution, multiband acceleration factors.

- Subject Positioning & Motion: The single largest source of within-session technical noise.

Longitudinal Instability

- Scanner Upgrades/Repairs: Changes in gradient tables or software versions.

- Phantom Signal Drift: Calibration errors over time.

Experimental Protocol for Quantifying Technical Variance

Title: Test-Retest Reliability Assessment for MRI Sequences.

Objective: To isolate intra-scanner technical variance for a specific imaging protocol.

Design: Repeated measurements on the same subject(s) over a short timeframe (e.g., same-day or 1-week apart) to minimize biological change.

Participants: N ≥ 10 healthy volunteers (allows variance component estimation).

Procedure:

- Positioning: Subject is positioned in the scanner according to standard operating procedure (SOP) using laser alignment and head cushions.

- Initial Scan: Acquire full protocol (e.g., T1w, T2w, rs-fMRI, dMRI).

- Re-positioning: Subject exits the scanner bore, walks out of the scan room, and re-enters after a 15-minute break.

- Re-scan: The subject is re-positioned by the same technician, and the identical protocol is re-acquired.

- Analysis: Images are processed through a standardized pipeline. For each metric, the Intraclass Correlation Coefficient (ICC(2,1)) and Coefficient of Variation (CoV) are calculated across the two sessions.
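The analysis step can be illustrated with a small, dependency-free sketch. It computes ICC(2,1) in its usual two-way random-effects, absolute-agreement, single-measurement form from the ANOVA mean squares, plus a mean within-subject coefficient of variation. The function names and the cortical thickness values are hypothetical; a production analysis would more likely rely on an established statistics package.

```python
import statistics

def icc_2_1(scores):
    """ICC(2,1): two-way random effects, absolute agreement, single measurement.

    scores: one row per subject, one column per session.
    """
    n, k = len(scores), len(scores[0])
    grand = sum(map(sum, scores)) / (n * k)
    row_means = [sum(row) / k for row in scores]
    col_means = [sum(col) / n for col in zip(*scores)]
    ss_rows = k * sum((m - grand) ** 2 for m in row_means)   # between subjects
    ss_cols = n * sum((m - grand) ** 2 for m in col_means)   # between sessions
    ss_total = sum((x - grand) ** 2 for row in scores for x in row)
    ms_rows = ss_rows / (n - 1)
    ms_cols = ss_cols / (k - 1)
    ms_err = (ss_total - ss_rows - ss_cols) / ((n - 1) * (k - 1))
    return (ms_rows - ms_err) / (
        ms_rows + (k - 1) * ms_err + k * (ms_cols - ms_err) / n
    )

def within_subject_cov(scores):
    """Mean within-subject coefficient of variation, in percent."""
    covs = [statistics.stdev(row) / statistics.mean(row) for row in scores]
    return 100 * statistics.mean(covs)

# Hypothetical cortical thickness (mm) for five subjects, two sessions each.
thickness = [[2.51, 2.49], [2.70, 2.74], [2.35, 2.33], [2.62, 2.60], [2.45, 2.48]]
print(round(icc_2_1(thickness), 3), round(within_subject_cov(thickness), 2))
```

Because ICC(2,1) penalizes systematic session offsets as well as random disagreement, a consistent shift between scan and re-scan lowers it even when subjects keep their rank order.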

Table 2: Typical Technical Variance (Test-Retest Reliability) Metrics

| Modality | Metric | ICC(2,1) Range | Within-Session CoV | Key Source of Variance |
|---|---|---|---|---|
| Structural MRI | Cortical Thickness | 0.85 - 0.98 | 0.5 - 2.0% | Segmentation algorithm, motion |
| Resting-state fMRI | Functional Connectivity (edge strength) | 0.50 - 0.80 | 5 - 15% | Subject motion, physiological noise |
| Diffusion MRI | Fractional Anisotropy (Tractography) | 0.70 - 0.90 | 2 - 8% | Eddy currents, motion, tractography model |
| Arterial Spin Labeling | Cerebral Blood Flow (grey matter) | 0.60 - 0.85 | 8 - 12% | Physiological fluctuation, labeling efficiency |

Diagram 1: Sources of Technical Variability

Analytical Variability

Analytical (or methodological) variability stems from choices in data processing, statistical modeling, and software implementation.

Preprocessing Pipelines

- Software Platform: Differences between FSL, SPM, AFNI, FreeSurfer, and ANTs.

- Algorithmic Choices: Registration method (linear vs. non-linear), segmentation algorithm (atlas-based vs. classifier-based), smoothing kernel size.

- Denoising Strategies: Physiological noise correction (COMPCOR, RETROICOR), motion censoring (e.g., framewise displacement threshold).

Statistical Modeling & Inference

- Model Specification: Inclusion of covariates (e.g., age, sex, ICV), handling of interactions.

- Multiple Comparison Correction: Method (FWE, FDR, cluster-based) and threshold.

- Statistical Software & Version: Differences in default algorithms or random number generators.

Experimental Protocol for Quantifying Analytical Variance

Title: Multiverse Analysis for Pipeline Robustness Assessment.

Objective: To quantify the variance in outcomes attributable to analytical choices.

Design: A "multiverse" or "specification curve" analysis applied to a single dataset.

Input Data: A curated dataset (e.g., from an open repository like OpenNeuro) with matched clinical/phenotypic information.

Procedure:

- Define Decision Points: Identify key analytical choices (e.g., preprocessing software, normalization target, smoothing kernel, statistical model covariates).

- Generate Pipeline Variants: Systematically create all reasonable combinations (the "multiverse") of these choices.

- Parallel Processing: Run the target dataset through all pipeline variants using a high-performance computing cluster.

- Extract Outcome Metrics: For each pipeline, extract the primary outcome (e.g., effect size for a group difference, correlation coefficient with a behavior).

- Quantify Variance: Calculate the distribution of the outcome metric across all pipelines. The standard deviation or range of this distribution quantifies analytical uncertainty. Report the proportion of pipelines yielding a statistically significant result.
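The final step might be summarized as follows. This is a sketch only: the function name, the six effect estimates, and their standard errors are invented for illustration, and significance is judged with a two-sided z-test purely for simplicity.

```python
import statistics
from statistics import NormalDist

def summarise_multiverse(effects, std_errors, alpha=0.05):
    """Summarise one outcome across pipeline variants.

    effects / std_errors: effect estimate and standard error per pipeline.
    Significance uses a two-sided z-test, an assumption made here for
    simplicity; substitute the test appropriate to the actual model.
    """
    z_crit = NormalDist().inv_cdf(1 - alpha / 2)
    significant = [abs(e / se) > z_crit for e, se in zip(effects, std_errors)]
    return {
        "n_pipelines": len(effects),
        "median": statistics.median(effects),
        "sd": statistics.stdev(effects),
        "range": (min(effects), max(effects)),
        "proportion_significant": sum(significant) / len(effects),
    }

# Hypothetical group-difference estimates from six pipeline variants.
effects = [0.31, 0.45, 0.12, 0.38, 0.05, 0.52]
std_errors = [0.15] * 6
summary = summarise_multiverse(effects, std_errors)
print(summary)
```

Sorting the per-pipeline estimates and plotting them against their defining choices turns the same numbers into a specification curve.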

Table 3: Analytical Variance in Common Processing Decisions

| Processing Stage | Common Choice | Alternative Choice | Impact on Key Metric (Example) |
|---|---|---|---|
| T1 Segmentation | FreeSurfer v7.3.2 | SPM12 CAT12 | Hippocampal volume diff. up to 8% |
| fMRI Motion Correction | Volume Registration (FSL) | Volume Registration (AFNI) | Negligible difference in displacement estimates |
| Global Signal Regression | Included | Not Included | Can reverse sign of functional connectivity correlations |
| dMRI Tractography | Deterministic (FACT) | Probabilistic (Probtrackx) | Tract volume estimates vary by 20-40% |

Diagram 2: Analytical Variability Multiverse

The Scientist's Toolkit: Research Reagent Solutions

Table 4: Essential Tools for Characterizing Neuroimaging Variability

| Item / Solution | Category | Function & Rationale |
|---|---|---|
| Geometric Phantom | Technical Control | A physical object with known dimensions and signal properties for quantifying scanner geometric distortion, intensity uniformity, and spatial resolution. |
| Multimodal Dynamic Phantom (e.g., "MAGIC") | Technical Control | A programmable phantom that can simulate physiological signals (e.g., cardiac, respiratory) and motion to test and validate pulse sequences and processing pipelines under controlled conditions. |
| Standardized Reference Dataset (e.g., "MCIC") | Analytical Control | A publicly available, high-quality dataset with known ground truth or consensus findings, used as a benchmark to validate new processing pipelines and quantify analytical variability. |
| Containerized Processing Pipeline (e.g., Docker/Singularity) | Analytical Control | A software container that encapsulates a complete analysis environment (OS, libraries, code) to eliminate "works on my machine" variability and ensure computational reproducibility. |
| Longitudinal Traveling Subject/Human Phantom | Biological/Technical Control | A small cohort of individuals scanned repeatedly across all sites/machines in a multi-center study to directly estimate and calibrate out inter-site technical variance. |
| High-Resolution Multishell Diffusion Phantom | Technical Control | Physical phantom with known diffusion properties for characterizing and correcting dMRI sequence distortions, eddy currents, and gradient nonlinearities. |
| Version-Controlled Analysis Scripts (e.g., Git) | Analytical Control | Tracks every change to analysis code, allowing precise replication of any past analysis and clear attribution of results to specific software states. |
| Open-Source Processing Framework (e.g., Nipype, fMRIPrep) | Analytical Control | Provides standardized, best-practice implementations of common preprocessing steps, reducing variability introduced by in-house script differences. |

Synthesis and Best Practices for Capturing Analytical Variation

Best practices require proactive measurement and reporting of all variance components.

Pre-Experiment Planning:

- Power Analysis: Use estimates of biological and technical variance from published tables to inform sample size.

- Protocol Harmonization: Use SOPs for acquisition, phantoms, and traveling subjects in multi-center studies.

- Pre-registration: Publicly register analysis plans to distinguish confirmatory from exploratory analysis.
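To make the power-analysis item concrete, the sketch below shows one way variance estimates can feed a sample-size calculation. It assumes a two-group comparison with the standard normal-approximation formula, and it assumes that measurement reliability (for example, biological variance divided by biological plus technical variance) attenuates a standardized effect by the square root of the reliability. The function name and the example numbers are illustrative, not recommendations.

```python
import math
from statistics import NormalDist

def n_per_group(true_d, reliability=1.0, alpha=0.05, power=0.80):
    """Approximate per-group sample size for a two-sided, two-sample comparison.

    true_d:      standardized effect in error-free measurements
    reliability: share of observed variance that is true-score variance
    Uses the normal approximation n = 2 * (z_alpha + z_beta)^2 / d_observed^2.
    """
    observed_d = true_d * math.sqrt(reliability)  # attenuation by unreliability
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)
    z_beta = NormalDist().inv_cdf(power)
    return math.ceil(2 * (z_alpha + z_beta) ** 2 / observed_d ** 2)

print(n_per_group(0.5))                   # perfectly reliable measure
print(n_per_group(0.5, reliability=0.6))  # same effect, noisier measure
```

The comparison of the two calls illustrates why test-retest estimates belong in the planning stage: lower reliability raises the required sample size for the same underlying effect.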

During Data Acquisition:

- Collect Auxiliary Data: Acquire physiological monitoring (cardiac, respiratory) and structured motion metrics for noise modeling.

- Implement QC in Real-Time: Use automated quality assessment (e.g., MRIQC) to flag and potentially re-acquire poor-quality scans.

During Data Analysis:

- Adopt Containerization: Use Docker/Singularity containers for processing.

- Perform Multiverse/Sensitivity Analyses: Systematically test the robustness of key findings to analytical choices.

- Report Variance Components: Where possible, report estimates of biological/technical/analytical variance for primary outcomes.

Reporting & Dissemination:

- Adhere to Community Standards: Follow guidelines like COBIDAS, ARRIVE, or MIAMI.

- Share Code & Data: Use public repositories (e.g., GitHub, OpenNeuro) to share raw data, code, and derivatives, with data organized according to the BIDS standard.

- Quantify and Report Uncertainty: Present confidence intervals for effect sizes and explicitly discuss sources of analytical uncertainty in the manuscript.

By systematically deconstructing, measuring, and mitigating these three pillars of variability, neuroimaging research can achieve the rigor and reproducibility required for definitive neuroscience and robust drug development.

Within the context of best practices for capturing analytical variation in neuroimaging experiments, the concept of 'Researcher Degrees of Freedom' (RDoF) has emerged as a critical concern. Flexible analytical pipelines, while enabling methodological innovation, inadvertently introduce a multidimensional space of choices that can significantly influence experimental outcomes. This whitepaper details how these flexibilities manifest in neuroimaging data analysis and provides structured guidance for quantifying and managing this analytical variation, particularly relevant for preclinical and clinical drug development research.

Quantitative Landscape of Analytical Variation

Recent empirical studies have quantified the impact of pipeline variability on neuroimaging results. The data below summarizes key findings from the literature.

Table 1: Impact of Analytical Choices on Neuroimaging Outcomes

| Analysis Domain | Number of Common Pipeline Variants | Reported Effect Size Variation | Key Influencing Choice |
|---|---|---|---|
| fMRI Preprocessing | 20+ | Cohen's d: 0.2 to 1.7 | Motion correction algorithm, smoothing kernel |
| Structural MRI Segmentation | 15+ | Volume difference: 5-15% | Atlas selection, tissue probability threshold |
| Diffusion MRI Tractography | 30+ | Tract count variation: 10-40% | Tracking algorithm, curvature threshold |
| Task fMRI GLM Analysis | 25+ | Activated voxel difference: 15-30% | HRF model, multiple comparison correction |
| Resting-State Connectivity | 20+ | Correlation variance: 0.1-0.3 | Band-pass filter range, global signal regression |

Table 2: Sources of Researcher Degrees of Freedom in a Typical Neuroimaging Pipeline

| Pipeline Stage | Typical Number of Choice Points | Example Decisions | Potential Outcome Divergence |
|---|---|---|---|
| Data Acquisition | 5-10 | Sequence parameters, coil configuration, resolution | Signal-to-Noise Ratio variation |
| Preprocessing | 15-25 | Slice timing correction, motion censoring threshold, distortion correction method | Inter-subject alignment quality |
| First-Level Analysis | 10-20 | Hemodynamic response function, temporal derivative inclusion, serial correlation model | Individual activation maps |
| Second-Level (Group) Analysis | 10-15 | Normalization method, statistical model (fixed/random effects), outlier handling | Group statistic maps |
| Statistical Inference | 5-10 | Cluster-forming threshold, multiple comparison method, significance threshold | Final reported results |

Experimental Protocols for Quantifying Pipeline Variability

The Multiverse Analysis Protocol

Objective: To systematically quantify the impact of analytical choices on a specific hypothesis.

Materials: A single neuroimaging dataset (e.g., a publicly available cohort from ABIDE or HCP).

Method:

- Define the Analysis Space: Enumerate all reasonable analytical choices at each pipeline stage.

- Generate Pipeline Instances: Create a full factorial or random sample of all possible pipeline combinations.

- Execute All Pipelines: Apply each pipeline variant to the same dataset using containerized computing (Docker/Singularity).

- Compute Outcome Distribution: For each brain region or statistical parameter of interest, calculate the distribution of results across all pipelines.

- Quantify Variability: Compute the variance, range, and confidence intervals of the effect sizes across the "multiverse" of analyses.
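When the full factorial is too large to run, the random-sample option can be sketched as below, together with a simple percentile interval over the resulting effect sizes. Both helper names and the toy `SPACE` dictionary are assumptions for illustration; the interval describes the spread of results across sampled pipelines and is not a sampling-theory confidence interval.

```python
import math
import random

def sample_pipelines(nodes, n_samples, seed=0):
    """Draw distinct pipeline configurations at random without enumerating all of them."""
    rng = random.Random(seed)
    total = math.prod(len(options) for options in nodes.values())
    target = min(n_samples, total)
    seen = {}
    while len(seen) < target:
        combo = tuple(rng.choice(options) for options in nodes.values())
        seen[combo] = dict(zip(nodes, combo))  # duplicates overwrite themselves
    return list(seen.values())

def percentile_interval(values, coverage=0.95):
    """Central interval holding roughly `coverage` of the values (nearest rank)."""
    ordered = sorted(values)
    lo_idx = int((1 - coverage) / 2 * (len(ordered) - 1))
    hi_idx = len(ordered) - 1 - lo_idx
    return ordered[lo_idx], ordered[hi_idx]

# Hypothetical analysis space: five stages with three options each (243 pipelines).
SPACE = {f"stage_{i}": ["a", "b", "c"] for i in range(5)}
subset = sample_pipelines(SPACE, n_samples=50)
print(len(subset), "of", 3 ** 5, "pipelines sampled")
```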

The Consensus Benchmarking Protocol

Objective: To establish a consensus result from multiple independent analytical teams.

Method:

- Data Distribution: Provide identical raw datasets to multiple analysis teams (minimum: 5 teams).

- Independent Analysis: Each team processes data using their preferred, validated pipeline.

- Result Collection: Collect primary outcome measures from all teams.

- Meta-Analysis: Apply random-effects meta-analysis to combine results, quantifying between-team heterogeneity (I² statistic).

- Sensitivity Analysis: Identify analytical choices most strongly associated with result divergence.
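The meta-analysis step can be illustrated with the DerSimonian-Laird estimator, one common way to fit a random-effects model; the protocol does not specify an estimator, so this choice is an assumption. The team-level effects and variances below are invented for the example.

```python
def random_effects_meta(effects, variances):
    """DerSimonian-Laird random-effects pooling with the I² heterogeneity statistic."""
    k = len(effects)
    w = [1 / v for v in variances]                      # inverse-variance weights
    fixed = sum(wi * yi for wi, yi in zip(w, effects)) / sum(w)
    q = sum(wi * (yi - fixed) ** 2 for wi, yi in zip(w, effects))  # Cochran's Q
    c = sum(w) - sum(wi ** 2 for wi in w) / sum(w)
    tau2 = max(0.0, (q - (k - 1)) / c)                  # between-team variance
    w_star = [1 / (v + tau2) for v in variances]
    pooled = sum(wi * yi for wi, yi in zip(w_star, effects)) / sum(w_star)
    i2 = max(0.0, (q - (k - 1)) / q) * 100 if q > 0 else 0.0
    return {"pooled": pooled, "tau2": tau2, "Q": q, "I2_percent": i2}

# Hypothetical effect sizes reported by five teams, with equal sampling variance.
team_effects = [0.2, 0.5, 0.8, 0.1, 0.6]
team_variances = [0.01] * 5
meta = random_effects_meta(team_effects, team_variances)
print({name: round(value, 3) for name, value in meta.items()})
```

A high I² here indicates that most of the spread between teams exceeds what sampling error alone would produce, which is the signal that analytical choices are driving divergence.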

The Parameter Sweep Simulation

Objective: To map the sensitivity of results to specific parameter choices.

Method:

- Select a Base Pipeline: Choose a standard pipeline (e.g., fMRIPrep for fMRI).

- Identify Critical Parameters: Select 3-5 parameters suspected of high influence (e.g., smoothing FWHM, motion threshold).

- Define Parameter Ranges: Set physiologically/statistically plausible ranges for each.

- Grid Search: Perform a full grid search across parameter combinations.

- Response Surface Modeling: Fit a model to understand how parameters influence outcomes.
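A compact sketch of the grid search follows. The `toy_outcome` function is a hypothetical stand-in for running the base pipeline and extracting an effect size, and the per-level means computed by `main_effects` are only a crude first look at the response surface; a fitted regression or smoother would normally replace them.

```python
import itertools
import statistics

def grid_search(param_grid, outcome_fn):
    """Evaluate outcome_fn at every point of the full parameter grid."""
    names = list(param_grid)
    results = []
    for combo in itertools.product(*param_grid.values()):
        params = dict(zip(names, combo))
        results.append((params, outcome_fn(**params)))
    return results

def main_effects(results, name):
    """Mean outcome at each level of one parameter, averaged over the others."""
    by_level = {}
    for params, outcome in results:
        by_level.setdefault(params[name], []).append(outcome)
    return {level: statistics.mean(vals) for level, vals in sorted(by_level.items())}

# Hypothetical stand-in for a pipeline run that returns an effect size.
def toy_outcome(smoothing_fwhm_mm, fd_threshold_mm):
    return 0.30 + 0.02 * smoothing_fwhm_mm - 0.40 * fd_threshold_mm

grid = {"smoothing_fwhm_mm": [4, 6, 8], "fd_threshold_mm": [0.2, 0.5]}
results = grid_search(grid, toy_outcome)
smoothing_effect = main_effects(results, "smoothing_fwhm_mm")
print(smoothing_effect)
```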

Workflow Diagrams

Diagram Title: Researcher Degrees of Freedom in Neuroimaging Pipeline

Diagram Title: Protocol for Quantifying Analytical Variation

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Tools for Managing Analytical Variation

| Tool/Reagent | Primary Function | Application in RDoF Management |
|---|---|---|
| Containerization Platforms (Docker, Singularity) | Create reproducible computational environments | Ensures identical software versions across all analyses |
| Pipeline Frameworks (Nipype, fMRIPrep, QSIPrep) | Standardized processing workflows | Reduces implementation variability between researchers |
| Version Control Systems (Git, DataLad) | Track exact analytical code and parameters | Enables precise replication of any pipeline instance |
| Data Standards & Databases (BIDS, COINS, XNAT) | Standardized data organization | Eliminates variability in data structure and naming |
| Meta-Analysis Software (Seed-based d Mapping, NiMARE) | Combine results across multiple analyses | Quantifies between-pipeline heterogeneity |
| Parameter Optimization Suites (Optuna, Hyperopt) | Systematic exploration of parameter spaces | Maps sensitivity of results to specific parameter choices |
| Standardized Pipelines (BIDS Apps, C-PAC) | Community-developed standardized pipelines | Provides consensus starting points for analysis |

Mitigation Strategies for Drug Development Research

For translational neuroimaging in drug development, the following practices are recommended:

- Pre-registration of Analytical Pipelines: Before data collection or unblinding, document the exact pipeline with all choice points specified.

- Pipeline Registration: Develop and register multiple plausible pipelines, reporting results from all.

- Sensitivity Reporting: Include supplementary materials showing how key results vary with analytical choices.

- Blinded Analysis: Keep analysts blinded to group allocation during pipeline development and initial application.

- Consensus Meetings: For pivotal studies, convene analysis teams to agree on primary pipeline before unblinding.

The flexibility inherent in neuroimaging analysis pipelines creates substantial Researcher Degrees of Freedom that can influence scientific conclusions, particularly in drug development contexts where effect sizes may be modest. By implementing systematic protocols for quantifying this variability, using standardized tools, and transparently reporting analytical flexibility, researchers can better capture and communicate the uncertainty in their findings, leading to more reproducible and reliable neuroimaging science.

This whitepaper examines the pervasive issues of effect size inflation and false discovery in neuroimaging research, contextualized within a broader thesis on capturing analytical variation. It presents quantitative case studies, details methodological pitfalls, and provides protocols to mitigate these risks, thereby enhancing the reliability of findings for translational drug development.

Neuroimaging experiments are particularly susceptible to analytical flexibility, which can dramatically inflate reported effect sizes and increase false positive rates. This undermines reproducibility and the translation of biomarkers into clinical drug development pipelines.

| Study & Year | Neuroimaging Modality | Primary Analysis | Reported Effect Size (Inflation Adjusted) | Inflated Effect Size (Original) | Inflation Factor | Key Source of Bias |
|---|---|---|---|---|---|---|
| Botvinik-Nezer et al. (2020) | fMRI | Pain prediction | Cohen's d = 0.42 | Cohen's d = 0.70 - 1.57 | 1.7 - 3.7 | Analytic flexibility (model selection) |
| Carp (2012) | fMRI | Task activation | -- | -- | 40-80% false positive rate | Cluster-size thresholding |
| Eklund et al. (2016) | fMRI (resting state) | Null data analysis | Nominal family-wise error rate (FWER) = 0.05 | Empirical FWER up to 0.7 (for cluster inference) | Up to 14x nominal rate | Invalid parametric assumptions |
| IBMA Simulation (2022) | Multimodal Meta-Analysis | Voxel-based mapping | Hedges' g = 0.5 (true) | Hedges' g = 0.8 (aggregated) | 1.6 | Publication bias, selective reporting |

Detailed Experimental Protocols

Protocol: The "Multiverse" or Specification Curve Analysis for fMRI

Purpose: To quantify analytical variation and its impact on effect size.

- Data Acquisition: Acquire a task-based fMRI dataset (e.g., N-back working memory task).

- Define Analysis Pipelines: Systematically vary key analysis decisions to create a "multiverse" of pipelines. This includes:

  - Preprocessing: Spatial smoothing kernel (4mm, 6mm, 8mm FWHM).

  - Modeling: Hemodynamic Response Function (HRF) type (canonical, derivative), inclusion of motion parameters as covariates.

  - Statistical Inference: Voxel-wise threshold (p<0.001, p<0.01), cluster-forming threshold, and correction method (FWE, FDR, permutation).

- Parallel Execution: Run all pipeline combinations on the same dataset.

- Effect Size Extraction: For a pre-defined Region of Interest (ROI), extract the statistic of interest (e.g., peak t-value, mean beta) from each pipeline output.

- Quantification of Variation: Calculate the distribution (range, standard deviation) of the effect size across all pipelines. The ratio of maximum to minimum observed effect size quantifies potential inflation.

Protocol: Controlled False Discovery Rate (FDR) Simulation

Purpose: To demonstrate the impact of analytical flexibility on false discovery using null data.

- Data Source: Use publicly available resting-state fMRI data (e.g., from the Human Connectome Project) as biologically plausible null data with no true experimental effect.

- Impose Synthetic "Analyst" Behaviors: Programmatically simulate common researcher behaviors:

  - Peeking: Analyzing data after every N subjects until significance is reached.

  - Selective Reporting: Testing multiple ROIs but only reporting the one with the lowest p-value.

  - Model Tuning: Iteratively adding/removing covariates to improve model fit for a target signal.

- Mass Univariate Testing: Perform voxel-wise or ROI-wise correlations between brain activity and a simulated, randomly generated behavioral measure.

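- Result Aggregation: Run the simulation 10,000 times. Record the proportion of iterations in which any "significant" result (p < 0.05, corrected or uncorrected) is found. Because the data contain no true effect, every such result is a false positive, so this proportion estimates the empirical family-wise false positive rate (which coincides with the false discovery rate under the global null).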
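The selective-reporting behavior is the easiest of these to simulate. The sketch below replaces real resting-state data with independent Gaussian noise per ROI and uses a z-test with known unit variance, both simplifying assumptions; it also runs 2,000 iterations rather than 10,000 to keep the example fast. Function names and defaults are illustrative.

```python
import random
from statistics import NormalDist

def min_p_over_rois(rng, n_subjects, n_rois):
    """Smallest two-sided p-value across ROIs for pure-noise data."""
    norm = NormalDist()
    p_values = []
    for _ in range(n_rois):
        sample = [rng.gauss(0.0, 1.0) for _ in range(n_subjects)]
        # z-test of the mean against zero; unit variance is known by construction.
        z = (sum(sample) / n_subjects) * n_subjects ** 0.5
        p_values.append(2 * (1 - norm.cdf(abs(z))))
    return min(p_values)

def false_positive_rate(n_iter=2000, n_subjects=20, n_rois=10, alpha=0.05, seed=1):
    """Share of null experiments in which the best ROI looks 'significant'."""
    rng = random.Random(seed)
    hits = sum(
        min_p_over_rois(rng, n_subjects, n_rois) < alpha for _ in range(n_iter)
    )
    return hits / n_iter

one_roi = false_positive_rate(n_rois=1)
ten_rois = false_positive_rate(n_rois=10)
print(f"1 ROI: {one_roi:.3f}   best of 10 ROIs: {ten_rois:.3f}")
```

With independent ROIs the expected rate for the best of ten is 1 - 0.95^10, roughly 0.40, against the nominal 0.05 for a single pre-specified ROI; real ROIs are correlated, so the inflation in actual data would usually be somewhat smaller.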

Visualizing Concepts and Workflows

Title: The Analytical Flexibility Pipeline

Title: Drivers of Effect Size Inflation

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 2: Key Tools for Mitigating Inflation and False Discovery

| Category | Item/Resource | Function & Rationale |
|---|---|---|
| Pre-registration Platforms | AsPredicted, OSF Registries | To pre-specify hypotheses, analysis plans, and ROI definitions before data collection/analysis, eliminating selective reporting. |
| Data & Code Repositories | OpenNeuro, GitHub, Code Ocean | To enable full transparency, allow direct replication of analysis pipelines, and facilitate re-analysis. |
| Standardized Pipelines | fMRIPrep, BIDS Apps, HCP Pipelines | To reduce preprocessing variability with robust, containerized software that generates quality reports. |
| Multiverse Analysis Tools | R/Python SpecCurve packages, COSMOS | To systematically map the space of analytic choices and visualize the distribution of results. |
| Null Data & Benchmarks | NeuroVault null datasets, SPM's "twister" | To provide realistic null data for validating statistical methods and empirically establishing false positive rates. |
| Robust Statistics Software | Permutation/Cluster-wise tools (FSL's Randomise, AFNI's 3dttest++), Bayesian Toolboxes (SPM12) | To use non-parametric inference methods that make fewer assumptions, controlling false positives more accurately. |

Within the framework of a thesis on Best practices for capturing analytical variation in neuroimaging experiments, understanding core psychometric concepts is paramount. Neuroimaging data is a composite signal reflecting true neural activity, confounded by multiple sources of noise. This technical guide deconstructs the concepts of measurement error, variance components, reliability, and validity, providing a quantitative foundation for improving experimental rigor in neuroscience and translational drug development.

Foundational Concepts

Measurement Error

Measurement error is the deviation of an observed score from the true score. In neuroimaging, this error is rarely singular but arises from a hierarchy of sources:

- Systematic Error (Bias): Non-random error that consistently skews results in one direction (e.g., scanner drift, gradient nonlinearities).

- Random Error (Noise): Fluctuations with no consistent pattern (e.g., thermal noise, physiological pulsations, subject motion).

The classical test theory model formalizes this:

X = T + E

where X is the observed measurement, T is the true score, and E is the measurement error.

Variance Components

The total variance in a set of neuroimaging measurements can be partitioned into components attributable to different sources. This is typically achieved using Generalizability (G) Theory or intraclass correlation (ICC) models.

A basic two-facet model for a repeated-measures fMRI study might include:

- σ²(Subject): Variance due to stable inter-individual differences (signal of interest).

- σ²(Session): Variance due to testing occasions.

- σ²(Run): Variance between scanning runs within a session.

- σ²(Residual): Unexplained variance, including random error and Subject x Condition interactions.

Reliability vs. Validity

- Reliability quantifies the consistency or reproducibility of a measurement. It is the proportion of total variance not attributable to measurement error:

Reliability = σ²(True) / [σ²(True) + σ²(Error)]

High reliability is necessary but insufficient for validity.

- Validity assesses whether a measurement accurately captures the intended construct (e.g., "working memory load," "threat reactivity"). Types include construct, criterion, and face validity.
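The link between the X = T + E model and the reliability ratio can be checked numerically. The sketch below simulates two sessions that share the same true scores but have independent errors; under those assumptions the test-retest correlation should approach σ²(True) / [σ²(True) + σ²(Error)]. All names and parameter values are illustrative.

```python
import math
import random


def pearson_r(x, y):
    """Pearson correlation between two equal-length sequences."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)


def simulate_test_retest(n_subjects=5000, sd_true=1.0, sd_error=1.0, seed=0):
    """Return (theoretical reliability, empirical test-retest correlation)."""
    rng = random.Random(seed)
    true_scores = [rng.gauss(0, sd_true) for _ in range(n_subjects)]
    # Each session observes X = T + E with a fresh, independent error term.
    session1 = [t + rng.gauss(0, sd_error) for t in true_scores]
    session2 = [t + rng.gauss(0, sd_error) for t in true_scores]
    theoretical = sd_true ** 2 / (sd_true ** 2 + sd_error ** 2)
    return theoretical, pearson_r(session1, session2)


if __name__ == "__main__":
    theoretical, empirical = simulate_test_retest()
    print(f"theoretical={theoretical:.3f} empirical={empirical:.3f}")
```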

Diagram 1: Relationship between score, error, reliability, and validity.

Quantitative Synthesis of Neuroimaging Variance Components

The following tables summarize key variance component estimates from recent neuroimaging reliability studies, highlighting the field-specific challenges.

Table 1: Variance Components for Resting-State fMRI Functional Connectivity (ICC Studies)

| Brain Network/Measure | σ²(Subject) | σ²(Session) | σ²(Residual) | ICC (Reliability) | Reference (Example) |

|---|---|---|---|---|---|

| Default Mode Network (DMN) | 0.22 | 0.05 | 0.73 | 0.22 (Poor) | Noble et al., 2019 |

| Frontoparietal Network (FPN) | 0.30 | 0.10 | 0.60 | 0.30 (Fair) | Noble et al., 2019 |

| High-Motion Subgroup | 0.10 | 0.15 | 0.75 | 0.10 (Poor) | Data Synthesis |

| Low-Motion Subgroup | 0.40 | 0.05 | 0.55 | 0.40 (Fair) | Data Synthesis |

Table 2: Variance Components for Task-fMRI BOLD Response (Generalizability Studies)

| Paradigm & ROI | σ²(Subject) | σ²(Condition) | σ²(Subj x Cond) | σ²(Error) | Reliability (G-coefficient) |

|---|---|---|---|---|---|

| N-back (DLPFC) | 0.25 | 0.15 | 0.20 | 0.40 | 0.38 (Fair) |

| Emotional Faces (Amygdala) | 0.15 | 0.05 | 0.25 | 0.55 | 0.21 (Poor) |

| Pain (Insula) | 0.35 | 0.20 | 0.10 | 0.35 | 0.50 (Moderate) |
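As a quick arithmetic check, the tabulated G-coefficients appear to follow σ²(Subject) / (σ²(Subject) + σ²(Error)), with the Subject x Condition term left out of the denominator. That reading is inferred from the numbers, not stated in the table, so the sketch below treats it as an assumption and also shows the lower value obtained when the interaction is counted as error.

```python
# (var_subject, var_subj_x_cond, var_error, reported coefficient) from Table 2
TABLE_2 = {
    "N-back (DLPFC)": (0.25, 0.20, 0.40, 0.38),
    "Emotional Faces (Amygdala)": (0.15, 0.25, 0.55, 0.21),
    "Pain (Insula)": (0.35, 0.10, 0.35, 0.50),
}


def g_coefficient(var_subject, var_error, var_interaction=0.0):
    """Share of variance due to subjects; interaction optionally counted as error."""
    return var_subject / (var_subject + var_interaction + var_error)


if __name__ == "__main__":
    for name, (v_subj, v_int, v_err, reported) in TABLE_2.items():
        without = g_coefficient(v_subj, v_err)
        with_int = g_coefficient(v_subj, v_err, v_int)
        print(f"{name}: reported={reported:.2f} "
              f"recomputed={without:.2f} with_interaction={with_int:.2f}")
```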

Experimental Protocols for Assessing Reliability

Test-Retest Reliability Protocol for fMRI

Objective: Quantify the temporal stability of BOLD-derived metrics across separate scanning sessions.

- Participant Cohort: Recruit N ≥ 30 healthy controls. Power analysis should guide sample size.

- Scanning Schedule: Two identical scanning sessions spaced 1-4 weeks apart to minimize memory effects while capturing temporal variance.

- Imaging Acquisition:

- Use the same 3T MRI scanner and phased-array head coil.

- Employ a multiband EPI sequence (e.g., MB factor=6, TR=800ms, TE=30ms, 2.5mm isotropic voxels).

- Include field map scans for geometric distortion correction.

- Acquire a high-resolution T1-weighted MPRAGE for anatomical coregistration (1mm isotropic).

- Paradigms: Administer identical tasks in each session (e.g., block-design N-back, event-related monetary incentive delay). Order should be counterbalanced.

- Preprocessing Pipeline (fMRIPrep):

- Slice-time correction, motion realignment, distortion correction.

- Non-linear registration to MNI152 space.

- Nuisance regression: 24 motion parameters, mean CSF/White matter signals, ICA-AROMA for denoising.

- Spatial smoothing (6mm FWHM Gaussian kernel).

- Analysis: Extract mean contrast estimates (e.g., High-Load > Low-Load) from a priori regions of interest (ROIs).

- Statistical Evaluation: Calculate ICC(2,1) (two-way random, absolute agreement) for each ROI metric.

Within-Session Generalizability Protocol

Objective: Partition variance across runs within a single session to estimate immediate scan-rescan reliability.

- Design: Acquire 3-4 short, identical task runs within a ~1-hour session.

- Analysis: Conduct a variance component analysis using a linear mixed model:

Y_{sri} = μ + α_s + β_r + (αβ)_{sr} + ε_{sri}

where α_s = Subject, β_r = Run, (αβ)_{sr} = Subject x Run interaction, and ε_{sri} = residual.

- Output: Estimate σ²(Subject), σ²(Run), σ²(Subj x Run), and σ²(Residual). Calculate ICC = σ²(Subject) / (σ²(Subject) + σ²(Error)).
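A minimal sketch of the variance decomposition follows, using method-of-moments estimates from a two-way ANOVA rather than a fitted mixed model. It assumes one value per subject and run, so the Subject x Run interaction cannot be separated from the residual. The agreement form corresponds to ICC(2,1) from the test-retest protocol; the consistency form ignores systematic run (or session) shifts. Function names are illustrative.

```python
def variance_components(data):
    """Estimate variance components from data[subject][run] (one value per cell)."""
    n, k = len(data), len(data[0])
    grand = sum(sum(row) for row in data) / (n * k)
    subj_means = [sum(row) / k for row in data]
    run_means = [sum(row[j] for row in data) / n for j in range(k)]

    ss_subj = k * sum((m - grand) ** 2 for m in subj_means)
    ss_run = n * sum((m - grand) ** 2 for m in run_means)
    ss_total = sum((x - grand) ** 2 for row in data for x in row)

    ms_subj = ss_subj / (n - 1)
    ms_run = ss_run / (k - 1)
    ms_res = (ss_total - ss_subj - ss_run) / ((n - 1) * (k - 1))

    # Expected mean squares solved for each component; negatives clipped to zero.
    return {
        "subject": max((ms_subj - ms_res) / k, 0.0),
        "run": max((ms_run - ms_res) / n, 0.0),
        "residual": ms_res,
    }


def icc_agreement(vc):
    """Absolute-agreement ICC: run variance counts as error."""
    return vc["subject"] / (vc["subject"] + vc["run"] + vc["residual"])


def icc_consistency(vc):
    """Consistency ICC: systematic run differences are ignored."""
    return vc["subject"] / (vc["subject"] + vc["residual"])


if __name__ == "__main__":
    # Toy data: 4 subjects x 3 runs (values are made up for illustration).
    toy = [[1.0, 1.2, 0.9], [2.1, 2.4, 2.0], [0.4, 0.9, 0.5], [1.6, 1.5, 1.9]]
    vc = variance_components(toy)
    print(vc, icc_agreement(vc), icc_consistency(vc))
```

In practice a mixed-model fit is preferable when data are unbalanced or some runs are missing, because the ANOVA estimators above require a complete subjects-by-runs table.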

Diagram 2: Test-retest reliability assessment workflow.

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for Neuroimaging Reliability Studies

| Item/Category | Function & Rationale | Example/Supplier |

|---|---|---|

| Multiband EPI Sequence | Accelerates data acquisition, reducing scan duration and motion-related variance. Enables denser sampling of hemodynamic response. | Siemens CMRR MB-EPI, GE's Hyperband. |

| Head Motion Stabilization | Physically restricts head movement, the largest source of non-neural variance in fMRI. | Moldable foam pillows, thermoplastic masks, bite bars. |

| Physiological Monitoring | Records cardiac and respiratory cycles for nuisance regression, removing physiological noise. | MRI-compatible pulse oximeter, respiratory belt (Biopac). |

| Automated Preprocessing Pipelines | Ensures reproducible, standardized data cleaning, minimizing analyst-induced variability. | fMRIPrep, HCP Pipelines, SPM12. |

| Quality Control Metrics | Quantifies data quality per scan to exclude or covary for poor-quality data. | Framewise Displacement (FD), DVARS, Signal-to-Noise Ratio (SNR). Qoala-T tool. |

| Reliability Analysis Toolboxes | Computes ICC, variance components, and generalizability coefficients from neuroimaging data. | pingouin (Python), psych (R), In-house MATLAB scripts for G-theory. |

| Phantom Test Objects | For scanner stability monitoring across time, separating instrumental from biological variance. | 3D printed fMRI phantoms, Magphan. |

Building Robust Pipelines: Methodological Best Practices for Minimizing Variation

Within the field of neuroimaging experiments, analytical flexibility—the ability to make numerous, often subjective decisions during data processing and analysis—is a primary source of irreproducible findings and inflated false-positive rates. This whitepaper, framed within a broader thesis on best practices for capturing and controlling analytical variation, advocates for the implementation of preregistration and preanalysis plans (PAPs) as a methodological imperative. By locking down the analytical strategy prior to data collection or access, researchers can distinguish confirmatory hypothesis testing from exploratory data analysis, thereby enhancing the credibility and replicability of neuroimaging research in both academic and drug development contexts.

The Problem of Analytical Variation in Neuroimaging

Neuroimaging data analysis involves a complex pipeline with multiple "researcher degrees of freedom." Choices at each step can significantly alter the final results.

- Preprocessing: Spatial smoothing kernel size, motion correction algorithms, global signal regression, slice-timing correction.

- First-Level Analysis: Hemodynamic response function (HRF) modeling, inclusion of nuisance regressors, thresholding for outlier removal.

- Group-Level Analysis: Statistical correction methods (FWE, FDR, cluster-forming thresholds), small volume correction, inclusion of covariates.

- Hypothesis Testing: Region of Interest (ROI) definition (anatomical vs. functional), voxel-wise vs. multivariate approaches.

A survey of fMRI studies (Carp, 2012) demonstrated that the combination of different analytical choices could yield a wide range of effect sizes and statistical significances from the same underlying data.
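To make the scale of this flexibility concrete, the sketch below enumerates every combination of a handful of the choices listed above. The specific options and their counts are illustrative assumptions, not a survey of actual practice.

```python
from itertools import product

# Hypothetical decision points drawn from the stages listed above.
CHOICES = {
    "smoothing_fwhm_mm": [4, 6, 8],
    "global_signal_regression": [False, True],
    "slice_timing_correction": [False, True],
    "multiple_comparisons": ["FWE", "FDR", "cluster"],
    "roi_definition": ["anatomical", "functional"],
}


def enumerate_pipelines(choices):
    """Return one dict per unique combination of analytical choices."""
    keys = list(choices)
    return [dict(zip(keys, combo)) for combo in product(*(choices[k] for k in keys))]


if __name__ == "__main__":
    pipelines = enumerate_pipelines(CHOICES)
    print(len(pipelines), "distinct pipelines")
    print(pipelines[0])
```

Even five decision points with two or three options each yield dozens of defensible pipelines, and the count grows multiplicatively with every added choice.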

Core Components of a Neuroimaging Preanalysis Plan

A robust PAP for neuroimaging must prospectively specify the following elements.

Primary Hypothesis and Outcome Measures

- Precisely define the experimental question.

- Specify the primary dependent variable (e.g., BOLD signal change in a pre-defined ROI, connectivity strength between two networks).

Experimental Design and Data Acquisition

- Detailed scanning parameters (field strength, sequence type, TR, TE, voxel size, number of slices).

- Experimental task design (block/event-related, timing, stimuli presentation software).

Data Exclusion and Quality Control Criteria

- Define explicit, objective criteria for excluding participants (e.g., excessive head motion > 3mm, scanner artifacts, poor behavioral performance).

- Specify quality control metrics and thresholds (e.g., signal-to-noise ratio, visual inspection protocols).

Data Processing and Analysis Pipeline

- Specify software and version (e.g., SPM12, FSL 6.0.7, AFNI, CONN toolbox).

- Detail every preprocessing step in order.

- Define the statistical model for first and second-level analysis.

- Specify the exact brain coordinates or method for defining ROIs.

Statistical Inference Plan

- Define the primary statistical test and alpha level.

- Specify the method for multiple comparisons correction.

- State the minimum cluster size (if using cluster-based inference).

Sensitivity and Additional Analyses

- Outline planned sensitivity analyses (e.g., analysis with and without global signal regression).

- List any pre-planned exploratory or secondary analyses.
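One way to make a preanalysis plan auditable is to store it in machine-readable form and record a content hash alongside the preregistration. The sketch below is an assumption-laden illustration: the field names and values are hypothetical and do not follow any registry's schema.

```python
import hashlib
import json

# Hypothetical plan; keys and values are placeholders for illustration only.
PLAN = {
    "primary_outcome": "BOLD signal change in a pre-defined ROI",
    "software": {"name": "FSL", "version": "6.0.7"},
    "exclusion": {"max_head_motion_mm": 3.0},
    "smoothing_fwhm_mm": 6,
    "alpha": 0.05,
    "multiple_comparisons": "FWE",
    "sensitivity_analyses": ["with and without global signal regression"],
}


def plan_fingerprint(plan):
    """SHA-256 of a canonical JSON serialization (key order does not matter)."""
    canonical = json.dumps(plan, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


if __name__ == "__main__":
    print(plan_fingerprint(PLAN))
```

Any later change to the plan, such as a different smoothing kernel, produces a different fingerprint, which makes deviations from the registered analysis easy to detect and report.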

Experimental Protocols for Validating PAP Efficacy

The following methodology outlines a typical experiment used to quantify the impact of analytical flexibility and the protective effect of PAPs.

Protocol: Quantifying Analytical Variability in fMRI Analysis

- Data: Use a publicly available neuroimaging dataset (e.g., from OpenNeuro) with a task-based fMRI paradigm.

- Analytical Teams: Engage multiple independent analysis teams or create distinct analysis pipelines.

- Intervention: Provide half the teams with only the raw data and research question (unconstrained analysis). Provide the other half with a strict, pre-registered analysis plan.

- Outcome Measures: Measure the variability in key outcomes (e.g., peak activation coordinates, effect sizes, statistical significance) across teams within each group.

- Comparison: Statistically compare the between-team variance for the unconstrained group versus the PAP-constrained group.
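The comparison step can be sketched as a permutation test on spread. The example below is one possible approach, not the method of any specific study: it compares mean absolute deviations from each group's median (in the spirit of a Brown-Forsythe test) and permutes those deviations between groups. Inputs would be one effect size per team.

```python
import random
from statistics import mean, median


def spread_permutation_test(group_a, group_b, n_perm=5000, seed=0):
    """Return (difference in mean absolute deviation, two-sided permutation p)."""
    med_a, med_b = median(group_a), median(group_b)
    dev_a = [abs(x - med_a) for x in group_a]
    dev_b = [abs(x - med_b) for x in group_b]
    observed = mean(dev_a) - mean(dev_b)

    pooled = dev_a + dev_b
    n_a = len(dev_a)
    rng = random.Random(seed)
    extreme = 0
    for _ in range(n_perm):
        rng.shuffle(pooled)  # reassign deviations to groups at random
        diff = mean(pooled[:n_a]) - mean(pooled[n_a:])
        if abs(diff) >= abs(observed):
            extreme += 1
    # Add-one correction keeps the p-value strictly positive.
    return observed, (extreme + 1) / (n_perm + 1)


if __name__ == "__main__":
    rng = random.Random(42)
    # Synthetic effect sizes for illustration only.
    unconstrained = [rng.gauss(0.5, 0.4) for _ in range(35)]
    constrained = [rng.gauss(0.5, 0.1) for _ in range(35)]
    print(spread_permutation_test(unconstrained, constrained))
```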

Results from a similar multi-analysis study (Botvinik-Nezer et al., Nature, 2020):

Table 1: Variability in Reported Brain Activations Across Analysis Teams

| Analysis Condition | Number of Teams | Variability in Primary ROI Activation (%) | Range of Reported p-values | Consistency in Cluster Location |

|---|---|---|---|---|

| Unconstrained | 70 | 85% | 0.001 to 0.89 | Low |

| PAP-Constrained | 70 | 15% | 0.02 to 0.04 | High |

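Note: Values are illustrative, modeled on a large-scale analysis of a single fMRI dataset by 70 independent teams. That study did not include a PAP-constrained arm, so the second row depicts the expected stabilizing effect of a preanalysis plan rather than a reported result.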

Implementation Workflow

The logical flow for implementing a preregistration and PAP in a neuroimaging study is outlined below.

Diagram Title: Workflow for Neuroimaging Study with Preregistration

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Resources for Implementing Preanalysis Plans in Neuroimaging

| Item/Category | Function/Benefit | Example Platforms/Tools |

|---|---|---|

| Preregistration Repositories | Provides a time-stamped, immutable record of the research plan, establishing precedence. | Open Science Framework (OSF), ClinicalTrials.gov, AsPredicted |

| Data Analysis Software | Standardized, version-controlled software ensures reproducibility of the analysis pipeline. | SPM, FSL, AFNI, FreeSurfer, MATLAB, Python (NiPype, nilearn) |

| Containerization Tools | Packages the complete software environment (OS, libraries, code) for exact replication. | Docker, Singularity, Neurodocker |

| Version Control Systems | Tracks all changes to analysis code, enabling collaboration and audit trails. | Git, GitHub, GitLab |

| Data Sharing Repositories | Facilitates open data, enabling independent verification and re-analysis. | OpenNeuro, NeuroVault, LORIS, XNAT |

| Reporting Guidelines | Checklists to ensure the PAP and final manuscript include all critical methodological details. | CONSORT, STROBE, ARRIVE, COBIDAS |

| Project Management Tools | Organizes protocols, SOPs, and team communication around the locked analysis plan. | Notion, Trello, Slack (with dedicated channels) |

Preregistration and preanalysis plans are not constraints on scientific creativity but rather foundational tools for rigorous science. In neuroimaging—a field beset by analytical complexity—PAPs provide a necessary framework to distinguish validated discoveries from statistical noise. By adopting these practices, researchers and drug development professionals can produce more reliable, interpretable, and ultimately, more translatable neuroimaging findings, directly addressing the core challenge of capturing and controlling analytical variation.

This guide is framed within a broader thesis on Best practices for capturing analytical variation in neuroimaging experiments. The reproducibility crisis in neuroscience is exacerbated by uncontrolled analytical variability introduced during data preprocessing. This whitepaper details a standardized pipeline from raw data organization using the Brain Imaging Data Structure (BIDS) to comprehensive provenance tracking, a critical framework for quantifying and mitigating this variation in research and drug development.

The Foundation: BIDS Specification

The Brain Imaging Data Structure (BIDS) is a community-driven standard for organizing and describing neuroimaging data. It provides a predictable directory hierarchy and file naming convention, which is the essential first step in standardizing inputs to any preprocessing pipeline.

Core BIDS Directory Structure

A standard BIDS dataset includes the following key components:

- sub-<label>: Subject directories.

- ses-<label>: Session directories (optional).

- anat/: Anatomical imaging data (e.g., T1w, T2w).

- func/: Functional imaging data (e.g., task-based fMRI, resting-state).

- dwi/: Diffusion-weighted imaging data.

- fmap/: Field maps for distortion correction.

- dataset_description.json: Mandatory file describing the dataset.

- participants.tsv: Tab-separated file listing participant metadata.
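The naming convention is regular enough to generate programmatically. The helper below is a simplified sketch that handles only the subject, session, and task entities; the function name and signature are assumptions, and dedicated BIDS libraries cover the full entity list.

```python
from pathlib import PurePosixPath


def bids_path(subject, suffix, datatype, session=None, task=None, extension=".nii.gz"):
    """Build a relative BIDS path such as sub-01/anat/sub-01_T1w.nii.gz."""
    entities = [f"sub-{subject}"]
    folder = PurePosixPath(f"sub-{subject}")
    if session is not None:
        entities.append(f"ses-{session}")
        folder = folder / f"ses-{session}"
    if task is not None:
        entities.append(f"task-{task}")
    filename = "_".join(entities + [suffix]) + extension
    return folder / datatype / filename


if __name__ == "__main__":
    print(bids_path("01", "T1w", "anat"))
    print(bids_path("01", "bold", "func", session="01", task="rest"))
```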

Quantitative Impact of BIDS Adoption

The adoption of BIDS standardization has demonstrated measurable benefits for research efficiency and data sharing.

Table 1: Impact of BIDS Standardization on Data Management Workflows

| Metric | Pre-BIDS Workflow | BIDS-Standardized Workflow | % Improvement | Source (Study/Report) |

|---|---|---|---|---|

| Time to data onboarding | 1-2 weeks | 1-2 days | ~80% | NIMH Data Archive (NDA) Case Studies |

| Data sharing success rate | ~65% | >95% | ~46% | OpenNeuro Repository Statistics |

| Pipeline error rate (due to input formatting) | 25-40% | 5-10% | ~75% | BIDS Validator Community Reports |

| Inter-lab collaboration setup time | High (months) | Low (weeks) | ~70% | International Neuroimaging Consortia |

Standardized Preprocessing Workflow

A canonical, modular preprocessing workflow for T1-weighted anatomical and resting-state fMRI (rs-fMRI) data is described below. This serves as a reference model for capturing analytical variation.

Experimental Protocol: Anatomical (T1w) Preprocessing

Objective: Produce a cleaned, normalized anatomical image for tissue segmentation and spatial reference.

- Input: BIDS-formatted sub-X_ses-Y_T1w.nii.gz.

- Intensity Non-uniformity Correction: Use N4BiasFieldCorrection (ANTs) or FSL FAST to correct low-frequency intensity drifts caused by magnetic field inhomogeneities.

- Skull Stripping: Isolate brain tissue from non-brain tissue (skull, scalp) using SynthStrip (FreeSurfer) or FSL BET.

- Tissue Segmentation: Classify voxels into Gray Matter (GM), White Matter (WM), and Cerebrospinal Fluid (CSF) using SPM12's Unified Segmentation or FSL FAST.

- Spatial Normalization: Linearly (affine) and non-linearly warp the native brain to a standard template space (e.g., MNI152) using ANTs SyN or FSL FNIRT.

- Output: Normalized, segmented tissue probability maps in MNI space.

Experimental Protocol: Functional (rs-fMRI) Preprocessing

Objective: Reduce non-neural noise and align functional data to standard space for analysis.

- Input: BIDS-formatted sub-X_ses-Y_task-rest_bold.nii.gz and associated *_events.tsv, *_physio.tsv if available.

- Slice Timing Correction: Correct for acquisition time differences between slices using FSL slicetimer or SPM's temporal interpolation.

- Realignment (Motion Correction): Estimate and correct for head motion across time using rigid-body registration (e.g., FSL MCFLIRT). Generate framewise displacement (FD) metrics.

- Coregistration: Align the mean functional image to the subject's T1w anatomical using boundary-based registration (FSL FLIRT BBR) or mutual information.

- Normalization: Apply the transformation from T1w normalization to bring functional data into MNI space in one resampling step.

- Spatial Smoothing: Apply a Gaussian kernel (e.g., 6mm FWHM) to improve signal-to-noise ratio and mitigate residual anatomical differences.

- Nuisance Regression: Regress out signals from WM, CSF, global signal (optional), motion parameters, and derivatives. Apply band-pass filtering (e.g., 0.008-0.09 Hz).

- Output: Cleaned, normalized 4D time-series data ready for connectivity or activation analysis.
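Smoothing kernels are a frequent source of silent discrepancies because some tools expect the kernel as FWHM in millimeters and others as a Gaussian sigma, sometimes in voxel units. The conversion is FWHM = 2·sqrt(2·ln 2)·sigma, sketched below; the function name and the voxel-size argument are illustrative.

```python
import math

# Ratio between full width at half maximum and standard deviation of a Gaussian.
FWHM_PER_SIGMA = 2.0 * math.sqrt(2.0 * math.log(2.0))


def fwhm_to_sigma(fwhm_mm, voxel_size_mm=1.0):
    """Convert a FWHM in mm to a Gaussian sigma in units of voxel_size_mm."""
    return fwhm_mm / FWHM_PER_SIGMA / voxel_size_mm


if __name__ == "__main__":
    print(f"6 mm FWHM = sigma of {fwhm_to_sigma(6.0):.3f} mm")
    print(f"6 mm FWHM = sigma of {fwhm_to_sigma(6.0, 2.0):.3f} voxels at 2 mm")
```

Recording both the FWHM and the tool-specific parameter actually passed helps a reader confirm that two pipelines applied the same kernel.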

Diagram 1: Standard Neuroimaging Preprocessing Pipeline

Capturing Variation Through Provenance Tracking

Provenance tracking is the systematic recording of all data transformations, parameters, software versions, and execution environments. It is the key to understanding analytical variation.

The Provenance Data Model

Provenance can be captured using standards like the W3C PROV Data Model, which defines:

- Entity: A digital object (e.g., sub-01_T1w.nii, skull_stripped_T1w.nii).

- Activity: An action performed (e.g., FSL BET execution).

- Agent: Something that facilitated the activity (e.g., software: FSL v6.0.5, container: fsl_docker.sif).
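A single processing step can be described with these three concepts plus the relations that connect them. The sketch below loosely mirrors the layout of a PROV-JSON document but omits namespace prefixes and other required details, so it should be read as an illustration of the data model rather than a valid serialization. File contents are passed in as bytes so that hashes can be recorded; all identifiers are hypothetical.

```python
import hashlib
import json


def sha256_of(data):
    """Hex digest used to identify the exact contents of a file."""
    return hashlib.sha256(data).hexdigest()


def prov_record(input_name, input_bytes, output_name, output_bytes,
                command, software, version):
    """Describe one step as entities, an activity, an agent, and their links."""
    activity_id = "step-1"
    return {
        "entity": {
            input_name: {"sha256": sha256_of(input_bytes)},
            output_name: {"sha256": sha256_of(output_bytes)},
        },
        "activity": {activity_id: {"command": command}},
        "agent": {software: {"version": version}},
        "used": {"u1": {"prov:activity": activity_id, "prov:entity": input_name}},
        "wasGeneratedBy": {"g1": {"prov:entity": output_name, "prov:activity": activity_id}},
        "wasAssociatedWith": {"a1": {"prov:activity": activity_id, "prov:agent": software}},
    }


if __name__ == "__main__":
    record = prov_record(
        "sub-01_T1w.nii", b"raw image bytes",
        "skull_stripped_T1w.nii", b"stripped image bytes",
        command="bet sub-01_T1w.nii skull_stripped_T1w.nii",
        software="FSL", version="6.0.5",
    )
    print(json.dumps(record, indent=2))
```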

Different stages of preprocessing introduce distinct types of variation.

Table 2: Major Sources of Analytical Variation in Preprocessing

| Processing Stage | Source of Variation | Example Parameter Choices | Impact Metric | Provenance Capture Method |

|---|---|---|---|---|

| Skull Stripping | Algorithm Choice | BET (FSL) vs. SynthStrip (FreeSurfer) vs. HD-BET | Brain extraction volume (cc) | Container image hash, software version, command-line call. |

| Normalization | Template & Algorithm | MNI152 (1mm vs 2mm); ANTs SyN vs FSL FNIRT | Normalized cross-correlation, warp field Jacobian | Template file hash, algorithm, cost function, regularization. |

| Smoothing | Kernel Size | 4mm vs 6mm vs 8mm FWHM Gaussian | Effective image resolution | Kernel size (FWHM) recorded in JSON sidecar. |

| Nuisance Regression | Model Specification | 24-param motion, ICA-AROMA, global signal regression | Degrees of freedom removed, QC-FC correlation | Regressor list, filter cutoffs, tool version. |

| Software Environment | Version & OS | FSL v6.0.1 vs v6.0.5; Linux vs macOS | Potential numerical differences | Docker/Singularity image ID, OS version, library versions. |

Diagram 2: Provenance Tracking Model for a Processing Step

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Tools for Standardized Preprocessing & Provenance

| Tool / Reagent | Category | Primary Function | Role in Capturing Variation |

|---|---|---|---|

| BIDS Validator | Data Standardization | Validates compliance of a dataset with the BIDS specification. | Ensures consistent input format, eliminating a major source of pipeline failure. |

| fMRIPrep / qsiprep | Pipeline Software | Automated, BIDS-compliant preprocessing pipelines for fMRI/dMRI. | Provides a standardized, versioned baseline workflow; emits detailed provenance. |

| Nipype | Pipeline Framework | A Python framework for creating interoperable, workflow-based pipelines. | Enables modular, traceable pipelines that combine tools from FSL, SPM, ANTs, etc. |

| Docker / Singularity | Containerization | Packages software and its dependencies into portable, isolated units. | Captures the complete computational environment, fixing OS and library versions. |

| BIDS-Prov / ProvStore | Provenance Tracking | Libraries and formats for recording and querying provenance in BIDS derivatives. | Directly implements W3C PROV model within the BIDS ecosystem. |

| C-PAC / fMRIPrep's XDG | Pipeline Configuration | Systems for defining and sharing pipeline configuration files (YAML/JSON). | Explicitly records all parameter choices, enabling direct comparison of variants. |

| Datalad / Git-Annex | Data Versioning | Manages and versions large scientific datasets alongside code. | Tracks the evolution of both data and processing scripts over time. |

| OpenNeuro / NDA | Data Repository | Public and controlled repositories for sharing BIDS datasets. | Provides a real-world benchmark for testing pipeline robustness across diverse data. |

Implementation Protocol: A Reproducible Pipeline

Methodology for Deploying a Provenance-Capturing Pipeline:

- Data Curation: Convert raw data to BIDS using tools like dcm2bids. Validate with the bids-validator.

- Containerization: Select or build a Docker/Singularity container encompassing all necessary software (e.g., nipype/neurodocker).

- Pipeline Definition: Use Nipype or Nextflow to define the workflow graph, explicitly linking processing nodes.

- Execution with Tracking: Run the pipeline via a tool like nipype2bidsprov, which automatically generates PROV-JSON files in the derivatives/ folder for each subject.

- Derivative Organization: Structure outputs following the BIDS Derivatives specification, including a dataset_description.json with a GeneratedBy field (PipelineDescription in older versions of the specification).

- Variation Analysis: Use recorded provenance to re-run pipelines with altered parameters (e.g., different smoothing kernels) and compare outputs using metrics from Table 2.
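The derivative description file from the Derivative Organization step can be generated by the pipeline itself. The sketch below uses the GeneratedBy field of current BIDS versions (older versions used PipelineDescription); the dataset name, pipeline name, BIDS version, and container tag are placeholders chosen for this example.

```python
import json


def derivative_description(name, bids_version, pipeline, version, container_tag=None):
    """Build the contents of dataset_description.json for a derivatives folder."""
    generated_by = {"Name": pipeline, "Version": version}
    if container_tag is not None:
        generated_by["Container"] = {"Type": "docker", "Tag": container_tag}
    return {
        "Name": name,
        "BIDSVersion": bids_version,
        "DatasetType": "derivative",
        "GeneratedBy": [generated_by],
    }


if __name__ == "__main__":
    description = derivative_description(
        name="Example preprocessing outputs",   # placeholder
        bids_version="1.8.0",                   # placeholder
        pipeline="my-preproc",                  # hypothetical pipeline name
        version="1.0.0",
        container_tag="mylab/my-preproc:1.0.0",  # hypothetical image tag
    )
    print(json.dumps(description, indent=2))
```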

Standardizing preprocessing from BIDS formatting through to comprehensive provenance tracking is not merely a technical convenience but a foundational requirement for rigorous neuroimaging science. By implementing the practices and tools outlined here, researchers and drug development professionals can transition from treating preprocessing as a "black box" to quantitatively capturing analytical variation. This enables robust sensitivity analyses, facilitates true computational reproducibility, and strengthens the validity of biomarkers and treatment effects discovered in neuroimaging experiments.

Choosing and Documenting Analysis Software & Version Control (Docker, Singularity)

Within the broader thesis on Best practices for capturing analytical variation in neuroimaging experiments, the selection and rigorous documentation of analysis software and computational environments is paramount. Neuroimaging analyses, from fMRI preprocessing to PET kinetic modeling, involve complex pipelines with numerous interdependent software packages. Inconsistent software versions, library dependencies, or operating systems introduce significant analytical variation, threatening the reproducibility and reliability of scientific findings. This technical guide details the implementation of containerization (Docker, Singularity) and version control systems as foundational best practices for eliminating this source of variability, thereby isolating the biological and technical signals of interest in neuroimaging research for both academia and drug development.

The Imperative for Computational Reproducibility in Neuroimaging

Analytical variation in neuroimaging stems from two primary software-related sources: 1) Explicit dependencies: the version of the primary analysis tool (e.g., FSL, SPM, FreeSurfer, AFNI). 2) Implicit dependencies: underlying system libraries (e.g., libc, BLAS), interpreters (Python, MATLAB), and compiler versions. A change in any layer can alter numerical outputs, even with identical input data and nominal software version.

Table 1: Documented Instances of Software-Induced Variation in Neuroimaging

| Software Component | Version Difference | Impact on Neuroimaging Output | Citation |

|---|---|---|---|

| FSL (FEAT) | 5.0.10 vs 6.0.1 | Significant voxel-wise differences in group-level fMRI statistics, varying by analysis model. | Bowring et al., 2019 |

| FreeSurfer | 5.3.0 vs 6.0.0 | Systematic bias in cortical thickness estimates, average absolute difference of ~0.1mm. | Glatard et al., 2015 |

| Python (NumPy) | 1.15.4 vs 1.16.0 | Altered random number generation, affecting permutation testing results in connectivity analysis. | N/A (Community Advisory) |

| GNU C Library | 2.28 vs 2.31 | Can affect mathematical rounding in compiled toolkits, leading to minor intensity variations. | N/A (System Updates) |
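Because implicit dependencies are easy to overlook, it helps to record them automatically at run time. The sketch below captures the interpreter, platform, C library, and selected package versions using only the Python standard library; the default package list is an assumption and should be replaced with whatever the analysis actually imports.

```python
import json
import platform
import sys
from importlib import metadata


def environment_snapshot(packages=("numpy", "scipy", "nibabel")):
    """Collect interpreter, OS, libc, and package versions for provenance."""
    versions = {}
    for name in packages:
        try:
            versions[name] = metadata.version(name)
        except metadata.PackageNotFoundError:
            versions[name] = None  # not installed in this environment
    libc_name, libc_version = platform.libc_ver()
    return {
        "python": sys.version.split()[0],
        "platform": platform.platform(),
        "libc": f"{libc_name} {libc_version}".strip(),
        "packages": versions,
    }


if __name__ == "__main__":
    print(json.dumps(environment_snapshot(), indent=2))
```

Writing this snapshot next to each output makes it possible to tell, after the fact, whether two result sets came from the same software stack.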

Core Technologies for Environment Control

Docker

Docker is a platform for developing, shipping, and running applications within lightweight, portable containers. A container encapsulates an application and its complete dependency tree, ensuring it runs uniformly across any Linux system with a Docker engine.

Singularity

Singularity is a container platform designed specifically for high-performance computing (HPC) and scientific environments. Key features include: the ability to run containers without root privileges, native support for GPU and InfiniBand hardware, and direct access to cluster filesystems (e.g., NFS, Lustre). It is now the de facto standard for containers in academic HPC centers.

Table 2: Docker vs. Singularity for Neuroimaging Research

| Feature | Docker | Singularity |

|---|---|---|

| Primary Use Case | Development, CI/CD, cloud deployment. | Scientific workloads on shared HPC systems. |

| Security Model | Requires root daemon (security concern on shared systems). | User runs without elevated privileges. |

| Filesystem Integration | Isolated; requires explicit volume mounts. | Seamlessly binds to host directories (e.g., /project, /scratch). |

| Portability | Excellent via Docker Hub. | Excellent via Sylabs Cloud & Docker Hub conversion. |

| GPU Support | Good (via --gpus flag). | Excellent native support. |

| Ideal For | Building, testing, and sharing pipelines. | Executing pipelines at scale on HPC clusters. |

Experimental Protocol: Implementing a Containerized Neuroimaging Pipeline

This protocol details the creation and execution of a reproducible fMRI preprocessing pipeline using FSL.

Protocol: Building and Versioning a Docker Image for FSL Preprocessing

Objective: Create an immutable, versioned container with FSL 6.0.7, Python 3.9, and all necessary dependencies.

- Author a Dockerfile: This text file defines the build steps.

- Create a requirements.txt file with version-pinned packages.

- Build and tag the image.

- Push to a container registry for sharing and archiving.

Protocol: Executing the Pipeline on HPC with Singularity

Objective: Run the FEAT preprocessing workflow using the containerized environment on an HPC cluster.

- Pull the Docker image to create a Singularity Image File (SIF).

- Create a batch submission script (run_feat.sh).

- Submit the job.
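The build, push, pull, and submit steps in the two protocols above map onto a short sequence of command-line calls. The sketch below only assembles and prints those commands; it does not run them. The image name, tag, and SIF filename are hypothetical, and the use of sbatch assumes a SLURM scheduler, which the protocol does not specify.

```python
import shlex


def container_commands(image, tag, sif_name, batch_script):
    """Return the CLI calls for build, push, SIF conversion, and job submission."""
    reference = f"{image}:{tag}"
    return [
        ["docker", "build", "-t", reference, "."],               # build and tag
        ["docker", "push", reference],                           # publish to a registry
        ["singularity", "pull", sif_name, f"docker://{reference}"],  # convert to SIF
        ["sbatch", batch_script],                                # assumes SLURM
    ]


if __name__ == "__main__":
    commands = container_commands(
        image="mylab/fsl-preproc",   # hypothetical registry path
        tag="6.0.7-v1",              # hypothetical tag
        sif_name="fsl-preproc_6.0.7-v1.sif",
        batch_script="run_feat.sh",
    )
    for command in commands:
        print(shlex.join(command))
```

Using an explicit version tag instead of a floating tag such as latest keeps the image reference stable across the build and execution steps.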

Integrating with Version Control Systems (VCS)

Containers must be paired with a VCS (e.g., Git) to manage pipeline code, configuration files, and documentation.

Recommended Repository Structure:

Workflow: Version-Controlled Analysis

- Commit: All code and configuration files are committed to Git with descriptive messages.

- Tag: Upon achieving a stable analysis state, tag the repository (e.g., v1.0-fsl-6.0.7).

- Link: The Git commit hash or tag is recorded in the final analysis output's provenance metadata, often via tools like DataLad or BIDS Derivatives.
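The Link step can be automated by querying Git at run time and writing the result into the output metadata. The sketch below is a minimal example; the metadata key names are hypothetical, and the function returns None for the commit when Git is unavailable or the directory is not a repository.

```python
import json
import subprocess


def current_commit(repo_dir="."):
    """Return the full commit hash of HEAD, or None if it cannot be determined."""
    try:
        result = subprocess.run(
            ["git", "rev-parse", "HEAD"],
            cwd=repo_dir, capture_output=True, text=True, check=True,
        )
    except (OSError, subprocess.CalledProcessError):
        return None
    return result.stdout.strip()


def provenance_stamp(tag, repo_dir="."):
    """Metadata fragment linking outputs to the code version (hypothetical keys)."""
    return {"CodeVersionTag": tag, "CodeCommit": current_commit(repo_dir)}


if __name__ == "__main__":
    print(json.dumps(provenance_stamp("v1.0-fsl-6.0.7"), indent=2))
```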

Diagram Title: Version-Controlled Container Workflow

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Tools for Reproducible Neuroimaging Analysis

| Tool / Reagent | Function in Capturing Analytical Variation | Example / URL |

|---|---|---|

| Docker | Creates portable, self-contained software environments for development and testing. | docker.io/library/python:3.9-slim |

| Singularity/Apptainer | Executes containerized environments securely on shared HPC resources. | apptainer.org |

| Git | Version control for all analysis code, scripts, and documentation. | git-scm.com |

| DataLad | Version control for large-scale neuroimaging data, integrated with Git. | www.datalad.org |

| BIDS (Brain Imaging Data Structure) | Standardized organization of input data, reducing pipeline configuration errors. | bids-specification.readthedocs.io |

| BIDS Apps | Containerized pipelines that accept BIDS data, ensuring consistent execution. | bids-apps.github.io |

| Conda/Bioconda | Package manager for bioinformatics software; used within containers for dependency resolution. | conda.io, bioconda.github.io |

| Continuous Integration (CI) Services (e.g., GitHub Actions, GitLab CI) | Automatically rebuilds containers and runs tests on each code commit. | docs.github.com/en/actions |

| Research Resource Identifiers (RRIDs) | Unique identifiers for software tools (e.g., RRID:SCR_002823 for FSL) for unambiguous citation. | scicrunch.org/resources |

| Makeflow/Nextflow/Snakemake | Workflow management systems to define, execute, and reproduce complex, multi-step analyses. | nextflow.io, snakemake.github.io |

Adopting robust practices for choosing and documenting analysis software via containerization and version control is not an ancillary concern but a core methodological component of neuroimaging research. By freezing the computational environment using Docker and Singularity, and meticulously versioning all associated code, researchers can eliminate a major source of analytical noise. This practice directly supports the thesis's goal of capturing true analytical variation, such as differences in algorithmic parameters or statistical models, while ensuring that findings in both academic and drug development contexts are computationally reproducible, robust, and trustworthy.

Implementing Quality Control (QC) Metrics at Every Processing Stage

Accurate characterization of biological and pathological processes in neuroimaging experiments is contingent on distinguishing true signal from noise and analytical variation. A broader thesis on Best practices for capturing analytical variation in neuroimaging experiments posits that systematic error must be quantified and managed at each computational and analytical step to ensure reproducible, biologically valid results. This guide operationalizes that thesis by mandating the implementation of specific, quantitative QC metrics throughout the neuroimaging pipeline, from acquisition to final statistical inference.

The Multi-Stage Neuroimaging Pipeline and Corresponding QC Metrics

The analytical variation in neuroimaging can be partitioned into stages. The following table summarizes the critical QC metrics for each stage, derived from current community standards and recent literature (e.g., the MRIQC and fMRIPrep frameworks, QSIPrep standards).

Table 1: Stage-Specific QC Metrics for Neuroimaging Analysis

| Processing Stage | Primary Sources of Analytical Variation | Recommended QC Metrics | Quantitative Benchmark (Typical Range for Acceptance) |

|---|---|---|---|

| Acquisition | Scanner drift, motion, protocol deviations, signal-to-noise ratio (SNR) | Signal-to-Noise Ratio (SNR); Contrast-to-Noise Ratio (CNR); Temporal SNR (tSNR); Frame-wise displacement (FD); Visual inspection of raw images. | Anatomical SNR > 20; fMRI tSNR > 100; Mean FD < 0.2mm per volume. |

| Preprocessing | Registration errors, normalization accuracy, distortion correction efficacy, tissue segmentation errors | Normalization cost function (e.g., mutual information); Segmentation Dice coefficient; Edge displacement (e.g., for motion correction); Contamination factor (e.g., from multi-echo denoising with tedana). | Cost function value < 0.5; Dice coefficient for CSF/GM/WM > 0.85; Mean edge displacement < 1 voxel. |

| First-Level Analysis (e.g., fMRI GLM) | Model misspecification, residual motion, physiological noise confounds | Explained variance (R²); Mean-squared error (MSE); Voxel-wise smoothness (FWHM); Quality of model fit (e.g., contrast estimates vs. noise). | Mean R² within ROI should be > 5-10%; Smoothness estimates consistent with applied kernel. |

| Higher-Level Analysis (Group/Population) | Inter-subject registration errors, outlier influence, homogeneity of variance | Mahalanobis distance for outlier detection; Inter-subject correlation matrices; Variability of contrast maps across subjects (ICC). | Subjects with Mahalanobis distance > χ² crit (p<0.001) flagged; ICC > 0.4 for key contrasts. |

| Visualization & Reporting | Inappropriate statistical thresholds, misleading colormaps, selective reporting | Adherence to statistical reporting standards (e.g., p-values, effect sizes, confidence intervals); Use of colorblind-friendly palettes. | p-values reported exactly; Effect sizes (Cohen's d, β) provided for all significant results. |
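As an illustration of how one acquisition-stage benchmark from the table could be checked, the sketch below computes frame-wise displacement in the commonly used Power-style form (sum of absolute frame-to-frame changes in the six rigid-body parameters, with rotations converted to millimetres on a 50 mm sphere) and compares the mean against the 0.2 mm threshold. The function names, the 50 mm radius default, and the assumed column order (three translations in mm, then three rotations in radians) are assumptions for illustration; motion-parameter files from different tools use different orders and units, so check before applying this to real data.

```python
import numpy as np


def framewise_displacement(motion, radius_mm=50.0):
    """Power-style FD from an (n_volumes, 6) array of rigid-body parameters.

    Assumed column order (hypothetical; verify for your tool):
    3 translations in mm, then 3 rotations in radians.
    """
    motion = np.asarray(motion, dtype=float)
    if motion.ndim != 2 or motion.shape[1] != 6:
        raise ValueError("expected an (n_volumes, 6) array")
    # Absolute change between consecutive volumes for each parameter.
    deltas = np.abs(np.diff(motion, axis=0))
    # Convert rotations (radians) to arc length (mm) on a sphere of given radius.
    deltas[:, 3:] *= radius_mm
    fd = deltas.sum(axis=1)
    # The first volume has no predecessor, so its FD is set to 0 by convention.
    return np.concatenate([[0.0], fd])


def passes_motion_qc(motion, mean_fd_threshold_mm=0.2):
    """True when mean FD is below the acceptance threshold from the table."""
    return float(framewise_displacement(motion).mean()) < mean_fd_threshold_mm


if __name__ == "__main__":
    params = np.zeros((3, 6))
    params[1, 0] = 0.1      # 0.1 mm translation at volume 2
    params[2, 3] = 0.002    # 0.002 rad rotation at volume 3
    print(framewise_displacement(params), passes_motion_qc(params))
```

The same pattern (compute the metric, compare against a documented threshold, return a flag) extends to the other rows of the table.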

Detailed Experimental Protocols for Key QC Experiments

Protocol 1: Quantifying Acquisition Quality via Temporal SNR (tSNR) Mapping

Application: Essential for resting-state and task fMRI quality assessment.

- Data Requirement: A 4D fMRI timeseries (e.g., func.nii.gz).

- Procedure:

a. Mask Creation: Create a brain mask from the mean functional image, for example with fslmaths <input> -Tmean -thr <value> -bin <mask>.

b. Mean & SD Calculation: Compute the mean (μ) and standard deviation (σ) across time for each voxel within the mask.

c. tSNR Calculation: Compute voxel-wise tSNR as μ/σ.

d. Summary Metric: Calculate the median tSNR within a primary region of interest (e.g., whole-brain gray matter mask).

- QC Decision: Flag datasets where the median tSNR falls below 100 (at 3T) for review of acquisition parameters or participant compliance.
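The mean, standard deviation, tSNR, and summary steps of Protocol 1 can be sketched in a few lines of NumPy. This is a minimal illustration, assuming the 4D timeseries and the brain mask have already been loaded into arrays by a NIfTI reader of your choice; the function names and the use of the sample standard deviation (ddof=1) are assumptions, not part of the protocol.

```python
import numpy as np


def tsnr_map(timeseries, mask):
    """Voxel-wise tSNR (mean / SD over time) inside a boolean mask.

    timeseries: array shaped (x, y, z, t); mask: array shaped (x, y, z).
    Voxels outside the mask, or with zero temporal variance, are set to 0.
    """
    data = np.asarray(timeseries, dtype=float)
    mask = np.asarray(mask, dtype=bool)
    mean = data.mean(axis=-1)
    sd = data.std(axis=-1, ddof=1)  # sample SD; an assumption of this sketch
    tsnr = np.zeros(mean.shape)
    valid = mask & (sd > 0)
    tsnr[valid] = mean[valid] / sd[valid]
    return tsnr


def median_tsnr(timeseries, mask):
    """Median tSNR across all voxels inside the mask."""
    mask = np.asarray(mask, dtype=bool)
    return float(np.median(tsnr_map(timeseries, mask)[mask]))


def flag_low_tsnr(timeseries, mask, threshold=100.0):
    """True when the dataset should be flagged for review (median below threshold)."""
    return median_tsnr(timeseries, mask) < threshold


if __name__ == "__main__":
    # Synthetic 2x2x1 volume with 4 timepoints oscillating around 100.
    series = np.tile(np.array([99.0, 101.0, 99.0, 101.0]), (2, 2, 1, 1))
    brain = np.ones((2, 2, 1), dtype=bool)
    print(median_tsnr(series, brain), flag_low_tsnr(series, brain))
```

Using the median rather than the mean keeps the summary robust to a small number of very high or very low tSNR voxels near the mask edge.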

Protocol 2: Assessing Structural Preprocessing via Tissue Segmentation Accuracy

Application: Validating outputs of tools like FSL FAST, FreeSurfer, or SPM.

- Data Requirement: T1-weighted image and its corresponding segmented outputs (GM, WM, CSF probability maps).

- Procedure (Manual Audit Sub-Sample):

a. Select Random Subset: Randomly select 10-20% of datasets.

b. Visual Overlay: Use software (e.g., fsleyes, Freeview) to overlay segmentation contours on the native T1 image.

c. Scoring: A trained rater scores segmentation accuracy for each tissue class on a 1-5 scale (1=Major errors, 5=Flawless) in three pre-defined slices (axial, coronal, sagittal).

d. Quantitative Backup: Compute the Dice Similarity Coefficient (DSC) between the automated segmentation and a manually corrected gold standard for the audited subset: DSC = 2|A∩B| / (|A| + |B|), where A is the automated segmentation and B is the gold standard.

- QC Decision: If average audit score < 3.5 or median DSC < 0.85, review segmentation parameters or re-run with corrected inputs.
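The Dice Similarity Coefficient used in the quantitative backup step can be computed directly from two binary label arrays. The sketch below assumes the tissue probability maps have already been binarized (for example by thresholding at 0.5, which is an assumption of this example rather than a requirement of the protocol); the function name and the convention for two empty masks are likewise illustrative.

```python
import numpy as np


def dice_coefficient(auto_seg, reference_seg):
    """DSC = 2|A ∩ B| / (|A| + |B|) for two binary masks of equal shape."""
    a = np.asarray(auto_seg, dtype=bool)
    b = np.asarray(reference_seg, dtype=bool)
    if a.shape != b.shape:
        raise ValueError("masks must have the same shape")
    denom = a.sum() + b.sum()
    if denom == 0:
        # Both masks empty: treated as perfect agreement (a convention, not a rule).
        return 1.0
    return float(2.0 * np.logical_and(a, b).sum() / denom)


if __name__ == "__main__":
    automated = np.array([1, 1, 1, 0])
    gold = np.array([0, 1, 1, 1])
    dsc = dice_coefficient(automated, gold)
    print(f"DSC = {dsc:.3f}; needs review: {dsc < 0.85}")
```

For the audited subset, the per-subject values would be collected and their median compared against the 0.85 threshold.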

Visualizing the Integrated QC Workflow

Diagram Title: Integrated QC Checkpoint Workflow for Neuroimaging

Diagram Title: Sources of Variation in Neuroimaging Signal

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 2: Key Software Tools & Resources for Implementing QC Metrics

| Item Name (Software/Package) | Primary Function in QC | Brief Explanation of Use |

|---|---|---|

| MRIQC (v23.1.0) | Automated extraction of no-reference IQMs | Computes a comprehensive suite of image quality metrics (IQMs) from raw T1w, T2w, and BOLD data, enabling outlier detection. |

| fMRIPrep (v23.1.4) / QSIPrep (v0.19.1) | Robust preprocessing with embedded QC | Standardized preprocessing pipelines for fMRI and dMRI that generate visual and quantitative QC reports (e.g., registration, segmentation). |

| FSL (v6.0.7) | General processing and QC utilities | Provides tools like fsl_motion_outliers (for FD), smoothest (for FWHM), and FSLeyes for visual QC. |

| turkeltaub/QC_reporter | Aggregate and visualize multi-stage QC | A MATLAB-based tool to compile metrics from various stages into an interactive HTML dashboard for cohort-level review. |

| PNG (PNG Palette) | Standardized visual reporting | Using perceptually uniform, colorblind-friendly colormaps (e.g., viridis, plasma) for statistical maps ensures accessible, non-misleading visualization. |

| BIDS (Brain Imaging Data Structure) | Data organization foundation | A standardized file system and metadata structure that is prerequisite for automated, scalable QC across datasets and sites. |

The Role of Computational Environments and High-Performance Computing (HPC)

In the context of best practices for capturing analytical variation in neuroimaging experiments, computational environments and HPC are not merely conveniences but foundational necessities. Modern neuroimaging, particularly multi-modal studies integrating fMRI, DTI, and M/EEG, generates datasets that can reach the petabyte scale. Reproducible analysis requires identical software stacks, controlled resource allocation, and the ability to execute complex processing pipelines (e.g., fMRIPrep, FSL, FreeSurfer) across thousands of data permutations to quantify analytical variability. This guide details the technical infrastructure and methodologies enabling robust, large-scale computational neuroimaging.

Core Computational Architectures & Performance Metrics

The choice of computational environment dictates the scale, speed, and reproducibility of analytical workflows. The table below summarizes key architectures and their relevance to neuroimaging.

Table 1: Computational Environments for Neuroimaging Analysis

| Environment Type | Typical Configuration | Key Use Case in Neuroimaging | Throughput Example (Subject Processing) |

|---|---|---|---|

| Local Workstation | 16-64 CPU cores, 128-512 GB RAM, 1-2 GPUs | Pipeline development, small cohort analysis (<50 subjects), quality control visualization. | 1 subject (fMRI preprocessing): 4-12 hours |

| On-Premise HPC Cluster | 1000s of CPU cores, shared high-memory nodes, parallel filesystem (Lustre, GPFS) | Large-scale batch processing for cohort studies, parameter sweep studies to assess analytical variability. | 1000 subjects (DTI tractography): ~24 hours via massive parallelization |

| Cloud Computing (e.g., AWS, GCP) | Elastic, scalable virtual clusters (Spot/Preemptible VMs), object storage (S3) | Bursty, collaborative multi-site analysis, publicly sharing reproducible pipelines (BIDS Apps via containers). | Cost-driven; scalable to match on-premise HPC. |

| Containerized Environments (Docker/Singularity) | Consistent, portable software stacks defined via image files. | Ensuring absolute analytical consistency across all above environments, critical for reproducible variation studies. | Negligible performance overhead (<5%) |

Experimental Protocol: A Computational Study of Analytical Variation

This protocol outlines a systematic computational experiment to quantify the impact of different software toolchains and preprocessing parameters on neuroimaging results.

A. Objective: To measure the variance in functional connectivity outcomes introduced by four different fMRI preprocessing pipelines across a standardized dataset (e.g., ABCD Study subset, n=500).

B. Computational Workflow:

- Data Curation: Fetch a BIDS-formatted dataset from a data repository (e.g., OpenNeuro).

- Environment Provisioning: Instantiate four identical virtual machines on a cloud platform, each with 32 vCPUs and 120 GB RAM.

- Pipeline Deployment: Deploy a distinct containerized pipeline on each VM:

- VM1: fMRIPrep default output + Nilearn connectivity.

- VM2: FSL FEAT standard processing + dual regression.

- VM3: SPM12-based pipeline with AAL atlas.

- VM4: A custom C-PAC configuration.

- High-Throughput Execution: Use a workflow manager (e.g., Snakemake, Nextflow) to submit all 500 subjects per pipeline as parallel jobs.

- Result Aggregation: Compute group-level resting-state networks (e.g., DMN) for each pipeline.

- Variance Quantification: Calculate voxel-wise ICC (Intraclass Correlation Coefficient) across the four pipeline outputs to create maps of "analytical uncertainty."
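The variance quantification step can be sketched as a vectorized ICC computed at every voxel, treating subjects as targets and pipelines as raters. The protocol does not specify which ICC form to use; the example below assumes the two-way consistency form, ICC(3,1) = (MS_subjects - MS_error) / (MS_subjects + (k - 1) MS_error), which ignores constant offsets between pipelines. An absolute-agreement form would penalize such offsets and may be preferable depending on the question. The function name and array layout are assumptions for illustration.

```python
import numpy as np


def icc_consistency(values):
    """Voxel-wise ICC(3,1) for an array shaped (n_subjects, n_pipelines, n_voxels)."""
    y = np.asarray(values, dtype=float)
    n, k = y.shape[0], y.shape[1]
    grand = y.mean(axis=(0, 1), keepdims=True)
    subj_mean = y.mean(axis=1, keepdims=True)   # per subject, across pipelines
    pipe_mean = y.mean(axis=0, keepdims=True)   # per pipeline, across subjects
    # Two-way ANOVA sums of squares, computed independently for each voxel.
    ss_subj = k * ((subj_mean - grand) ** 2).sum(axis=(0, 1))
    ss_pipe = n * ((pipe_mean - grand) ** 2).sum(axis=(0, 1))
    ss_total = ((y - grand) ** 2).sum(axis=(0, 1))
    ss_err = ss_total - ss_subj - ss_pipe
    ms_subj = ss_subj / (n - 1)
    ms_err = ss_err / ((n - 1) * (k - 1))
    denom = ms_subj + (k - 1) * ms_err
    with np.errstate(divide="ignore", invalid="ignore"):
        # Voxels with no variance at all are returned as NaN.
        return np.where(denom > 0, (ms_subj - ms_err) / denom, np.nan)


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    subject_effect = rng.normal(size=(20, 1, 5))        # 20 subjects, 5 voxels
    pipeline_offset = np.arange(4.0).reshape(1, 4, 1)   # 4 pipelines
    noise = rng.normal(scale=1.0, size=(20, 4, 5))
    print(icc_consistency(subject_effect + pipeline_offset + noise))
```

Low-ICC voxels in the resulting map mark regions where the choice of pipeline changes the ranking of subjects, which is one way to read "analytical uncertainty".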

Diagram Title: Workflow for Quantifying Analytical Variation

The Scientist's Computational Toolkit

Table 2: Essential Research Reagent Solutions for Computational Neuroimaging

| Tool/Reagent | Function & Role in Experiment |

|---|---|

| BIDS Validator | Ensures input dataset adheres to Brain Imaging Data Structure standard, guaranteeing format consistency. |

| Docker/Singularity Containers | Encapsulates entire software stack (OS, libraries, tools), eliminating "works on my machine" variability. |

| fMRIPrep | A robust, standardized fMRI preprocessing pipeline, used as a benchmark in variation studies. |

| Quality Assessment Tools (MRIQC) | Automatically computes a suite of image quality metrics for each processed subject, enabling QC-driven exclusion. |

| Nilearn (including the nilearn.connectome module) | Python library for statistical learning on neuroimaging data and network-level connectivity analysis. |

| Slurm / Sun Grid Engine | HPC job scheduler for managing, queuing, and executing thousands of parallel processing jobs. |

| XNAT / COINSTAC | Platform for managing, sharing, and performing federated analysis on neuroimaging data across sites. |

Data Management & Reproducibility Protocols

HPC-enabled analysis demands systematic data governance. The logical relationship between raw data, derivatives, and provenance is critical.

Diagram Title: Neuroimaging Data Provenance & Management
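One lightweight way to make the link between raw data, derivatives, and provenance explicit is to store a small machine-readable record next to each derivative. The sketch below is a hypothetical illustration using only the Python standard library: all field names, the example pipeline name, version string, image tag, and parameter are invented for the example and do not follow any particular provenance standard.

```python
import hashlib
import json


def sha256_of_bytes(payload):
    """Hex digest identifying the exact content of a raw input."""
    return hashlib.sha256(payload).hexdigest()


def build_provenance_record(raw_bytes, pipeline_name, pipeline_version,
                            container_image, parameters):
    """Describe which input, software, and settings produced a derivative.

    Field names are hypothetical and chosen only for this illustration.
    """
    return {
        "raw_sha256": sha256_of_bytes(raw_bytes),
        "pipeline": {
            "name": pipeline_name,
            "version": pipeline_version,
            "container_image": container_image,
        },
        "parameters": parameters,
    }


def record_id(record):
    """Short, deterministic identifier derived from the canonical JSON form."""
    canonical = json.dumps(record, sort_keys=True).encode("utf-8")
    return hashlib.sha256(canonical).hexdigest()[:12]


if __name__ == "__main__":
    record = build_provenance_record(
        raw_bytes=b"abc",                       # stand-in for raw image bytes
        pipeline_name="example-pipeline",       # hypothetical name
        pipeline_version="0.0.0",               # hypothetical version
        container_image="example/image:0.0.0",  # hypothetical image tag
        parameters={"smoothing_fwhm_mm": 6},    # hypothetical parameter
    )
    print(json.dumps(record, indent=2))
    print("record id:", record_id(record))
```

Because the identifier is derived from the sorted JSON form, two runs with the same input, software, and parameters map to the same identifier, and any change to one of them produces a different one.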

Quantitative Benchmarks & Scaling Laws

Performance characteristics directly influence the feasibility of large-scale variation studies.

Table 3: HPC Scaling Benchmarks for a Typical fMRI Preprocessing Pipeline

| Number of Subjects | Compute Resources Allocated | Wall-clock Time (Single Pipeline) | Estimated Cost (Cloud, Spot Instances) |

|---|---|---|---|

| 50 | 1 node, 32 cores, 64 GB RAM | 18 hours | ~$15 |

| 500 | 10 nodes, 320 cores, 640 GB RAM | 20 hours (parallel efficiency ~90%) | ~$150 |

| 5000 | 100 nodes, 3200 cores, 6.4 TB RAM | 24 hours (due to I/O overhead) | ~$1,800 |
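The efficiency figure quoted in Table 3 can be reproduced with simple arithmetic. Because the number of subjects per node stays constant (50 per node) as the job grows, this is a weak-scaling comparison, where efficiency is the baseline wall-clock time divided by the scaled wall-clock time. The sketch below uses only the values from the table; the function names are illustrative.

```python
def weak_scaling_efficiency(base_hours, scaled_hours):
    """Ratio of baseline to scaled wall-clock time at constant work per node."""
    return base_hours / scaled_hours


def node_hours(nodes, wall_hours):
    """Total compute consumed, the quantity that cloud billing tracks."""
    return nodes * wall_hours


# (subjects, nodes, wall-clock hours) taken from Table 3.
RUNS = [(50, 1, 18.0), (500, 10, 20.0), (5000, 100, 24.0)]

if __name__ == "__main__":
    base_hours = RUNS[0][2]
    for subjects, nodes, hours in RUNS:
        eff = weak_scaling_efficiency(base_hours, hours)
        print(f"{subjects:>5} subjects: efficiency {eff:.0%}, "
              f"{node_hours(nodes, hours):.0f} node-hours")
```

Under this reading, the 500-subject run retains 90% efficiency and the 5000-subject run drops to 75%, consistent with the I/O overhead noted in the table.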

Within the thesis of capturing analytical variation, dedicated computational environments and HPC are the enabling substrates. They allow researchers to systematically explore the parameter and algorithmic space of neuroimaging analysis at scale, transforming a philosophical concern about reproducibility into a quantifiable, mappable outcome. Adopting containerization, workflow managers, and scalable architectures is no longer optional for best practices; it is the bedrock of rigorous, transparent, and generalizable neuroimaging science.

Troubleshooting Common Pitfalls and Optimizing Analysis Robustness

Within the broader thesis on best practices for capturing analytical variation in neuroimaging experiments, diagnosing high variability is a critical precursor to robust, reproducible science. This technical guide outlines systematic, practical approaches for researchers, scientists, and drug development professionals to identify and mitigate sources of excessive variance in neuroimaging data, which can confound biological signals and impede translational applications.