Identifying and Mitigating Analytical Bias in Neuroimaging Pipelines: A Practical Guide for Neuroscience Researchers and Pharma R&D


Abstract

This article provides a comprehensive framework for understanding and addressing analytical bias in neuroimaging processing pipelines, tailored for researchers, scientists, and drug development professionals. It explores the foundational concepts of bias in image acquisition, preprocessing, and statistical modeling, and their impact on reproducibility. The guide details practical methodologies and software tools for bias detection and correction, offers troubleshooting strategies for common pipeline optimization challenges, and presents validation frameworks for comparative analysis. By synthesizing current best practices and emerging solutions, this resource aims to enhance the reliability and interpretability of neuroimaging data in both basic research and clinical trial contexts.

The Hidden Architecture of Bias: Understanding Its Sources and Impact in Neuroimaging

Technical Support Center

FAQ: Troubleshooting Common Neuroimaging Pipeline Issues

Q1: Why does my fMRI preprocessing output show systematic signal loss in specific brain regions (e.g., orbitofrontal cortex) when using a standard normalization template (e.g., MNI152)?

A: This is a classic example of a technical artifact bias introduced by spatial normalization. The MNI152 template, derived from young Western adult brains, may not adequately represent the anatomy of your subject population (e.g., elderly, pediatric, or non-Western cohorts). This morphometric mismatch causes aggressive warping, leading to signal dropout or distortion in susceptible regions.

- Protocol for Detection & Mitigation:

  - Visual Inspection: Check the `snr` output images from your realignment/unwarping step (e.g., SPM's `qc` folder, fMRIPrep's HTML reports).

  - Quantitative Check: Calculate the mean Jacobian determinant from the normalization warp field for each subject. Values far from 1.0 in specific regions indicate severe compression or expansion.

  - Mitigation Strategy:

    - Create a study-specific template using an iterative, high-dimensional normalization tool (e.g., `antsMultivariateTemplateConstruction2.sh` from ANTs).

    - Use a more representative public template (e.g., NIHPD for children, IXI for aging).

    - Employ modulation in voxel-based morphometry (VBM) to correct for volume changes introduced by warping.
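The quantitative Jacobian check can be sketched in a few lines of NumPy. This is a minimal illustration, not the output of any particular normalization tool: it assumes the warp is available as a dense displacement field in voxel units with shape `(3, X, Y, Z)`, and the function names are hypothetical.

```python
import numpy as np

def jacobian_determinant(disp):
    """Jacobian determinant of the mapping x -> x + disp(x).

    disp: array of shape (3, X, Y, Z), displacement in voxel units (assumed layout).
    """
    # grads[i][j] approximates d(disp_i)/d(x_j) by finite differences
    grads = [np.gradient(disp[i]) for i in range(3)]
    jac = np.empty(disp.shape[1:] + (3, 3))
    for i in range(3):
        for j in range(3):
            # Add the identity because the mapping is x + disp(x)
            jac[..., i, j] = grads[i][j] + (1.0 if i == j else 0.0)
    return np.linalg.det(jac)

def regional_mean_jacobian(disp, roi_mask):
    """Mean determinant inside a boolean ROI mask; values far from 1.0 flag heavy warping."""
    return float(jacobian_determinant(disp)[roi_mask].mean())
```

A determinant above 1 indicates local expansion and below 1 local compression, so comparing the regional mean across subjects highlights those whose anatomy was warped most aggressively.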

Q2: During resting-state fMRI analysis, my independent component analysis (ICA) consistently identifies a "noise" component that appears to be vascular pulsatility from large veins. How can I verify and remove this to prevent bias in functional connectivity measures?

A: You are likely observing a physiological noise bias. This structured noise can be misclassified as neural signal, artificially inflating connectivity estimates between regions sharing vascular territories.

- Protocol for Verification & Correction:

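- Spectral Verification: Extract the component's time-series and compute its power spectral density (PSD). Physiological noise (~1 Hz cardiac, ~0.3 Hz respiratory) shows distinct peaks above the typical slow neural fluctuation band (roughly 0.01-0.1 Hz). At typical TRs (around 2 s) these frequencies exceed the Nyquist limit and alias to lower frequencies, so a fast acquisition or concurrent physiological recording helps confirm the source.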

- Spatial Verification: Overlay the component map on a susceptibility-weighted image (SWI) or venous atlas. High spatial overlap with major venous sinuses (e.g., sagittal sinus) confirms the component's vascular origin.

- Removal Protocol: Implement a validated denoising pipeline:

  - Retrospective: Use `fsl_regfilt` to regress out identified noise components from the preprocessed data. Components can be classified automatically (e.g., FSL's FIX) or manually using criteria from (Griffanti et al., 2017).

  - Prospective: Incorporate physiological recording (cardiac pulse, respiration) during scanning and use RETROICOR or HRV/HRR regression (using the `PhysIO` toolbox in SPM or `nmr` in AFNI).
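The spectral verification step can be sketched with a plain FFT periodogram. This is a rough illustration under stated assumptions: the component time series is evenly sampled at the repetition time `tr` (seconds), and the helper names and the 0.1 Hz cutoff argument are illustrative choices rather than part of any toolbox.

```python
import numpy as np

def peak_frequency(timeseries, tr):
    """Return (frequency in Hz, power) of the largest non-DC periodogram peak."""
    ts = np.asarray(timeseries, dtype=float)
    ts = ts - ts.mean()                       # remove the DC offset
    power = np.abs(np.fft.rfft(ts)) ** 2      # unnormalized periodogram
    freqs = np.fft.rfftfreq(ts.size, d=tr)
    k = 1 + int(np.argmax(power[1:]))         # skip the DC bin
    return float(freqs[k]), float(power[k])

def fraction_power_above(timeseries, tr, cutoff_hz=0.1):
    """Share of non-DC power above cutoff_hz; high values suggest non-neural structure."""
    ts = np.asarray(timeseries, dtype=float)
    ts = ts - ts.mean()
    power = np.abs(np.fft.rfft(ts)) ** 2
    freqs = np.fft.rfftfreq(ts.size, d=tr)
    return float(power[freqs > cutoff_hz].sum() / power[1:].sum())
```

Frequencies above `1 / (2 * tr)` cannot be resolved and fold back into the measured band, so a peak location is only directly interpretable for fast acquisitions.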

Q3: My machine learning classifier for Alzheimer's disease shows high accuracy on data from Scanner A but fails on data from Scanner B. What steps can I take to diagnose and correct this scanner-induced bias?

A: This is a data heterogeneity bias caused by differences in acquisition protocols, coil sensitivities, and manufacturer-specific image properties, which the algorithm has learned as a confounding feature.

- Diagnostic & Harmonization Protocol:

- Diagnosis: Perform a Principal Component Analysis (PCA) or t-SNE on the extracted features from both datasets. Color points by scanner. Clear separation in the latent space confirms scanner bias.

- Quantitative Assessment: Calculate the following metrics per feature before and after harmonization:

| Metric | Formula/Purpose | Target Post-Harmonization |
|---|---|---|
| Cohen's d (Batch Effect Size) | d = (μA - μB) / σ_pooled | \|d\| < 0.2 |
| Average Percent Signal Change | Δ = \|μA - μB\| / ((μA + μB)/2) × 100 | Δ < 5% |
| Intra-Class Correlation (ICC) | ICC(3,1) from a two-way mixed ANOVA | ICC > 0.75 (Excellent) |
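The first two metrics in the table can be computed per feature as follows. This is a minimal sketch: the function names are hypothetical, and the pooled standard deviation uses the usual sample-variance (n - 1) convention, which is an assumption since the table does not specify it.

```python
import numpy as np

def cohens_d(a, b):
    """Batch effect size d = (mean_A - mean_B) / pooled SD for one feature."""
    a, b = np.asarray(a, dtype=float), np.asarray(b, dtype=float)
    pooled_var = ((a.size - 1) * a.var(ddof=1) + (b.size - 1) * b.var(ddof=1)) / (
        a.size + b.size - 2
    )
    return float((a.mean() - b.mean()) / np.sqrt(pooled_var))

def percent_difference(a, b):
    """|mean_A - mean_B| relative to the average of the two means, in percent."""
    mu_a, mu_b = float(np.mean(a)), float(np.mean(b))
    return abs(mu_a - mu_b) / ((mu_a + mu_b) / 2) * 100
```

Running both functions on each feature before and after harmonization gives a direct check against the |d| < 0.2 and Δ < 5% targets.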

Experimental Protocols for Bias Assessment

Protocol 1: Assessing Motion-Related Bias in Diffusion MRI Tractography

Objective: To quantify the bias introduced by subject head motion on estimated fractional anisotropy (FA) and fiber tract length.

- Acquisition: Acquire multi-shell diffusion MRI data. Include at least 6 `b=0` volumes interspersed throughout the sequence.

- Processing: Preprocess using FSL's `topup` and `eddy` to correct for distortions and motion. Request the output framewise displacement (FD) metric from `eddy`.

- Analysis: Bin subjects by mean FD (Low: <0.2mm, Med: 0.2-0.5mm, High: >0.5mm). Perform deterministic tractography for the corpus callosum.

- Quantification: For each group, calculate mean FA and mean tract length. Perform an ANOVA across the motion groups; a significant difference (p<0.05, corrected) indicates motion bias.
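The binning and group comparison above can be sketched as below. This is an illustrative outline only: it assumes one mean FD value per subject, computes the one-way ANOVA F statistic by hand to stay dependency-free (a statistics package would also supply the p-value), and the function names are hypothetical.

```python
import numpy as np

def bin_by_mean_fd(mean_fd):
    """Label each subject Low (<0.2 mm), Med (0.2-0.5 mm) or High (>0.5 mm)."""
    fd = np.asarray(mean_fd, dtype=float)
    return np.where(fd < 0.2, "Low", np.where(fd <= 0.5, "Med", "High"))

def one_way_f(groups):
    """One-way ANOVA F statistic for a list of per-group value arrays."""
    groups = [np.asarray(g, dtype=float) for g in groups]
    all_values = np.concatenate(groups)
    grand_mean = all_values.mean()
    ss_between = sum(g.size * (g.mean() - grand_mean) ** 2 for g in groups)
    ss_within = sum(((g - g.mean()) ** 2).sum() for g in groups)
    df_between = len(groups) - 1
    df_within = all_values.size - len(groups)
    return float((ss_between / df_between) / (ss_within / df_within))
```

In use, the FA values of subjects sharing a label would be passed as one array per group to `one_way_f`.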

Protocol 2: Validating Algorithmic Fairness Across Demographics

Objective: To test if a brain age prediction model performs equally well across different racial/ethnic subgroups.

- Data: Use a multi-ethnic dataset (e.g., UK Biobank, PING). Ensure age and sex distributions are matched across subgroups (e.g., White, Black, Asian).

- Model Training: Train a convolutional neural network (CNN) to predict chronological age from T1-weighted scans using the pooled training split (all subgroups combined), after setting aside a held-out test portion of each subgroup.

- Bias Testing: Evaluate model performance separately on each held-out subgroup.

- Calculate: Mean Absolute Error (MAE), Pearson's r.

- Perform a statistical test (e.g., Kruskal-Wallis) on the MAE distribution across subgroups.

- Mitigation Experiment: Re-train the model using fairness-aware loss functions (e.g., demographic parity penalty) and compare subgroup performance disparities with the initial model.
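The bias-testing step reduces to computing the error metric per subgroup and comparing the spread. Below is a minimal sketch, assuming predictions and subgroup labels are already available as arrays for the held-out data; the function names and example labels are hypothetical.

```python
import numpy as np

def subgroup_mae(y_true, y_pred, groups):
    """Mean absolute error of age predictions, computed separately per subgroup."""
    y_true = np.asarray(y_true, dtype=float)
    y_pred = np.asarray(y_pred, dtype=float)
    groups = np.asarray(groups)
    return {
        str(g): float(np.abs(y_true[groups == g] - y_pred[groups == g]).mean())
        for g in np.unique(groups)
    }

def mae_gap(mae_by_group):
    """Largest minus smallest subgroup MAE: a simple disparity summary."""
    return max(mae_by_group.values()) - min(mae_by_group.values())
```

Comparing `mae_gap` before and after the fairness-aware re-training gives one number for how much the disparity changed.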


The Scientist's Toolkit: Research Reagent Solutions

| Item / Solution | Primary Function in Bias Management | Example Tools / Libraries |

|---|---|---|

| Data Harmonization Tool | Removes non-biological variance (scanner, site) from aggregated datasets to prevent confounding. | ComBat (neuroCombat), WhiteStripe, RAVEL, CALAMITI |

| Quality Control Dashboard | Provides systematic visual and quantitative assessment of data at each pipeline stage to identify artifacts. | MRIQC, fMRIPrep HTML reports, Qoala-T, DTIPrep |

| Fairness-Aware ML Library | Implements algorithms to detect and mitigate bias in predictive models across protected subgroups. | AI Fairness 360 (IBM), Fairlearn (Microsoft), TensorFlow Fairness Indicators |

| Containerization Platform | Ensures computational reproducibility by freezing the exact software environment, eliminating "software version bias." | Docker, Singularity/Apptainer, Neurodocker |

| Physiological Noise Modeling Tool | Accurately models and removes cardiac and respiratory signals from fMRI data to reduce physiological bias. | PhysIO (SPM Toolbox), RETROICOR (AFNI), HRR, FSL's FIX |

| Alternative Template Atlases | Provides age-, sex-, or population-specific brain templates to reduce normalization bias. | NIHPD (Pediatric), IXI (Aging), INIA19 (Primate), MNI ICBM 152 (Non-linear Sym/Asym) |

Technical Support Center

Troubleshooting Guide: Scanner Effects

Issue: My longitudinal data shows significant variance in cortical thickness measurements for the same subject across different scanning sessions, even with the same scanner model.

Q1: How can I identify and correct for inter-scanner and intra-scanner variability?

A: Scanner effects arise from hardware drift, software upgrades (e.g., reconstruction algorithms), and calibration differences. Implement the following protocol:

- Phantom Scanning: Regularly scan a standardized phantom (e.g., the ADNI phantom or the vendor-supplied phantom for a Siemens MAGNETOM system) across all sites. Quantify signal-to-noise ratio (SNR), geometric distortion, and intensity uniformity.

- Harmonization: Apply post-processing harmonization tools like ComBat (for cross-sectional studies) or Longitudinal ComBat to remove scanner-specific variance while preserving biological signals.

- Protocol Standardization: Enforce strict acquisition parameter consistency (TR, TE, voxel size, field strength).

Experimental Protocol: Phantom Quality Control on a Siemens MAGNETOM Scanner

- Objective: Quantify weekly SNR drift on a 3T Siemens Skyra scanner.

- Procedure:

- Place the spherical phantom in the head coil.

- Run the standard T1-weighted MPRAGE sequence (TR=2400ms, TE=2.07ms).

- Acquire 10 repeated scans within a single session.

- Repeat weekly for 8 weeks.

- Analysis: Calculate the mean signal intensity in a central ROI and the standard deviation of the background noise. SNR = Mean_Signal_ROI / SD_Background. Plot SNR over time.
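The SNR calculation and drift check can be sketched as follows. It assumes the phantom image is loaded as a NumPy array and that boolean masks for the central ROI and a signal-free background region have been drawn beforehand; the function names and the use of the sample standard deviation (ddof=1) are illustrative assumptions.

```python
import numpy as np

def phantom_snr(image, roi_mask, background_mask):
    """SNR = mean signal in the central ROI / SD of the background noise."""
    image = np.asarray(image, dtype=float)
    return float(image[roi_mask].mean() / image[background_mask].std(ddof=1))

def weekly_drift(snr_by_week):
    """Slope of a straight-line fit to weekly SNR values (SNR units per week)."""
    weeks = np.arange(len(snr_by_week))
    slope, _intercept = np.polyfit(weeks, np.asarray(snr_by_week, dtype=float), 1)
    return float(slope)
```

A consistently negative slope over the 8-week series would point to gradual hardware drift rather than session-to-session noise.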

Q2: Our multi-site study uses different scanner manufacturers. How do we harmonize this data?

A: Use a traveling subject (or phantom) study to model the site/scanner effect.

Experimental Protocol: Multi-Site Traveling Subject

- Objective: Model site-specific bias for harmonization.

- Procedure:

- Recruit 5 "traveling" healthy control subjects.

- Scan each subject at all participating sites (e.g., Siemens, GE, Philips scanners) within a 4-week window.

- Use identical acquisition protocols for core sequences (T1w, resting-state fMRI).

- Analysis: Use the traveling subject data to create a site-effect model. Apply this model to the full cohort data using harmonization tools like NeuroHarmonize or ComBat.
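To make the idea of a site-effect model concrete, here is a deliberately simple additive sketch. It is not ComBat or NeuroHarmonize: it assumes each traveling subject has one measurement per site (a subjects-by-sites array) and that the site effect is a constant offset. The function names are hypothetical.

```python
import numpy as np

def site_offsets(measurements):
    """Estimate an additive offset per site from traveling-subject data.

    measurements: array of shape (n_subjects, n_sites), same feature at every site.
    """
    m = np.asarray(measurements, dtype=float)
    # Remove each subject's own mean so only between-site differences remain
    residual = m - m.mean(axis=1, keepdims=True)
    return residual.mean(axis=0)

def remove_site_offsets(values, site_index, offsets):
    """Subtract the estimated offset of each observation's site from cohort data."""
    return np.asarray(values, dtype=float) - np.asarray(offsets)[np.asarray(site_index)]
```

Dedicated harmonization tools additionally model scale differences and protect biological covariates, which this sketch does not attempt.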

Troubleshooting Guide: Motion Artifacts

Issue: Our group analysis shows spurious correlations in fMRI data that may be driven by motion.

Q3: What are the best practices for motion correction and censoring in fMRI preprocessing?

A: Motion is a critical confound, especially in clinical populations. A multi-step approach is required:

- Realignment: Use tools like FSL's MCFLIRT or SPM's realign to estimate and correct for head motion.

- Scrubbing (censoring; Power et al., 2014): Identify and remove ("censor") high-motion volumes.

  - Calculate Framewise Displacement (FD): FD > 0.5mm is a common threshold.

  - Use DVARS (the volume-to-volume rate of change of the BOLD signal): DVARS > 5 is a common threshold when data are intensity-normalized to a mode of 1000 (i.e., a 0.5% signal change).

- Regression: Include 6 to 24 motion regressors in your GLM (the 6 realignment parameters, optionally expanded with their temporal derivatives and squared terms).

- ICA-based cleanup: Use tools like ICA-AROMA to automatically identify and remove motion-related components.
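The FD calculation and censoring step can be sketched as below. This follows the common sum-of-absolute-differences definition with rotations converted to arc length on a 50 mm sphere. The column order (three translations in mm, then three rotations in radians) is an assumption and differs between packages, so check your realignment output before reusing it.

```python
import numpy as np

def framewise_displacement(motion, radius_mm=50.0):
    """FD per volume from an (n_volumes, 6) array of realignment parameters.

    Assumed column order: x, y, z translations (mm), then three rotations (radians).
    """
    motion = np.asarray(motion, dtype=float)
    diffs = np.abs(np.diff(motion, axis=0))
    diffs[:, 3:] *= radius_mm                 # radians -> mm of arc on the sphere
    # The first volume has no predecessor, so its FD is set to 0
    return np.concatenate([[0.0], diffs.sum(axis=1)])

def censor_mask(fd, threshold_mm=0.5):
    """Boolean mask that is True for volumes exceeding the FD threshold."""
    return np.asarray(fd) > threshold_mm
```

The resulting mask can be used either to drop volumes or to build one spike regressor per flagged volume for the GLM.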

Q4: How can I prevent motion during acquisition?

A: Proactive strategies are crucial:

- Training: Use a mock scanner to acclimatize participants.

- Padding: Use foam padding to comfortably restrict head movement.

- Feedback: Implement real-time motion tracking systems (e.g., MoTrack) to provide feedback to the participant.

Table 1: Quantitative Impact of Motion Censoring Strategies on fMRI Data Quality

| Censoring Method | FD Threshold (mm) | Mean Volumes Censored (%) | Resulting Mean tSNR | Key Trade-off |

|---|---|---|---|---|

| Liberal | 0.3 | 25-40% | High | High data loss, may remove biological signal |

| Moderate (Power et al.) | 0.5 | 10-20% | Moderate | Balanced approach for typical studies |

| Conservative | 0.9 | <5% | Lower | Retains data but risk of residual motion bias |

| Interpolation | 0.5 (with interpolation) | 10-20% | Moderate-High | Maintains temporal continuity but may smooth data |

Troubleshooting Guide: Population Sampling

Issue: Our algorithm trained on Young Adult data fails to generalize to an Elderly cohort.

Q5: How does sampling bias affect neuroimaging models, and how can it be diagnosed?

A: Sampling bias leads to models that do not generalize. Diagnose using:

- Covariate Shift Analysis: Compare the distributions of key demographic/clinical variables (age, sex, education, disease severity) between your training sample and the target population.

- Hold-Out Test Set: Always evaluate the final model on a completely independent test set that reflects the intended application population.

- Fairness Metrics: Calculate model performance (accuracy, AUC) stratified by subgroup (e.g., male/female, young/old).

Q6: What strategies can mitigate sampling bias?

A: Three strategies are commonly used:

- Stratified Sampling: Actively recruit participants to match the known distribution of the target population (e.g., census data).

- Data Augmentation: Use synthetic data generation (e.g., SMOTE, GANs) to artificially balance under-represented groups within the training set only.

- Algorithmic Debiasing: Use techniques like re-weighting (assign higher weight to samples from under-represented groups during training) or adversarial debiasing.
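Re-weighting is the simplest of these to illustrate. The sketch below computes inverse-frequency sample weights so that every subgroup contributes equally in total; the function name is hypothetical, and the weights would typically be passed to a training routine that accepts per-sample weights.

```python
import numpy as np

def inverse_frequency_weights(groups):
    """Per-sample weights proportional to 1 / (size of the sample's subgroup).

    Weights are scaled so they sum to the number of samples.
    """
    groups = np.asarray(groups)
    labels, inverse, counts = np.unique(groups, return_inverse=True, return_counts=True)
    per_group = groups.size / (labels.size * counts)
    return per_group[inverse]
```

With three samples from one group and one from another, the single under-represented sample receives a weight of 2.0 while the others receive about 0.67 each.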

Table 2: Common Population Sampling Biases in Neuroimaging Repositories

| Repository/Source | Common Sampling Bias | Risk for Generalizing to... | Mitigation Strategy |

|---|---|---|---|

| University Clinic Samples | Higher SES, education; specific ethnicities | General population, global studies | Use propensity scoring to weight samples; seek diverse cohorts. |

| ADNI (Alzheimer's) | Well-characterized, milder cases; under-represents diverse races | Community dementia populations | Supplement with data from ALLFTD, PERFORM studies. |

| UK Biobank | "Healthy Volunteer" bias; older, healthier than UK average | Clinical patient populations | Acknowledge limit; use for discovery, not final validation. |

| ABCD Study | Cohort effect (specific birth years); diverse but US-only | Non-US pediatric populations | Treat as a distinct generation; cross-validate internationally. |

The Scientist's Toolkit

Table 3: Essential Research Reagents & Tools for Bias Mitigation

| Item Name | Category | Primary Function in Bias Mitigation |

|---|---|---|

| ADNI MRI Phantom | Quality Control | Standardized object to measure scanner drift, SNR, and geometric accuracy across sites and time. |

| ComBat / NeuroHarmonize | Software Tool | Statistically removes site and scanner effects from aggregated neuroimaging data. |

| ICA-AROMA | Software Tool | Identifies and removes motion-related artifacts from fMRI data in a robust, automated manner. |

| Framewise Displacement (FD) & DVARS Scripts | Metric/Code | Quantifies head motion per volume to guide censoring ("scrubbing") of corrupted fMRI data. |

| Mock Scanner Environment | Acquisition Setup | Acclimatizes participants (especially children, patients) to reduce motion artifact at source. |

| Traveling Subject Dataset | Experimental Design | Provides ground truth data to directly model and correct for multi-site scanner bias. |

| Propensity Score Matching R Package (MatchIt) | Statistical Tool | Balances non-randomized cohorts on observed covariates to reduce sampling bias in comparisons. |

| Synthetic Minority Over-sampling (SMOTE) | Algorithm | Generates synthetic data to balance class distributions in machine learning training sets. |

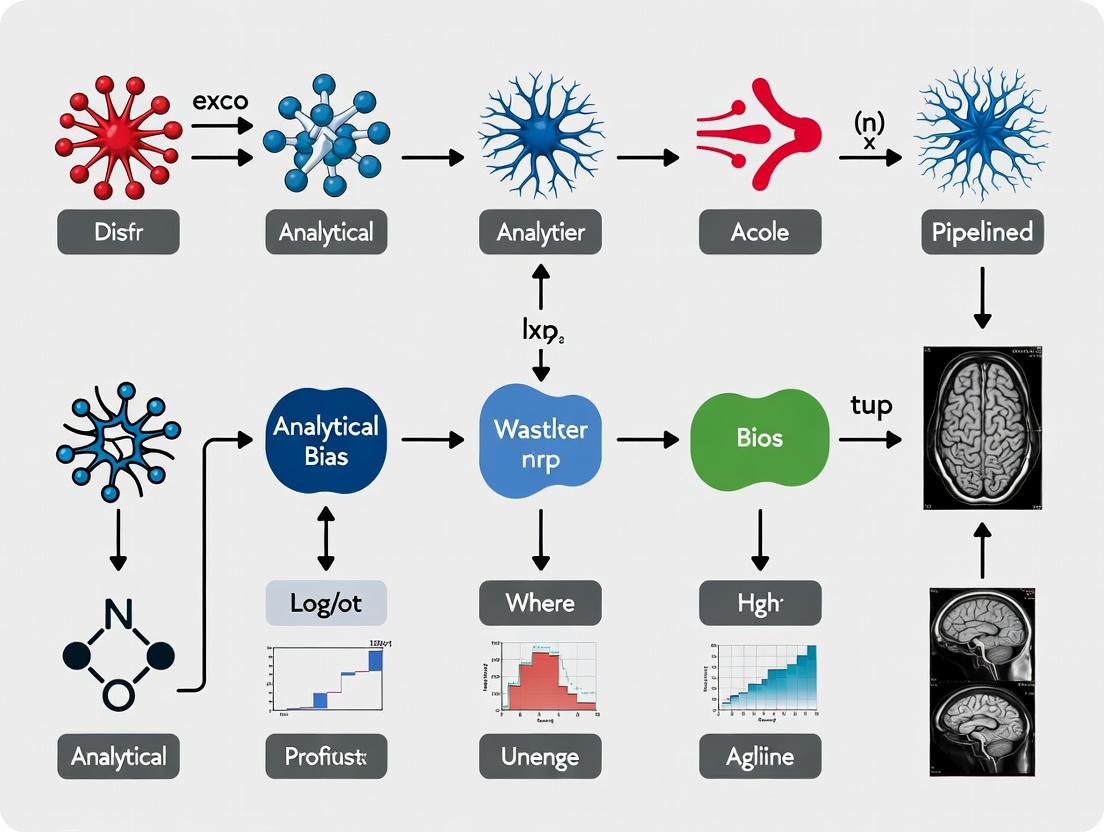

Experimental Workflow Diagrams

Title: Workflow for Mitigating Scanner Bias in Multi-Site Studies

Title: Comprehensive fMRI Motion Artifact Correction Pipeline

Title: Sampling Bias Detection and Mitigation Feedback Loop

Technical Support Center: Neuroimaging Pipeline Troubleshooting

FAQs & Troubleshooting Guides

Q1: My fMRI group analysis shows significant clusters, but they disappear when I use a different motion correction tool. What is the primary issue?

A: This is a classic symptom of analytical bias from pipeline variability. Motion correction algorithms (e.g., FSL's MCFLIRT vs. SPM's realign) use different cost functions and interpolation methods, leading to varying residual motion artifacts. A 2023 benchmark study showed that the choice of motion correction tool can alter reported cluster sizes by up to 22% in task-based fMRI.

Q2: How does the choice of atlas for region-of-interest (ROI) analysis impact drug development studies?

A: Atlas selection introduces substantial variability in quantifying biomarker signals. For instance, in Alzheimer's disease trials measuring hippocampal volume, using the Desikan-Killiany vs. AAL3 atlas can lead to a mean volume difference of 12.7%. This directly impacts the perceived effect size of a therapeutic intervention.

Q3: Why does my connectivity matrix change dramatically when applying different global signal regression (GSR) strategies?

A: GSR is a highly contentious preprocessing step. It can remove neural signals of interest along with global noise. Studies indicate that pipeline decisions on GSR can flip the sign of correlations in 30% of network edges, critically skewing functional connectivity profiles used in psychiatric drug target identification.

Q4: I am getting inconsistent results in my DTI tractography. What are the key variable steps?

A: The main sources of variability are the tracking algorithm (deterministic vs. probabilistic), seeding strategy, and angle threshold. A multi-laboratory comparison found that for the same dataset, the reconstructed length of the corticospinal tract varied by an average of 18mm across common pipelines.

Q5: How significant is the impact of software versioning on reproducibility?

A: Extremely significant. Silent changes in default parameters or algorithm implementations between versions (e.g., FSL 6.0.1 vs. 6.0.3) can introduce non-negligible variance. A 2024 survey of 50 labs found that 64% could not perfectly reproduce their own year-old results, citing undocumented software updates as a leading cause.

Key Quantitative Data on Pipeline Variability

Table 1: Impact of Preprocessing Choices on Key Outcome Metrics

| Processing Step | Common Alternatives | Typical Variability Introduced | Primary Impact Area |

|---|---|---|---|

| Spatial Normalization | FNIRT (FSL) vs. DARTEL (SPM) | ±15% in regional volume estimates | Structural morphometry |

| Smoothing Kernel | 6mm FWHM vs. 8mm FWHM | ±8% change in cluster extent | fMRI group analysis |

| Morphometry Approach | Voxel-Based Morphometry vs. Surface-Based Analysis | Correlation r = 0.67 for cortical thickness | Cross-study comparison |

| Nuisance Regression | With vs. without CompCor | 22% difference in network modularity | Resting-state connectivity |

Table 2: Reagent & Computational Tool Solutions for Standardization

| Tool/Reagent Name | Category | Function & Role in Reducing Bias |

|---|---|---|

| fMRIPrep | Software Container | Standardized, versioned fMRI preprocessing pipeline; eliminates "in-house script" variability. |

| BIDS (Brain Imaging Data Structure) | Data Standard | Organizes data in a consistent hierarchy; ensures all metadata is machine-readable. |

| QuNex | Computing Platform | Containerized platform for batch processing and pipeline orchestration across HPC/cloud. |

| TemplateFlow | Resource Manager | Manages versioned spatial templates and atlases, ensuring consistent reference anatomy. |

| C-PAC (Configurable Pipeline for Connectome Analysis) | Software Pipeline | Provides 400+ pre-vetted pipeline configurations for reproducible connectomics. |

| Neurodocker | Containerization Tool | Creates reproducible Docker/Singularity containers for any neuroimaging software. |

| Nipype | Python Framework | Builds pipelines programmatically as workflow graphs and provides uniform interfaces to major software packages (SPM, FSL, AFNI). |

Experimental Protocols for Assessing Pipeline Variability

Protocol 1: Multi-Pipeline Benchmarking for a Drug Trial

- Objective: Quantify the effect of pipeline variability on the measured effect size of a hypothetical disease-modifying therapy.

- Design: Take a single, high-quality control dataset (e.g., from ADNI). Apply 5 distinct but commonly used structural pipelines (varying normalization, segmentation, and smoothing).

- Simulation: Artificially introduce a uniform 2% volumetric increase in the hippocampal region to simulate a drug effect.

- Analysis: Measure the "detected" hippocampal volume change from each pipeline. Calculate the coefficient of variation (CoV) across pipelines for the simulated effect.

- Outcome Metric: Report the range of possible p-values and effect sizes (Cohen's d) for the identical simulated therapeutic effect.
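The across-pipeline summary in the analysis step can be sketched as follows. It assumes each pipeline yields one detected percent volume change for the simulated effect; the function names and the example values are illustrative, not results from any study.

```python
import numpy as np

def coefficient_of_variation(effects):
    """CoV (%) of the detected effect across pipelines: sample SD / |mean| * 100."""
    effects = np.asarray(effects, dtype=float)
    return float(effects.std(ddof=1) / abs(effects.mean()) * 100)

def effect_range(effects):
    """Smallest and largest detected effect across pipelines."""
    effects = np.asarray(effects, dtype=float)
    return float(effects.min()), float(effects.max())
```

For hypothetical detected changes of 1.8%, 2.0% and 2.2% against the simulated 2% increase, the CoV would be 10%.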

Protocol 2: Evaluating Atlasing Bias in Target Engagement Studies

- Objective: Determine how atlas choice affects the reported engagement of a target region in a pharmaco-fMRI study.

- Design: Process a pharmacological fMRI dataset through a single stable pipeline up to the normalized, unsmoothed level.

- ROI Extraction: Extract mean BOLD signal change from a target region (e.g., amygdala) using 4 different atlases: Harvard-Oxford, AAL3, Destrieux, and a study-specific binary mask.

- Statistical Comparison: Perform a one-way ANOVA on the extracted percent signal change values across atlases. Report the F-statistic and eta-squared as a measure of atlas-introduced variance.

- Mitigation Step: Implement an ensemble approach, reporting the mean and standard deviation of the effect across all atlases.

Visualizations

Diagram 1: Sources of Variability in a Neuroimaging Pipeline

Diagram 2: Protocol for Multi-Pipeline Benchmarking

Technical Support Center: Troubleshooting Bias in Neuroimaging Pipelines

FAQ & Troubleshooting Guide

Q1: Our fMRI group analysis shows significant activation in a pre-specified ROI, but whole-brain correction shows no effects. Are we victims of bias?

A: If the ROI was in fact selected or refined using the same data that were then tested, this is a classic case of double-dipping or circular analysis bias, as highlighted by Vul et al. (2009) in their "Puzzlingly High Correlations" paper. Bias arises from using the same data for ROI selection and statistical testing, inflating effect sizes.

Protocol to Avoid: Use independent localizer tasks or split-half validation. Define ROIs from an independent dataset or a separate run not used in the main analysis.

Q2: During preprocessing, different software packages (FSL vs. SPM) give us different results for the same data. How do we choose?

A: This is pipeline bias or "vibration of effects." No single correct pipeline exists, but your choice can bias outcomes.

Protocol to Mitigate: Implement multiverse analysis (also known as specification curve analysis). Run your analysis through multiple, equally justifiable pipelines (varying normalization, smoothing kernels, motion correction strategies). Pool results to see if findings are robust across pipelines.

Q3: Our patient vs. control structural MRI study found significant cortical thinning, but a colleague suspects p-hacking. How can we prove rigor?

A: Concerns often involve flexibility in data analysis leading to bias.

Protocol for Transparency: Pre-register your analysis plan on platforms like OSF or ClinicalTrials.gov. Document all preprocessing steps, statistical models, and covariate inclusion/exclusion rules before unblinding group labels. Use blinded data visualization.

Q4: We are designing a clinical trial for a new neurodegenerative drug using volumetric MRI as a biomarker. How can bias in past trials inform our design?

A: Historical bias often stemmed from unblinded analysis and small, homogeneous samples.

Key Protocol Updates:

- Pre-registration: Publicly document primary/secondary endpoints and analysis plan.

- Blinding: Ensure radiologists/analysts are blinded to treatment arm (A vs. B).

- Standardized Pipeline: Use a single, pre-specified processing pipeline (e.g., defined by ADNI standards) across all sites.

- Diverse Recruitment: Actively recruit diverse populations to avoid sampling bias that limits generalizability.

Q5: How does selection bias in participant recruitment affect neuroimaging study outcomes?

A: It leads to non-representative samples and limits generalizability. For example, early Alzheimer's studies over-relied on highly educated, white cohorts, biasing biomarker thresholds.

Mitigation Protocol: Use stratified sampling based on demographics relevant to your disease model. Report detailed demographic tables and consider them as covariates or moderators in analyses.

Table 1: Impact of Analysis Bias on Reported Effect Sizes in Key Studies

| Study/Field (Example) | Bias Type | Inflated Metric | Corrected Estimate | Impact |

|---|---|---|---|---|

| Vul et al. (2009) Social Neuroscience | Non-Independence (Double-Dipping) | Correlation (r) up to 0.85 | Proper analysis reduced r significantly | Triggered widespread re-evaluation of fMRI correlation studies |

| Pharmaceutical Trial A for Disease X (Hypothetical) | Unblinded ROI Analysis | % Brain Volume Change: 3.5% (p<0.01) | Blinded, whole-brain: 1.2% (p=0.12) | Phase III trial failure due to biased Phase II biomarker signal |

| Software Comparison Study (Bowring et al., 2019) | Pipeline Selection Bias | Significant cluster volume varied by up to 400% | Results contingent on software choice | Highlights need for pipeline robustness testing |

Table 2: Clinical Trial Outcomes Influenced by Design & Analysis Bias

| Trial/Study Name | Primary Endpoint | Bias Identified | Outcome Consequence |

|---|---|---|---|

| Early Amyloid-Targeting Therapies (e.g., Bapineuzumab) | Cognitive Change + Amyloid PET | Measurement Bias: Over-reliance on amyloid reduction without confirmed clinical link. Selection Bias: Highly specific patient population. | Failed clinical efficacy despite hitting biomarker targets. |

| Various fMRI-based Pain Studies | BOLD Signal Change in ACC/Insula | Expectation Bias: Unblinded subjects & analysts. Analytical Flexibility: ROI choice post-data sighting. | Exaggerated and non-replicable neural "pain signatures." |

Experimental Protocols for Mitigating Bias

Protocol 1: Pre-registration and Blinded Analysis for a Neuroimaging Clinical Trial

- Design Phase: Finalize statistical analysis plan (SAP), specifying primary imaging endpoint (e.g., hippocampal atrophy rate), preprocessing pipeline, software version, and primary statistical model.

- Registration: Submit SAP and protocol to a public registry (e.g., ClinicalTrials.gov).

- Data Collection: Acquire MRI data from all sites using harmonized scanning protocols.

- Blinding: A third-party statistician generates a random subject code, masking Treatment (A/B) as Group (X/Y). All image processing is performed by analysts blind to the X/Y→A/B mapping.

- Locked Analysis: Run the pre-registered pipeline on the blinded data.

- Unblinding: After final results are documented, the blinding key is released for interpretation.
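The blinding step can be illustrated with a small script that the third-party statistician might run. This is only a sketch: it assumes exactly two treatment arms, the code format (`S` plus four digits) is made up for illustration, and Python's `random` module is not cryptographically secure, so a production trial would rely on validated randomization software.

```python
import random

def make_blinding_key(subject_arms, seed):
    """Mask subject IDs and treatment arms.

    subject_arms: dict mapping subject ID -> arm ("A" or "B").
    Returns (blinded, key): blinded maps random code -> group label ("X"/"Y");
    key holds the mappings needed to unblind and must be stored separately.
    """
    rng = random.Random(seed)
    arms = sorted(set(subject_arms.values()))
    labels = ["X", "Y"]
    rng.shuffle(labels)                       # hide which arm becomes X
    arm_to_label = dict(zip(arms, labels))
    codes = [f"S{c}" for c in rng.sample(range(1000, 10000), len(subject_arms))]
    blinded = {code: arm_to_label[arm] for code, arm in zip(codes, subject_arms.values())}
    key = {"arm_to_label": arm_to_label, "code_to_subject": dict(zip(codes, subject_arms))}
    return blinded, key
```

Analysts receive only `blinded`; the `key` stays with the statistician until the locked analysis is documented.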

Protocol 2: Multiverse Analysis for Pipeline Robustness

- Define Analytical Choices: List all decision points in your pipeline (e.g., motion correction method, normalization template, smoothing kernel FWHM, global signal regression Y/N).

- Create Pipeline Specifications: Generate every plausible combination (the "multiverse") of these choices.

- Parallel Processing: Run the full analysis for each pipeline specification.

- Result Aggregation: Collate the key statistical result (e.g., effect size, p-value) from each pipeline.

- Visualization & Inference: Plot the distribution of results. Determine if the finding is consistent across the majority of justifiable pipelines or is dependent on a specific, arbitrary choice.
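Generating the pipeline specifications is a Cartesian product over the decision points. The sketch below shows the bookkeeping only; the option names in the usage note are illustrative placeholders, and running each specification is left to your own pipeline code.

```python
from itertools import product

def build_multiverse(choices):
    """Return one dict per pipeline specification (every combination of options).

    choices: dict mapping decision point -> list of justifiable options.
    """
    names = list(choices)
    return [dict(zip(names, combo)) for combo in product(*(choices[n] for n in names))]

def fraction_positive(effects):
    """Share of pipelines whose effect estimate is above zero."""
    return sum(e > 0 for e in effects) / len(effects)
```

For example, two motion-correction methods, two templates, three smoothing kernels and GSR on/off yield 2 × 2 × 3 × 2 = 24 specifications, and the number grows multiplicatively with each added decision point.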

Visualizations

Title: Multiverse Analysis Workflow for Robust Findings

Title: Bias Checkpoints & Mitigation in a Study Timeline

The Scientist's Toolkit: Research Reagent Solutions

| Item/Category | Function in Mitigating Analytical Bias |

|---|---|

| Pre-registration Platforms (OSF, ClinicalTrials.gov) | Creates a time-stamped, public record of hypotheses and methods to prevent HARKing (Hypothesizing After Results are Known) and p-hacking. |

| Containerized Pipelines (Docker, Singularity) | Encapsulates the exact software environment (versions, dependencies) to ensure computational reproducibility across labs and time. |

| Data & Code Repositories (GitHub, DataLad, BIDS) | Enables open sharing of raw data (where ethical) and analysis code, allowing direct replication and scrutiny of the analysis pipeline. |

| Blinding/Randomization Software (REDCap, Custom Scripts) | Facilitates proper allocation concealment and generation of blinding codes for unbiased data analysis. |

| Standardized Templates & Atlases (MNI152, AAL, Desikan-Killiany) | Provides consensus anatomical references for ROI definition and spatial normalization, reducing arbitrariness. |

| Harmonization Tools (ComBat, RAVEL) | Statistically removes scanner- and site-specific effects from multi-center data, mitigating measurement bias. |

| Multiple Comparison Correction Tools (FSL's Randomise, AFNI's 3dClustSim, Permutation Methods) | Implements robust statistical inference methods to control for false positives due to mass univariate testing. |

Troubleshooting Guides & FAQs

Q1: My neuroimaging group comparison shows a significant cluster, but a reviewer says it's likely a confound from age. How do I diagnose this?

A: A significant result driven by a confounding variable like age is a common pipeline bias. First, run these diagnostic steps:

- Table Data: Create a summary table of your groups (e.g., mean and standard deviation of age per group).

Protocol: If groups differ significantly on age (p<0.05), you must include age as a covariate in your general linear model (GLM). Re-run your analysis with the model `Brain_Signal ~ Group + Age`. Compare the results with your original model (`Brain_Signal ~ Group`). If the "significant" cluster disappears, it was likely confounded.

Visualization:

Diagram: Confounding Variable Path
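The two-model comparison can be sketched with ordinary least squares. This is a toy illustration for a single voxel or ROI value per subject, with a hypothetical helper name; a real analysis would use your GLM software and proper inference on the coefficients.

```python
import numpy as np

def fit_glm(signal, *regressors):
    """Least-squares fit of signal ~ intercept + regressors; returns coefficients."""
    X = np.column_stack([np.ones(len(signal)), *regressors])
    beta, *_ = np.linalg.lstsq(X, np.asarray(signal, dtype=float), rcond=None)
    return beta

# Toy data: the signal depends only on age, but the groups differ in age
age = np.array([20.0, 22.0, 24.0, 60.0, 62.0, 64.0])
group = np.array([0.0, 0.0, 0.0, 1.0, 1.0, 1.0])
signal = 0.1 * age

beta_group_only = fit_glm(signal, group)       # Brain_Signal ~ Group
beta_with_age = fit_glm(signal, group, age)    # Brain_Signal ~ Group + Age
```

In this toy case the group coefficient is large in the first model and vanishes once age is included, which is the pattern that indicates confounding.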

Q2: After extensive preprocessing and pipeline tuning, my model performs perfectly on my dataset but fails on a new one. Is this overfitting?

A: Yes, this is a classic sign of overfitting, where your pipeline has modeled noise or dataset-specific artifacts. The "Garden of Forking Paths" (unconsciously trying many pipeline choices) worsens this.

Protocol: Implement a strict hold-out validation.

- Split your data into Training (60%), Validation (20%), and Test (20%) sets at the very beginning. Lock the test set away.

- Use the training set for model development. Use the validation set to compare different pipeline choices (e.g., smoothing kernel size, denoising method).

- Select the single best pipeline based on validation performance.

- Only once, run your chosen pipeline on the untouched Test set for the final performance metric.
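The initial split can be sketched as below. It assumes the split is made at the subject level (so repeated scans of one person never straddle two sets) and uses a fixed seed so the partition can be documented; the function name is hypothetical.

```python
import numpy as np

def three_way_split(n_subjects, seed, fractions=(0.6, 0.2)):
    """Shuffle subject indices and split into train / validation / test.

    fractions: (train, validation) shares; the remainder becomes the test set.
    """
    rng = np.random.default_rng(seed)
    order = rng.permutation(n_subjects)
    n_train = int(round(fractions[0] * n_subjects))
    n_val = int(round(fractions[1] * n_subjects))
    train = order[:n_train]
    val = order[n_train:n_train + n_val]
    test = order[n_train + n_val:]
    return train, val, test
```

Saving the three index arrays to disk at the start of the project is one way to keep the test set locked away until the final evaluation.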

Visualization:

Diagram: Hold-Out Validation to Prevent Overfitting

Q3: How does the "Garden of Forking Paths" specifically introduce bias in neuroimaging?

A: It inflates false-positive rates by exploiting analytical flexibility without proper correction.

Protocol: To combat this, pre-register your analysis plan.

- Before data collection/analysis, document on a platform like OSF: your exact sample size, inclusion criteria, primary hypothesis, preprocessing steps (software, version, parameters), and statistical model (including covariates, thresholding method).

- Follow this plan exactly. Any exploratory analysis must be clearly labeled as such.

Visualization:

Diagram: Garden of Forking Paths Bias

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Neuroimaging Pipeline |

|---|---|

| fMRIPrep | A standardized, reproducible preprocessing tool for BOLD fMRI data. Reduces the "Garden of Forking Paths" by providing a robust default pipeline. |

| C-PAC / Nipype | Configurable pipelines for automating analysis workflows, ensuring consistency and documenting all steps. |

| TemplateFlow | A repository of standard neuroimaging templates (e.g., MNI152) at various spatial resolutions, crucial for unbiased spatial normalization. |

| Test-Retest Dataset (e.g., OASIS) | Publicly available datasets with repeated scans from the same individuals. Used to measure the reliability and overfitting tendency of your pipeline. |

| Covariate Databank | A structured file (e.g., .tsv) containing all potential confounds (age, sex, motion parameters, site/scanner ID) for rigorous statistical control. |

| Pre-registration Template (OSF) | A structured document framework to define analysis plans before data inspection, counteracting forking paths. |

Practical Strategies for Bias Detection and Correction in Your Pipeline

Technical Support Center

Troubleshooting Guides & FAQs

Q1: After running slice-timing correction, my fMRI time series shows severe ringing artifacts at tissue boundaries. What is the cause and solution?

A: This is often caused by incorrect slice order specification. Verify the acquisition sequence (e.g., interleaved, sequential ascending/descending) from your scanner's protocol. Re-run the correction with the correct SliceTiming parameter. For multi-band sequences, ensure the slice timing vector accounts for simultaneous multi-slice acquisition. The artifact arises because the algorithm incorrectly interpolates the temporal signal across slices.

Q2: My automated artifact detection (e.g., using ICA-AROMA or fMRIPrep) is flagging over 30% of my volumes as motion outliers. Should I exclude these participants?

A: Not necessarily. First, visualize the motion parameters (framewise displacement, DVARS) to confirm the detection. If motion is genuinely high, consider:

- Applying more aggressive motion regression (e.g., 24-parameter model + derivatives).

- Using scrubbing (removing high-motion volumes and interpolating).

- Do not exclude a participant solely based on a high percentage of flagged volumes unless the number of remaining contiguous volumes is insufficient for your model. Establish a pre-registered quality threshold (e.g., >5mm max displacement) for exclusion.

Q3: The cortical surface reconstruction from my T1w image in FreeSurfer failed at the pial stage. What are the common fixes?

A: This typically indicates poor white/gray matter contrast. Solutions include:

- Preprocessing: Run N4 bias field correction on the T1w image before reconstruction.

- Parameter Tuning: Adjust the -w-g.pct and -g. parameters to optimize the gray/white and gray/CSF intensity thresholds.

- Manual Intervention: Use FreeView to correct the white matter control points (wm.mgz) and re-run from the -autorecon2-wm stage.

- Alternative: Consider using a more robust, multimodal pipeline like SAMSEG (in FreeSurfer 7+), which is less sensitive to contrast issues.

Q4: My group analysis shows a strong bias at the brain edges, correlating with motion. How can I mitigate this in the preprocessing stage? A: This is a classic "spin history" effect and motion-induced bias. Enhance your workflow with:

- Integrated Component Correction: Use ICA-AROMA for aggressive noise removal over standard CompCor.

- Global Signal Regression (GSR) Consideration: While controversial, GSR can reduce motion-related spatial bias in certain cohort studies. Document its use transparently.

- Tissue-based Regression: Ensure your nuisance regressors include signals from CSF, white matter, and the whole brain.

- Post-hoc Correction: As a last resort, apply global signal regression or motion scrubbing at the group-level model.

Key Experimental Protocols

Protocol 1: Benchmarking Motion Correction Algorithms Objective: To quantify the residual motion artifact introduced by different realignment algorithms (FSL MCFLIRT vs. SPM12 vs. AFNI 3dVolreg). Methodology:

- Data Simulation: Use the Power et al. (2017) framework to simulate fMRI data with known ground-truth motion parameters (6 DOF) at varying noise levels (tSNR = 20, 30, 40).

- Processing: Apply each realignment algorithm to the same set of 50 simulated datasets.

- Metric Calculation: For each, compute: a) Alignment Error: Euclidean distance between estimated and true translation/rotation. b) Residual Ghosting: Correlation between motion parameters and edge voxel time series post-correction.

- Statistical Comparison: Perform a repeated-measures ANOVA on the alignment error across algorithms and noise levels.

Protocol 2: Validating Automated QC Metrics Against Manual Rating Objective: To establish the validity of automated QC metrics (e.g., from MRIQC) against expert manual ratings for identifying "usable" vs. "failed" structural scans. Methodology:

- Expert Rating: Three blinded raters classify 500 T1w scans from the ABIDE dataset as "Excellent", "Acceptable", or "Fail" based on visible artifacts (motion, ringing, inhomogeneity).

- Automated Metrics: Extract 15 MRIQC metrics (e.g., CNR, SNR, FWHM, artifact detection flags) for the same scans.

- Analysis: Train a logistic regression classifier (outcome: Expert Fail vs. Not-Fail) using the automated metrics. Use 10-fold cross-validation to assess classifier accuracy, sensitivity, and specificity.

- Threshold Determination: Derive optimal thresholds for key metrics (e.g., CNR < 1.2) that predict expert failure with >95% specificity.
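
The threshold determination step reduces to computing sensitivity and specificity for a candidate cut-off against the expert labels. A minimal sketch follows, assuming a metric where lower values indicate worse quality (as with CNR); the values and labels are made-up illustration data, not results from the protocol.

```python
def sensitivity_specificity(values, expert_fail, threshold):
    """Predict 'fail' when the metric is below threshold; compare with expert labels."""
    tp = fn = tn = fp = 0
    for value, failed in zip(values, expert_fail):
        predicted_fail = value < threshold
        if failed and predicted_fail:
            tp += 1
        elif failed:
            fn += 1
        elif predicted_fail:
            fp += 1
        else:
            tn += 1
    return tp / (tp + fn), tn / (tn + fp)


# Hypothetical CNR values and expert "Fail" labels for eight scans.
cnr = [0.9, 1.0, 1.1, 1.3, 1.4, 1.6, 1.8, 2.0]
expert_fail = [True, True, True, True, False, False, False, False]

sens, spec = sensitivity_specificity(cnr, expert_fail, threshold=1.2)
print(f"sensitivity={sens:.2f}, specificity={spec:.2f}")
```

Sweeping the threshold over a grid and keeping the cut-offs whose specificity exceeds 0.95 gives the candidate set from which the final threshold is chosen.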

Table 1: Performance Comparison of Motion Correction Algorithms (Simulated Data, tSNR=30)

| Algorithm | Mean Translation Error (mm) | Mean Rotation Error (deg) | Avg. Runtime (s) | Residual Ghosting (r) |

|---|---|---|---|---|

| FSL MCFLIRT (TR) | 0.12 ± 0.05 | 0.08 ± 0.03 | 45 | 0.15 ± 0.07 |

| SPM12 | 0.09 ± 0.04 | 0.06 ± 0.02 | 112 | 0.12 ± 0.05 |

| AFNI 3dVolreg | 0.11 ± 0.06 | 0.07 ± 0.04 | 38 | 0.18 ± 0.08 |

Table 2: Predictive Value of Automated QC Metrics for T1w Scan Failure

| MRIQC Metric | Optimal Threshold | Sensitivity | Specificity | AUC |

|---|---|---|---|---|

| Contrast-to-Noise Ratio (CNR) | < 1.15 | 0.88 | 0.96 | 0.94 |

| Foreground-Background SNR | < 8.5 | 0.92 | 0.82 | 0.89 |

| Entropy Focus Criterion | > 0.75 | 0.79 | 0.91 | 0.87 |

| White Matter Intensity Z-Score | > 2.3 | 0.85 | 0.93 | 0.91 |

Visualizations

Diagram 1: Neuroimaging Preprocessing QC Workflow

Diagram 2: Bias Propagation in a Pipeline

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Neuroimaging Preprocessing |

|---|---|

| MRIQC (v23.0.0) | Tool for extracting no-reference image quality metrics from T1w and BOLD data, enabling automated QC and dataset curation. |

| fMRIPrep (v23.1.4) | Robust, standardized preprocessing pipeline for fMRI data. It reduces analytical bias by providing consistent, state-of-the-art preprocessing across studies. |

| ICA-AROMA | Classifier for removing motion-related artifacts from fMRI data via ICA, superior to motion regression alone for reducing motion-induced bias. |

| SynthStrip | Deep-learning tool for robust, skull-stripping of any brain image without need for modality-specific tuning, improving reproducibility. |

| BIDS Validator | Ensures dataset compliance with the Brain Imaging Data Structure, a critical step for reproducible and bias-aware workflow management. |

| Nilearn | Python library for statistical learning on neuroimaging data; includes tools for decoding, connectivity, and confound regression to mitigate noise bias. |

| MRIcroGL | Lightweight viewer for quick visual QC of 3D/4D NIFTI images, essential for spotting artifacts automated tools may miss. |

Troubleshooting Guides & FAQs

General Theory & Application

Q1: What is the core principle behind ComBat harmonization? A1: ComBat uses an empirical Bayes framework to estimate and remove additive (location) and multiplicative (scale) site/scanner effects from your neuroimaging data (e.g., volumetric, diffusion, or functional MRI metrics). It assumes the unwanted variance follows a known parametric form and "shrinks" parameter estimates toward the overall mean, stabilizing adjustments even for sites with small sample sizes.

Q2: When should I not use ComBat (or similar) in my pipeline? A2: Avoid using ComBat if:

- Your biological effect of interest (e.g., disease group difference) is perfectly confounded with site/scanner.

- You lack a balanced design across sites (though newer methods like CovBat can help).

- Your data contains significant non-linear scanner effects or interactions between site and biological variables. Diagnostic plots (see Q4) are essential to check assumptions.

Q3: How does ComBat relate to the broader thesis on analytical bias in neuroimaging pipelines? A3: Scanner and site effects are a major source of technical bias, increasing variance and the risk of both false positives and false negatives. By integrating ComBat as a harmonization module within a pipeline, we systematically mitigate this bias, improving the reliability and reproducibility of downstream statistical analyses—a core goal of bias-aware pipeline design.

Practical Implementation & Troubleshooting

Q4: My ComBat-harmonized data still shows site-specific clustering in PCA plots. What went wrong? A4: This indicates residual site effects. Follow this troubleshooting protocol:

- Check Model Specification: Ensure your model matrix (mod) correctly includes all biological covariates of interest (age, sex, diagnosis). The site variable should not be in this model.

- Inspect Batch-Scale Interaction: Use plot functions from the sva or neuroCombat package to visualize the estimated batch effects. Look for pronounced differences in both mean (additive) and variance (multiplicative).

- Consider Non-Linear Effects: Standard ComBat adjusts for linear batch effects. For non-linear differences, explore:

- NeuroHarmonize: Uses generalized additive models (GAMs) for non-linear harmonization.

- Longitudinal Data: Use longCombat or LONGITUDINAL_COMBAT if you have repeated measures.

- Validate: Apply the harmonization parameters from your training set to a held-out validation set or phantom data, if available.

Q5: I'm losing statistical significance for my clinical variable after applying ComBat. Is this normal? A5: Yes, this can be expected and is often correct. ComBat removes variance attributed to site, which may have been artificially inflating or correlating with your clinical variable. The resulting p-values are typically more conservative and reliable. You should verify that the effect direction and size remain plausible.

Q6: How do I choose between ComBat, ComBat-GAM, and other methods like CovBat? A6: The choice depends on your data structure:

| Method | Key Feature | Best For | Consideration |

|---|---|---|---|

| Standard ComBat | Linear adjustment for mean/variance. | Well-designed multi-site studies, linear effects. | Assumes site effects do not interact with covariates. |

| ComBat-GAM (NeuroHarmonize) | Models non-linear site effects using smoothing splines. | Data where site effects vary non-linearly with a continuous covariate (e.g., age). | Computationally more intensive; risk of overfitting. |

| CovBat | Extends ComBat to also harmonize covariance structure (covariance pooling). | When inter-variable relationships (e.g., cortical thickness correlations) differ by site. | Preserves biological covariance while removing site-related covariance. |

| LongCombat | Designed for longitudinal/repeated measures data. | Studies with multiple scans per subject over time. | Accounts for within-subject correlation. |

Experimental Protocol: Implementing ComBat Harmonization

Protocol: Harmonizing Cortical Thickness Data from a Multi-Site Alzheimer's Study

1. Data Preparation:

- Input: Regional cortical thickness values (e.g., from FreeSurfer) for all subjects in a .csv file. Columns: SubjectID, Site (batch variable), Diagnosis, Age, Sex, Thickness_Region1, ..., Thickness_RegionN.

- Quality Control: Exclude subjects based on pre-defined MRI QC metrics before harmonization.

2. Software Setup:

- Tool: R Statistical Environment (v4.2+).

- Package: Install neuroCombat (install.packages("neuroCombat")) or sva.

3. Running ComBat:
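
As a rough illustration of what this step does conceptually, the sketch below applies a per-site location-and-scale adjustment to a single feature in plain Python. It is a deliberate simplification and rests on several assumptions: it omits ComBat's empirical Bayes shrinkage, it does not protect biological covariates (age, sex, diagnosis), and the function name and toy values are hypothetical. It is not the neuroCombat or sva interface and should not be used in place of them.

```python
from statistics import mean, pstdev


def location_scale_adjust(values, sites):
    """Align each site's mean and SD to the grand mean and pooled within-site SD.

    Simplified illustration only: no empirical Bayes shrinkage and no covariate
    preservation. Assumes every site has at least two distinct values.
    """
    by_site = {}
    for value, site in zip(values, sites):
        by_site.setdefault(site, []).append(value)

    grand_mean = mean(values)
    within_ss = sum((v - mean(vs)) ** 2 for vs in by_site.values() for v in vs)
    pooled_sd = (within_ss / len(values)) ** 0.5
    site_stats = {s: (mean(vs), pstdev(vs)) for s, vs in by_site.items()}

    adjusted = []
    for value, site in zip(values, sites):
        site_mean, site_sd = site_stats[site]
        # Remove the additive (mean) and multiplicative (scale) site effect.
        adjusted.append(grand_mean + pooled_sd * (value - site_mean) / site_sd)
    return adjusted


# Hypothetical thickness values (mm) for one region from two sites.
thickness = [2.0, 2.2, 2.4, 3.0, 3.4, 3.8]
sites = ["A", "A", "A", "B", "B", "B"]
adjusted = location_scale_adjust(thickness, sites)
print([round(v, 3) for v in adjusted])
```

Because this naive version forces every site to the same mean, it would also erase a real biological difference if diagnosis were unevenly distributed across sites, which is why the full method fits the covariates first.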

4. Post-Harmonization Validation:

- Perform PCA on the pre- and post-harmonized data.

- Color points by Site. Successful harmonization should show reduced site-based clustering.

- Re-run primary statistical analysis (e.g., ANCOVA for group differences) on the harmonized data.

Visualizations

ComBat Harmonization Workflow for Neuroimaging Data

Decision Pipeline for Site Effect Correction

The Scientist's Toolkit: Research Reagent Solutions

| Tool / Resource | Function / Purpose | Example/Note |

|---|---|---|

| R neuroCombat / sva Package | Implements the standard ComBat algorithm for neuroimaging or genomic data. | Core tool for linear harmonization. |

| neuroHarmonize (Python/R) | Implements ComBat-GAM for handling non-linear site effects with continuous covariates. | Essential when site effects vary with age. |

| CovBat Package | Harmonizes both means and covariance structure across sites. | Use when inter-regional relationships are of interest. |

| Traveling Phantom | A physical phantom scanned across all sites to quantify scanner-specific bias. | Gold standard for pre-study calibration. |

| Standardized MRI Protocol | A detailed acquisition protocol (sequence parameters) mandated across all sites. | First line of defense to minimize variability. |

| Quality Assessment (QA) Tools | Software to quantify image quality metrics (SNR, artifacts) per scan/site. | e.g., MRIQC, fMRIPrep. Critical for pre-harmonization QC. |

| Interactive Diagnostic Plots | PCA & distribution plots pre-/post-harmonization to visually assess efficacy. | Built into neuroCombat; use ggplot2 for customization. |

Troubleshooting Guides & FAQs

Q1: During framewise displacement (FD) calculation, I am getting inconsistent values when comparing different software tools (e.g., FSL's fsl_motion_outliers vs. SPM's realignment parameters). What is the cause and how can I ensure consistency?

A: Inconsistencies arise from differences in the underlying mathematical models and reference points (e.g., center of mass vs. rigid body transformation). To ensure consistency:

- Standardize Your Input: Always use the same source of motion parameters (e.g., the .par file from MCFLIRT or rp_*.txt from SPM). The two files order their columns differently, so confirm which columns hold rotations and which hold translations.

- Adopt a Standard Formula: Choose one FD definition and apply it to every subject. The formula below is the sum-of-absolute-differences (Power-style) FD. The Jenkinson FD (the relative RMS displacement reported by MCFLIRT) is an RMS-based measure, so values from the two definitions are not interchangeable:

FD_t = |Δx_t| + |Δy_t| + |Δz_t| + 50 * (|Δα_t| + |Δβ_t| + |Δγ_t|) (where translations Δx, Δy, Δz are in mm, rotations Δα, Δβ, Δγ are in radians, and a 50mm radius is assumed to convert rotational displacement to mm).

- Protocol: Recalculate FD for all subjects using a single, custom script in Python or MATLAB to eliminate tool-based variability, then apply your chosen threshold uniformly.
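
A single custom script for FD can be very small. The sketch below computes the sum-of-absolute-differences FD with rotations (in radians) converted to millimetres on a 50 mm sphere. The function name and the `rotations_first` switch are assumptions introduced here: the default follows the MCFLIRT .par layout (three rotations, then three translations), and `rotations_first=False` covers files that list translations first, as SPM's rp_*.txt does.

```python
def framewise_displacement(params, radius_mm=50.0, rotations_first=True):
    """Sum-of-absolute-differences FD for a list of 6-parameter rows (one per volume).

    Rotations are assumed to be in radians and translations in mm.
    The first volume has no predecessor, so its FD is set to 0.
    """
    fd = [0.0]
    for prev, cur in zip(params, params[1:]):
        delta = [abs(c - p) for p, c in zip(prev, cur)]
        if rotations_first:
            rot, trans = delta[:3], delta[3:]
        else:
            trans, rot = delta[:3], delta[3:]
        # Rotational displacement is converted to mm as arc length on the sphere.
        fd.append(sum(trans) + radius_mm * sum(rot))
    return fd


# Hypothetical motion parameters: (rot_x, rot_y, rot_z, trans_x, trans_y, trans_z)
motion = [
    (0.000, 0.0, 0.0, 0.0, 0.0, 0.0),
    (0.001, 0.0, 0.0, 0.1, 0.0, 0.0),
    (0.001, 0.0, 0.0, 0.1, 0.2, 0.0),
]
fd = framewise_displacement(motion)
print([round(v, 3) for v in fd])
```

Running every subject through one function like this, regardless of which package produced the realignment parameters, removes the tool-dependent differences described above.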

Q2: After applying framewise exclusion (scrubbing), my dataset becomes temporally discontinuous, causing errors in downstream time-series analysis (e.g., spectral density estimation). What advanced correction models can I use?

A: Scrubbing introduces bias in temporal autocorrelation. Implement these advanced models in sequence:

| Model | Primary Function | Key Parameter | Effect on Bias |

|---|---|---|---|

| Motion Parameter Regression | Nuisance covariate removal | 6/24/36 parameters | Reduces motion-related signal variance. |

| ICA-AROMA | Automatic component classification | --nonaggr mode | Identifies and removes motion-related ICA components. |

| Spike Regression | Models each flagged volume with its own regressor instead of deleting it | Dummy coded regressors | Mitigates discontinuity from scrubbing. |

| Bias Field Correction | Accounts for spin-history effects | Pre-process with ANTs N4BiasFieldCorrection | Reduces spatially varying intensity artifacts from motion. |

Experimental Protocol for Integrated Correction:

- Preprocessing: Perform slice-timing correction and spatial realignment.

- FD & DVARS Calculation: Compute framewise displacement (FD) and standardized DVARS.

- Scrubbing: Flag volumes where FD > 0.5mm and DVARS > 1.5. Remove these volumes and 1 preceding and 2 following volumes.

- Nuisance Regression: Regress out 24 motion parameters (6 rigid-body + their derivatives + squares), mean CSF/white matter signal, and spike regressors for scrubbed volumes.

- ICA-AROMA: Run on the residually cleaned data in non-aggressive mode.

- Temporal Filtering: Apply bandpass filter (e.g., 0.008-0.09 Hz) after cleaning to avoid re-introducing bias.
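
The scrubbing rule in this protocol (flag a volume when both thresholds are exceeded, then also drop 1 preceding and 2 following volumes) and the matching spike regressors can be sketched as follows. The function names and the toy FD and DVARS traces are hypothetical; the thresholds are the ones stated in the protocol.

```python
def scrub_mask(fd, dvars, fd_thresh=0.5, dvars_thresh=1.5, n_before=1, n_after=2):
    """Return a keep-mask: False for flagged volumes and their neighbours."""
    n = len(fd)
    keep = [True] * n
    for t in range(n):
        if fd[t] > fd_thresh and dvars[t] > dvars_thresh:
            for k in range(max(0, t - n_before), min(n, t + n_after + 1)):
                keep[k] = False
    return keep


def spike_regressors(keep):
    """One indicator column per removed volume (1 at that volume, 0 elsewhere)."""
    n = len(keep)
    return [[1 if i == t else 0 for i in range(n)] for t in range(n) if not keep[t]]


# Hypothetical traces with one motion spike at volume index 3.
fd = [0.1, 0.1, 0.1, 0.8, 0.1, 0.1, 0.1, 0.1]
dvars = [1.0, 1.0, 1.0, 2.0, 1.0, 1.0, 1.0, 1.0]

keep = scrub_mask(fd, dvars)
spikes = spike_regressors(keep)
print(keep, len(spikes))
```

Counting the `True` entries in the mask, and the length of the longest unbroken run of them, gives the retained-volume figures needed for a pre-registered exclusion rule.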

Q3: How do I quantitatively validate that my motion correction pipeline has successfully mitigated bias without removing true neural signal?

A: Implement the following quality control (QC) experiments and summarize the metrics:

| QC Metric | Calculation Method | Target Value | Indicates Successful Mitigation of... |

|---|---|---|---|

| Mean Frame-to-Frame FD | Average FD across all retained volumes | < 0.2mm | Gross motion contamination. |

| QC-FC Correlation | Correlation between subject mean FD and functional connectivity matrices | Near zero | Systematic motion bias. |

| Distance-Dependent Effects | Plot correlation strength vs. physical distance between ROI pairs | Flat profile | Spurious distance-dependent correlations. |

| tSNR (temporal SNR) | Mean signal / Std. Dev. of signal over time, per voxel | Increased post-correction | Improved signal fidelity. |

Validation Protocol:

- Generate Null Data: Create a dataset with no true connectivity (e.g., from resting-state models or phase-scrambled data).

- Introduce Synthetic Motion: Artificially add motion artifacts derived from real motion parameters.

- Process: Run your experimental and control pipelines (basic vs. advanced correction).

- Measure: Calculate the QC-FC correlation. A successful pipeline will yield a QC-FC correlation near zero for the null data, demonstrating removal of motion-induced correlations without neural signal.
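
The QC-FC measure in the final step is a Pearson correlation, computed across subjects, between mean FD and the strength of each connectivity edge. A minimal sketch, with hypothetical edge names and made-up values:

```python
from statistics import mean


def pearson(x, y):
    """Pearson correlation between two equal-length sequences."""
    mx, my = mean(x), mean(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / (sxx * syy) ** 0.5


def qc_fc(mean_fd, edges):
    """Per-edge correlation between subject motion and connectivity strength."""
    return {name: pearson(mean_fd, values) for name, values in edges.items()}


# Hypothetical data for four subjects and two ROI-pair edges.
mean_fd = [0.1, 0.2, 0.3, 0.4]
edges = {
    "ROI1-ROI2": [0.2, 0.4, 0.6, 0.8],   # connectivity tracks motion
    "ROI1-ROI3": [0.5, 0.3, 0.3, 0.5],   # connectivity unrelated to motion
}
result = qc_fc(mean_fd, edges)
print({name: round(r, 3) for name, r in result.items()})
```

Summaries such as the median absolute QC-FC value across all edges are a convenient way to compare the basic and advanced pipelines on the same null dataset.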

The Scientist's Toolkit: Research Reagent Solutions

| Item / Solution | Function in Motion Bias Research |

|---|---|

| fMRIPrep | Standardized, containerized preprocessing pipeline that ensures reproducible calculation of motion parameters and consistent initial data quality. |

| ICA-AROMA (Implemented in FSL/Python) | Classifies and removes motion-related independent components from fMRI data, offering an advanced model-based cleanup. |

| CONN Toolbox | Provides integrated modules for calculating QC-FC metrics and visualizing distance-dependent effects, crucial for validation. |

| Nilearn (Python) | Enables scripting of custom scrubbing, nuisance regression, and statistical validation steps for flexible pipeline development. |

| ANTs | Provides advanced bias field correction (N4BiasFieldCorrection) to address spin-history effects, a key source of motion-related intensity bias. |

Workflow & Relationship Diagrams

Technical Support Center: Troubleshooting Confound Regression in Neuroimaging Pipelines

This support center addresses common issues encountered when implementing confound regression to mitigate analytical bias in neuroimaging pipelines for clinical and drug development research.

Frequently Asked Questions (FAQs) & Troubleshooting

Q1: After regressing out global signal, my region-of-interest (ROI) correlations have become strongly negative. Is this a real finding or an artifact? A: This is a known mathematical artifact of global signal regression (GSR). GSR can introduce negative correlations by shifting the distribution of correlation coefficients. It is often not recommended for functional connectivity studies unless specifically justified (e.g., for reducing motion artifacts in certain populations).

- Troubleshooting Protocol: 1) Re-run your connectivity analysis pipeline without GSR. 2) Compare the correlation matrices visually and quantify the difference. 3) Consider alternative or additional nuisance regressors, such as:

- Anatomical CompCor (aCompCor) to model noise from white matter and cerebrospinal fluid.

- More rigorous motion parameters (24-parameter model: 6 rigid-body, their derivatives, and squares of all 12).

- Physiological recordings (RETROICOR, respiration volume per time).
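
The 24-parameter motion model mentioned above can be built directly from the 6 realignment parameters. The sketch below follows the definition given in the list (6 rigid-body parameters, their temporal derivatives, and the squares of all 12); the function name and toy values are hypothetical, and the derivative of the first volume is set to zero by assumption.

```python
def expand_motion_24(params):
    """Expand 6 motion parameters per volume into the 24-parameter set.

    Column layout per volume: 6 original, 6 backward differences,
    then the squares of those 12.
    """
    expanded = []
    prev = params[0]                      # first-volume derivative becomes 0
    for cur in params:
        deriv = [c - p for c, p in zip(cur, prev)]
        twelve = list(cur) + deriv
        expanded.append(twelve + [v * v for v in twelve])
        prev = cur
    return expanded


# Hypothetical two-volume example with motion on the first parameter only.
motion = [
    [1.0, 0.0, 0.0, 0.0, 0.0, 0.0],
    [3.0, 0.0, 0.0, 0.0, 0.0, 0.0],
]
regressors = expand_motion_24(motion)
print(len(regressors[0]), regressors[1][0], regressors[1][6], regressors[1][12], regressors[1][18])
```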

Q2: My data includes both physiological (heart rate, respiration) and scanner-related (motion, coil) nuisance variables. What is the optimal order of operations for confound regression? A: The order is critical. The standard best-practice workflow is to handle physiological noise correction before applying other nuisance regressions in the general linear model (GLM).

- Troubleshooting Protocol: Follow this sequence:

- Slice-time correction.

- Realignment (motion correction).

- Physiological Noise Correction (e.g., using RETROICOR or PhLEM toolboxes on physiological recordings).

- Spatial Normalization to standard space.

- Spatial Smoothing.

- GLM-based Nuisance Regression at the voxel-wise level, including: motion parameters (from step 2), white matter/CSF signals (or aCompCor components), and any remaining trends (e.g., linear, quadratic).

Q3: How do I decide which aCompCor components to include as regressors? A: Selection is based on the variance explained by noise components. The standard method uses a pre-defined number (e.g., 5) of principal components (PCs) from white matter and CSF masks. A data-driven alternative is Horn's parallel analysis, which retains only components whose eigenvalues exceed those expected from random data.

- Troubleshooting Protocol (Horn's Method):

- Extract time series from noise ROIs (WM & CSF).

- Perform PCA on the concatenated noise ROI time series.

- Create 1000 random datasets with the same dimensions and calculate their eigenvalues.

- For each real PC, compare its eigenvalue to the 95th percentile of the corresponding random eigenvalues.

- Retain any real PC whose eigenvalue exceeds the random criterion. This identifies components representing noise above chance level.

Q4: When performing confound regression for a drug challenge fMRI study, how should I handle the baseline and post-administration periods differently? A: Nuisance profiles (especially physiological ones) can change post-administration. A single regression model across the entire session may be insufficient.

- Troubleshooting Protocol: Implement a flexible GLM approach:

- Model baseline and post-drug periods as separate sessions or conditions within your GLM.

- Include session-specific nuisance regressors. This allows the model to account for different noise variances in each period.

- For physiological regressors (e.g., heart rate), consider convolving them with a hemodynamic response function (HRF) if they are being used to model direct blood-oxygen-level dependent (BOLD) signal influences.

Table 1: Comparison of Common Nuisance Regression Strategies on Functional Connectivity Data

| Regression Strategy | Key Regressors Included | Typical % BOLD Variance Removed | Pros | Cons |

|---|---|---|---|---|

| Minimal | 6 Motion Parameters, WM, CSF | 20-40% | Maximizes retained biological signal. | Often leaves substantial motion artifact. |

| Extended Motion | 24 Motion Parameters, WM, CSF | 30-50% | Effective for high-motion datasets (e.g., clinical populations). | May overfit and remove neural signal in low-motion data. |

| aCompCor | 5 WM PCs, 5 CSF PCs | 40-60% | Data-driven, avoids tissue segmentation errors. | Can be computationally intensive; component number requires selection. |

| Global Signal Regression (GSR) | Global Signal, 24 Motion | 50-80% | Dramatically reduces motion artifacts & positive network structure. | Introduces negative correlations; biological interpretation is controversial. |

Experimental Protocol: Evaluating Confound Regression Efficacy

Protocol Title: Systematic Evaluation of Nuisance Regression in a Resting-State fMRI Pipeline.

Objective: To quantify the impact of different confound regression strategies on functional connectivity metrics and data quality.

Methodology:

- Data Acquisition: Acquire resting-state fMRI data (e.g., 10-min eyes-open) from a sample cohort (e.g., N=50). Include simultaneous physiological monitoring (pulse oximetry, respiration belt).

- Preprocessing (Common Steps): Perform standard steps: slice-time correction, motion realignment, normalization to MNI space, and smoothing (e.g., 6mm FWHM).

- Experimental Conditions: Process the same dataset through four parallel pipelines differing only in the nuisance regression stage:

- Pipeline A (Minimal): Regress out 6 motion parameters, mean WM signal, mean CSF signal.

- Pipeline B (Extended): Regress out 24 motion parameters, mean WM/CSF.

- Pipeline C (aCompCor): Regress out top 5 PCA components from WM and CSF masks (10 total).

- Pipeline D (GSR): Regress out global signal + 24 motion parameters.

- Quality Metrics Calculation: For each pipeline output, calculate:

- Mean Framewise Displacement (FD): Subject-level summary of head motion, used as the motion covariate when relating motion to post-regression QC metrics.

- DVARS (D: temporal derivative of the time courses; VARS: RMS variance over voxels): Measure of residual frame-to-frame signal change.

- QC-FC Correlation: Correlation, across subjects, between subject-wise motion (mean FD) and subject-wise functional connectivity matrices.

- Outcome Analysis: Compute group-level functional connectivity matrices (e.g., for a standard brain atlas). Compare networks (e.g., Default Mode Network strength) and inter-subject variability across pipelines. The optimal pipeline minimizes QC-FC correlation while preserving expected biological network structure.

Visualizations

Diagram 1: Confound Regression Decision Workflow

Diagram 2: GLM Structure for Nuisance Regression

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Tools & Resources for Confound Regression

| Item / Software | Category | Primary Function |

|---|---|---|

| fMRIPrep | Pipeline Software | Robust, containerized preprocessing pipeline that automatically generates best-practice confound regressors (aCompCor, motion parameters). |

| CONN Toolbox | MATLAB Toolbox | Implements comprehensive denoising pipelines (e.g., scrubbing, regression) and includes ROI-to-ROI & ICA connectivity analysis. |

| PhysIO Toolbox | MATLAB Toolbox | Models physiological noise (cardiac, respiratory) for integration into SPM-based GLM as nuisance regressors. |

| RETROICOR Algorithm | Algorithm | Creates phase-based regressors from cardiac and respiratory recordings to remove scanner-periodic physiological noise. |

| AFNI (3dTproject) | Software Suite | Provides a direct command (3dTproject) for projecting out nuisance time series from fMRI data with flexible options. |

| FSL (FEAT) | Software Suite | Its FEAT GUI and MELODIC ICA allow for integrated regression of motion, tissue, and identified noise components. |

| Horn's Parallel Analysis Code | Custom Script | Data-driven method (often need custom MATLAB/Python script) to determine the optimal number of aCompCor components to retain. |

Troubleshooting Guides & FAQs

fMRIPrep

Q1: fMRIPrep fails with "No T1w images found" error despite correct file structure. What should I check?

A: This error commonly stems from BIDS validation issues. First, run the BIDS Validator (bids-validator /path/to/your/data) to ensure compliance. The most frequent causes are:

- Incorrect naming of files or subdirectories not adhering to the BIDS specification.

- Missing mandatory JSON sidecar files for the T1w images.

- The participants.tsv file is missing or malformed. Ensure it includes all participant IDs and correct session labels if applicable.

Q2: My pipeline run is consuming excessive memory (>16GB) and fails. How can I optimize resource usage? A: fMRIPrep's memory footprint scales with image resolution and number of threads. Implement these strategies:

- Use the --mem and --nthreads flags to limit resources (e.g., --mem 12 --nthreads 6).

- Enable the --use-syn-sdc flag for susceptibility distortion correction, which is less memory-intensive than topup when only one phase encoding direction is available.

- Consider running on a subset of data first to gauge resource needs.

Q3: How do I handle datasets with multiple sessions or longitudinal data?

A: fMRIPrep fully supports longitudinal processing, which is crucial for minimizing bias in drug development studies. Use the --longitudinal flag. This instructs the pipeline to create an unbiased within-subject template (MIDAS) from all time points, to which individual time points are registered. This reduces intra-subject alignment variability, a potential source of analytical bias.

QSIPrep

Q4: QSIPrep hangs during the "Reconstructing diffusion data" phase. What could be the cause?

A: This is often related to insufficiently large memory for the mrgrid step when upsampling data. Solutions:

- Increase the available memory per core, or reduce the number of threads with --nthreads.

- Check if the --output-resolution is set unnecessarily high. A value of 1.5-2.0mm is often sufficient.

- Ensure you are using the latest version of QSIPrep, as performance improvements are regularly made.

Q5: How does QSIPrep address the bias from varying gradient tables or b-values across study sites?

A: QSIPrep integrates tortoise for B-table normalization and synthesis. If your multi-site study has inconsistent diffusion encoding schemes, you can use the --b0-threshold and --unringing-method parameters to harmonize the preprocessing. For explicit synthesis to a common scheme, you must prepare a target gradient table file. This step is critical for mitigating scanner- and protocol-induced bias in pooled analyses.

Q6: The output "HiQQ" images from QSIPrep show poor registration. How can I improve this? A: Poor HiQQ (a summary of the registration of diffusion data to the T1w image) indicates a T1w-to-diffusion registration problem.

- Ensure the T1w image is of good quality and has been properly preprocessed by fMRIPrep.

- Check if the --skull-strip-template choice (e.g., OASIS) is appropriate for your population (e.g., pediatric data may require a different template).

- Consider using the --intramodal-template-transform flag for datasets with very high-resolution structural images.

MRIQC

Q7: MRIQC's Image Quality Metrics (IQMs) for my cohort show high variance. How do I determine if it's biological or technical bias? A: Use MRIQC's group reports and the provided tabular data (IQMs) to perform covariate analysis.

- Run MRIQC on all subjects.

- Export the *_T1w.tsv or *_bold.tsv summary files.

- Statistically model key IQMs (like cjv for T1w, efc for BOLD) against variables of interest (e.g., age, sex) and potential bias factors (e.g., site, scanner_model, total_readout_time from the JSON sidecar).

- A significant association with technical factors indicates a source of bias that must be regressed out in subsequent analyses to avoid confounded results.
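
For a categorical technical factor such as site, the simplest form of the modeling step above is a one-way ANOVA of the IQM across sites. The sketch below computes the F statistic in plain Python under that assumption; the function name, site labels, and cjv values are hypothetical, and a real analysis would also include the biological covariates and convert F to a p-value with an F distribution from a statistics library.

```python
from statistics import mean


def one_way_f(groups):
    """One-way ANOVA F statistic for a dict mapping group label -> list of values."""
    all_values = [v for values in groups.values() for v in values]
    grand_mean = mean(all_values)
    ss_between = sum(len(vs) * (mean(vs) - grand_mean) ** 2 for vs in groups.values())
    ss_within = sum((v - mean(vs)) ** 2 for vs in groups.values() for v in vs)
    df_between = len(groups) - 1
    df_within = len(all_values) - len(groups)
    return (ss_between / df_between) / (ss_within / df_within)


# Hypothetical cjv values read from the group T1w table, split by site.
cjv_by_site = {
    "siteA": [0.40, 0.42, 0.44],
    "siteB": [0.50, 0.52, 0.54],
}
f_stat = one_way_f(cjv_by_site)
print(f"F = {f_stat:.1f}")
```

A large F for site, relative to the F obtained for age or sex, points to a technical rather than biological source of the variance.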

Q8: Can I use MRIQC to automatically exclude poor-quality data points from my analysis pipeline? A: MRIQC does not auto-exclude; it provides quantitative metrics for informed decision-making. Best practice is to:

- Use the interactive HTML reports to visually inspect outliers.

- Define quality thresholds based on your specific data and research question (e.g., "exclude subjects with snr_total below X").

- Document all exclusions transparently. Automated exclusion based on hard-coded thresholds can introduce its own form of bias and should be avoided unless thoroughly justified.

Key Experimental Protocols & Methodologies

Protocol 1: Multi-Site Harmonization Pipeline for Clinical Trials

Objective: Minimize site-related bias in a multi-center neuroimaging clinical trial.

- Data Organization: Convert all site data to BIDS format using dcm2bids.

- Quality Check I: Run MRIQC on all raw datasets. Generate site-wise reports to identify gross outliers or protocol deviations.

- Anatomical Processing: Process all T1w images through FMRIPREP with the --longitudinal flag (if applicable) and a consistent template space (e.g., MNI152NLin2009cAsym).

- Diffusion Processing: Process all diffusion data through QSIPrep using a common output resolution and a synthesized, uniform gradient table.

- Quality Check II: Run MRIQC on the preprocessed data. Quantify and compare IQM distributions (e.g., CNR, SNR) across sites using ANOVA.

- Bias Regression: In the statistical model of your hypothesis test, include the significant technical covariates (e.g., site, average SNR) identified in Step 5 (Quality Check II) as nuisance regressors.
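The cross-site comparison in Quality Check II can be illustrated with a one-way ANOVA F statistic. This is a minimal pure-Python sketch with hypothetical SNR values and a hypothetical helper name, `one_way_anova_f`; it returns only the F statistic and its degrees of freedom, so a p-value still has to come from an F distribution (for instance via `scipy.stats.f_oneway`, if SciPy is available).

```python
def one_way_anova_f(groups):
    """F statistic and degrees of freedom for a dict mapping site -> list of IQM values."""
    all_values = [v for values in groups.values() for v in values]
    grand_mean = sum(all_values) / len(all_values)
    ss_between = 0.0
    ss_within = 0.0
    for values in groups.values():
        site_mean = sum(values) / len(values)
        # Between-site spread, weighted by the number of subjects at the site.
        ss_between += len(values) * (site_mean - grand_mean) ** 2
        # Within-site spread around the site's own mean.
        ss_within += sum((v - site_mean) ** 2 for v in values)
    df_between = len(groups) - 1
    df_within = len(all_values) - len(groups)
    f_stat = (ss_between / df_between) / (ss_within / df_within)
    return f_stat, df_between, df_within

# Toy SNR values (hypothetical): site_c is clearly shifted relative to the others.
snr_by_site = {
    "site_a": [10.0, 11.0, 12.0],
    "site_b": [10.0, 11.0, 12.0],
    "site_c": [20.0, 21.0, 22.0],
}
f_stat, df_between, df_within = one_way_anova_f(snr_by_site)
print(f"F({df_between}, {df_within}) = {f_stat:.1f}")
```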

Protocol 2: Evaluating Pipeline-Induced Analytical Bias

Objective: Quantify the impact of different preprocessing tool choices on downstream analysis results.

- Sample Dataset: Select a well-characterized, public dataset (e.g., from ABIDE or HCP).

- Pipeline Variants: Preprocess the same dataset with different pipeline configurations (e.g., FMRIPREP vs. a different ANTs-based pipeline; QSIPrep with topup vs. synb0).

- Consistent Downstream Analysis: Feed all preprocessed variants into the identical downstream analysis (e.g., identical fMRI GLM or diffusion tractography).

- Result Comparison: Calculate the intra-class correlation (ICC) or Dice similarity coefficient between key results (e.g., statistical maps, tract profiles) derived from the different preprocessing paths. Low agreement indicates high pipeline-induced bias.
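As a sketch of the result comparison step, the Dice similarity coefficient between two thresholded maps can be computed as below. The maps are assumed to be flattened binary masks on the same voxel grid; the function name `dice` and the toy masks are illustrative only.

```python
def dice(mask_a, mask_b):
    """Dice similarity coefficient between two binary masks of equal length."""
    if len(mask_a) != len(mask_b):
        raise ValueError("masks must be defined on the same voxel grid")
    voxels_a = {i for i, v in enumerate(mask_a) if v}
    voxels_b = {i for i, v in enumerate(mask_b) if v}
    if not voxels_a and not voxels_b:
        return 1.0  # Two empty masks agree perfectly by convention.
    overlap = len(voxels_a & voxels_b)
    return 2 * overlap / (len(voxels_a) + len(voxels_b))

# Toy suprathreshold masks (hypothetical) from two preprocessing variants.
variant_1 = [0, 1, 1, 1, 0, 0]
variant_2 = [0, 0, 1, 1, 1, 0]
score = dice(variant_1, variant_2)
print(f"Dice = {score:.2f}")
```

Dice depends on the threshold used to binarize the maps, so reporting it at more than one threshold (or alongside an ICC on the unthresholded values) gives a fuller picture of agreement.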

Table 1: Common Image Quality Metrics (IQMs) from MRIQC and Their Interpretation for Bias Detection

| Metric (Acronym) | Modality | Description | High Value May Indicate... | Potential Source of Bias |
|---|---|---|---|---|
| Contrast-to-Noise Ratio (CNR) | T1w | Tissue contrast relative to noise. | Good image quality. | Scanner calibration, sequence parameters. |
| Coefficient of Joint Variation (CJV) | T1w | Intensity homogeneity between GM and WM. | Poor tissue segmentation, field inhomogeneity. | Scanner drift, poor shimming. |
| Entropy Focus Criterion (EFC) | BOLD | How well the image is focused. | Excessive residual motion, ghosting. | Subject movement, system instability. |
| Signal-to-Noise Ratio (SNR) | Both | Mean signal relative to background noise. | Good signal strength. | Coil type, voxel size, scanning time. |
| Framewise Displacement (FD) | BOLD | Volume-to-volume head motion. | Excessive subject movement. | Participant cohort (e.g., patient vs. control), study design. |

Table 2: Recommended Computational Resources for Efficient Processing

| Tool | Recommended Minimum RAM | Recommended Cores | Estimated Time per Subject (Typical) | Key Resource-Limiting Step |
|---|---|---|---|---|
| FMRIPREP | 8 GB | 4 | 6-10 hours | Surface reconstruction (--fs-no-reconall saves time). |
| QSIPrep | 16 GB | 8 | 8-14 hours | Upsampling & normalization of diffusion data. |
| MRIQC | 4 GB | 2 | 0.5-1 hour | Computation of texture-based metrics (IQMs). |

Visualizations

Title: FMRIPREP Simplified Processing Workflow

Title: Assessing Pipeline-Induced Analytical Bias

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Neuroimaging Pipeline | Relevance to Bias Mitigation |
|---|---|---|
| BIDS Validator | Validates dataset organization against the Brain Imaging Data Structure standard. | Ensures consistency in data input, the first defense against workflow errors and variability. |
| Reference Templates (e.g., MNI152, fsaverage) | Standard coordinate spaces for spatial normalization. | Using a consistent, unbiased template space allows for accurate group comparisons and meta-analyses. |
| SynthStrip (or similar skull-stripping tools) | Removes non-brain tissue from anatomical images. | A robust, universal skull-stripping algorithm reduces variability introduced by manual editing or suboptimal algorithms. |
| ICA-AROMA | Identifies and removes motion-related artifacts from fMRI data via independent component analysis. | Reduces motion-induced bias in functional connectivity estimates, which can confound group differences. |
| PyBIDS | A Python API to query and manipulate BIDS datasets programmatically. | Enables automated, reproducible data handling and pipeline scripting, reducing ad-hoc procedural bias. |
| fMRIPrep Derivatives (e.g., confounds files) | Contains structured noise regressors (motion, tissue signals, etc.). | Provides standardized covariates for denoising, enabling fair comparison across studies that use the same pipeline. |

Debugging Your Pipeline: Common Pitfalls and Proven Optimization Tactics

Troubleshooting Guides & FAQs

FAQ: Identifying and Addressing Common Bias Issues

Q1: During group analysis of fMRI data, I observe significant activation clusters, but they are located primarily in edge/vessel regions. What could be the cause?

A: This is a classic red flag for motion-induced bias. Even after standard realignment, residual motion artifacts, which are often correlated with task design (e.g., deeper breaths during a demanding condition), can create false positives at brain edges and near major vessels. This bias disproportionately affects certain populations (e.g., older adults, patients), leading to invalid group comparisons.

- Troubleshooting Protocol:

- Inspect Framewise Displacement (FD) and DVARS plots: Calculate mean FD per group and per condition. A significant difference (p < 0.05) in mean FD between groups (e.g., Patient vs. Control) indicates a confound.

- Perform motion parameter correlation: Correlate the 6 (or 24) motion regressors with your task design matrix. A correlation coefficient |r| > 0.1 suggests a systematic link between motion and task.

- Apply stricter censoring/scrubbing: Use a threshold (e.g., FD > 0.5mm) to flag and remove high-motion volumes. Re-run the analysis and compare the resulting statistical maps.

- Include motion as a covariate: In your group-level GLM, add the mean FD per subject as a nuisance regressor. If "significant" clusters disappear, they were likely motion-driven.
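The motion-task correlation check above can be sketched in a few lines. The example assumes each motion regressor and the task regressor are available as equal-length lists sampled per volume; the regressor names, the toy values, and the helper `task_coupled_motion` are hypothetical, while the |r| > 0.1 cutoff is the one given in the protocol.

```python
import math

def pearson_r(x, y):
    """Pearson correlation between two equal-length sequences."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sxx = sum((a - mean_x) ** 2 for a in x)
    syy = sum((b - mean_y) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)

def task_coupled_motion(motion, task, limit=0.1):
    """Names of motion regressors whose |r| with the task regressor exceeds the limit."""
    return [
        name for name, series in motion.items()
        if abs(pearson_r(series, task)) > limit
    ]

# Toy regressors (hypothetical): trans_z rises whenever the task block is on.
task = [0, 0, 1, 1, 0, 0, 1, 1]
motion = {
    "trans_z": [0.0, 0.1, 0.5, 0.6, 0.1, 0.0, 0.5, 0.6],
    "rot_x": [0.1, -0.1, 0.1, -0.1, -0.1, 0.1, -0.1, 0.1],
}
flagged = task_coupled_motion(motion, task)
print("Task-coupled motion regressors:", flagged)
```

In practice the task regressor would be the HRF-convolved column of the design matrix rather than a raw boxcar.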

Q2: My voxel-based morphometry (VBM) analysis shows strong gray matter volume differences between groups, but the pattern appears to follow the spatial distribution of field inhomogeneity in my scanner. Is this valid?

A: This is likely a case of scanner- or site-induced bias, often related to B1 field inhomogeneity affecting tissue segmentation. It is a critical issue in multi-center studies.

- Troubleshooting Protocol:

- Visual Quality Control (QC): Overlay the group difference t-statistic map on the average B1 field map or the per-site average T1-weighted image. Co-location of effects with signal drop-off areas is a major red flag.

- Harmonization Test: Apply a post-processing harmonization tool (e.g., ComBat, RAVEL) to your extracted features. Re-run the statistical test. A drastic reduction or complete change in the significance pattern indicates the initial result was biased by site effects.

- Site-as-Covariate Analysis: Run two models: one with only group as a factor, and one with group + site as factors. Compare the results using a model comparison criterion (e.g., AIC, BIC). If the model with site is superior, the site effect is substantial.
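The site-as-covariate comparison can be sketched with ordinary least squares and a Gaussian AIC. The data below are hypothetical, `ols_aic` is an illustrative helper, and the AIC is computed only up to an additive constant, which is enough for comparing two models fitted to the same observations.

```python
import numpy as np

def ols_aic(y, X):
    """Gaussian AIC (up to a constant) for an OLS fit of y on the columns of X."""
    n, k = X.shape
    beta, *_ = np.linalg.lstsq(X, y, rcond=None)
    rss = float(((y - X @ beta) ** 2).sum())
    return n * np.log(rss / n) + 2 * k

# Toy feature values (hypothetical): a small group effect and a large site effect.
y = np.array([2.0, 2.3, 2.1, 2.2, 3.0, 3.4, 3.1, 3.3])
group = np.array([0, 1, 0, 1, 0, 1, 0, 1], dtype=float)
site = np.array([0, 0, 0, 0, 1, 1, 1, 1], dtype=float)
intercept = np.ones_like(y)

aic_group = ols_aic(y, np.column_stack([intercept, group]))
aic_group_site = ols_aic(y, np.column_stack([intercept, group, site]))
print(f"AIC group only: {aic_group:.1f}, group + site: {aic_group_site:.1f}")
```

The lower AIC identifies the preferred model. With more than two sites, site would enter as a set of dummy columns rather than a single 0/1 column.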

Q3: In my connectivity analysis, I find hyperconnectivity in a patient group, but their head motion is also higher. How can I disentangle motion bias from true biology?

A: Motion is the most pervasive confound in functional connectivity (fcMRI). It inflates short-distance correlations and can artificially alter long-distance connections.

Troubleshooting Protocol:

- Generate Motion QA Metrics Table: Calculate the following for each participant and group:

| Metric | Formula/Description | Interpretation | Acceptable Threshold |
|---|---|---|---|
| Mean Framewise Displacement (FD) | $\overline{\mathrm{FD}} = \frac{1}{N_{\text{volumes}}} \sum_i \left( \lvert\Delta x_i\rvert + \lvert\Delta y_i\rvert + \lvert\Delta z_i\rvert + \lvert\alpha_i\rvert + \lvert\beta_i\rvert + \lvert\gamma_i\rvert \right)$ | Average volume-to-volume head motion. | < 0.2 mm is ideal; > 0.3 mm is concerning. |
| % High-Motion Volumes | Percentage of volumes where FD exceeds threshold (e.g., 0.25 mm). | Proportion of severely corrupted data. | < 10% is acceptable. |
| Mean DVARS | Root mean square change in BOLD signal across the brain between successive volumes. | Measures signal change due to motion and artifacts. | Compare relative values between groups. |
| FD-Group Correlation | Point-biserial correlation between group label and subject mean FD. | Tests for systematic motion differences. | r should be < 0.1 and non-significant (p > 0.05). |

- Apply Aggressive Nuisance Regression: Use a validated model (e.g., 24-parameter motion model + mean CSF/White matter signal + derivatives). Consider including spike regressors for scrubbed volumes.

- Perform Motion-Matched Subsampling: If a significant FD-group correlation exists, create a motion-matched subsample by randomly selecting control subjects whose mean FD distribution matches the patient group. Re-run the connectivity analysis on this balanced subset. If the hyperconnectivity finding disappears, it was likely motion-biased.
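The first two metrics in the motion QA table can be computed from the six realignment parameters as sketched below. This assumes translations in mm and rotations in radians, and converts rotations to millimetres on a 50 mm sphere, a common convention that the table's formula does not spell out. The function names and toy parameters are hypothetical, and the mean is taken over all volumes (the first volume contributes zero) to match the N_volumes denominator in the table.

```python
def framewise_displacement(params, radius_mm=50.0):
    """Per-volume FD from rows of (tx, ty, tz, rx, ry, rz); the first volume gets 0."""
    fd = [0.0]
    for prev, curr in zip(params, params[1:]):
        # Sum of absolute translation changes, in mm.
        trans = sum(abs(c - p) for c, p in zip(curr[:3], prev[:3]))
        # Rotation changes in radians become arc length on a sphere of radius_mm.
        rot = sum(abs(c - p) * radius_mm for c, p in zip(curr[3:], prev[3:]))
        fd.append(trans + rot)
    return fd

def motion_summary(params, fd_threshold=0.25):
    """Mean FD (mm) and percentage of volumes whose FD exceeds fd_threshold."""
    fd = framewise_displacement(params)
    mean_fd = sum(fd) / len(fd)
    pct_high = 100.0 * sum(v > fd_threshold for v in fd) / len(fd)
    return mean_fd, pct_high

# Toy realignment parameters (hypothetical) for four volumes.
toy_params = [
    (0.0, 0.0, 0.0, 0.0, 0.0, 0.0),
    (0.1, 0.0, 0.0, 0.0, 0.0, 0.0),
    (0.1, 0.2, 0.0, 0.0, 0.002, 0.0),
    (0.1, 0.2, 0.0, 0.0, 0.002, 0.0),
]
mean_fd, pct_high = motion_summary(toy_params)
print(f"mean FD = {mean_fd:.3f} mm, high-motion volumes = {pct_high:.0f}%")
```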

Experimental Protocol: Validating a Processing Pipeline Against Motion Bias

Objective: To empirically test a neuroimaging pipeline's susceptibility to motion-induced bias.

Materials: A publicly available dataset with resting-state fMRI and known high-motion participants (e.g., ADHD-200, ABIDE). Your chosen processing pipeline (e.g., fMRIPrep, SPM-based custom pipeline).

Methodology:

- Data Selection & Grouping: Select N=50 participants. Calculate mean FD for all. Create two groups: "High Motion" (top quartile of FD, n=13) and "Low Motion" (bottom quartile of FD, n=13). Crucially, these groups are from the same population (e.g., all healthy controls).

- Processing: Process all data through your standard pipeline (including realignment, normalization, smoothing).

- Analysis: Perform a group-level analysis comparing High Motion vs. Low Motion groups on a standard resting-state metric (e.g., amplitude of low-frequency fluctuations (ALFF) or seed-based connectivity from the PCC).

- Interpretation: In a valid, unbiased pipeline, there should be NO significant neural differences between these groups, as the grouping is based on motion, not biology. The presence of significant clusters (p<0.05, FWE-corrected) indicates that your pipeline fails to adequately control for motion, introducing bias.
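The grouping step of this protocol can be sketched as a simple quartile split on mean FD. The function name `motion_quartile_groups` and the synthetic FD values are hypothetical; rounding the quartile size up reproduces the n=13 per group quoted above for N=50.

```python
import math

def motion_quartile_groups(mean_fd):
    """Return (low_motion, high_motion) subject lists from a dict of subject -> mean FD."""
    ranked = sorted(mean_fd, key=mean_fd.get)  # Ascending by mean FD.
    n = math.ceil(len(ranked) / 4)             # Quartile size, rounded up.
    return ranked[:n], ranked[-n:]

# Synthetic mean FD values (hypothetical) for 50 participants.
mean_fd = {f"sub-{i:02d}": 0.05 + 0.01 * i for i in range(50)}
low_motion, high_motion = motion_quartile_groups(mean_fd)
print(len(low_motion), "low-motion and", len(high_motion), "high-motion subjects")
```

Both groups are then passed through the identical pipeline and group-level contrast; any surviving cluster reflects residual motion sensitivity rather than biology.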

The Scientist's Toolkit: Key Reagents & Software for Bias Mitigation

| Item Name | Category | Function in Bias Diagnosis/Mitigation |
|---|---|---|
| fMRIPrep | Software Pipeline | Standardized, transparent preprocessing for fMRI. Reduces pipeline variability (a source of bias) and generates comprehensive QC reports (motion, coverage, artifacts). |
| ComBat (Harmonization) | Statistical Tool | Removes site/scanner effects from multi-center data by empirical Bayes framework, preventing site bias from masquerading as biological effects. |
| MRIQC | Quality Control Tool | Computes a large array of image quality metrics (IQMs) from T1w and BOLD data. Allows for data-driven exclusion or covariance adjustment based on objective quality. |
| Framewise Displacement (FD) | Quantitative Metric | Summarizes volume-to-volume head motion. The primary regressor for diagnosing and controlling motion-related bias. |
| B1 Field Map | MRI Acquisition | Measures radiofrequency field inhomogeneity. Essential for correcting intensity biases in sequences sensitive to B1 variations (e.g., VBM, quantitative MRI). |
| MANGO / ITK-SNAP | Visualization Software | Enables visual overlaying of statistical maps on anatomical images and field maps, critical for identifying anatomically implausible patterns of "activation" or "atrophy." |
| SCA / ICA | Analysis Method | Seed-based Correlation Analysis (SCA) and Independent Component Analysis (ICA) can be used to identify noise components related to motion, physiology, and artifacts. |

Workflow for Bias Diagnosis in Neuroimaging Analysis

Signaling Pathway of Analytical Bias Propagation

FAQs & Troubleshooting Guide

Q1: I ran multiple preprocessing pipelines on my fMRI dataset and selected the one yielding the most statistically significant cluster. My colleague called this 'p-hacking.' What did I do wrong?

A1: You have likely fallen prey to the "parameter sweep" or "researcher degrees of freedom" problem. By fitting the pipeline to the data (trying many analysis paths and selecting the most striking result), you have artificially inflated the Type I error rate. The reported p-value no longer represents the probability of obtaining a result at least this extreme under the null hypothesis, because the selection process itself capitalizes on random noise. This is a form of implicit p-hacking.
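A back-of-the-envelope calculation shows how quickly the error rate inflates. The sketch below assumes the pipelines yield independent tests, which overstates the inflation for real pipelines (their results are correlated) but illustrates the direction of the problem; the function name is hypothetical.

```python
def any_false_positive_rate(n_pipelines, alpha=0.05):
    """Chance that at least one of n independent null tests is significant at alpha."""
    return 1 - (1 - alpha) ** n_pipelines

for n in (1, 5, 10):
    rate = any_false_positive_rate(n)
    print(f"{n:2d} pipelines -> P(at least one false positive) = {rate:.2f}")
```

Under this independence assumption, picking the best of ten pipelines raises the chance of a spurious "significant" finding from 5% to roughly 40%.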

Q2: How can I correct my statistical inference after I have already explored multiple pipeline configurations on my single dataset?

A2: Correction is challenging post-hoc, but you can: