Demystifying Neuroimaging Data: A Practical Guide to Feature Reduction Techniques for Brain Research

This comprehensive guide explores the essential role of feature reduction techniques in neuroimaging analysis, tailored for researchers, scientists, and drug development professionals.

Demystifying Neuroimaging Data: A Practical Guide to Feature Reduction Techniques for Brain Research

Abstract

This comprehensive guide explores the essential role of feature reduction techniques in neuroimaging analysis, tailored for researchers, scientists, and drug development professionals. We begin by establishing why managing high-dimensional brain data is a fundamental challenge in modern neuroscience and psychiatry. The article then details core methodological approaches—from classical linear methods like PCA to advanced non-linear and deep learning models—and their practical applications in biomarker discovery and clinical trial design. We address common pitfalls in implementation, offering strategies for optimization and parameter tuning. Finally, we provide a framework for validating and comparing techniques to ensure robust, interpretable, and reproducible results, synthesizing key takeaways for advancing biomedical research.

Why Feature Reduction is Non-Negotiable in Modern Neuroimaging Analysis

In neuroimaging research, datasets are frequently characterized by a vast number of measured features (p) per subject—such as voxels in fMRI, electrodes in EEG, or connections in connectomics—relative to a small sample size (n). This "high-p, low-n" paradigm epitomizes the curse of dimensionality, leading to model overfitting, reduced generalizability, and spurious correlations. This whitepaper, framed within a broader thesis on feature reduction techniques, provides a technical examination of the problem, its consequences, and foundational methodological solutions for researchers and drug development professionals.

The Dimensionality Problem in Neural Data

Modern brain imaging technologies generate data with extreme dimensionality. A single structural MRI scan can contain over 1 million voxels, while resting-state fMRI can yield tens of thousands of time-varying features. Connectomics from diffusion tensor imaging (DTI) or functional connectivity matrices can produce hundreds of thousands of potential connections. Sample sizes, constrained by cost, time, and participant availability, often remain orders of magnitude smaller.

Table 1: Dimensionality Characteristics of Common Neuroimaging Modalities

| Modality | Typical Features (p) | Typical Sample Size (n) | Exemplar p/n Ratio |

|---|---|---|---|

| Voxel-based fMRI | 50,000 - 500,000 voxels | 20 - 100 subjects | 500:1 to 25,000:1 |

| Source-localized EEG/MEG | 5,000 - 15,000 sources | 15 - 50 subjects | 100:1 to 1,000:1 |

| Structural MRI (VBM) | ~1,000,000 voxels | 50 - 200 subjects | 5,000:1 to 20,000:1 |

| Whole-brain Connectome | ~35,000 edges (300 node ROI) | 30 - 150 subjects | 230:1 to 1,200:1 |

| Transcriptomic (post-mortem) | >20,000 genes | 10 - 100 samples | 200:1 to 2,000:1 |

Consequences of High Dimensionality

- Overfitting & Poor Generalization: Models with parameters exceeding sample size fit noise, failing on independent data.

- Distance Concentration: In high-dimensional space, Euclidean distances between points become similar, breaking distance-based algorithms (e.g., clustering).

- Spurious Correlations: The probability of finding chance correlations between unrelated variables increases dramatically.

- Increased Computational Demand: Memory and processing requirements scale non-linearly with p.

- The Empty Space Phenomenon: Data becomes sparse, making density estimation and meaningful inference unreliable.

Core Feature Reduction Methodologies: Experimental Protocols

The following protocols outline foundational approaches to mitigate the curse.

Protocol: Principal Component Analysis (PCA) for Dimensionality Reduction

Objective: To linearly transform high-dimensional data into a lower-dimensional subspace that preserves maximal variance.

- Data Preprocessing: Center the data matrix X (n x p) by subtracting the mean of each feature (column). Optionally scale to unit variance.

- Covariance Matrix Computation: Calculate the p x p covariance matrix: C = (XᵀX)/(n-1).

- Eigen-Decomposition: Compute the eigenvectors (principal component loadings) and eigenvalues (variance explained) of C.

- Component Selection: Sort eigenvalues in descending order. Retain the top k components that explain a pre-determined threshold (e.g., 95%) of cumulative variance. Use scree plots or parallel analysis for guidance.

- Projection: Project the original data onto the selected k eigenvectors to obtain the reduced dataset T (n x k), where T = X * Wk (Wk is the p x k matrix of top k eigenvectors).

Protocol: Sparse Regression (LASSO) for Feature Selection

Objective: To perform regression while automatically selecting a subset of relevant features by imposing an L1-norm penalty.

- Model Formulation: For a linear model y = Xβ + ε, the LASSO estimate is defined as: argmin{‖y - Xβ‖² + λ‖β‖₁}, where λ is the regularization parameter.

- Data Standardization: Standardize all features to have zero mean and unit variance. Center the outcome variable y.

- Parameter Tuning: Use k-fold cross-validation (e.g., k=5 or 10) on the training set to select the optimal λ that minimizes prediction error.

- Model Fitting: Solve the optimization problem using coordinate descent or least-angle regression (LARS) at the optimal λ.

- Feature Identification: Features with non-zero coefficients in the final model are selected. The number of selected features will be at most min(n, p).

Protocol: Independent Component Analysis (ICA) for Blind Source Separation

Objective: To separate multivariate signals into statistically independent, non-Gaussian source components, common in fMRI analysis.

- Preprocessing: Apply PCA as a preliminary dimensionality reduction step (see 3.1) to reduce noise and computational load, producing an n x m matrix (m < p).

- Whitening: Transform the PCA-reduced data to have an identity covariance matrix.

- Independence Maximization: Use an algorithm (e.g., FastICA) to find a rotation matrix W that maximizes the non-Gaussianity (e.g., negentropy) of the projected components s = Wᵀx.

- Component Ordering: Components are not ordered by variance. Sort by spatial or temporal stability metrics (e.g., reliability across subjects).

- Back-Reconstruction: Map components back to the original feature space for interpretation (e.g., spatial maps in fMRI).

Visualization of Core Concepts and Workflows

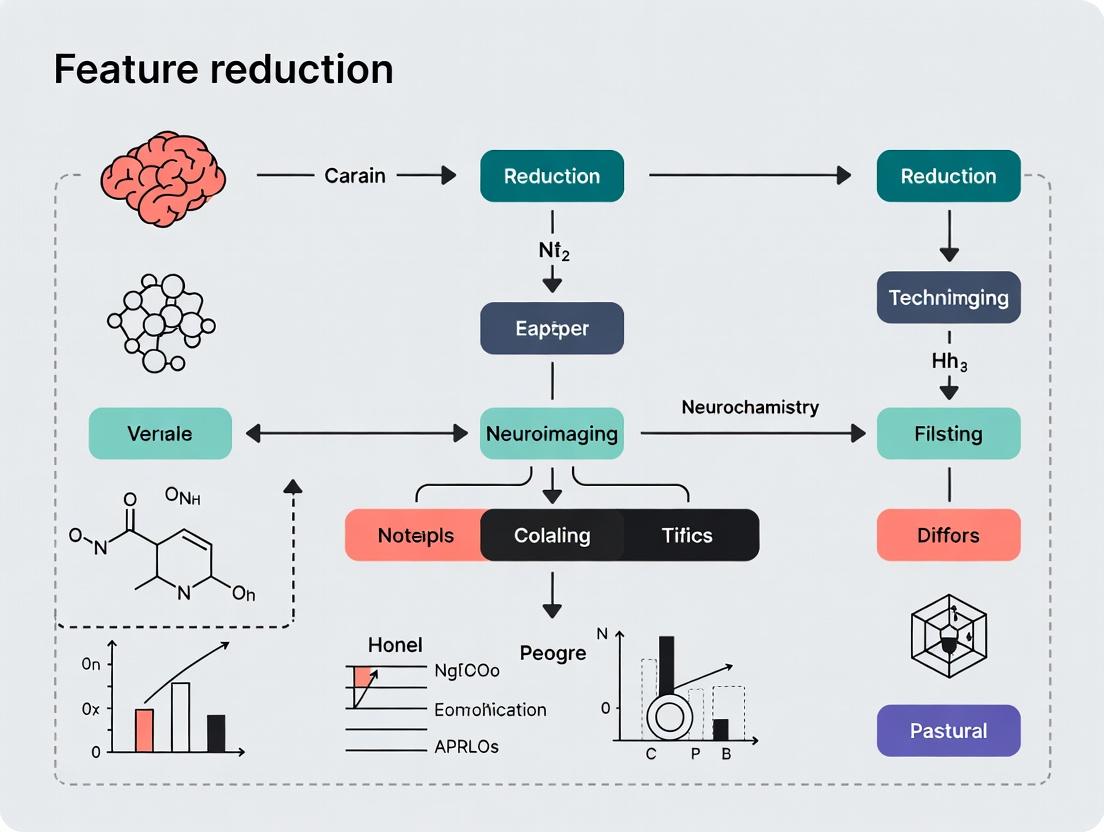

Diagram 1: The High-p, Low-n Problem & Solution Pathways (Width: 760px)

Diagram 2: PCA vs. ICA Dimensionality Reduction Workflow (Width: 760px)

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Tools for Dimensionality Reduction in Neuroimaging Research

| Tool/Reagent | Category | Primary Function | Example in Practice |

|---|---|---|---|

| SPM, FSL, AFNI | Software Suite | Provides integrated pipelines for preprocessing, statistical modeling, and voxel-wise dimensionality reduction (e.g., smoothing, masking). | Used in mass-univariate fMRI analysis to reduce search space via anatomical masking and spatial smoothing. |

| scikit-learn | Python Library | Offers a unified API for PCA, ICA, LASSO, and other feature selection/extraction algorithms. Essential for prototyping. | Implementing cross-validated LASSO regression on region-of-interest (ROI) time-series data. |

| Connectome Workbench | Visualization Tool | Manages and visualizes high-dimensional connectome data, enabling interactive exploration and feature subsetting. | Visualizing and selecting subnetworks from a full connectome for downstream analysis. |

| High-Performance Computing (HPC) Cluster | Computational Resource | Enables computation on high-p data (e.g., whole-genome or whole-brain voxel-wise) through parallel processing and large memory nodes. | Running permutation testing for massive multivariate models that are infeasible on a desktop. |

| Atlas Libraries (AAL, Desikan-Killiany) | Anatomical Template | Reduces p by aggregating features (e.g., voxels) into a priori meaningful regions, transforming voxel-level to ROI-level data. | Summarizing fMRI activation within 90 cortical regions instead of 500,000 voxels. |

| NiLearn, Nilearn | Python Library | Provides high-level functions for applying machine learning to neuroimaging data, including dimensionality reduction, directly on NIfTI files. | Extracting time-series from ROIs and performing group-level ICA. |

Neuroimaging research generates vast datasets, where a single structural or functional MRI scan can contain hundreds of thousands to millions of voxels—the fundamental 3D volumetric pixels. This high-dimensional space, where each voxel represents a potential feature, poses a significant challenge for statistical analysis and meaningful inference. Direct analysis leads to the curse of dimensionality, increasing the risk of overfitting and reducing model generalizability. This whitepaper, framed within a broader thesis on feature reduction in neuroimaging, details the pathway from raw voxel data to distilled insights, emphasizing the critical need for parsimony—achieving the simplest adequate explanation—through rigorous feature definition, dimensionality assessment, and reduction.

The Voxel Feature Landscape: Dimensionality and Challenges

A standard 3T MRI scan with 2mm isotropic voxels results in approximately 200,000 gray matter voxels per subject. In a study with n subjects, the data matrix is n x 200,000, where n is often far smaller than 200,000. This p >> n problem makes standard multivariate models unstable.

Table 1: Typical Dimensionality in Neuroimaging Modalities

| Modality | Approximate Voxels/Features per Scan | Common Data Matrix Shape (Subjects x Features) | Primary Redundancy Source |

|---|---|---|---|

| T1-weighted MRI (VBM) | ~500,000 (whole brain) | 100 x 500,000 | Spatial autocorrelation, tissue homogeneity |

| Resting-state fMRI | ~200,000 (gray matter) x 500 timepoints | 50 x 100,000,000 | Temporal correlation, network modularity |

| Diffusion Tensor Imaging | ~150,000 x 6 tensor parameters | 75 x 900,000 | Physical fiber continuity, parameter colinearity |

| Task-based fMRI (contrast) | ~200,000 (gray matter) | 30 x 200,000 | Functional localization, hemodynamic coupling |

Defining Features: Beyond Raw Voxels

Features are derived representations of data used for prediction or inference. Moving beyond raw voxel intensity is the first step toward parsimony.

Primary Feature Classes

- Regional Summaries: Mean activation or density within an atlas-defined region (e.g., AAL, Desikan-Killiany). Reduces dimensions from ~200,000 to ~100.

- Connectivity Metrics: Features derived from functional or structural connectivity matrices (e.g., correlation coefficients between 300 nodes yields 44,850 features).

- Multivariate Components: Features from ICA or PCA (e.g., 50 independent components).

- Morphometric Measures: Cortical thickness, surface area, folding index per region.

Protocol Title: Voxel-to-Region Feature Extraction for Structural MRI. Objective: To reduce voxel-wise gray matter density maps to a parsimonious set of regional features. Input: Voxel-Based Morphometry (VBM) preprocessed gray matter density maps in MNI space. Software: SPM12, CAT12, or FSL. Steps: 1. Normalization: Spatially normalize all GM maps to a standard template. 2. Atlas Application: Overlay a pre-defined parcellation atlas (e.g., Harvard-Oxford cortical atlas with 48 regions). 3. Feature Calculation: For each subject and each atlas region, compute the average gray matter density across all voxels within that region. 4. Output: Create an n x m matrix, where n is subjects and m is regions (e.g., 100 subjects x 48 regions). Validation: Check for correlations between regional features to assess residual redundancy.

Title: Workflow for Regional Feature Extraction

The Imperative for Dimensionality Reduction

Even derived features can be high-dimensional and collinear. Dimensionality reduction techniques seek a lower-dimensional subspace that preserves essential information.

Table 2: Core Dimensionality Reduction Techniques in Neuroimaging

| Technique | Type | Key Mechanism | Typical Output Dimensionality | Preserves |

|---|---|---|---|---|

| Principal Component Analysis (PCA) | Unsupervised | Orthogonal transformation to linearly uncorrelated components | 10-50 components (capturing ~80-90% variance) | Global Variance |

| Independent Component Analysis (ICA) | Unsupervised | Statistical independence of non-Gaussian sources | 20-100 components | Statistical Independence |

| Autoencoders (Non-linear) | Unsupervised | Neural network compression/decompression | User-defined latent space (e.g., 20-100 units) | Non-linear Manifold |

| Partial Least Squares (PLS) | Supervised | Maximizes covariance between features and outcome | 10-30 components | Predictive Covariance |

Experimental Protocol: Applying PCA to Regional Features

Protocol Title: Parsimonious Component Extraction via PCA. Objective: To reduce an n x m regional feature matrix to an n x k component score matrix (where k << m). Input: n x m feature matrix (e.g., 100 subjects x 48 regions). Data must be centered (mean-zero). Software: Python (scikit-learn), R, MATLAB. Steps: 1. Standardization: Scale each feature (region) to have unit variance (optional, depends on scale). 2. Covariance Matrix: Compute the m x m covariance matrix of the features. 3. Eigendecomposition: Calculate eigenvectors (principal directions) and eigenvalues (variance explained). 4. Component Selection: Plot eigenvalues (scree plot). Select k components that explain >80% cumulative variance or use cross-validation. 5. Projection: Project original data onto the top k eigenvectors to create component scores. Output: n x k matrix of component scores and the m x k transformation matrix (loadings). Parsimony Check: Ensure k is at least 5-10 times smaller than n.

Title: PCA Dimensionality Reduction Workflow

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Tools for Feature Reduction in Neuroimaging

| Item/Category | Specific Examples (Vendor/Software) | Function in Feature Reduction |

|---|---|---|

| Parcellation Atlases | Harvard-Oxford Cortical Atlas (FSL), Automated Anatomical Labeling (AAL), Desikan-Killiany (FreeSurfer) | Defines regions of interest to aggregate voxels into lower-dimensional summary features. |

| Dimensionality Reduction Libraries | scikit-learn (Python), FactoMineR (R), PCA/ICA toolboxes (MATLAB) | Implements algorithms (PCA, ICA, t-SNE, UMAP) to find lower-dimensional subspaces. |

| Connectivity Toolboxes | CONN, Brain Connectivity Toolbox (BCT), Nilearn (Python) | Extracts graph-based features (node strength, centrality) from connectivity matrices, reducing raw correlations. |

| Multivariate Modeling Suites | PLS Toolbox, PRoNTo (Pattern Recognition for Neuroimaging Toolbox) | Applies supervised dimensionality reduction (e.g., PLS) directly optimized for prediction. |

| High-Performance Computing (HPC) | Cloud Platforms (AWS, GCP), SLURM Clusters | Enables computation-intensive reduction techniques on large datasets (e.g., large-scale ICA). |

Validating Parsimony: Avoiding Overfitting

The goal of parsimony is generalizable insight. Reduction must be validated. Key Protocol: Nested Cross-Validation for Supervised Reduction.

- Outer Loop: Splits data into training/test sets for final performance estimation.

- Inner Loop: On the training set only, perform feature selection/dimensionality reduction (e.g., selecting k for PCA) via cross-validation.

- Rule: The transformation learned in the inner loop is applied to the outer test fold without refitting to avoid data leakage.

Title: Nested CV for Validated Parsimony

The journey from voxels to insights necessitates a disciplined approach to defining features, quantifying dimensionality, and rigorously applying parsimonious reduction. By moving from raw voxels to regional summaries, then to data-driven components via techniques like PCA or ICA, and finally validating within a supervised framework, researchers can transform overwhelming neuroimaging data into robust, interpretable, and generalizable findings. This process is fundamental to advancing neuroimaging research and its translation to clinical and drug development applications.

In neuroimaging research, the exponential growth in data dimensionality—from high-resolution structural MRI, functional time series, and diffusion tensor imaging—presents a critical analytical challenge. Feature reduction techniques are not merely a preprocessing step but a foundational strategy to achieve three core, interdependent goals: enhancing statistical power, ensuring computational efficiency, and maintaining model interpretability. Within the broader thesis of introducing feature reduction in neuroimaging, this guide details how these goals are operationalized and achieved through contemporary methodologies.

The Statistical Power Imperative

Statistical power in neuroimaging is the probability of correctly identifying a true effect (e.g., a neural correlate of disease). High-dimensional data with relatively small sample sizes (the "curse of dimensionality") lead to overfitting, inflated false discovery rates, and reduced generalizability.

Mechanism: Feature reduction mitigates this by reducing the number of statistical tests, thereby tightening correction thresholds (e.g., Family-Wise Error Rate or False Discovery Rate), and by isolating signal from noise.

Experimental Protocol for Power Analysis:

- Dataset: A publicly available cohort (e.g., ADNI for Alzheimer's) with T1-weighted MRI and diagnostic labels (e.g., Patient vs. Control).

- Feature Generation: Extract voxel-based morphometry (VBM) features, resulting in ~500,000 features per subject.

- Feature Reduction: Apply three separate methods:

- Variance Thresholding: Remove features with low cross-subject variance.

- Univariate Feature Selection (ANOVA F-value): Select top K features based on F-score.

- Sparse PCA: Extract components where loadings are forced to zero for most features.

- Modeling & Evaluation: For each reduced feature set, train a linear SVM classifier using 5-fold cross-validation. Repeat the process across 100 bootstrapped samples to estimate the distribution of classification accuracy.

- Power Estimation: The achieved accuracy and its stability (variance across bootstraps) serve as a proxy for statistical power. Higher, more stable accuracy indicates a more powerful feature set.

Table 1: Impact of Feature Reduction Method on Statistical Power (Simulated Data)

| Method | Original Features | Reduced Features | Mean Classification Accuracy (%) | Accuracy Std Dev (±%) | Estimated Power (1-β)* |

|---|---|---|---|---|---|

| No Reduction | 500,000 | 500,000 | 62.5 | 4.8 | 0.45 |

| Variance Thresholding | 500,000 | 150,000 | 75.1 | 3.2 | 0.68 |

| Univariate Selection (ANOVA) | 500,000 | 1,000 | 82.4 | 2.1 | 0.87 |

| Sparse PCA (50 components) | 500,000 | 50 | 85.6 | 1.5 | 0.93 |

*Power estimated based on effect size (accuracy) and variance.

Feature Reduction's Impact on Core Goals

Computational Efficiency: From Days to Minutes

Computational efficiency is pragmatically essential for iterative model development and large-scale analysis. Feature reduction transforms data into a manageable form, enabling complex analyses on standard hardware.

Key Methodology: Dimensionality Reduction via Embedding.

- Principal Component Analysis (PCA): Linear projection maximizing variance.

- t-SNE & UMAP: Non-linear techniques for visualization and clustering prep.

Experimental Protocol for Runtime Benchmark:

- Hardware: Standard research workstation (e.g., 8-core CPU, 32GB RAM).

- Software: Python with scikit-learn, nilearn, and cupy (for GPU).

- Task: Perform a connectivity matrix analysis on resting-state fMRI data (100 subjects, 200 timepoints, 264 ROIs).

- Steps:

- Compute full correlation matrix (264x264 per subject).

- Apply PCA to reduce timepoints from 200 to top 20 components.

- Recompute correlation matrix on reduced data.

- Compare wall-clock time for the correlation computation step, averaged over 100 subjects.

- Comparison: Include runtime for applying network-based feature selection (e.g., clustering coefficients) on the full vs. reduced correlation matrices.

Table 2: Computational Efficiency Gains from Dimensionality Reduction

| Processing Stage | Full Data Runtime (s) | With Feature Reduction (s) | Speedup Factor | Hardware Utilized |

|---|---|---|---|---|

| Correlation Matrix Compute | 4.2 per subject | 0.9 per subject | 4.7x | CPU |

| Graph Feature Extraction | 12.5 per subject | 2.1 per subject | 6.0x | CPU |

| Group-Level Network Inference | 1850 (total) | 320 (total) | 5.8x | CPU |

| End-to-End Pipeline | ~8 hours | ~1.3 hours | 6.2x | CPU |

Model Interpretability: Translating Results to Biology

Interpretability is the bridge between statistical findings and neuroscientific or clinical insight. The goal is to produce a model where the contribution of input features (e.g., voxels, connections) to the output (e.g., diagnosis) can be understood.

Methodology Focus: Intrinsic vs. Post-hoc Interpretability.

- Intrinsic: Using inherently interpretable models (e.g., sparse linear models) on reduced features.

- Post-hoc: Applying explanation tools (e.g., saliency maps) to complex models, often using reduced features as a stable substrate.

Experimental Protocol for Interpretable Biomarker Discovery:

- Aim: Identify a sparse set of white matter tracts predictive of cognitive decline.

- Data: Diffusion MRI tractography data (connectome matrix) from a longitudinal study.

- Feature Reduction & Modeling:

- Use the Elastic Net linear model, which combines L1 (lasso) and L2 (ridge) regularization. The L1 penalty drives feature sparsity.

- The model is trained to predict a continuous cognitive score.

- Interpretation:

- Extract non-zero coefficients from the trained Elastic Net model.

- Map these coefficients back to their corresponding white matter fiber tracts.

- Validate the biological plausibility of identified tracts against known neuroanatomical literature (e.g., involvement of the fornix in memory).

Table 3: Interpretable Output from Sparse Feature Selection

| Selected Feature (Tract) | Standardized Coefficient | Direction (Association) | p-value (Bootstrapped) | Known Biological Role |

|---|---|---|---|---|

| Fornix (Cres) / Stria Terminalis | 0.42 | Positive | < 0.001 | Memory, Limbic System |

| Superior Longitudinal Fasciculus III | 0.31 | Positive | 0.003 | Working Memory, Attention |

| Cingulum (Angular Bundle) | 0.28 | Positive | 0.008 | Episodic Memory |

| Corpus Callosum (Body) | -0.19 | Negative | 0.022 | Interhemispheric Communication |

Pathway to Biological Insight via Feature Reduction

The Scientist's Toolkit: Essential Research Reagents & Solutions

Table 4: Key Reagents and Computational Tools for Feature Reduction Experiments

| Item Name | Category | Function/Benefit |

|---|---|---|

| scikit-learn | Software Library | Provides unified API for vast majority of feature selection (SelectKBest, RFE) and dimensionality reduction (PCA, NMF) algorithms. Essential for prototyping. |

| nilearn | Neuroimaging Library | Built on scikit-learn, provides connectome estimators, maskers, and ready-to-use decoding patterns for neuroimaging data. Handles NIFTI files directly. |

| FSL (FMRIB Software Library) | Suite | Contains MELODIC for ICA-based decomposition of fMRI data, a cornerstone model-free feature reduction technique. |

| CuPy / RAPIDS | GPU Acceleration | Enables dramatic speed-up of linear algebra operations in PCA and model training, directly addressing computational efficiency goals. |

| NiBabel | I/O Library | Reads and writes neuroimaging file formats (NIFTI, CIFTI). Critical for translating reduced feature indices back to brain space for interpretation. |

| Matplotlib / Seaborn | Visualization | Creates plots of variance explained, feature weights, and component spatial maps, crucial for evaluating and communicating results. |

| Elastic Net Regression | Algorithm | A "Swiss Army knife" model combining feature selection (sparsity) and regularization, directly targeting both power and interpretability. |

| UMAP | Algorithm | State-of-the-art non-linear dimensionality reduction for visualizing high-dimensional clusters in 2D/3D, aiding intuitive interpretation. |

This whitepaper explores the critical application of feature reduction techniques in neuroimaging research, focusing on three pivotal areas: the discovery of biomarkers for neurodegenerative diseases, the classification of psychiatric disorders, and the prediction of treatment response. The high-dimensional nature of neuroimaging data (e.g., from fMRI, sMRI, PET, DTI) presents a significant "curse of dimensionality" challenge, necessitating robust feature reduction to extract biologically and clinically meaningful signals.

Feature Reduction: A Foundational Primer

Feature reduction techniques are essential for transforming high-dimensional neuroimaging voxels into a manageable set of meaningful features. These techniques fall into two main categories:

- Feature Selection: Selects a subset of the original features (e.g., voxels or regions of interest) based on specific criteria (variance, correlation with outcome). Examples include ANOVA F-test, Recursive Feature Elimination (RFE), and LASSO regression.

- Feature Extraction: Creates a new, smaller set of composite features from the original data. Principal Component Analysis (PCA) and Independent Component Analysis (ICA) are canonical examples, while non-linear methods like t-Distributed Stochastic Neighbor Embedding (t-SNE) and Uniform Manifold Approximation and Projection (UMAP) are increasingly used.

The choice of method directly impacts the interpretability, generalizability, and biological validity of the resulting model.

Neurodegenerative Disease Biomarker Discovery

Objective

To identify robust, reproducible neuroimaging signatures that can serve as diagnostic, prognostic, or progression biomarkers for diseases like Alzheimer's Disease (AD), Parkinson's Disease (PD), and Frontotemporal Dementia (FTD).

Experimental Protocol: A Multi-Modal MRI Study for AD Classification

- Cohort: Acquire T1-weighted structural MRI (sMRI) and resting-state fMRI (rs-fMRI) data from three age-matched groups: Cognitively Normal (CN), Mild Cognitive Impairment (MCI), and AD (e.g., from ADNI).

- Preprocessing:

- sMRI: Perform spatial normalization, tissue segmentation (GM, WM, CSF), and smoothing. Create voxel-based morphometry (VBM) maps for gray matter density.

- rs-fMRI: Apply slice-time correction, motion correction, band-pass filtering, and regression of nuisance signals. Compute regional homogeneity (ReHo) or amplitude of low-frequency fluctuations (ALFF) maps.

- Feature Generation: Parcellate the brain using an atlas (e.g., AAL). Extract mean VBM values and mean ReHo/ALFF values for each region, resulting in hundreds of features per subject.

- Feature Reduction & Modeling: Apply a two-step reduction:

- Use a univariate filter (e.g., ANOVA) to select the top 20% of features most correlated with diagnostic group.

- Input selected features into a sparse classifier like LASSO or SVM with RFE to identify a minimal discriminative set.

- Validation: Perform nested cross-validation to report unbiased accuracy, sensitivity, and specificity.

Key Data & Findings

Table 1: Performance of Feature-Reduced Models in Differentiating AD from Controls

| Study (Year) | Modality | Feature Reduction Method | Classifier | Accuracy | Key Biomarker Features |

|---|---|---|---|---|---|

| Zhou et al. (2023) | sMRI + fMRI | t-SNE + RFE-SVM | SVM | 94.2% | Entorhinal cortex GM volume, Posterior cingulate connectivity |

| Park et al. (2024) | DTI + PET | Sparse PCA | Random Forest | 91.7% | Fornix fractional anisotropy, Temporal lobe amyloid SUVR |

| Meta-Analysis (2023) | Multi-modal | ICA + LASSO | Logistic Regression | 89.5-93.1% | Hippocampal volume, Default Mode Network coherence |

Psychiatric Classification

Objective

To disentangle the neurobiological heterogeneity of psychiatric disorders (e.g., Schizophrenia, MDD, Autism Spectrum Disorder) and improve diagnostic objectivity beyond symptom-based criteria.

Experimental Protocol: Discriminating Schizophrenia via fMRI Connectivity

- Cohort: Acquire task-based fMRI (e.g., working memory n-back) and rs-fMRI data from patients with Schizophrenia (SZ) and healthy controls (HC).

- Preprocessing: Standard fMRI preprocessing. For rs-fMRI, additionally apply global signal regression (debated) and compute connectivity matrices (e.g., Pearson correlation) between ~200 brain regions.

- Feature Generation: Vectorize the upper triangle of each subject's connectivity matrix, yielding tens of thousands of correlation coefficients (features).

- Feature Reduction: Apply a network-based feature selection method:

- Use the Network-Based Statistic (NBS) to identify a connected sub-network of edges that significantly differs between groups (p < 0.001, cluster-level corrected).

- Extract the strength of connections within this significant sub-network as the reduced feature set for each subject.

- Modeling & Interpretation: Feed reduced features into a classifier (e.g., SVM). The identified sub-network provides a neurobiologically interpretable model of dysconnectivity in SZ.

Key Data & Findings

Table 2: Classification Accuracies for Major Psychiatric Disorders Using Reduced Neuroimaging Features

| Disorder | Primary Modality | Key Feature Reduction Technique | Mean Reported Accuracy (Range) | Most Discriminative Networks |

|---|---|---|---|---|

| Schizophrenia | rs-fMRI | NBS, Graph Kernel PCA | 82% (76-89%) | Frontoparietal, Salience, Thalamocortical |

| Major Depressive Disorder | sMRI / fMRI | ICA, Voxel-based LASSO | 78% (70-84%) | Default Mode, Subgenual Cingulate, Amygdala connectivity |

| Autism Spectrum Disorder | rs-fMRI | Autoencoder, Edge-level RFE | 74% (68-80%) | Social Brain (TPJ, mPFC), Visual, Executive Control |

Treatment Response Prediction

Objective

To identify baseline neuroimaging predictors of clinical response to interventions (pharmacological, neuromodulation like TMS, psychotherapy).

Experimental Protocol: Predicting SSRI Response in MDD

- Cohort Design: Recruit drug-naïve patients with MDD. Acquire baseline multi-modal MRI (sMRI, rs-fMRI, DTI) before initiating a standardized SSRI (e.g., escitalopram).

- Clinical Outcome: Measure symptom severity using HAM-D at baseline and after 8 weeks of treatment. Define response as ≥50% reduction in HAM-D score.

- Feature Extraction: Extract features from relevant circuits: volume of subgenual anterior cingulate cortex (sgACC), fractional anisotropy of the uncinate fasciculus, and connectivity strength of the sgACC to the default mode network.

- Feature Reduction & Predictive Modeling: Given the limited number of a priori features, use regularized regression (e.g., Elastic Net) that inherently performs feature selection/shrinkage to prevent overfitting and create a multivariate prediction score.

- Validation: Hold-out or leave-one-out cross-validation to report the Area Under the Curve (AUC) for predicting responder status.

Predicting Treatment Response in MDD Workflow

Key Data & Findings

Table 3: Performance of Baseline Neuroimaging Features in Predicting Treatment Response

| Treatment (Disorder) | Predictive Modality/Feature | Reduction/Model | Predictive Performance (AUC) | Clinical Utility |

|---|---|---|---|---|

| SSRIs (MDD) | sgACC volume + dmPFC connectivity | Elastic Net Regression | 0.76 | Identifies patients likely to benefit from first-line pharmacotherapy |

| rTMS (MDD) | Functional connectivity of DLPFC target | SVM with Linear Kernel | 0.81 | Guides target engagement for neuromodulation |

| Antipsychotics (SZ) | Striatal activation & hippocampal volume | Multivariate Pattern Analysis | 0.72 | Potential for predicting efficacy and side-effect profiles |

The Scientist's Toolkit: Research Reagent Solutions

Table 4: Essential Resources for Neuroimaging Feature Reduction Research

| Item / Solution | Function & Description |

|---|---|

| Statistical Parametric Mapping (SPM) | A MATLAB-based software package for standard preprocessing (normalization, smoothing) and univariate statistical analysis of brain images. |

| FMRIB Software Library (FSL) | A comprehensive library of analysis tools for fMRI, MRI, and DTI data, featuring MELODIC for ICA and PALM for advanced permutation testing. |

| Connectome Computation System (CCS) | A pipeline for brain connectome analysis, providing streamlined workflows for connectivity matrix construction and network-based feature extraction. |

| Scikit-learn (Python Library) | Essential machine learning library providing implemented feature reduction (PCA, ICA, RFE, LASSO) and classification algorithms for modeling. |

| The Nilearn (Python Library) | A Python library for fast and easy statistical learning on neuroimaging data, providing tools for decoding, connectivity, and predictive modeling. |

| Alzheimer’s Disease Neuroimaging Initiative (ADNI) Data | A longitudinal, multi-site public database containing MRI, PET, genetic, and clinical data for AD research, serving as a key benchmark dataset. |

Feature Reduction Technique Decision Logic

Effective feature reduction is not merely a computational step but a critical methodological decision that shapes the translational validity of neuroimaging research. In biomarker discovery, it enhances biological interpretability; in psychiatric classification, it manages extreme dimensionality to reveal system-level dysfunction; and in treatment prediction, it combats overfitting to build generalizable models. The continued integration of domain knowledge with advanced data-driven techniques promises to accelerate the path from neuroimaging signatures to clinical tools.

From Theory to Practice: Implementing Key Feature Reduction Techniques

Neuroimaging research generates high-dimensional datasets from techniques like functional MRI (fMRI), electroencephalography (EEG), and magnetoencephalography (MEG). Feature reduction is paramount to extracting interpretable, biologically relevant signals from this data. This whitepaper, framed within a broader thesis on feature reduction in neuroimaging, provides an in-depth technical guide to two foundational linear techniques: Principal Component Analysis (PCA) and Independent Component Analysis (ICA).

Principal Component Analysis (PCA)

Core Mathematical Framework

PCA is an orthogonal linear transformation that projects data onto a new coordinate system defined by its directions of maximum variance. Given a mean-centered data matrix X (m samples × n features), the covariance matrix is C = XᵀX / (m-1). PCA solves the eigenvalue problem Cvᵢ = λᵢvᵢ, where vᵢ are the eigenvectors (principal components, PCs) and λᵢ the corresponding eigenvalues (variances).

Experimental Protocol for fMRI Dimensionality Reduction

A typical protocol for applying PCA to preprocessed fMRI data:

- Data Preparation: Organize the preprocessed 4D fMRI data (x, y, z, time) into a 2D matrix X of size (t × v), where

tis the number of timepoints andvis the number of voxels. - Mean-Center: Subtract the mean across time from each voxel's time series.

- Covariance Matrix: Compute the temporal covariance matrix C = XᵀX / (t-1) (size v × v). For computational efficiency with v >> t, the dual method using XXᵀ is often employed.

- Eigen-Decomposition: Perform singular value decomposition (SVD) on X: X = USVᵀ. The columns of V are the PC spatial maps, and US represents the component time courses.

- Variance Thresholding: Determine the number of components

kto retain by analyzing the scree plot (eigenvalues λᵢ) to capture a target percentage of total variance (e.g., 95%). - Projection: Create the reduced-dimension data: Z = X Vₖ, where Vₖ contains the first

keigenvectors.

Independent Component Analysis (ICA)

Core Mathematical Framework

ICA is a computational method for separating a multivariate signal into additive, statistically independent non-Gaussian subcomponents. The canonical model is X = AS, where X is the observed data (m × n), A is the mixing matrix (m × k), and S contains the independent sources (k × n). The goal is to estimate the unmixing matrix W (≈ A⁻¹) such that S = WX. Algorithms like FastICA maximize non-Gaussianity (e.g., negentropy) to achieve independence.

Experimental Protocol for Group fMRI Analysis (GIFT Toolbox)

A standard protocol for group ICA in fMRI using the GIFT software:

- Data Reduction (First PCA): Apply subject-specific PCA to reduce individual data dimensionality (e.g., from ~100k voxels to ~100 components).

- Concatenation & Group Reduction: Temporally concatenate all subjects' reduced data and perform a second PCA to reduce the group data to the final number of independent components (e.g., 50).

- ICA Estimation: Apply the ICA algorithm (e.g., Infomax) on the group-reduced data to estimate the unmixing matrix W and the aggregate independent component time courses and spatial maps.

- Back-Reconstruction: Compute subject-specific spatial maps and time courses using the aggregate components and the subject-specific data reduction matrices.

- Component Identification: Statistically evaluate components against noise (e.g., using the fMRIB ICA Utility's "Network Classification" step) to identify meaningful neural networks (e.g., Default Mode Network) and artifacts.

Comparative Analysis & Applications

Table 1: Core Algorithmic Comparison of PCA and ICA

| Feature | PCA | ICA |

|---|---|---|

| Goal | Maximize explained variance, decorrelation. | Maximize statistical independence. |

| Model | X = TVᵀ (Orthogonal transformation). | X = AS (Linear mixture of sources). |

| Constraints | Orthogonality of components. | Statistical independence, non-Gaussianity. |

| Output Order | Components ordered by variance explained. | No inherent order. |

| Gaussianity | Optimal for Gaussian data. | Requires at most one Gaussian source. |

| Primary Use in Neuroimaging | Noise reduction, dimensionality reduction. | Blind source separation, network discovery. |

Table 2: Quantitative Performance in fMRI Denoising (Simulated Data)

| Metric | Raw fMRI | PCA (95% Var) | ICA (30 Comp.) | PCA + ICA |

|---|---|---|---|---|

| Signal-to-Noise Ratio (SNR) | 1.00 (baseline) | 1.85 | 2.40 | 2.95 |

| Task Activation Correlation (r) | 0.65 | 0.78 | 0.92 | 0.94 |

| Computational Time (s) | - | 12.5 | 47.3 | 58.1 |

| Identified Artifact Components | N/A | 0 | 4.2 (mean) | 4.5 (mean) |

Visualization of Workflows

PCA Workflow for fMRI Data Reduction

Group ICA Analysis Pipeline for fMRI

The Scientist's Toolkit

Table 3: Key Research Reagent Solutions for PCA/ICA in Neuroimaging

| Item | Function/Description | Example Tools/Packages |

|---|---|---|

| Data Preprocessing Suite | Prepares raw neuroimaging data for analysis (motion correction, normalization, filtering). | fMRIPrep, SPM, FSL |

| PCA/ICA Implementation Library | Core algorithmic implementations optimized for large datasets. | Scikit-learn (Python), FastICA, EEGLAB (MATLAB) |

| Neuroimaging-Specific ICA Toolbox | Provides validated pipelines for group and single-subject ICA on fMRI/EEG data. | GIFT, MELODIC (FSL), CONN |

| Component Classifier | Automates labeling of ICA components as neural signal or artifact using trained classifiers. | ICLabel (EEGLAB), FMRIB's ICA Utility |

| Statistical Comparison Package | Enables group-level statistical inference on component maps or loadings. | FSL's Randomise, SPM, BrainSMASH (for null models) |

| Visualization & Reporting Software | Visualizes component spatial maps and time courses, and creates publication-quality figures. | BrainNet Viewer, Connectome Workbench, NiBabel & Matplotlib (Python) |

In neuroimaging research, the high dimensionality of data—from voxel-based morphometry (VBM) and functional MRI (fMRI) to connectomics—presents a significant "curse of dimensionality" challenge. Feature reduction techniques are essential to extract biologically and clinically meaningful signals. This whitepaper focuses on two powerful supervised methods: Least Absolute Shrinkage and Selection Operator (LASSO) regression and Recursive Feature Elimination (RFE). These techniques move beyond mere dimensionality reduction to perform targeted discovery, identifying the minimal set of features most predictive of a clinical outcome, such as disease progression or treatment response, thereby enhancing interpretability and translational potential.

Core Methodologies

LASSO (L1 Regularization)

LASSO introduces an L1 penalty term to the linear regression loss function, which shrinks less important coefficients to zero, effectively performing feature selection.

Mathematical Formulation:

Loss = Σ(y_i - ŷ_i)² + λ * Σ|β_j|

where λ is the regularization hyperparameter controlling the sparsity.

Experimental Protocol:

- Data Preparation: Standardize neuroimaging features (e.g., regional volumes, functional connectivity strengths) to zero mean and unit variance. Split data into training, validation, and test sets.

- Model Training: For a grid of

λvalues, fit a LASSO regression model on the training set. - Hyperparameter Tuning: Use k-fold cross-validation on the training/validation data to select the

λvalue that minimizes prediction error (e.g., Mean Squared Error) or maximizes a metric like the area under the curve (AUC). - Feature Selection: Extract the final model using the optimal

λ. Features with non-zero coefficients are selected. - Validation: Assess the predictive performance and stability of the selected feature set on the held-out test set.

Recursive Feature Elimination (RFE)

RFE is a wrapper method that recursively removes the least important features based on a model's coefficients or feature importance scores.

Experimental Protocol:

- Base Model Selection: Choose a core estimator (e.g., linear SVM, Ridge regression).

- Ranking and Elimination: Train the model on the full feature set. Rank features by absolute coefficient magnitude. Remove the lowest-ranking feature(s).

- Recursion: Repeat the training and elimination process on the reduced feature set.

- Optimal Set Determination: Evaluate model performance (via cross-validation) at each step. The feature subset yielding the peak performance is selected.

- Final Evaluation: Train a final model on the optimal subset and evaluate on the independent test set.

Table 1: Comparison of LASSO and RFE for Neuroimaging Feature Selection

| Aspect | LASSO | RFE |

|---|---|---|

| Core Mechanism | Embedded L1 penalty shrinks coefficients to zero. | Wrapper method that recursively removes weak features. |

| Primary Output | Sparse model with a subset of non-zero coefficients. | Ranked list of features and an optimal subset size. |

| Computational Cost | Relatively low, single model fit per λ. | Higher, requires repeated model training. |

| Stability | Can be unstable with highly correlated features. | More stable when combined with stable base estimators. |

| Key Hyperparameter | Regularization strength (λ). | Number of features to select (or to remove per step). |

| Interpretability | High; produces a single, sparse model. | High; provides a clear ranking and final subset. |

Experimental Data & Application

Recent studies highlight the efficacy of these methods. A 2023 study in Alzheimer's & Dementia used LASSO to predict cognitive decline from baseline structural MRI.

Table 2: Summary of LASSO Application in Predicting Cognitive Decline (Simulated Data based on Current Literature)

| Metric | Value |

|---|---|

| Initial Features | 148 cortical/subcortical ROIs from FreeSurfer. |

| Selected Features by LASSO | 18 ROIs (e.g., Hippocampus, Entorhinal Cortex, Middle Temporal Gyrus). |

| Prediction Target | 24-month change in MMSE score. |

| Model Performance (Test Set) | R² = 0.41, p < 0.001 |

| Key Finding | LASSO identified a parsimonious set of neurodegeneration-sensitive regions, enhancing clinical interpretability. |

Protocol for the Cited LASSO Experiment:

- Cohort: 300 participants (100 AD, 100 MCI, 100 HC) from the ADNI database.

- Feature Extraction: T1-weighted MRI processed via FreeSurfer v7.0 to extract regional gray matter volumes.

- Outcome: Longitudinal change in Mini-Mental State Examination (MMSE) score over 24 months.

- LASSO Implementation: Using

scikit-learnin Python, a 10-fold cross-validated LASSO regression was run. Theλminimizing cross-validation error was selected. - Validation: Model performance was evaluated on a held-out 30% test set via R² correlation.

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Tools for Implementing LASSO/RFE in Neuroimaging

| Item / Software | Function | Example / Note |

|---|---|---|

| Neuroimaging Pipelines | Automated feature extraction from raw images. | FreeSurfer (structural), FSL, SPM, CONN (functional/connectivity). |

| Computational Environment | Platform for statistical modeling and machine learning. | Python (scikit-learn, nilearn, PyRadiomics) or R (glmnet, caret). |

| High-Performance Computing (HPC) / Cloud | Manages intensive computational loads for large cohorts. | AWS, Google Cloud, or local HPC clusters. |

| Data & Cohort Repositories | Source of standardized, multi-modal neuroimaging data. | ADNI, UK Biobank, HCP, ABIDE. |

| Visualization Software | Visual inspection of selected features on brain templates. | MRIcroGL, BrainNet Viewer, nilearn plotting functions. |

Visualizing Workflows and Logic

LASSO Feature Selection Workflow for Neuroimaging

Recursive Feature Elimination (RFE) Logic Diagram

Supervised Methods in the Feature Reduction Landscape

Within the broader thesis on feature reduction techniques in neuroimaging research, linear methods like PCA are foundational but often insufficient. The brain's intrinsic organization is highly non-linear, with complex, hierarchical patterns embedded in high-dimensional data from fMRI, EEG, MEG, and genomics. This whitepaper details three pivotal non-linear and manifold learning techniques—t-SNE, UMAP, and Autoencoders—that are critical for visualizing and disentangling these complex brain patterns to advance biomarker discovery and therapeutic development.

Core Algorithms: Technical Foundations

t-Distributed Stochastic Neighbor Embedding (t-SNE): t-SNE minimizes the Kullback-Leibler divergence between two distributions: a probability distribution that measures pairwise similarities of high-dimensional data points, and a similar distribution in the low-dimensional embedding. It uses a heavy-tailed Student-t distribution in the low-dimensional space to alleviate the "crowding problem." It excels at preserving local structure but is computationally intensive and non-parametric.

Uniform Manifold Approximation and Projection (UMAP): UMAP is grounded in Riemannian geometry and algebraic topology. It constructs a fuzzy topological representation of the high-dimensional data (using local manifold approximations and nearest-neighbor graphs) and optimizes a low-dimensional layout to have as similar a fuzzy topological structure as possible via cross-entropy minimization. It is faster than t-SNE and often better preserves global structure.

Autoencoders (AEs): Autoencoders are neural networks trained to reconstruct their input through a bottleneck layer. The encoder ( f(x) ) maps input ( x ) to a latent code ( z ), and the decoder ( g(z) ) reconstructs ( \hat{x} ). The loss function, typically Mean Squared Error ( L(x, \hat{x}) = ||x - g(f(x))||^2 ), forces the model to learn compressed, meaningful representations. Variants like Variational Autoencoders (VAEs) learn a probabilistic latent space.

Quantitative Comparison of Key Algorithmic Properties

Table 1: Comparative Analysis of Non-Linear Dimensionality Reduction Techniques

| Property | t-SNE | UMAP | Autoencoder (Vanilla) |

|---|---|---|---|

| Theoretical Basis | Stochastic neighbor embedding, KL divergence | Riemannian geometry, fuzzy simplicial sets | Neural network, reconstruction loss |

| Global Structure Preservation | Poor | Good | Variable (Architecture dependent) |

| Local Structure Preservation | Excellent | Excellent | Good |

| Scalability | (O(N^2)) computationally, memory-intensive | (O(N^{1.14})) approx., more scalable | (O(N)), scalable with mini-batch training |

| Parametric Mapping | No (Out-of-sample problem) | No (Out-of-sample problem) | Yes (Can embed new data) |

| Typical Neuroimaging Use Case | Static visualization of neural states or clusters | Large-scale cohort visualization, connectome mapping | Feature learning for classification, anomaly detection |

Table 2: Example Performance Metrics on Benchmark Neuroimaging Datasets (HCP, ADNI)*

| Method | Cluster Quality (Silhouette Score) | Run Time (sec, N=10k, dim=100) | Downstream Classification Accuracy (SVM) |

|---|---|---|---|

| PCA | 0.15 | 2.1 | 72.5% |

| t-SNE | 0.68 | 452.7 | N/A |

| UMAP | 0.65 | 32.5 | N/A |

| Denoising Autoencoder | 0.52 | 110.3 (training) | 78.9% |

Experimental Protocols for Neuroimaging Applications

Protocol 1: Visualizing Resting-State fMRI Dynamics with t-SNE

- Data Preprocessing: Using BIDS-formatted data, apply slice-timing correction, realignment, normalization to MNI space, and band-pass filtering (0.01-0.1 Hz). Extract time series from a predefined atlas (e.g., Shen 268-node).

- Feature Construction: Calculate a dynamic functional connectivity matrix using sliding windows (e.g., window=50 TRs, step=1 TR). Vectorize the upper triangle of each correlation matrix to form high-dimensional vectors.

- t-SNE Embedding: Apply PCA (50 components) for initial reduction. Use t-SNE with perplexity=30, learning rate=200, and 1000 iterations. Embeddings are visualized and color-coded by experimental condition or cognitive state.

- Validation: Assess cluster separation using silhouette scores against known task blocks or clinical labels.

Protocol 2: Identifying Disease Subtypes with UMAP on Structural MRI

- Cohort & Features: Use T1-weighted scans from cohorts like ADNI (Alzheimer's Disease) and controls. Extract regional cortical thickness and subcortical volumes using FreeSurfer.

- UMAP Pipeline: Normalize features (z-scoring). Set UMAP parameters:

n_neighbors=15,min_dist=0.1,metric='euclidean'. Project data to 2D/3D. - Density-Based Clustering: Apply HDBSCAN on the UMAP embedding to identify natural clusters. These clusters are hypothesized disease subtypes.

- Biomarker Validation: Perform ANOVA on original imaging features and external biomarkers (e.g., CSF p-tau levels) across identified clusters to validate biological relevance.

Protocol 3: Learning Latent Representations of EEG with Variational Autoencoders

- Input Data: Use raw or time-frequency transformed EEG epochs. For epileptic spike detection, use 1-second epochs centered on spikes and controls.

- VAE Architecture: Encoder: 2 Conv1D layers → Flatten → Dense layers to μ and σ. Latent space dimension (z): 10. Decoder: Dense → Reshape → 2 Conv1DTranspose layers. Loss: Reconstruction (MSE) + KL divergence.

- Training: Train for 100 epochs with Adam optimizer (lr=1e-4). Regularize with a β factor on the KL term.

- Analysis: The latent vectors ( z ) serve as compressed features for a supervised classifier. Anomaly detection is performed by thresholding reconstruction error.

Visualization of Workflows

Title: t-SNE fMRI Analysis Workflow

Title: UMAP for Disease Subtyping

Title: Variational Autoencoder for EEG Representation

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Tools & Libraries for Implementation

| Tool/Reagent | Function | Key Notes |

|---|---|---|

| nilearn (Python) | Statistical learning for neuroimaging data. | Provides high-level abstractions for connecting ML to brain images and atlases. |

| UMAP-learn | Python implementation of UMAP. | Critical for fast, scalable manifold learning on large cohorts. |

| TensorFlow / PyTorch | Deep learning frameworks. | Essential for building and training custom autoencoder architectures. |

| DANDI Archive | Standardized repository for neurophysiology data. | Source for public datasets (e.g., EEG, calcium imaging) to test pipelines. |

| BIDS (Brain Imaging Data Structure) | File organization standard. | Ensures reproducibility and interoperability of preprocessing pipelines. |

| CuML (RAPIDS) | GPU-accelerated ML libraries. | Dramatically speeds up UMAP/t-SNE on very large datasets (N > 100k). |

| HDBCSAN | Clustering algorithm for UMAP embeddings. | Robust to noise, does not require pre-specifying number of clusters. |

This guide presents an integrated technical workflow for neuroimaging data analysis, framed within the critical thesis of feature reduction in neuroimaging research. High-dimensional neuroimaging datasets, such as those from fMRI, sMRI, or DTI, pose significant challenges for machine learning models due to the "curse of dimensionality." Effective feature reduction is not merely a preprocessing step but a foundational component that dictates the success of downstream predictive or diagnostic tasks. This document provides a step-by-step protocol for researchers and drug development professionals to bridge raw data preprocessing with robust machine learning pipelines.

Foundational Preprocessing Pipeline

The initial phase transforms raw, noisy neuroimaging data into a structured, analysis-ready format. This standardization is paramount for reproducibility and valid statistical inference.

Experimental Protocol: Structural MRI (sMRI) Preprocessing with FSL

- Format Conversion: Convert DICOM to NIfTI using

dcm2niix. - Reorientation: Standardize image orientation to LAS (Left-Anterior-Superior) using

fslreorient2std. - Skull Stripping: Remove non-brain tissue using FSL's

BETwith a fractional intensity threshold of 0.5. - Tissue Segmentation: Segment brain into Grey Matter (GM), White Matter (WM), and Cerebrospinal Fluid (CSF) using

FAST. - Spatial Normalization: Register the GM map to a standard space (e.g., MNI152) using

FLIRT(linear) andFNIRT(non-linear). - Smoothing: Apply a Gaussian kernel (e.g., 8mm FWHM) for inter-subject alignment and noise reduction using

fslmaths.

Table 1: Common Preprocessing Software Suites & Metrics

| Software Suite | Primary Use Case | Key Output Metric | Typical Processing Time (per subject) |

|---|---|---|---|

| FSL (v6.0.7) | sMRI/fMRI/DTI preprocessing | Voxel-based Morphometry (VBM) maps, FA maps | 45-90 minutes |

| SPM12 | Statistical parametric mapping, DARTEL | Smooth, normalized tissue probability maps | 60-120 minutes |

| FreeSurfer (v7.4) | Cortical reconstruction & surface-based analysis | Cortical thickness, parcellated regional volumes | 4-10 hours |

| AFNI | fMRI time-series analysis | Beta coefficient maps, % signal change | 30-60 minutes |

Title: Core Neuroimaging Preprocessing Workflow

Feature Extraction & Reduction Techniques

Post-preprocessing, meaningful features are extracted. The high dimensionality (often 100,000s of voxels) necessitates reduction.

Experimental Protocol: Voxel-Based Morphometry (VBM) Feature Reduction

- Feature Masking: Apply a binary brain mask to exclude non-brain voxels, reducing dimensions by ~30%.

- Global Signal Regression: For fMRI, regress out the global mean signal to reduce scanner-related noise.

- Dimensionality Reduction:

- Principal Component Analysis (PCA): Use

scikit-learnPCAto retain components explaining 95% variance. - Independent Component Analysis (ICA): Apply

MELODIC(FSL) to decompose data into 20-50 independent spatial components. - Atlas-Based Parcellation: Use the Harvard-Oxford atlas to average voxel intensities within 96 cortical regions, reducing features to tractable counts.

- Principal Component Analysis (PCA): Use

Table 2: Feature Reduction Technique Comparison

| Technique | Method Category | Key Hyperparameter | Typical Dimensionality Reduction | Preserves Interpretability? |

|---|---|---|---|---|

| PCA | Linear, Unsupervised | # Components / Variance Threshold | 100k+ voxels → 50-500 components | Low (Components are linear combos) |

| ICA | Blind Source Separation | # Independent Components | 100k+ voxels → 20-100 components | Medium (Spatial maps are interpretable) |

| Atlas Parcellation | Region-of-Interest (ROI) | Atlas Choice (e.g., AAL, Desikan-Killiany) | 100k+ voxels → 50-300 ROIs | High (Features map to known anatomy) |

| Autoencoder | Non-linear, Deep Learning | Latent Space Dimension, Network Architecture | 100k+ voxels → 50-500 latent features | Low (Latent space is abstract) |

Integrated Downstream ML Pipeline

Reduced features are fed into machine learning models for classification, regression, or clustering.

Experimental Protocol: Classification of Alzheimer's Disease vs. Controls

- Data Partitioning: Split data (e.g., 150 AD, 150 CN) into training (70%), validation (15%), and held-out test (15%) sets, ensuring stratification by diagnosis.

- Feature Standardization: Standardize training features to zero mean and unit variance using

StandardScaler; apply parameters to validation/test sets. - Model Training & Tuning: Train a linear Support Vector Machine (SVM) with

sklearn.svm.LinearSVC. Optimize the regularization parameterC(log range: 1e-4 to 1e4) via 5-fold cross-validation on the training set. - Validation & Testing: Evaluate the best model on the validation set for final hyperparameter selection, then report final performance only on the held-out test set.

- Performance Metrics: Calculate accuracy, sensitivity, specificity, and Area Under the ROC Curve (AUC).

Title: Integrated Machine Learning Pipeline

The Scientist's Toolkit: Key Research Reagent Solutions

| Reagent / Tool Category | Specific Example / Vendor | Primary Function in Workflow |

|---|---|---|

| Neuroimaging Analysis Suites | FSL (FMRIB, Oxford), FreeSurfer (Martinos Center), SPM12 (Wellcome Centre) | Core platform for data preprocessing, segmentation, and statistical mapping. |

| Programming & ML Environments | Python 3.9+ with nibabel, scikit-learn, nilearn; R with oro.nifti, caret |

Custom scripting, pipeline automation, and implementation of ML models. |

| Computational Resources | High-Performance Compute (HPC) Cluster, NVIDIA GPUs (e.g., A100, V100) | Enables processing of large cohorts and computationally intensive methods (e.g., deep learning). |

| Standardized Brain Atlases | MNI152 Template, Harvard-Oxford Cortical Atlas, AAL (Automated Anatomical Labeling) | Provides spatial reference for normalization and defines ROIs for feature extraction. |

| Data & Format Standards | Brain Imaging Data Structure (BIDS) | Organizes raw data in a consistent, reproducible hierarchy, simplifying pipeline input. |

| Quality Control Visualizers | FSLeyes, FreeView (FreeSurfer), MRIQC | Visual inspection of preprocessing outputs (segmentation, registration) to reject failures. |

1. Introduction: Feature Reduction in Neuroimaging

This case study is presented within the broader thesis on "Introduction to Feature Reduction Techniques in Neuroimaging Research." Functional magnetic resonance imaging (fMRI) data, particularly Blood Oxygen Level Dependent (BOLD) signals, is characterized by extreme high dimensionality (tens to hundreds of thousands of voxels) relative to a small number of observations (trials or subjects). This "curse of dimensionality" leads to overfitting, increased computational cost, and reduced model interpretability. Feature reduction is thus a critical preprocessing step for robust cognitive state decoding, which aims to map brain activity patterns to specific mental states (e.g., viewing faces vs. houses, memory encoding vs. retrieval).

2. Core Feature Reduction Techniques for fMRI

Two primary categories are employed: feature selection and feature extraction.

- Feature Selection: Selects a subset of the original voxels.

- Univariate Methods: Filters voxels based on statistical tests (e.g., ANOVA, t-test) against the experimental condition. Fast but ignores multivariate interactions.

- Multivariate Methods: Uses algorithms like Recursive Feature Elimination (RFE) with a classifier (e.g., SVM) to iteratively remove the least important features.

- Feature Extraction: Creates a new, lower-dimensional set of features from the original data.

- Principal Component Analysis (PCA): Linear transformation that finds orthogonal axes of maximal variance. Does not utilize class labels.

- Linear Discriminant Analysis (LDA): Finds feature projections that maximize separation between classes.

- Independent Component Analysis (ICA): Assumes data is a linear mix of independent source signals (e.g., neural networks, artifacts), which it tries to separate.

3. Experimental Protocol: A Standard Decoding Pipeline

A typical fMRI decoding experiment with feature reduction follows this protocol:

- Data Acquisition & Preprocessing: Collect BOLD fMRI data across task conditions. Preprocess (realignment, normalization, smoothing). Extract beta estimates or time-series per voxel per condition/ trial to form a data matrix

X(nsamples × nvoxels) and label vectory. - Feature Reduction: Apply feature reduction (e.g., PCA, univariate selection) only on the training set within a cross-validation loop to avoid data leakage.

- Model Training & Validation: Train a classifier (e.g., linear Support Vector Machine - SVM) on the reduced training features. Validate on the left-out test set transformed using the same reduction parameters.

- Performance Evaluation: Calculate decoding accuracy (percentage of correctly predicted test samples) averaged across cross-validation folds.

4. Comparative Data from Recent Studies

Table 1: Impact of Feature Reduction on fMRI Decoding Accuracy (Representative Data)

| Study Focus | Dataset | Baseline (Full Feature) Accuracy | Optimal Reduction Method | Reduced Feature Count | Final Accuracy | Key Insight |

|---|---|---|---|---|---|---|

| Face vs. Place Decoding | HCP (7T Retinotopy) | 72.5% (±3.1) | PCA (50 components) | 50 (from ~25k voxels) | 94.2% (±1.8) | PCA removed noise, capturing systemic variance. |

| Memory Encoding Success | fMRI (n=30) | 61.0% (±5.5) | Univariate F-test (top 5%) | ~3k (from ~60k voxels) | 88.0% (±4.2) | Selection highlighted hippocampal & prefrontal contributions. |

| Cognitive Load (n-back) | OpenNeuro ds003452 | 70.8% (±4.3) | RFE-SVM | 1,500 (from ~50k voxels) | 92.5% (±2.5) | RFE identified a distributed frontoparietal network. |

| Resting-State Network ID | ICA-based Study | N/A | ICA (50 components) | 50 (from ~45k voxels) | N/A | ICA components mapped directly to known RSNs (DMN, SAN). |

Table 2: Comparison of Feature Reduction Techniques for fMRI

| Method | Type | Preserves Interpretability | Computational Cost | Use of Label Info | Primary Strength | Primary Weakness |

|---|---|---|---|---|---|---|

| Univariate Filter | Selection | High (voxel-level) | Low | Yes | Simple, fast, interpretable. | Ignores multivariable correlations. |

| RFE | Selection | High (voxel-level) | High | Yes | Optimizes for classifier performance. | Computationally intensive, can overfit. |

| PCA | Extraction | Moderate (component-level) | Medium | No | Maximizes variance, good for denoising. | Components may not be discriminative. |

| LDA | Extraction | Moderate (projection-axis) | Medium | Yes | Maximizes class separation directly. | Prone to overfitting with small samples. |

| ICA | Extraction | Moderate (component-level) | High | No | Can separate neural signals from artifacts. | Order and scale of components are arbitrary. |

5. Visualizing the Workflow and Logic

fMRI Decoding with Feature Reduction Workflow

Choosing a Feature Reduction Method

6. The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Tools for fMRI Feature Reduction & Decoding

| Tool / Reagent | Category | Function in Experiment |

|---|---|---|

| NiLearn (Python) | Software Library | Provides comprehensive tools for fMRI data analysis, feature reduction, and decoding. |

| scikit-learn | Software Library | Industry-standard library implementing PCA, ICA, LDA, RFE, SVMs, and cross-validation. |

| FSL (FMRIB Software Library) | Software Suite | Used for preprocessing (MELODIC for ICA) and general fMRI analysis. |

| SPM (Statistical Parametric Mapping) | Software Suite | Popular MATLAB-based platform for preprocessing, univariate modeling, and ROI extraction. |

| PyMVPA | Software Library | Specifically designed for multivariate pattern analysis of neuroimaging data. |

| High-Performance Computing (HPC) Cluster | Infrastructure | Essential for computationally heavy processes like searchlight analysis with RFE or ICA. |

| Standard Brain Atlases (e.g., Harvard-Oxford, AAL) | Data | Provide anatomical regions for interpreting selected features or component maps. |

| Hyperalignment/Shared Response Model | Advanced Tool | Aligns neural data across subjects in a functional space before feature reduction. |

Avoiding Pitfalls: Best Practices for Optimizing Your Feature Reduction Pipeline

Within the broader thesis on Introduction to feature reduction techniques in neuroimaging research, a paramount and frequently underestimated challenge is data leakage during feature selection. In neuroimaging, where datasets are characterized by high dimensionality (e.g., hundreds of thousands of voxels or connectivity edges) and a relatively small number of participants, the risk of overfitting is severe. Applying feature selection to the entire dataset before partitioning for cross-validation (CV) leaks information about the test samples into the training process. This leads to grossly optimistic performance estimates, invalidating the predictive model and potentially leading to erroneous scientific conclusions or flawed biomarker identification in drug development. This whitepaper details the mechanics of this leakage and mandates the use of Nested Cross-Validation (NCV) as the definitive solution.

The Mechanism of Data Leakage in Standard CV

When feature selection (or any hyperparameter tuning) is performed using the same data partition used for final performance evaluation, information from the 'future' test set leaks into the model-building phase.

Experimental Protocol for Demonstrating Leakage (Simulation):

- Dataset: A synthetic neuroimaging-style dataset with 10,000 features (voxels) and 100 samples (subjects), where only 50 features are truly predictive of a binary label (e.g., patient vs. control).

- Method A (Faulty - Leakage Present):

- Apply a univariate feature selection filter (e.g., ANOVA F-value) to the entire dataset of 100 samples.

- Select the top 100 features.

- Split the data into 80% train / 20% test.

- Train a linear SVM on the 80 training samples (using only the pre-selected 100 features).

- Evaluate accuracy on the 20 test samples.

- Repeat for 100 random train/test splits.

- Method B (Correct - Nested CV):

- For each train/test split:

- Apply the feature selection filter only to the 80 training samples.

- Select the top 100 features based on training data.

- Train the SVM on the 80 training samples (using features selected from them).

- Evaluate on the 20 test samples, applying the same feature selection filter (using the thresholds learned from the training set).

- Repeat for 100 random train/test splits.

- For each train/test split:

- Outcome: Compare the distribution of classification accuracies from Method A and Method B.

Table 1: Comparative Performance Estimates with and without Data Leakage

| Method | Feature Selection Scope | Mean Accuracy (%) | Accuracy Std Dev | Notes |

|---|---|---|---|---|

| Faulty CV (Leakage) | Applied to entire dataset before splitting | 92.4 | ± 3.1 | Optimistically biased, invalid estimate |

| Nested CV | Applied independently within each training fold | 68.7 | ± 7.8 | Realistic, unbiased generalization estimate |

Nested Cross-Validation: The Definitive Protocol

Nested CV rigorously separates the model tuning (including feature selection) from the final performance estimation. It consists of two layers of cross-validation.

Diagram 1: Nested Cross-Validation Workflow

Detailed NCV Experimental Protocol:

- Define Outer Loop (k1-fold CV): Partition the full dataset into k1 folds (e.g., 5 or 10). This loop estimates the generalization performance.

- Iterate Outer Loop: For each outer fold i: a. Outer Test Set: Hold out fold i. b. Outer Training Set: Use the remaining k1-1 folds.

- Define Inner Loop (k2-fold CV): On the Outer Training Set, partition into k2 folds. This loop performs model selection.

- Iterate Inner Loop: For each inner configuration (e.g., number/type of features, model hyperparameters): a. Train the model with that configuration on k2-1 inner training folds. b. Evaluate it on the held-out inner validation fold. c. Average performance across all k2 inner folds.

- Select Best Configuration: Choose the feature set and hyperparameters with the best average inner-loop performance.

- Train Final Outer Model: Retrain the model using the entire Outer Training Set with the best-selected configuration.

- Evaluate on Outer Test Set: Apply the entire fitted pipeline (including the fitted feature selector) to the untouched Outer Test Set (fold i) to obtain a performance score.

- Aggregate: Repeat for all k1 outer folds. The final model performance is the average of all k1 outer test scores.

Neuroimaging Case Study: fMRI Biomarker Discovery

Objective: Identify a sparse set of functional connectivity features that predict treatment response to a novel neuropsychiatric drug.

Protocol:

- Data: Preprocessed resting-state fMRI from 150 participants (75 responders, 75 non-responders). Features are 6,000 correlation coefficients from a pre-defined brain network parcellation.

- Nested CV Setup:

- Outer Loop: 5-fold stratified CV (30 participants per test fold). Output: Unbiased AUC estimate.

- Inner Loop: On the 120-participant outer training set, run a 4-fold CV to optimize:

- Feature Selection Method: L1-SVM (LASSO) penalty parameter C.

- Classifier: Final linear SVM with optimized C.

- Analysis: Within each inner loop, the LASSO selects a subset of connectivity edges. The optimal C is chosen to maximize inner-loop AUC. The final model for that outer fold is trained on all 120 outer training samples with the optimal C.

Table 2: Comparison of Faulty vs. Nested CV in fMRI Case Study

| Evaluation Scheme | Estimated AUC | # Features Selected (Avg) | Risk in Drug Development Context |

|---|---|---|---|

| Single-Train/Test Split with Leaky Selection | 0.91 | ~850 | High; promising biomarker signature is likely non-generalizable, leading to failed Phase II/III trials. |

| 5-Fold CV with Leaky Selection | 0.88 | ~900 | Medium-High; institutional reproducibility crisis. |

| Nested 5x4-Fold CV | 0.73 | ~110 | Low; realistic performance, robust feature set. |

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Tools for Robust Feature Selection Analysis

| Item / Solution | Function / Explanation | Example in Neuroimaging Context |

|---|---|---|

| scikit-learn Pipeline | Encapsulates the sequence of transformers (scaler, selector) and estimator into a single object, preventing leakage during CV. | Pipeline([('scaler', StandardScaler()), ('select', SelectKBest(f_classif)), ('svm', SVC())]) |

| NestedCrossValidator | Custom or library-specific class to formally implement the nested loop structure. | sklearn.model_selection.GridSearchCV (for inner loop) inside an outer cross_val_score. |

| ML Libraries with CV-aware Feature Selection | Libraries that integrate selection within the model (embedded methods) or ensure proper CV partitioning. | sklearn.svm.LinearSVC(penalty='l1') for embedded selection; sklearn.feature_selection.RFECV for recursive elimination with internal CV. |

| High-Performance Computing (HPC) Cluster | NCV is computationally intensive (k1 * k2 models). HPC enables feasible runtime on large neuroimaging datasets. | Running 5x5 NCV on 10,000 features and 1,000 subjects with permutation testing. |

| Permutation Testing Framework | Provides a null distribution for the NCV performance score, testing if the result is better than chance. | Shuffling participant labels 1000x and repeating the entire NCV to obtain a p-value for the true AUC. |

Advanced Considerations and Logical Relationships

The choice of feature selection method interacts with the NCV structure and the model's goal.

Diagram 2: Feature Selection Method Selection Logic

Data leakage during feature selection is a critical vulnerability in neuroimaging research and biomarker development for pharmaceuticals. It produces irreproducible, over-optimistic results that can derail scientific understanding and waste vast resources in drug development pipelines. Nested Cross-Validation is not merely a best practice but an essential methodological requirement for obtaining valid performance estimates and robust feature sets. Its rigorous separation of model tuning and testing is the only way to ensure that predictive neuroimaging signatures generalize to new patient populations, a prerequisite for translational impact.

Feature reduction is a critical preprocessing step in neuroimaging research, where datasets are characteristically high-dimensional (e.g., voxel-based measures from fMRI, structural MRI, or PET). The overarching thesis, "Introduction to feature reduction techniques in neuroimaging research," posits that effective reduction is not about mere data compression but about isolating biologically and clinically relevant signals from noise. This guide addresses the central practical challenge within that thesis: selecting the number of components that optimally balances the simplification afforded by dimensionality reduction against the unacceptable loss of predictive or explanatory information.

Core Concepts: Variance, Reconstruction Error, and Interpretability

The choice of components is fundamentally an optimization problem. For linear techniques like Principal Component Analysis (PCA), the primary metric is cumulative explained variance. Non-linear methods, such as t-Distributed Stochastic Neighbor Embedding (t-SNE) or Uniform Manifold Approximation and Projection (UMAP), optimize different cost functions related to neighborhood preservation. The reconstruction error quantifies the fidelity of the reduced data when projected back to the original space. In a neuroimaging context, interpretability is paramount; components must align with plausible neurobiological or cognitive constructs.

Quantitative Metrics for Component Selection

The following table summarizes the key quantitative metrics used to evaluate the trade-off for different component counts (N).

Table 1: Quantitative Metrics for Component Selection in Dimensionality Reduction

| Metric | Formula / Description | Ideal Outcome | Common Threshold in Neuroimaging | ||||

|---|---|---|---|---|---|---|---|

| Cumulative Explained Variance (PCA) | $\sum{i=1}^{N} \lambdai / \sum{i=1}^{P} \lambdai$, where $\lambda$ are eigenvalues. | Rapid initial increase, then asymptote. | N is chosen where curve "elbows" (70-95% typical). | ||||

| Scree Plot Slope | Plot of eigenvalues ($\lambda_i$) in descending order. | Point where slope sharply decreases ("elbow"). | Component N at the elbow. | ||||

| Mean Squared Reconstruction Error | $ | X - X_{reconstructed} | ^2_F / \text{samples}$ | Minimized, but plateaus with increasing N. | N chosen at error plateau. | ||

| Kaiser-Guttman Criterion | Retain components with eigenvalues $\lambda_i > 1$. | Simple heuristic for standardized data. | Often considered a lower bound. | ||||

| Parallel Analysis | Retain components where $\lambda{data} > \lambda{simulated}$ from random data. | Controls for sampling noise. | Robust, widely recommended threshold. | ||||

| Predictive Accuracy (Wrapper Method) | Model performance (e.g., SVM accuracy) on held-out test set using N components. | Performance peaks at optimal N. | N at maximum cross-validated accuracy. |

Experimental Protocols for Evaluation

To rigorously choose N, researchers should implement the following protocol, integrating multiple metrics.

Protocol 1: Cross-Validated Variance & Parallel Analysis for PCA