Bayesian Statistics for Small Neurochemical Studies: A Practical Guide for Neuroscience Research and Drug Development

This article provides a comprehensive guide for biomedical researchers on applying Bayesian statistical methods to small-scale neurochemical studies, a common yet challenging scenario in neuroscience and drug development.

Abstract

This article provides a comprehensive guide for biomedical researchers on applying Bayesian statistical methods to small-scale neurochemical studies, a common yet challenging scenario in neuroscience and drug development. It addresses the limitations of traditional frequentist approaches when sample sizes are limited, such as low statistical power and an inability to quantify evidence for the null hypothesis. The content systematically explores the foundational philosophy of Bayesian inference, demonstrates practical workflows for model specification, prior selection, and computation using modern software tools like Stan and JAGS, and offers solutions for common challenges including prior sensitivity and model validation. Furthermore, it compares Bayesian and frequentist results in neurochemical contexts and discusses how Bayesian methods enhance the robustness and interpretability of findings from pilot studies, preclinical trials, and exploratory biomarker research. The goal is to equip researchers with the knowledge to make more informative inferences from limited data, thereby accelerating discovery and improving decision-making in translational neuroscience.

Why Bayesian Statistics? Overcoming Small Sample Challenges in Neurochemical Research

This whitepaper addresses the critical issue of statistical power in small-sample (small-N) neurochemistry studies, a prevalent challenge in exploratory neuroscience and early-stage psychopharmacology research. The inherent difficulty of obtaining large datasets—due to the complexity, cost, and ethical constraints of in vivo neurochemical measurements—often leads to studies with low statistical power under traditional frequentist frameworks. This results in a high risk of both Type II errors (missing true effects) and, paradoxically, inflated Type I errors when coupled with questionable research practices.

Framed within a broader thesis on Bayesian statistics for neurochemical research, this guide argues for a paradigm shift. Bayesian methods offer a coherent framework for quantifying evidence, incorporating prior knowledge from related literature or pilot studies, and making probabilistic statements about parameters of interest. This is particularly valuable for small-N designs, where Bayesian approaches can provide more nuanced interpretations than simple binary "significant/non-significant" outcomes, ultimately leading to more cumulative and informative science.

The Core Statistical Problem: Power in Small-N Designs

In frequentist statistics, power is the probability of correctly rejecting a null hypothesis when it is false. Power depends on sample size (N), effect size, and alpha level. In neurochemistry, typical effect sizes for novel manipulations can be modest, and N is often limited.

Table 1: Statistical Power for Common Small-N Neurochemistry Study Designs

| Experimental Design | Typical N (per group) | Assumed Cohen's d | Frequentist Power (α=0.05) | Bayesian Alternative |
|---|---|---|---|---|
| Microdialysis (Rat, paired) | 8-12 | 0.8 | ~0.30 - 0.50 | Bayes Factor (BF) or HDI + ROPE |
| Voltammetry (Mouse) | 6-10 | 1.0 | ~0.35 - 0.60 | Posterior Distribution Comparison |
| Brain Tissue HPLC (Human post-mortem) | 10-15 (total) | 0.7 | ~0.25 - 0.40 | Hierarchical Bayesian Model |
| PET Radiotracer Binding (Pilot) | 5-8 | 1.2 | ~0.40 - 0.65 | Prior-Informed Bayesian Estimation |

Note: Power calculations based on two-sample t-test approximations. Cohen's d estimates are illustrative and vary by specific model and analyte.

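The table shows how low power is in standard designs. Reaching 80% power at d=0.8 requires approximately 26 subjects per group (two-sided α=0.05), which is frequently unattainable. Underpowered studies of this kind miss true effects and, when they do reach significance, tend to overestimate effect sizes, which makes the resulting literature unreliable.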
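The power figures above can be approximated with a few lines of Python. This is a minimal sketch using a normal approximation to the two-sample t-test; the function names are illustrative, not from any package. Because the approximation ignores the heavier tails of the t distribution, it is slightly optimistic for small N and returns about 25 per group for d=0.8, where an exact t-based calculation gives about 26.

```python
from math import ceil, sqrt
from statistics import NormalDist

_STD_NORMAL = NormalDist()  # standard normal, mean 0 and SD 1


def approx_power(d, n_per_group, alpha=0.05):
    """Approximate power of a two-sided, two-sample test (normal approximation)."""
    z_crit = _STD_NORMAL.inv_cdf(1 - alpha / 2)
    shift = d * sqrt(n_per_group / 2)  # expected test statistic for equal group sizes
    upper_tail = 1 - _STD_NORMAL.cdf(z_crit - shift)
    lower_tail = _STD_NORMAL.cdf(-z_crit - shift)
    return upper_tail + lower_tail


def approx_n_per_group(d, power=0.80, alpha=0.05):
    """Approximate per-group sample size needed to reach the requested power."""
    z_crit = _STD_NORMAL.inv_cdf(1 - alpha / 2)
    z_power = _STD_NORMAL.inv_cdf(power)
    return ceil(2 * ((z_crit + z_power) / d) ** 2)


if __name__ == "__main__":
    for n in (8, 12, 26):
        print(f"d=0.8, n={n} per group: power ~ {approx_power(0.8, n):.2f}")
    print("n per group for 80% power at d=0.8:", approx_n_per_group(0.8))
```

For planning a real study, a t-based or simulation-based calculation is preferable to this approximation.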

Bayesian Approaches for Small-N Neurochemical Data

Key Concepts

- Prior Distribution: Encapsulates existing knowledge about a parameter (e.g., expected dopamine increase from a known drug).

- Likelihood: The probability of the observed data given the parameters.

- Posterior Distribution: The updated belief about the parameters after observing the data. This is the core output for inference.

- Bayes Factor (BF): A ratio of the probability of the data under two competing hypotheses (e.g., H1: an effect exists vs. H0: no effect). By common convention, BF10 > 3 is read as moderate evidence for H1 and BF10 < 1/3 as moderate evidence for H0.

- Highest Density Interval (HDI) + ROPE: The posterior distribution's most credible parameter values are summarized by an HDI (e.g., 95%). A Region of Practical Equivalence (ROPE) is defined as a range of effect sizes considered trivial. Inference is based on the HDI's relation to the ROPE.

Experimental Protocol: Bayesian Analysis of a Microdialysis Study

Aim: To assess the effect of Novel Drug X on extracellular prefrontal cortex glutamate in rats (N=9 treatment, N=9 vehicle).

Step-by-Step Protocol:

- Data Collection: Perform in vivo microdialysis. Collect baseline dialysate for 60min, administer Drug X or vehicle, and collect samples every 20min for 180min. Analyze samples via HPLC-MS/MS.

- Data Preprocessing: Express glutamate levels as percent change from mean baseline. Calculate area under the curve (AUC) for the 0-180min period for each subject.

- Define Statistical Model: Use a linear mixed model, `AUC ~ group + (1|batch)`, where `group` is the fixed effect and `batch` is a random effect for experimental day.

- Specify Priors:

  - Intercept (Vehicle Mean): Normal(μ=100%, σ=15%) – based on historical vehicle data.

  - Effect of Drug X: Normal(μ=120%, σ=20%) on the Drug X group mean (about 20 percentage points above vehicle) – a weakly informative prior suggesting an increase, based on related drug classes.

  - Sigma (Residual SD): Half-Cauchy(0, 10) – a weakly informative prior on the residual standard deviation.

- Compute Posterior: Use Markov Chain Monte Carlo (MCMC) sampling (e.g., `brms` in R, or `PyMC3` in Python) to generate the posterior distribution for the group difference.

- Inference & Interpretation:

  - Plot the posterior distribution for the drug effect.

  - Calculate the 95% HDI. If the entire 95% HDI falls above the upper limit of a ROPE defined as [-10%, +10%] (a trivial change), conclude a practical effect.

  - Alternatively, compute a Bayes Factor comparing the model including the `group` effect to one without it.
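The HDI + ROPE decision in the final step can be sketched directly on posterior draws. The example below is illustrative only: the draws are simulated from a normal distribution as a stand-in for real MCMC output, and the helper names `hdi` and `rope_decision` are hypothetical rather than taken from any package.

```python
import random
from math import ceil


def hdi(samples, mass=0.95):
    """Shortest interval that contains the requested share of the samples."""
    ordered = sorted(samples)
    n = len(ordered)
    n_in = ceil(mass * n)  # number of draws the interval must cover
    start = min(range(n - n_in + 1),
                key=lambda i: ordered[i + n_in - 1] - ordered[i])
    return ordered[start], ordered[start + n_in - 1]


def rope_decision(samples, rope=(-10.0, 10.0), mass=0.95):
    """Compare the HDI of the group difference with the ROPE."""
    low, high = hdi(samples, mass)
    if low > rope[1] or high < rope[0]:
        return "HDI outside ROPE: practically meaningful effect"
    if low >= rope[0] and high <= rope[1]:
        return "HDI inside ROPE: practically equivalent to no effect"
    return "HDI overlaps ROPE: undecided"


# Hypothetical posterior draws of the Drug X minus vehicle difference
# (percentage points); real draws would come from the fitted model.
rng = random.Random(1)
draws = [rng.gauss(25.0, 6.0) for _ in range(4000)]

if __name__ == "__main__":
    print("95% HDI:", hdi(draws))
    print(rope_decision(draws))
```

The "undecided" outcome is a legitimate result in small-N work: it signals that more data are needed rather than forcing a binary conclusion.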

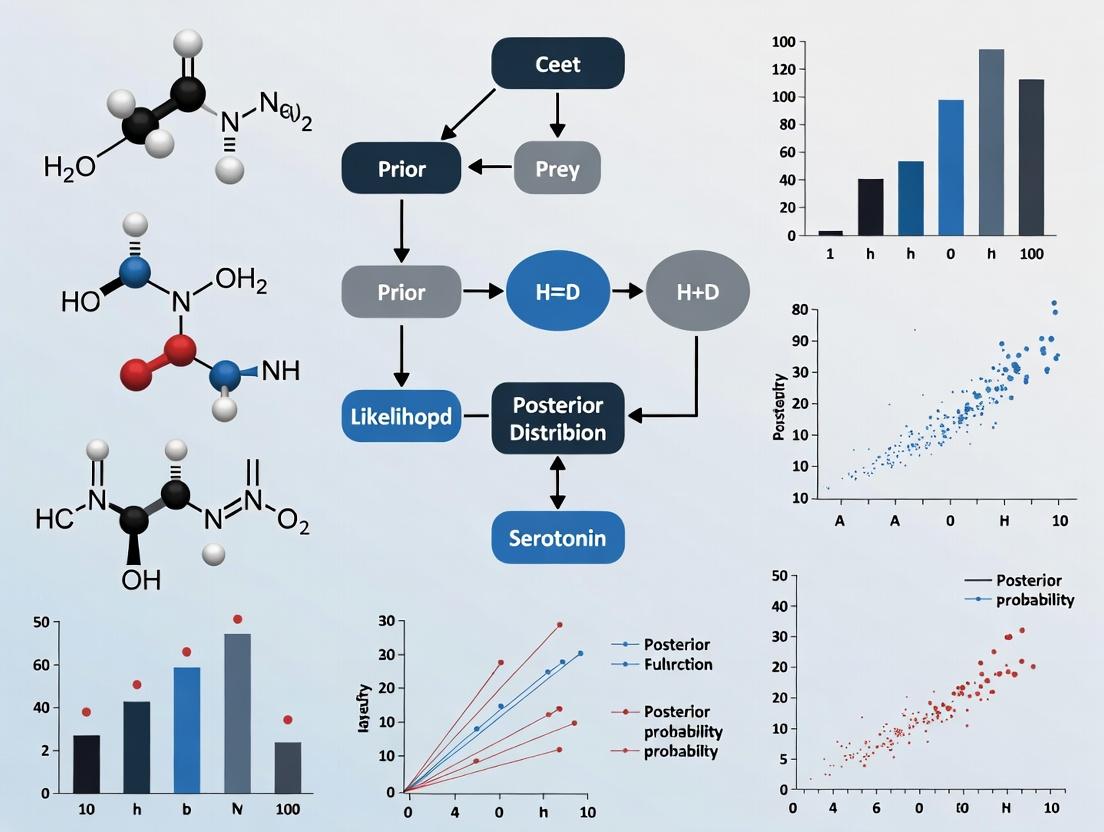

Bayesian Inference Workflow

Signaling Pathway & Experimental Visualization

Neurochemistry Study Workflow

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Research Reagents & Materials for Small-N Neurochemistry

| Item & Example Product | Primary Function in Small-N Studies |
|---|---|
| CMA 7 Microdialysis Probes (or similar) | In vivo recovery of extracellular fluid from specific brain regions. Critical for longitudinal chemical measurement in a single subject, increasing within-subject power. |
| PBS-based Perfusion Fluid (aCSF) | Isotonic solution for microdialysis perfusion. Its precise ionic composition is vital for maintaining tissue viability and obtaining physiologically relevant measurements. |
| Enzymatic Assay Kits (e.g., Glutamate Assay Kit, Abcam ab83389) | High-sensitivity fluorometric/colorimetric detection of specific analytes in low-volume dialysate or tissue homogenate, enabling measurement of low-abundance targets. |
| LC-MS/MS Grade Solvents & Standards (e.g., Cerilliant Certified Reference Standards) | Essential for mass spectrometry quantification. Highest purity ensures low background noise and accurate calibration, maximizing signal-to-noise in precious small-N samples. |
| C18 Solid-Phase Extraction (SPE) Columns | Clean up and concentrate neurochemicals from biological samples prior to analysis, improving detectability and assay precision. |
| Brain Matrix (Roboz) & Precision Punch Tools | Allow for highly reproducible dissection of sub-regions (e.g., nucleus accumbens core vs. shell) from cryopreserved brain tissue, reducing anatomical variability. |
| Multiplex Immunoassay Panels (e.g., MILLIPLEX MAP Magnetic Bead Panels) | Simultaneously quantify multiple neuropeptides or signaling phosphoproteins from a single small tissue lysate, maximizing information yield per subject. |
| Slow-Release Drug Formulations (e.g., osmotic minipumps) | Enable stable, chronic drug delivery in rodents, reducing inter-subject variability caused by injection stress and pharmacokinetic fluctuations compared to acute dosing. |

This whitepaper details the core computational concepts of Bayesian statistics as applied to small-sample neurochemical studies, a common scenario in preclinical drug development and neuroscience research. The broader thesis argues that a Bayesian framework is uniquely suited for this domain due to its ability to formally incorporate existing knowledge (e.g., from animal models or prior compounds) and provide direct probabilistic answers to research questions (e.g., "What is the probability that this new neurotransmitter analog reduces inflammatory markers by at least 20%?"). This approach contrasts with frequentist methods that are often underpowered and less intuitive in small-n studies typical of exploratory neurochemical work.

The Bayesian Triad: Definitions and Neurochemical Interpretations

Prior Probability Distribution (P(θ))

The prior represents pre-existing belief or knowledge about a parameter θ before seeing the new experimental data. In neurochemical studies, this often derives from historical control data, pilot studies, or literature on similar compounds.

- Informative Prior: Based on strong previous evidence. Example: For a study on a novel D2 receptor partial agonist, a prior for binding affinity (Ki) could be centered on 5 nM with narrow uncertainty, based on known structural analogs.

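- Weakly Informative or Regularizing Prior: Used to constrain parameters to plausible ranges without strongly influencing results. Example: A normal prior with mean 0 and an SD of about 1 for a log-fold change in cytokine concentration, which keeps estimates biologically plausible. A much wider prior (e.g., an SD of 50 on the log scale) would admit implausibly large fold changes and provide essentially no regularization.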

- Diffuse/Vague Prior: Expresses substantial uncertainty. Used cautiously in small-n studies as it provides little stabilization.

Likelihood Function (P(Data | θ))

The likelihood describes the probability of observing the collected experimental data given a specific parameter value θ. It encodes the assumptions of the statistical model and the measurement process.

- Neurochemical Example: Measuring glutamate concentration via microdialysis yields continuous, positive values. A common likelihood is the Normal distribution (for log-transformed values) or a Gamma distribution, where θ represents the true underlying mean concentration and the distribution's spread captures technical and biological variability.

Posterior Probability Distribution (P(θ | Data))

The posterior is the ultimate output of Bayesian analysis. It combines the prior and the likelihood (via Bayes' Theorem) to yield an updated probability distribution for the parameter θ after considering the new data.

- Interpretation: The full posterior distribution can be summarized by its median (a point estimate) and a 95% Credible Interval (CrI), which has a direct probabilistic interpretation: "There is a 95% probability that the true parameter value lies within this interval," given the prior and the data.

Bayes' Theorem: P(θ | Data) = [P(Data | θ) * P(θ)] / P(Data)
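When the likelihood is Normal with a known standard deviation and the prior on the mean is also Normal, Bayes' Theorem has a closed-form solution, which makes the prior-to-posterior update easy to inspect. The sketch below assumes exactly that simplified case; the function name and the numbers are hypothetical and chosen only for illustration.

```python
from math import sqrt


def normal_update(prior_mean, prior_sd, sample_mean, sigma, n):
    """Posterior mean and SD for a Normal mean when sigma is treated as known."""
    prior_precision = 1.0 / prior_sd ** 2
    data_precision = n / sigma ** 2
    post_var = 1.0 / (prior_precision + data_precision)
    # The posterior mean is a precision-weighted average of prior mean and sample mean.
    post_mean = post_var * (prior_precision * prior_mean + data_precision * sample_mean)
    return post_mean, sqrt(post_var)


# Hypothetical inputs: prior centered at 100% of baseline (SD 15), and nine
# animals with a sample mean of 130% and an assumed known SD of 20.
post_mean, post_sd = normal_update(100.0, 15.0, 130.0, 20.0, 9)

if __name__ == "__main__":
    print(f"posterior mean {post_mean:.1f}, posterior SD {post_sd:.1f}")
```

The posterior mean always lies between the prior mean and the sample mean, and it moves toward the data as n grows or as the prior widens. In practice σ is unknown and is estimated jointly with the mean.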

A Worked Neurochemical Example: Drug Effect on Striatal Dopamine

Research Question: What is the estimated percent change in striatal dopamine release induced by a new candidate drug (Drug X) compared to saline control, based on a small in vivo voltammetry study?

Experimental Protocol (Summarized):

- Subjects: 8 Sprague-Dawley rats, randomly assigned to Saline (n=4) or Drug X (n=4).

- Surgery: Implant a carbon-fiber microelectrode into the dorsolateral striatum.

- Measurement: Use fast-scan cyclic voltammetry (FSCV) to measure evoked dopamine release every 5 minutes.

- Intervention: After 30-min baseline, administer Saline or Drug X (5 mg/kg, i.p.).

- Data Extraction: Calculate the average peak dopamine concentration (μM) for the 30-min post-injection period as a percent of the baseline average for each subject.

- Outcome: The raw data (percent of baseline) for the Drug X group: [125%, 118%, 135%, 128%].

Bayesian Analysis Setup:

- Parameter of interest (θ): True mean percent change from baseline in the population.

- Likelihood: Assume observed data are Normally distributed around θ with an unknown standard deviation σ. We estimate both parameters.

- Priors:

- For θ (mean % change): A Normal(100, 10) prior, centered at no change (100%), with SD=10%, encoding a belief that large effects (>120% or <80%) are a priori less probable.

- For σ (std dev): A Half-Cauchy(0, 5) prior, weakly constraining the within-group variability to be positive and not excessively large.

Computational Inference: Using Markov Chain Monte Carlo (MCMC) sampling (e.g., via Stan or PyMC), we obtain the joint posterior distribution for θ and σ.

Table 1: Posterior Distribution Summaries for Dopamine % Change

| Parameter | Prior Distribution | Posterior Median | 95% Credible Interval | Probability θ > 115% |
|---|---|---|---|---|
| Mean % Change (θ) | Normal(100, 10) | 121.5% | (112.8%, 130.1%) | 0.89 |
| Within-Group SD (σ) | Half-Cauchy(0, 5) | 6.8% | (3.5%, 15.9%) | n/a |

Interpretation: Given the prior and the data, there is an 89% probability that Drug X increases dopamine release by more than 15% above baseline. The posterior median corresponds to a 21.5% increase, pulled below the raw sample mean of 126.5% because the prior is centered on no change.
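For a two-parameter model like this one, the posterior can also be approximated on a grid without any MCMC software, which is a useful way to see what the sampler is estimating. The sketch below uses the four Drug X observations and the priors stated above; the grid ranges and spacing are arbitrary choices made for this illustration, and its output is an approximation that need not match the MCMC summaries in the table to the last digit.

```python
import math

data = [125.0, 118.0, 135.0, 128.0]  # Drug X group, percent of baseline


def log_normal(x, mu, sd):
    """Log density of Normal(mu, sd) at x, up to an additive constant."""
    return -0.5 * ((x - mu) / sd) ** 2 - math.log(sd)


def log_half_cauchy(x, scale):
    """Log density of Half-Cauchy(0, scale) at x > 0, up to a constant."""
    return -math.log1p((x / scale) ** 2)


thetas = [80.0 + 0.5 * i for i in range(161)]  # 80% .. 160% (assumed grid)
sigmas = [0.5 + 0.5 * j for j in range(120)]   # 0.5% .. 60% (assumed grid)

# Unnormalized log posterior = log prior(theta) + log prior(sigma) + log likelihood.
log_post = []
for theta in thetas:
    row = []
    for sigma in sigmas:
        lp = log_normal(theta, 100.0, 10.0) + log_half_cauchy(sigma, 5.0)
        lp += sum(log_normal(y, theta, sigma) for y in data)
        row.append(lp)
    log_post.append(row)

# Marginalize over sigma and normalize to get the posterior of theta.
peak = max(max(row) for row in log_post)
weights = [sum(math.exp(lp - peak) for lp in row) for row in log_post]
total = sum(weights)
theta_probs = [w / total for w in weights]


def theta_quantile(q):
    """Smallest grid value whose cumulative posterior probability reaches q."""
    running = 0.0
    for theta, p in zip(thetas, theta_probs):
        running += p
        if running >= q:
            return theta
    return thetas[-1]


posterior_median = theta_quantile(0.5)
prob_above_115 = sum(p for theta, p in zip(thetas, theta_probs) if theta > 115.0)

if __name__ == "__main__":
    print("posterior median of theta:", posterior_median)
    print("95% interval:", theta_quantile(0.025), theta_quantile(0.975))
    print("P(theta > 115):", round(prob_above_115, 3))
```

Grid approximation scales poorly beyond a handful of parameters, which is why the hierarchical and non-linear models discussed elsewhere in this guide rely on MCMC.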

Visualizing the Bayesian Workflow in Neurochemistry

Diagram 1: Bayesian Inference Workflow for Neurochemical Data.

The Scientist's Toolkit: Essential Research Reagents & Materials

Table 2: Key Research Reagent Solutions for Featured Neurochemical Experiments

| Item | Function in Context | Example/Notes |
|---|---|---|
| Carbon-Fiber Microelectrode | Sensing element for in vivo voltammetry; detects electroactive neurotransmitters (DA, NE, 5-HT) via oxidation/reduction. | ~7 μm diameter, cylindrical. |
| Artificial Cerebrospinal Fluid (aCSF) | Physiological perfusate for microdialysis probes; maintains ionic homeostasis at the tissue interface. | Contains NaCl, KCl, NaHCO3, MgCl2, CaCl2 at physiological pH. |
| Enzyme-Linked Immunosorbent Assay (ELISA) Kit | Quantifies specific neurochemicals (BDNF, cytokines, Aβ peptides) from brain homogenate or dialysate. | High sensitivity (pg/mL). |
| Liquid Chromatography (HPLC/UHPLC) Column | Stationary phase for separating neurochemicals in a mixture prior to detection (e.g., electrochemical, fluorescent). | C18 reverse-phase column common for monoamines. |
| Internal Standard (e.g., Dihydroxybenzylamine) | Added to tissue samples or dialysate prior to processing; corrects for recovery variability in HPLC-ECD. | Structurally similar to the analyte, with similar extraction properties. |
| Receptor-Specific Radioligand (e.g., [³H]SCH-23390) | Binds with high affinity to target receptor (e.g., D1 receptor); used in autoradiography/binding assays for receptor density. | Tritiated or iodinated forms; requires scintillation counting. |
| Phospho-Specific Antibody | Detects activation state of signaling proteins (e.g., pERK, pCREB) via Western blot or IHC in drug-treated brain slices. | Validated for use in rodent tissue. |

In the context of small-scale neurochemical research—such as studies measuring neurotransmitter release, receptor binding affinity, or drug effects in specific brain regions—traditional frequentist p-values provide a limited and often misinterpreted measure of evidence. This guide advocates for a shift towards Bayesian methods, which allow direct quantification of the probability for both an alternative hypothesis (H₁: an effect exists) and a null hypothesis (H₀: no effect). This is critical in early-stage drug development where sample sizes are constrained by cost, ethical considerations, and tissue availability.

The Limitations of the p-Value in Neurochemical Research

A p-value represents the probability of observing data as extreme as, or more extreme than, the actual data, assuming the null hypothesis is true: P(data | H₀). It does not provide P(H₀ | data) or P(H₁ | data). In a neurochemical assay with n=5-10 per group (common due to labor-intensive microdialysis or HPLC procedures), p-values are highly unstable and prone to false positives and negatives.

Bayesian Foundations: From Likelihoods to Posterior Probabilities

Bayes' Theorem provides the mechanism to invert the conditional probability: P(H₁ | data) = [P(data | H₁) * P(H₁)] / P(data)

Where:

- P(H₁ | data) is the posterior probability of the alternative hypothesis.

- P(data | H₁) is the likelihood of the data under H₁ (informed by the effect size distribution).

- P(H₁) is the prior probability of H₁.

- P(data) is the total probability of the data.

An equivalent calculation can be made for P(H₀ | data). The ratio of these posteriors gives the posterior odds, directly quantifying the evidence.

Key Bayesian Metrics for Quantifying Evidence

Table 1: Comparison of Frequentist and Bayesian Evidence Metrics

| Metric | Formula / Principle | Interpretation in Neurochemical Context |
|---|---|---|
| p-value | P(Data ≥ Observed \| H₀) | Probability of data at least as extreme as those observed, assuming no drug effect. A low value suggests inconsistency with the null. |
| Bayes Factor (BF₁₀) | BF₁₀ = P(Data \| H₁) / P(Data \| H₀) | Relative support for H₁ vs H₀ from the data. BF=10 means the data are 10x more likely under H₁. |
| Posterior Probability | P(H₁ \| Data) = (BF₁₀ * Prior Odds) / (1 + BF₁₀ * Prior Odds) | Direct probability that the drug effect is real, given the data and prior belief. |
| Posterior Effect Size | Posterior distribution of Δ (e.g., % change in dopamine) | Provides a credible interval (e.g., 95% CrI) for the plausible magnitude of the neurochemical effect. |

Table 2: Interpreting Bayes Factors (Lee & Wagenmakers, 2013)

| BF₁₀ | Evidence Category | P(H₁ \| Data) with Prior Odds 1:1 |
|---|---|---|
| > 100 | Extreme for H₁ | > 0.99 |
| 30 – 100 | Very Strong for H₁ | 0.97 – 0.99 |
| 10 – 30 | Strong for H₁ | 0.91 – 0.97 |
| 3 – 10 | Moderate for H₁ | 0.75 – 0.91 |
| 1 – 3 | Anecdotal for H₁ | 0.5 – 0.75 |
| 1 | No evidence | 0.5 |
| 1/3 – 1 | Anecdotal for H₀ | 0.25 – 0.5 |
| 1/10 – 1/3 | Moderate for H₀ | 0.09 – 0.25 |
| 1/30 – 1/10 | Strong for H₀ | 0.03 – 0.09 |
| 1/100 – 1/30 | Very Strong for H₀ | 0.01 – 0.03 |
| < 1/100 | Extreme for H₀ | < 0.01 |
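The right-hand column of the table follows from the posterior-odds relation: posterior odds equal the Bayes factor times the prior odds. A small helper (the function name is illustrative) makes it easy to recompute that column for prior odds other than 1:1.

```python
def posterior_prob_h1(bf10, prior_odds=1.0):
    """Convert a Bayes factor and prior odds (H1:H0) into P(H1 | Data)."""
    posterior_odds = bf10 * prior_odds
    return posterior_odds / (1.0 + posterior_odds)


if __name__ == "__main__":
    for bf in (1 / 100, 1 / 30, 1 / 10, 1 / 3, 1, 3, 10, 30, 100):
        print(f"BF10 = {bf:8.3f}  ->  P(H1 | Data) = {posterior_prob_h1(bf):.3f}")
    # A skeptical prior (1:4 against H1) lowers the posterior for the same BF.
    print(posterior_prob_h1(10, prior_odds=0.25))
```

With skeptical prior odds, as in the last line, the same Bayes factor yields a noticeably lower posterior probability, which is why the prior odds should always be reported alongside P(H₁ | Data).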

Experimental Protocol & Analysis Workflow

This protocol outlines a Bayesian re-analysis of a typical in vivo microdialysis experiment measuring striatal dopamine release in response to a novel compound.

A. Experimental Design (Original Study)

- Subjects: 16 male Sprague-Dawley rats, randomly assigned to Vehicle (n=8) and Drug (n=8) groups.

- Surgery: Implant microdialysis guide cannula targeting striatum.

- Microdialysis: Post-recovery, perfuse with artificial cerebrospinal fluid (aCSF). Collect baseline samples every 20 min for 1 hour.

- Intervention: Administer drug or vehicle subcutaneously.

- Sampling: Continue collecting dialysate samples for 3 hours post-injection.

- Analysis: Analyze samples via HPLC-ECD. Express data as % change from baseline.

B. Bayesian Re-analysis Protocol

- Define Priors: Elicit prior belief based on historical data for similar drug classes. For a novel mechanism, use a skeptical or weakly informative prior (e.g., Cauchy(0, 0.707) for standardized effect size δ).

- Specify Models: Define H₀ (δ = 0) and H₁ (δ ≠ 0, with prior distribution).

- Compute Bayes Factor: Use the `BayesFactor` package in R or JASP software. Input group data (mean, SD, n) for the key time point (e.g., 60 min post-injection).

- Calculate Posterior Distribution: Using the `brms` package, model the full time-course data to estimate the posterior distribution of the drug effect at all time points and derive 95% credible intervals.

- Compute Posterior Probability: Convert BF and prior odds (e.g., 1:1) to P(H₁ | Data).
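To make the Bayes factor step less of a black box, the sketch below computes a default two-sample Bayes factor from a t statistic by numerical integration, assuming the Jeffreys-Zellner-Siow (JZS) formulation in which a Cauchy(0, r) prior on δ is written as a Normal prior whose variance g follows an inverse-gamma distribution. The function name, integration range, and step count are assumptions of this illustration; results for real analyses should be cross-checked against `BayesFactor` or JASP.

```python
import math


def jzs_bf10(t, n1, n2, r=0.707, steps=4000):
    """Approximate BF10 for a two-sample t statistic under a Cauchy(0, r) prior on delta."""
    nu = n1 + n2 - 2                # degrees of freedom
    n_eff = n1 * n2 / (n1 + n2)     # effective sample size

    def integrand(u):
        g = math.exp(u)             # integrate over u = log(g) for numerical stability
        prior_g = r / math.sqrt(2 * math.pi) * g ** -1.5 * math.exp(-r * r / (2 * g))
        scale = 1 + n_eff * g
        like = scale ** -0.5 * (1 + t * t / (scale * nu)) ** (-(nu + 1) / 2)
        return like * prior_g * g   # trailing g is the Jacobian of g = exp(u)

    lo, hi = -30.0, 30.0
    h = (hi - lo) / steps
    area = 0.5 * (integrand(lo) + integrand(hi))
    area += sum(integrand(lo + k * h) for k in range(1, steps))
    marginal_h1 = area * h          # trapezoid rule
    marginal_h0 = (1 + t * t / nu) ** (-(nu + 1) / 2)
    return marginal_h1 / marginal_h0


if __name__ == "__main__":
    # Hypothetical t statistics for n = 8 per group.
    for t_stat in (0.0, 1.0, 2.0, 3.0, 5.0):
        print(f"t = {t_stat:.1f}  ->  BF10 ~ {jzs_bf10(t_stat, 8, 8):.2f}")
```

Note that a t statistic of zero yields BF₁₀ below 1, i.e. positive evidence for H₀, which a p-value cannot express.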

Figure: Workflow: Frequentist vs. Bayesian Analysis of Neurochemical Data

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for Neurochemical Studies with Bayesian Analysis

| Item | Function | Example/Supplier |
|---|---|---|
| HPLC-ECD System | High-sensitivity separation and electrochemical detection of monoamines (DA, 5-HT, NE) and metabolites. | Thermo Scientific Dionex, BASi LC-4C |
| Microdialysis Probes & aCSF | In vivo sampling of extracellular fluid from specific brain regions. | MAB-4 Probes (SciPro), Custom aCSF. |
| Statistical Software (JASP) | Open-source GUI software with comprehensive Bayesian t-tests, ANOVAs, and regression. | jasp-stats.org |
| R with Bayes Packages | Flexible scripting for advanced Bayesian modeling (brms, BayesFactor, rstanarm). | CRAN repositories. |
| Prior Distribution Elicitation Tools | Structured frameworks to translate historical data or expert knowledge into quantitative priors. | SHELF (Sheffield Elicitation Framework). |

Application: Signaling Pathway Analysis

Consider a study investigating if Drug X increases phosphorylated ERK (pERK) in cultured neurons via a novel receptor 'R'.

Figure: Bayesian Modeling of Drug Effect on a Signaling Pathway

Bayesian Modeling Approach:

- Model data at each pathway node (e.g., pERK levels).

- Use hierarchical models to share information across related experiments.

- Compute Bayes Factors for each link (e.g., BF for Drug → Receptor activation).

- The posterior probability of the entire pathway being activated by Drug X can be quantified, providing a more integrative measure than multiple independent p-values.

Moving from p-values to posterior probabilities represents a paradigm shift ideally suited for small neurochemical studies in drug development. It replaces dichotomous "significant/non-significant" judgments with a continuous quantification of evidence, allowing for more nuanced and rational decision-making. This approach directly answers the question most critical to researchers: "Given my data, what is the probability that this drug has a real neurochemical effect?"

Within neurochemical studies for drug development, where sample sizes are inherently limited, Bayesian statistics offers a paradigm shift. This guide details its core advantages: the principled incorporation of prior knowledge and the robust handling of complex, biologically realistic models, providing a framework for more informative inference in small-n research.

The Imperative of Prior Information in Small-n Studies

Neurochemical research often involves expensive, low-throughput assays (e.g., microdialysis HPLC, receptor autoradiography) resulting in limited data. Frequentist methods struggle here, yielding estimates with wide confidence intervals. Bayesian methods formally integrate existing knowledge (prior distributions) with new experimental data to produce posterior distributions.

Prior information is encoded as probability distributions for model parameters.

Table 1: Common Prior Sources in Neurochemical Research

| Prior Source | Example | Typical Distribution Form |
|---|---|---|
| Historical Control Data | Basal dopamine levels in striatal microdialysate from previous studies. | Normal(μ=1.2 nM, σ=0.3 nM) |
| In Vitro Binding Assays | Ki or EC50 values for a ligand from high-throughput screening. | Log-Normal(μ_log, σ_log), parameterized on the log scale |
| Pharmacokinetic Studies | Published clearance rates of a drug analog. | Gamma(shape, rate) |
| Expert Elicitation | Expected % change in a metabolite post-treatment. | Beta(α, β) or Student-t |
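Published summaries are usually reported as a mean and SD on the original scale, whereas a Log-Normal prior is parameterized on the log scale. The sketch below converts between the two by moment matching; the function name and the example Ki values are hypothetical.

```python
import math


def lognormal_params(mean, sd):
    """Log-scale (mu_log, sigma_log) of a Log-Normal with the given mean and SD."""
    sigma_log_sq = math.log(1.0 + (sd / mean) ** 2)
    mu_log = math.log(mean) - sigma_log_sq / 2.0
    return mu_log, math.sqrt(sigma_log_sq)


# Hypothetical literature summary: Ki of 5 nM with an SD of 2 nM.
mu_log, sigma_log = lognormal_params(5.0, 2.0)

if __name__ == "__main__":
    print(f"Log-Normal(mu_log={mu_log:.3f}, sigma_log={sigma_log:.3f})")
    # Sanity check: the implied mean on the original scale should be 5 nM.
    print(math.exp(mu_log + sigma_log ** 2 / 2.0))
```

Simply taking the logarithm of the reported mean and SD does not give a prior with the intended moments, so the conversion step matters when the prior is informative.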

Experimental Protocol: Bayesian Analysis of Microdialysis Time-Course

Aim: To estimate the effect of a novel compound on extracellular serotonin (5-HT) levels in prefrontal cortex.

Methodology:

- Data Collection: Perform in vivo microdialysis in rodent PFC (n=6-8 per group). Collect baseline samples (3x20min), administer compound or vehicle, and collect post-treatment samples (6-8x20min). Quantify 5-HT via HPLC-ECD.

- Model Specification: Use a non-linear mixed-effects model. The mean response for animal i at time t is modeled as:

  $$\mu_{it} = \mathrm{Baseline}_i + \frac{\Delta_i \, t}{T50_i + t}$$

  where `Δ_i` is the maximum change and `T50_i` is the time to half-effect.

- Prior Elicitation:

  - `Baseline_i ~ Normal(μ=0.5 nM, σ=0.2)`: Truncated at 0. Informed by historical control data.

  - `Δ_i ~ Normal(μ=0, σ=1.0)`: Weakly informative, allowing for increase or decrease.

  - `T50_i ~ Gamma(shape=3, rate=0.05)`: Positive, with a mode around 40 minutes based on drug class (the mode of a Gamma distribution is (shape − 1)/rate).

  - Use hierarchical priors for group-level parameters.

- Computation: Perform Markov Chain Monte Carlo (MCMC) sampling (e.g., Stan, PyMC) to obtain posterior distributions for all parameters.

- Inference: Calculate the probability that `Δ > 0.2 nM` (a clinically relevant threshold) directly from the posterior. Report 95% credible intervals.
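Two parts of this protocol are easy to check numerically before fitting anything: the shape of the mean function and where a Gamma prior on T50 actually peaks. The helpers below are an illustrative sketch with hypothetical names; the posterior draws in the last step are placeholders for real MCMC output.

```python
def mean_response(t, baseline, delta, t50):
    """Saturating time course: baseline plus delta * t / (t50 + t)."""
    return baseline + delta * t / (t50 + t)


def gamma_mode(shape, rate):
    """Mode of a Gamma(shape, rate) distribution; zero when shape is below 1."""
    return (shape - 1.0) / rate if shape >= 1.0 else 0.0


def prob_exceeds(draws, threshold):
    """Share of posterior draws above a threshold, i.e. P(parameter > threshold)."""
    return sum(d > threshold for d in draws) / len(draws)


if __name__ == "__main__":
    # At t = T50 the response sits halfway between baseline and baseline + delta.
    print(mean_response(40.0, baseline=0.5, delta=0.3, t50=40.0))
    # The mode depends strongly on the rate: compare rate 0.5 with rate 0.05.
    print(gamma_mode(3, 0.5), gamma_mode(3, 0.05))
    # Placeholder draws of delta (nM); real values come from the fitted model.
    print(prob_exceeds([0.10, 0.25, 0.31, 0.18, 0.40, 0.27], 0.2))
```

Checking the prior mode in the units of the time axis (minutes here) guards against a common specification error, where a rate intended for one time unit is applied to another.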

Handling Complex, Mechanistic Models

Bayesian frameworks readily accommodate multi-level (hierarchical) and non-linear models, which are essential for capturing the complexity of neurochemical systems but are often difficult to fit reliably with frequentist approaches in small samples.

Example: Hierarchical Model for Multi-Region Receptor Occupancy

A study may measure occupancy via PET or autoradiography across multiple brain regions (e.g., striatum, cortex, cerebellum) in a few subjects.

Table 2: Comparison of Model Structures

| Model Type | Frequentist Approach | Bayesian Hierarchical Approach |
|---|---|---|
| Pooled | Ignores region-specificity. High risk of bias. | Not applicable. |
| Fully Separate | Fits a model per region. Fails with small n per region. | Possible, but inefficient. |
| Hierarchical | Complex random-effects models can fail to converge. | Optimal. Partially pools estimates: regions with less data shrink toward the global mean, improving stability. |
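The partial pooling described in the last row can be illustrated with the closed-form shrinkage estimate for a Normal hierarchical model. This sketch assumes the global mean and the between-region SD (`tau`) are already known, whereas a full hierarchical fit estimates them from the data; the region values are hypothetical.

```python
def partial_pool(region_means, region_ses, global_mean, tau):
    """Shrink each region's raw mean toward the global mean by its precision."""
    pooled = {}
    for region, raw_mean in region_means.items():
        se = region_ses[region]
        # Weight on the raw estimate: high when the region is measured precisely.
        weight = (1.0 / se ** 2) / (1.0 / se ** 2 + 1.0 / tau ** 2)
        pooled[region] = weight * raw_mean + (1.0 - weight) * global_mean
    return pooled


# Hypothetical receptor occupancy (%) with region-specific standard errors.
raw_means = {"striatum": 72.0, "cortex": 55.0, "hippocampus": 30.0}
raw_ses = {"striatum": 3.0, "cortex": 6.0, "hippocampus": 12.0}
pooled = partial_pool(raw_means, raw_ses, global_mean=50.0, tau=10.0)

if __name__ == "__main__":
    for region in raw_means:
        print(f"{region}: raw {raw_means[region]:.1f} -> pooled {pooled[region]:.1f}")
```

The region with the largest standard error moves furthest toward the global mean, which is the stabilizing behavior that makes hierarchical models attractive when only a few subjects contribute to each region.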

Experimental Protocol: Fitting a Complex Pharmacodynamic Model

Aim: To model the biphasic dose-response of a drug on glutamate release, involving receptor synergy.

Methodology:

- System: Ex vivo fast-scan cyclic voltammetry measuring electrically evoked glutamate transients in hippocampal slices under varying drug doses (n=5-7 slices per dose).

- Mechanistic Model: Specify a model where the drug acts on two receptor subtypes (A & B) to modulate release probability:

  $$E(d) = E_0 \left(1 - \frac{I_{\max,A}\, d^{\gamma_A}}{ED50_A^{\gamma_A} + d^{\gamma_A}} - \frac{I_{\max,B}\, d^{\gamma_B}}{ED50_B^{\gamma_B} + d^{\gamma_B}} + I_{\text{synergy}} \frac{d}{ED50_{\text{syn}} + d}\right)$$

- Bayesian Implementation:

  - Assign informed priors to `ED50_A` and `ED50_B` based on known receptor affinity.

  - Use weakly informative priors for `Imax` (Beta distribution between 0 and 1).

  - Fit the full, non-linear model using Hamiltonian Monte Carlo (HMC).

  - Assess convergence via R-hat statistics and effective sample size.

- Output: Full joint posterior distribution for all 7+ parameters, enabling prediction of response at untested doses and quantification of uncertainty in the synergy term (`I_synergy`).
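Before fitting, it helps to code the mechanistic mean function on its own and confirm that it behaves sensibly at the extremes of the dose range. The sketch below implements the two-receptor equation above; the function names and all parameter values are hypothetical and serve only to exercise the formula.

```python
def hill(d, imax, ed50, gamma):
    """Sigmoidal (Hill) inhibition term for a single receptor subtype."""
    return imax * d ** gamma / (ed50 ** gamma + d ** gamma)


def evoked_response(d, e0, receptor_a, receptor_b, i_synergy, ed50_syn):
    """Predicted response at dose d; each receptor is an (imax, ed50, gamma) tuple."""
    inhibition = hill(d, *receptor_a) + hill(d, *receptor_b)
    synergy = i_synergy * d / (ed50_syn + d)
    return e0 * (1.0 - inhibition + synergy)


# Hypothetical parameter values, for illustration only.
E0 = 100.0
RECEPTOR_A = (0.5, 1.0, 1.5)    # imax, ED50, Hill coefficient
RECEPTOR_B = (0.3, 30.0, 2.0)

if __name__ == "__main__":
    for dose in (0.0, 0.3, 1.0, 3.0, 10.0, 30.0, 100.0):
        resp = evoked_response(dose, E0, RECEPTOR_A, RECEPTOR_B,
                               i_synergy=0.2, ed50_syn=5.0)
        print(f"dose {dose:6.1f}: response {resp:6.1f}")
```

Evaluating the same function on each posterior draw of the parameters gives a posterior predictive band for the dose-response curve, including doses that were not tested.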

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for Bayesian Neurochemical Studies

| Item | Function & Rationale |
|---|---|
| Stan/PyMC Software | Probabilistic programming languages for specifying Bayesian models and performing efficient MCMC sampling or variational inference (VI). |
| JAGS/BUGS | Established software for Gibbs sampling, useful for standard hierarchical models. |
| R/brms Package | High-level interface to Stan, allowing specification of complex multilevel models with familiar R formula syntax. |
| Pharmacokinetic Database (e.g., PK-DB) | Source for constructing informative priors on drug absorption, distribution, metabolism, and excretion (ADME) parameters. |
| Brain Atlas Data (e.g., Allen Brain Map) | Provides region-specific gene expression or connectivity data to inform hierarchical prior structures for multi-region analyses. |
| Bayesian Analysis Reporting Guidelines (BARG) | Checklist to ensure transparent reporting of priors, model checking, and computational details. |

Visualizing Workflows and Relationships

Figure: Bayesian Analysis Core Workflow

Figure: Hierarchical Model for Multi-Subject Neurochemical Data

Common Neurochemical Data Types Ideal for Bayesian Analysis (e.g., HPLC, MS, ELISA, Microdialysis)

Within the framework of advancing Bayesian statistics for small-sample neurochemical research, selecting appropriate data types is paramount. Bayesian methods excel in quantifying uncertainty, integrating prior knowledge, and drawing robust inferences from limited data—common challenges in neurochemical studies. This guide details core neurochemical data acquisition techniques whose inherent properties make them particularly amenable to Bayesian analysis.

Ideal Data Types & Their Bayesian Rationale

High-Performance Liquid Chromatography (HPLC)

HPLC separates and quantifies neurochemicals (e.g., monoamines, amino acids) from brain tissue homogenate or cerebrospinal fluid (CSF). Output is typically concentration (ng/mg tissue or ng/mL) with associated calibration curve uncertainty.

- Bayesian Fit: Natural quantification of uncertainty from calibration curves (likelihood) can be combined with informative priors from historical control data. Hierarchical models can pool information across multiple runs or brain regions.

Mass Spectrometry (MS) & Liquid Chromatography-MS (LC-MS)

MS provides highly specific identification and quantification of neurotransmitters, metabolites, and lipids. It yields high-dimensional data (m/z ratios, retention times, intensities) with complex noise structures.

- Bayesian Fit: Ideal for modeling intricate error distributions and co-variance structures in high-dimensional data. Bayesian model selection can identify significant peaks amidst noise, and multilevel models handle batch effects.

Enzyme-Linked Immunosorbent Assay (ELISA)

ELISA measures protein or peptide concentrations (e.g., BDNF, cytokines) via antibody-antigen binding, producing concentration data derived from a sigmoidal standard curve.

- Bayesian Fit: The four- or five-parameter logistic standard curve can be directly embedded as a probabilistic Bayesian model, providing full posterior distributions for unknown sample concentrations and naturally propagating curve-fitting uncertainty.
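The mechanics of embedding a standard curve can be sketched with a four-parameter logistic (4PL) function and its inverse. In a Bayesian fit, the inverse is applied to every posterior draw of the curve parameters, so uncertainty in the curve carries through to the estimated concentration. The parameter draws below are hypothetical placeholders, not output from a fitted model.

```python
def four_pl(conc, bottom, top, ec50, hill):
    """Optical density predicted by a 4PL standard curve at a concentration."""
    return bottom + (top - bottom) / (1.0 + (ec50 / conc) ** hill)


def inverse_four_pl(od, bottom, top, ec50, hill):
    """Concentration implied by an optical density (requires bottom < od < top)."""
    ratio = (top - bottom) / (od - bottom) - 1.0
    return ec50 / ratio ** (1.0 / hill)


# Hypothetical posterior draws of (bottom, top, ec50, hill) for one plate.
curve_draws = [
    (0.05, 2.10, 48.0, 1.10),
    (0.06, 2.05, 52.0, 1.05),
    (0.04, 2.15, 50.0, 1.20),
]

observed_od = 1.0
conc_draws = sorted(inverse_four_pl(observed_od, *draw) for draw in curve_draws)

if __name__ == "__main__":
    print("concentration draws (pg/mL):", [round(c, 1) for c in conc_draws])
```

With thousands of draws instead of three, the spread of `conc_draws` gives a credible interval for the sample concentration that widens near the plateaus of the curve, where interpolation is least reliable.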

Microdialysis

Microdialysis involves the semi-continuous sampling of extracellular fluid, yielding time-series data of neurotransmitter levels (e.g., glutamate, dopamine) often at low temporal resolution.

- Bayesian Fit: Time-series analysis using Bayesian dynamical models (e.g., Gaussian Processes, state-space models) can infer underlying release kinetics, handle missing data, and deconvolve the underlying signal from the slow temporal response of dialysis sampling.

Quantitative Data Comparison

Table 1: Characteristics of Neurochemical Data Types for Bayesian Analysis

| Data Type | Typical Output | Key Uncertainty Sources | Primary Bayesian Advantage |
|---|---|---|---|
| HPLC | Concentration from peak area/height. | Calibration curve error, baseline noise, extraction efficiency. | Probabilistic calibration; priors on expected physiological ranges. |
| LC-MS | High-dim. peak intensities for 100s-1000s of features. | Ion suppression, matrix effects, instrument drift. | Modeling complex error covariance; robust feature selection. |
| ELISA | Concentration from optical density (OD). | Standard curve interpolation, plate-to-plate variability, cross-reactivity. | Embedded probabilistic standard curve; hierarchical plate modeling. |
| Microdialysis | Time-series of extracellular concentration. | Probe recovery variance, autocorrelation, basal level determination. | Dynamic modeling of temporal processes; imputation of missing points. |

Detailed Experimental Protocols

Protocol 1: LC-MS Metabolomics of Brain Tissue with Bayesian Calibration

Objective: Quantify polar metabolites (e.g., neurotransmitters, TCA cycle intermediates) from prefrontal cortex tissue.

- Tissue Homogenization: Snap-frozen tissue (10 mg) is homogenized in 500 µL of 80% methanol/water at -20°C.

- Protein Precipitation & Extraction: Vortex, sonicate (10 min, 4°C), then centrifuge (16,000 x g, 15 min, 4°C). Collect supernatant.

- LC-MS Analysis: Inject 5 µL onto a HILIC column. Use a Q-Exactive HF mass spectrometer in positive/negative switching mode (Full MS scan, 120k resolution).

- Bayesian Data Processing: Apply a Bayesian hybrid peak detection/modeling algorithm. For quantification, use a hierarchical model where the intensity of a known standard (likelihood ~ Student-t) informs the posterior for the unknown sample, with a weakly informative prior on concentration (e.g., half-Cauchy, since concentrations cannot be negative).

- Statistical Analysis: Perform differential analysis using a Bayesian t-test (e.g., BEST model), reporting posterior probability of difference and credible intervals.

Protocol 2: In Vivo Microdialysis for Dopamine with Bayesian Time-Series Analysis

Objective: Measure changes in extracellular striatal dopamine following a pharmacological challenge.

- Probe Implantation: Implant a concentric microdialysis guide cannula into the rat striatum (AP: +1.2 mm, ML: ±2.5 mm, DV: -4.0 mm from bregma).

- Perfusion & Collection: 24h post-surgery, insert a probe (2 mm membrane, 38 kDa MWCO). Perfuse with artificial CSF (aCSF) at 1.0 µL/min. After 2h equilibration, collect dialysate every 20 min.

- Basal & Challenge Collection: Collect 3 baseline samples. Administer drug (e.g., amphetamine, 2 mg/kg, i.p.) and collect samples for 180 min.

- HPLC-EC Analysis: Analyze dialysate immediately via HPLC with electrochemical detection for dopamine.

- Bayesian Time-Series Analysis: Model data using a Gaussian Process (GP) with a Matérn kernel. The GP defines a prior over functions describing dopamine concentration over time. Conditioning on the observed data through the likelihood updates this prior to a posterior distribution of the underlying continuous trace, providing credible intervals for the inferred concentration at any time point, including between samples.
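The GP step can be sketched in plain Python under simplifying assumptions: a Matérn 3/2 kernel, fixed hyperparameters (a full Bayesian analysis would place priors on them), Gaussian measurement noise, and hypothetical dialysate values expressed as % of baseline. The function names and all numbers are illustrative only.

```python
import math


def matern32(t1, t2, amplitude, length_scale):
    """Matern 3/2 covariance between two time points."""
    r = abs(t1 - t2) / length_scale
    return amplitude ** 2 * (1.0 + math.sqrt(3.0) * r) * math.exp(-math.sqrt(3.0) * r)


def solve(a, b):
    """Solve a x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    m = [row[:] + [b[i]] for i, row in enumerate(a)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    x = [0.0] * n
    for i in range(n - 1, -1, -1):
        x[i] = (m[i][n] - sum(m[i][j] * x[j] for j in range(i + 1, n))) / m[i][i]
    return x


def gp_predict(times, values, t_new, amplitude, length_scale, noise_sd):
    """Posterior mean and SD of the latent trace at t_new (zero-mean GP on centred data)."""
    centre = sum(values) / len(values)
    y = [v - centre for v in values]
    n = len(times)
    k = [[matern32(times[i], times[j], amplitude, length_scale)
          + (noise_sd ** 2 if i == j else 0.0) for j in range(n)] for i in range(n)]
    k_star = [matern32(t, t_new, amplitude, length_scale) for t in times]
    mean = centre + sum(ks * a for ks, a in zip(k_star, solve(k, y)))
    var = amplitude ** 2 - sum(ks * v for ks, v in zip(k_star, solve(k, k_star)))
    return mean, math.sqrt(max(var, 0.0))


# Hypothetical data: minutes relative to injection, dopamine as % of baseline.
times = [-40, -20, 0, 20, 40, 60, 80]
dopamine = [100, 98, 103, 240, 310, 260, 190]

# Inferred level halfway between two collected fractions.
mean_30, sd_30 = gp_predict(times, dopamine, 30, amplitude=100.0, length_scale=40.0, noise_sd=10.0)
print(f"t = 30 min: {mean_30:.1f} +/- {1.96 * sd_30:.1f} (approx. 95% interval)")
```

Far from any sample the posterior SD returns to the prior amplitude, which is the behaviour that makes the intervals honest about extrapolation.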

Visualizations

Title: Bayesian Neurochemical Data Analysis Workflow

Title: Bayesian Gaussian Process Model for Microdialysis Data

The Scientist's Toolkit

Table 2: Key Research Reagent Solutions for Neurochemical Assays

| Item | Primary Function | Example in Protocol |

|---|---|---|

| Methanol/Water (80:20) w/ Internal Standards | Extraction solvent for polar metabolites; internal standards correct for technical variance in MS. | LC-MS tissue homogenization. |

| Artificial Cerebrospinal Fluid (aCSF) | Physiological perfusion fluid for microdialysis, maintaining ionic balance and preventing tissue damage. | Microdialysis perfusion medium. |

| Protein Coated ELISA Plates | Solid phase for antibody immobilization, enabling the sandwich or competitive binding assay. | Solid support for antigen capture. |

| Perchloric Acid (0.1-0.5 M) | Deproteinizing agent for tissue homogenates prior to HPLC, preventing column fouling and degradation. | Sample prep for monoamine HPLC. |

| Derivatization Reagent (e.g., OPA, ACCQ-Tag) | Reacts with primary amines/amino acids to form compounds detectable by fluorescence or MS. | Enhancing sensitivity for HPLC. |

| Calibration Standard Mix | A series of known concentrations of target analytes to construct the response curve, essential for Bayesian likelihood. | Quantification in all methods. |

| Stable Isotope-Labeled Analogs (e.g., 13C, 15N) | Serve as ideal internal standards for MS, matching analyte chemistry precisely for accurate quantification. | Gold-standard for LC-MS calibration. |

A Step-by-Step Bayesian Workflow for Neurochemical Data Analysis

In small neurochemical studies, such as those examining neurotransmitter release, receptor affinity, or metabolomic changes, the choice of statistical model is paramount. Traditional frequentist approaches often struggle with the limited sample sizes and high variability inherent in this field. A Bayesian framework provides a coherent paradigm for incorporating prior knowledge (e.g., from pilot studies or related literature) and quantifying uncertainty through posterior probability distributions. This guide details the critical first step: formally defining the research question and selecting the corresponding statistical model—comparisons, correlations, or dose-response analyses—tailored for Bayesian inference in neurochemical research.

The core quantitative models for neurochemical analysis are summarized in the table below. Each model type answers a distinct research question and requires specific data structures and prior specifications.

Table 1: Core Bayesian Models for Small Neurochemical Studies

| Model Type | Primary Research Question | Example Neurochemical Application | Key Model Parameters (Likelihood) | Typical Priors for Parameters |

|---|---|---|---|---|

| Comparing Groups | Do mean levels differ between conditions? | [Dopamine] in microdialysate: Control vs. Drug-treated group. | Mean (μ₁, μ₂), Standard Deviation (σ). | μ ~ Normal(prior_mean, prior_sd); σ ~ Half-Cauchy(0, scale). |

| Correlations | What is the strength/direction of association between two continuous measures? | Correlation between CSF Aβ42 and cortical tau-PET signal. | Correlation coefficient (ρ), Means, Standard Deviations. | (ρ + 1)/2 ~ Beta(α, β), which rescales ρ to the Beta support [0, 1], or ρ ~ Uniform(-1, 1). |

| Dose-Response | How does the response variable change with increasing dose/concentration? | In vitro receptor occupancy as a function of ligand concentration (IC50/EC50 estimation). | Slope (α), Half-maximal dose (EC50), Maximum effect (Emax). | log(EC50) ~ Normal(prior_log_conc, sd); Emax ~ Normal(prior_max, sd). |

Detailed Experimental Protocols for Cited Applications

Protocol 1: Microdialysis for Comparing Neurotransmitter Groups

- Objective: To compare extracellular dopamine concentration in the striatum of rats under control and drug-treated conditions.

- Surgical Procedure: Implant a guide cannula targeting the striatum. Allow 5-7 days for recovery.

- Microdialysis: Insert a probe with a 3mm active membrane. Perfuse with artificial cerebrospinal fluid (aCSF) at 1.0 µL/min. Begin sample collection after a 2-hour equilibration period.

- Experimental Design: Collect 6 baseline samples (30 min each). Administer drug or vehicle subcutaneously. Collect 6-8 post-treatment samples.

- HPLC Analysis: Analyze dialysate samples using HPLC with electrochemical detection. Quantify dopamine against external standards.

- Data for Model: Calculate mean dopamine concentration (pg/µL) for the baseline (all groups) and a stable post-treatment window (e.g., samples 3-6) for each subject.

Protocol 2: Isotherm Binding for Dose-Response (IC50)

- Objective: To determine the half-maximal inhibitory concentration (IC50) of a novel compound at the serotonin transporter (SERT).

- Membrane Preparation: Homogenize SERT-expressing cell lysates or brain tissue in ice-cold buffer. Centrifuge to obtain a membrane pellet.

- Radioligand Binding: Incubate membranes with a fixed concentration of a selective radioligand (e.g., [³H]paroxetine) and 8-12 increasing concentrations of the test compound in binding buffer for 1-2 hours at room temperature.

- Separation & Quantification: Terminate reactions by rapid filtration onto glass-fiber filters. Wash filters, dry, and measure bound radioactivity via scintillation counting.

- Data for Model: Calculate % specific binding inhibition at each inhibitor concentration. Fit a Bayesian 4-parameter logistic (sigmoidal) model to estimate IC50.

Visualizing the Model Selection Workflow

Title: Bayesian Model Selection Decision Tree

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Reagents for Featured Neurochemical Experiments

| Item | Function | Example Product/Catalog # |

|---|---|---|

| aCSF for Microdialysis | Physiological perfusion fluid to maintain tissue viability and collect analytes. | Artificial CSF (Sigma, C5910) or custom formulation (NaCl, KCl, CaCl2, MgCl2). |

| HPLC Column for Monoamines | Separates neurotransmitters (DA, 5-HT, NE) and metabolites in dialysate. | C18 reverse-phase column, 3µm particle size, 150mm length (e.g., Phenomenex Luna). |

| Selective Radioligand | High-affinity labeled compound to specifically tag the target of interest (e.g., receptor). | [³H]Paroxetine for SERT (PerkinElmer, NET-818). [³H]SCH-23390 for D1 receptors. |

| Scintillation Cocktail | Emits light when interacting with beta particles from tritium/carbon-14 for quantification. | Ultima Gold XR (PerkinElmer, 6013119). |

| WGA-coated SPA Beads | Enables homogeneous "mix-and-read" binding assays by capturing membrane proteins. | WGA PVT SPA Beads (Cytiva, RPNQ001). |

| Bayesian Software Package | Implements Markov Chain Monte Carlo (MCMC) sampling for model fitting. | Stan (via brms or rstan in R, or CmdStanPy in Python), PyMC, JAGS. |

This guide, a component of a broader thesis on Bayesian statistics for small neurochemical studies, provides a technical framework for selecting and justifying prior distributions. In neuroscience research—particularly in studies of neurotransmitter dynamics, receptor binding, or drug efficacy—the move from vague to informative priors is critical for obtaining meaningful posterior estimates from limited datasets. This process formalizes existing knowledge from literature, pilot studies, or mechanistic models.

Classes of Priors and Their Quantitative Justification

Priors can be categorized by their informational content, directly impacting posterior inference in small-sample neurochemical studies.

Table 1: Classes of Priors for Common Neurochemical Parameters

| Prior Class | Typical Use Case | Example Parameter (Neuroscience Context) | Mathematical Form | Justification Source |

|---|---|---|---|---|

| Vague/Non-informative | Initial analysis, default choice | Baseline dopamine level (μ) in a novel region | μ ~ Normal(0, 1000) | Principle of minimal influence; reference prior. |

| Weakly Informative | Regularization, stabilizing estimation | Treatment effect (β) in a behavioral assay | β ~ Normal(0, 10) | Constrains to plausible range; prevents overfitting. |

| Informative (Literature-Based) | Incorporating established findings | Mean AMPA receptor NMJ conductance (g) | g ~ Normal(1.2, 0.2) | Meta-analysis of prior electrophysiology studies. |

| Informative (Mechanistic) | Parameters from computational models | Rate constant (k) for glutamate reuptake | k ~ LogNormal(−1, 0.5) | Constrained by biophysical transporter kinetics. |

| Skeptical/Pessimistic | Clinical trial analysis | Drug effect size (Δ) for a novel antidepressant | Δ ~ Normal(0, 0.5) | Assumes true effect is likely small or null. |

| Optimistic/Enthusiastic | Pilot study extension | % BOLD signal change (θ) in target ROI | θ ~ Normal(3, 1) | Prior belief based on strong pilot data. |

Table 2: Prior Elicitation from Historical Data: Dopamine Transporter Knockout Study

| Parameter | Control Group Mean (Wild-Type) | Control Group SD | Historical N | Elicited Prior for KO Study | Justification Method |

|---|---|---|---|---|---|

| Striatal DA (ng/mg) | 12.1 | 2.3 | 45 | μ_control ~ Normal(12.1, 0.34) | Prior mean = historical mean; Prior SD = historical SE (2.3/√45 ≈ 0.34). |

| DA Turnover Rate (hr⁻¹) | 0.85 | 0.15 | 30 | k ~ Gamma(shape=32, rate=37.6) | Moments matched: E[k]=0.85, SD[k]=0.15. |

| Treatment Effect (Δ) | -- | -- | -- | Δ ~ Student-t(ν=4, μ=0, σ=2.5) | Weakly informative; allows for outliers. |
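The two elicitation rules in Table 2 (standard error as prior SD, and moment matching for a Gamma prior) reduce to a few lines of arithmetic. The sketch below uses the historical summaries from the table; the function names are illustrative.

```python
import math


def normal_prior_from_history(mean, sd, n):
    """Prior mean and prior SD, taking the SD to be the standard error of the historical mean."""
    return mean, sd / math.sqrt(n)


def gamma_from_moments(mean, sd):
    """Shape and rate of the Gamma distribution with the requested mean and SD."""
    shape = (mean / sd) ** 2
    rate = mean / sd ** 2
    return shape, rate


mu0, se = normal_prior_from_history(12.1, 2.3, 45)
shape, rate = gamma_from_moments(0.85, 0.15)
print(f"mu_control ~ Normal({mu0}, {se:.2f})")
print(f"k ~ Gamma(shape={shape:.1f}, rate={rate:.1f})")
```

Exact moment matching gives a shape of about 32.1 and a rate of about 37.8. Rounding the shape to 32 while holding the mean at 0.85 gives a rate of 32/0.85 ≈ 37.6, which is the parameterization listed in the table.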

This section details methodologies for generating data used to construct informative priors.

Protocol: Microdialysis for Baseline Neurotransmitter Level Estimation

Objective: To establish an informative prior for baseline extracellular dopamine concentration in rat medial prefrontal cortex (mPFC).

- Surgery: Implant a guide cannula targeting the mPFC (AP +3.2 mm, ML ±0.6 mm, DV −4.0 mm from bregma).

- Recovery: Allow 5-7 days post-surgery with daily handling.

- Microdialysis: Insert a 2 mm active membrane probe. Perfuse with artificial cerebrospinal fluid (aCSF) at 1.0 μL/min.

- Baseline Sampling: After a 2-hour equilibration period, collect dialysate every 20 minutes for 3 hours.

- HPLC-ECD Analysis: Analyze samples via High-Performance Liquid Chromatography with Electrochemical Detection. Quantify dopamine against external standards.

- Data for Prior: Calculate mean and standard error of mean (SEM) from the 9 baseline samples. The prior for a new study: μ_baseline ~ Normal(historical mean, historical SEM).

Protocol: Radioligand Binding for Receptor Density Prior

Objective: To elicit an informative prior for B_max (maximal receptor binding) of serotonin 5-HT1A receptors in human hippocampus via PET.

- Subject Selection: Healthy control cohort (n=20), age 30-50, screened for psychiatric history.

- PET Acquisition: Administer [¹¹C]WAY-100635 bolus plus constant infusion. Acquire dynamic PET data over 90 minutes.

- Kinetic Modeling: Use a two-tissue compartment model to estimate B_max and K_D (equilibrium dissociation constant) for each subject.

- Population Summary: Fit a hierarchical model to the per-subject B_max estimates. Extract the population mean (μ_pop) and between-subject SD (τ).

- Prior for New Study: For a new subject group (e.g., patients), use B_max ~ Normal(μ_pop, τ) as an informative prior. Here τ, the between-subject SD, serves as a stand-in for plausible between-group variation.

Visualization: Pathways and Workflows

Diagram Title: Prior Selection Decision Workflow

Diagram Title: Sources and Methods for Informative Priors

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for Prior-Eliciting Neuroscience Experiments

| Item Name & Supplier Example | Function in Prior Elicitation | Key Specification for Quantification |

|---|---|---|

| CMA 7 Microdialysis Probe (Harvard Apparatus) | In vivo sampling of extracellular neurotransmitters (e.g., DA, Glu) for baseline level estimation. | Membrane length (e.g., 2-4 mm); Molecular Weight Cut-Off (e.g., 6 kDa). |

| aCSF Perfusion Solution (Tocris Bioscience) | Physiological perfusion fluid for microdialysis to maintain tissue viability. | Ionic composition (e.g., 147 mM NaCl, 2.7 mM KCl, 1.2 mM CaCl₂). |

| Dopamine ELISA Kit (Abcam) | Quantification of dopamine levels in dialysate or tissue homogenate. | Sensitivity (e.g., 10 pg/mL); Cross-reactivity profile. |

| [¹¹C]WAY-100635 (PET Radioligand) | Selective labeling of 5-HT1A receptors for in vivo PET binding studies. | Specific activity (> 1 Ci/μmol); Radiochemical purity (> 95%). |

| GraphPad Prism Software | Statistical analysis of pilot/historical data for moments calculation and distribution fitting. | Supports non-linear regression and descriptive statistics. |

| Stan Modeling Language (mc-stan.org) | Implements Bayesian models with user-specified priors; performs prior predictive checks. | Hamiltonian Monte Carlo (HMC) sampling efficiency. |

| JASP (jasp-stats.org) | Open-source GUI for Bayesian analysis; includes prior sensitivity analysis tools. | Supports Bayes Factor and Posterior Estimation. |

In small neurochemical studies, where sample sizes are limited and measurements are noisy, Bayesian statistics offers a principled framework for quantifying uncertainty and incorporating prior knowledge. The core computational challenge is generating samples from posterior distributions of model parameters, such as neurotransmitter concentration, receptor affinity, or drug potency. Markov Chain Monte Carlo (MCMC) is the dominant family of algorithms for this task. This guide introduces MCMC fundamentals and reviews three pivotal tools: Stan, JAGS, and the R package brms, framing their application within neurochemical research.

MCMC Fundamentals for Neurochemical Data

MCMC constructs a Markov chain whose stationary distribution is the target posterior distribution. After a burn-in period, samples from the chain approximate draws from the posterior.

Key Algorithms:

- Gibbs Sampling: Iteratively samples each parameter from its conditional posterior distribution. Efficient when conditionals are standard distributions.

- Metropolis-Hastings: Proposes a new parameter state and accepts it with a probability that maintains detailed balance. More generally applicable.

- Hamiltonian Monte Carlo (HMC): Uses gradient information to propose distant states with high acceptance probability. Efficient for high-dimensional, correlated posteriors (the core algorithm of Stan).
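To make the accept/reject step concrete, here is a minimal random-walk Metropolis sketch for the mean of normally distributed data with a known SD. The data, prior, and step size are hypothetical, and the conjugate closed-form answer is included only as a check on the sampler.

```python
import math
import random
from statistics import fmean


def log_posterior(mu, data, sigma, prior_mean, prior_sd):
    """Unnormalised log posterior for a normal mean with known sigma."""
    log_prior = -0.5 * ((mu - prior_mean) / prior_sd) ** 2
    log_lik = sum(-0.5 * ((y - mu) / sigma) ** 2 for y in data)
    return log_prior + log_lik


def metropolis(data, sigma, prior_mean, prior_sd, n_iter=20000, step=0.8, seed=1):
    """Random-walk Metropolis; returns draws after discarding the first quarter as burn-in."""
    rng = random.Random(seed)
    mu = prior_mean
    current = log_posterior(mu, data, sigma, prior_mean, prior_sd)
    draws = []
    for _ in range(n_iter):
        proposal = mu + rng.gauss(0.0, step)
        candidate = log_posterior(proposal, data, sigma, prior_mean, prior_sd)
        # Symmetric proposal, so the acceptance probability is min(1, posterior ratio).
        if rng.random() < math.exp(min(0.0, candidate - current)):
            mu, current = proposal, candidate
        draws.append(mu)
    return draws[n_iter // 4:]


def analytic_posterior_mean(data, sigma, prior_mean, prior_sd):
    """Closed-form posterior mean for the conjugate normal-normal model."""
    precision = 1.0 / prior_sd ** 2 + len(data) / sigma ** 2
    return (prior_mean / prior_sd ** 2 + sum(data) / sigma ** 2) / precision


# Hypothetical basal dopamine measurements (nM) from six animals.
data = [4.1, 5.3, 3.8, 4.9, 5.6, 4.4]
draws = metropolis(data, sigma=0.8, prior_mean=5.0, prior_sd=2.0)
print(fmean(draws), analytic_posterior_mean(data, 0.8, 5.0, 2.0))
```

Gibbs sampling and HMC replace the random-walk proposal with conditional draws and gradient-guided trajectories respectively, but the goal of producing draws whose long-run distribution is the posterior is the same.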

Stan and its Ecosystem

Stan implements the No-U-Turn Sampler (NUTS), an adaptive variant of HMC.

Key Features:

- Language: Standalone probabilistic programming language.

- Inference: Primarily uses NUTS.

- Strengths: Handles complex models with correlated parameters efficiently; strong diagnostics.

- Weaknesses: Steeper learning curve; requires model specification in its own language.

Example Stan Model Snippet for a Dose-Response Analysis:
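As a language-neutral illustration, the quantity a Stan program for a sigmoidal Emax dose-response model defines (log prior plus log likelihood) can be written out in plain Python. This is a sketch under assumptions: the parameterization, the prior values, and the function names are hypothetical and are not taken from a specific analysis.

```python
import math


def emax_response(dose, e0, emax, log_ec50, hill):
    """Sigmoidal Emax curve; dose must be positive."""
    ec50 = math.exp(log_ec50)
    return e0 + emax * dose ** hill / (ec50 ** hill + dose ** hill)


def log_normal_pdf(x, mean, sd):
    """Log density of Normal(mean, sd) at x."""
    return -0.5 * ((x - mean) / sd) ** 2 - math.log(sd) - 0.5 * math.log(2.0 * math.pi)


def log_posterior(params, doses, responses):
    """Unnormalised log posterior: illustrative priors plus a normal likelihood."""
    e0, emax, log_ec50, hill, log_sigma = params
    sigma = math.exp(log_sigma)
    lp = (
        log_normal_pdf(e0, 0.0, 10.0)                   # baseline response
        + log_normal_pdf(emax, 100.0, 50.0)             # maximum effect
        + log_normal_pdf(log_ec50, math.log(10.0), 2.0)  # potency on the log scale
        + log_normal_pdf(hill, 1.0, 0.5)                # slope
        + log_normal_pdf(log_sigma, 0.0, 1.0)           # residual SD on the log scale
    )
    for dose, response in zip(doses, responses):
        lp += log_normal_pdf(response, emax_response(dose, e0, emax, log_ec50, hill), sigma)
    return lp


# Hypothetical noise-free responses generated from known parameters.
doses = [1.0, 3.0, 10.0, 30.0, 100.0]
truth = (0.0, 100.0, math.log(10.0), 1.0, 0.0)
responses = [emax_response(d, *truth[:4]) for d in doses]
print(log_posterior(truth, doses, responses))
```

A sampler such as NUTS explores this function directly; Stan additionally handles parameter constraints and gradients automatically.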

JAGS (Just Another Gibbs Sampler)

JAGS is a Gibbs/Metropolis-Hastings sampler that uses a BUGS-like model specification.

Key Features:

- Language: BUGS dialect.

- Inference: Gibbs and Metropolis-Hastings.

- Strengths: Familiar syntax for BUGS users; extensive library of examples.

- Weaknesses: Less efficient for complex, high-dimensional models; fewer convergence diagnostics.

brms (Bayesian Regression Models using Stan)

brms is an R package that provides a high-level formula interface to Stan.

Key Features:

- Language: R formula syntax.

- Inference: Uses Stan as backend.

- Strengths: Dramatically reduces coding overhead for common models (linear, GLM, multilevel, etc.); access to full Stan power.

- Weaknesses: Less flexible for highly custom, non-standard models than pure Stan.

Example brms Code for a Linear Model:

Quantitative Comparison of Tools

Table 1: Tool Comparison for Neurochemical Modeling

| Feature | Stan | JAGS | brms |

|---|---|---|---|

| Primary Algorithm | NUTS (HMC) | Gibbs / Metropolis | NUTS (via Stan) |

| Model Specification | Standalone language | BUGS language | R formula syntax |

| Ease of Learning | Steep | Moderate | Easy (for R users) |

| Efficiency (Complex Models) | High | Moderate to Low | High |

| Convergence Diagnostics | Extensive (Rhat, ESS, divergences) | Basic | Extensive (via Stan) |

| Best For | Complex, custom models; high-dimensional posteriors | Standard models; transition from BUGS | Rapid prototyping of common models |

Table 2: Example Computational Performance on a Pharmacokinetic Model*

| Tool | Mean ESS/sec | Rhat (<1.01) | Total Sampling Time (s) |

|---|---|---|---|

| Stan (NUTS) | 85.2 | Yes | 45.3 |

| JAGS (Gibbs) | 12.7 | Yes | 312.8 |

| brms (Stan) | 81.5 | Yes | 47.1 |

*Simulated two-compartment model for drug concentration time-series (n=20 subjects, 4 chains, 5000 iterations post-warmup). ESS: Effective Sample Size.
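For readers unfamiliar with the Rhat column, the following sketch computes a basic split R-hat from raw chains: each chain is halved, and between-half variance is compared with within-half variance. It omits the rank normalization used in more recent diagnostics, and the simulated chains are hypothetical.

```python
import random
from statistics import fmean, variance


def split_rhat(chains):
    """Basic split R-hat for a list of equal-length chains (each a list of draws)."""
    halves = []
    for chain in chains:
        half = len(chain) // 2
        halves.append(chain[:half])
        halves.append(chain[half:2 * half])
    n = len(halves[0])
    within = fmean([variance(h) for h in halves])
    between = n * variance([fmean(h) for h in halves])
    pooled = (n - 1) / n * within + between / n
    return (pooled / within) ** 0.5


rng = random.Random(0)
# Four chains sampling the same distribution versus chains stuck in two different modes.
mixed = [[rng.gauss(0.0, 1.0) for _ in range(1000)] for _ in range(4)]
stuck = [[rng.gauss(shift, 1.0) for _ in range(1000)] for shift in (0.0, 0.0, 3.0, 3.0)]
print(split_rhat(mixed), split_rhat(stuck))
```

Values close to 1 indicate that the chains agree; values well above 1 signal that they have not converged to a common distribution.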

Experimental Protocol: Implementing an MCMC Analysis for Receptor Binding Data

Protocol Title: Bayesian Analysis of Competitive Binding Assay Data using Stan.

Objective: Estimate inhibition constant (Ki) of a novel compound for a dopamine receptor from a radioligand binding experiment.

Materials & Reagents: (See "The Scientist's Toolkit" below).

Procedure:

- Data Preparation: Format data into a list containing: total ligand concentration [L], competitor concentration [I], total bound counts (B), and non-specific binding counts (NSB). Calculate specific binding = B - NSB.

- Model Specification: Code the Cheng-Prusoff competitive binding model in Stan. Key parameters: logKi (log10 of inhibition constant), Bmax (total receptor density), and logKd (log10 of ligand dissociation constant, if not fixed).

- Prior Elicitation: Set weakly informative priors based on historical data (e.g., `logKi ~ normal(-8, 2);`).

- Model Compilation: Use `stan_model()` or `brm()` to compile the model.

- Sampling: Run 4 independent MCMC chains with 2000 warmup and 2000 sampling iterations per chain.

- Diagnostics: Check Rhat values (<1.01, the same threshold used in the performance comparison), effective sample size (>400 per chain), and trace plots for stationarity.

- Posterior Analysis: Extract and summarize the posterior distribution for logKi. Report median and 95% Credible Interval (CrI). Visualize posterior predictive checks against observed data.
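The relationships behind the model in this procedure can be sketched as two small functions: the one-site competitive binding equation and the Cheng-Prusoff conversion from IC50 to Ki. The concentrations below are hypothetical, and all quantities are assumed to share the same units (nM).

```python
import math


def ki_from_ic50(ic50, ligand_conc, kd):
    """Cheng-Prusoff relation: Ki = IC50 / (1 + [L] / Kd)."""
    return ic50 / (1.0 + ligand_conc / kd)


def specific_binding(competitor, bmax, ligand_conc, kd, ki):
    """Specific radioligand binding in the presence of a competitive inhibitor."""
    return bmax * ligand_conc / (ligand_conc + kd * (1.0 + competitor / ki))


# Hypothetical assay: 1 nM radioligand with Kd = 0.5 nM, observed IC50 = 30 nM.
ki_nM = ki_from_ic50(30.0, ligand_conc=1.0, kd=0.5)
log10_ki_molar = math.log10(ki_nM * 1e-9)
print(ki_nM, log10_ki_molar)
```

Applying `ki_from_ic50` to each posterior draw of IC50 (and of Kd, if it is estimated rather than fixed) yields a posterior distribution for Ki.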

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for Neurochemical Binding Studies

| Item | Function in Experiment |

|---|---|

| Radioisotope-labeled Ligand (e.g., [³H]SCH-23390) | High-affinity binder used to selectively tag and quantify target receptor populations. |

| Test Compound / Novel Drug Candidate | Unlabeled molecule whose binding affinity (Ki) is being determined. |

| Membrane Preparation from Brain Tissue | Source of the target receptor protein. |

| Specific Binding Inhibitor (e.g., Butaclamol) | Added in excess to define non-specific binding: it displaces the radioligand from the target receptor, so the binding that remains is non-specific. |

| Scintillation Cocktail & Vials | For detection of beta radiation emitted by tritiated ligands. |

| Cell Harvester & Filter Mats (GF/B) | To separate bound from free radioligand rapidly and reproducibly. |

| Wash Buffer (e.g., Tris-HCl, pH 7.4) | Maintains physiological pH and ionic strength during binding assay. |

| Liquid Scintillation Counter | Instrument to quantify radioactivity (DPM/CPM) on filter mats. |

Visualizations

Title: MCMC Analysis Workflow for Neurochemical Data

Title: MCMC Tool Selection Decision Tree

In neurochemical research with limited sample sizes (e.g., n<20 per group), frequentist statistics often yield inconclusive p-values and wide confidence intervals. Bayesian analysis offers a more intuitive framework. After obtaining a posterior distribution for a parameter of interest—such as the difference in dopamine metabolite levels between a novel drug and a control—the critical step is interpretation. This guide details three complementary tools: Credible Intervals for direct probability statements, the Region of Practical Equivalence (ROPE) for assessing practical significance, and Bayes Factors for hypothesis testing, all contextualized for small-n experimental neuroscience.

Core Interpretive Frameworks

Highest Density Credible Interval (HDI)

The 95% HDI is the interval that contains 95% of the posterior probability mass, with the property that every point inside the interval has a higher probability density than any point outside it. It directly states: "Given the data and model, there is a 95% probability the true parameter value lies within this interval."

Methodology for Construction:

- Specify a model (e.g., `y ~ Normal(μ, σ)` with priors for `μ` and `σ`).

- Using Markov Chain Monte Carlo (MCMC) sampling (e.g., Stan, PyMC), obtain a large number of posterior samples for the target parameter.

- Sort the samples and identify the shortest interval that contains 95% of these values. This is the 95% HDI.
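The sorting step can be sketched as follows: slide a window containing the required share of sorted draws and keep the narrowest one. The function name and the example draws are illustrative.

```python
import math


def hdi(samples, mass=0.95):
    """Shortest interval containing the requested share of the draws."""
    ordered = sorted(samples)
    n = len(ordered)
    # Number of draws the interval must contain; the small offset guards against float rounding.
    window = max(1, math.ceil(mass * n - 1e-9))
    start = min(range(n - window + 1), key=lambda i: ordered[i + window - 1] - ordered[i])
    return ordered[start], ordered[start + window - 1]


# Hypothetical right-skewed posterior draws (deterministic quantiles of an exponential).
draws = [-math.log(1.0 - (i + 0.5) / 1000) for i in range(1000)]
low, high = hdi(draws)
print(low, high)
```

For a skewed posterior such as this one, the HDI starts at the smallest draw, whereas an equal-tailed interval would cut off the region of highest density near zero.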

Workflow: From Prior and Data to Posterior HDI

Region of Practical Equivalence (ROPE)

The ROPE defines a range of parameter values considered practically equivalent to no effect (e.g., a difference in concentration of ±0.2 pg/mg, deemed biologically negligible). It is used in conjunction with the HDI to declare an effect as practically significant, negligible, or ambiguous.

Decision Protocol:

- Define ROPE: Based on domain expertise. For a standardized mean difference (Cohen's d), a common ROPE is [-0.1, 0.1].

- Compare Entire 95% HDI to ROPE:

- If the entire HDI falls inside the ROPE, accept the null (practically equivalent).

- If the entire HDI falls outside the ROPE, reject the null (practically significant).

- If the HDI overlaps the ROPE, the result is ambiguous (data insensitive).
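The decision protocol maps directly onto a three-way comparison of interval bounds. This is a minimal sketch; the function name, return labels, and example intervals are illustrative.

```python
def rope_decision(hdi_low, hdi_high, rope_low, rope_high):
    """Classify an effect by comparing the HDI with the ROPE."""
    if rope_low <= hdi_low and hdi_high <= rope_high:
        return "practically equivalent"   # entire HDI inside the ROPE
    if hdi_high < rope_low or hdi_low > rope_high:
        return "practically significant"  # entire HDI outside the ROPE
    return "ambiguous"                    # HDI and ROPE overlap


print(rope_decision(0.20, 0.70, -0.15, 0.15))
print(rope_decision(-0.05, 0.08, -0.10, 0.10))
print(rope_decision(0.10, 0.70, -0.15, 0.15))
```

Note that an HDI whose lower bound sits just inside the ROPE is classified as ambiguous, however large the upper bound is.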

ROPE-based Decision Logic for Practical Significance

Bayes Factor (BF)

The Bayes Factor quantifies the relative evidence for one statistical model (e.g., H₁: effect exists) over another (e.g., H₀: no effect). BF₁₀ = 10 means the data are 10 times more likely under H₁ than H₀.

Calculation Methodology (Simplified):

- Specify Models: Precisely define H₀ and H₁, including priors for all parameters (e.g., H₀: δ = 0; H₁: δ ~ Cauchy(0, 0.707)).

- Compute Marginal Likelihood: For each model, calculate the probability of the observed data averaged over all possible parameter values defined by the prior. This is often computationally intensive.

- Take the Ratio: BF₁₀ = (Marginal Likelihood under H₁) / (Marginal Likelihood under H₀).
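The three steps can be sketched for the simplest case: an observed mean difference with a known standard error. As an assumption made purely so the result can be checked in closed form, H₁ uses a Normal(0, prior_sd) prior on δ instead of the Cauchy prior mentioned above. All numbers are hypothetical.

```python
import math


def normal_pdf(x, mean, sd):
    """Density of Normal(mean, sd) at x."""
    return math.exp(-0.5 * ((x - mean) / sd) ** 2) / (sd * math.sqrt(2.0 * math.pi))


def bf10_numeric(ybar, se, prior_sd, grid_points=20001, width=10.0):
    """BF10 via trapezoidal integration of likelihood x prior over delta."""
    low, high = -width * prior_sd, width * prior_sd
    step = (high - low) / (grid_points - 1)
    total = 0.0
    for i in range(grid_points):
        delta = low + i * step
        weight = 0.5 if i in (0, grid_points - 1) else 1.0
        total += weight * normal_pdf(ybar, delta, se) * normal_pdf(delta, 0.0, prior_sd)
    marginal_h1 = total * step                 # data probability averaged over the prior
    marginal_h0 = normal_pdf(ybar, 0.0, se)    # H0 fixes delta at zero
    return marginal_h1 / marginal_h0


def bf10_analytic(ybar, se, prior_sd):
    """Closed form: under H1 the observed mean is Normal(0, sqrt(se^2 + prior_sd^2))."""
    return normal_pdf(ybar, 0.0, math.sqrt(se ** 2 + prior_sd ** 2)) / normal_pdf(ybar, 0.0, se)


# Hypothetical observed difference of 0.4 with standard error 0.15.
print(bf10_numeric(0.4, 0.15, 0.5), bf10_analytic(0.4, 0.15, 0.5))
```

Re-running with a different `prior_sd` shows directly how strongly the Bayes factor depends on the prior placed on the effect size.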

Quantitative Comparison of Methods

Table 1: Interpretation of Bayes Factor Values

| BF₁₀ Value | Evidence Category for H₁ over H₀ |

|---|---|

| > 100 | Decisive |

| 30 – 100 | Very Strong |

| 10 – 30 | Strong |

| 3 – 10 | Moderate |

| 1 – 3 | Anecdotal |

| 1 | No evidence |

| 1/3 – 1 | Anecdotal for H₀ |

| 1/10 – 1/3 | Moderate for H₀ |

| < 1/10 | Strong (or stronger) for H₀ |

Table 2: Application in a Hypothetical Neurochemical Study (Difference in Striatal Serotonin)

| Method | Result (Hypothetical) | Interpretation for the Researcher |

|---|---|---|

| 95% HDI | 0.4 [0.1, 0.7] pg/mg | "There is a 95% probability the true increase is between 0.1 and 0.7 pg/mg." |

| ROPE ([-0.05, 0.05]) | HDI excludes ROPE | "The effect is of practical significance (not negligible)." |

| Bayes Factor | BF₁₀ = 8.2 | "Moderate evidence (≈8x) for an effect over no effect." |

Table 3: Strengths and Limitations for Small-n Studies

| Method | Primary Strength | Key Limitation in Small-n Context |

|---|---|---|

| Credible Interval | Direct probabilistic interpretation. Incorporates prior knowledge. | Width heavily influenced by sample size; may remain wide. |

| ROPE | Translates statistical effect to practical/biological significance. | Requires justified, context-specific ROPE definition. |

| Bayes Factor | Quantifies evidence for/against a hypothesis. Can favor H₀. | Highly sensitive to prior specification on effect size. Computationally complex. |

Experimental Protocol: A Bayesian Workflow for Microdialysis Data

Objective: To compare extracellular glutamate levels in the prefrontal cortex between a drug-treated and vehicle-treated group (n=8 per group).

Protocol:

- Data Collection: Perform in vivo microdialysis. Collect fractions at baseline and post-administration. Quantify glutamate via HPLC.

- Define Analysis Metric: Calculate % change from baseline for each subject, then the mean difference (Δ) between groups.

- Specify Bayesian Model (using `brms` in R or equivalent):

  - Likelihood: `Δ_observed ~ Normal(μ, σ)`

  - Prior for `μ` (effect): `Normal(0, 5)` (weakly informative; centred on no effect with a prior SD of 5 in the units of Δ)

  - Prior for `σ` (sd): `Exponential(1)`

- Compute Posterior: Run MCMC sampling (4 chains, 4000 iterations).

- Interpretation:

  - Extract 95% HDI for `μ`.

  - Define ROPE as [-2%, 2%] based on assay variability.

  - Compute BF comparing a model where `μ` is estimated to one where `μ = 0`.

The Scientist's Toolkit: Research Reagent & Software Solutions

Table 4: Essential Tools for Bayesian Neurochemical Analysis

| Item | Function & Relevance | Example Product/Software |

|---|---|---|

| HPLC-ECD/MS Systems | Quantifies monoamines, amino acids, etc. Generates the primary continuous data for analysis. | Thermo Vanquish, Waters ACQUITY, BASi LC-4C |

| Statistical Software (R/Python) | Core environment for Bayesian modeling and visualization. | R (RStudio), Python (Jupyter) |

| Bayesian Modeling Packages | Facilitates model specification, MCMC sampling, and diagnostic checks. | brms, rstanarm, PyMC, Stan |

| MCMC Diagnostic Tools | Assesses chain convergence, a critical step for valid inference. | bayesplot, ArviZ (Python), R-hat & n_eff statistics |

| Domain-Specific ROPE Guidelines | Published criteria for biologically negligible changes in neurochemistry. | Literature on assay variance & minimal physiological effect sizes |

This whitepaper serves as a practical chapter within a broader thesis advocating for the adoption of Bayesian statistical frameworks in small-sample neurochemical research. Traditional frequentist analyses often fail in pilot studies due to low statistical power, inability to incorporate prior knowledge, and non-intuitive output (e.g., p-values vs. direct probabilities). Here, we demonstrate a Bayesian workflow for analyzing neurotransmitter changes in a rodent model following an experimental treatment, showcasing how it provides more informative and actionable results for drug development professionals.

Experimental Protocol: A Pilot Study on SSRI Treatment

Objective: To assess the effects of a 14-day administration of a selective serotonin reuptake inhibitor (SSRI) on extracellular levels of serotonin (5-HT), dopamine (DA), and the serotonin metabolite 5-hydroxyindoleacetic acid (5-HIAA) in the medial prefrontal cortex (mPFC) of rats.

Detailed Methodology:

- Animals: Male Sprague-Dawley rats (n=8 total, n=4 per group: Vehicle vs. SSRI). Small N is intentional for a pilot study.

- Treatment: Daily intraperitoneal injections of either vehicle (saline) or the SSRI (10 mg/kg) for 14 days.

- In Vivo Microdialysis: On day 15, a microdialysis probe (CMA 12, 4 mm membrane) was implanted into the mPFC (AP: +3.2 mm, ML: ±0.8 mm, DV: -5.0 mm from bregma). After a 24-hour recovery and 2-hour habituation, baseline dialysate was collected every 20 minutes for 2 hours.

- Sample Analysis: Dialysate samples were analyzed using High-Performance Liquid Chromatography with electrochemical detection (HPLC-ECD). Analytes were separated on a C18 reverse-phase column (ESA HR-80) with a mobile phase (pH 3.8) and detected by a dual-electrode analytical cell.

- Data Point: The mean baseline concentration (pg/µL) for each analyte across the 2-hour collection period was used for statistical analysis.

Data Presentation

Table 1: Raw Neurotransmitter Data (Mean Baseline Concentration, pg/µL)

| Animal ID | Group | 5-HT | 5-HIAA | DA |

|---|---|---|---|---|

| R01 | Vehicle | 0.12 | 45.2 | 0.08 |

| R02 | Vehicle | 0.15 | 48.7 | 0.10 |

| R03 | Vehicle | 0.09 | 42.1 | 0.06 |

| R04 | Vehicle | 0.11 | 46.5 | 0.09 |

| R05 | SSRI | 0.31 | 38.4 | 0.07 |

| R06 | SSRI | 0.25 | 35.8 | 0.09 |

| R07 | SSRI | 0.28 | 40.1 | 0.10 |

| R08 | SSRI | 0.33 | 36.9 | 0.08 |
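Before fitting any model, it helps to compute the raw group summaries as a sanity check. The sketch below uses the values from Table 1; posterior summaries from a model with priors and a robust likelihood will generally not coincide exactly with these raw differences.

```python
from statistics import fmean, stdev

# Mean baseline concentrations (pg/µL) transcribed from Table 1.
data = {
    "5-HT": {"Vehicle": [0.12, 0.15, 0.09, 0.11], "SSRI": [0.31, 0.25, 0.28, 0.33]},
    "5-HIAA": {"Vehicle": [45.2, 48.7, 42.1, 46.5], "SSRI": [38.4, 35.8, 40.1, 36.9]},
    "DA": {"Vehicle": [0.08, 0.10, 0.06, 0.09], "SSRI": [0.07, 0.09, 0.10, 0.08]},
}


def raw_difference(analyte):
    """Difference in sample means, SSRI minus Vehicle."""
    groups = data[analyte]
    return fmean(groups["SSRI"]) - fmean(groups["Vehicle"])


for analyte, groups in data.items():
    summary = ", ".join(f"{g}: {fmean(v):.4f} (SD {stdev(v):.4f})" for g, v in groups.items())
    print(f"{analyte}: {summary}; difference = {raw_difference(analyte):+.4f}")
```

For these data the raw differences are +0.175 pg/µL for 5-HT, -7.825 pg/µL for 5-HIAA, and +0.0025 pg/µL for DA.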

Table 2: Bayesian Analysis Summary (Posterior Distributions)

| Analyte | Model | Mean Difference (SSRI - Vehicle) | 95% Highest Density Interval (HDI) | Probability of Effect > 0 | Bayes Factor (BF10) |

|---|---|---|---|---|---|

| 5-HT | Robust T-test | +0.17 pg/µL | [0.13, 0.22] | >99.9% | >100 (Extreme evidence) |

| 5-HIAA | Robust T-test | -8.4 pg/µL | [-13.1, -3.8] | 0.2% (99.8% probability of a decrease) | 85.2 (Very strong evidence) |

| DA | Robust T-test | -0.005 pg/µL | [-0.03, +0.02] | 32.1% | 0.41 (Anecdotal evidence for H0) |

Interpretation: The SSRI treatment almost certainly increases extracellular 5-HT and decreases its metabolite 5-HIAA. There is no meaningful evidence for an effect on DA levels in this pilot study.

Visualizing Pathways & Workflow

Diagram 1: SSRI Action on Serotonergic Synapse

Diagram 2: Bayesian Pilot Study Workflow

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for Neurochemical Microdialysis Studies

| Item | Function & Rationale |

|---|---|

| CMA 12 Microdialysis Probe | Implanted into brain tissue; semi-permeable membrane allows passive diffusion of extracellular fluid analytes (e.g., 5-HT) into the perfusate for collection. |

| Artificial Cerebrospinal Fluid (aCSF) | Perfusion fluid mimicking ionic composition of brain extracellular fluid. Some protocols add a serotonin reuptake inhibitor (e.g., citalopram) to the perfusate to raise 5-HT above the detection limit; in a study of SSRI effects this would confound the treatment comparison. |

| HPLC-ECD System with HR-80 Column | Gold standard for separation (column) and detection (electrochemical cell) of monoamines and metabolites with high sensitivity (pg/µL range). |

| SSRI Reference Standard | High-purity chemical used to validate the HPLC method, prepare calibration curves, and confirm drug presence in pharmacokinetic studies. |

| Enzyme-Linked Immunosorbent Assay (ELISA) Kits | Alternative/confirmatory method for specific analytes (e.g., BDNF, cytokines) in dialysate when multiplexing beyond classic neurotransmitters. |

| MCMC Sampling Software (Stan/PyMC) | Computational engine for Bayesian inference. Specifies probability models and performs Hamiltonian Monte Carlo sampling to generate posterior distributions. |

Solving Common Problems: Priors, Convergence, and Model Checks in Small Studies

Within Bayesian statistics for small neurochemical studies, prior distributions encode existing knowledge about parameters (e.g., receptor occupancy, neurotransmitter concentration). In studies with limited sample sizes (n < 20), posterior estimates can be unduly sensitive to prior specification, threatening the validity of conclusions. This guide provides a technical framework for conducting and reporting comprehensive prior sensitivity and robustness analyses, a critical component for credible inference in early-stage drug development.

Formal Framework for Prior Sensitivity

The sensitivity of a posterior inference to the prior is quantified by the rate of change in the posterior with respect to changes in the prior. For a parameter θ, data D, prior π(θ), and posterior p(θ|D) ∝ L(D|θ)π(θ), a local sensitivity measure can be derived from the derivative of the log-posterior with respect to the prior hyperparameters. A practical global measure is the ε-contamination model: π_α(θ) = (1 − ε) π_0(θ) + ε q(θ), where π_0 is the base prior, q is a contaminating prior, and ε ∈ [0, 1] is the contamination weight. Robustness is assessed by observing how key posterior summaries (mean, credible interval) vary as ε and q vary.
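
One consequence of the ε-contamination form is worth writing out, because it makes the analysis cheap to compute. Writing m_0(D) and m_q(D) for the marginal likelihoods under the base and contaminating priors (notation introduced here for illustration), the posterior under the mixture prior is itself a mixture of the two component posteriors:

```latex
\begin{aligned}
p_\varepsilon(\theta \mid D)
  &= w_\varepsilon \, p_0(\theta \mid D) + (1 - w_\varepsilon)\, p_q(\theta \mid D), \\
w_\varepsilon
  &= \frac{(1-\varepsilon)\, m_0(D)}{(1-\varepsilon)\, m_0(D) + \varepsilon\, m_q(D)}, \\
m_0(D) &= \int L(D \mid \theta)\, \pi_0(\theta)\, d\theta, \qquad
m_q(D) = \int L(D \mid \theta)\, q(\theta)\, d\theta .
\end{aligned}
```

The posterior weight on the base prior therefore falls faster than 1 − ε when the contaminating prior predicts the data better (m_q > m_0), and more slowly when it predicts them worse.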

Table 1: Common Prior Families & Hyperparameters in Neurochemistry

| Parameter Type | Common Prior Family | Typical Hyperparameters | Sensitivity Focus |

|---|---|---|---|

| Receptor Binding (Kd) | Log-Normal | μ (log-scale mean), σ (log-scale SD) | σ (scale parameter) |

| Baseline Concentration (μM) | Gamma | α (shape), β (rate) | α, β (small values imply high variance) |

| Treatment Effect (Δ) | Normal | μ_0 (mean), τ_0 (precision) | τ_0 (prior precision) |

| Variance (σ²) | Inverse-Gamma | ν (shape), s² (scale) | ν (small values imply weak information) |
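
Before running any sensitivity analysis it can help to translate hyperparameters into quantities on the measurement scale. The sketch below does this for three of the families in the table using their standard moment formulas; the helper names and the hyperparameter values are illustrative assumptions, not recommendations.

```python
import math


def gamma_summary(alpha, beta):
    """Mean and SD of a Gamma prior with shape alpha and rate beta."""
    return alpha / beta, math.sqrt(alpha) / beta


def lognormal_summary(mu, sigma):
    """Median and mean of a Log-Normal prior with log-scale mean mu and log-scale SD sigma."""
    return math.exp(mu), math.exp(mu + 0.5 * sigma**2)


def normal_sd_from_precision(tau0):
    """SD implied by a Normal prior parameterised by its precision tau0."""
    return 1.0 / math.sqrt(tau0)


# Illustrative hyperparameter values only.
g_mean, g_sd = gamma_summary(alpha=2.0, beta=0.5)
ln_median, ln_mean = lognormal_summary(mu=2.0, sigma=1.0)
effect_sd = normal_sd_from_precision(tau0=4.0)

print(f"Gamma(2, 0.5): mean={g_mean:.2f}, sd={g_sd:.2f}")
print(f"Log-Normal(2, 1): median={ln_median:.2f}, mean={ln_mean:.2f}")
print(f"Normal with precision 4: sd={effect_sd:.2f}")
```

Reading priors this way makes it easier to judge whether a hyperparameter range is biologically plausible before it is placed on a grid.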

Experimental Protocols for Robustness Analysis

Protocol 3.1: Hyperparameter Grid Search

- Define Ranges: For each prior hyperparameter, define a biologically plausible range. Example: For a Gamma(α,β) prior on baseline glutamate, set α ∈ [0.5, 3], β ∈ [0.1, 1].

- Generate Grid: Create a full factorial grid of hyperparameter combinations.

- Compute Posteriors: For each grid point, compute the posterior distribution of the target parameter (e.g., treatment effect size).

- Summarize Variation: Extract the posterior mean and 95% Highest Density Interval (HDI) for each posterior.

- Analyze: Calculate the range and standard deviation of posterior means across the grid. Report the maximum deviation from the base model's estimate.
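
The steps above can be sketched as follows. To stay self-contained, the example makes several simplifying assumptions: the data are hypothetical glutamate readings, the measurement SD is treated as known, the target is the baseline itself rather than a treatment effect, and only posterior means (not HDIs) are summarized. The posterior is computed by brute-force numerical integration on a grid rather than by MCMC.

```python
import itertools
import math

import numpy as np

# Hypothetical baseline glutamate readings (µM) and an assumed known SD.
y = np.array([2.1, 2.6, 1.8, 2.4])
sigma = 0.4

# Evenly spaced grid over the parameter, used for numerical integration.
theta = np.linspace(0.01, 10.0, 4000)


def posterior_mean(alpha, beta):
    """Posterior mean of the baseline under a Gamma(shape=alpha, rate=beta) prior."""
    log_prior = (alpha * math.log(beta) - math.lgamma(alpha)
                 + (alpha - 1.0) * np.log(theta) - beta * theta)
    log_lik = -0.5 * (((y[:, None] - theta[None, :]) / sigma) ** 2).sum(axis=0)
    log_post = log_prior + log_lik
    weights = np.exp(log_post - log_post.max())  # subtract max for stability
    weights /= weights.sum()
    return float((theta * weights).sum())


# Full factorial grid over the hyperparameter ranges from the protocol.
alphas = np.linspace(0.5, 3.0, 6)
betas = np.linspace(0.1, 1.0, 4)
results = {(a, b): posterior_mean(a, b) for a, b in itertools.product(alphas, betas)}

base = posterior_mean(2.0, 0.5)  # base model assumed to be Gamma(2, 0.5)
means = np.array(list(results.values()))
mean_range = float(means.max() - means.min())
max_deviation = float(np.abs(means - base).max())

print(f"base posterior mean: {base:.3f} µM")
print(f"range of posterior means across grid: {mean_range:.3f} µM")
print(f"maximum deviation from base: {max_deviation:.3f} µM")
```

In a real analysis each grid point would be a separate model fit, which is why the grid is usually kept coarse or run in parallel.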

Protocol 3.2: ε-Contamination Analysis

- Define Base and Contaminating Priors: Let π_0 be the chosen informative prior. Define a set Q of alternative priors (e.g., flatter priors, priors centered at different values).

- Set Contamination Levels: Define a vector ε = [0.0, 0.2, 0.4, 0.6].

- Compute Mixture Posteriors: For each q ∈ Q and each ε value, compute the posterior under the mixture prior π_α.

- Visualize: Plot key posterior statistics (mean, HDI limits) against ε for each q. The slope indicates sensitivity.
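
A minimal sketch of this protocol is possible in closed form when the likelihood and both prior components are normal, because the posterior under a mixture prior is a mixture of the component posteriors, with weights rescaled by each component's marginal likelihood. The summary data, the two priors, and all function names below are hypothetical; a non-conjugate model would need sampling instead.

```python
import math


def normal_pdf(x, mean, var):
    """Density of Normal(mean, var) evaluated at x."""
    return math.exp(-0.5 * (x - mean) ** 2 / var) / math.sqrt(2.0 * math.pi * var)


def component_posterior(ybar, se, prior_mean, prior_sd):
    """Conjugate update for ybar ~ Normal(theta, se^2), theta ~ Normal(prior_mean, prior_sd^2).

    Returns the posterior mean and the marginal likelihood of ybar.
    """
    precision = 1.0 / prior_sd**2 + 1.0 / se**2
    post_mean = (prior_mean / prior_sd**2 + ybar / se**2) / precision
    marginal = normal_pdf(ybar, prior_mean, prior_sd**2 + se**2)
    return post_mean, marginal


def mixture_posterior_mean(ybar, se, base, contaminant, eps):
    """Posterior mean under the prior (1 - eps) * base + eps * contaminant."""
    mean0, m0 = component_posterior(ybar, se, *base)
    meanq, mq = component_posterior(ybar, se, *contaminant)
    w0 = (1.0 - eps) * m0 / ((1.0 - eps) * m0 + eps * mq)
    return w0 * mean0 + (1.0 - w0) * meanq


# Hypothetical summary data: observed mean effect and its standard error.
ybar, se = 12.0, 2.5
base_prior = (10.0, 2.0)   # informative base prior: Normal(10, 2^2)
flat_prior = (0.0, 50.0)   # one contaminating prior: a much flatter Normal(0, 50^2)

eps_levels = [0.0, 0.2, 0.4, 0.6]
means = [mixture_posterior_mean(ybar, se, base_prior, flat_prior, e) for e in eps_levels]
for e, m in zip(eps_levels, means):
    print(f"eps={e:.1f}: posterior mean={m:.3f}")
```

Plotting `means` against `eps_levels` gives the sensitivity curve described in the last step; repeating the loop over several contaminating priors gives one curve per q.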

Protocol 3.3: Prior-Predictive Checking (Calibration)

- Simulate: Generate N (e.g., 1000) hypothetical datasets {D_rep} from the prior-predictive distribution: p(D_rep) = ∫ p(D_rep|θ) π(θ) dθ.

- Define Test Quantity T(D): Choose a statistic relevant to the research question (e.g., sample mean difference, maximum observed concentration).

- Calculate Distribution: Compute T(D_rep) for each simulated dataset to get the prior-predictive distribution of T.

- Compare: Plot the observed T(D_actual) against this distribution. A T(D_actual) in the extreme tails suggests prior-data conflict, flagging potential sensitivity.
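
The four steps can be sketched as below, using the 5-HT values from the pilot raw-data table earlier in this article as the observed data. The three priors are hypothetical choices made only to illustrate the mechanics, and the test quantity is the difference in group means. The normal likelihood is a simplification that can produce negative simulated concentrations.

```python
import numpy as np

rng = np.random.default_rng(seed=7)

# Observed 5-HT values (pg/µL); the priors further down are hypothetical.
vehicle = np.array([0.12, 0.15, 0.09, 0.11])
ssri = np.array([0.31, 0.25, 0.28, 0.33])
t_observed = ssri.mean() - vehicle.mean()

n_sims, n_per_group = 1000, 4
t_rep = np.empty(n_sims)
for i in range(n_sims):
    # Draw one parameter set from the assumed priors.
    baseline = rng.gamma(shape=2.0, scale=0.1)     # Gamma prior on the vehicle mean
    delta = rng.normal(loc=0.0, scale=0.1)         # Normal prior on the treatment effect
    sigma = abs(rng.normal(loc=0.0, scale=0.05))   # half-Normal prior on the SD
    # Simulate one replicated dataset and compute the test quantity T.
    rep_vehicle = rng.normal(baseline, sigma, size=n_per_group)
    rep_ssri = rng.normal(baseline + delta, sigma, size=n_per_group)
    t_rep[i] = rep_ssri.mean() - rep_vehicle.mean()

# Two-sided prior-predictive tail probability of the observed statistic.
upper = float((t_rep >= t_observed).mean())
tail_prob = 2.0 * min(upper, 1.0 - upper)

print(f"observed T: {t_observed:.3f} pg/µL")
print(f"prior-predictive tail probability: {tail_prob:.3f}")
```

A small tail probability here would mean the assumed effect prior considers the observed difference surprising, which is a cue to examine how strongly that prior pulls the posterior.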

Data Presentation and Reporting Standards

Table 2: Example Robustness Analysis Summary for a Dopamine Release Study

| Analysis Type | Hyperparameter/Variant | Posterior Mean Δ [95% HDI] | Deviation from Base |

|---|---|---|---|

| Base Model | Gamma(α=2, β=0.5) | 12.5 μM [8.1, 17.2] | 0.0 (Reference) |

| Grid Search (Min) | Gamma(α=0.5, β=1) | 14.1 μM [7.8, 20.5] | +1.6 μM |

| Grid Search (Max) | Gamma(α=3, β=0.1) | 11.2 μM [9.0, 13.5] | -1.3 μM |

| ε-Contam. (Flat q, ε=0.4) | Mixture Prior | 13.0 μM [7.5, 18.8] | +0.5 μM |

| Alternative Prior | Log-Normal(μ=2, σ=1) | 12.8 μM [7.9, 17.9] | +0.3 μM |

| Sensitivity Metric | Range of Means | 2.9 μM | - |

| Sensitivity Metric | Range of HDI Widths | 8.2 μM | - |

Visualizing Workflows and Relationships

Workflow for Prior Robustness Analysis

Factors Influencing Prior Sensitivity

The Scientist's Toolkit

Table 3: Essential Research Reagent Solutions for Bayesian Neurochemical Studies

| Reagent / Tool | Function / Purpose | Example in Analysis |

|---|---|---|

| Probabilistic Programming Language (e.g., Stan, PyMC) | Enables flexible specification of Bayesian models and sampling from complex posteriors. | Implementing the ε-contamination model and sampling from mixture posteriors. |

| High-Performance Computing (HPC) or Cloud Clusters | Facilitates running large-scale robustness analyses (grid searches, simulation studies) in parallel. | Simultaneously computing posteriors for 1000+ hyperparameter combinations. |

| Sensitivity Analysis R Packages (e.g., sensemakr, bayesplot) | Provides dedicated functions for local/global sensitivity measures and visualization. | Calculating robustness values and generating tornado plots for hyperparameters. |

| Prior Database/Literature Meta-Analysis | Source of empirically justified, weakly informative hyperparameter ranges for biological parameters. | Informing the plausible range for a log-normal prior on EC₅₀ from published EC₅₀ values. |

| Interactive Visualization Dashboard (e.g., Shiny, Dash) | Allows dynamic exploration of how posterior summaries change with prior hyperparameters. | Creating a tool for co-investigators to interactively adjust priors and see updated results. |

In Bayesian analyses of small neurochemical studies, robust inference depends critically on the validity of the posterior approximations generated by Markov Chain Monte Carlo (MCMC) methods. For researchers investigating neurotransmitter dynamics, receptor binding kinetics, or drug efficacy in small-sample preclinical studies, failing to diagnose poor MCMC convergence can lead to biased parameter estimates, misleading credible intervals, and ultimately invalid scientific conclusions. This guide details the three pillars of practical MCMC convergence diagnosis (trace plots, the R-hat statistic, and effective sample size, ESS), giving the neurochemical researcher the tools needed to ensure computational reliability.

Core Convergence Diagnostics: Theory and Application

Trace Plots: Visual Assessment of Stationarity

Experimental Protocol for Visual Diagnosis:

- Run at least four independent MCMC chains from dispersed starting points (e.g., drawn from a distribution with a variance an order of magnitude larger than the expected posterior variance).

- For each parameter of interest (e.g., a dissociation constant Kd, maximal binding Bmax, or treatment effect size), plot iteration number against sampled parameter value for all chains.

- Overlay all chains on a single plot, using distinct, high-contrast colors.

Interpretation Protocol: A well-converged trace plot will show:

- Stationarity: No discernible upward or downward trend. The chains fluctuate around a stable mean.

- Good Mixing: Chains rapidly traverse the full posterior support, resembling a "hairy caterpillar."

- Overlap: All chains are intermingled, indicating they are sampling from the same target distribution.

R-hat (Gelman-Rubin Statistic): Quantifying Between-Chain Consistency

R-hat compares a pooled estimate of the posterior variance, which combines between-chain and within-chain variance, with the average within-chain variance for a given parameter. As chains converge to the common target, this ratio approaches 1.

Computational Protocol:

- Run m ≥ 4 chains for n iterations post-warmup.

- For parameter θ, calculate:

- Between-chain variance (B): n times the variance of the m chain means.

- Within-chain variance (W): Average of the within-chain variances.

- Compute the potential scale reduction factor: (\hat{R} = \sqrt{\frac{\hat{V}}{W}}) where (\hat{V} = \frac{n-1}{n}W + \frac{1}{n}B).

- The modern rank-normalized split-(\hat{R}) (Vehtari et al., 2021) is recommended, as it remains reliable for heavy-tailed posteriors and also detects chains that differ in variance rather than in mean.

Decision Threshold: An (\hat{R} < 1.01) for all parameters is typically considered evidence of convergence. Values >1.05 indicate significant between-chain variance and failure to converge.
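
The computation above can be sketched directly in NumPy. The code implements the classic formula and a split-chain variant; it does not implement the rank normalization of Vehtari et al. (2021), for which an established diagnostics library is the safer choice. The simulated chains and function names are illustrative.

```python
import numpy as np


def rhat(chains):
    """Classic potential scale reduction factor for an array of shape (m, n)."""
    m, n = chains.shape
    chain_means = chains.mean(axis=1)
    B = n * chain_means.var(ddof=1)          # n times the variance of chain means
    W = chains.var(axis=1, ddof=1).mean()    # average within-chain variance
    V_hat = (n - 1) / n * W + B / n
    return float(np.sqrt(V_hat / W))


def split_rhat(chains):
    """Split every chain in half first, so drift within a chain also inflates R-hat."""
    m, n = chains.shape
    half = n // 2
    halves = np.concatenate([chains[:, :half], chains[:, half:2 * half]], axis=0)
    return rhat(halves)


rng = np.random.default_rng(seed=3)
good = rng.normal(size=(4, 1000))                       # four chains on the same target
bad = good + np.array([[0.0], [0.0], [0.0], [1.0]])     # one chain stuck elsewhere

r_good = rhat(good)
r_good_split = split_rhat(good)
r_bad = rhat(bad)

print(f"well-mixed chains: R-hat={r_good:.3f}, split R-hat={r_good_split:.3f}")
print(f"one shifted chain: R-hat={r_bad:.3f}")
```

The shifted-chain case shows why several chains are needed: each chain looks stationary on its own, and only the comparison across chains exposes the problem.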

Table 1: R-hat Interpretation Guide for Neurochemical Parameters

| R-hat Value | Interpretation | Action for a Receptor Binding Study |

|---|---|---|

| ≤ 1.01 | Excellent convergence. | Proceed with posterior analysis of Kd and Bmax. |

| 1.01 – 1.05 | Adequate convergence. | Acceptable for preliminary analysis; consider increasing iterations. |

| 1.05 – 1.10 | Poor convergence. | Unacceptable. Increase warmup, iterations, or reparameterize model. |

| > 1.10 | Severe convergence failure. | Model or sampling algorithm is likely misspecified. |

Effective Sample Size (ESS): Quantifying Within-Chain Efficiency

ESS estimates the number of independent draws from the posterior equivalent to the autocorrelated MCMC samples. It quantifies the precision of posterior mean estimates.

Computational Protocol (Monte Carlo Standard Error):

- Estimate the autocorrelation (\hat{\rho}_l) at lag l for each chain.

- Compute the ESS for m chains of n draws each: (ESS = \frac{m \times n}{1 + 2\sum_{l=1}^{T}\hat{\rho}_l}), where T is the lag at which the sum is truncated.

- Report both bulk-ESS (for central posterior summaries like the median) and tail-ESS (for 95% credible intervals).

Decision Protocol: ESS should be sufficiently large for reliable inference.

- A bulk-ESS > 400 is a bare minimum.

- For reliable 95% interval estimates, a tail-ESS > 400 is required (Vehtari et al., 2021).

- ESS per second is a useful metric for comparing sampler efficiency.
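
The ESS formula can be sketched as below. This is a simplified estimator: autocorrelations are averaged across chains and the sum is truncated at the first negative value, which is cruder than the truncation rules in production libraries, and it returns a single ESS rather than separate bulk and tail values. The AR(1) generator is included only to create visibly autocorrelated test chains.

```python
import numpy as np


def autocorrelation(x):
    """Sample autocorrelation of one chain at lags 0..n-1."""
    n = x.size
    centred = x - x.mean()
    acov = np.correlate(centred, centred, mode="full")[n - 1:] / n
    return acov / acov[0]


def effective_sample_size(chains):
    """ESS = m*n / (1 + 2 * sum of autocorrelations) for an (m, n) array."""
    m, n = chains.shape
    rho = np.mean([autocorrelation(c) for c in chains], axis=0)
    negative = np.where(rho[1:] < 0)[0]
    T = negative[0] if negative.size else n - 1   # number of lags kept in the sum
    return m * n / (1.0 + 2.0 * rho[1:T + 1].sum())


def ar1_chains(m, n, phi, rng):
    """Simulate m autocorrelated chains x_t = phi * x_{t-1} + noise."""
    x = np.zeros((m, n))
    noise = rng.normal(size=(m, n))
    for t in range(1, n):
        x[:, t] = phi * x[:, t - 1] + noise[:, t]
    return x


rng = np.random.default_rng(seed=11)
independent = rng.normal(size=(4, 2000))
sticky = ar1_chains(4, 2000, phi=0.9, rng=rng)

ess_independent = effective_sample_size(independent)
ess_sticky = effective_sample_size(sticky)

print(f"independent draws: ESS ≈ {ess_independent:.0f} of 8000")
print(f"AR(1), phi=0.9:    ESS ≈ {ess_sticky:.0f} of 8000")
```

The contrast between the two cases illustrates why the raw number of iterations is a poor guide to precision: strongly autocorrelated chains can carry only a small fraction of their nominal sample size.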

Table 2: ESS Benchmarks for a Small Neurochemical Study (e.g., n=8 per group)

| Parameter Type | Target Bulk-ESS | Implication of Low ESS (<400) |

|---|---|---|

| Primary Treatment Effect | ≥ 1000 | Credible intervals for drug effect are unstable/unreliable. |

| Key Model Constants (e.g., Baseline) | ≥ 400 | Increased MC error in baseline estimate. |

| Variance Parameters (e.g., σ) | ≥ 400 | Poor characterization of between-sample variability. |

| All Parameters | Tail-ESS ≥ 400 | 95% CrIs for any parameter may be inaccurate. |

Integrated Diagnostic Workflow

A robust convergence check requires the sequential application of all three diagnostics.
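
One way to make the numerical part of that sequence explicit is a small decision helper that applies the R-hat and ESS thresholds from this guide to each parameter. The function name, the wording of the verdicts, and the diagnostic values are hypothetical; the visual trace-plot check still has to be done by eye.

```python
def convergence_verdict(rhat, bulk_ess, tail_ess):
    """Map summary diagnostics for one parameter to a recommended action."""
    if rhat > 1.05:
        return "fail: increase warmup or iterations, or reparameterize"
    if bulk_ess < 400 or tail_ess < 400:
        return "fail: too few effective draws; run longer chains"
    if rhat > 1.01:
        return "caution: acceptable for preliminary analysis only"
    return "pass: proceed to posterior analysis"


# Hypothetical diagnostics (R-hat, bulk-ESS, tail-ESS) for a binding model.
diagnostics = {
    "Kd": (1.004, 1850, 1320),
    "Bmax": (1.03, 900, 610),
    "sigma": (1.002, 350, 420),
}

verdicts = {name: convergence_verdict(*values) for name, values in diagnostics.items()}
for name, verdict in verdicts.items():
    print(f"{name}: {verdict}")
```

Checking R-hat before ESS reflects the ordering in the text: an ESS estimate is only meaningful once the chains agree on the target distribution.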

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational Tools for MCMC in Neurochemical Research

| Tool / Reagent | Function in Convergence Diagnosis | Example / Note |

|---|---|---|

| Probabilistic Programming Language | Implements Bayesian model and MCMC sampler. | Stan (via rstan, cmdstanr), PyMC, JAGS. Stan's NUTS sampler is state-of-the-art. |

| Diagnostic Calculation Library | Computes R-hat, ESS, and other diagnostics. | posterior R package, ArviZ (Python), Stan's built-in diagnostics. |

| Visualization Package | Generates trace plots, autocorrelation plots, and posterior densities. | bayesplot (R), ggplot2, ArviZ (Python), Matplotlib. |

| High-Performance Computing (HPC) Environment | Runs multiple long chains in parallel. | Local multi-core machines, computing clusters, or cloud resources. |

| Prior Distribution Database/Library | Informs weakly informative prior specification to improve geometry. | brms prior() functions, literature meta-analyses of neurochemical parameters. |

| Divergence & Tree Depth Monitor | Diagnoses Hamiltonian Monte Carlo (HMC/NUTS) specific sampling issues. | Stan reports divergent transitions and tree-depth saturation, which flag regions of difficult posterior geometry; the adapt_delta and max_treedepth settings are the usual remedies. |