A Practical Guide to Cross-Validation for Robust and Reproducible Neurochemical Data Analysis

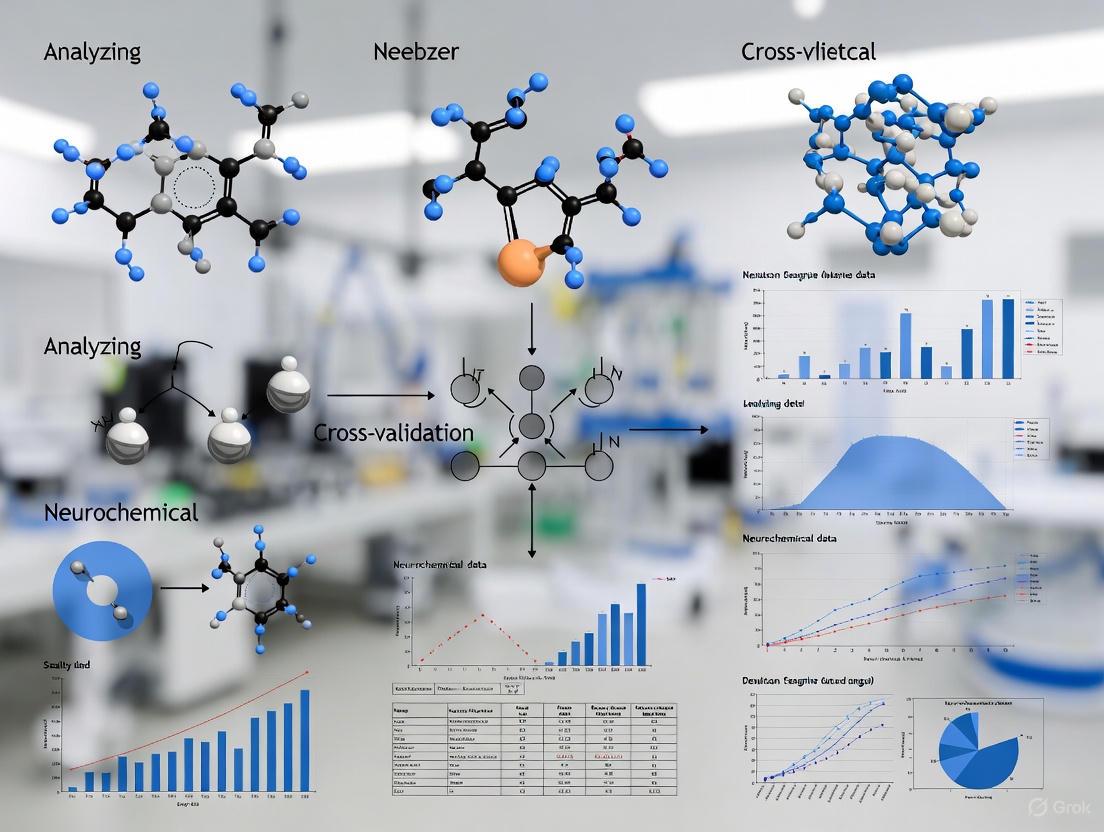


Abstract

This article provides a comprehensive guide to implementing cross-validation (CV) in neurochemical data analysis, addressing critical challenges from foundational principles to advanced validation techniques. Tailored for researchers, scientists, and drug development professionals, it explores core CV methodologies, their application to neurochemical datasets prone to non-stationarity and temporal dependencies, and strategies to mitigate overfitting and inflation of performance metrics. The content details troubleshooting common pitfalls like data leakage and overhyping, offers optimization procedures for parameter tuning, and presents rigorous frameworks for model comparison and statistical significance testing. By synthesizing these elements, this guide aims to equip practitioners with the knowledge to build more generalizable, reliable, and clinically translatable predictive models in neuroscience and drug development.

Core Principles and Critical Importance of Cross-Validation in Neurochemistry

Cross-validation (CV) is a foundational statistical procedure used to evaluate how well a predictive model will generalize to unseen data. In neurochemical research, where data collection is often expensive and sample sizes can be limited, CV provides a critical framework for robust model assessment, algorithm selection, and hyperparameter tuning. It operates by repeatedly partitioning the available dataset into complementary training and testing subsets, enabling researchers to obtain a realistic estimate of a model's predictive performance on new, independent data and to guard against over-optimistic results from overfitting [1] [2]. This guide addresses the specific challenges and solutions for applying cross-validation in the context of neurochemical data analysis.

Troubleshooting Guides

Guide 1: Addressing Inflated Accuracy Due to Temporal Dependencies

Problem: Model performance metrics are unrealistically high because the cross-validation procedure does not account for temporal dependencies or block structures in the data collection protocol.

Explanation: Neurochemical and neurophysiological data (e.g., from EEG) often contain inherent temporal correlations. Factors like participant drowsiness, nervousness, or equipment drift can create patterns that are consistent within a recording block [3] [4]. If data from the same continuous block are split into both training and testing sets, the model may learn to recognize these temporal "signatures" rather than the underlying neurochemical state of interest, leading to optimistically biased performance estimates [4].

Solution:

- Implement Block-Wise Splitting: Ensure that all data points from a single experimental block or trial are contained entirely within either the training set or the test set for any given CV fold [3] [4].

- Respect the Data Structure: Partition your data at the level of independent experimental sessions or participants, not at the level of individual, temporally adjacent samples.

Experimental Protocol for Validation:

- For a dataset with B experimental blocks, choose a k-fold CV where k <= B.

- Assign entire blocks to each fold, rather than randomly shuffling individual samples across blocks.

- Train and test your model using these block-wise folds.

- Compare the resulting accuracy with that obtained from a standard, non-block-wise CV. A significantly lower accuracy from the block-wise method indicates that the initial model was likely biased by temporal dependencies [4].
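The comparison in this protocol can be sketched with scikit-learn as follows. This is a minimal illustration on synthetic data, not the cited studies' pipeline: the block offsets, the k-nearest-neighbors classifier, and all sizes are assumptions chosen so that the only learnable structure is the block "signature".

```python
import numpy as np
from sklearn.model_selection import GroupKFold, KFold, cross_val_score
from sklearn.neighbors import KNeighborsClassifier

rng = np.random.default_rng(0)
n_blocks, n_per_block, n_features = 8, 50, 5  # hypothetical sizes

# Each block gets its own random offset (a temporal "signature"). The class
# label alternates by block and carries no signal of its own.
block_ids = np.repeat(np.arange(n_blocks), n_per_block)
y = block_ids % 2
offsets = rng.normal(scale=3.0, size=(n_blocks, n_features))
X = offsets[block_ids] + rng.normal(size=(block_ids.size, n_features))

clf = KNeighborsClassifier(n_neighbors=5)

# Standard CV: samples from one block land in both training and test sets.
standard_acc = cross_val_score(
    clf, X, y, cv=KFold(n_splits=4, shuffle=True, random_state=0)
).mean()

# Block-wise CV: every block is kept whole inside a single fold (k=4 <= B=8).
blockwise_acc = cross_val_score(
    clf, X, y, groups=block_ids, cv=GroupKFold(n_splits=4)
).mean()

print(f"standard k-fold accuracy:   {standard_acc:.2f}")
print(f"block-wise k-fold accuracy: {blockwise_acc:.2f}")
```

Because the labels here are tied only to block identity, any gap between the two numbers reflects leakage of block signatures rather than a genuine class effect.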

Guide 2: Mitigating P-Hacking and Statistical Flaws in Model Comparison

Problem: The statistical significance (p-value) indicating that one model outperforms another changes drastically based on the choice of the number of CV folds (K) and the number of CV repetitions (M). This variability can lead to "p-hacking," where researchers might inadvertently or deliberately choose CV settings that produce significant results [5].

Explanation: Using a simple paired t-test on the K x M accuracy scores from two models is a common but flawed practice. The inherent dependency between CV folds (due to overlapping training data) violates the independence assumption of the test. Research has shown that increasing K and M can artificially increase the sensitivity of the test, making it more likely to find a "significant" difference even between models with no intrinsic predictive difference [5].

Solution:

- Use Correct Statistical Tests: Employ statistical methods that account for the dependencies in CV results, such as permutation tests or dedicated tests like the corrected resampled t-test [5] [6].

- Predefine CV Settings: Finalize and report the values of K and M in your experimental design, before evaluating the models, to avoid the temptation of tuning them to achieve a desired p-value.

Experimental Protocol for Validation: A framework to illustrate this pitfall can be implemented as follows [5]:

- Train a single linear model (e.g., Logistic Regression) on your neurochemical data.

- Create two "perturbed" models by adding and subtracting a small random noise vector to the weights of the original model.

- Compare these two functionally equivalent models using different (K, M) CV setups with a paired t-test.

- The framework will demonstrate that with higher K and M, the null hypothesis (that there is no difference) is increasingly rejected, confirming the flaw of the standard test [5].

Guide 3: Preventing Data Leakage During Preprocessing

Problem: Information from the test set leaks into the training process, resulting in an overfit model and an invalid performance estimate.

Explanation: Data preprocessing steps (e.g., normalization, feature selection) must be learned from the training data only. If you apply preprocessing (like standardization) to the entire dataset before splitting it into training and test sets, the parameters of the scaler (mean and standard deviation) will have been influenced by the test samples. This gives the model an unfair advantage, as it has indirectly received information about the global distribution of the data, including the test set [7].

Solution:

- Use Pipelines: Integrate your preprocessing steps and model into a single pipeline object. This ensures that within each CV fold, the preprocessing is fit solely on the training split and then applied to the test split [7].

- Nested Cross-Validation: For a final, unbiased performance estimate when also tuning hyperparameters, use nested CV. This involves an outer CV loop for performance estimation and an inner CV loop within each training fold for model selection, perfectly isolating the test set at every stage [2] [8].

Experimental Protocol for Validation:

- Split your data into training and test sets.

- Incorrect Method: Fit a scaler on the entire dataset, then transform the entire dataset, and finally perform CV.

- Correct Method: For each fold in the CV, fit the scaler on the training split for that fold, transform both the training and test splits, train the model on the scaled training data, and score it on the scaled test data.

- Compare the performance estimates from both methods. The incorrect method will typically show an unrealistically high performance [7].
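A minimal sketch of this comparison is shown below. It uses pure-noise features with arbitrary labels, so any accuracy above chance is an artifact. Feature selection is added alongside scaling as an assumption of this example, because leakage from scaling alone is often too small to see on a toy dataset. The sample size, feature count, and `k=20` are likewise illustrative.

```python
import numpy as np
from sklearn.feature_selection import SelectKBest, f_classif
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import StratifiedKFold, cross_val_score
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

rng = np.random.default_rng(0)
X = rng.normal(size=(60, 5000))  # pure noise: no feature relates to y
y = np.repeat([0, 1], 30)
cv = StratifiedKFold(n_splits=5, shuffle=True, random_state=0)

# Incorrect: scaler and feature selector are fit on ALL samples before CV,
# so the test folds have already influenced the preprocessing.
X_scaled = StandardScaler().fit_transform(X)
X_leaky = SelectKBest(f_classif, k=20).fit_transform(X_scaled, y)
leaky_acc = cross_val_score(
    LogisticRegression(max_iter=1000), X_leaky, y, cv=cv
).mean()

# Correct: the pipeline refits every preprocessing step on each training split.
pipe = make_pipeline(
    StandardScaler(),
    SelectKBest(f_classif, k=20),
    LogisticRegression(max_iter=1000),
)
honest_acc = cross_val_score(pipe, X, y, cv=cv).mean()

print(f"preprocessing outside CV: {leaky_acc:.2f}")
print(f"preprocessing inside CV:  {honest_acc:.2f}")
```

The pipeline version should hover around chance, which is the right answer for data that contain no signal.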

Frequently Asked Questions (FAQs)

FAQ 1: What is the optimal number of folds (K) to use in k-fold cross-validation for typical neurochemical datasets?

There is no universal optimal value for K. The choice involves a bias-variance tradeoff [8].

- Lower K (e.g., 5): Provides a lower variance in the performance estimate (because each test set is larger) but a higher bias (because the training sets are smaller and may not be fully representative).

- Higher K (e.g., 10 or Leave-One-Out): Reduces bias (by providing larger training sets) but increases the variance of the estimate (because the test sets are small, leading to noisy performance scores).

For many neurochemical studies with small-to-moderate sample sizes, K=5 or K=10 is a common and practical choice [2] [8]. It is recommended to use stratified k-fold CV for classification problems to preserve the proportion of each class in every fold, which is especially important for imbalanced datasets [8].
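As a small illustration of stratification, the sketch below splits a hypothetical imbalanced label vector (80 vs. 20 samples) with scikit-learn's `StratifiedKFold` and counts the minority-class samples in each test fold. The class sizes and the placeholder feature matrix are assumptions for the example.

```python
import numpy as np
from sklearn.model_selection import StratifiedKFold

# Hypothetical imbalanced labels: 80 samples of class 0, 20 of class 1.
y = np.array([0] * 80 + [1] * 20)
X = np.zeros((y.size, 1))  # placeholder features; only y drives the split

skf = StratifiedKFold(n_splits=5, shuffle=True, random_state=0)
minority_per_fold = [int(y[test_idx].sum()) for _, test_idx in skf.split(X, y)]
fold_sizes = [len(test_idx) for _, test_idx in skf.split(X, y)]

print("minority samples per test fold:", minority_per_fold)
print("test fold sizes:", fold_sizes)
```

Each test fold keeps the 20% minority share of the full dataset, which a plain shuffled `KFold` does not guarantee.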

FAQ 2: How should I split my data if multiple measurements come from the same subject?

You must perform subject-wise (or patient-wise) splitting [2] [8]. All measurements from a single subject must be kept together in either the training set or the test set for a given CV fold. Splitting individual records from the same subject across training and test sets (record-wise splitting) leads to data leakage and massively inflated, unrealistic performance, as the model can learn to identify individuals rather than the generalizable neurochemical signal of interest [8].
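One way to enforce subject-wise splitting in scikit-learn is to pass subject identifiers as `groups` to a group-aware splitter. The sketch below uses `GroupKFold` on made-up subject IDs (10 subjects with 6 records each, both assumptions) and checks that no subject ever appears on both sides of a split.

```python
import numpy as np
from sklearn.model_selection import GroupKFold

n_subjects, n_records = 10, 6  # hypothetical study size
subject_ids = np.repeat(np.arange(n_subjects), n_records)
X = np.zeros((subject_ids.size, 3))  # placeholder features
y = subject_ids % 2                  # placeholder per-subject label

shared_subjects = []
for train_idx, test_idx in GroupKFold(n_splits=5).split(X, y, groups=subject_ids):
    overlap = set(subject_ids[train_idx]) & set(subject_ids[test_idx])
    shared_subjects.append(len(overlap))

print("subjects shared between train and test, per fold:", shared_subjects)
```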

FAQ 3: When is a simple holdout test set preferable to cross-validation?

A holdout test set (a single train/test split) is preferable when you have a very large dataset, such that the holdout test set is itself large enough to be a reliable and representative estimate of generalization performance [2]. However, for the typical small-to-moderate sized datasets in neurochemical research, CV is almost always preferred because it makes more efficient use of the available data and provides a more stable performance estimate [2] [8].

FAQ 4: What is the difference between cross-validation used for performance estimation versus hyperparameter tuning?

It is critical to distinguish these two purposes:

- Performance Estimation: The goal is to get an unbiased estimate of how a fully-specified model (with its hyperparameters already set) will perform on future data.

- Hyperparameter Tuning: The goal is to find the best hyperparameters for your model.

To avoid optimism bias, you cannot use the same CV procedure for both. Using the same CV for tuning and performance estimation will tune the model to that specific data, overfitting the test folds. The solution is Nested Cross-Validation, where an inner CV loop is used for tuning within the training set of an outer CV loop that is used for final performance estimation [2] [8].

The following table summarizes key quantitative findings from research on the impact of cross-validation configurations, illustrating potential pitfalls.

Table 1: Impact of Cross-Validation Configurations on Model Comparison

| Dataset | CV Configuration | Key Finding | Practical Implication |
|---|---|---|---|
| Adolescent Brain Cognitive Development (ABCD) [5] | Varying folds (K) & repetitions (M) | The rate of falsely detecting a significant difference between models increased by an average of 0.49 from M=1 to M=10 across K settings [5]. | Increased CV repetitions can artificially inflate statistical significance in model comparison, leading to p-hacking [5]. |
| EEG n-back Datasets [3] [4] | Block-wise vs. standard k-fold split | Classification accuracy for a Filter Bank Common Spatial Pattern (FBCSP) classifier differed by up to 30.4% between validation schemes [3] [4]. | Ignoring temporal block structure can severely inflate accuracy metrics, making conclusions unreliable [3] [4]. |
| fMRI Decoding Studies [4] | Leave-one-sample-out vs. independent test set | Leave-one-sample-out CV overestimated performance by up to 43% compared to evaluations on independent test sets [4]. | Simple CV schemes that ignore temporal dependencies can provide a highly misleading picture of a model's true utility [4]. |

Experimental Protocols

Protocol 1: A Framework for Unbiased Model Comparison

This protocol is designed to rigorously test whether the observed superiority of a new model is genuine or an artifact of the cross-validation setup [5].

- Objective: To assess the impact of CV setups (the number of folds K and repetitions M) on the statistical significance of accuracy differences between two models.

- Method:

a. From your dataset, randomly select a balanced set of N samples per class.

b. Train a baseline model (e.g., Logistic Regression) on the data.

c. Create two "perturbed" models by adding and subtracting a small random Gaussian noise vector (with standard deviation 1/E, where E is a perturbation level) to the weights of the baseline model. This creates two models with no intrinsic difference in predictive power.

d. Evaluate the two perturbed models using repeated K-fold CV (with various K and M combinations).

e. Use a statistical test (e.g., a paired t-test) to compare the K x M accuracy scores of the two models.

- Expected Outcome: If the testing procedure is unbiased, it should rarely (e.g., <5% of the time) find a significant difference (p < 0.05) between the two equivalent models. However, this framework demonstrates that with higher K and M, the rate of false positives increases significantly [5].
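The perturbation framework can be sketched roughly as below. This is an interpretation rather than the implementation from [5]: it assumes the baseline is refit on each training split and that the same noise vector is added to and subtracted from its weights. The helper name `perturbed_pair_scores`, the synthetic dataset, and `E=10.0` are all hypothetical.

```python
import numpy as np
from scipy import stats
from sklearn.datasets import make_classification
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import RepeatedStratifiedKFold


def perturbed_pair_scores(X, y, n_splits, n_repeats, E=10.0, seed=0):
    """Return K*M accuracies for two models that differ only by +/- noise."""
    rng = np.random.default_rng(seed)
    cv = RepeatedStratifiedKFold(
        n_splits=n_splits, n_repeats=n_repeats, random_state=seed
    )
    acc_plus, acc_minus = [], []
    for train_idx, test_idx in cv.split(X, y):
        base = LogisticRegression(max_iter=1000).fit(X[train_idx], y[train_idx])
        # Gaussian noise with standard deviation 1/E, as in step (c).
        noise = rng.normal(scale=1.0 / E, size=base.coef_.shape)
        for sign, store in ((+1, acc_plus), (-1, acc_minus)):
            weights = (base.coef_ + sign * noise).ravel()
            logits = X[test_idx] @ weights + base.intercept_[0]
            predictions = (logits > 0).astype(int)  # labels are 0/1 here
            store.append(np.mean(predictions == y[test_idx]))
    return np.array(acc_plus), np.array(acc_minus)


X, y = make_classification(n_samples=200, n_features=20, random_state=0)
for K, M in [(2, 1), (10, 1), (10, 10)]:
    scores_a, scores_b = perturbed_pair_scores(X, y, K, M)
    # Naive paired t-test on the K*M scores (the practice the protocol probes).
    p_value = stats.ttest_rel(scores_a, scores_b).pvalue
    print(f"K={K:2d}, M={M:2d}: n_scores={scores_a.size}, naive p={p_value:.3f}")
```

A single p-value per setting says little on its own. To estimate a false-positive rate, the loop would need to be repeated over many noise draws (different `seed` values), counting how often p < 0.05 for each (K, M).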

Protocol 2: Evaluating the Impact of Temporal Dependencies

This protocol helps determine if your model's performance is biased by temporal correlations in your data [4].

- Objective: To quantify the inflation of performance metrics caused by ignoring the block structure of data collection.

- Method:

a. Identify the natural blocks or trials in your experimental data (e.g., each 5-minute recording period or each distinct experimental condition presentation).

b. Implement two CV schemes:

* Standard K-fold: Randomly split individual samples into K folds, ignoring block structure.

* Block-wise K-fold: Assign entire blocks to K folds, ensuring no data from a single block appears in both training and test sets for any fold.

c. Train and evaluate your model using both CV schemes, keeping all other factors constant.

d. Compute the performance metric (e.g., accuracy) for both schemes.

- Expected Outcome: A large performance difference (e.g., >10%) between the standard and block-wise CV results indicates that the standard approach is likely producing a positively biased estimate due to temporal dependencies [3] [4].

Workflow Visualization

Cross-Validation Decision Workflow

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Computational Tools for Neurochemical Modeling

| Tool / 'Reagent' | Function / 'Role in Experiment' | Key Feature / 'Stability' |
|---|---|---|
| Scikit-learn (Python) [7] | A comprehensive library providing implementations of various cross-validation schemes, machine learning models, and preprocessing utilities. | Offers robust, well-tested, and consistent APIs for building modeling pipelines, ensuring reproducibility. |
| Stratified K-Fold [8] | A CV "reagent" that preserves the percentage of samples for each class in every fold. | Prevents bias in performance estimation that can occur with imbalanced class distributions, a common issue in clinical data. |
| Pipeline Object [7] | A container that sequentially applies a list of transforms and a final estimator, preventing data leakage. | Ensures that preprocessing steps (like scaling) are fit only on the training data in each CV fold. |
| Permutation Tests [6] | A statistical "assay" used to compute the significance of a model's performance by comparing it to a null distribution. | Provides a non-parametric and reliable way to test hypotheses without relying on potentially flawed assumptions of normality and independence in CV scores. |
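For the permutation-test entry in the table, scikit-learn ships `permutation_test_score`, which builds a null distribution by refitting the model on shuffled labels. The sketch below is one possible usage on synthetic data. The dataset, classifier, and number of permutations are assumptions, and the test asks whether a single model beats chance, not whether one model beats another.

```python
from sklearn.datasets import make_classification
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import StratifiedKFold, permutation_test_score

# Hypothetical dataset with a small number of informative features.
X, y = make_classification(
    n_samples=120, n_features=10, n_informative=3, random_state=0
)
cv = StratifiedKFold(n_splits=5, shuffle=True, random_state=0)

# score: CV accuracy on the true labels
# perm_scores: CV accuracy for each label permutation (the null distribution)
# p_value: fraction of permutations scoring at least as well as the true labels
score, perm_scores, p_value = permutation_test_score(
    LogisticRegression(max_iter=1000),
    X,
    y,
    cv=cv,
    n_permutations=200,
    random_state=0,
)

print(f"accuracy on true labels: {score:.2f}")
print(f"mean accuracy under permutation: {perm_scores.mean():.2f}")
print(f"permutation p-value: {p_value:.4f}")
```

For grouped or block-structured data, the same function accepts a `groups` argument, in which case labels are permuted only among samples sharing a group.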

Frequently Asked Questions (FAQs)

Core Concepts

Q1: What is the fundamental purpose of cross-validation (CV) in data analysis? Cross-validation is a fundamental technique used to simulate the replicability of research findings on new data. It repeatedly partitions a single dataset to train a model on one subset and test it on another, providing an unbiased estimate of how well the model will perform on unseen data [9]. Its primary purpose is to protect against overfitting, which occurs when a model learns the specific patterns—including noise—of a training dataset, rather than the general underlying relationships, leading to poor performance on new data [10] [11].

Q2: What is the difference between "overfitting" and "overhyping"?

- Overfitting traditionally refers to a model learning the training data too well, including its noise and random fluctuations, often because it has too many parameters relative to the amount of data [10] [11].

- Overhyping is a specific, widespread, and often unintentional form of overfitting. It occurs when analysis hyperparameters (e.g., artifact rejection criteria, feature selection, frequency filter settings, choice of classifier) are tuned to improve results for a specific dataset. A model may appear excellent with one set of hyperparameters but fails to generalize to new data, even when using the same hyperparameters [10].

Q3: How does CV relate to the broader concepts of reproducibility and replicability? Reproducibility and replicability are key goals of robust science, and CV is a practical tool to achieve them [12].

- Reproducibility means obtaining consistent results when re-running the same code on the same data [13] [12].

- Replicability means obtaining consistent results when applying the same methods to new data [13] [12].

CV acts as a form of simulated replication within your existing dataset, giving you confidence that your findings are not a one-off occurrence and are likely to hold in future studies [9].

Implementation and Best Practices

Q4: What are the most common CV schemes, and when should I use them? The choice of CV scheme depends on your sample size and experimental design [9] [3].

| CV Scheme | Description | Ideal Use Case |
|---|---|---|
| Holdout | Single split into training and testing sets (e.g., 2/3 for training, 1/3 for testing). | Quick initial model evaluation; very large datasets [9]. |
| K-Fold | Data divided into K equal folds. Model is trained on K-1 folds and tested on the remaining fold, repeated K times. | Standard for small-to-medium-sized datasets; balances bias and variance [10] [5]. |
| Stratified K-Fold | Ensures each fold has an equal proportion of samples from each class. | Classification tasks with imbalanced class sizes [10]. |
| Leave-One-Subject-Out (LOSO) | Each subject's data is held out as the test set once; model is trained on all other subjects. | Clinical diagnostics; models intended to generalize to new, unseen individuals [9]. |
| Nested CV | An outer CV loop estimates model performance, while an inner CV loop selects optimal hyperparameters. | Essential when tuning hyperparameters to get an unbiased performance estimate [11]. |

Q5: Why is it critical that my training and testing data remain independent? Independence is the core principle that makes CV work. If information from the test set "leaks" into the training process, your model will be evaluated on data it has already effectively seen, leading to a highly optimistic and biased performance estimate [11]. This directly undermines the goal of assessing generalizability. A common source of non-independence is temporal dependencies in time-series data (like EEG or fMRI), where splitting data randomly without respecting the experimental block structure can allow the model to learn temporal patterns instead of true cognitive states, inflating accuracy by up to 30% [3].

Q6: How can the choice of CV setup lead to misleading conclusions or "p-hacking"? The flexibility in choosing CV parameters (number of folds, number of repetitions) can itself be a source of researcher degrees of freedom. A recent study demonstrated that even when comparing two classifiers with the same intrinsic predictive power, different K and M (repetitions) combinations could produce statistically significant p-values (p < 0.05) for their non-existent difference [5]. This means that by trying different CV setups, a researcher could inadvertently (or intentionally) "hack" their way to a significant result, exacerbating the reproducibility crisis [5].

Troubleshooting Guides

Problem 1: My model performs excellently during cross-validation but fails on new, external data.

Potential Causes and Solutions:

- Cause: Overhyping (Hyperparameter Tuning on the Entire Dataset): You may have optimized your analysis hyperparameters (e.g., feature selection, classifier settings) using the results from your initial CV, then re-run the CV with those "best" settings. This tunes the model to the specific dataset, violating the independence principle [10].

- Solution: Implement Nested Cross-Validation [11]. Use an inner CV loop within your training data to find the best hyperparameters, and an outer CV loop to evaluate the final model's performance. This ensures the test data in the outer loop is never used for any tuning decisions.

- Cause: Data Mismatch: The new data may come from a different distribution (e.g., different scanner, site, or patient population) than your training data [14].

- Cause: Inadequate CV Scheme: Your CV scheme did not properly account for the structure of your data (e.g., using random splits on temporally dependent data) [3].

Problem 2: I get highly variable performance metrics every time I re-run my cross-validation.

Potential Causes and Solutions:

- Cause: High Variance in Performance Estimate: This is common with small sample sizes and CV schemes with small test set sizes (e.g., Leave-One-Out CV with few samples) [3].

- Solution: Use a K-Fold CV with fewer, larger test folds (e.g., 5- or 10-fold instead of leave-one-out) so that each test set contains more samples and the per-fold scores are less noisy [9]. Consider repeating the K-fold CV multiple times with different random partitions and reporting the average [5]. Most importantly, strive to increase your sample size [11].

- Cause: Random Seed Dependency: The model or data splitting process is sensitive to the initial random state.

- Solution: While you should not "shop" for a random seed that gives good results, it is good practice to run CV multiple times with different seeds to understand the stability of your performance estimate. Report the mean and variance of the results.
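A simple way to gauge this stability is to rerun the same CV with several partition seeds and summarize the spread. The sketch below does this on a synthetic dataset. The data, the classifier, and the choice of 20 seeds are assumptions for illustration.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import StratifiedKFold, cross_val_score

X, y = make_classification(n_samples=100, n_features=20, random_state=0)

# One mean CV accuracy per seed; only the fold partition changes between runs.
mean_scores = np.array([
    cross_val_score(
        LogisticRegression(max_iter=1000),
        X,
        y,
        cv=StratifiedKFold(n_splits=5, shuffle=True, random_state=seed),
    ).mean()
    for seed in range(20)
])

print(f"mean accuracy across seeds: {mean_scores.mean():.3f}")
print(f"std across seeds:           {mean_scores.std(ddof=1):.3f}")
print(f"range: {mean_scores.min():.3f} to {mean_scores.max():.3f}")
```

Reporting the full spread, rather than the best seed, keeps the estimate honest.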

Problem 3: I am unsure how to statistically compare two different models using cross-validation.

Potential Cause and Solution:

- Cause: Using Invalid Statistical Tests: Applying a standard paired t-test to the K accuracy scores from a single K-fold CV run is flawed because the scores are not independent (the training sets overlap) [5].

- Solution: Use statistical tests designed for correlated samples or based on resampling. One robust approach is to perform repeated K-fold CV (e.g., 100 times) for both models, then use a paired test on the 100 resulting mean accuracy scores [5]. Always be transparent about your CV and testing procedures to avoid the potential for p-hacking [5].

This table outlines key resources for implementing reproducible, CV-based analysis in neuroimaging.

| Resource / Tool | Function / Purpose | Key Context |
|---|---|---|
| Scikit-learn (Python) | Provides efficient, standardized implementations of numerous ML models and CV schemes [9]. | De facto standard for ML in Python; simplifies creating complex, reproducible analysis pipelines. |
| PredPsych (R) | A toolbox specifically developed for psychologists to perform multivariate analyses with easy CV implementation [9]. | Lowers the programming barrier for field specialists. |
| PRoNTO | A neuroimaging toolbox with a focus on machine learning and detailed CV protocols [9]. | Designed specifically for neuroimaging data analysis. |
| Clinica / QuNex | Open-source platforms for reproducible processing of clinical neuroimaging data (MRI, PET) [12]. | Manages the entire workflow from raw data (BIDS) to processed output, ensuring reproducibility. |
| Nested CV | A workflow, not a tool, that is critical for unbiased hyperparameter tuning and performance estimation [11]. | Non-negotiable methodological practice when model configuration is part of the analysis. |
| Containerization (Docker/Singularity) | Packages code, software, and dependencies into a single, portable unit that runs consistently anywhere [12]. | Eliminates "it worked on my machine" problems, ensuring computational reproducibility. |

Experimental Protocol: Assessing Model Generalizability

This workflow details a robust methodology for evaluating a predictive model, incorporating best practices from the cited literature.

Title: Nested Cross-Validation Workflow

Objective: To obtain an unbiased estimate of a machine learning model's performance on unseen data while rigorously tuning its hyperparameters.

Procedure:

- Initial Setup: Begin with a fully acquired dataset. Define the set of analysis hyperparameters (e.g., classifier type, regularization strength) to be explored [10].

- Outer Loop (Performance Estimation): Partition the data into K folds (e.g., K=10). For each fold i (the test fold):

a. The remaining K-1 folds form the training fold.

b. Inner Loop (Hyperparameter Tuning): On the training fold only, perform a second, independent CV (e.g., 5-fold). Train the model with different hyperparameter combinations on these inner training sets and evaluate them on the inner test sets. Select the hyperparameter set that yields the best average performance [11].

c. Final Training: Train a new model on the entire training fold using the single best set of hyperparameters identified in the inner loop.

d. Testing: Apply this final model to the held-out test fold i from the outer loop to obtain a performance score. This score is unbiased because the test data was not used for any tuning.

- Aggregation: Once every outer fold has been used as the test set, aggregate the performance scores (e.g., accuracy, AUC). The final model performance is reported as the mean and standard deviation of these K scores [11].
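The procedure above maps fairly directly onto scikit-learn. The following sketch writes the outer loop out explicitly and lets `GridSearchCV` handle the inner loop. The synthetic data, the logistic-regression pipeline, and the grid over `C` are assumptions chosen for brevity.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import GridSearchCV, StratifiedKFold
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

X, y = make_classification(n_samples=150, n_features=20, random_state=0)

# Scaling lives inside the pipeline so it is refit on every training split.
pipe = make_pipeline(StandardScaler(), LogisticRegression(max_iter=1000))
param_grid = {"logisticregression__C": [0.01, 0.1, 1.0, 10.0]}

outer = StratifiedKFold(n_splits=10, shuffle=True, random_state=0)
inner = StratifiedKFold(n_splits=5, shuffle=True, random_state=1)

outer_scores, chosen_C = [], []
for train_idx, test_idx in outer.split(X, y):
    # Inner loop: tune hyperparameters on the outer training fold only.
    search = GridSearchCV(pipe, param_grid, cv=inner)
    # refit=True (the default) retrains on the whole training fold
    # with the best hyperparameters, i.e. the "Final Training" step.
    search.fit(X[train_idx], y[train_idx])
    # Testing: score once on the untouched outer test fold.
    outer_scores.append(search.score(X[test_idx], y[test_idx]))
    chosen_C.append(search.best_params_["logisticregression__C"])

print(f"nested CV accuracy: {np.mean(outer_scores):.3f} "
      f"+/- {np.std(outer_scores, ddof=1):.3f}")
print("C selected in each outer fold:", chosen_C)
```

The selected `C` may differ between outer folds. That is expected, since nested CV evaluates the whole tuning procedure rather than one fixed hyperparameter setting.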

Frequently Asked Questions (FAQs)

1. What are the most common sources of temporal dependencies in neurochemical and neuroimaging data? Temporal dependencies arise from multiple sources across different timescales. These include the intrinsic non-stationarity of neural signals themselves, minor shifts in recording hardware (like EEG sensors) over time, and cognitive-behavioral factors such as participants initially feeling nervous and then relaxing, or increasing drowsiness as an experiment progresses. Bodily needs (hunger, thirst, eye strain) can also introduce systematic changes that manifest as temporal structure in the data [3].

2. How can inappropriate cross-validation lead to inflated or biased performance metrics? If cross-validation splits do not respect the natural block structure of an experiment, data from the same continuous block can end up in both training and testing sets. The model can then learn to recognize the specific temporal "signature" or context of a block rather than the generalizable neurochemical signal of interest. One study demonstrated that this can inflate the reported classification accuracy of a common spatial pattern algorithm by up to 30.4% [3]. This creates a falsely optimistic estimate of how well the model will perform on truly new data [5].

3. What is the practical impact of choosing different cross-validation schemes? The choice of cross-validation can directly change the conclusions of a study. Research has shown that depending on whether the CV scheme respects the block structure of the data, the relative performance of classifiers can vary significantly. For instance, the same Riemannian minimum distance classifier showed accuracy differences of up to 12.7% across different CV implementations. This means a model might appear superior to another not because of its intrinsic merit, but due to an evaluation method that inadvertently introduces bias [3] [5].

4. How can I measure and account for temporal dependencies in my data? Several methods exist to quantify temporal structure:

- Autocorrelation at Lag 1 (AC1): Measures the correlation of a data series with itself at a one-time-step delay. Reaction time data, for instance, often show positive autocorrelations at short lags [16].

- Power Spectrum Density (PSD) Slope: Analyzes the data in the frequency domain. The slope of the log-power versus log-frequency relationship indicates the presence of temporal structure (e.g., a slope of 0 suggests white noise, while a slope of -2 suggests brown noise) [16].

- Detrended Fluctuation Analysis (DFA) Slope: Quantifies long-range temporal correlations by analyzing the data after removing local trends [16].

These measures have been shown to be stable individual traits and correlate with task performance, making them useful for characterizing your dataset before deciding on a validation strategy [16].
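The first two measures are straightforward to compute with NumPy and SciPy, as the sketch below suggests. The helper names `lag1_autocorrelation` and `psd_slope` are hypothetical, the Welch segment length is an arbitrary choice, and the sign convention follows Table 2 (brown noise has a slope near -2). DFA is omitted here to keep the example short.

```python
import numpy as np
from scipy import signal


def lag1_autocorrelation(x):
    """Correlation between the series and itself shifted by one sample."""
    x = np.asarray(x, dtype=float)
    x = x - x.mean()
    return float(np.dot(x[:-1], x[1:]) / np.dot(x, x))


def psd_slope(x, fs=1.0, nperseg=256, f_max=None):
    """Slope of log10(power) vs log10(frequency) from a Welch estimate."""
    freqs, power = signal.welch(x, fs=fs, nperseg=nperseg)
    keep = freqs > 0  # drop the DC bin before taking logs
    if f_max is not None:
        keep &= freqs <= f_max
    slope, _intercept = np.polyfit(np.log10(freqs[keep]), np.log10(power[keep]), 1)
    return float(slope)


rng = np.random.default_rng(0)
white = rng.normal(size=4096)  # no temporal structure
brown = np.cumsum(white)       # random walk: strong long-term dependence

for name, series in [("white", white), ("brown", brown)]:
    print(f"{name}: AC1={lag1_autocorrelation(series):.2f}, "
          f"PSD slope={psd_slope(series, f_max=0.1):.2f}")
```

Restricting the fit to low frequencies (`f_max=0.1` here, in cycles per sample) is a design choice: a sampled random walk flattens toward the Nyquist frequency, which would otherwise pull the fitted slope toward zero.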

5. What is a "block structure" in experimental design and why is it critical for data splitting? Many neurochemical experiments present conditions in long blocks (e.g., a 10-minute block of a high-workload task followed by a 10-minute rest block). This block structure means that all data samples within a single block share not only the experimental condition but also a common temporal context (e.g., the same level of participant fatigue or habituation). If data is split randomly across these blocks for cross-validation, the model can learn these confounding temporal patterns. Therefore, the best practice is to split data at the block boundary, keeping all data from entire blocks together in either training or testing sets to ensure a realistic evaluation [3].

Troubleshooting Guides

Problem: Inflated Model Accuracy During Offline Evaluation

Symptoms: Your machine learning model shows high classification accuracy during offline cross-validation, but this performance drops drastically when deployed in a real-time setting or on a truly independent dataset.

Potential Causes and Solutions:

- Cause 1: Data leakage due to a cross-validation procedure that ignores temporal dependencies.

- Solution: Implement a block-wise or group-wise cross-validation scheme. Instead of randomly assigning individual samples to folds, assign entire experimental blocks. This ensures that all data from a single continuous block is kept entirely within the same fold (either for training or testing) [3].

- Cause 2: High variance in performance metrics due to an inappropriate number of cross-validation folds.

- Solution: Optimize the bias-variance tradeoff in your CV setup. Using too few folds (e.g., 2-fold CV) leaves small training sets and can bias the accuracy estimate pessimistically, while using too many (e.g., leave-one-out CV) is computationally expensive and can increase the variance of the estimate. A repeated k-fold CV (e.g., 5 or 10 folds) is often a good compromise, but the block structure must still be respected [17].

Problem: Unreliable Comparison Between Models

Symptoms: You cannot consistently determine if one model is statistically superior to another, as the conclusion changes with different cross-validation setups.

Potential Causes and Solutions:

- Cause: The statistical test used to compare models is sensitive to the specific cross-validation configuration (number of folds K and repetitions M), rather than reflecting a true difference in intrinsic predictive power [5].

- Solution: Avoid using simple paired t-tests on repeated CV results, as this practice is known to be flawed and can produce misleading p-values. Instead, use statistical tests specifically designed for correlated cross-validation results, such as the Nadeau and Bengio corrected t-test. Furthermore, always report the exact cross-validation parameters (K, M, and most importantly, the splitting strategy) used in your comparisons to ensure transparency and reproducibility [5].
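The Nadeau and Bengio correction inflates the variance term of the paired t-statistic by the ratio of test-set to training-set size, so that overlapping training sets are not treated as independent evidence. A minimal sketch follows. The function name and the example scores are hypothetical, and the formula assumes every split uses the same `n_train` and `n_test`.

```python
import numpy as np
from scipy import stats


def corrected_resampled_ttest(scores_a, scores_b, n_train, n_test):
    """Corrected resampled t-test on paired per-fold scores of two models.

    t = mean(d) / sqrt((1/n + n_test/n_train) * var(d)), with n - 1 degrees
    of freedom, where d holds the n = K*M per-fold score differences.
    """
    d = np.asarray(scores_a, dtype=float) - np.asarray(scores_b, dtype=float)
    n = d.size
    variance = d.var(ddof=1)
    t_stat = d.mean() / np.sqrt((1.0 / n + n_test / n_train) * variance)
    p_value = 2.0 * stats.t.sf(abs(t_stat), df=n - 1)
    return float(t_stat), float(p_value)


# Hypothetical per-fold accuracies from a 5-fold CV on 100 samples.
model_a = np.array([0.80, 0.82, 0.79, 0.85, 0.81])
model_b = np.array([0.78, 0.80, 0.80, 0.82, 0.79])

t_corr, p_corr = corrected_resampled_ttest(model_a, model_b, n_train=80, n_test=20)
naive = stats.ttest_rel(model_a, model_b)

print(f"naive paired t-test: t={naive.statistic:.2f}, p={naive.pvalue:.3f}")
print(f"corrected t-test:    t={t_corr:.2f}, p={p_corr:.3f}")
```

Because the correction only enlarges the denominator, the corrected statistic is always smaller in magnitude than the naive one, which makes the test more conservative.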

Table 1: Impact of Cross-Validation Scheme on Classifier Performance [3]

| Classifier Type | Maximum Reported Accuracy Difference | Primary Cause of Variance |
|---|---|---|
| Riemannian Minimum Distance (RMDM) | 12.7% | Whether CV respected block structure of data |
| Filter Bank CSP with LDA | 30.4% | Whether CV respected block structure of data |

Table 2: Common Measures for Quantifying Temporal Dependencies [16]

| Measure | What It Quantifies | Interpretation Guide |

|---|---|---|

| Autocorrelation at Lag 1 (AC1) | Short-term dependency; how similar a data point is to the one immediately following it. | High positive value: strong short-term dependencies (e.g., slow drifts). Near zero: minimal short-term structure. |

| Power Spectrum Density (PSD) Slope | The "color" of the noise and the balance of long- vs. short-term fluctuations. | 0 (White Noise): No temporal structure. -1 (Pink Noise): Balanced structure. -2 (Brown Noise): Strong long-term dependencies. |

| Detrended Fluctuation Analysis (DFA) Slope | Long-range, power-law temporal correlations. | 0.5: No correlations (white noise). >0.5: Positive long-range correlations. <0.5: Negative long-range correlations. |

Experimental Protocols

Protocol: Implementing Block-Wise Cross-Validation

Objective: To evaluate a neurochemical state classifier in a way that prevents data leakage and provides a realistic performance estimate by respecting the experimental block structure.

- Data Preparation: Organize your preprocessed data into distinct blocks corresponding to the continuous periods of each experimental condition (e.g., Block 1: Rest, Block 2: n-back task, Block 3: Rest, Block 4: n-back task).

- Fold Generation: Instead of randomly splitting individual samples, assign entire blocks to folds. For example, in a 4-fold CV, you would have 4 folds, each containing one of the blocks.

- Model Training and Testing: Iteratively hold out one fold (one entire block) as the test set and use the remaining folds (all other blocks) as the training set.

- Performance Calculation: Train the model on the training blocks and calculate the accuracy on the held-out test block. Repeat this process until each block has been used as the test set once. The final performance metric is the average across all folds [3].
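The protocol above can be sketched with scikit-learn's `LeaveOneGroupOut`, which holds out one group (here, one block) per fold. The synthetic data, the block layout (four alternating rest/task blocks), and the choice of LDA as classifier are assumptions for illustration only.

```python
import numpy as np
from sklearn.discriminant_analysis import LinearDiscriminantAnalysis
from sklearn.model_selection import LeaveOneGroupOut, cross_val_score

rng = np.random.default_rng(0)
n_blocks, n_per_block, n_features = 4, 50, 8

# Block labels: every sample carries the index of the block it came from.
block_id = np.repeat(np.arange(n_blocks), n_per_block)
# Hypothetical design: blocks alternate between rest (0) and task (1).
y = block_id % 2
# Synthetic features with a small class-dependent shift.
X = rng.normal(size=(n_blocks * n_per_block, n_features)) + 0.5 * y[:, None]

cv = LeaveOneGroupOut()  # each fold holds out exactly one whole block
scores = cross_val_score(LinearDiscriminantAnalysis(), X, y, groups=block_id, cv=cv)

# Confirm that no block contributes samples to both sides of any split.
for train_idx, test_idx in cv.split(X, y, groups=block_id):
    assert set(block_id[train_idx]).isdisjoint(block_id[test_idx])

print("per-block accuracy:", np.round(scores, 3))
print("mean accuracy:", round(float(scores.mean()), 3))
```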

Protocol: Quantifying Temporal Dependencies in a Behavioral or Neural Time Series

Objective: To characterize the temporal structure of a univariate time series (e.g., reaction times, power of a neural oscillation) using standard metrics.

- Data Extraction: Extract the time series of interest from your data, ensuring it is from a single participant and condition to avoid confounding effects.

- Compute Autocorrelation:

- Calculate the autocorrelation function for a range of lags.

- Extract the value at lag 1 (AC1) as a measure of short-term dependency [16].

- Compute Power Spectrum Density (PSD) Slope:

- Perform a Fourier transform on the time series to obtain the power spectrum.

- Plot the log of power against the log of frequency.

- Fit a linear regression line to this log-log plot; the slope of this line is the PSD slope [16].

- Compute Detrended Fluctuation Analysis (DFA) Slope:

- Integrate the time series.

- Divide the integrated series into windows of varying sizes.

- In each window, detrend the data by subtracting a local least-squares fit.

- Calculate the average fluctuation for each window size.

- Plot the log of the fluctuation against the log of the window size and fit a line; the slope is the DFA exponent [16].
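The three metrics in this protocol can be sketched in NumPy as follows. This is a simplified illustration, not a validated implementation: the function names, the window sizes, the use of a raw (unwindowed) periodogram, and the first-order (linear) detrending are all assumptions of the sketch.

```python
import numpy as np


def ac1(x):
    """Lag-1 autocorrelation of a mean-centered series."""
    x = np.asarray(x, dtype=float) - np.mean(x)
    return np.dot(x[:-1], x[1:]) / np.dot(x, x)


def psd_slope(x):
    """Slope of log10(power) against log10(frequency) from a raw periodogram."""
    x = np.asarray(x, dtype=float) - np.mean(x)
    power = np.abs(np.fft.rfft(x)) ** 2
    freqs = np.fft.rfftfreq(x.size)
    keep = freqs > 0                    # the zero frequency has no logarithm
    slope, _ = np.polyfit(np.log10(freqs[keep]), np.log10(power[keep]), 1)
    return slope


def dfa_slope(x, window_sizes=(16, 32, 64, 128, 256)):
    """DFA exponent using linear detrending within non-overlapping windows."""
    x = np.asarray(x, dtype=float)
    profile = np.cumsum(x - x.mean())   # integrate the series
    fluctuations = []
    for w in window_sizes:
        n_win = profile.size // w
        segments = profile[: n_win * w].reshape(n_win, w).T  # shape (w, n_win)
        t = np.arange(w)
        coef = np.polyfit(t, segments, 1)                    # one line fit per window
        trend = np.outer(t, coef[0]) + coef[1]
        fluctuations.append(np.sqrt(np.mean((segments - trend) ** 2)))
    slope, _ = np.polyfit(np.log10(window_sizes), np.log10(fluctuations), 1)
    return slope


rng = np.random.default_rng(0)
white = rng.normal(size=4096)   # no temporal structure
walk = np.cumsum(white)         # strong long-term dependencies

metrics = {
    name: (ac1(sig), psd_slope(sig), dfa_slope(sig))
    for name, sig in (("white noise", white), ("random walk", walk))
}
for name, (a, p, d) in metrics.items():
    print(f"{name}: AC1={a:.2f}, PSD slope={p:.2f}, DFA slope={d:.2f}")
```

On the two synthetic series, white noise should give values near the "no structure" end of each scale, and the random walk should give values near the "strong dependencies" end.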

Signaling Pathways and Workflows

[Diagram: CV Strategy Selection]

[Diagram: Temporal Metrics Comparison]

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for Neurochemical Experimental Design & Analysis

| Item / Concept | Function / Role in Research |

|---|---|

| Block-Wise Cross-Validation | A data splitting strategy that keeps all samples from an experimental block together to prevent data leakage and provide realistic model performance estimates [3]. |

| Temporal Dependency Metrics (AC1, PSD, DFA) | Quantitative tools to characterize the structure of variability in time-series data, which is a stable individual trait and crucial for informing analysis choices [16]. |

| Binding Potential (BP) | A common endpoint in PET neuroimaging, representing the steady-state ratio of specifically bound tracer to free tracer. It serves as a surrogate for neuroreceptor density and is sensitive to changes in neurotransmitter levels [18]. |

| Positron Emission Tomography (PET) Tracers | Radiolabeled molecules (e.g., [11C]raclopride for dopamine D2/D3 receptors) that allow for the in vivo quantification of specific neurochemical targets, such as receptors, transporters, and enzymes [18]. |

| Kinetic Modeling | A mathematical framework applied to dynamic PET data to separate the PET signal into its constituent parts (e.g., blood-borne, free, specifically bound), enabling the estimation of parameters like Binding Potential [18]. |

FAQs: Core Concepts for Researchers

What is the bias-variance trade-off and why is it critical for neurochemical data analysis?

The total error of a machine learning model can be decomposed into three parts: bias², variance, and irreducible error [19] [20] [21]. The bias-variance trade-off describes the inverse relationship between a model's bias and its variance; reducing one typically increases the other [22] [23]. Your goal is to find the model complexity that minimizes the total error by striking a balance between the two [20]. For neurochemical data, which is often high-dimensional and noisy, managing this trade-off is essential for building models that generalize reliably to new, unseen experimental data.
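For reference, the decomposition can be written out for squared-error loss. The notation is an assumption of this sketch: the data follow $y = f(x) + \varepsilon$ with noise variance $\sigma^2$, $\hat{f}$ is the fitted model, and expectations are taken over training sets and noise.

```latex
\mathbb{E}\!\left[(y - \hat{f}(x))^2\right]
  = \underbrace{\left(\mathbb{E}[\hat{f}(x)] - f(x)\right)^2}_{\text{bias}^2}
  + \underbrace{\mathbb{E}\!\left[\left(\hat{f}(x) - \mathbb{E}[\hat{f}(x)]\right)^2\right]}_{\text{variance}}
  + \underbrace{\sigma^2}_{\text{irreducible error}}
```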

How do I diagnose if my model is suffering from high bias or high variance?

Diagnosing these issues involves examining your model's performance on training versus validation data [19] [23].

- High Bias (Underfitting): This occurs when your model is too simple to capture the underlying patterns in the data. The symptoms include high error on both the training and validation sets, and the learning curves (plots of error vs. training set size) for both sets converge at a high error value [19] [21].

- High Variance (Overfitting): This happens when your model is too complex and learns the noise in the training data. The key symptom is a large gap between training and validation error—your model performs well on the training data but poorly on the validation data [19] [23].

The table below summarizes the key characteristics:

| Condition | Training Error | Validation Error | Model Behavior |

|---|---|---|---|

| High Bias (Underfitting) | High | High | Oversimplified, misses data patterns [23] [21] |

| High Variance (Overfitting) | Low | High | Overly complex, memorizes noise [23] [21] |

| Ideal Balance | Acceptably Low | Acceptably Low | Generalizes well to new data [19] |
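One way to obtain the training and validation errors summarized in the table is scikit-learn's `learning_curve`. The sketch below uses a synthetic classification dataset and a logistic regression model, both chosen purely for illustration.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import learning_curve

# Synthetic stand-in for a neurochemical feature matrix.
X, y = make_classification(n_samples=300, n_features=20, n_informative=5, random_state=0)

sizes, train_scores, val_scores = learning_curve(
    LogisticRegression(max_iter=1000),
    X,
    y,
    train_sizes=np.linspace(0.2, 1.0, 5),
    cv=5,
    scoring="accuracy",
)

# Convert accuracy to error and average across the CV folds.
train_err = 1.0 - train_scores.mean(axis=1)
val_err = 1.0 - val_scores.mean(axis=1)

for n, tr, va in zip(sizes, train_err, val_err):
    print(f"n_train={n:3d}  train error={tr:.3f}  validation error={va:.3f}  gap={va - tr:.3f}")
```

Both errors staying high as the training set grows points toward high bias, while a persistent gap between them points toward high variance.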

Which cross-validation (CV) method should I use for my neuroimaging dataset?

The choice of CV is crucial for obtaining a robust performance estimate and is a primary tool for navigating the bias-variance trade-off [17]. The optimal method depends on your dataset's size and structure [17].

- k-Fold CV: The standard choice for many scenarios. It randomly splits the data into k folds, using k-1 for training and one for validation, and rotates this process [24] [17]. It offers a good balance between bias and variance; lower k (e.g., 5) has higher bias but lower variance, while higher k (e.g., 20) has lower bias but higher variance [5] [17].

- Leave-One-Out CV (LOOCV): Useful for very small datasets. It uses a single sample for validation and the rest for training. While it has low bias, it is computationally expensive and can have high variance [17].

- Stratified K-Fold: Essential for classification problems with class imbalance. It ensures each fold maintains the same proportion of class labels as the complete dataset, leading to more reliable performance estimates [21].

- Grouped CV: Critical when your data has inherent groupings (e.g., multiple samples from the same patient). This method ensures all samples from a single group are in either the training or validation set, preventing optimistic bias from data leakage [17].

I've implemented cross-validation, but my model still fails on external data. What might be wrong?

This is a common challenge, often related to the CV setup itself. A 2025 study highlights that the statistical significance of model comparisons can be highly sensitive to CV configurations (e.g., the number of folds K and repetitions M) [5]. Using a high number of folds and repetitions might lead you to conclude a model is significantly better when the difference is, in fact, due to chance (a form of p-hacking) [5]. To mitigate this:

- Use a nested cross-validation approach, where an inner loop handles model tuning and an outer loop provides an unbiased performance estimate [17].

- Hold out a completely independent test set from the entire model development and CV process, using it only for the final evaluation [17].

- Be cautious when comparing models and ensure your CV procedure is consistent and accounts for dependencies in the data [5].
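A minimal sketch of the nested cross-validation idea listed above, using scikit-learn: `GridSearchCV` forms the inner tuning loop and `cross_val_score` the outer evaluation loop. The dataset, the SVM classifier, and the small grid over `C` are assumptions for the example.

```python
from sklearn.datasets import make_classification
from sklearn.model_selection import GridSearchCV, StratifiedKFold, cross_val_score
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler
from sklearn.svm import SVC

X, y = make_classification(n_samples=200, n_features=15, n_informative=4, random_state=0)

inner_cv = StratifiedKFold(n_splits=3, shuffle=True, random_state=1)  # tuning
outer_cv = StratifiedKFold(n_splits=5, shuffle=True, random_state=2)  # evaluation

# Scaling lives inside the pipeline, so it is re-fit on each training split.
pipe = make_pipeline(StandardScaler(), SVC())
tuned_model = GridSearchCV(pipe, param_grid={"svc__C": [0.1, 1.0, 10.0]}, cv=inner_cv)

# Each outer fold runs its own inner grid search on the outer training data only.
outer_scores = cross_val_score(tuned_model, X, y, cv=outer_cv)
print(f"nested CV accuracy: {outer_scores.mean():.3f} +/- {outer_scores.std():.3f}")
```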

Troubleshooting Guides

Guide: Correcting an Underfitting Model (High Bias)

An underfitting model is too simplistic and fails to capture relevant relationships in your neurochemical data.

Symptoms:

- Consistently poor performance on both training and validation sets [19] [21].

- Learning curves show training and validation errors converging at a high value [19].

Actionable Steps:

- Increase Model Complexity: Transition from simple models (e.g., linear regression) to more flexible ones like polynomial regression, decision trees, or neural networks [19] [23].

- Engineer More Informative Features: Use your domain expertise to create new, relevant features from the raw data. Incorporating interaction terms between existing features can also help [19].

- Reduce Regularization: Regularization techniques (L1/L2) are designed to penalize complexity. If your model has high bias, decreasing the regularization strength can allow it to learn more complex patterns [19] [21].

- Increase Training Time: For iterative models like neural networks, underfitting can sometimes be alleviated by training for more epochs [19].

Guide: Correcting an Overfitting Model (High Variance)

An overfitting model has learned the training data too well, including its noise and random fluctuations, and fails to generalize.

Symptoms:

- The model's performance on the training data is excellent, but it performs poorly on the validation data [19] [23].

- A significant gap exists between the training and validation learning curves [19].

Actionable Steps:

- Acquire More Training Data: This is often the most effective solution. More data helps the model learn the true underlying distribution rather than memorizing noise [19].

- Apply Regularization: Introduce techniques like L1 (Lasso) or L2 (Ridge) regression. These methods penalize overly complex models by constraining the size of the model coefficients, which discourages overfitting [24] [23] [21].

- Reduce Model Complexity: Simplify your model. This can involve reducing the number of features through feature selection, using a simpler algorithm, or limiting parameters (e.g., pruning a decision tree or reducing the number of layers in a neural network) [19] [23].

- Use Ensemble Methods: Methods like Random Forest (bagging) combine predictions from multiple models trained on different data subsets. Averaging these predictions reduces overall variance [19] [23].

- Implement Early Stopping: For iterative learners, stop the training process as soon as the validation performance starts to degrade, even if the training performance is still improving [19].

Experimental Protocols & Methodologies

Protocol: Evaluating Model Performance via Cross-Validation

This protocol outlines a robust method for estimating the generalization error of a predictive model using k-fold cross-validation, a cornerstone of reliable model evaluation [17].

Objective: To obtain an unbiased and stable estimate of model performance on unseen neurochemical data.

Workflow:

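- Data Preparation: Set aside a final hold-out test set (typically 20-30%) before any CV begins. This test set will be used only for the final evaluation of the chosen model [17]. Fit data-dependent preprocessing steps (e.g., normalization, feature scaling) on training data only and then apply them to the validation and test data, so that no information from held-out samples leaks into the model.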

- Stratified Splitting: Split the remaining data (the training pool) into k stratified folds. Stratification ensures that the distribution of the target variable (e.g., patient response) is preserved in each fold, which is vital for classification tasks with imbalanced data [21].

- Model Training & Validation:

- For i = 1 to k:

- Train: Use folds {1, 2, ..., k} excluding fold i as your training data.

- Validate: Use fold i as your validation data to compute a performance metric (e.g., accuracy, AUC).

- Record: Store the performance score from the validation fold.

- Performance Analysis: The final performance estimate is the mean of the k validation scores. The standard deviation of these scores provides insight into the model's stability (variance) [24].
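The workflow above might look like the following in scikit-learn. The synthetic imbalanced dataset, the 20% hold-out fraction, the logistic regression model, and the AUC metric are illustrative assumptions; scaling is placed inside a pipeline so that it is fit on the training folds only.

```python
from sklearn.datasets import make_classification
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import StratifiedKFold, cross_val_score, train_test_split
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

# Synthetic, mildly imbalanced dataset (70% / 30%).
X, y = make_classification(n_samples=400, n_features=12, weights=[0.7, 0.3], random_state=0)

# Step 1: set aside the final hold-out test set before any CV.
X_pool, X_test, y_pool, y_test = train_test_split(
    X, y, test_size=0.2, stratify=y, random_state=0
)

# Steps 2-3: stratified k-fold CV on the training pool.
model = make_pipeline(StandardScaler(), LogisticRegression(max_iter=1000))
cv = StratifiedKFold(n_splits=5, shuffle=True, random_state=0)
cv_scores = cross_val_score(model, X_pool, y_pool, cv=cv, scoring="roc_auc")

# Step 4: mean and standard deviation of the k validation scores.
print(f"CV AUC: {cv_scores.mean():.3f} +/- {cv_scores.std():.3f}")

# Final evaluation: refit on the whole pool, score once on the hold-out set.
model.fit(X_pool, y_pool)
test_accuracy = model.score(X_test, y_test)
print(f"hold-out accuracy: {test_accuracy:.3f}")
```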

This process is visualized in the following workflow diagram:

Protocol: A Framework for Rigorous Model Comparison

When developing a new model, it is essential to compare it against existing baselines. The following protocol, inspired by a framework proposed in Scientific Reports, helps ensure this comparison is statistically sound and not an artifact of the cross-validation setup [5].

Objective: To assess whether the observed performance difference between two models is statistically significant and not unduly influenced by the choice of cross-validation parameters.

Methodology:

- Define CV Regime: Choose a specific cross-validation scheme (e.g., 5-fold, 10-fold) and decide on the number of repetitions (M).

- Generate Performance Distributions: For each model, run the defined CV procedure, resulting in a distribution of performance scores (e.g., $K \times M$ accuracy values).

- Apply Statistical Testing: Use an appropriate statistical test (e.g., a corrected t-test or permutation test) that accounts for the dependencies introduced by the overlapping training sets in CV. Caution: A standard paired t-test applied to the $K \times M$ scores can be flawed and inflate significance [5].

- Sensitivity Analysis: Repeat the comparison across multiple CV setups (e.g., different values of K and M). A robust finding should hold across various reasonable configurations. If the significance of the result fluctuates dramatically with the CV parameters, it may be unreliable [5].

The Scientist's Toolkit: Research Reagent Solutions

This table details key computational "reagents" essential for conducting rigorous machine learning experiments in neurochemical data analysis.

| Research Reagent | Function & Purpose |

|---|---|

| k-Fold Cross-Validation | Provides a robust estimate of model generalization error by rotating training and validation data splits, directly helping to evaluate the bias-variance trade-off [24] [17]. |

| Stratified K-Fold | A variant of k-fold CV that preserves the percentage of samples for each class in every fold, crucial for imbalanced biomedical datasets [21]. |

| L2 (Ridge) Regularization | A technique to control high variance (overfitting) by adding a penalty proportional to the square of the model coefficients' magnitude, discouraging overly complex models [23] [21]. |

| L1 (Lasso) Regularization | A technique to control variance and perform feature selection by adding a penalty that can force some model coefficients to become exactly zero [23] [21]. |

| Ensemble Methods (e.g., Random Forest) | Methods that reduce prediction variance by combining the outputs of multiple, slightly different models (e.g., via bagging) [19] [23]. |

| Learning Curves | Diagnostic plots of model performance (error) versus training set size, used to identify whether a model is suffering from high bias or high variance [19] [23]. |

| Nested Cross-Validation | A method used when both model tuning and performance estimation are required. It provides an almost unbiased performance estimate by using an inner loop for hyperparameter tuning and an outer loop for evaluation [17]. |

Visualizing the Trade-Off and Relationships

Visual Explainer: The Bias-Variance Trade-Off

The core challenge in model selection is finding the sweet spot between underfitting and overfitting. The following diagram illustrates how a model's total error is composed and how bias and variance change with model complexity.

Visual Guide: Model Diagnosis and Remediation

This decision tree provides a structured path for diagnosing and correcting common model performance issues.

FAQs on Cross-Validation for Neurochemical Data

What is the primary purpose of cross-validation in my research?

Cross-validation (CV) is a model validation technique used to assess how the results of your statistical analysis will generalize to an independent dataset [1]. Its primary purpose is to predict model performance on unseen data, helping to flag problems like overfitting or selection bias [1] [17]. In neurochemical data analysis, this provides an insight into how robust your model will be when deployed in real-world scenarios, ensuring that findings related to biomarker discovery or drug efficacy are reliable and not artifacts of a specific data sample [6] [17].

How do I choose the right cross-validation method for my neurochemical dataset?

The choice of cross-validation method depends on your dataset's size, structure, and the goals of your analysis. The table below summarizes the key characteristics of common methods to guide your selection.

| Method | Best For | Key Advantages | Key Disadvantages |

|---|---|---|---|

| k-Fold [1] [25] | Small to medium-sized datasets [25]. | Reduces variability in performance estimate; all data is used for training and validation [1] [25]. | Computationally more expensive than holdout [25]. |

| Stratified k-Fold [26] | Imbalanced datasets (e.g., rare event prediction). | Ensures each fold retains the class distribution of the full dataset, leading to more reliable estimates [26]. | Slightly more complex to implement than standard k-fold [26]. |

| Leave-One-Out (LOO) [26] [1] [25] | Very small datasets where maximizing training data is critical [25]. | Uses nearly all data for training, resulting in low bias [25]. | High variance in estimate (especially with outliers); computationally expensive for large datasets [26] [25]. |

| Hold-Out [1] [25] | Very large datasets or when a quick initial evaluation is needed [25]. | Simple and fast to execute [25]. | Performance estimate can be highly dependent on a single, potentially non-representative, data split; higher bias [1] [25]. |

| Blocked/Grouped [17] | Data with inherent groupings (e.g., multiple samples from the same patient, experiments run on different days). | Prevents data leakage by keeping all samples from a group in either the training or validation set, providing a more realistic performance estimate [17]. | Requires prior identification of groups within the data [17]. |

For neurochemical data with correlated measurements (e.g., repeated samples from the same subject), Blocked CV designs are often essential to avoid optimistically biased results [17].

What are the common pitfalls when setting up cross-validation?

- Ignoring Data Structure: Using standard CV on grouped data (e.g., multiple measurements from the same animal) leaks information between training and test sets, invalidating the results. Always use a blocked design for such data [17].

- Incorrect Performance Metrics: Reporting a CV score (e.g., misclassification rate) without a statistical test of whether it is better than chance. For robust inference, use permutation tests to simulate the null distribution of your performance measure [6].

- Data Leakage During Pre-processing: Performing steps like feature selection or normalization on the entire dataset before splitting into folds. This gives the model implicit knowledge about the test set. All pre-processing should be fit on the training data and then applied to the validation data within each CV fold.

- Unrepresentative Splits: Simple random splitting can create folds with different distributions of the target variable. For classification, use Stratified k-Fold to maintain class proportions [26].
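The pre-processing leakage pitfall can be demonstrated on pure noise, where no model should beat chance. In this sketch (synthetic data; the sample size, feature count, and number of selected features are arbitrary choices), feature selection is run once on the full dataset and once inside a pipeline that is re-fit within each fold.

```python
import numpy as np
from sklearn.feature_selection import SelectKBest, f_classif
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import StratifiedKFold, cross_val_score
from sklearn.pipeline import make_pipeline

rng = np.random.default_rng(0)
X = rng.normal(size=(60, 2000))   # features are pure noise
y = np.repeat([0, 1], 30)         # labels carry no real signal
cv = StratifiedKFold(n_splits=5, shuffle=True, random_state=0)

# Leaky: features are selected using ALL samples, including future test folds.
X_selected = SelectKBest(f_classif, k=20).fit_transform(X, y)
leaky_acc = cross_val_score(LogisticRegression(max_iter=1000), X_selected, y, cv=cv).mean()

# Proper: selection is part of the pipeline and sees only the training folds.
pipe = make_pipeline(SelectKBest(f_classif, k=20), LogisticRegression(max_iter=1000))
proper_acc = cross_val_score(pipe, X, y, cv=cv).mean()

print(f"selection before splitting: accuracy = {leaky_acc:.2f}")
print(f"selection inside each fold: accuracy = {proper_acc:.2f}")
```

The leaky estimate should come out well above the properly nested one, even though the labels are unrelated to the features.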

Troubleshooting Common Experimental Issues

My model performs well during cross-validation but poorly on new data. What went wrong?

This classic sign of overfitting indicates that your model has learned patterns specific to your training data that do not generalize.

- Problem: The model may be too complex, or the CV setup may not accurately reflect real-world application conditions.

- Solution:

- Simplify the Model: Reduce model complexity by using regularization (e.g., L1/L2 penalty) or selecting fewer features.

- Review CV Procedure: Ensure you are using a CV method that accounts for the structure of your data. If your neurochemical data has a temporal component or is grouped, a standard k-fold will be inadequate. Switch to a blocked or time-series CV.

- Add a Hold-Out Test Set: Use a nested cross-validation approach. An outer loop handles the train-validation split (e.g., with k-fold), and an inner loop is used for model tuning on the training set only. A final, completely untouched hold-out test set is used for the ultimate evaluation of the chosen model [17].

The cross-validation results are highly variable between folds. How can I stabilize them?

High variability (variance) between folds suggests that your model's performance is highly sensitive to the specific data used for training.

- Problem: The dataset might be too small, or the model might be unstable.

- Solution:

- Increase the Number of Folds: In k-fold CV, using a higher value of k (e.g., 10 or 20) can reduce the variance of the performance estimate [25].

- Repeat the Cross-Validation: Perform repeated k-fold CV (e.g., 10-fold CV repeated 5 times) with different random splits and average the results. This provides a more stable estimate [1].

- Check for Outliers: Identify and investigate potential outliers in your neurochemical data that might be disproportionately influencing the model in certain folds.

- Use a Simpler Model: A less complex model often has lower variance.
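Repeated k-fold CV, as suggested above, is available in scikit-learn as `RepeatedKFold`. The sketch below runs 10-fold CV repeated 5 times on a synthetic regression problem; the Ridge model and the R² metric are assumptions for the example.

```python
from sklearn.datasets import make_regression
from sklearn.linear_model import Ridge
from sklearn.model_selection import RepeatedKFold, cross_val_score

X, y = make_regression(n_samples=120, n_features=10, noise=10.0, random_state=0)

# 10 folds x 5 repetitions = 50 scores, each repetition using a new shuffle.
cv = RepeatedKFold(n_splits=10, n_repeats=5, random_state=0)
scores = cross_val_score(Ridge(alpha=1.0), X, y, cv=cv, scoring="r2")

# Scores are returned repetition by repetition, so reshape to (repeats, folds).
per_repeat_mean = scores.reshape(5, 10).mean(axis=1)

print("mean R^2 per repetition:", per_repeat_mean.round(3))
print(f"overall: {scores.mean():.3f} +/- {scores.std():.3f}")
```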

How do I implement a blocked cross-validation design for data from multiple subjects?

When your neurochemical dataset contains multiple measurements from the same subject (or batch, or site), you must keep all data from one subject together in a single fold to prevent information leakage.

Methodology:

- Identify Groups: Define your grouping factor (e.g., Subject_ID).

- Assign Groups to Folds: Instead of splitting individual samples randomly, randomly assign each unique subject (group) to one of the k folds.

- Iterate: For each fold, the validation set contains all samples from the subjects assigned to that fold. The training set contains all samples from all other subjects.
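The three steps above can be written out by hand in a few lines of NumPy. The subject count, samples per subject, and k = 4 are hypothetical values for this sketch; scikit-learn's `GroupKFold` offers a ready-made group splitter, although by default it assigns groups deterministically rather than at random.

```python
import numpy as np

rng = np.random.default_rng(0)
n_subjects, n_per_subject, k = 12, 8, 4

# Step 1: the grouping factor, one subject label per sample.
subject_id = np.repeat(np.arange(n_subjects), n_per_subject)

# Step 2: randomly assign each unique subject to one of the k folds.
shuffled_subjects = rng.permutation(np.unique(subject_id))
fold_of_subject = {int(s): i % k for i, s in enumerate(shuffled_subjects)}
fold_id = np.array([fold_of_subject[int(s)] for s in subject_id])

# Step 3: build the train/validation index pairs fold by fold.
splits = []
for fold in range(k):
    val_idx = np.flatnonzero(fold_id == fold)
    train_idx = np.flatnonzero(fold_id != fold)
    splits.append((train_idx, val_idx))
    val_subjects = sorted(set(subject_id[val_idx].tolist()))
    print(f"fold {fold}: validation subjects {val_subjects}")
```

The resulting `splits` list can be passed as the `cv` argument of scikit-learn functions such as `cross_val_score`, which accept an iterable of (train, test) index pairs.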

The following workflow diagram illustrates this process:

Experimental Protocols & Workflows

Protocol: Standard k-Fold Cross-Validation

This protocol is suitable for modeling neurochemical concentration-response relationships when data points are independent.

- Shuffle the Dataset: Randomly shuffle the entire dataset to remove any order effects [1].

- Split into k Folds: Partition the data into k (commonly 10) equal-sized subsets, or "folds" [1] [25].

- Iterative Training and Validation: For each unique fold i (where i ranges from 1 to k):

  - Set Aside Fold i: Use this single fold as the validation (test) dataset.

  - Train the Model: Use the remaining k-1 folds as the training dataset. Fit your model on this data.

  - Validate the Model: Use the trained model to make predictions on the validation set (fold i). Calculate the performance metric (e.g., Mean Squared Error, Accuracy).

- Calculate Final Performance: Once all k folds have been used as the validation set, compute the average of the k performance metrics. This average is the final CV performance estimate [1] [25].
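Written as an explicit loop, the protocol might look like this. The synthetic concentration-response data (a linear relationship with Gaussian noise) and the linear model are assumptions made only to keep the sketch self-contained.

```python
import numpy as np
from sklearn.linear_model import LinearRegression
from sklearn.metrics import mean_squared_error
from sklearn.model_selection import KFold

rng = np.random.default_rng(0)
X = rng.uniform(0, 10, size=(100, 1))                 # hypothetical concentrations
y = 2.0 * X[:, 0] + rng.normal(scale=1.0, size=100)   # hypothetical responses

# shuffle=True covers the "shuffle the dataset" step before partitioning.
kf = KFold(n_splits=10, shuffle=True, random_state=0)

fold_mse = []
for train_idx, val_idx in kf.split(X):
    model = LinearRegression().fit(X[train_idx], y[train_idx])   # train on k-1 folds
    predictions = model.predict(X[val_idx])                      # validate on fold i
    fold_mse.append(mean_squared_error(y[val_idx], predictions))

cv_mse = float(np.mean(fold_mse))   # final CV performance estimate
print(f"10-fold CV mean squared error: {cv_mse:.3f}")
```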

Protocol: Implementing a Permutation Test for CV Significance

This test determines if your model's cross-validated performance is statistically significant compared to a chance model [6].

- Compute True CV Score: Perform your chosen CV method (e.g., 10-fold) on the original dataset. Record the performance score (e.g., prediction accuracy), S_obs.

- Generate Null Distribution: For a large number of permutations M (e.g., 1000):

  - Permute Labels: Randomly shuffle the response variable (e.g., treatment group, disease state) while keeping the predictor variables unchanged. This simulates the null hypothesis where no relationship exists.

  - Compute Permuted Score: Run the same CV procedure on this permuted dataset and record the performance score, S_perm_m.

- Calculate P-value: The p-value is calculated as the proportion of permutation scores that are better than or equal to the true observed score, with 1 added to both the count and the number of permutations: p = (#{S_perm_m >= S_obs} + 1) / (M + 1) [6].
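scikit-learn's `permutation_test_score` implements this procedure and uses the same (count + 1) / (M + 1) p-value. The sketch below uses a synthetic dataset, 5-fold CV, and only 200 permutations to keep the run short; these are illustrative choices, not the settings recommended above.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import StratifiedKFold, permutation_test_score

X, y = make_classification(n_samples=100, n_features=10, n_informative=3, random_state=0)
cv = StratifiedKFold(n_splits=5, shuffle=True, random_state=0)

# s_obs: CV score on the true labels; s_perm: CV scores on shuffled labels.
s_obs, s_perm, p_value = permutation_test_score(
    LogisticRegression(max_iter=1000),
    X,
    y,
    cv=cv,
    n_permutations=200,
    scoring="accuracy",
    random_state=0,
)

# Recompute the p-value by hand from the formula in the protocol.
manual_p = (np.sum(s_perm >= s_obs) + 1) / (len(s_perm) + 1)

print(f"observed accuracy: {s_obs:.3f}")
print(f"p-value (library): {p_value:.4f}, p-value (manual): {manual_p:.4f}")
```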

The Scientist's Toolkit: Research Reagent Solutions

| Reagent / Material | Function in Neurochemical Analysis |

|---|---|

| LC-MS/MS Systems | Gold standard for precise identification and quantification of neurotransmitters, metabolites, and drugs in complex biological samples like brain tissue or cerebrospinal fluid. |

| Electrochemical Sensors | Enable real-time, in vivo monitoring of dynamic changes in neurochemical levels (e.g., dopamine, glutamate) in specific brain regions. |

| Immunoassay Kits (ELISA) | Allow for high-throughput screening of specific neurochemical targets or biomarkers using antibody-based detection. |

| Stable Isotope-Labeled Internal Standards | Essential for mass spectrometry to correct for sample matrix effects and variability in extraction efficiency, ensuring accurate quantification. |

| Solid Phase Extraction (SPE) Plates | Used for rapid and efficient clean-up and concentration of complex biological samples prior to analysis, improving signal-to-noise ratio. |

Implementing Cross-Validation Schemes for Neurochemical Datasets

Step-by-Step Guide to k-Fold and Stratified k-Fold Cross-Validation

This guide provides technical support for implementing k-Fold and Stratified k-Fold Cross-Validation, specifically contextualized for neurochemical data analysis research. These methods are crucial for developing robust and generalizable predictive models, as they provide a more reliable estimate of model performance on unseen data compared to a simple train/test split [27] [28]. The following FAQs, workflows, and protocols are designed to help researchers and drug development professionals avoid common pitfalls and apply these validation techniques correctly.

Frequently Asked Questions (FAQs) & Troubleshooting

1. FAQ: Why should I use k-Fold Cross-Validation instead of a simple holdout (train/test split) method?

- Answer: A simple holdout method uses a single, random split of your data for training and testing. This can result in a biased performance estimate, especially if your dataset is small or the split is unlucky. k-Fold Cross-Validation mitigates this by using multiple splits, ensuring that every data point is used for testing exactly once. This provides a more stable and reliable estimate of your model's generalizability by averaging performance across all folds [27] [28].

2. FAQ: My dataset has a severe class imbalance (e.g., few active compounds vs. many inactive ones). Which method should I use?

- Answer: For imbalanced datasets, standard k-Fold can be problematic as one or more folds might not contain any samples from the minority class. You should use Stratified k-Fold Cross-Validation. This method ensures that each fold has the same (or very similar) proportion of class labels as the complete dataset, leading to a more representative and valid performance estimate for the minority class [29].

3. FAQ: My neurochemical data involves repeated measurements from the same subject. How should I split the data to avoid data leakage?

- Answer: This is a critical consideration. If multiple records from the same subject are randomly split across training and test folds, your model may learn to "identify" the subject rather than the underlying neurochemical pattern, creating an overly optimistic performance estimate [27]. You must use Subject-Wise (or Group-Wise) Splitting. All data from a single subject must be contained entirely within a single fold (either all in training or all in testing). Most machine learning libraries, like scikit-learn, offer GroupKFold for this specific purpose.

4. FAQ: I am getting very different performance metrics each time I run my k-Fold. What could be the cause?

- Answer: High variance in scores across folds can stem from two main issues:

- Small Dataset or High k-Value: With a small dataset, a high k-value (e.g., Leave-One-Out) leads to small test sets, making the score highly sensitive to the specific sample chosen for testing [28]. Consider using k=5 or k=10, which offer a good bias-variance trade-off [28].

- Improper Shuffling: Ensure you are shuffling your data before creating the folds. If your data is ordered (e.g., by experimental batch), not shuffling will create folds with very different distributions, inflating variance.

5. FAQ: How do I statistically compare two models when both are evaluated using k-Fold Cross-Validation?

- Answer: Caution is required. A common but flawed practice is to perform a paired t-test directly on the k x M accuracy scores from repeated k-fold runs. This violates the independence assumption of the test, as the training sets between folds overlap, and can lead to an inflated false positive rate (p-hacking) [5]. Recommended approaches include using a single, nested cross-validation for final model comparison or employing specialized statistical tests designed for correlated samples, such as the corrected resampled t-test.

Experimental Protocols & Workflows

Protocol 1: Standard k-Fold Cross-Validation

This is the general procedure for estimating model performance on a dataset without strong class imbalance.

- Shuffle the Dataset: Randomly shuffle your entire dataset to remove any order effects.

- Split into k Folds: Split the shuffled dataset into k (e.g., 5 or 10) groups (folds) of approximately equal size [28].

- Iterate and Validate: For each of the k folds:

  - Hold-out Set: Treat the current fold as the test set.

  - Training Set: Combine the remaining k-1 folds to form the training set.

  - Train Model: Fit your model on the training set. Crucially, any data preprocessing (e.g., scaling, imputation) must be fit on the training set and then applied to the test set to prevent data leakage.

  - Evaluate Model: Score the model on the held-out test fold. Retain the evaluation score (e.g., accuracy, F1-score).

- Summarize Performance: Calculate the mean and standard deviation of the k performance scores. The mean represents the expected model performance, while the standard deviation indicates its variability [28].

Protocol 2: Stratified k-Fold Cross-Validation for Imbalanced Data

Use this protocol when working with imbalanced datasets, which are common in biomedical research (e.g., rare disease detection, high-throughput screening hits).

- Shuffle and Stratify: Shuffle the dataset. Instead of a random split, the data is split such that each fold preserves the percentage of samples for each class [29].

- Generate Folds: The algorithm ensures each fold contains roughly the same proportion of each class label as the full dataset.

- Iterate and Validate: The remaining steps are identical to the standard k-fold protocol (train on k-1 folds, validate on the held-out fold, and collect scores).

- Summarize Performance: Report the mean and standard deviation of the scores. For imbalanced data, consider using metrics beyond accuracy, such as AUC-ROC, F1-score, or precision-recall curves.
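A minimal sketch of the stratified split, assuming a hypothetical label vector with a 90/10 class ratio. The placeholder feature matrix is there only because the splitter expects one. Each test fold should receive the same share of the minority class.

```python
import numpy as np
from sklearn.model_selection import StratifiedKFold

# Hypothetical imbalanced labels: 90 negatives, 10 positives.
y = np.array([0] * 90 + [1] * 10)
X = np.zeros((len(y), 1))  # placeholder features; only y drives the stratification

skf = StratifiedKFold(n_splits=5, shuffle=True, random_state=0)

# Count minority-class samples in each held-out fold.
positives_per_fold = [int(y[test_idx].sum()) for _, test_idx in skf.split(X, y)]
print(positives_per_fold)  # [2, 2, 2, 2, 2]
```

With a plain KFold on the same labels, some folds could end up with very few positives or none at all, which makes metrics such as AUC-ROC unstable or undefined for that fold.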

The following workflow diagram illustrates the core k-fold procedure, common to both standard and stratified approaches.

Decision Support and Comparative Analysis

The table below summarizes key characteristics to help you choose the appropriate cross-validation method.

Table 1: Comparison of Cross-Validation Methods for Neurochemical Data

| Aspect | Standard k-Fold | Stratified k-Fold | Subject-Wise/Group k-Fold |
|---|---|---|---|
| Primary Use Case | Balanced datasets with independent samples. | Imbalanced classification tasks. | Data with multiple correlated samples per subject (e.g., longitudinal studies). |
| Key Advantage | Simple; reduces variance of performance estimate compared to a single holdout. | Preserves class distribution in each fold; provides a more reliable estimate for minority classes [29]. | Prevents data leakage and over-optimistic performance by keeping a subject's data in one fold [27]. |
| Key Consideration | Can give unreliable estimates on imbalanced data, because folds may not preserve class proportions. | Only applicable to classification problems. | Requires a group identifier for each sample. |
| Recommended k-values | k=5 or k=10 [28]. | k=5 or k=10. | k=5 or k=10, but ensure enough groups per fold. |
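The choices in the table above could be encoded as a small helper like the one below. The function name `choose_splitter` and its boolean arguments are hypothetical, and the rule that grouping takes priority over class imbalance is an assumption of this sketch rather than a recommendation from the cited sources.

```python
from sklearn.model_selection import GroupKFold, KFold, StratifiedKFold


def choose_splitter(is_classification, imbalanced, has_groups, n_splits=5, seed=0):
    """Map dataset traits to a scikit-learn splitter (illustrative only)."""
    if has_groups:
        # Multiple correlated samples per subject: keep each subject in one fold.
        return GroupKFold(n_splits=n_splits)
    if is_classification and imbalanced:
        # Preserve class proportions in every fold.
        return StratifiedKFold(n_splits=n_splits, shuffle=True, random_state=seed)
    # Balanced, independent samples.
    return KFold(n_splits=n_splits, shuffle=True, random_state=seed)


splitter = choose_splitter(is_classification=True, imbalanced=True, has_groups=False)
print(type(splitter).__name__)  # StratifiedKFold
```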

The following decision chart provides a logical pathway for selecting the right validation strategy based on your dataset's characteristics.

Table 2: Key Research Reagent Solutions for a Cross-Validation Pipeline

| Item / Concept | Function / Explanation |
|---|---|
| Scikit-learn Library (Python) | The primary toolkit providing implementations for KFold, StratifiedKFold, GroupKFold, and model training/evaluation. |
| Stratified Splitting | An algorithm that maintains class distribution across folds, crucial for validating models on imbalanced neurochemical data [29]. |
| Hyperparameter Tuning | The process of optimizing model settings. Must be performed within the training folds of each CV cycle (e.g., via nested CV) to avoid bias [27]. |
| Performance Metrics (AUC, F1) | Evaluation measures robust to class imbalance. Prefer these over accuracy for most real-world neurochemical datasets. |
| Data Preprocessors (Scalers) | Tools for standardizing data. Must be fit on the training fold and applied to the validation/test fold to prevent data leakage. |

Troubleshooting Guides and FAQs

Frequently Asked Questions

Q1: What is the fundamental difference between Blocked Cross-Validation (BCV) and Repeated Cross-Validation (RCV), and why should I use BCV for my neurochemical data?

Blocked Cross-Validation is a novel approach where the repetitions are blocked with respect to both the cross-validation partition and the random behavior of the learner itself [30]. The key advantage over Repeated Cross-Validation is that BCV provides more precise error estimates for hyperparameter tuning, often with a significantly reduced number of computational runs [30]. For neurochemical data, where experiments can be costly and data is limited, this increased efficiency and precision directly translates to more reliable model selection without excessive computational expense.

Q2: My dataset contains multiple measurements from the same subject. Is standard Leave-One-Out Cross-Validation (LOOCV) appropriate?

No, standard LOOCV is likely inappropriate. When your data has a grouped or hierarchical structure (e.g., multiple measurements per subject), you must use a validation scheme that respects this structure, such as Leave-One-Subject-Out (LOSO) or, more generally, Leave-One-Group-Out Cross-Validation (LOGOCV) [31] [32]. Standard LOOCV treats all measurements as independent, so the model is trained on some data from a subject and tested on other data from the same subject, which is a form of data leakage. This leads to overly optimistic performance estimates because the model may be learning subject-specific idiosyncrasies rather than the general underlying neurochemical relationship [32].

Q3: How do I choose between Blocked CV and LOSO for my specific research problem?

The choice hinges on the structure of your data and the source of randomness you wish to control.

- Use Blocked CV when your primary goal is precise hyperparameter tuning for a single model and you want to control for the inherent randomness in both the data splitting and the learning algorithm itself [30].

- Use LOSO (a type of LOGOCV) when your data is grouped by "subjects" or other experimental units, and your goal is to estimate how well your model generalizes to entirely new, unseen subjects [31]. This is common in neuroimaging and clinical studies.

Q4: A reviewer criticized my use of cross-validation for hypothesis testing, citing the Neyman-Pearson Lemma. How should I respond?

This is a nuanced point in neuroimaging and related fields. While the Neyman-Pearson Lemma establishes the optimality of likelihood-ratio tests for simple hypotheses, cross-validation-based tests fulfill a different need: assessing predictive performance [6]. A cogent response is that "the inference made using cross-validation accuracy pertains to ... the statistical dependence (mutual information) between our explanatory variables and neuroimaging data" [6]. Cross-validation tests, especially when combined with permutation testing (Predictive Performance Permutation or "P3" tests), are valid for testing the null hypothesis that a model's predictive accuracy is no better than chance, an inferential need not directly met by classical tests [6].
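One way to run a permutation test on a cross-validated score is scikit-learn's `permutation_test_score`, sketched below on synthetic data. This is not necessarily identical to the procedure described in [6], and the dataset, model, and number of permutations are assumptions chosen to keep the example small.

```python
from sklearn.datasets import make_classification
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import StratifiedKFold, permutation_test_score

# Synthetic stand-in data (assumption).
X, y = make_classification(n_samples=100, n_features=5, n_informative=3,
                           n_redundant=0, random_state=0)
cv = StratifiedKFold(n_splits=5, shuffle=True, random_state=0)

# The labels are shuffled n_permutations times and the whole CV is rerun each
# time, which builds a null distribution of scores expected by chance.
score, perm_scores, p_value = permutation_test_score(
    LogisticRegression(max_iter=1000), X, y,
    cv=cv, n_permutations=200, scoring="accuracy", random_state=0,
)
print(f"CV accuracy = {score:.3f}, permutation p-value = {p_value:.4f}")
```

The smallest attainable p-value is 1 / (n_permutations + 1), so the number of permutations sets the resolution of the test.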

Troubleshooting Common Experimental Issues

Problem: High variance in cross-validation error estimates.

- Potential Cause & Solution: Using an inappropriate validation scheme for the data structure. If your data is grouped, you are likely violating the assumption of independence between training and test sets. Solution: Switch to LOGOCV/LOSO to ensure groups are not split across training and test sets [32].

- Potential Cause & Solution: The evaluation metric is unstable due to small test set sizes. Solution: Consider Blocked CV, which is designed to provide more precise (lower variance) error estimates than Repeated CV for hyperparameter tuning [30].

Problem: Cross-validation results are too optimistic compared to real-world deployment.

- Potential Cause & Solution: Data leakage. Ensure that all preprocessing steps (like standardization or feature selection) are learned from the training fold only and then applied to the held-out fold, rather than being fit on the entire dataset before splitting. Solution: Use a pipeline that encapsulates all preprocessing and model fitting steps, then pass this entire pipeline to the cross-validation function [7].
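The effect of leakage can be illustrated on pure noise, where no model should beat chance. In the sketch below, the data, the number of features, and the choice of `SelectKBest` are assumptions made for the demonstration. Selecting features on the full dataset before splitting typically produces a clearly inflated score, while the pipeline version stays near chance.

```python
import numpy as np
from sklearn.feature_selection import SelectKBest, f_classif
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import StratifiedKFold, cross_val_score
from sklearn.pipeline import make_pipeline

rng = np.random.default_rng(0)
X = rng.standard_normal((60, 2000))  # pure noise features
y = np.array([0, 1] * 30)            # labels unrelated to X

cv = StratifiedKFold(n_splits=5, shuffle=True, random_state=0)

# Leaky: features are selected using all labels before any splitting.
X_leaky = SelectKBest(f_classif, k=20).fit_transform(X, y)
leaky = cross_val_score(LogisticRegression(max_iter=1000), X_leaky, y, cv=cv).mean()

# Leak-free: selection is refit inside each training fold via the pipeline.
pipe = make_pipeline(SelectKBest(f_classif, k=20), LogisticRegression(max_iter=1000))
honest = cross_val_score(pipe, X, y, cv=cv).mean()

print(f"leaky accuracy = {leaky:.2f}, pipeline accuracy = {honest:.2f}")
```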

Problem: Model selected via cross-validation performs poorly on new subjects.

- Potential Cause & Solution: The cross-validation method does not match the intended prediction task. If the goal is to predict for new subjects, but your CV method randomly splits all measurements, the evaluation is not realistic. Solution: Re-evaluate your model using LOSO, which directly simulates the scenario of making predictions for a new, unseen subject [31].

Table 1: Comparison of Cross-Validation Schemes for Structured Data

| Scheme | Primary Use Case | Key Advantage | Key Disadvantage | Suitable for Neurochemical Data? |
|---|---|---|---|---|
| Blocked CV | Hyperparameter tuning | More precise error estimates with fewer computations [30] | Novel method, less established in common libraries | Yes, for efficient and precise model optimization |
| LOSO/LOGOCV | Grouped data (e.g., subjects) | Accurate generalization estimate to new groups [31] [32] | Computationally expensive for many groups; correlated training sets can increase variance [33] [32] | Yes, essential for subject-based data structures |
| Standard LOOCV | Small, non-grouped datasets | Low bias, uses most data for training [33] | High variance in error estimate; invalid for correlated/grouped data [33] [32] | No, unless all measurements are truly independent |
| k-Fold CV | General model evaluation | Good bias-variance trade-off [7] | Can be invalid if data is grouped or has temporal structure | No, for subject-based data, unless folds are created by group |

Table 2: Key Parameters for Implementing Advanced CV Schemes

| Parameter | Blocked CV | LOSO/LOGOCV |
|---|---|---|
| Number of Splits | Defined by the number of blocks and random seeds [30] | Equal to the number of unique groups (e.g., subjects) [33] |
| Training Set Size | Varies by the underlying CV partition | (Total samples - samples in left-out group) per split |
| Test Set Size | Varies by the underlying CV partition | All samples from the left-out group |
| Critical Implementation Note | Blocking must account for randomness in the learner algorithm [30] | The grouping factor (e.g., 'Subject_ID') must be explicitly defined [32] |

Experimental Protocols

Detailed Methodology for Implementing Blocked Cross-Validation

Blocked Cross-Validation aims to provide a more precise estimate of model performance by controlling for two sources of variance: the randomness in the data splitting (partition variance) and the randomness in the learning algorithm itself (algorithmic variance) [30].

- Define the Base Resampling Method: Start with a standard cross-validation scheme, such as 5-fold CV, as the base partition.

- Introduce Blocking over Randomness: For each fold in the base partition, run the model training multiple times. However, instead of using different random seeds for every run (as in RCV), use the same random seed for the learner for all folds within a single "block."

- Repeat with New Blocks: Repeat the previous step for a desired number of blocks (B), each time using a new random seed for the learner, but keeping it consistent across folds within that block.

- Calculate the Performance Metric: For each block, calculate the performance metric (e.g., mean squared error) by averaging the results across all folds within that block.

- Final Estimate: The final performance estimate is the average of the B block-level estimates.

This procedure "blocks" the randomness of the learner, leading to a more stable and precise comparison between different hyperparameter settings [30].
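The steps above might be implemented along the following lines. This is a sketch that follows the procedure as described in this guide, not a reference implementation of [30]. The function `blocked_cv_mse`, the factory helper, the random-forest learner, and the synthetic regression data are all assumptions. The key point is that every hyperparameter candidate is evaluated on the same partition with the same learner seeds.

```python
import numpy as np
from sklearn.datasets import make_regression
from sklearn.ensemble import RandomForestRegressor
from sklearn.metrics import mean_squared_error
from sklearn.model_selection import KFold


def blocked_cv_mse(make_model, X, y, n_blocks=3, n_splits=5, partition_seed=0):
    """Average MSE over blocks; each block fixes one learner seed for all folds."""
    # An integer random_state makes split() return the same partition every call.
    base_cv = KFold(n_splits=n_splits, shuffle=True, random_state=partition_seed)
    block_means = []
    for block in range(n_blocks):  # the block index doubles as the learner seed
        fold_mse = []
        for train_idx, test_idx in base_cv.split(X):
            model = make_model(seed=block)  # same seed across folds in this block
            model.fit(X[train_idx], y[train_idx])
            pred = model.predict(X[test_idx])
            fold_mse.append(mean_squared_error(y[test_idx], pred))
        block_means.append(float(np.mean(fold_mse)))
    return float(np.mean(block_means)), block_means


def forest_factory(max_depth):
    """Return a function that builds a forest with a given learner seed."""
    def make_model(seed):
        return RandomForestRegressor(n_estimators=30, max_depth=max_depth,
                                     random_state=seed)
    return make_model


X, y = make_regression(n_samples=120, n_features=8, noise=10.0, random_state=0)

# Two hypothetical hyperparameter settings, compared on identical folds and seeds.
results = {depth: blocked_cv_mse(forest_factory(depth), X, y)[0] for depth in (2, 6)}
print(results)
```

Because both candidates see identical folds and seeds, differences between their scores reflect the hyperparameter rather than resampling or initialization noise.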

Detailed Methodology for Implementing Leave-One-Subject-Out Cross-Validation

LOSO is a specific application of Leave-One-Group-Out CV where the group is an individual subject.

- Identify the Grouping Variable: Define the variable that identifies each subject (e.g., Subject_ID). Let S be the total number of unique subjects.

- Iterate Over Subjects: For each subject s in the set of S subjects:

  a. Test Set: All data points belonging to subject s are held out as the test set.

  b. Training Set: All data points from the remaining S-1 subjects form the training set.

  c. Train and Evaluate: A model is trained on the training set and used to predict the held-out test set for subject s. A performance metric (e.g., accuracy, RMSE) is recorded.

- Aggregate Results: This process is repeated until every subject has been the test set exactly once. The final model performance is the average of the S performance estimates obtained in each iteration [33] [31].

This method provides an almost unbiased estimate of a model's ability to generalize to new, unseen subjects, which is critical for clinical and translational neurochemical research.
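A minimal LOSO sketch using scikit-learn's `LeaveOneGroupOut` is shown below. The simulated data, the `subject_id` array standing in for Subject_ID, and the ridge-regression model are assumptions for illustration only.

```python
import numpy as np
from sklearn.linear_model import Ridge
from sklearn.model_selection import LeaveOneGroupOut, cross_val_score
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

rng = np.random.default_rng(0)
n_subjects, n_per_subject = 6, 10

# Hypothetical grouping variable: one integer ID per measurement.
subject_id = np.repeat(np.arange(n_subjects), n_per_subject)
X = rng.standard_normal((n_subjects * n_per_subject, 4))
y = X @ np.array([1.0, -0.5, 0.0, 2.0]) + 0.1 * rng.standard_normal(len(X))

logo = LeaveOneGroupOut()
pipe = make_pipeline(StandardScaler(), Ridge(alpha=1.0))

# One score per held-out subject; scikit-learn reports RMSE negated
# so that higher is always better.
scores = cross_val_score(pipe, X, y, groups=subject_id, cv=logo,
                         scoring="neg_root_mean_squared_error")
rmse_per_subject = -scores
print(f"mean RMSE over {len(scores)} subjects: {rmse_per_subject.mean():.3f}")
```

Reporting the per-subject scores alongside the mean can reveal whether a few subjects drive the error.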

Workflow and Relationship Diagrams

LOSO CV Workflow for N Subjects

CV Scheme Selection Guide

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational Tools for Cross-Validation

| Tool / 'Reagent' | Function / Purpose | Example in Python (scikit-learn) |
|---|---|---|
| GroupKFold / LeaveOneGroupOut | Splits data into folds based on a defined group structure, preventing data leakage. Essential for LOSO. | from sklearn.model_selection import LeaveOneGroupOut, GroupKFold |
| Pipeline | Ensures that all preprocessing (scaling, imputation) is fitted only on the training data within each CV fold, preventing leakage. | from sklearn.pipeline import make_pipeline |
| Blocked Resampler | Implements the Blocked CV procedure to reduce variance in performance estimates. (May require custom implementation based on [30]) | Custom implementation based on KFold and controlling random_state per block. |
| Permutation Test | Generates a valid null distribution for testing the statistical significance of a CV-based performance metric [6]. | from sklearn.model_selection import permutation_test_score |
| Cross-Validate Function | Performs cross-validation and returns multiple metrics, fit times, and score times for a more comprehensive evaluation. | from sklearn.model_selection import cross_validate |

Frequently Asked Questions

What is the core principle behind temporal data splitting?

The core principle is to split data based on time to mimic real-world scenarios where models are trained on historical data and used to predict future outcomes. This prevents data leakage, where information from the future inadvertently influences the training of the model, ensuring a more realistic performance evaluation [34].
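As a small illustration of this principle, scikit-learn's `TimeSeriesSplit` produces folds in which every training index precedes every test index. The twelve-sample array below is a hypothetical stand-in for time-ordered measurements.

```python
import numpy as np
from sklearn.model_selection import TimeSeriesSplit

# Twelve time-ordered samples; the row index is the acquisition order (assumption).
X = np.arange(12).reshape(-1, 1)

tscv = TimeSeriesSplit(n_splits=3)
splits = list(tscv.split(X))

for train_idx, test_idx in splits:
    # The training window grows over time; the test block always lies after it.
    print("train:", train_idx.tolist(), "test:", test_idx.tolist())
# train: [0, 1, 2] test: [3, 4, 5]
# train: [0, 1, 2, 3, 4, 5] test: [6, 7, 8]
# train: [0, 1, 2, 3, 4, 5, 6, 7, 8] test: [9, 10, 11]
```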

Why shouldn't I just split my data randomly when time is a factor?